JDK version: 1.8.0_191

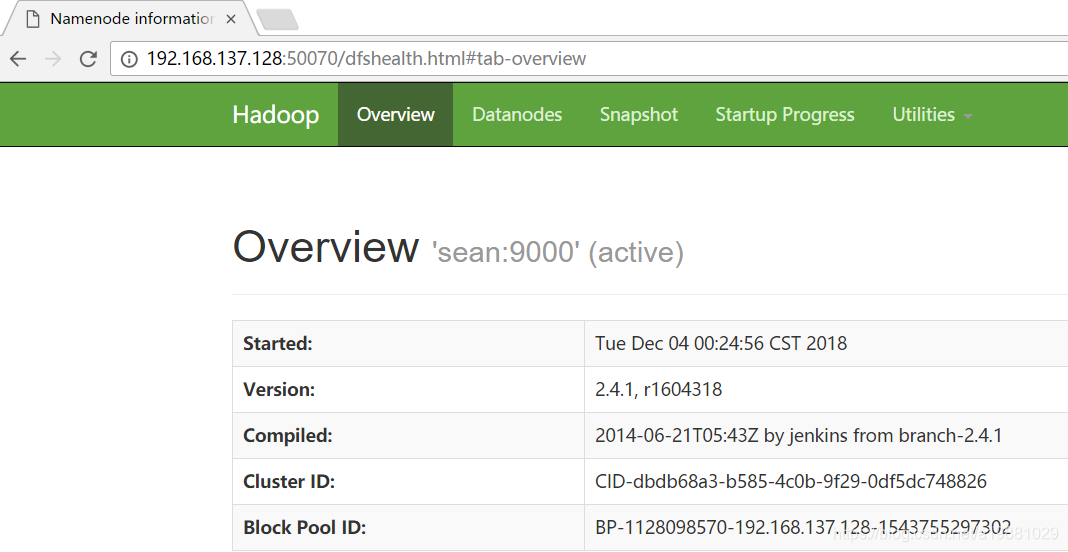

Hadoop version: 2.4.1

Distribution: Ubuntu 14

1. Create the hadoop account

#Create the hadoop group

root@sean:~# groupadd hadoop

#Create the hadoop account in the hadoop group

root@sean:~# useradd -d /home/hadoop -m -g hadoop hadoop

#Change the hadoop account's password

root@sean:~# passwd hadoop

Enter new UNIX password:

Retype new UNIX password:

passwd: password updated successfully

2. Configure passwordless SSH login

As root, install the sshd service (the SSH server):

root@sean:~# apt-get update

root@sean:~# apt-get install openssh-server

Next, edit the sshd configuration. The sshd service must be restarted afterwards for the changes to take effect.
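If you would rather script this edit than make it in vi, here is a minimal sketch. `set_sshd_option` is a hypothetical helper (not an OpenSSH tool), and it assumes GNU `sed` (for `-i -E`), as shipped with Ubuntu. It sets a `KEY VALUE` line, uncommenting or replacing an existing line for that key, or appending one if the key is absent.

```shell
# Hypothetical helper: set "KEY VALUE" in an sshd_config-style file.
# An existing line for KEY (commented out or not) is replaced;
# otherwise a new line is appended. Requires GNU sed for -i -E.
set_sshd_option() {
  local file=$1 key=$2 value=$3
  if grep -qE "^[#[:space:]]*${key}[[:space:]]" "$file"; then
    sed -i -E "s|^[#[:space:]]*${key}[[:space:]].*|${key} ${value}|" "$file"
  else
    printf '%s %s\n' "$key" "$value" >> "$file"
  fi
}

# Example, as root (on Ubuntu 14.04 the sshd service is named "ssh"):
#   set_sshd_option /etc/ssh/sshd_config RSAAuthentication yes
#   set_sshd_option /etc/ssh/sshd_config PubkeyAuthentication yes
#   service ssh restart
```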

root@sean:~# vi /etc/ssh/sshd_config

#Change the following settings

RSAAuthentication yes

PubkeyAuthentication yes

Then generate a key pair as the hadoop user:

#Switch to the hadoop account

root@sean:~# su hadoop

hadoop@sean:/root$ cd ~

hadoop@sean:~$ which ssh-keygen

/usr/bin/ssh-keygen

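hadoop@sean:~$ /usr/bin/ssh-keygen -t rsa

Press Enter at every prompt to accept the defaults. This generates the private key id_rsa and the public key id_rsa.pub under /home/hadoop/.ssh/.

Append the contents of the public key to /home/hadoop/.ssh/authorized_keys:

hadoop@sean:~$ cat .ssh/id_rsa.pub >> .ssh/authorized_keys

Then log in once over SSH. The first login saves the server's host key to /home/hadoop/.ssh/known_hosts; subsequent logins will not ask for a password.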
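Running the `cat ... >>` command twice adds the key twice, and if ~/.ssh or authorized_keys ever ends up writable by group or others, sshd's default `StrictModes yes` will ignore the key and fall back to asking for a password. The sketch below is a hypothetical helper, `authorize_key`, that appends the key only once and then tightens the permissions. The `ssh-keygen -N '' -f` form in the comment is a non-interactive equivalent of pressing Enter at every prompt.

```shell
# Hypothetical helper: authorize a public key for passwordless login.
# Appends the key only if that exact line is not already present,
# then sets permissions that sshd's StrictModes check accepts.
authorize_key() {
  local ssh_dir=$1 pubkey_file=$2
  local auth="$ssh_dir/authorized_keys"
  mkdir -p "$ssh_dir"
  touch "$auth"
  # -q quiet, -x whole-line match, -F fixed string (no regex)
  if ! grep -qxF "$(cat "$pubkey_file")" "$auth"; then
    cat "$pubkey_file" >> "$auth"
  fi
  chmod 700 "$ssh_dir"
  chmod 600 "$auth"
}

# Example, as the hadoop user:
#   [ -f ~/.ssh/id_rsa ] || ssh-keygen -t rsa -N '' -f ~/.ssh/id_rsa
#   authorize_key ~/.ssh ~/.ssh/id_rsa.pub
```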

hadoop@sean:~$ ssh hadoop@192.168.137.128

3. Install the JDK

hadoop@sean:~$ tar -xzf jdk-8u191-linux-x64.tar.gz

Configure the environment variables:

hadoop@sean:~$ vi .bashrc

#Add the following lines

export JAVA_HOME=/home/hadoop/jdk1.8.0_191

export PATH=$JAVA_HOME/bin:$PATH

export CLASSPATH=.:$JAVA_HOME/lib/dt.jar:$JAVA_HOME/lib/tools.jar

hadoop@sean:~$ source .bashrc

4. Install Hadoop

Download hadoop-2.4.1.tar.gz from the Hadoop website and upload it to /home/hadoop on the server:

hadoop@sean:~$ tar -xzf hadoop-2.4.1.tar.gz

hadoop@sean:~$ mv hadoop-2.4.1 hadoop

Configure the environment variables:

hadoop@sean:~$ vi .bashrc

#Add the following lines

export HADOOP_HOME=/home/hadoop/hadoop

export PATH=$HADOOP_HOME/bin:$HADOOP_HOME/sbin:$PATH
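Once .bashrc has been reloaded with the `source .bashrc` command that follows, you can confirm that both the JDK and Hadoop entries took effect. This is a minimal sketch; `check_home` is a hypothetical helper. It checks that a `*_HOME` directory is set and that the command found first on `PATH` is the one in that directory's `bin/`.

```shell
# Hypothetical helper: check a *_HOME variable against PATH resolution.
# Usage: check_home NAME DIR COMMAND
check_home() {
  local name=$1 dir=$2 cmd=$3 found
  if [ -z "$dir" ]; then
    echo "$name is not set" >&2
    return 1
  fi
  # Assign separately from `local` so a failed lookup is not masked
  found=$(command -v "$cmd") || { echo "$cmd is not on PATH" >&2; return 1; }
  if [ "$found" != "$dir/bin/$cmd" ]; then
    echo "$cmd resolves to $found, expected $dir/bin/$cmd" >&2
    return 1
  fi
  echo "$name OK: $dir"
}

# Example, after reloading .bashrc:
#   check_home JAVA_HOME "$JAVA_HOME" java
#   check_home HADOOP_HOME "$HADOOP_HOME" hadoop
```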

hadoop@sean:~$ source .bashrc

5. Edit the Hadoop configuration files
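All four *-site.xml files edited in this step share the same shape: a `<configuration>` element that contains `<property>` entries, each with a `<name>` and a `<value>`. If you would rather generate these files than edit them in vi, here is a minimal sketch. `write_site_xml` is a hypothetical helper, and it does not XML-escape values. That is fine for the plain paths and URLs used in this guide, but values containing `<` or `&` would break it.

```shell
# Hypothetical helper: write a Hadoop *-site.xml from NAME VALUE pairs.
# Usage: write_site_xml FILE NAME VALUE [NAME VALUE ...]
# Note: values are written verbatim (no XML escaping).
write_site_xml() {
  local file=$1
  shift
  if [ $(( $# % 2 )) -ne 0 ]; then
    echo "write_site_xml: expected NAME VALUE pairs" >&2
    return 1
  fi
  {
    echo '<?xml version="1.0"?>'
    echo '<?xml-stylesheet type="text/xsl" href="configuration.xsl"?>'
    echo '<configuration>'
    while [ $# -gt 0 ]; do
      printf '  <property>\n    <name>%s</name>\n    <value>%s</value>\n  </property>\n' "$1" "$2"
      shift 2
    done
    echo '</configuration>'
  } > "$file"
}

# Example, in ~/hadoop/etc/hadoop (values from core-site.xml in this step):
#   write_site_xml core-site.xml \
#     fs.default.name hdfs://192.168.137.128:9000 \
#     hadoop.tmp.dir /home/hadoop/tmp/hadoop
```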

hadoop@sean:~$ cd hadoop/etc/hadoop

hadoop@sean:~/hadoop/etc/hadoop$ vi hadoop-env.sh

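#This must be set here even if the JAVA_HOME environment variable is already configured

#Otherwise startup fails with "JAVA_HOME is not set and could not be found"

export JAVA_HOME=/home/hadoop/jdk1.8.0_191

Edit core-site.xml. In pseudo-distributed mode, using the IP address instead of a host name or localhost means /etc/hosts does not need to be modified; a fully distributed cluster still requires that change: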

<?xml version="1.0"?>

<?xml-stylesheet type="text/xsl" href="configuration.xsl"?>

<configuration>

<property>

<name>fs.default.name</name>

<!-- If no port is given (e.g. hdfs://localhost), port 8020 is used by default -->

<value>hdfs://192.168.137.128:9000</value>

</property>

<property>

<name>hadoop.tmp.dir</name>

<value>/home/hadoop/tmp/hadoop</value>

</property>

</configuration>

Edit hdfs-site.xml:

<?xml version="1.0"?>

<?xml-stylesheet type="text/xsl" href="configuration.xsl"?>

<configuration>

<property>

<name>dfs.replication</name>

<value>1</value>

</property>

<property>

<name>dfs.name.dir</name>

<value>file:///home/hadoop/hdfs/namenode</value>

</property>

<property>

<name>dfs.data.dir</name>

<value>file:///home/hadoop/hdfs/datanode</value>

</property>

</configuration>

Edit yarn-site.xml:

<?xml version="1.0"?>

<?xml-stylesheet type="text/xsl" href="configuration.xsl"?>

<configuration>

<property>

<name>yarn.nodemanager.aux-services</name>

<value>mapreduce_shuffle</value>

</property>

</configuration>

Create mapred-site.xml from its template, then edit it:

hadoop@sean:~/hadoop/etc/hadoop$ cp mapred-site.xml.template mapred-site.xml

hadoop@sean:~/hadoop/etc/hadoop$ vi mapred-site.xml

<?xml version="1.0"?>

<?xml-stylesheet type="text/xsl" href="configuration.xsl"?>

<configuration>

<property>

<name>mapreduce.framework.name</name>

<value>yarn</value>

</property>

</configuration>

6. Initialize the HDFS file system

Note in particular that Hadoop does not recognize host names containing an underscore ("_"). If your host name contains one, you must change it.
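A quick check for this before formatting. `check_hostname` is a hypothetical helper. With no argument it checks the current host name. On Ubuntu, a permanent rename typically also means updating /etc/hostname and any matching /etc/hosts entry.

```shell
# Hypothetical helper: reject host names containing "_".
# Usage: check_hostname [NAME]   (defaults to the output of `hostname`)
check_hostname() {
  local name=${1:-$(hostname)}
  case $name in
    *_*)
      echo "host name '$name' contains '_'; rename the host before formatting HDFS" >&2
      return 1
      ;;
    *)
      echo "host name '$name' OK"
      ;;
  esac
}
```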

hadoop@sean:~/hadoop/etc/hadoop$ hdfs namenode -format

If the output contains no exceptions, the initialization succeeded.

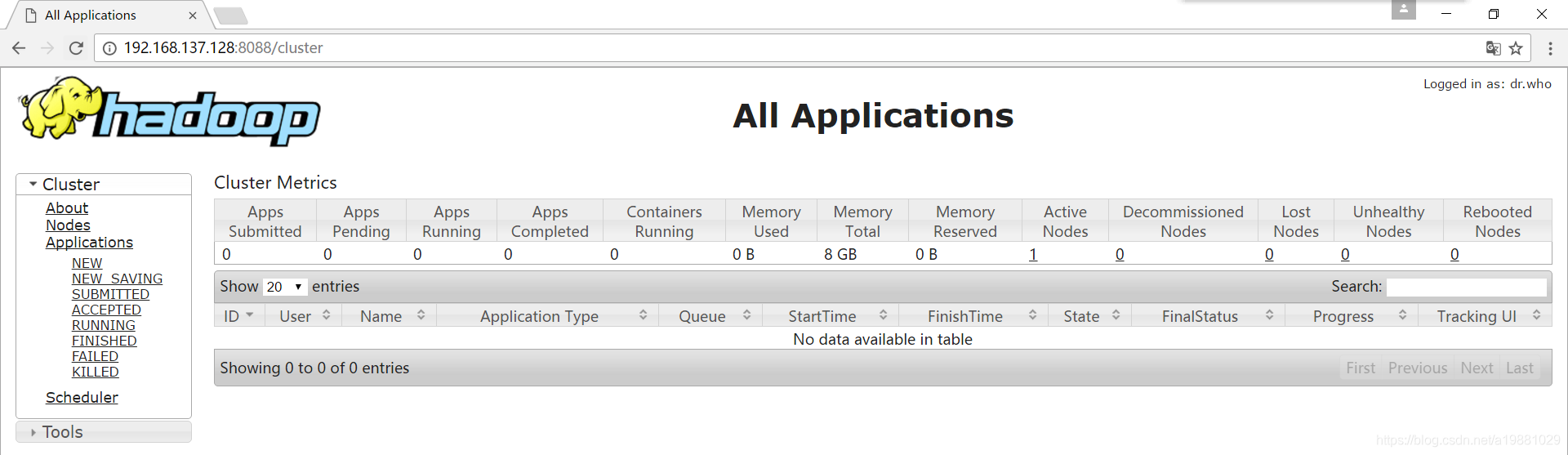
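The format output is long. As an alternative to reading it by eye, you can save it and scan it. This is a minimal sketch that makes two assumptions: Hadoop writes its console log to stderr (hence `2>&1`), and a successful run contains the text "has been successfully formatted", which Hadoop 2.x logs for each storage directory. `check_format_log` is a hypothetical name.

```shell
# Hypothetical helper: scan saved `hdfs namenode -format` output.
# Fails if any line mentions an Exception, or if the (assumed)
# success message is absent.
check_format_log() {
  local log=$1
  if grep -q 'Exception' "$log"; then
    echo "exceptions found in $log:" >&2
    grep 'Exception' "$log" >&2
    return 1
  fi
  if ! grep -q 'has been successfully formatted' "$log"; then
    echo "no success message found in $log" >&2
    return 1
  fi
  echo "NameNode format looks successful"
}

# Example:
#   hdfs namenode -format 2>&1 | tee format.log
#   check_format_log format.log
```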

7. Start Hadoop

hadoop@sean:~$ start-dfs.sh

Starting namenodes on [sean]

sean: starting namenode, logging to /home/hadoop/hadoop/logs/hadoop-hadoop-namenode-sean.out

localhost: starting datanode, logging to /home/hadoop/hadoop/logs/hadoop-hadoop-datanode-sean.out

Starting secondary namenodes [0.0.0.0]

0.0.0.0: starting secondarynamenode, logging to /home/hadoop/hadoop/logs/hadoop-hadoop-secondarynamenode-sean.out

hadoop@sean:~$ start-yarn.sh

starting yarn daemons

starting resourcemanager, logging to /home/hadoop/hadoop/logs/yarn-hadoop-resourcemanager-sean.out

localhost: starting nodemanager, logging to /home/hadoop/hadoop/logs/yarn-hadoop-nodemanager-sean.out

hadoop@sean:~$ jps

15221 NameNode

16168 NodeManager

15500 SecondaryNameNode

16045 ResourceManager

16269 Jps

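15342 DataNode

All of the Hadoop daemons have started: NameNode, DataNode and SecondaryNameNode (from start-dfs.sh), plus ResourceManager and NodeManager (from start-yarn.sh).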
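You can also script this check, for example after a restart. This is a minimal sketch in which `check_daemons` is a hypothetical helper. It reads `jps` output on stdin and reports which of the five daemons above are missing. It matches whole class names so that "NameNode" is not satisfied by the SecondaryNameNode line.

```shell
# Hypothetical helper: verify the five pseudo-distributed Hadoop 2.x
# daemons appear in `jps` output read from stdin.
# Usage: jps | check_daemons
check_daemons() {
  local out d missing=""
  out=$(cat)
  for d in NameNode DataNode SecondaryNameNode ResourceManager NodeManager; do
    # jps prints "<pid> <main class>"; require an exact class-name match
    if ! printf '%s\n' "$out" | grep -qE "^[0-9]+ ${d}\$"; then
      missing="$missing $d"
    fi
  done
  if [ -n "$missing" ]; then
    echo "not running:$missing" >&2
    return 1
  fi
  echo "all five daemons are running"
}
```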
