Algorithm

The algorithm is adapted from "Converting a fisheye image into a panoramic, spherical or perspective projection". The core content is as follows:

Software: fish2sphere

Usage: fish2sphere [options] tgafile

Options

-w n sets the output image width, default: 4 * fisheye image width

-a n sets antialiasing level, default: 2

-s n fisheye aperture (degrees), default: 180

-c x y fisheye center, default: center of image

-r n fisheye radius, default: half the fisheye image width

-x n tilt camera, default: 0

-y n roll camera, default: 0

-z n pan camera, default: 0

-b n n longitude range for blending, default: no blending

-o n create a textured mesh as OBJ model, three types: 0,1,2

-d debug mode

Updates July/August 2016

Added export of textured hemisphere as OBJ file.

Added variable order of rotations; they occur in the order they appear on the command line.

Source fisheye image.

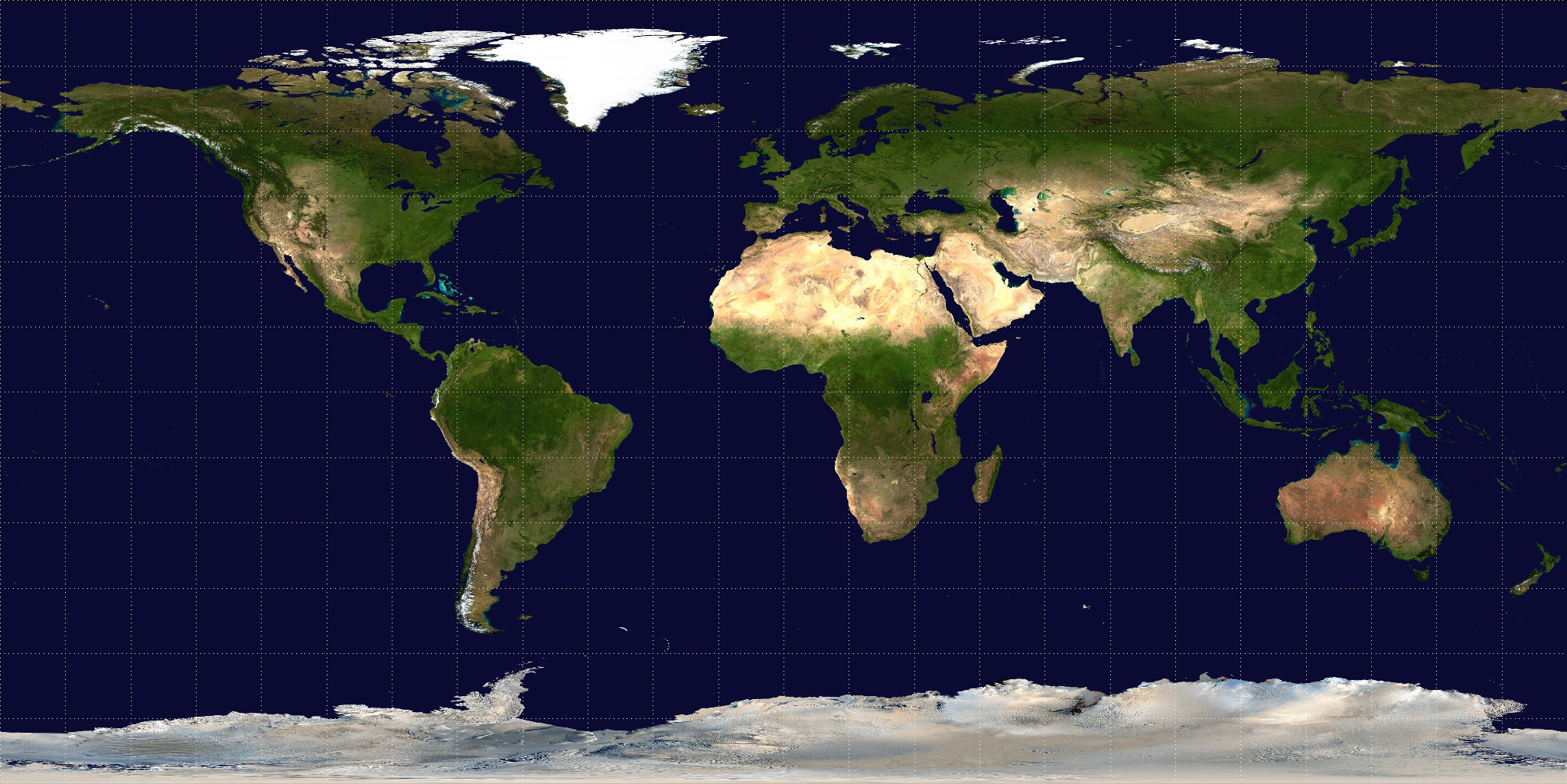

Transformation using the default settings. Since a 180 degree (in this case) fisheye captures half the visible universe from a single position, it makes sense that it occupies half of a spherical (equirectangular) projection, which captures the entire visible universe from a single position.

In this case the camera is not perfectly horizontal; this and other adjustments can be made. In the example here the lens was pointing slightly downwards, so the correction results in more of the south pole being visible and less of the north pole.

Note that the fisheye angle is not limited to 180 degrees. Indeed, one application for this is in the pipeline to create 360 degree spherical panoramas from 2 cameras, each with a fisheye lens with a field of view greater than 180 degrees to provide a blend zone.

This can be readily implemented in the OpenGL Shader Language. The following example was created in the Quartz Composer Core Image Filter.

// Fisheye to spherical conversion

// Assumes the fisheye image is square, centered, and the circle fills the image.

// Output (spherical) image should have 2:1 aspect.

// Strange (but helpful) that atan() == atan2(), normally they are different.

kernel vec4 fish2sphere(sampler src)

{

vec2 pfish;

float theta,phi,r;

vec3 psph;

float FOV = 3.141592654; // FOV of the fisheye, eg: 180 degrees

float width = samplerSize(src).x;

float height = samplerSize(src).y;

// Polar angles

theta = 2.0 * 3.14159265 * (destCoord().x / width - 0.5); // -pi to pi

phi = 3.14159265 * (destCoord().y / height - 0.5); // -pi/2 to pi/2

// Vector in 3D space

psph.x = cos(phi) * sin(theta);

psph.y = cos(phi) * cos(theta);

psph.z = sin(phi);

// Calculate fisheye angle and radius

theta = atan(psph.z,psph.x);

phi = atan(sqrt(psph.x*psph.x+psph.z*psph.z),psph.y);

r = width * phi / FOV;

// Pixel in fisheye space

pfish.x = 0.5 * width + r * cos(theta);

pfish.y = 0.5 * width + r * sin(theta);

return sample(src, pfish);

}

The transformation can be performed in real time using warp mesh files for software such as warpplayer or the VLC equivalent, VLCwarp. A sample mesh file is given here: fish2sph.data. The result in action is shown below.

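Mathematically, the algorithm above converts each pixel of the panorama (equirectangular projection) into a pair of angles, maps those angles to a point on an ideal hemisphere, and then maps that point to a pixel in the fisheye image. This is how the fisheye image is converted into a panorama.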
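
The three steps above can be sketched on the CPU as follows. This is a minimal C++ sketch that mirrors the shader's arithmetic, not the fish2sphere source. The names `Pixel`, `equirectToFisheye` and `insideFisheye` are hypothetical, and the code assumes a square fisheye image whose circle fills the frame and a 2:1 output panorama.

```cpp
#include <cmath>

// Hypothetical helper type and names for illustration only.
struct Pixel { double x, y; };

const double kPi = 3.14159265358979323846;

// Map an output (equirectangular) pixel (u, v) to a source fisheye pixel.
// outW x outH: panorama size; fishW: width of the square fisheye image;
// fov: fisheye aperture in radians.
Pixel equirectToFisheye(double u, double v, double outW, double outH,
                        double fishW, double fov) {
    // Step 1: pixel -> longitude and latitude.
    double theta = 2.0 * kPi * (u / outW - 0.5);  // -pi .. pi
    double phi   = kPi * (v / outH - 0.5);        // -pi/2 .. pi/2

    // Step 2: angles -> point on the unit sphere (y is the lens axis).
    double px = std::cos(phi) * std::sin(theta);
    double py = std::cos(phi) * std::cos(theta);
    double pz = std::sin(phi);

    // Step 3: point -> polar coordinates in the fisheye image.
    double ang = std::atan2(pz, px);                           // direction in the image plane
    double off = std::atan2(std::sqrt(px * px + pz * pz), py); // angle from the lens axis
    double r   = fishW * off / fov;                            // radius grows linearly with angle

    return { 0.5 * fishW + r * std::cos(ang),
             0.5 * fishW + r * std::sin(ang) };
}

// True if the pixel lies inside the fisheye circle (radius fishW / 2).
bool insideFisheye(Pixel p, double fishW) {
    double dx = p.x - 0.5 * fishW;
    double dy = p.y - 0.5 * fishW;
    return dx * dx + dy * dy <= 0.25 * fishW * fishW;
}
```

With a 180 degree aperture, the centre of the panorama maps to the centre of the fisheye image, and a pixel at longitude 90 degrees maps to the rim of the fisheye circle. Longitudes beyond that fall outside the circle, which is why the fisheye fills only the middle half of the panorama.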

Note that the algorithm above uses a left-handed coordinate system, so in practice you may need to convert it.
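
One common way to move between left-handed and right-handed conventions is to negate exactly one axis. The sketch below illustrates this. The `Vec3` type and the function names are hypothetical, and the choice of the z axis is an assumption, since the axis to flip depends on the conventions of the target framework.

```cpp
// Hypothetical helper type for illustration only.
struct Vec3 { double x, y, z; };

// Negating exactly one axis flips the handedness of the coordinate system.
// Flipping z here is an arbitrary choice for the sketch.
Vec3 flipHandedness(Vec3 v) {
    return { v.x, v.y, -v.z };
}

// Scalar triple product a . (b x c). Its sign encodes the orientation
// (handedness) of the ordered triple (a, b, c).
double tripleProduct(Vec3 a, Vec3 b, Vec3 c) {
    return a.x * (b.y * c.z - b.z * c.y)
         + a.y * (b.z * c.x - b.x * c.z)
         + a.z * (b.x * c.y - b.y * c.x);
}
```

Applying `flipHandedness` to all three basis vectors changes the sign of their triple product, which confirms that the orientation has been reversed.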

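CUDA implementation

1 A first parallel program: overlaying two images

Overlaying two images looks simple, but it actually involves thread-level parallelism, block-level parallelism, copies between device memory and host memory, memory overflow, and similar issues.

First, add the CI_RESULT overlyTwoImg(Mat &img1 , Mat &img2) interface to the header file: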
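
To make the thread and block bookkeeping concrete, here is a minimal CPU sketch in C++ that emulates a 2D kernel launch. It is not the author's CUDA code. The names `divUp` and `emulateLaunch` are hypothetical, and the block size is an arbitrary assumption. It uses the usual index arithmetic (`x = threadIdx.x + blockIdx.x * blockDim.x`) and shows why a bounds check is needed when the image size is not a multiple of the block size.

```cpp
#include <vector>

// Ceiling division: number of blocks needed to cover n elements.
int divUp(int n, int block) {
    return (n + block - 1) / block;
}

// Emulate a 2D launch on the CPU and count how often each pixel is visited.
// blockW x blockH plays the role of blockDim; the grid is sized with divUp.
std::vector<int> emulateLaunch(int width, int height, int blockW, int blockH) {
    std::vector<int> visits(width * height, 0);
    int gridW = divUp(width, blockW);
    int gridH = divUp(height, blockH);
    for (int by = 0; by < gridH; ++by)
        for (int bx = 0; bx < gridW; ++bx)
            for (int ty = 0; ty < blockH; ++ty)
                for (int tx = 0; tx < blockW; ++tx) {
                    int x = tx + bx * blockW;  // global column index
                    int y = ty + by * blockH;  // global row index
                    // Surplus threads in the last row/column of blocks must
                    // exit here, or they would index outside the image.
                    if (x >= width || y >= height) continue;
                    ++visits[x + y * width];
                }
    return visits;
}
```

For a 10 x 7 image with 4 x 4 blocks, the grid is 3 x 2 blocks (96 threads for 70 pixels). Every pixel is visited exactly once, and the 26 surplus threads are skipped by the bounds check.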

#ifndef _CUDA_H_

#define _CUDA_H_

#include <stdio.h>

#include "include_openCV\opencv2\opencv.hpp"

using namespace cv;

typedef enum {

CI_OK,

CI_ERROR

}CI_RESULT;

class input_engine {

public:

CI_RESULT initCUDA();

CI_RESULT overlyTwoImg(Mat &img1 , Mat &img2);

private:

char* err_str;

};

#endif // !_CUDA_H_

Then write the actual implementation in the CUDA file. The key code is as follows:

__global__ void overlyImg(uchar* a, uchar* b, const int width , const int height) {

int x = threadIdx.x + blockIdx.x*blockDim.x;

int y = threadIdx.y + blockIdx.y*blockDim.y;

if ((x >= width) || (y >= height)) { return; }

int t = (int)(a[(x + y*width
