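An autoencoder is a three-layer feed-forward neural network. The input layer is mapped to a hidden-layer representation, and from that representation an output layer that approximates the input is reconstructed. The hidden layer is the feature layer: it holds the features the network has learned from the input, and these features may represent the data better than the raw input does. Training a classifier or regressor on the learned features can therefore give better results, and this is the idea behind self-taught learning and unsupervised feature learning.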
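A minimal sketch of the three-layer forward pass, assuming sigmoid activations as in the UFLDL sparse autoencoder. The weights are small hand-picked values for illustration only, not trained parameters, and tying the decoder to the encoder (`W2 = W1'`) is a simplification of this sketch; the exercise below learns `W2` separately. The hidden activation `a` is the learned feature and `xHat` is the reconstruction of `x`.

```matlab
% Hypothetical toy autoencoder: 4 input units -> 2 hidden units -> 4 output units.
sigmoid = @(z) 1 ./ (1 + exp(-z));

W1 = [ 0.5 -0.2  0.1  0.3;      % encoder weights (hidden x input)
      -0.4  0.6 -0.1  0.2];
b1 = [0.1; -0.1];               % encoder bias
W2 = W1';                       % decoder weights (input x hidden), tied only for brevity
b2 = zeros(4, 1);               % decoder bias

x = [1; 0; 0.5; 0.2];           % one input example as a column vector

a    = sigmoid(W1 * x + b1);    % hidden layer: the feature representation
xHat = sigmoid(W2 * a + b2);    % output layer: reconstruction of x

reconError = 0.5 * sum((xHat - x) .^ 2);   % squared reconstruction error for this example
fprintf('reconstruction error: %.4f\n', reconError);
```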

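This is especially useful when we have plenty of unlabeled data. We can use an autoencoder to learn a feature representation from the unlabeled data, use that representation to extract features from the labeled data, and then train and predict with a supervised algorithm such as softmax regression. In other words, unsupervised feature learning comes first and supervised learning second. If the unlabeled data come from the same distribution as the labeled data, the setting is semi-supervised learning; if they come from a different distribution, it is self-taught learning. For example, if the goal is to distinguish motorcycles from cars and the unlabeled images are also all motorcycles or cars, the problem is semi-supervised learning; if the unlabeled images show something else, it is self-taught learning.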

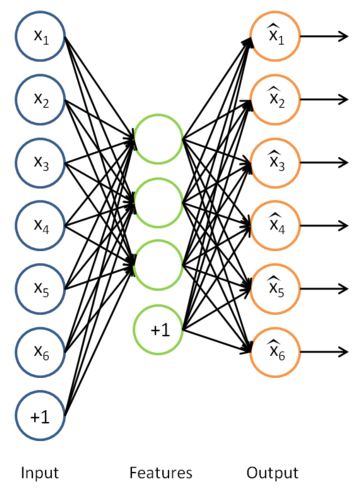

The structure of the autoencoder network is shown below:

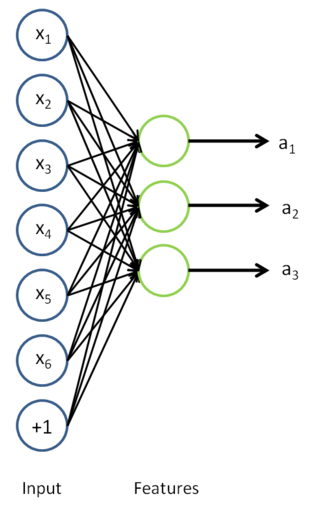

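Training the autoencoder gives us the parameters W1 and b1 of the feature representation. We then use W1 and b1 to extract features from the labeled data, that is, to compute the hidden-layer activations for each labeled example.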

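The experiment again uses the MNIST dataset. This time the digits 5-9 are treated as unlabeled data for learning the feature representation, and the digits 0-4 are split into a training set and a test set for the classifier. With the learned features the prediction accuracy is 98.32%, compared with 96.74% when the raw pixels are used directly as input.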

```matlab
%% CS294A/CS294W Self-taught Learning Exercise

%  Instructions
%  ------------
%
%  This file contains code that helps you get started on
%  self-taught learning. You will need to complete code in feedForwardAutoencoder.m
%  You will also need to have implemented sparseAutoencoderCost.m and
%  softmaxCost.m from previous exercises.
%
%% ======================================================================
%  STEP 0: Here we provide the relevant parameter values that will
%  allow your sparse autoencoder to get good filters; you do not need to
%  change the parameters below.

inputSize  = 28 * 28;
numLabels  = 5;
hiddenSize = 200;
sparsityParam = 0.1;   % desired average activation of the hidden units.
                       % (This was denoted by the Greek letter rho, which looks like a lower-case "p",
                       %  in the lecture notes).
lambda  = 3e-3;        % weight decay parameter
beta    = 3;           % weight of sparsity penalty term
maxIter = 400;
```
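For reference, here is how the three hyperparameters above enter the sparse autoencoder objective. This is restated from the UFLDL sparse autoencoder notes rather than from this post (which does not show sparseAutoencoderCost.m), with $m$ unlabeled examples, $s_2$ = `hiddenSize` hidden units, $\lambda$ = `lambda`, $\beta$ = `beta` and $\rho$ = `sparsityParam`; note that weight decay applies to the weights $W$ only, not to the biases.

$$
J_{\text{sparse}}(W,b) = \frac{1}{m}\sum_{i=1}^{m}\frac{1}{2}\left\lVert \hat{x}^{(i)} - x^{(i)} \right\rVert^2
+ \frac{\lambda}{2}\sum_{l=1}^{2}\sum_{i}\sum_{j}\left(W^{(l)}_{ji}\right)^2
+ \beta\sum_{j=1}^{s_2}\mathrm{KL}\left(\rho \,\Vert\, \hat{\rho}_j\right)
$$

$$
\hat{\rho}_j = \frac{1}{m}\sum_{i=1}^{m} a^{(2)}_j\left(x^{(i)}\right), \qquad
\mathrm{KL}\left(\rho \,\Vert\, \hat{\rho}_j\right) = \rho\log\frac{\rho}{\hat{\rho}_j} + (1-\rho)\log\frac{1-\rho}{1-\hat{\rho}_j}
$$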

```matlab
%% ======================================================================
%  STEP 1: Load data from the MNIST database
%
%  This loads our training and test data from the MNIST database files.
%  We have sorted the data for you so that you will not have to
%  change it.

% Load MNIST database files
mnistData   = loadMNISTImages('mnist/train-images-idx3-ubyte');
mnistLabels = loadMNISTLabels('mnist/train-labels-idx1-ubyte');

% Simulate a labeled and an unlabeled set
labeledSet   = find(mnistLabels >= 0 & mnistLabels <= 4);
unlabeledSet = find(mnistLabels >= 5);   % digits 5-9 form the unlabeled set used to learn the features

% Split the labeled data in half: one half trains softmax, the other half tests it
numTrain = round(numel(labeledSet)/2);
trainSet = labeledSet(1:numTrain);
testSet  = labeledSet(numTrain+1:end);

unlabeledData = mnistData(:, unlabeledSet);

trainData   = mnistData(:, trainSet);
trainLabels = mnistLabels(trainSet)' + 1; % Shift Labels to the Range 1-5

testData   = mnistData(:, testSet);
testLabels = mnistLabels(testSet)' + 1;   % Shift Labels to the Range 1-5

% Output Some Statistics
fprintf('# examples in unlabeled set: %d\n', size(unlabeledData, 2));
fprintf('# examples in supervised training set: %d\n\n', size(trainData, 2));
fprintf('# examples in supervised testing set: %d\n\n', size(testData, 2));
```
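The split logic in STEP 1 can be checked on a tiny made-up label vector that stands in for `mnistLabels`. This standalone sketch shows that the labeled pool is cut in file order (no shuffling) into disjoint train and test halves, and that labels 0-4 become class indices 1-5.

```matlab
% Hypothetical toy labels standing in for mnistLabels (digits 0-9, 12 examples).
labels = [0 5 3 9 1 7 4 2 6 8 0 4]';

labeledIdx   = find(labels >= 0 & labels <= 4);   % digits 0-4 -> labeled pool
unlabeledIdx = find(labels >= 5);                 % digits 5-9 -> unlabeled pool

numTrain = round(numel(labeledIdx) / 2);          % first half trains, second half tests
trainIdx = labeledIdx(1:numTrain);
testIdx  = labeledIdx(numTrain+1:end);

trainY = labels(trainIdx)' + 1;   % shift 0-4 to 1-5, since MATLAB class indices start at 1
testY  = labels(testIdx)' + 1;
```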

```matlab
%% ======================================================================
%  STEP 2: Train the sparse autoencoder
%  This trains the sparse autoencoder on the unlabeled training
%  images.

%  Randomly initialize the parameters
theta = initializeParameters(hiddenSize, inputSize);

%% ----------------- YOUR CODE HERE ----------------------
%  Find opttheta by running the sparse autoencoder on
%  unlabeledData

% Train the sparse autoencoder with minFunc's L-BFGS; this needs the
% sparse autoencoder cost/gradient code (sparseAutoencoderCost.m)
addpath minFunc/
options = struct;
options.Method  = 'lbfgs';
options.maxIter = maxIter;
options.display = 'on';
[opttheta, cost] = minFunc( @(p) sparseAutoencoderCost(p, ...
                                   inputSize, hiddenSize, ...
                                   lambda, sparsityParam, ...
                                   beta, unlabeledData), ...
                              theta, options);

%% -----------------------------------------------------

% Visualize weights
W1 = reshape(opttheta(1:hiddenSize * inputSize), hiddenSize, inputSize);
display_network(W1');
```
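sparseAutoencoderCost.m comes from the earlier exercise and is not shown in this post. As a standalone sketch (toy activations, not MNIST), the sparsity part of that cost and the extra term it contributes to the hidden-layer delta during backpropagation can be computed as follows; the formulas follow the UFLDL notes, and the variable names are illustrative.

```matlab
% Toy hidden activations: 2 hidden units x 4 examples, one column per example.
A = [0.05 0.15 0.10 0.10;     % hidden unit 1: mean activation 0.1
     0.60 0.40 0.50 0.50];    % hidden unit 2: mean activation 0.5
rho  = 0.1;                   % target average activation (sparsityParam)
beta = 3;                     % weight of the sparsity penalty

rhoHat = mean(A, 2);          % average activation of each hidden unit over the batch
kl = rho .* log(rho ./ rhoHat) + (1 - rho) .* log((1 - rho) ./ (1 - rhoHat));
sparsityPenalty = beta * sum(kl);   % term added to the autoencoder cost

% Term added to each hidden unit's backpropagated error before multiplying by f'(z)
sparsityDelta = beta * (-rho ./ rhoHat + (1 - rho) ./ (1 - rhoHat));
```

Unit 1 already averages exactly `rho`, so its KL term and delta are zero; only unit 2, which is too active, is penalized.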

```matlab
%%======================================================================
%% STEP 3: Extract Features from the Supervised Dataset
%
%  You need to complete the code in feedForwardAutoencoder.m so that the
%  following command will extract features from the data.

trainFeatures = feedForwardAutoencoder(opttheta, hiddenSize, inputSize, ...
                                       trainData);
testFeatures  = feedForwardAutoencoder(opttheta, hiddenSize, inputSize, ...
                                       testData);
```
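The post does not show the body of feedForwardAutoencoder.m. A minimal sketch of what it computes is below, written as a standalone script on a toy parameter vector so it runs on its own. It assumes `theta` is packed as `[W1(:); W2(:); b1(:); b2(:)]`, the layout of initializeParameters in the UFLDL starter code, and sigmoid hidden units; inside feedForwardAutoencoder.m, the three lines between the dashed comments would form the function body.

```matlab
% Toy sizes: 3 visible (input) units, 2 hidden units, 4 examples.
hiddenSize  = 2;
visibleSize = 3;
data = [0 1 0 1;
        0 0 1 1;
        1 1 1 1];

% Toy parameter vector packed as [W1(:); W2(:); b1(:); b2(:)] (assumed layout).
W1true = [0.2 -0.3  0.1;
          0.4  0.0 -0.2];
W2true = zeros(visibleSize, hiddenSize);
b1true = [0.05; -0.05];
b2true = zeros(visibleSize, 1);
theta  = [W1true(:); W2true(:); b1true(:); b2true(:)];

% --- body of feedForwardAutoencoder(theta, hiddenSize, visibleSize, data) ---
W1 = reshape(theta(1:hiddenSize*visibleSize), hiddenSize, visibleSize);
b1 = theta(2*hiddenSize*visibleSize+1 : 2*hiddenSize*visibleSize+hiddenSize);
activation = 1 ./ (1 + exp(-bsxfun(@plus, W1 * data, b1)));   % hiddenSize x m features
% -----------------------------------------------------------------------------
```

Only W1 and b1 are needed here: the decoder half (W2, b2) matters for training but is discarded once the features are extracted.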

```matlab
%%======================================================================
%% STEP 4: Train the softmax classifier

softmaxModel = struct;
%% ----------------- YOUR CODE HERE ----------------------
%  Use softmaxTrain.m from the previous exercise to train a multi-class
%  classifier.
%  Use lambda = 1e-4 for the weight regularization for softmax
%  You need to compute softmaxModel using softmaxTrain on trainFeatures and
%  trainLabels

% Softmax training. The input dimension is hiddenSize because the features
% are the hidden activations; separate variable names keep the autoencoder's
% lambda and inputSize from being overwritten.
softmaxLambda   = 1e-4;
options.maxIter = 100;
softmaxModel = softmaxTrain(hiddenSize, numLabels, softmaxLambda, ...
                            trainFeatures, trainLabels, options);

%% -----------------------------------------------------
```
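softmaxTrain.m and softmaxCost.m come from the previous exercise and are not shown here. As a standalone sketch, assuming the standard softmax regression cost from the UFLDL notes (average cross-entropy plus weight decay), this computes the class probabilities and the cost on toy numbers; subtracting each column's maximum score before `exp` leaves the probabilities unchanged but prevents overflow.

```matlab
% Toy example: 3 classes, 2 features, 2 examples. In the exercise the features
% are the 200 hidden activations and there are numLabels = 5 classes.
theta = [ 1.0 -0.5;
          0.2  0.3;
         -0.7  0.9];            % numClasses x numFeatures
features = [0.9 0.1;
            0.2 0.8];           % numFeatures x m
labels = [1 3];                 % true class of each example
lambda = 1e-4;                  % weight decay, same value as in STEP 4

scores = theta * features;                             % numClasses x m
scores = bsxfun(@minus, scores, max(scores, [], 1));   % subtract each column's max so exp cannot overflow
probs  = exp(scores);
probs  = bsxfun(@rdivide, probs, sum(probs, 1));       % normalize: each column sums to 1

m    = size(features, 2);
idx  = sub2ind(size(probs), labels, 1:m);              % linear index of the true class in each column
cost = -mean(log(probs(idx))) + (lambda / 2) * sum(theta(:) .^ 2);
```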

```matlab
%%======================================================================
%% STEP 5: Testing

%% ----------------- YOUR CODE HERE ----------------------
%  Compute Predictions on the test set (testFeatures) using softmaxPredict
%  and softmaxModel

% Use the prediction function from the softmax exercise
[pred] = softmaxPredict(softmaxModel, testFeatures);

%% -----------------------------------------------------

% Classification Score
fprintf('Test Accuracy: %f%%\n', 100*mean(pred(:) == testLabels(:)));

% (note that we shift the labels by 1, so that digit 0 now corresponds to
%  label 1)
%
% Accuracy is the proportion of correctly classified images
% The results for our implementation were:
%
% Accuracy: 98.3%
```
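The prediction and scoring step can be sketched on toy probabilities (made-up numbers, standalone): the predicted class is the row index of the largest entry in each column, and accuracy is the fraction of matches. Because softmax is monotonic, taking the argmax of the raw scores `theta * data` gives the same predictions as taking it over the probabilities.

```matlab
% Toy class probabilities: 3 classes x 4 test examples (columns sum to 1).
probs = [0.7 0.1 0.2 0.3;
         0.2 0.6 0.3 0.3;
         0.1 0.3 0.5 0.4];
testY = [1 2 3 1];                     % true labels, already shifted to start at 1

[~, pred] = max(probs, [], 1);         % predicted class = row of the column maximum
acc = mean(pred(:) == testY(:));       % fraction of correctly classified examples
fprintf('Test Accuracy: %f%%\n', 100 * acc);
```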

Reference:

http://ufldl.stanford.edu/wiki/index.php/Self-Taught_Learning_to_Deep_Networks
