1. Basic Concepts

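Decision trees are a common family of machine learning methods. As the name suggests, a decision tree makes decisions based on a tree structure.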

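In general, a decision tree contains one root node, a number of internal nodes, and a number of leaf nodes. Leaf nodes correspond to decision outcomes, and every other node corresponds to a test on an attribute. The samples at a node are distributed among its child nodes according to the outcome of that node's attribute test. The root node contains the full sample set, and each path from the root to a leaf corresponds to a sequence of tests.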
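To make "each root-to-leaf path is a sequence of tests" concrete, here is a minimal sketch. It assumes the nested-dict representation this post uses later for its trees, `{feature label: {feature value: subtree or class label}}`; the helper `root_to_leaf_paths` is a hypothetical name, not part of the original code.

```python
def root_to_leaf_paths(tree, prefix=()):
    """Yield (tests, label) for every root-to-leaf path.

    Each test is an (attribute, value) pair; any non-dict node is a leaf.
    """
    if not isinstance(tree, dict):
        yield list(prefix), tree  # reached a leaf: emit the tests that led here
        return
    attribute = next(iter(tree))  # the attribute tested at this node
    for value, child in tree[attribute].items():
        yield from root_to_leaf_paths(child, prefix + ((attribute, value),))


tree = {'no surfacing': {0: 'no', 1: {'flippers': {0: 'no', 1: 'yes'}}}}
for tests, label in root_to_leaf_paths(tree):
    print(tests, '->', label)
```

This prints one line per leaf, for example `[('no surfacing', 1), ('flippers', 1)] -> yes`: the decision "yes" is reached by testing `no surfacing` and then `flippers`.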

2. The Decision Tree Algorithm

(1) Computing the Information Entropy

```python
from math import log
import operator

# Compute the Shannon entropy of a data set
def calcShannonEnt(dataSet):
    numEntries = len(dataSet)
    labelCounts = {}
    for featVec in dataSet:
        currentLabel = featVec[-1]
        if currentLabel not in labelCounts:  # create a dict entry for every possible class
            labelCounts[currentLabel] = 0
        labelCounts[currentLabel] += 1
    shannonEnt = 0.0
    for key in labelCounts:
        prob = float(labelCounts[key]) / numEntries
        shannonEnt -= prob * log(prob, 2)  # logarithm base 2
    return shannonEnt  # the higher the entropy, the more mixed the classes

# Create the data set: each row holds the value of 'no surfacing',
# the value of 'flippers', and the class label
def createDataSet():
    dataSet = [[1, 1, 'yes'],
               [1, 1, 'yes'],
               [1, 0, 'no'],
               [0, 1, 'no'],
               [0, 1, 'no']]
    labels = ['no surfacing', 'flippers']
    return dataSet, labels

dataSet, labels = createDataSet()
print("dataSet:")
print(dataSet)
print()
print("Entropy:")
print(calcShannonEnt(dataSet))
```

Output of the entropy computation:

```
dataSet:
[[1, 1, 'yes'], [1, 1, 'yes'], [1, 0, 'no'], [0, 1, 'no'], [0, 1, 'no']]

Entropy:
0.9709505944546686
```
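calcShannonEnt() computes the Shannon entropy of the class labels, where p_k is the fraction of samples in class k. Worked by hand for the five-sample data set above (2 "yes", 3 "no"):

```latex
H(D) = -\sum_{k} p_k \log_2 p_k
     = -\left( \tfrac{2}{5}\log_2\tfrac{2}{5} + \tfrac{3}{5}\log_2\tfrac{3}{5} \right)
     \approx 0.5288 + 0.4422 = 0.9710
```

The entropy is 0 when every sample belongs to one class and reaches its maximum of 1 bit for an even two-class split, so 0.971 means this set is close to maximally mixed.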

(2) Splitting the Data Set

```python
"""
Difference between extend and append:

>>> a = [1, 2, 3]
>>> b = [2, 3, 4]
>>> a.append(b)
>>> a
[1, 2, 3, [2, 3, 4]]

>>> a = [1, 2, 3]
>>> b = [2, 3, 4]
>>> a.extend(b)
>>> a
[1, 2, 3, 2, 3, 4]
"""
from math import log
import operator

def calcShannonEnt(dataSet):  # compute the Shannon entropy
    numEntries = len(dataSet)
    labelCounts = {}
    for featVec in dataSet:
        currentLabel = featVec[-1]
        if currentLabel not in labelCounts:  # create a dict entry for every possible class
            labelCounts[currentLabel] = 0
        labelCounts[currentLabel] += 1
    shannonEnt = 0.0
    for key in labelCounts:
        prob = float(labelCounts[key]) / numEntries
        shannonEnt -= prob * log(prob, 2)  # logarithm base 2
    return shannonEnt

def createDataSet():  # each row: 'no surfacing' value, 'flippers' value, class label
    dataSet = [[1, 1, 'yes'],
               [1, 1, 'yes'],
               [1, 0, 'no'],
               [0, 1, 'no'],
               [0, 1, 'no']]
    labels = ['no surfacing', 'flippers']
    return dataSet, labels

# Split the data set. dataSet: the data; axis: index of the feature to split on;
# value: the feature value whose rows are returned (with column `axis` removed)
def splitDataSet(dataSet, axis, value):
    retDataSet = []
    for featVec in dataSet:
        if featVec[axis] == value:
            reducedFeatVec = featVec[:axis]             # columns before axis (a new list)
            reducedFeatVec.extend(featVec[axis + 1:])   # plus the columns after axis
            retDataSet.append(reducedFeatVec)
    return retDataSet

dataSet, labels = createDataSet()
print("dataSet:")
print(dataSet)

retDataSet = splitDataSet(dataSet, 1, 0)
print("splitDataSet(dataSet,1,0):")
print(retDataSet)

retDataSet = splitDataSet(dataSet, 1, 1)
print("splitDataSet(dataSet,1,1):")
print(retDataSet)

retDataSet = splitDataSet(dataSet, 0, 1)
print("splitDataSet(dataSet,0,1):")
print(retDataSet)

retDataSet = splitDataSet(dataSet, 0, 0)
print("splitDataSet(dataSet,0,0):")
print(retDataSet)
```

Output of the splits:

```
dataSet:
[[1, 1, 'yes'], [1, 1, 'yes'], [1, 0, 'no'], [0, 1, 'no'], [0, 1, 'no']]
splitDataSet(dataSet,1,0):
[[1, 'no']]
splitDataSet(dataSet,1,1):
[[1, 'yes'], [1, 'yes'], [0, 'no'], [0, 'no']]
splitDataSet(dataSet,0,1):
[[1, 'yes'], [1, 'yes'], [0, 'no']]
splitDataSet(dataSet,0,0):
[[1, 'no'], [1, 'no']]
```
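splitDataSet() scans the whole data set once per feature value, so splitting on every value of a feature means several passes. As a point of comparison, a hypothetical helper `partition_by_feature` (not from the original post) can build all the subsets in a single pass, dropping column `axis` the same way:

```python
from collections import defaultdict


def partition_by_feature(dataSet, axis):
    """Group rows by their value in column `axis`, removing that column.

    Returns {value: subset}; each subset matches splitDataSet(dataSet, axis, value).
    """
    groups = defaultdict(list)
    for featVec in dataSet:
        # slicing builds a new list, so the original rows are not modified
        groups[featVec[axis]].append(featVec[:axis] + featVec[axis + 1:])
    return dict(groups)


dataSet = [[1, 1, 'yes'], [1, 1, 'yes'], [1, 0, 'no'], [0, 1, 'no'], [0, 1, 'no']]
print(partition_by_feature(dataSet, 1))
# {1: [[1, 'yes'], [1, 'yes'], [0, 'no'], [0, 'no']], 0: [[1, 'no']]}
```

The keys appear in the order the values are first seen in the data, which is why `1` comes before `0` here.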

(3) Choosing the Best Way to Split the Data Set

```python
from math import log
import operator

def calcShannonEnt(dataSet):  # compute the Shannon entropy
    numEntries = len(dataSet)
    labelCounts = {}
    for featVec in dataSet:
        currentLabel = featVec[-1]
        if currentLabel not in labelCounts:  # create a dict entry for every possible class
            labelCounts[currentLabel] = 0
        labelCounts[currentLabel] += 1
    shannonEnt = 0.0
    for key in labelCounts:
        prob = float(labelCounts[key]) / numEntries
        shannonEnt -= prob * log(prob, 2)  # logarithm base 2
    return shannonEnt

def createDataSet():  # each row: 'no surfacing' value, 'flippers' value, class label
    dataSet = [[1, 1, 'yes'],
               [1, 1, 'yes'],
               [1, 0, 'no'],
               [0, 1, 'no'],
               [0, 1, 'no']]
    labels = ['no surfacing', 'flippers']
    return dataSet, labels

# Split the data set. dataSet: the data; axis: index of the feature to split on;
# value: the feature value whose rows are returned (with column `axis` removed)
def splitDataSet(dataSet, axis, value):
    retDataSet = []
    for featVec in dataSet:
        if featVec[axis] == value:
            reducedFeatVec = featVec[:axis]
            reducedFeatVec.extend(featVec[axis + 1:])
            retDataSet.append(reducedFeatVec)
    return retDataSet

# Loop over every feature, split on it with splitDataSet(), compute the entropy
# of the result, and return the index of the feature with the highest information gain
def chooseBestFeatureToSplit(dataSet):
    numFeatures = len(dataSet[0]) - 1      # number of features (the last column is the class label)
    baseEntropy = calcShannonEnt(dataSet)  # entropy of the whole data set before splitting
    bestInfoGain = 0.0
    bestFeature = -1
    for i in range(numFeatures):
        featList = [example[i] for example in dataSet]
        uniqueVals = set(featList)
        newEntropy = 0.0
        for value in uniqueVals:  # weighted entropy after splitting on feature i, summed over its values
            subDataSet = splitDataSet(dataSet, i, value)
            prob = len(subDataSet) / float(len(dataSet))
            newEntropy += prob * calcShannonEnt(subDataSet)
        infoGain = baseEntropy - newEntropy  # information gain of feature i
        # print the feature index and its information gain (rounded to 4 decimals)
        print("feature %d: information gain = %.4f" % (i, infoGain))
        if infoGain > bestInfoGain:
            bestInfoGain = infoGain
            bestFeature = i
    return bestFeature

dataSet, labels = createDataSet()
print("dataSet:")
print(dataSet)
bestFeature = chooseBestFeatureToSplit(dataSet)
print("bestFeature:")
print(bestFeature)
```

Output (best split):

```
dataSet:
[[1, 1, 'yes'], [1, 1, 'yes'], [1, 0, 'no'], [0, 1, 'no'], [0, 1, 'no']]
feature 0: information gain = 0.4200
feature 1: information gain = 0.1710
bestFeature:
0
```
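chooseBestFeatureToSplit() computes, for each feature a, the information gain: the entropy before the split minus the size-weighted entropy of the subsets D^v that the split produces. Worked by hand for the five-sample data set, where H(D) ≈ 0.9710:

```latex
\mathrm{Gain}(D, a) = H(D) - \sum_{v} \frac{|D^v|}{|D|}\, H(D^v)

\mathrm{Gain}(D, \text{no surfacing}) \approx 0.9710 - \left( \tfrac{2}{5}\cdot 0 + \tfrac{3}{5}\cdot 0.9183 \right) \approx 0.9710 - 0.5510 = 0.4200

\mathrm{Gain}(D, \text{flippers}) \approx 0.9710 - \left( \tfrac{1}{5}\cdot 0 + \tfrac{4}{5}\cdot 1 \right) = 0.1710
```

Splitting on "no surfacing" leaves one pure subset (two "no") and one subset with 2 "yes" and 1 "no" (entropy 0.9183), while splitting on "flippers" leaves a four-sample subset split evenly between the classes (entropy 1). Feature 0 therefore has the larger gain and is chosen.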

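(4) Building the Decision Tree Recursively

Start with the original data set and split it on the best attribute. Because a feature can take more than two values, a split may produce more than two branches.

After the first split, the data is passed down each branch to the next node, where it can be split again, so the data set is processed recursively. The recursion stops when either of these conditions holds:

the program has used up every attribute available for splitting, or all instances in a branch belong to the same class.

If all instances have the same class, the node becomes a leaf (a terminating block), and any data that reaches that leaf is assigned the leaf's class.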

(5) The Tree-Building Function

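```python
# Build the tree. Two arguments: the data set and the list of feature labels
def createTree(dataSet, labels):
    classList = [example[-1] for example in dataSet]  # all class labels in the data set
    if classList.count(classList[0]) == len(classList):
        return classList[0]  # stop splitting when all class labels are identical
    if len(dataSet[0]) == 1:  # all features used up: return the most frequent class
        return majorityCnt(classList)
    bestFeat = chooseBestFeatureToSplit(dataSet)  # index of the best feature to split on
    bestFeatLabel = labels[bestFeat]
    myTree = {bestFeatLabel: {}}  # holds all the information of the tree
    # Copy the label list, minus the chosen feature, into the new list subLabels.
    # Python passes lists by reference, so deleting from `labels` in place
    # (del(labels[bestFeat])) would also change the caller's list; working on a
    # copy leaves the original list intact across calls to createTree().
    subLabels = labels[:bestFeat] + labels[bestFeat + 1:]
    featValues = [example[bestFeat] for example in dataSet]
    uniqueVals = set(featValues)
    for value in uniqueVals:
        # recurse on each subset and insert the returned subtree into myTree
        myTree[bestFeatLabel][value] = createTree(splitDataSet(dataSet, bestFeat, value), subLabels)
    return myTree
```

When all features have been used up but the samples at a node still belong to different classes, the leaf's class is decided by majority vote: the leaf is labeled with the class that most of its samples belong to.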
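majorityCnt() counts votes in a dict and sorts the counts. The same majority vote can be sketched with `collections.Counter` from the standard library; `majority_vote` is a hypothetical name, not part of the original code. On ties, `Counter.most_common` returns the label encountered first, which matches majorityCnt(), since Python's sort is stable even with `reverse=True` and dicts keep insertion order.

```python
from collections import Counter


def majority_vote(classList):
    """Return the most frequent class label in classList."""
    # most_common(1) returns a one-element list: [(label, count)]
    return Counter(classList).most_common(1)[0][0]


print(majority_vote(['no', 'yes', 'no']))  # no
```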

```python
def majorityCnt(classList):  # majority vote over the class labels
    classCount = {}
    for vote in classList:
        if vote not in classCount:
            classCount[vote] = 0
        classCount[vote] += 1
    sortedClassCount = sorted(classCount.items(), key=operator.itemgetter(1), reverse=True)
    return sortedClassCount[0][0]  # the label with the highest count
```

(6) Classifying with the Decision Tree
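The classify() function in this section descends one level per recursive call. The same walk can be sketched as a loop; `classify_iterative` is a hypothetical name, and the tree is the first tree from retrieveTree() later in this post, hard-coded so the block runs on its own. Like classify(), it raises `KeyError` when the test vector contains a feature value that never occurred in the training data.

```python
def classify_iterative(tree, featLabels, testVec):
    """Follow one branch per level of the nested-dict tree until a leaf is reached."""
    node = tree
    while isinstance(node, dict):
        featLabel = next(iter(node))                  # attribute tested at this node
        value = testVec[featLabels.index(featLabel)]  # the sample's value for that attribute
        node = node[featLabel][value]                 # KeyError if the value was never seen
    return node                                       # a leaf: the class label


tree = {'no surfacing': {0: 'no', 1: {'flippers': {0: 'no', 1: 'yes'}}}}
labels = ['no surfacing', 'flippers']
print(classify_iterative(tree, labels, [1, 0]))  # no
print(classify_iterative(tree, labels, [1, 1]))  # yes
```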

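```python
def classify(inputTree, featLabels, testVec):  # classify a test vector with the decision tree
    firstStr = next(iter(inputTree))           # feature tested at the root of this (sub)tree
    secondDict = inputTree[firstStr]
    featIndex = featLabels.index(firstStr)     # map the feature label to its index in testVec
    key = testVec[featIndex]
    valueOfFeat = secondDict[key]
    if isinstance(valueOfFeat, dict):          # internal node: keep descending
        classLabel = classify(valueOfFeat, featLabels, testVec)
    else:                                      # leaf node: this is the class
        classLabel = valueOfFeat
    return classLabel
```

(7) Storing the Decision Tree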
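pickle serializes objects to bytes, so under Python 3 the file has to be opened in binary mode (`'wb'` to write, `'rb'` to read). A minimal round trip, assuming a temporary directory and a made-up file name `tree.pkl`, shows that the tree comes back unchanged:

```python
import os
import pickle
import tempfile

tree = {'no surfacing': {0: 'no', 1: {'flippers': {0: 'no', 1: 'yes'}}}}

# 'tree.pkl' is a hypothetical file name inside a fresh temporary directory
path = os.path.join(tempfile.mkdtemp(), 'tree.pkl')
with open(path, 'wb') as fw:   # binary mode: pickle writes bytes
    pickle.dump(tree, fw)
with open(path, 'rb') as fr:
    loaded = pickle.load(fr)
print(loaded == tree)  # True
```

Saving the trained tree this way avoids rebuilding it every time it is needed for classification.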

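```python
import pickle

def storeTree(inputTree, filename):  # store the decision tree
    # pickle serializes objects so they can be saved on disk; it writes bytes, hence 'wb'
    with open(filename, 'wb') as fw:
        pickle.dump(inputTree, fw)

def grabTree(filename):  # read the tree back when it is needed
    with open(filename, 'rb') as fr:
        return pickle.load(fr)
```

3. Matplotlib Annotations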

Matplotlib provides an annotation tool, annotate(), that adds text annotations to a plot. The code below uses it to draw the decision tree.

```python
# encoding: utf-8
import matplotlib.pyplot as plt

decisionNode = dict(boxstyle="sawtooth", fc="0.8")  # box style for decision (internal) nodes
leafNode = dict(boxstyle="round4", fc="0.8")        # box style for leaf nodes
arrow_args = dict(arrowstyle="<-")

def getNumLeafs(myTree):  # recursively count the leaf nodes
    numLeafs = 0
    firstStr = next(iter(myTree))
    secondDict = myTree[firstStr]
    for key in secondDict:
        if isinstance(secondDict[key], dict):  # a dict is a subtree; anything else is a leaf
            numLeafs += getNumLeafs(secondDict[key])
        else:
            numLeafs += 1
    return numLeafs

def getTreeDepth(myTree):  # recursively compute the depth of the tree
    maxDepth = 0
    firstStr = next(iter(myTree))
    secondDict = myTree[firstStr]
    for key in secondDict:
        if isinstance(secondDict[key], dict):  # a dict is a subtree; anything else is a leaf
            thisDepth = 1 + getTreeDepth(secondDict[key])
        else:
            thisDepth = 1
        if thisDepth > maxDepth:
            maxDepth = thisDepth
    return maxDepth

def plotNode(nodeTxt, centerPt, parentPt, nodeType):  # draw a node annotation with an arrow from its parent
    createPlot.ax1.annotate(nodeTxt, xy=parentPt, xycoords='axes fraction',
                            xytext=centerPt, textcoords='axes fraction',
                            va="center", ha="center", bbox=nodeType, arrowprops=arrow_args)

def plotMidText(cntrPt, parentPt, txtString):  # write the branch label midway between parent and child
    xMid = (parentPt[0] - cntrPt[0]) / 2.0 + cntrPt[0]
    yMid = (parentPt[1] - cntrPt[1]) / 2.0 + cntrPt[1]
    createPlot.ax1.text(xMid, yMid, txtString, va="center", ha="center", rotation=30)

def plotTree(myTree, parentPt, nodeTxt):  # compute width and height, then lay out the tree recursively
    numLeafs = getNumLeafs(myTree)
    depth = getTreeDepth(myTree)
    firstStr = next(iter(myTree))
    # center this node horizontally over the leaves it spans
    cntrPt = (plotTree.xOff + (1.0 + float(numLeafs)) / 2.0 / plotTree.totalW, plotTree.yOff)
    plotMidText(cntrPt, parentPt, nodeTxt)
    plotNode(firstStr, cntrPt, parentPt, decisionNode)
    secondDict = myTree[firstStr]
    plotTree.yOff = plotTree.yOff - 1.0 / plotTree.totalD  # move down one level
    for key in secondDict:
        if isinstance(secondDict[key], dict):  # subtree: recurse
            plotTree(secondDict[key], cntrPt, str(key))
        else:  # leaf: draw it in the next horizontal slot
            plotTree.xOff = plotTree.xOff + 1.0 / plotTree.totalW
            plotNode(secondDict[key], (plotTree.xOff, plotTree.yOff), cntrPt, leafNode)
            plotMidText((plotTree.xOff, plotTree.yOff), cntrPt, str(key))
    plotTree.yOff = plotTree.yOff + 1.0 / plotTree.totalD  # move back up after this subtree

def createPlot(inTree):  # the actual drawing; the functions above compute the layout
    fig = plt.figure(1, facecolor='white')
    fig.clf()
    axprops = dict(xticks=[], yticks=[])
    createPlot.ax1 = plt.subplot(111, frameon=False, **axprops)  # no ticks
    # createPlot.ax1 = plt.subplot(111, frameon=False)  # ticks, for demo purposes
    plotTree.totalW = float(getNumLeafs(inTree))
    plotTree.totalD = float(getTreeDepth(inTree))
    plotTree.xOff = -0.5 / plotTree.totalW
    plotTree.yOff = 1.0
    plotTree(inTree, (0.5, 1.0), '')
    plt.show()

"""
def createPlot():  # simple demo: draw two nodes with arrows
    fig = plt.figure(1, facecolor='white')
    fig.clf()
    createPlot.ax1 = plt.subplot(111, frameon=False)  # ticks, for demo purposes
    plotNode('a decision node', (0.5, 0.1), (0.1, 0.5), decisionNode)
    plotNode('a leaf node', (0.8, 0.1), (0.3, 0.8), leafNode)
    plt.show()
"""
```

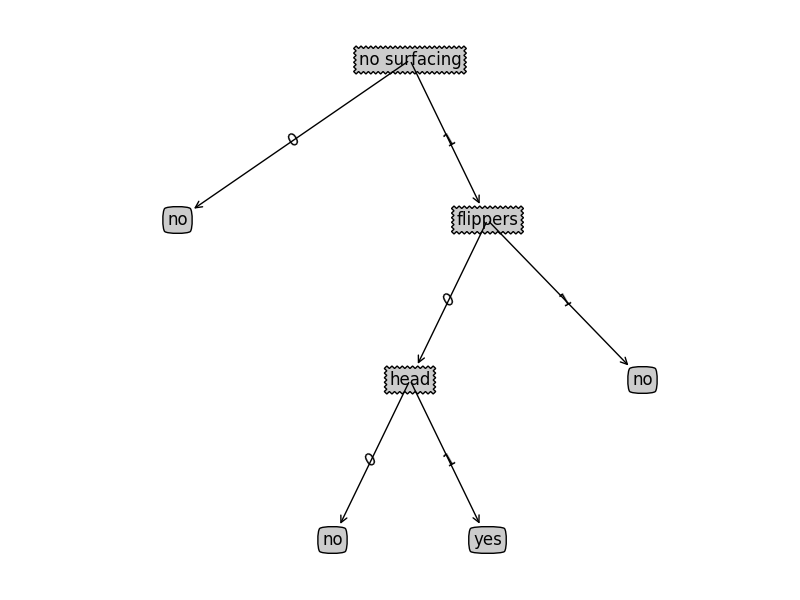

```python
def retrieveTree(i):  # return one of two pre-built trees
    listOfTrees = [{'no surfacing': {0: 'no', 1: {'flippers': {0: 'no', 1: 'yes'}}}},
                   {'no surfacing': {0: 'no', 1: {'flippers': {0: {'head': {0: 'no', 1: 'yes'}}, 1: 'no'}}}}]
    return listOfTrees[i]

createPlot(retrieveTree(1))
```
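plotTree() never stores coordinates: it starts plotTree.xOff at -0.5/totalW, advances it by 1/totalW before each leaf, and moves plotTree.yOff down by 1/totalD per level. To make that layout visible without drawing anything, the following sketch recomputes where each leaf of retrieveTree(1) would land. The helpers `count_leaves`, `tree_depth`, and `leaf_positions` are hypothetical; they restate the counting rules of getNumLeafs() and getTreeDepth() in compact form and need no matplotlib.

```python
def count_leaves(tree):
    """Leaves in a nested-dict tree (same rule as getNumLeafs)."""
    if not isinstance(tree, dict):
        return 1
    root = next(iter(tree))
    return sum(count_leaves(child) for child in tree[root].values())


def tree_depth(tree):
    """Decision levels in the tree (same rule as getTreeDepth)."""
    if not isinstance(tree, dict):
        return 0
    root = next(iter(tree))
    return 1 + max(tree_depth(child) for child in tree[root].values())


def leaf_positions(tree):
    """(label, x, y) of every leaf, in the order plotTree() draws them.

    Coordinates are axes fractions: the k-th leaf sits at x = (k + 0.5) / W,
    and a leaf d edges below the root sits at y = 1 - d / D.
    """
    W, D = count_leaves(tree), tree_depth(tree)
    positions = []

    def walk(node, level):
        root = next(iter(node))
        for child in node[root].values():
            if isinstance(child, dict):
                walk(child, level + 1)
            else:
                x = (len(positions) + 0.5) / W
                positions.append((child, x, 1.0 - (level + 1) / D))

    walk(tree, 0)
    return positions


tree = {'no surfacing': {0: 'no', 1: {'flippers': {0: {'head': {0: 'no', 1: 'yes'}}, 1: 'no'}}}}
print(count_leaves(tree), tree_depth(tree))  # 4 3
for label, x, y in leaf_positions(tree):
    print(label, round(x, 3), round(y, 3))
```

With 4 leaves and depth 3, the leaves occupy the four horizontal slots 0.125, 0.375, 0.625 and 0.875, and each level of the tree is one third of the axes height lower than the one above it.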
