For installing Spark, see the previous post. The program below counts how many lines in build.sbt contain "scala" and how many contain "spark".

Scala code

File name: SimpleJob.scala

/*** SimpleJob.scala ***/

import spark.SparkContext

import SparkContext._

object SimpleJob {

def main(args: Array[String]) {

val logFile = "build.sbt" // Should be some file on your system

val sc = new SparkContext("local", "Simple Job", "$YOUR_SPARK_HOME",

List("target/scala-2.9.3/simple-project_2.9.3-1.0.jar"))

val logData = sc.textFile(logFile, 2).cache()

val numAs = logData.filter(line => line.contains("scala")).count()

val numBs = logData.filter(line => line.contains("spark")).count()

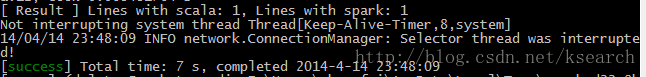

println("[ Result ] Lines with scala: %s, Lines with spark: %s".format(numAs, numBs))

}

}
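To make clear what the two filter/count calls compute, here is a minimal sketch of the same logic on an ordinary Scala collection, with no Spark involved. The object name CountLinesSketch, the helper countContaining, and the in-memory buildSbt sample (copied from the build.sbt in this post) are assumptions made for illustration only; they are not part of the Spark API.

```scala
object CountLinesSketch {
  // Hypothetical helper: counts the lines that contain the given substring.
  // String.contains is case-sensitive, just as in the Spark job.
  def countContaining(lines: Seq[String], needle: String): Int =
    lines.count(line => line.contains(needle))

  // In-memory stand-in for the build.sbt file used in this post.
  val buildSbt: Seq[String] = Seq(
    "name := \"Simple Project\"",
    "version := \"1.0\"",
    "scalaVersion := \"2.9.3\"",
    "libraryDependencies += \"org.spark-project\" %% \"spark-core\" % \"0.7.3\"",
    "resolvers ++= Seq(",
    "\"Akka Repository\" at \"http://repo.akka.io/releases/\",",
    "\"Spray Repository\" at \"http://repo.spray.cc/\")"
  )

  def main(args: Array[String]): Unit = {
    val numScala = countContaining(buildSbt, "scala")
    val numSpark = countContaining(buildSbt, "spark")
    println("[ Result ] Lines with scala: %s, Lines with spark: %s".format(numScala, numSpark))
  }
}
```

On this sample only the scalaVersion line contains "scala" and only the libraryDependencies line contains "spark", so both counts are 1. The match is case-sensitive, so "Spray Repository" is not counted.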

build.sbt

name := "Simple Project"

version := "1.0"

scalaVersion := "2.9.3"

libraryDependencies += "org.spark-project" %% "spark-core" % "0.7.3"

resolvers ++= Seq(
  "Akka Repository" at "http://repo.akka.io/releases/",
  "Spray Repository" at "http://repo.spray.cc/")

Version numbers in build.sbt

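My configuration kept failing until I noticed the following file in the local Ivy cache:

*:\Users\myname\.ivy2\cache\org.spark-project\spark-core_2.9.3\ivy-0.7.3.xml

The directory name carries the Scala version (2.9.3) and the file name carries the Spark version (0.7.3). After setting scalaVersion to 2.9.3 and the spark-core version to 0.7.3 so that build.sbt matched them, the problem went away.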
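The reason both numbers matter is that the %% operator in build.sbt appends the Scala version to the artifact name before resolving it. The sketch below imitates that naming rule; crossArtifact is a hypothetical helper written for this post, not an sbt function, and it assumes that for Scala 2.9.3 the suffix is the full version string, as the cache path above suggests.

```scala
object CrossVersionSketch {
  // Hypothetical helper (not part of sbt): builds the artifact name that
  // a %% dependency would be looked up under for a given Scala version.
  def crossArtifact(name: String, scalaVersionSuffix: String): String =
    name + "_" + scalaVersionSuffix

  def main(args: Array[String]): Unit = {
    // With scalaVersion := "2.9.3", "spark-core" becomes "spark-core_2.9.3",
    // which matches the directory name in the Ivy cache.
    println(crossArtifact("spark-core", "2.9.3"))

    // The same dependency written explicitly in build.sbt would use a single %,
    // which adds no suffix:
    // libraryDependencies += "org.spark-project" % "spark-core_2.9.3" % "0.7.3"
  }
}
```

If scalaVersion names a Scala version for which no spark-core artifact was published, the suffixed name does not exist in the repository and dependency resolution fails.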

Project directory layout

find .

.

./simple.sbt

./src

./src/main

./src/main/scala

./src/main/scala/SimpleJob.scala

Running

From the SimpleJob directory, run:

sbt package

sbt run

Result

Output of sbt package:

References

1. Spark official documentation: writing and running a Scala program locally. http://www.cnblogs.com/vincent-hv/p/3298416.html
