-

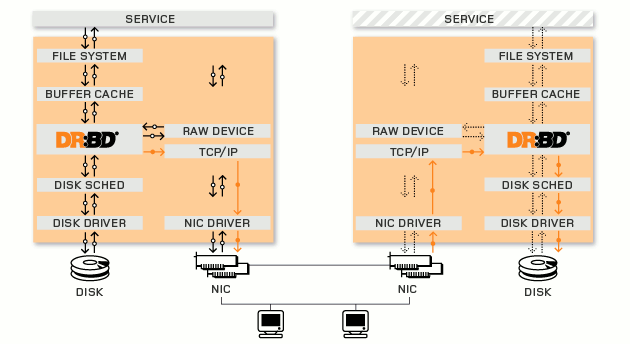

协议A:异步复制协议。本地写成功后立即返回,数据放在发送buffer中,可能丢失。

-

协议B:内存同步(半同步)复制协议。本地写成功并将数据发送到对方后立即返回,如果双机掉电,数据可能丢失。

-

协议C:同步复制协议。本地和对方写成功确认后返回。如果双机掉电或磁盘同时损坏,则数据可能丢失。
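As a small illustration of where the protocol choice ends up in the configuration, the sketch below prints a `net` stanza for a given protocol letter. It assumes the DRBD 8.4 layout used later in this post, where `protocol` sits inside `net`; `emit_net_stanza` is a made-up helper name, not a DRBD command.

```shell
#!/bin/sh
# Print a DRBD "net" stanza for replication protocol A, B or C.
# emit_net_stanza is a hypothetical helper, not part of DRBD.
emit_net_stanza() {
    case "$1" in
        A|B|C)
            printf 'net {\n    protocol %s;\n}\n' "$1"
            ;;
        *)
            # Reject anything that is not one of the three protocols.
            echo "unknown protocol: $1 (expected A, B or C)" >&2
            return 1
            ;;
    esac
}

# Protocol C is the fully synchronous mode described above.
emit_net_stanza C
```

The output can be pasted into a resource file or into the `common` section; the function only formats text and does not touch a running DRBD.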

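The user-space tools:

- drbdadm: the high-level administration tool. It manages /etc/drbd.conf and sends commands to drbdsetup and drbdmeta.
- drbdsetup: configures the DRBD module loaded into the kernel; rarely used directly.
- drbdmeta: manages the metadata structures; rarely used directly.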

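A DRBD resource has the following attributes:

- Resource name: any ASCII characters except whitespace.
- DRBD device: the device file of this DRBD device on both nodes, usually /dev/drbdN, with major number 147.
- Disk configuration: the backing storage device that each node provides.
- Network configuration: the network properties used when the two nodes synchronize data.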

An example resource definition:

    resource web {                         # the resource is named "web"
        on node1.magedu.com {              # section for node1
            device    /dev/drbd0;          # the DRBD device
            disk      /dev/sda5;           # the backing device used by DRBD
            address   172.16.100.11:7789;  # IP address and port used for replication
            meta-disk internal;            # keep the DRBD metadata on the backing device itself
        }
        on node2.magedu.com {
            device    /dev/drbd0;
            disk      /dev/sda5;
            address   172.16.100.12:7789;
            meta-disk internal;
        }
    }
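Because the two `on` sections differ only in host name and address, the file can be generated from a handful of parameters. The following is a minimal sketch under that assumption; `render_resource` and its argument order are invented for illustration.

```shell
#!/bin/sh
# Render a two-node DRBD resource definition on stdout.
# render_resource is a hypothetical helper; arguments:
#   $1 resource name   $2 DRBD device   $3 backing disk
#   $4 node1 hostname  $5 node1 ip:port
#   $6 node2 hostname  $7 node2 ip:port
render_resource() {
    cat <<EOF
resource $1 {
    on $4 {
        device    $2;
        disk      $3;
        address   $5;
        meta-disk internal;
    }
    on $6 {
        device    $2;
        disk      $3;
        address   $7;
        meta-disk internal;
    }
}
EOF
}

# Reproduce the example above.
render_resource web /dev/drbd0 /dev/sda5 \
    node1.magedu.com 172.16.100.11:7789 \
    node2.magedu.com 172.16.100.12:7789
```

Redirecting the output to `/etc/drbd.d/web.res` would give a file in the same shape as the example. The host names passed in should be what `uname -n` prints on each node, since that is what DRBD matches the `on` sections against.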

The backing device can be:

- A disk, or a partition of a disk.
- A software RAID device.
- An LVM logical volume.
- An EVMS (Enterprise Volume Management System) volume.
- Any other block device.

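The overall setup steps:

- Install DRBD.
- Write the resource file (resource name, disks, node information, synchronization limits, and so on).
- Add DRBD to the system services: `chkconfig --add drbd`
- Initialize the resource metadata: `drbdadm create-md resource_name`
- Start the service: `service drbd start`
- Choose the primary node and synchronize the data.
- Partition and format /dev/drbd*.
- Mount the device on one node.
- Check the status.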
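The steps above can be sketched as a dry run that only prints which command goes where. Every command is taken from this post; `print_setup_plan` itself is a hypothetical helper, and the mapping of steps to "both nodes" or "primary only" is my reading of the walkthrough rather than something DRBD enforces.

```shell
#!/bin/sh
# Print (do not run) the setup sequence for one resource.
# print_setup_plan is a hypothetical helper:
#   $1 = resource name, $2 = DRBD device, $3 = mount point
print_setup_plan() {
    echo "both nodes:   yum -y install drbd84 kmod-drbd84"
    echo "both nodes:   edit /etc/drbd.d/$1.res"
    echo "both nodes:   chkconfig --add drbd"
    echo "both nodes:   drbdadm create-md $1"
    echo "both nodes:   service drbd start"
    echo "primary only: drbdadm primary --force $1"
    echo "primary only: mke2fs -j $2"
    echo "primary only: mount $2 $3"
    echo "any node:     cat /proc/drbd"
}

print_setup_plan web /dev/drbd0 /drbd
```

Printing instead of executing keeps the sketch safe to run anywhere; the real commands need root and a configured pair of nodes.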

Test environment:

- CentOS 6.4 x86_64
- kmod-drbd84-8.4.2-1.el6_3.elrepo.x86_64
- drbd84-utils-8.4.2-1.el6.elrepo.x86_64

Set up the EPEL and ELRepo repositories on node1:

    [root@node1 src]# wget http://download.fedoraproject.org/pub/epel/6/x86_64/epel-release-6-8.noarch.rpm
    [root@node1 src]# rpm -ivh epel-release-6-8.noarch.rpm
    warning: epel-release-6-8.noarch.rpm: Header V3 RSA/SHA256 Signature, key ID 0608b895: NOKEY
    Preparing...                ########################################### [100%]
       1:epel-release           ########################################### [100%]
    [root@node1 src]# rpm --import /etc/pki/rpm-gpg/RPM-GPG-KEY-EPEL-6
    [root@node1 ~]# rpm -ivh http://elrepo.org/elrepo-release-6-5.el6.elrepo.noarch.rpm
    [root@node1 ~]# yum list

And on node2:

    [root@node2 src]# wget http://download.fedoraproject.org/pub/epel/6/x86_64/epel-release-6-8.noarch.rpm
    [root@node2 src]# rpm -ivh epel-release-6-8.noarch.rpm
    warning: epel-release-6-8.noarch.rpm: Header V3 RSA/SHA256 Signature, key ID 0608b895: NOKEY
    Preparing...                ########################################### [100%]
       1:epel-release           ########################################### [100%]
    [root@node2 src]# rpm --import /etc/pki/rpm-gpg/RPM-GPG-KEY-EPEL-6
    [root@node2 ~]# rpm -ivh http://elrepo.org/elrepo-release-6-5.el6.elrepo.noarch.rpm
    [root@node2 ~]# yum list

Install DRBD on node1:

    [root@node1 ~]# yum -y install drbd84 kmod-drbd84

And on node2:

    [root@node2 ~]# yum -y install drbd84 kmod-drbd84

The packages install these configuration files:

    [root@node1 ~]# ll /etc/drbd.conf /etc/drbd.d/
    -rw-r--r-- 1 root root  133 Sep  6  2012 /etc/drbd.conf
    /etc/drbd.d/:
    total 4
    -rw-r--r-- 1 root root 1650 Sep  6  2012 global_common.conf

The configuration as shipped:

    [root@node1 ~]# vim /etc/drbd.conf    # view the main configuration file
    # You can find an example in /usr/share/doc/drbd.../drbd.conf.example
    include "drbd.d/global_common.conf";
    include "drbd.d/*.res";
    [root@node1 ~]# cat /etc/drbd.d/global_common.conf    # view the global and common settings
    global {
        usage-count yes;
        # minor-count dialog-refresh disable-ip-verification
    }
    common {
        handlers {
            pri-on-incon-degr "/usr/lib/drbd/notify-pri-on-incon-degr.sh; /usr/lib/drbd/notify-emergency-reboot.sh; echo b > /proc/sysrq-trigger ; reboot -f";
            pri-lost-after-sb "/usr/lib/drbd/notify-pri-lost-after-sb.sh; /usr/lib/drbd/notify-emergency-reboot.sh; echo b > /proc/sysrq-trigger ; reboot -f";
            local-io-error "/usr/lib/drbd/notify-io-error.sh; /usr/lib/drbd/notify-emergency-shutdown.sh; echo o > /proc/sysrq-trigger ; halt -f";
            # fence-peer "/usr/lib/drbd/crm-fence-peer.sh";
            # split-brain "/usr/lib/drbd/notify-split-brain.sh root";
            # out-of-sync "/usr/lib/drbd/notify-out-of-sync.sh root";
            # before-resync-target "/usr/lib/drbd/snapshot-resync-target-lvm.sh -p 15 -- -c 16k";
            # after-resync-target /usr/lib/drbd/unsnapshot-resync-target-lvm.sh;
        }
        startup {
            # wfc-timeout degr-wfc-timeout outdated-wfc-timeout wait-after-sb
        }
        options {
            # cpu-mask on-no-data-accessible
        }
        disk {
            # size max-bio-bvecs on-io-error fencing disk-barrier disk-flushes
            # disk-drain md-flushes resync-rate resync-after al-extents
            # c-plan-ahead c-delay-target c-fill-target c-max-rate
            # c-min-rate disk-timeout
        }
        net {
            # protocol timeout max-epoch-size max-buffers unplug-watermark
            # connect-int ping-int sndbuf-size rcvbuf-size ko-count
            # allow-two-primaries cram-hmac-alg shared-secret after-sb-0pri
            # after-sb-1pri after-sb-2pri always-asbp rr-conflict
            # ping-timeout data-integrity-alg tcp-cork on-congestion
            # congestion-fill congestion-extents csums-alg verify-alg
            # use-rle
        }
    }

The modified global_common.conf:

    [root@node1 ~]# cat /etc/drbd.d/global_common.conf
    global {
        usage-count no;    # "yes" reports usage statistics to LINBIT; we opt out with "no"
        # minor-count dialog-refresh disable-ip-verification
    }
    common {
        handlers {
            pri-on-incon-degr "/usr/lib/drbd/notify-pri-on-incon-degr.sh; /usr/lib/drbd/notify-emergency-reboot.sh; echo b > /proc/sysrq-trigger ; reboot -f";
            pri-lost-after-sb "/usr/lib/drbd/notify-pri-lost-after-sb.sh; /usr/lib/drbd/notify-emergency-reboot.sh; echo b > /proc/sysrq-trigger ; reboot -f";
            local-io-error "/usr/lib/drbd/notify-io-error.sh; /usr/lib/drbd/notify-emergency-shutdown.sh; echo o > /proc/sysrq-trigger ; halt -f";
            # fence-peer "/usr/lib/drbd/crm-fence-peer.sh";
            # split-brain "/usr/lib/drbd/notify-split-brain.sh root";
            # out-of-sync "/usr/lib/drbd/notify-out-of-sync.sh root";
            # before-resync-target "/usr/lib/drbd/snapshot-resync-target-lvm.sh -p 15 -- -c 16k";
            # after-resync-target /usr/lib/drbd/unsnapshot-resync-target-lvm.sh;
        }
        startup {
            # wfc-timeout degr-wfc-timeout outdated-wfc-timeout wait-after-sb
        }
        options {
            # cpu-mask on-no-data-accessible
        }
        disk {
            # size max-bio-bvecs on-io-error fencing disk-barrier disk-flushes
            # disk-drain md-flushes resync-rate resync-after al-extents
            # c-plan-ahead c-delay-target c-fill-target c-max-rate
            # c-min-rate disk-timeout
            on-io-error detach;    # on an I/O error of the backing device, detach it
        }
        net {
            # protocol timeout max-epoch-size max-buffers unplug-watermark
            # connect-int ping-int sndbuf-size rcvbuf-size ko-count
            # allow-two-primaries cram-hmac-alg shared-secret after-sb-0pri
            # after-sb-1pri after-sb-2pri always-asbp rr-conflict
            # ping-timeout data-integrity-alg tcp-cork on-congestion
            # congestion-fill congestion-extents csums-alg verify-alg
            # use-rle
            cram-hmac-alg "sha1";         # HMAC algorithm used to authenticate the peer
            shared-secret "mydrbdlab";    # shared secret used for that authentication
        }
    }

|

1

2

3

4

5

6

7

8

9

10

11

12

13

14

15

|

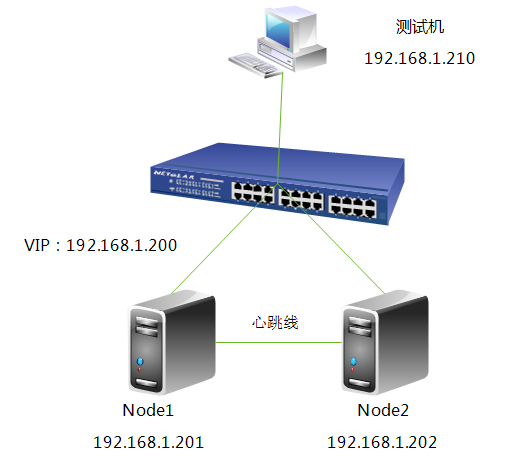

[root@node1 drbd.d]

# cat web.res

resource web {

on node1.

test

.com {

device

/dev/drbd0

;

disk

/dev/sdb

;

address 192.168.1.201:7789;

meta-disk internal;

}

on node2.

test

.com {

device

/dev/drbd0

;

disk

/dev/sdb

;

address 192.168.1.202:7789;

meta-disk internal;

}

}

|

Copy both files to node2:

    [root@node1 drbd.d]# scp global_common.conf web.res node2:/etc/drbd.d/
    The authenticity of host 'node2 (192.168.1.202)' can't be established.
    RSA key fingerprint is da:20:3d:2a:ef:4f:03:bc:4d:91:5e:82:25:e7:8c:ec.
    Are you sure you want to continue connecting (yes/no)? yes
    Warning: Permanently added 'node2,192.168.1.202' (RSA) to the list of known hosts.
    root@node2's password:
    global_common.conf                100% 1724     1.7KB/s   00:00
    web.res                           100%  285     0.3KB/s   00:00

Initialize the metadata on node1:

    [root@node1 ~]# drbdadm create-md web
    Writing meta data...
    initializing activity log
    NOT initializing bitmap
    New drbd meta data block successfully created.

And on node2:

    [root@node2 ~]# drbdadm create-md web
    Writing meta data...
    initializing activity log
    NOT initializing bitmap
    New drbd meta data block successfully created.

Start DRBD on node1:

    [root@node1 ~]# service drbd start
    Starting DRBD resources: [
         create res: web
       prepare disk: web
        adjust disk: web
         adjust net: web
    ]
    .

And on node2:

    [root@node2 ~]# service drbd start
    Starting DRBD resources: [
         create res: web
       prepare disk: web
        adjust disk: web
         adjust net: web
    ]
    ..........
    ***************************************************************
     DRBD's startup script waits for the peer node(s) to appear.
     - In case this node was already a degraded cluster before the
       reboot the timeout is 0 seconds. [degr-wfc-timeout]
     - If the peer was available before the reboot the timeout will
       expire after 0 seconds. [wfc-timeout]
       (These values are for resource 'web'; 0 sec -> wait forever)
     To abort waiting enter 'yes' [ 11]:
    .

Check the status on node1:

    [root@node1 ~]# cat /proc/drbd
    version: 8.4.2 (api:1/proto:86-101)
    GIT-hash: 7ad5f850d711223713d6dcadc3dd48860321070c build by dag@Build64R6, 2012-09-06 08:16:10
     0: cs:Connected ro:Secondary/Secondary ds:Inconsistent/Inconsistent C r-----
        ns:0 nr:0 dw:0 dr:0 al:0 bm:0 lo:0 pe:0 ua:0 ap:0 ep:1 wo:f oos:20970844

And on node2:

    [root@node2 ~]# cat /proc/drbd
    version: 8.4.2 (api:1/proto:86-101)
    GIT-hash: 7ad5f850d711223713d6dcadc3dd48860321070c build by dag@Build64R6, 2012-09-06 08:16:10
     0: cs:Connected ro:Secondary/Secondary ds:Inconsistent/Inconsistent C r-----
        ns:0 nr:0 dw:0 dr:0 al:0 bm:0 lo:0 pe:0 ua:0 ap:0 ep:1 wo:f oos:20970844
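In the status line, `cs` is the connection state, `ro` the roles and `ds` the disk states, each pair written as local/peer. For scripting it can be handy to pull one of these out of `/proc/drbd`. Below is a minimal sketch; `drbd_field` is a hypothetical helper, it assumes the line format shown above, and it returns only the first match, which is enough for a single resource.

```shell
#!/bin/sh
# Extract one field (cs, ro or ds) from /proc/drbd-style text on stdin.
# drbd_field is a hypothetical helper; $1 is the field name.
drbd_field() {
    # Put every space-separated token on its own line, then keep the
    # value of the first token that starts with "<name>:".
    tr ' ' '\n' | sed -n "s/^$1://p" | head -n 1
}

# Sample line copied from the output above.
sample=' 0: cs:Connected ro:Secondary/Secondary ds:Inconsistent/Inconsistent C r-----'
printf '%s\n' "$sample" | drbd_field cs   # connection state
printf '%s\n' "$sample" | drbd_field ro   # local/peer roles
printf '%s\n' "$sample" | drbd_field ds   # local/peer disk states
```

On a live node the same function could be fed with `drbd_field cs < /proc/drbd`.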

The same information from drbd-overview on node1:

    [root@node1 ~]# drbd-overview
      0:web/0  Connected Secondary/Secondary Inconsistent/Inconsistent C r-----

And on node2:

    [root@node2 ~]# drbd-overview
      0:web/0  Connected Secondary/Secondary Inconsistent/Inconsistent C r-----

Once node1 has been made primary, the initial synchronization starts:

    [root@node1 ~]# drbd-overview    # node1 is the primary node
      0:web/0  SyncSource Primary/Secondary UpToDate/Inconsistent C r---n-
        [>...................] sync'ed:  5.1% (19440/20476)M
    # Note: the data is being synchronized; this takes a while.
    [root@node2 ~]# drbd-overview    # node2 is the secondary node
      0:web/0  SyncTarget Secondary/Primary Inconsistent/UpToDate C r-----
        [==>.................] sync'ed: 17.0% (17016/20476)M
    # After the synchronization has finished, check again:
    [root@node1 ~]# drbd-overview
      0:web/0  Connected Primary/Secondary UpToDate/UpToDate C r-----
    [root@node2 ~]# drbd-overview
      0:web/0  Connected Secondary/Primary UpToDate/UpToDate C r-----
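A script that has to wait for the initial synchronization could test the disk-state column of `drbd-overview`. The sketch below assumes the one-line-per-resource layout shown above, with the disk states in the fourth column; `is_synced` is a hypothetical helper.

```shell
#!/bin/sh
# Succeed (exit 0) only if every non-empty drbd-overview line on stdin
# reports UpToDate/UpToDate in column 4. is_synced is a hypothetical helper.
is_synced() {
    awk 'NF { n++; if ($4 != "UpToDate/UpToDate") bad++ }
         END { if (n == 0 || bad > 0) exit 1 }'
}

# Sample line copied from the finished state above.
if printf '%s\n' '0:web/0 Connected Primary/Secondary UpToDate/UpToDate C r-----' | is_synced
then
    echo "in sync"
else
    echo "not in sync yet"
fi
```

While a resync is running, the progress-bar line also fails the column test, so the function keeps reporting "not in sync" until only the plain status lines remain. Empty input is treated as not synced, so a missing tool is not mistaken for success.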

Create a file system on the DRBD device on node1, mount it, and write a test file:

    [root@node1 ~]# mke2fs -j /dev/drbd
    drbd/   drbd1   drbd11  drbd13  drbd15  drbd3   drbd5   drbd7   drbd9
    drbd0   drbd10  drbd12  drbd14  drbd2   drbd4   drbd6   drbd8
    [root@node1 ~]# mke2fs -j /dev/drbd0
    mke2fs 1.41.12 (17-May-2010)
    Filesystem label=
    OS type: Linux
    Block size=4096 (log=2)
    Fragment size=4096 (log=2)
    Stride=0 blocks, Stripe blocks
    1310720 inodes, 5242711 blocks
    262135 blocks (5.00%) reserved for the super user
    First data block=0
    Maximum filesystem blocks=4294967296
    160 block groups
    32768 blocks per group, 32768 fragments per group
    8192 inodes per group
    Superblock backups stored on blocks:
        32768, 98304, 163840, 229376, 294912, 819200, 884736, 1605632, 2654208,
        4096000
    Writing inode tables: done
    Creating journal (32768 blocks): done
    Writing superblocks and filesystem accounting information: done
    This filesystem will be automatically checked every 28 mounts or
    180 days, whichever comes first. Use tune2fs -c or -i to override.
    [root@node1 ~]#
    [root@node1 ~]# mkdir /drbd
    [root@node1 ~]# mount /dev/drbd0 /drbd/
    [root@node1 ~]# mount
    /dev/sda2 on / type ext4 (rw)
    proc on /proc type proc (rw)
    sysfs on /sys type sysfs (rw)
    devpts on /dev/pts type devpts (rw,gid=5,mode=620)
    tmpfs on /dev/shm type tmpfs (rw)
    /dev/sda1 on /boot type ext4 (rw)
    /dev/sda3 on /data type ext4 (rw)
    none on /proc/sys/fs/binfmt_misc type binfmt_misc (rw)
    /dev/drbd0 on /drbd type ext3 (rw)
    [root@node1 ~]# cd /drbd/
    [root@node1 drbd]# cp /etc/inittab /drbd/
    [root@node1 drbd]# ll
    total 20
    -rw-r--r-- 1 root root   884 Aug 17 13:50 inittab
    drwx------ 2 root root 16384 Aug 17 13:49 lost+found

To switch roles, unmount the device on node1 and demote it to secondary:

    [root@node1 ~]# umount /drbd/
    [root@node1 ~]# drbdadm secondary web

Both nodes are now secondary; promote node2:

    [root@node1 ~]# drbd-overview
      0:web/0  Connected Secondary/Secondary UpToDate/UpToDate C r-----
    # on node2:
    [root@node2 ~]# drbdadm primary web

Mount the device on node2:

    [root@node2 ~]# drbd-overview
      0:web/0  Connected Primary/Secondary UpToDate/UpToDate C r-----
    [root@node2 ~]# mkdir /drbd
    [root@node2 ~]# mount /dev/drbd0 /drbd/

The file written on node1 is there:

    [root@node2 ~]# ll /drbd/
    total 20
    -rw-r--r-- 1 root root   884 Aug 17 13:50 inittab
    drwx------ 2 root root 16384 Aug 17 13:49 lost+found
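The manual switchover just shown always follows the same order: unmount and demote on the old primary, then promote and mount on the new one. A dry-run sketch of that order follows; `print_switchover` is a hypothetical helper that only prints the commands used above.

```shell
#!/bin/sh
# Print the manual switchover sequence; nothing is executed.
# print_switchover is a hypothetical helper:
#   $1 resource, $2 DRBD device, $3 mount point,
#   $4 current primary host, $5 new primary host
print_switchover() {
    echo "$4: umount $3"
    echo "$4: drbdadm secondary $1"
    echo "$5: drbdadm primary $1"
    echo "$5: mount $2 $3"
}

print_switchover web /dev/drbd0 /drbd node1 node2
```

The order matters in this single-primary setup: the old primary releases the device before the other node takes it over.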

To force a node to become primary (replace `resource` with the resource name):

    [root@node ~]# drbdadm primary --force resource

An example of a dual-primary resource configuration:

    resource mydrbd {
        net {
            protocol C;
            allow-two-primaries yes;
        }
        startup {
            become-primary-on both;
        }
        disk {
            fencing resource-and-stonith;
        }
        handlers {
            # Make sure the other node is confirmed
            # dead after this!
            outdate-peer "/sbin/kill-other-node.sh";
        }
        on node1.magedu.com {
            device    /dev/drbd0;
            disk      /dev/vg0/mydrbd;
            address   172.16.200.11:7789;
            meta-disk internal;
        }
        on node2.magedu.com {
            device    /dev/drbd0;
            disk      /dev/vg0/mydrbd;
            address   172.16.200.12:7789;
            meta-disk internal;
        }
    }
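The dual-primary example pairs `allow-two-primaries` with `fencing resource-and-stonith`. A quick lint for that pairing could look like the sketch below. `check_dual_primary` is a hypothetical helper, it does simple line matching rather than parsing drbd.conf syntax, and it treats only a literal `allow-two-primaries no` as disabled.

```shell
#!/bin/sh
# Succeed if DRBD config text on stdin either does not enable
# allow-two-primaries, or enables it together with
# "fencing resource-and-stonith". Hypothetical lint, not part of DRBD.
check_dual_primary() {
    awk '
        /^[ \t]*#/ { next }                      # ignore comment lines
        /allow-two-primaries/ && !/allow-two-primaries[ \t]+no/ { dual = 1 }
        /fencing[ \t]+resource-and-stonith/ { fenced = 1 }
        END { if (dual && !fenced) exit 1 }
    '
}

# Example: a fragment shaped like the configuration above passes.
if printf 'net {\n  allow-two-primaries yes;\n}\ndisk {\n  fencing resource-and-stonith;\n}\n' | check_dual_primary
then
    echo "dual-primary settings look consistent"
else
    echo "allow-two-primaries is set without a fencing policy"
fi
```

It could be run as `check_dual_primary < /etc/drbd.d/mydrbd.res` before the resource is brought up on both nodes.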
