Black-frame detection for video is mostly used in the digital television field.

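So far, the main place I have needed it is in a video player that reads thumbnails from video files: the player should pick a frame that actually contains picture content and turn that frame into an image.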

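The principle of the algorithm is to take several different regions of a frame and run the calculation on each region. If the frame has already been converted to RGB with FFmpeg's swscale function before the regions are extracted, deciding whether a region is black is not difficult, but the conversion costs efficiency.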
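The region-based idea can be sketched directly on the luma (Y) plane, without any RGB conversion. This is a minimal sketch and not the algorithm from the quoted papers: the helper names `dark_ratio` and `is_black_frame`, the choice of five sample regions, and the `threshold` and `min_ratio` parameters are all assumptions made for illustration. The only fact relied on is that limited-range video encodes black as Y = 16, so a threshold slightly above 16 is a reasonable starting point.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>

/* Fraction of pixels inside one rectangle of the Y plane whose luma is
 * <= threshold. `stride` is the number of bytes per row (linesize[0] in
 * FFmpeg terms), which may be larger than the visible width. */
static double dark_ratio(const uint8_t *y_plane, int stride,
                         int x0, int y0, int w, int h, uint8_t threshold)
{
    assert(w > 0 && h > 0);
    long dark = 0;
    for (int row = y0; row < y0 + h; row++) {
        const uint8_t *line = y_plane + (size_t)row * (size_t)stride;
        for (int col = x0; col < x0 + w; col++) {
            if (line[col] <= threshold)
                dark++;
        }
    }
    return (double)dark / ((double)w * (double)h);
}

/* Hypothetical helper: samples five regions (four near the corners and one
 * in the centre), each a quarter of the frame in width and height. The
 * frame counts as black only if every region is at least min_ratio dark.
 * Returns 1 for black, 0 otherwise. */
static int is_black_frame(const uint8_t *y_plane, int stride,
                          int width, int height,
                          uint8_t threshold, double min_ratio)
{
    const int rw = width / 4, rh = height / 4;
    if (rw == 0 || rh == 0)
        return 0; /* frame too small to sample */
    const int xs[5] = { width / 8, 5 * width / 8, 3 * width / 8,
                        width / 8, 5 * width / 8 };
    const int ys[5] = { height / 8, height / 8, 3 * height / 8,
                        5 * height / 8, 5 * height / 8 };
    for (int i = 0; i < 5; i++) {
        if (dark_ratio(y_plane, stride, xs[i], ys[i], rw, rh, threshold)
                < min_ratio)
            return 0;
    }
    return 1;
}
```

With a decoded yuv420p frame, a call would look like `is_black_frame(pFrame->data[0], pFrame->linesize[0], width, height, 32, 0.98)`; the values 32 and 0.98 are guesses that would need tuning on real material. Sampling only inside the visible width also keeps any padding at the right edge of each row out of the result.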

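Algorithm principles quoted from other people's work (all of it several years old):

A judgment algorithm for still-frame and black-field alarms on the digital TV picture layer (数字电视图像层静帧和黑场报警的判断算法)

A fast algorithm for digital video defect detection based on OpenCV (基于OpenCV的数字视频缺陷检测快速算法)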

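The code below is my implementation. It leaves the read loop as soon as a key frame has been obtained and converts that frame to an RGB24 image.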

// initialize SWS context for software scaling

sws_ctx = sws_getContext(pCodecCtx->width, pCodecCtx->height,

pCodecCtx->pix_fmt, width, height, PIX_FMT_RGB24, SWS_BILINEAR,

NULL, NULL, NULL);

// Read packets until the first key frame has been decoded and converted

bool isReturn = false;

while (av_read_frame(pFormatCtx, &packet) >= 0) {

// Is this a packet from the video stream?

if (packet.stream_index == videoStream) {

// Decode video frame

avcodec_decode_video2(pCodecCtx, pFrame, &frameFinished, &packet);

// Did we get a video frame?

if (frameFinished) {

if (pFrame->key_frame == 1) {

//.....................

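// The black-frame detection code has to be added here, so that the check runs
// before every conversion. If the frame is black, do not exit: keep reading the
// next frame and check it, until a frame that is not black is found. Then
// convert it to RGB and copy it into the Android bitmap.
// The pFrame decoded by avcodec_decode_video2 holds raw yuv420p data. U and V
// are the colour components; Y is the one directly related to black and white.
// Checking what share of the whole region falls inside a given range of Y
// values gives a rough answer as to whether the frame is black.
// One thing to watch out for: for most videos the original width and height
// match the decoded width and height, but for some videos they do not, which
// leaves a black band at the right edge of the raw image. The detection has to
// take this band into account so that it works for all video files.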

// Convert the image from its native format to RGB

sws_scale(sws_ctx, (uint8_t const * const *) pFrame->data,

pFrame->linesize, 0, pCodecCtx->height,

pFrameRGB->data, pFrameRGB->linesize);

isReturn = true;

}

}

}

// Free the packet that was allocated by av_read_frame

av_free_packet(&packet);

if (isReturn) {

LOGE("---------------------------return");

newBitmap = env->CallStaticObjectMethod(bitmapCls,

createBitmapFunction, width, height, bitmapConfig);

int ret;

void* bitmapPixels;

if ((ret = AndroidBitmap_lockPixels(env, newBitmap, &bitmapPixels))

< 0) {

LOGE("AndroidBitmap_lockPixels() failed ! error=1");

return NULL;

}

// Map the RGB data onto the pixel values of the Android bitmap object.

uint32_t* newBitmapPixels = (uint32_t*) bitmapPixels;

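// Walk the image row by row. The counter must not be called height,
// because height already holds the bitmap height used as the loop bound.
int row = 0;

for (row = 0; row < height; row++) {

// Start of this row in the RGB24 buffer; the bytes are stored as R, G, B.
const uint8_t* src = pFrameRGB->data[0] + row * pFrameRGB->linesize[0];

int x = 0;

for (x = 0; x < width; x++) {

uint8_t r = src[3 * x + 0];

uint8_t g = src[3 * x + 1];

uint8_t b = src[3 * x + 2];

// Assuming bitmapConfig is ARGB_8888: the bitmap stores R, G, B, A bytes
// in memory, so on a little-endian CPU red goes into the low byte.
newBitmapPixels[x + row * width] = (0xFFu << 24)

| (b << 16) | (g << 8) | r;

}

}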

AndroidBitmap_unlockPixels(env, newBitmap);

break;

}

}

// Free the RGB image

av_free(buffer);

av_free(&pFrameRGB);

// Free the YUV frame

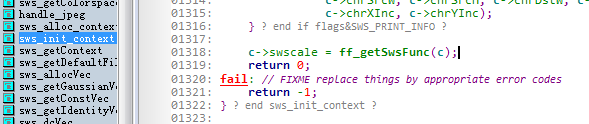

av_free(&pFrame);解码后的黑边sws_scale能处理掉。所以这儿,我们需要研究一下ffmpeg的scale源码,解决解码后的黑边问题。
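One plausible cause of such a band, stated here as an assumption because the text does not confirm it, is that each row of the decoded plane occupies more bytes (the line size, or stride) than the visible width, and the extra bytes get treated as pixels. sws_scale receives the stride separately from the width, which is why it is unaffected. The sketch below shows the same idea in plain C; `copy_visible_plane` is a hypothetical helper, not an FFmpeg function.

```c
#include <assert.h>
#include <stdint.h>
#include <string.h>

/* Copy only the visible width x height area of a plane.
 * Rows in src are src_stride bytes apart and rows in dst are dst_stride
 * bytes apart, so padding bytes at the end of each source row are skipped. */
static void copy_visible_plane(uint8_t *dst, int dst_stride,
                               const uint8_t *src, int src_stride,
                               int width, int height)
{
    assert(src_stride >= width && dst_stride >= width);
    for (int row = 0; row < height; row++) {
        memcpy(dst + (size_t)row * (size_t)dst_stride,
               src + (size_t)row * (size_t)src_stride,
               (size_t)width);
    }
}
```

For the Y plane of a decoded frame the call would be along the lines of `copy_visible_plane(packed, width, pFrame->data[0], pFrame->linesize[0], width, height)`, where `packed` is a caller-allocated buffer of `width * height` bytes. A black-frame check that indexes rows by the stride and stops at the visible width gets the same effect without the copy.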

/**

* swscale wrapper, so we don't need to export the SwsContext.

* Assumes planar YUV to be in YUV order instead of YVU.

*/

int attribute_align_arg sws_scale(struct SwsContext *c,

const uint8_t * const srcSlice[],

const int srcStride[], int srcSliceY,

int srcSliceH, uint8_t *const dst[],

const int dstStride[])

{

int i;

const uint8_t *src2[4] = { srcSlice[0], srcSlice[1], srcSlice[2], srcSlice[3] };

uint8_t *dst2[4] = { dst[0], dst[1], dst[2], dst[3] };

// do not mess up sliceDir if we have a "trailing" 0-size slice

if (srcSliceH == 0)

return 0;

if (!check_image_pointers(srcSlice, c->srcFormat, srcStride)) {

av_log(c, AV_LOG_ERROR, "bad src image pointers\n");

return 0;

}

if (!check_image_pointers(dst, c->dstFormat, dstStride)) {

av_log(c, AV_LOG_ERROR, "bad dst image pointers\n");

return 0;

}

if (c->sliceDir == 0 && srcSliceY != 0 && srcSliceY + srcSliceH != c->srcH) {

av_log(c, AV_LOG_ERROR, "Slices start in the middle!\n");

return 0;

}

if (c->sliceDir == 0) {

if (srcSliceY == 0) c->sliceDir = 1; else c->sliceDir = -1;

}

// This if block was not executed

if (usePal(c->srcFormat)) {

for (i = 0; i < 256; i++) {

int r, g, b, y, u, v;

if (c->srcFormat == AV_PIX_FMT_PAL8) {

uint32_t p = ((const uint32_t *)(srcSlice[1]))[i];

r = (p >> 16) & 0xFF;

g = (p >> 8) & 0xFF;

b = p & 0xFF;

} else if (c->srcFormat == AV_PIX_FMT_RGB8) {

r = ( i >> 5 ) * 36;

g = ((i >> 2) & 7) * 36;

b = ( i & 3) * 85;

} else if (c->srcFormat == AV_PIX_FMT_BGR8) {

b = ( i >> 6 ) * 85;

g = ((i >> 3) & 7) * 36;

r = ( i & 7) * 36;

} else if (c->srcFormat == AV_PIX_FMT_RGB4_BYTE) {

r = ( i >> 3 ) * 255;

g = ((i >> 1) & 3) * 85;

b = ( i & 1) * 255;

} else if (c->srcFormat == AV_PIX_FMT_GRAY8 ||

c->srcFormat == AV_PIX_FMT_YA8) {

r = g = b = i;

} else {

assert(c->srcFormat == AV_PIX_FMT_BGR4_BYTE);

b = ( i >> 3 ) * 255;

g = ((i >> 1) & 3) * 85;

r = ( i & 1) * 255;

}

y = av_clip_uint8((RY * r + GY * g + BY * b + ( 33 << (RGB2YUV_SHIFT - 1))) >> RGB2YUV_SHIFT);

u = av_clip_uint8((RU * r + GU * g + BU * b + (257 << (RGB2YUV_SHIFT - 1))) >> RGB2YUV_SHIFT);

v = av_clip_uint8((RV * r + GV * g + BV * b + (257 << (RGB2YUV_SHIFT - 1))) >> RGB2YUV_SHIFT);

c->pal_yuv[i] = y + (u << 8) + (v << 16) + (0xFFU << 24);

switch (c->dstFormat) {

case AV_PIX_FMT_BGR32:

#if !HAVE_BIGENDIAN

case AV_PIX_FMT_RGB24:

#endif

c->pal_rgb[i] = r + (g << 8) + (b << 16) + (0xFFU << 24);

break;

case AV_PIX_FMT_BGR32_1:

#if HAVE_BIGENDIAN

case AV_PIX_FMT_BGR24:

#endif

c->pal_rgb[i] = 0xFF + (r << 8) + (g << 16) + ((unsigned)b << 24);

break;

case AV_PIX_FMT_RGB32_1:

#if HAVE_BIGENDIAN

case AV_PIX_FMT_RGB24:

#endif

c->pal_rgb[i] = 0xFF + (b << 8) + (g << 16) + ((unsigned)r << 24);

break;

case AV_PIX_FMT_RGB32:

#if !HAVE_BIGENDIAN

case AV_PIX_FMT_BGR24:

#endif

default:

c->pal_rgb[i] = b + (g << 8) + (r << 16) + (0xFFU << 24);

}

}

}

// copy strides, so they can safely be modified

if (c->sliceDir == 1) {

// slices go from top to bottom

int srcStride2[4] = { srcStride[0], srcStride[1], srcStride[2],

srcStride[3] };

int dstStride2[4] = { dstStride[0], dstStride[1], dstStride[2],

dstStride[3] };

reset_ptr(src2, c->srcFormat);

reset_ptr((const uint8_t **) dst2, c->dstFormat);

/* reset slice direction at end of frame */

if (srcSliceY + srcSliceH == c->srcH)

c->sliceDir = 0;

// The conversion is done by the swscale function called here; c is the context obtained from sws_getContext.

return c->swscale(c, src2, srcStride2, srcSliceY, srcSliceH, dst2,

dstStride2);

} else {

// slices go from bottom to top => we flip the image internally

int srcStride2[4] = { -srcStride[0], -srcStride[1], -srcStride[2],

-srcStride[3] };

int dstStride2[4] = { -dstStride[0], -dstStride[1], -dstStride[2],

-dstStride[3] };

src2[0] += (srcSliceH - 1) * srcStride[0];

if (!usePal(c->srcFormat))

src2[1] += ((srcSliceH >> c->chrSrcVSubSample) - 1) * srcStride[1];

src2[2] += ((srcSliceH >> c->chrSrcVSubSample) - 1) * srcStride[2];

src2[3] += (srcSliceH - 1) * srcStride[3];

dst2[0] += ( c->dstH - 1) * dstStride[0];

dst2[1] += ((c->dstH >> c->chrDstVSubSample) - 1) * dstStride[1];

dst2[2] += ((c->dstH >> c->chrDstVSubSample) - 1) * dstStride[2];

dst2[3] += ( c->dstH - 1) * dstStride[3];

reset_ptr(src2, c->srcFormat);

reset_ptr((const uint8_t **) dst2, c->dstFormat);

/* reset slice direction at end of frame */

if (!srcSliceY)

c->sliceDir = 0;

return c->swscale(c, src2, srcStride2, c->srcH-srcSliceY-srcSliceH,

srcSliceH, dst2, dstStride2);

}

}
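The bottom-to-top branch above relies on a common trick: point at the last row and negate the stride, so that an ordinary top-to-bottom loop walks the rows in reverse. The sketch below illustrates that trick in isolation. `copy_rows` and `copy_flipped` are hypothetical names, and the sketch negates only the source stride so that the flip is visible in the output, whereas sws_scale adjusts both the source and the destination.

```c
#include <assert.h>
#include <stddef.h>
#include <stdint.h>
#include <string.h>

/* Copy height rows of width bytes. Strides are signed: a negative stride
 * means each following row lies earlier in memory than the previous one. */
static void copy_rows(uint8_t *dst, int dst_stride,
                      const uint8_t *src, int src_stride,
                      int width, int height)
{
    for (int row = 0; row < height; row++) {
        memcpy(dst + (ptrdiff_t)row * dst_stride,
               src + (ptrdiff_t)row * src_stride,
               (size_t)width);
    }
}

/* Vertically flip an image by starting at its last row and stepping
 * backwards through the source with a negated stride. */
static void copy_flipped(uint8_t *dst, int dst_stride,
                         const uint8_t *src, int src_stride,
                         int width, int height)
{
    assert(height > 0 && src_stride >= width);
    const uint8_t *last_row = src + (ptrdiff_t)(height - 1) * src_stride;
    copy_rows(dst, dst_stride, last_row, -src_stride, width, height);
}
```

The benefit of this design is that the inner conversion routine never needs to know which direction the slices arrive in; only the pointers and strides handed to it change.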
