Developing a Spark Maven Project in Scala with IntelliJ IDEA

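1. Maven is widely used to manage Java EE projects, and Spark projects are no exception. Scala is the first-choice language for Spark development, so we build a Maven project with Scala to develop Spark applications.

This article uses IntelliJ IDEA 2016. IDEA is increasingly popular and supports Java, Python, and Scala development very well.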

Download link: https://www.jetbrains.com/idea/download/

Installation is straightforward: click Next through the installer.

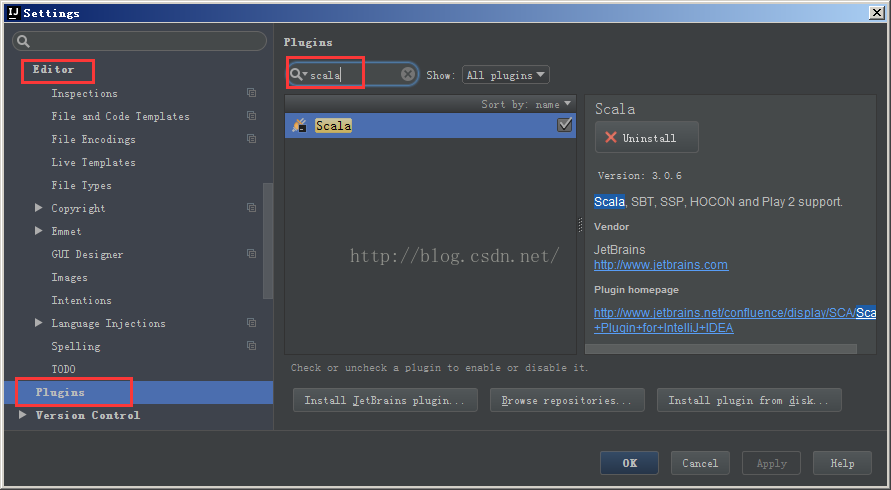

2. Install the Scala plugin: go to File -> Settings -> Editor -> Plugins, search for scala, and install it.

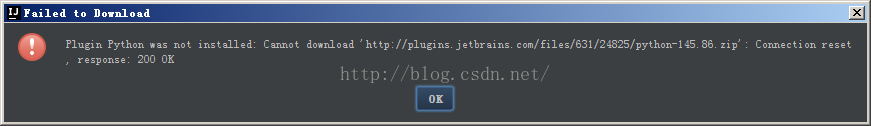

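The download may fail because of network problems. In that case, install the plugin offline.

When the download fails, the error message shows the address of the offline installation package. Download the package from that address, for example http://plugins.jetbrains.com/files/631/24825/python-145.86.zip (this particular address is for the Python plugin; the Scala plugin has its own address).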

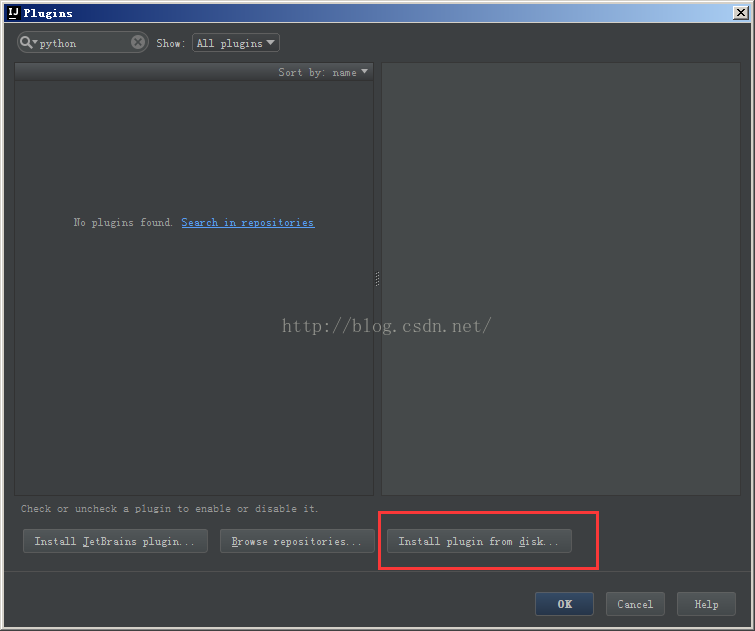

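Then, in the Plugins page, choose the offline installation option (Install plugin from disk...) and select the downloaded zip file: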

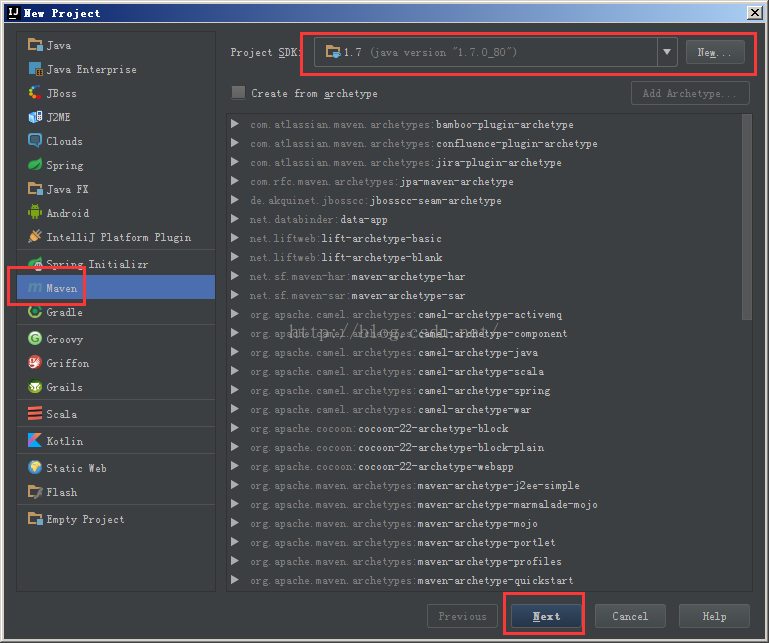

3. Create a Maven project: File -> New Project -> Maven.

Select the appropriate JDK version and click Next.

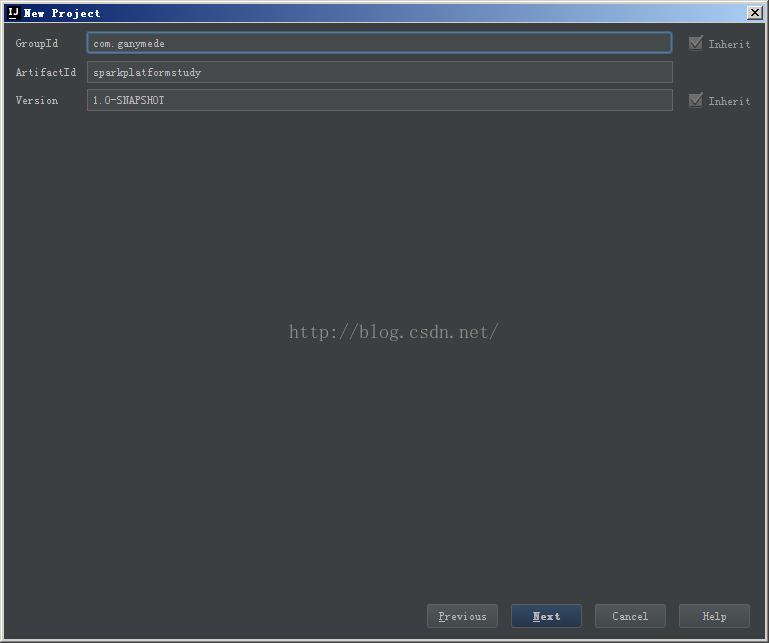

Set the GroupId and ArtifactId of the Maven project:

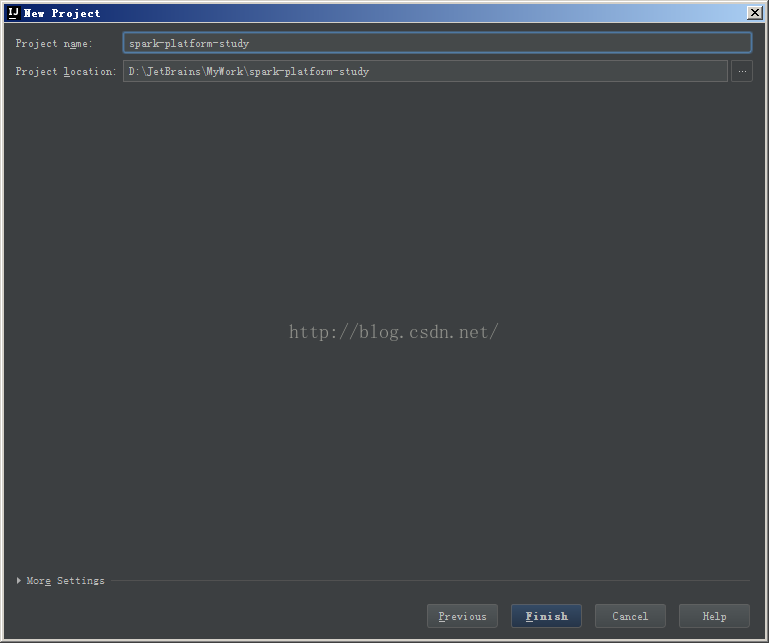

Enter the project name and click Finish:

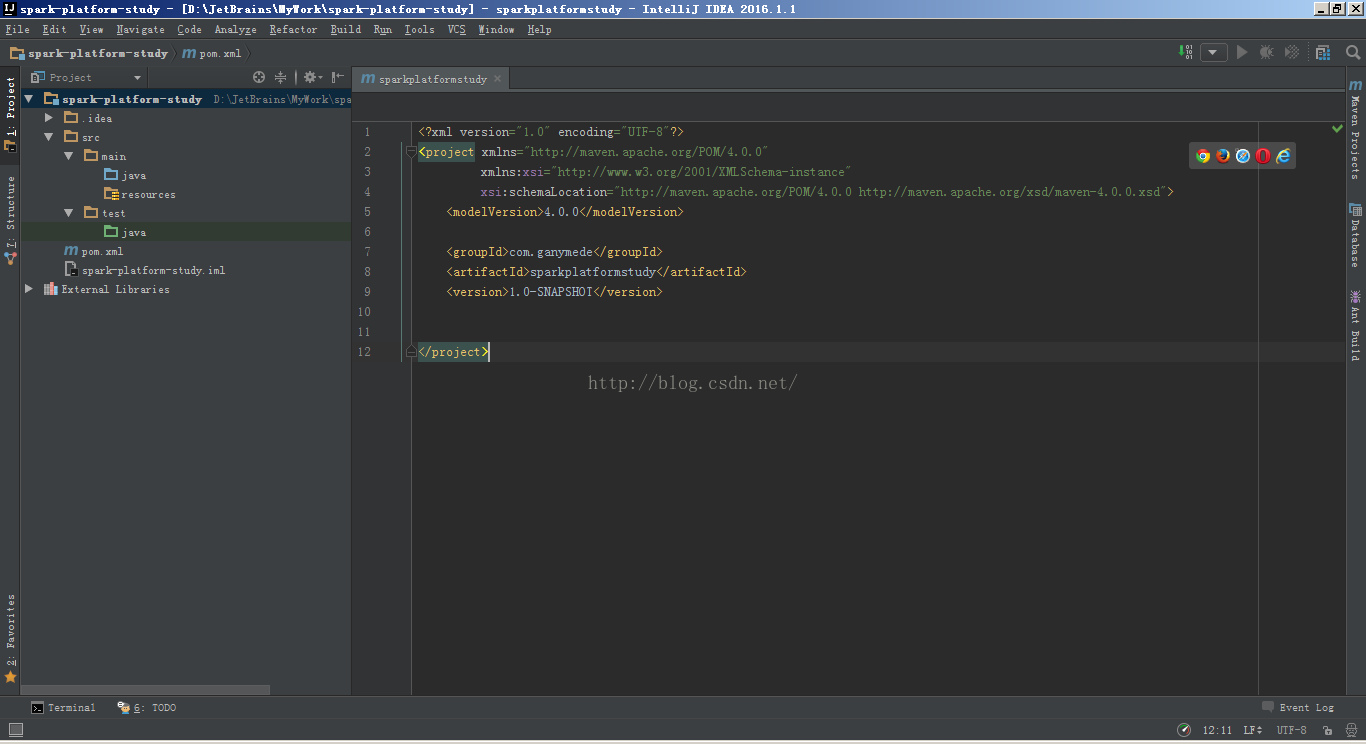

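The Maven project is now created. It is a Java project by default. That is fine, since we add the Scala and Spark dependencies next.

4. Modify the project's pom.xml to add the Spark dependencies. The Scala library itself comes in transitively through spark-core: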

<?xml version="1.0" encoding="UTF-8"?>

<project xmlns="http://maven.apache.org/POM/4.0.0"

xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance"

xsi:schemaLocation="http://maven.apache.org/POM/4.0.0 http://maven.apache.org/xsd/maven-4.0.0.xsd">

<modelVersion>4.0.0</modelVersion>

<groupId>com.ganymede</groupId>

<artifactId>sparkplatformstudy</artifactId>

<version>1.0-SNAPSHOT</version>

<properties>

<project.build.sourceEncoding>UTF-8</project.build.sourceEncoding>

<spark.version>1.6.0</spark.version>

<scala.version>2.10</scala.version>

<hadoop.version>2.6.0</hadoop.version>

</properties>

<dependencies>

<dependency>

<groupId>org.apache.spark</groupId>

<artifactId>spark-core_${scala.version}</artifactId>

<version>${spark.version}</version>

</dependency>

<dependency>

<groupId>org.apache.spark</groupId>

<artifactId>spark-sql_${scala.version}</artifactId>

<version>${spark.version}</version>

</dependency>

<dependency>

<groupId>org.apache.spark</groupId>

<artifactId>spark-hive_${scala.version}</artifactId>

<version>${spark.version}</version>

</dependency>

<dependency>

<groupId>org.apache.spark</groupId>

<artifactId>spark-streaming_${scala.version}</artifactId>

<version>${spark.version}</version>

</dependency>

<dependency>

<groupId>org.apache.hadoop</groupId>

<artifactId>hadoop-client</artifactId>

<version>2.6.0</version>

</dependency>

<dependency>

<groupId>org.apache.spark</groupId>

<artifactId>spark-streaming-kafka_${scala.version}</artifactId>

<version>${spark.version}</version>

</dependency>

<dependency>

<groupId>org.apache.spark</groupId>

<artifactId>spark-mllib_${scala.version}</artifactId>

<version>${spark.version}</version>

</dependency>

<dependency>

<groupId>mysql</groupId>

<artifactId>mysql-connector-java</artifactId>

<version>5.1.39</version>

</dependency>

<dependency>

<groupId>junit</groupId>

<artifactId>junit</artifactId>

<version>4.12</version>

</dependency>

</dependencies>

<!-- maven官方 http://repo1.maven.org/maven2/ 或 http://repo2.maven.org/maven2/ (延迟低一些) -->

<repositories>

<repository>

<id>central</id>

<name>Maven Repository Switchboard</name>

<layout>default</layout>

<url>http://repo2.maven.org/maven2</url>

<snapshots>

<enabled>false</enabled>

</snapshots>

</repository>

</repositories>

<build>

<sourceDirectory>src/main/scala</sourceDirectory>

<testSourceDirectory>src/test/scala</testSourceDirectory>

<plugins>

<plugin>

<!-- MAVEN 编译使用的JDK版本 -->

<groupId>org.apache.maven.plugins</groupId>

<artifactId>maven-compiler-plugin</artifactId>

<version>3.3</version>

<configuration>

<source>1.7</source>

<target>1.7</target>

<encoding>UTF-8</encoding>

</configuration>

</plugin>

</plugins>

</build>

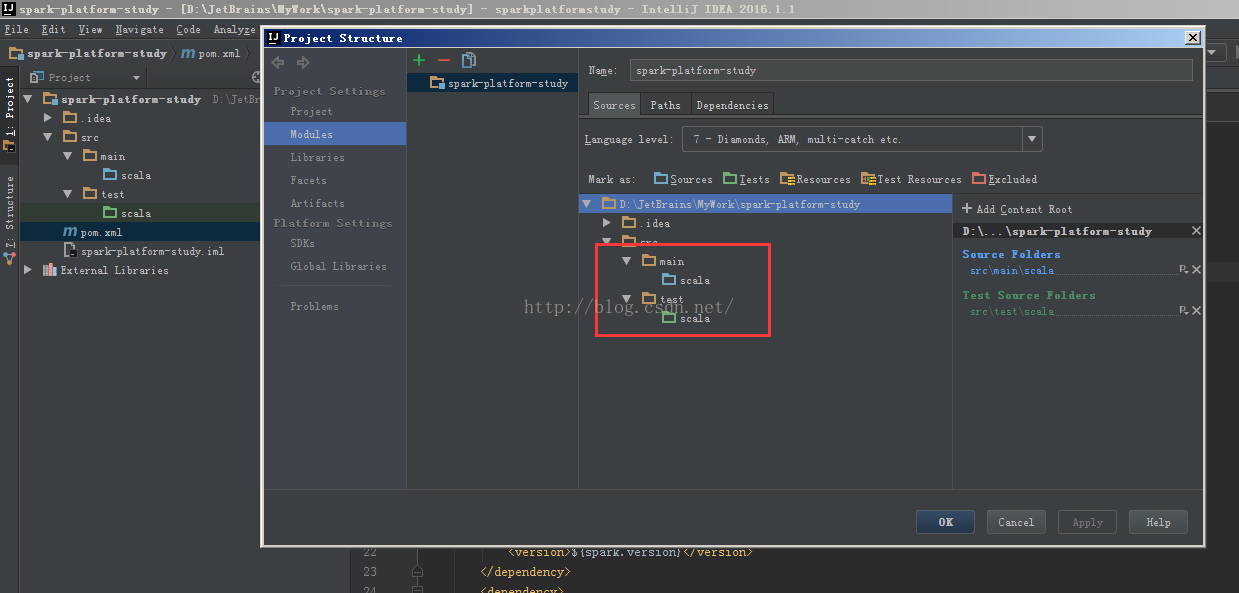

</project>5、删除项目的java目录,新建scala并设置源文件夹

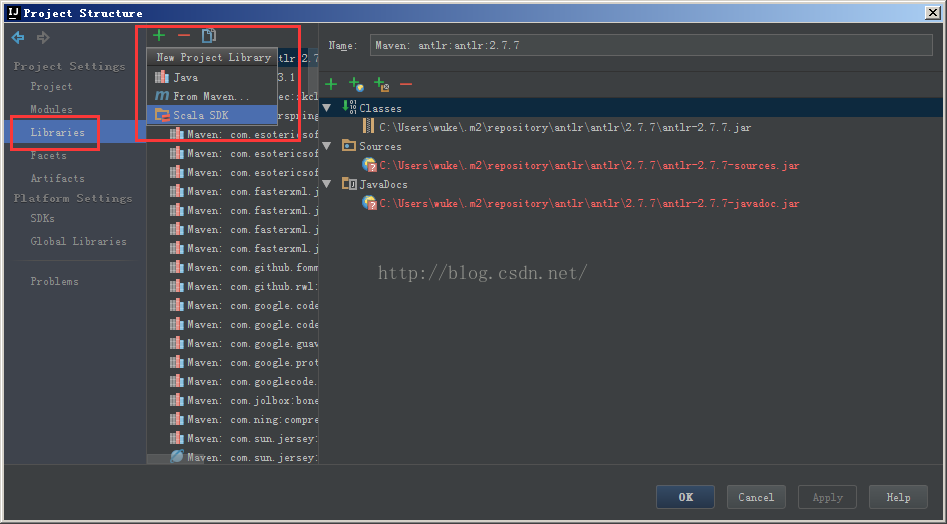

Add the Scala SDK:

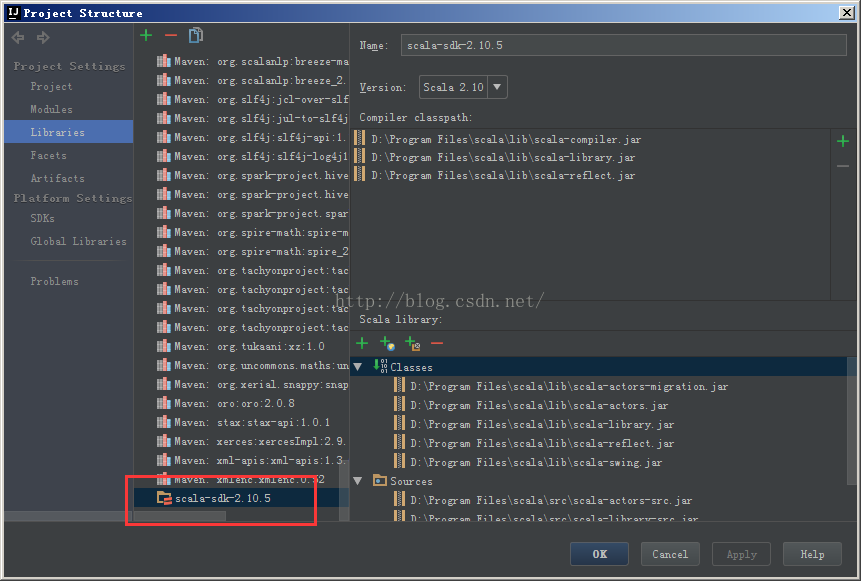

The Scala SDK has been added successfully:

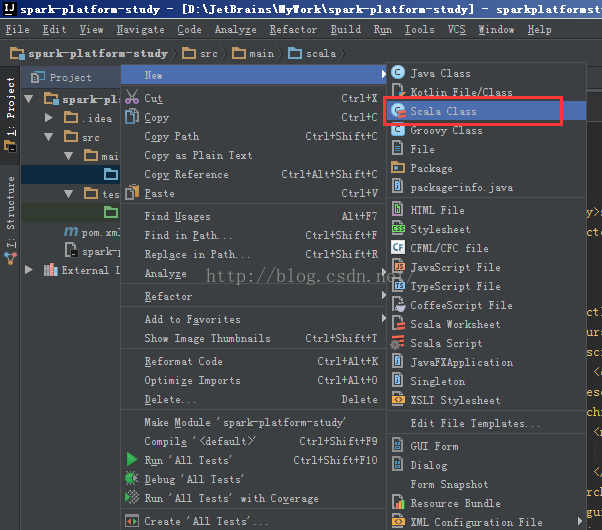
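The Scala SDK added here should match the Scala binary version in the Spark artifactIds in pom.xml.

- `${scala.version}` is set to `2.10`, so the artifacts resolve to names like `spark-core_2.10`.
- Despite its name, that property holds the binary version, not a full version such as 2.10.5.
- Mixing artifacts built for different Scala binary versions can fail at runtime with linkage errors.

The sketch below illustrates the naming rule. `ArtifactNaming` and its methods are hypothetical helpers, not part of Maven or Spark. The `binaryVersion` rule assumes Scala 2.x version strings.

```scala
// Hypothetical helpers (not a Maven or Spark API) illustrating the Scala
// binary-version suffix convention used by artifacts such as spark-core_2.10.
object ArtifactNaming {
  // For Scala 2.x the binary version is "major.minor": "2.10.5" -> "2.10"
  def binaryVersion(fullVersion: String): String =
    fullVersion.split('.').take(2).mkString(".")

  // Build an artifactId the way the pom does: module + "_" + binary version
  def sparkArtifact(module: String, scalaFullVersion: String): String =
    s"${module}_${binaryVersion(scalaFullVersion)}"

  // The text after the last '_' of an artifactId, if it has one
  def scalaSuffix(artifactId: String): Option[String] = {
    val i = artifactId.lastIndexOf('_')
    if (i < 0) None else Some(artifactId.substring(i + 1))
  }

  // True when all suffixed artifacts agree on one Scala binary version;
  // artifacts without a suffix (e.g. hadoop-client) are ignored
  def sameScalaSuffix(artifactIds: Seq[String]): Boolean =
    artifactIds.flatMap(scalaSuffix).distinct.size <= 1

  def main(args: Array[String]): Unit = {
    // Binary version of the Scala library this program is running with
    println(binaryVersion(scala.util.Properties.versionNumberString))
    println(sparkArtifact("spark-core", "2.10.5")) // spark-core_2.10
    println(sameScalaSuffix(Seq("spark-core_2.10", "spark-sql_2.10", "hadoop-client"))) // true
    println(sameScalaSuffix(Seq("spark-core_2.10", "spark-sql_2.11"))) // false
  }
}
```

The same check can be applied by eye: every `_2.xx` suffix in the pom, and the Scala SDK selected in IDEA, should share one binary version.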

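6. Develop a Spark example

The test case is the MLlib example from the Spark website: http://spark.apache.org/docs/latest/mllib-data-types.html

The data file data/mllib/sample_libsvm_data.txt ships with the Spark distribution. The path in the code is relative to the program's working directory, so copy the data/mllib directory from the Spark distribution into the project.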

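```scala
import org.apache.spark.mllib.regression.LabeledPoint
import org.apache.spark.mllib.util.MLUtils
import org.apache.spark.rdd.RDD
import org.apache.spark.{SparkConf, SparkContext}

/**
 * Created by wuke on 2016/7/5.
 */
object LoadLibSVMFile {
  // Spark's docs recommend defining main() instead of extending scala.App,
  // because subclasses of scala.App may not work correctly.
  def main(args: Array[String]): Unit = {
    // "local" runs Spark inside this JVM. Remove setMaster before submitting to
    // a cluster: a master set in code overrides spark-submit's --master option.
    val conf = new SparkConf().setAppName("LogisticRegressionMail").setMaster("local")
    val sc = new SparkContext(conf)
    try {
      // Load a LIBSVM-format text file as an RDD of labeled sparse vectors
      val examples: RDD[LabeledPoint] = MLUtils.loadLibSVMFile(sc, "data/mllib/sample_libsvm_data.txt")
      println(examples.first)
    } finally {
      sc.stop() // release the SparkContext's resources
    }
  }
}
```

The test passes: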

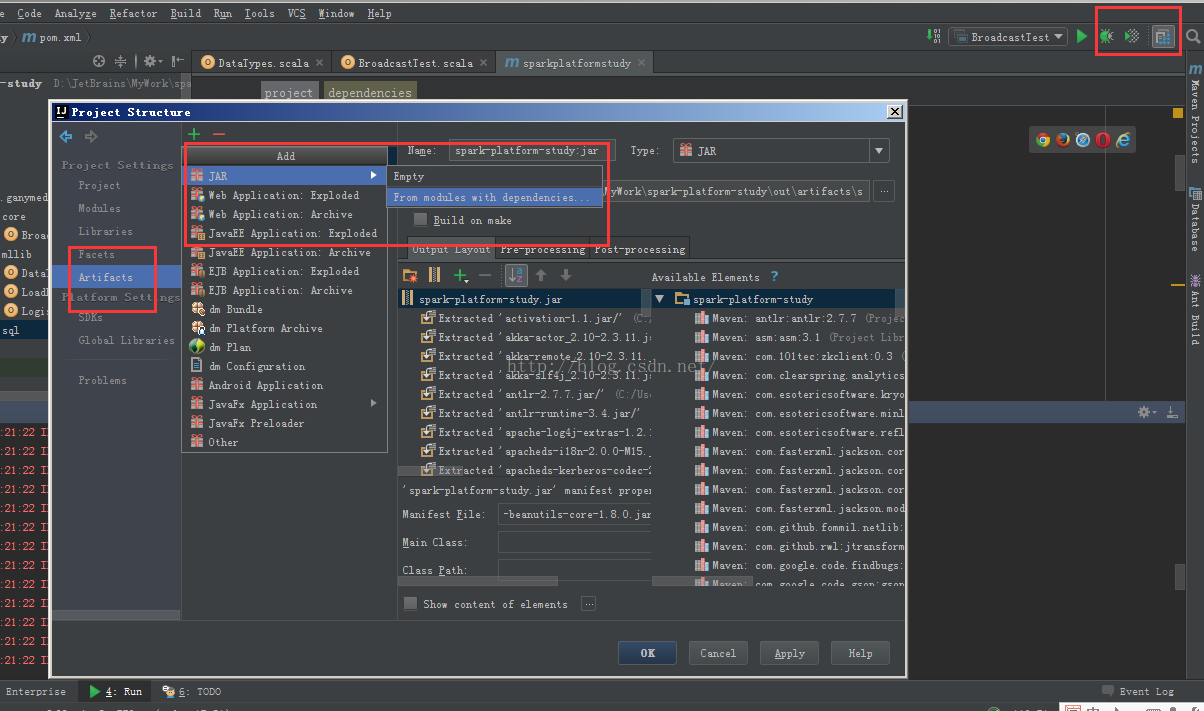

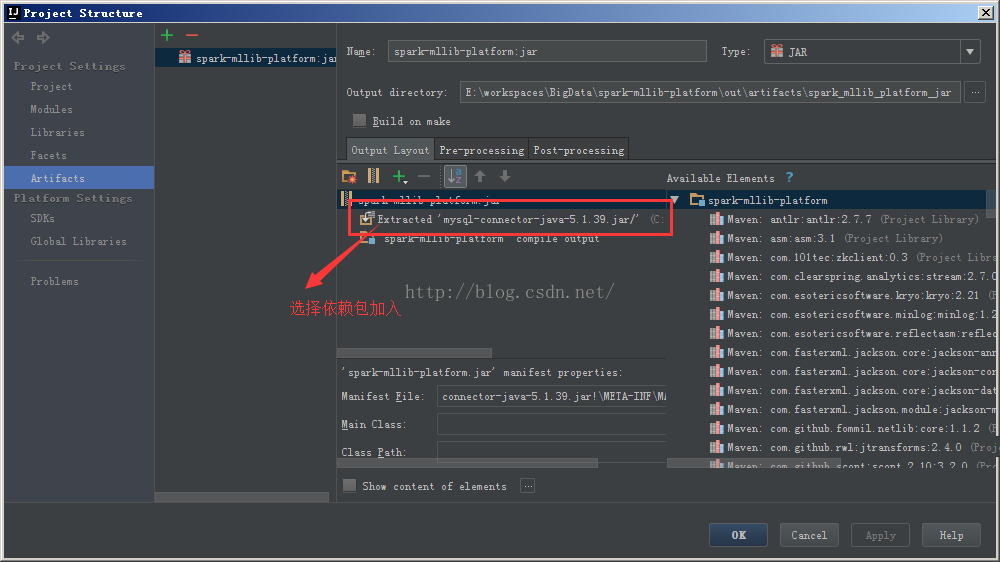
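`MLUtils.loadLibSVMFile` reads the LIBSVM text format. Each line has the form `label index1:value1 index2:value2 ...`, with one-based, ascending indices. Spark converts the indices to zero-based. When the number of features is not given, it infers that number from the largest index in the data.

The stdlib-only sketch below mirrors that parsing on plain strings, so the format can be explored without Spark. `LibSvmSketch`, `SparseRow`, `parseLine` and `numFeatures` are hypothetical names. This is an illustration, not Spark's implementation, and the sample lines are made up.

```scala
// Hypothetical, stdlib-only sketch of LIBSVM line parsing (not Spark's code).
object LibSvmSketch {
  // A labeled sparse row: zero-based feature indices and their values
  final case class SparseRow(label: Double, indices: Array[Int], values: Array[Double])

  // Parse "label i1:v1 i2:v2 ..." where the indices are one-based and ascending
  def parseLine(line: String): SparseRow = {
    val tokens = line.trim.split("\\s+")
    val label = tokens.head.toDouble
    val pairs = tokens.tail.map { tok =>
      val parts = tok.split(':')
      (parts(0).toInt - 1, parts(1).toDouble) // convert to a zero-based index
    }
    SparseRow(label, pairs.map(_._1), pairs.map(_._2))
  }

  // Vector size = (largest zero-based index over all rows) + 1; rows must be non-empty
  def numFeatures(rows: Seq[SparseRow]): Int =
    rows.map(r => r.indices.lastOption.getOrElse(0)).max + 1

  def main(args: Array[String]): Unit = {
    // Two made-up lines in LIBSVM format
    val rows = Seq("0 128:51 129:159", "1 159:124 160:253 161:255").map(parseLine)
    println(rows.head.indices.mkString(",")) // 127,128
    println(numFeatures(rows))               // 161
  }
}
```

This is why `println(examples.first)` in the Spark program shows a sparse vector whose size and indices are zero-based rather than the raw numbers in the file.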

7. Package, build, and deploy to production

When building the JAR, pay attention to which dependency JARs you select to include.
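Spark's deployment documentation recommends marking Spark and Hadoop as provided dependencies when building an application JAR, because the cluster supplies them at runtime. Bundling them mainly bloats the JAR and may cause version conflicts.

A quick way to check what ended up in the packaged JAR is to list its entries. The sketch below uses only `java.util.jar`. `JarCheck` and `containsPackage` are hypothetical helper names, and the JAR path is whatever your build produced.

```scala
import java.io.File
import java.util.jar.JarFile

// Hypothetical helper: check which packages were bundled into a built JAR.
object JarCheck {
  // True if any entry in the JAR lives under the given package path,
  // e.g. "org/apache/spark/" for Spark classes
  def containsPackage(jarPath: File, packagePath: String): Boolean = {
    val jar = new JarFile(jarPath)
    try {
      val entries = jar.entries()
      var found = false
      while (!found && entries.hasMoreElements) {
        found = entries.nextElement().getName.startsWith(packagePath)
      }
      found
    } finally jar.close()
  }

  def main(args: Array[String]): Unit = {
    // Pass the path of the JAR produced by your build as the first argument
    val jar = new File(args(0))
    println(s"Spark classes bundled: ${containsPackage(jar, "org/apache/spark/")}")
    println(s"Hadoop classes bundled: ${containsPackage(jar, "org/apache/hadoop/")}")
  }
}
```

If both lines print `true` for a JAR meant to run on a cluster, revisit which dependencies were selected when packaging.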
