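Overview

This project is mainly an exercise in defining multiple Rule objects in the rules attribute of a CrawlSpider subclass, and a way to get a feel for how powerful and flexible the Scrapy framework is.

For that reason, the scraped content is simply saved to a JSON file, with no further processing.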
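As a rough illustration of how several rules share the work, the sketch below imitates rule dispatch with the standard library only. When more than one rule matches a link, Scrapy uses the first one in definition order, and the loop mirrors that. The first pattern, `page/\d+`, is the one the spider uses for list pages. The second pattern, and the names `RULES` and `dispatch`, are assumptions made for this example: the real spider picks detail links with an XPath restriction, not a URL regex.

```python
import re

# Each tuple imitates one Rule: (URL pattern, callback name, follow flag).
# The first pattern comes from the spider; the second is a hypothetical
# stand-in for the XPath-based detail rule.
RULES = [
    (re.compile(r'page/\d+'), None, True),
    (re.compile(r'/n/\d+/?$'), 'parse_detail', False),
]


def dispatch(url):
    """Return (callback, follow) of the first rule matching url, or None."""
    for pattern, callback, follow in RULES:
        # Patterns are searched anywhere in the URL, not anchored at the start
        if pattern.search(url):
            return callback, follow
    return None
```

Calling `dispatch('https://news.cnblogs.com/n/page/2/')` selects the list-page rule (no callback, keep following), while a URL that matches neither pattern returns `None` and would be ignored.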

Source code

items.py

import scrapy


class CnblogNewsItem(scrapy.Item):
    # News title
    title = scrapy.Field()
    # Submitter
    postor = scrapy.Field()
    # Publication time
    pubtime = scrapy.Field()
    # News body
    content = scrapy.Field()

spiders/cnblognews_spider.py

#!/usr/bin/env python
# -*- coding:utf-8 -*-
# CrawlSpider and Rule live in scrapy.spiders (the old scrapy.spider module is gone)
from scrapy.spiders import CrawlSpider, Rule
from scrapy.linkextractors import LinkExtractor

from myscrapy.items import CnblogNewsItem


class CnblogNewsSpider(CrawlSpider):
    """
    Spider for cnblogs news.
    Crawls the links on each news list page,
    then crawls the detail page of every news entry.
    """
    name = 'cnblognews'
    allowed_domains = ['news.cnblogs.com']
    start_urls = ['https://news.cnblogs.com/n/page/1/']

    # LinkExtractor for the paginated list pages, matched by regex
    page_link_extractor = LinkExtractor(allow=r'page/\d+')
    # LinkExtractor for individual news entries, restricted by XPath
    detail_link_extractor = LinkExtractor(restrict_xpaths='//h2[@class="news_entry"]')

    rules = [
        # List pages: follow=True, so links found on them are followed
        Rule(link_extractor=page_link_extractor, follow=True),
        # Detail pages: follow=False, parsed by the callback but not followed further
        Rule(link_extractor=detail_link_extractor, callback='parse_detail', follow=False),
    ]

    def parse_detail(self, response):
        """Callback that extracts the fields of a news detail page."""
        # print(response.url)
        # extract_first() returns None instead of raising when nothing matches
        title = response.xpath('//div[@id="news_title"]/a/text()').extract_first()
        postor = response.xpath('//span[@class="news_poster"]/a/text()').extract_first()
        pubtime = response.xpath('//span[@class="time"]/text()').extract_first()
        # The body is kept as a list of paragraph strings
        content = response.xpath('//div[@id="news_body"]/p/text()').extract()

        item = CnblogNewsItem()
        item['title'] = title
        item['postor'] = postor
        item['pubtime'] = pubtime
        item['content'] = content
        yield item

pipelines.py

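import json


class CnblognewsPipeline(object):
    """Item pipeline for cnblogs news."""

    def __init__(self):
        # Text mode with an explicit encoding (Python 3), so no manual encode() is needed
        self.f = open('cnblognews.json', mode='w', encoding='utf-8')

    def process_item(self, item, spider):
        news = json.dumps(dict(item), ensure_ascii=False, indent=4).strip()
        self.f.write(news + ',\n')
        # Return the item so any later pipeline still receives it
        return item

    def close_spider(self, spider):
        self.f.close()

settings.py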

ITEM_PIPELINES = {

'myscrapy.pipelines.CnblognewsPipeline': 1,

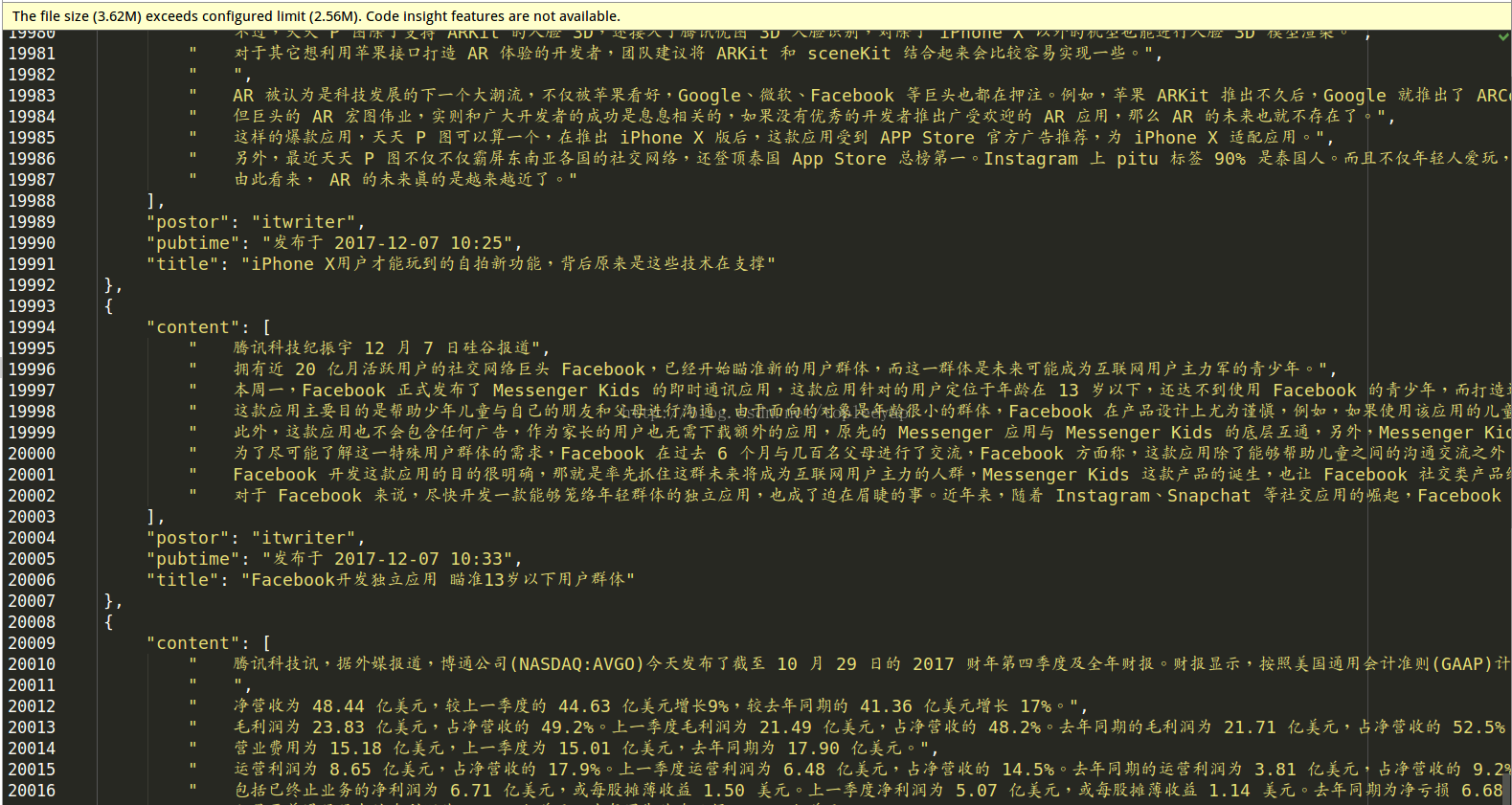

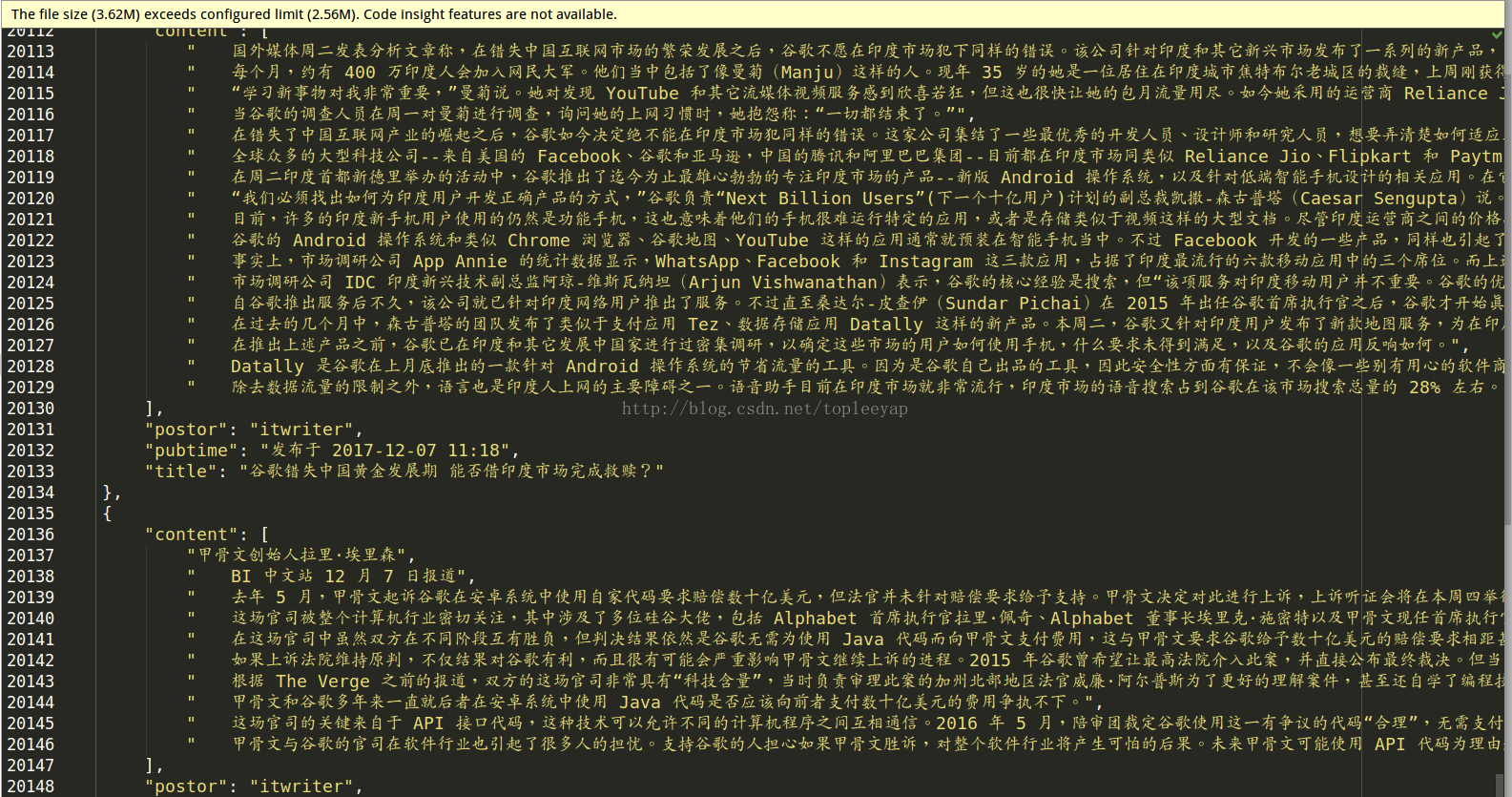

}运行结果

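One note on the output file: the pipeline writes each item followed by a comma, so the result is a run of JSON objects, not a single valid JSON document. If the file needs to be read back by a program, one possible alternative is the JSON Lines layout, with one compact object per line. The sketch below is only an illustration of that idea, not the original author's pipeline; the class name `JsonLinesPipeline` and the default file name `cnblognews.jl` are assumptions made for this example.

```python
import json


class JsonLinesPipeline(object):
    """Hypothetical alternative pipeline: writes one JSON object per line."""

    def __init__(self, path='cnblognews.jl'):
        # The file name is an assumption for this sketch
        self.path = path
        self.f = None

    def open_spider(self, spider):
        # Open the file when the spider starts, with an explicit UTF-8 encoding
        self.f = open(self.path, mode='w', encoding='utf-8')

    def process_item(self, item, spider):
        # No indent: each item must stay on a single line
        self.f.write(json.dumps(dict(item), ensure_ascii=False) + '\n')
        return item

    def close_spider(self, spider):
        self.f.close()
```

Each line of the resulting file can then be loaded on its own with `json.loads`, which also makes it practical to process a large crawl without reading the whole file into memory.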