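I previously installed TensorFlow 1.0 on my laptop, so I can run TF programs locally.

Today I worked through an RNN example about classifying linear versus non-linear sequences. An ordered list of consecutive values such as [1,2,3,4,5] is assigned to class 0, while a randomly generated sequence such as [1,3,10,7] is assigned to class 1. Working through this example made my understanding of RNNs much clearer.

The code is analyzed below.

Generating the data: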

    import random

    class ToySequenceData(object):
        """Toy dataset: linear sequences (class 0) vs. random sequences (class 1)."""

        def __init__(self, n_samples=1000, max_seq_len=20, min_seq_len=3,
                     max_value=1000):
            self.data = []
            self.labels = []
            self.seqlen = []
            for i in range(n_samples):
                # Random true length; named seq_len to avoid shadowing the builtin len.
                seq_len = random.randint(min_seq_len, max_seq_len)
                self.seqlen.append(seq_len)
                if random.random() < .5:
                    # Linear sequence: consecutive integers scaled into [0, 1].
                    rand_start = random.randint(0, max_value - seq_len)
                    s = [[float(i)/max_value] for i in range(rand_start, rand_start + seq_len)]
                    # Zero-pad to max_seq_len so every sample has the same shape.
                    s += [[0.] for i in range(max_seq_len - seq_len)]
                    self.data.append(s)
                    self.labels.append([1., 0.])
                else:
                    # Random sequence: independent random integers scaled into [0, 1].
                    s = [[float(random.randint(0, max_value))/max_value] for i in range(seq_len)]
                    s += [[0.] for i in range(max_seq_len - seq_len)]
                    self.data.append(s)
                    self.labels.append([0., 1.])
            self.batch_id = 0

        def next(self, batch_size):
            # Start over once the whole dataset has been consumed.
            if self.batch_id == len(self.data):
                self.batch_id = 0
            batch_data = (self.data[self.batch_id:min(self.batch_id + batch_size, len(self.data))])
            batch_labels = (self.labels[self.batch_id:min(self.batch_id + batch_size, len(self.data))])
            batch_seqlen = (self.seqlen[self.batch_id:min(self.batch_id + batch_size, len(self.data))])
            self.batch_id = min(self.batch_id + batch_size, len(self.data))
            return batch_data, batch_labels, batch_seqlen
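To make the batching behaviour of `next` concrete, here is a small sketch that replays only its index arithmetic. The helper name `batch_bounds` is hypothetical and not part of the original example; the sizes (1000 samples, batches of 128) are the ones used in the training setup of this post.

```python
def batch_bounds(n_samples, batch_size, n_calls):
    """Replay the slice bounds that ToySequenceData.next would use (hypothetical helper)."""
    batch_id = 0
    bounds = []
    for _ in range(n_calls):
        # Same wrap-around rule as next(): restart once the data is exhausted.
        if batch_id == n_samples:
            batch_id = 0
        end = min(batch_id + batch_size, n_samples)
        bounds.append((batch_id, end))
        batch_id = end
    return bounds

bounds = batch_bounds(n_samples=1000, batch_size=128, n_calls=9)
print(bounds[7])  # last batch of the first pass: (896, 1000), only 104 samples
print(bounds[8])  # the next call wraps around: (0, 128)
```

So the final batch of each pass is shorter than `batch_size`, which is why the placeholders leave the batch dimension as `None`.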

Setting the parameters:

    learning_rate = 0.01
    training_iters = 1000000
    batch_size = 128
    display_step = 10
    seq_max_len = 20
    n_hidden = 64
    n_classes = 2
    trainset = ToySequenceData(n_samples=1000, max_seq_len=seq_max_len)
    testset = ToySequenceData(n_samples=500, max_seq_len=seq_max_len)

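Setting up the placeholders and the parameters from the RNN hidden layer to the output layer:

    import tensorflow as tf  # TensorFlow 1.x API

    x = tf.placeholder("float", [None, seq_max_len, 1])
    y = tf.placeholder("float", [None, n_classes])
    seqlen = tf.placeholder(tf.int32, [None])
    weights = {
        'out': tf.Variable(tf.random_normal([n_hidden, n_classes]))
    }
    biases = {
        'out': tf.Variable(tf.random_normal([n_classes]))
    }

seqlen holds the actual length of each sequence. weights has shape [n_hidden, n_classes] and is the weight matrix from the hidden layer to the output layer.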
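As a shape check for the output layer, the sketch below computes hidden @ weight + bias on plain nested lists. The sizes are toy values chosen for illustration (n_hidden = 3 instead of 64), and `project` is a hypothetical helper, not a TensorFlow function.

```python
def project(hidden, weight, bias):
    """Compute hidden @ weight + bias for plain nested lists."""
    return [
        [sum(h * w for h, w in zip(row, col)) + b
         for col, b in zip(zip(*weight), bias)]
        for row in hidden
    ]

hidden = [[1.0, 0.0, 2.0],   # [batch_size, n_hidden] = [2, 3] (toy sizes)
          [0.5, 1.0, 0.0]]
weight = [[1.0, -1.0],       # [n_hidden, n_classes] = [3, 2]
          [0.0, 2.0],
          [0.5, 0.5]]
bias = [0.1, -0.1]           # [n_classes]

logits = project(hidden, weight, bias)
print(len(logits), len(logits[0]))  # 2 2, i.e. [batch_size, n_classes]
```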

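The RNN function:

    def dynamicRNN(x, seqlen, weights, biases):
        # static_rnn expects a list of seq_max_len tensors, each of shape [batch_size, 1].
        # x arrives as [batch_size, seq_max_len, 1], so put the time dimension first.
        x = tf.transpose(x, [1, 0, 2])
        x = tf.reshape(x, [-1, 1])
        x = tf.split(axis=0, num_or_size_splits=seq_max_len, value=x)
        lstm_cell = tf.contrib.rnn.BasicLSTMCell(n_hidden)
        # With sequence_length, outputs past each sample's true length are zeros.
        outputs, states = tf.contrib.rnn.static_rnn(lstm_cell, x, dtype=tf.float32,
                                                    sequence_length=seqlen)
        print(outputs)
        outputs = tf.stack(outputs)
        print(outputs)
        outputs = tf.transpose(outputs, [1, 0, 2])
        print(outputs)
        batch_size = tf.shape(outputs)[0]
        print(batch_size)  # a scalar tensor; 128 at run time for a full batch
        # Flat row of each sample's last valid step: b * seq_max_len + (seqlen[b] - 1).
        index = tf.range(0, batch_size) * seq_max_len + (seqlen - 1)
        outputs = tf.gather(tf.reshape(outputs, [-1, n_hidden]), index)
        print(outputs)
        return tf.matmul(outputs, weights['out']) + biases['out']

The function is probably named dynamicRNN because the generated sequences have variable lengths that it has to handle dynamically. It begins by preprocessing the input data.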

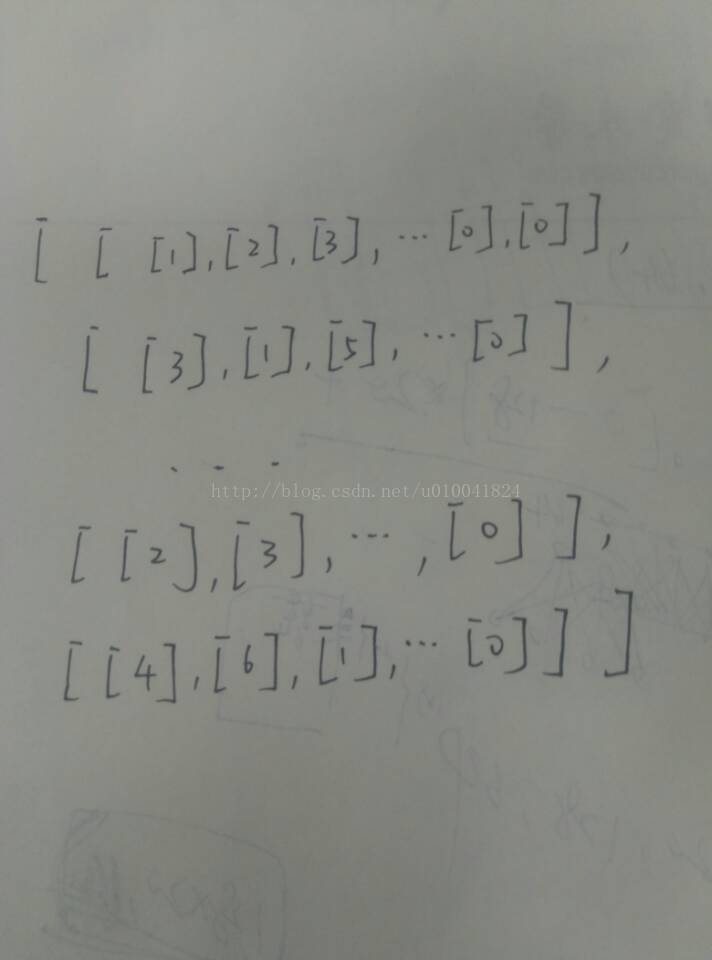

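The figure above shows the form of the input data: a recurrent network needs the loop (time) dimension to come first. For example, for list=[1,2,3,4,5,6,7,8] there are 8 loop steps, and element i (i=1~8) is fed in as the i-th input. If the input is a batch, list1=[1,2,3,4,5,6,7,8] and list2=[2,1,5,3,1,4,7,2], the data is rearranged into [[1,2],[2,1],[3,5],[4,3],[5,1],[6,4],[7,7],[8,2]] before being fed to training.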
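The rearrangement described here is a transpose from batch-major to time-major order. A minimal pure-Python sketch using the two lists from the text (the variable names `batch` and `time_major` are chosen for illustration):

```python
list1 = [1, 2, 3, 4, 5, 6, 7, 8]
list2 = [2, 1, 5, 3, 1, 4, 7, 2]

batch = [list1, list2]  # batch-major: [batch_size, n_steps]
# zip(*batch) pairs up the elements that belong to the same time step.
time_major = [list(step) for step in zip(*batch)]  # [n_steps, batch_size]

print(time_major)
# [[1, 2], [2, 1], [3, 5], [4, 3], [5, 1], [6, 4], [7, 7], [8, 2]]
```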

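tf.contrib.rnn.BasicLSTMCell and tf.contrib.rnn.static_rnn are the TF functions for creating an LSTM cell and for running an RNN over the input data. A defining feature of an RNN is that the cell reuses the same weights at every step of the loop: three matrices in the basic case (they can be seen in the unrolled RNN diagram in my earlier post).

The middle part of the function post-processes outputs. The printed results are: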
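To illustrate the weight sharing, here is a sketch of a scalar vanilla RNN unrolled over a short sequence. It is not the LSTM used in this post (an LSTM cell has additional gate weights, which are shared across steps in the same way); the function name `run_rnn` and the weight values are made up for illustration.

```python
import math

def run_rnn(inputs, w_in, w_rec, w_out, h0=0.0):
    """Unroll a scalar vanilla RNN; the same three weights are reused at every step."""
    h = h0
    outputs = []
    for x_t in inputs:
        h = math.tanh(w_in * x_t + w_rec * h)  # input and recurrent weights
        outputs.append(w_out * h)              # hidden-to-output weight
    return outputs, h

outputs, final_state = run_rnn([0.1, 0.2, 0.3], w_in=0.5, w_rec=0.8, w_out=2.0)
print(len(outputs))  # one output per time step: 3
```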

[<tf.Tensor 'rnn/cond/Merge:0' shape=(?, 64) dtype=float32>, <tf.Tensor 'rnn/cond_1/Merge:0' shape=(?, 64) dtype=float32>, <tf.Tensor 'rnn/cond_2/Merge:0' shape=(?, 64) dtype=float32>, <tf.Tensor 'rnn/cond_3/Merge:0' shape=(?, 64) dtype=float32>, <tf.Tensor 'rnn/cond_4/Merge:0' shape=(?, 64) dtype=float32>, <tf.Tensor 'rnn/cond_5/Merge:0' shape=(?, 64) dtype=float32>, <tf.Tensor 'rnn/cond_6/Merge:0' shape=(?, 64) dtype=float32>, <tf.Tensor 'rnn/cond_7/Merge:0' shape=(?, 64) dtype=float32>, <tf.Tensor 'rnn/cond_8/Merge:0' shape=(?, 64) dtype=float32>, <tf.Tensor 'rnn/cond_9/Merge:0' shape=(?, 64) dtype=float32>, <tf.Tensor 'rnn/cond_10/Merge:0' shape=(?, 64) dtype=float32>, <tf.Tensor 'rnn/cond_11/Merge:0' shape=(?, 64) dtype=float32>, <tf.Tensor 'rnn/cond_12/Merge:0' shape=(?, 64) dtype=float32>, <tf.Tensor 'rnn/cond_13/Merge:0' shape=(?, 64) dtype=float32>, <tf.Tensor 'rnn/cond_14/Merge:0' shape=(?, 64) dtype=float32>, <tf.Tensor 'rnn/cond_15/Merge:0' shape=(?, 64) dtype=float32>, <tf.Tensor 'rnn/cond_16/Merge:0' shape=(?, 64) dtype=float32>, <tf.Tensor 'rnn/cond_17/Merge:0' shape=(?, 64) dtype=float32>, <tf.Tensor 'rnn/cond_18/Merge:0' shape=(?, 64) dtype=float32>, <tf.Tensor 'rnn/cond_19/Merge:0' shape=(?, 64) dtype=float32>]

Tensor("stack:0", shape=(20, ?, 64), dtype=float32)

Tensor("transpose_1:0", shape=(?, 20, 64), dtype=float32)

Tensor("strided_slice:0", shape=(), dtype=int32)

Tensor("Gather:0", shape=(?, 64), dtype=float32)

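The transpose restores the output shape to [batch_size, n_steps, n_hidden], and the reshape then flattens it to [batch_size*n_steps, n_hidden].

The index computation in the middle is easier to understand through a small experiment.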

    import tensorflow as tf

    x = tf.placeholder(tf.int32, [None])
    with tf.Session() as sess:
        print(sess.run(tf.range(0, 4) * 4 + x - 1, feed_dict={x: [1, 2, 3, 4]}))

Output:

    [ 0  5 10 15]

    sess = tf.Session()
    params = tf.constant([6, 3, 4, 1, 5, 9, 10])
    indices = tf.constant([2, 0, 2, 5])
    output = tf.gather(params, indices)
    print(sess.run(output))
    sess.close()

Output:

    [4 6 4 9]

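This shows that the index and gather operations extract, for every list in the batch, the output at its last valid loop step (the step equal to the list's length).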
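The same selection can be traced end to end without TensorFlow. The sketch below uses toy values (three padded sequences, a hidden size of 1) that are assumptions for illustration only; it flattens the outputs and picks row b * seq_max_len + (seqlen[b] - 1) for each sample b.

```python
seq_max_len = 4
seqlen = [2, 4, 1]  # true lengths of three padded sequences (toy values)

# outputs[b][t] stands in for the hidden output of sample b at step t;
# steps past the true length are zero.
outputs = [
    [[0.1], [0.2], [0.0], [0.0]],
    [[1.1], [1.2], [1.3], [1.4]],
    [[2.1], [0.0], [0.0], [0.0]],
]

# Flatten [batch, steps, hidden] into [batch * steps, hidden].
flat = [step for sample in outputs for step in sample]

# Row b * seq_max_len + (seqlen[b] - 1) is sample b's last valid output.
index = [b * seq_max_len + (seqlen[b] - 1) for b in range(len(seqlen))]
last = [flat[i] for i in index]

print(index)  # [1, 7, 8]
print(last)   # [[0.2], [1.4], [2.1]]
```

Taking the last column instead (step seq_max_len - 1) would return the zero padding for the shorter sequences, which is why the index depends on seqlen.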

Training and testing:

    pred = dynamicRNN(x, seqlen, weights, biases)
    cost = tf.reduce_mean(tf.nn.softmax_cross_entropy_with_logits(logits=pred, labels=y))
    optimizer = tf.train.GradientDescentOptimizer(learning_rate=learning_rate).minimize(cost)
    correct_pred = tf.equal(tf.argmax(pred,1), tf.argmax(y,1))
    accuracy = tf.reduce_mean(tf.cast(correct_pred, tf.float32))
    init = tf.global_variables_initializer()

    with tf.Session() as sess:
        sess.run(init)
        step = 1
        # Keep training until the number of processed samples reaches training_iters.
        while step * batch_size < training_iters:
            batch_x, batch_y, batch_seqlen = trainset.next(batch_size)
            sess.run(optimizer, feed_dict={x: batch_x, y: batch_y, seqlen: batch_seqlen})
            if step % display_step == 0:
                # Report accuracy and loss on the current minibatch.
                acc = sess.run(accuracy, feed_dict={x: batch_x, y: batch_y, seqlen: batch_seqlen})
                loss = sess.run(cost, feed_dict={x: batch_x, y: batch_y, seqlen: batch_seqlen})
                print("Iter " + str(step*batch_size) + ", Minibatch Loss= " + "{:.6f}".format(loss) + ", Training Accuracy= " + "{:.5f}".format(acc))
            step += 1
        print("Optimization Finished!")

        # Evaluate on the held-out test set.
        test_data = testset.data
        test_label = testset.labels
        test_seqlen = testset.seqlen
        print("Testing Accuracy:", sess.run(accuracy, feed_dict={x: test_data, y: test_label, seqlen: test_seqlen}))

The resulting test accuracy was 97.2%.
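For readers who want to see what the cost and accuracy ops compute, here is a pure-Python sketch of softmax cross-entropy with one-hot labels and of argmax-based accuracy. The logits and labels are made-up numbers, and the helper names `softmax_cross_entropy` and `argmax` are hypothetical; this mirrors the math, not TensorFlow's implementation.

```python
import math

def softmax_cross_entropy(logits, labels):
    """Cross-entropy between one-hot labels and softmax(logits) for one sample."""
    m = max(logits)  # subtract the max for numerical stability
    log_sum = m + math.log(sum(math.exp(z - m) for z in logits))
    return -sum(y * (z - log_sum) for z, y in zip(logits, labels))

def argmax(values):
    """Index of the largest value."""
    return max(range(len(values)), key=lambda i: values[i])

logits = [[2.0, 0.0], [0.0, 1.0], [-1.0, 3.0]]  # made-up network outputs
labels = [[1.0, 0.0], [0.0, 1.0], [1.0, 0.0]]   # one-hot targets

losses = [softmax_cross_entropy(z, y) for z, y in zip(logits, labels)]
cost = sum(losses) / len(losses)  # mean over the batch, like tf.reduce_mean
correct = [argmax(z) == argmax(y) for z, y in zip(logits, labels)]
accuracy = sum(correct) / len(correct)

print(correct)  # [True, True, False]
```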
