并行求pi(练习规约)

这个例子比较好。考察了对于各级内存的使用。实质就是数据规约。

注意:

(1)动态申请share memory空间时,需要在<<< >>>的第3个参数处指明空间大小(当然,不能超过share memory的总大小)。然后再kernel函数里,用的时候直接,这样使用:

·extern float __shared__ s_pi[];//

代码:

/*

calculate PI: the usage of reduction.

author:riesman

date: 2017.3.2

*/

#include <stdio.h>

#include <stdlib.h>

#include <math.h>

//for cuda.

#include <cuda_runtime.h>

#include <helper_cuda.h>

#define EPS 1e-3

/* devide kernel*/

__global__ void

innerBlockReduction(float *sum, int num){

int id = blockDim.x * blockIdx.x + threadIdx.x;

int gid = id;

float temp;

int gap;

//TODO:OK

//float __shared__ s_pi[256];//len is:blockDim

extern float __shared__ s_pi[];//len is:blockDim

s_pi[threadIdx.x] = 0.f;

while(gid < num){

#if 0

s_pi[threadIdx.x] += 1;

#endif

#if 1

temp = (gid+0.5f)/num;//horizotal coordicate.

s_pi[threadIdx.x] += 4.f/(1+temp*temp);

#endif

gid += blockDim.x * gridDim.x;

}

gap = blockDim.x >> 1;

for(; gap>0; gap >>= 1){

if(threadIdx.x < gap){

s_pi[threadIdx.x] += s_pi[threadIdx.x + gap];

}

__syncthreads();

}

if(threadIdx.x == 0){

sum[blockIdx.x] = s_pi[0];

//printf("sum[%d]=%f\n",blockIdx.x, sum[blockIdx.x]);

}

}

__global__ void

interBlockReduction(float *sum, int num){

int id = threadIdx.x;

int gap;

extern float __shared__ buffer[];

buffer[id] = sum[id];

__syncthreads();

for(gap = (blockDim.x/2); gap>0; gap=(gap/2)){

if(threadIdx.x < gap){

buffer[threadIdx.x] += buffer[threadIdx.x + gap];

}

__syncthreads();

}

if(threadIdx.x == 0){

//TODO;

//*pi = buffer[0];

sum[0] = buffer[0]/num;

//printf("sum:%f\n", buffer[0]);

}

}

/*host kernel */

float cpuPI(int num){

float sum = 0.f;

int i;

float temp;

for(i=0; i<num; i++){

temp = (i+0.5f)/num;//horizotal coordicate.

sum += 4/(1+temp*temp);

// printf("%f\n", sum);

}

return sum/num;

//return sum;

}

void dealError(cudaError_t* err){

if(*err != cudaSuccess){

printf("%s\n", cudaGetErrorString(cudaGetLastError()));

exit(0);

}

}

int main(){

int num = 1024;

float serialPI = 0.f;

float parallelPI= 0.f;

cudaError_t err = cudaSuccess;

int threadsPerBlock = 256;

//int blocksPerGrid = (num + threadsPerBlock - 1 )/threadsPerBlock;

int blocksPerGrid = num/threadsPerBlock;

printf("blocknum:%d\n", blocksPerGrid);

/*allocate device memory */

float *d_A = NULL;

int size = blocksPerGrid * sizeof(float);

err = cudaMalloc((void ** )&d_A, size);

dealError(&err);

innerBlockReduction<<<blocksPerGrid, threadsPerBlock, sizeof(float)*threadsPerBlock>>>(d_A, num);

err = cudaGetLastError();

dealError(&err);

#if 0

err = cudaMemcpy(h_A, d_A, size, cudaMemcpyDeviceToHost);

dealError(&err);

#endif

int threadsPerBlock2 = blocksPerGrid;

int blocksPerGrid2 = 1;

interBlockReduction<<<blocksPerGrid2, threadsPerBlock2, sizeof(double)*threadsPerBlock2>>>(d_A, num);

//interBlockReduction<<<blocksPerGrid2, threadsPerBlock2>>>(d_A, num, ¶llelPI);

#if 0

err = cudaMemcpy(¶llelPI, &d_parallelPI, sizeof(float), cudaMemcpyDeviceToHost);

dealError(&err);

#endif

#if 1

err = cudaMemcpy(¶llelPI, &d_A[0], sizeof(float), cudaMemcpyDeviceToHost);

dealError(&err);

#endif

/* result validation */

serialPI = cpuPI(num);

printf("serialPI:%f\n", serialPI);

printf("parallelPI:%f\n", parallelPI);

if(fabs(serialPI - parallelPI) > EPS){

printf("have error!\n");

}else

printf("no error!\n");

/* free */

err = cudaFree(d_A);

dealError(&err);

return 0;

}

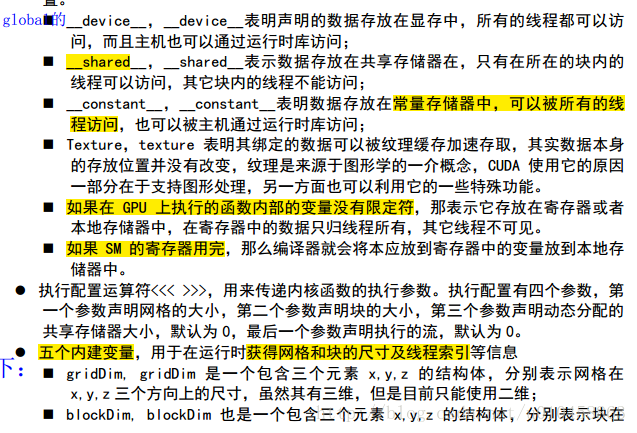

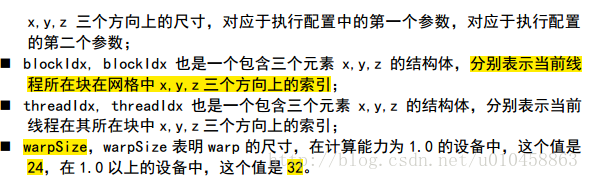

变量的类型

被折叠的 条评论

为什么被折叠?

被折叠的 条评论

为什么被折叠?