转载自:http://blog.csdn.net/ssw_1990/article/details/23911901

1、手工处理分隔符格式

大部分存储在磁盘上的表格型数据都能用pandas.read_table进行加载。然而,有时还是需要做一些手工处理。由于接收到含有畸形行的文件而使read_table出毛病的情况并不少见。为了说明这些基本工具,看看下面这个简单的CSV文件:

- In [1]: !cat ch06/ex7.csv

- "a","b","c"

- "1","2","3"

- "1","2","3","4"

- import csv

- f = open('ch06/ex7.csv')

- reader = csv.reader(f)

- In [1]: for line in reader:

- .....: print line

- ['a', 'b', 'c']

- ['1', '2', '3']

- ['1', '2', '3', '4']

- In [2]: lines = list(csv.reader(open('ch06/ex7.csv')))

- In [3]: header, values = lines[0], lines[1:]

- In [4]: data_dict = {h: v for h, v in zip(header, zip(*values))}

- In [5]: data_dict

- Out[5]: {'a': ('1', '1'), 'b': ('2', '2'), 'c': ('3', '3')}

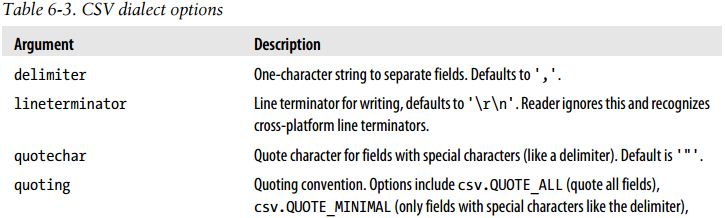

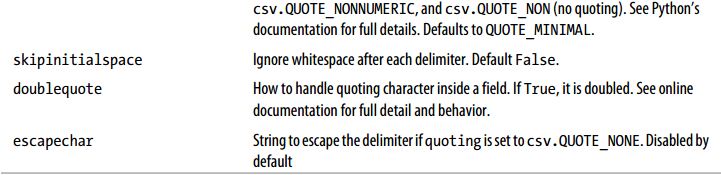

- class my_dialect(csv.Dialect):

- lineterminator = '\n'

- delimiter = ';'

- quotechar = '"'

- reader = csv.reader(f, dialect=my_dialect)

- reader = csv.reader(f, delimiter='|'

注意:

对于那些使用复杂分隔符或多字符分隔符的文件,csv模块就无能为力了。这种情况下,你就只能使用字符串的split方法或正则表达式方法re.split进行行拆分和其他整理工作了。

要手工输出分隔符文件,你可以使用csv.writer。它接受一个已打开且可写的文件对象以及跟csv.reader相同的那些语支和格式化选项:

- with open('mydata.csv', 'w') as f:

- writer = csv.writer(f, dialect=my_dialect)

- writer.writerow(('one', 'two', 'three'))

- writer.writerow(('1', '2', '3'))

- writer.writerow(('4', '5', '6'))

- writer.writerow(('7', '8', '9'))

2、JSON数据

JSON(JavaScript Object Notation的简称)已经成为通过HTTP请求在Web浏览器和其他应用程序之间发送数据的标准格式之一。它是一种比表格型文本格式(如CSV)灵活得多的数据格式。下面是一个例子:

- obj = """

- {"name": "Wes",

- "places_lived": ["United States", "Spain", "Germany"],

- "pet": null,

- "siblings": [{"name": "Scott", "age": 25, "pet": "Zuko"},

- {"name": "Katie", "age": 33, "pet": "Cisco"}]

- }

- """

- In [6]: import json

- In [7]: result = json.loads(obj)

- In [8]: result

- Out[8]:

- {u'name': u'Wes',

- u'pet': None,

- u'places_lived': [u'United States', u'Spain', u'Germany'],

- u'siblings': [{u'age': 25, u'name': u'Scott', u'pet': u'Zuko'},

- {u'age': 33, u'name': u'Katie', u'pet': u'Cisco'}]}

- In [9]: asjson = json.dumps(result)

如何将(一个或一组)JSON对象转换为DataFrame或其他便于分析的数据结构就由你决定了。最简单方便的方式是:向DataFrame构造器传入一组JSON对象,并选取数据字段的子集:

- In [10]: siblings = DataFrame(result['siblings'], columns=['name', 'age'])

- In [11]: siblings

- Out[11]:

- name age

- 0 Scott 25

- 1 Katie 33

3、XML和HTML:Web信息收集

Python有许多可以读写HTML和XML格式数据的库。lxml(http://lxml.de)就是其中之一,它能够高效且可靠地解析大文件。lxml有多个编程接口。首先我要用lxml.html处理HTML,然后再用lxml.objectify做一些XML处理。

许多网站都将数据放到HTML表格中以便在浏览器中查看,但不能以一种更易于机器阅读的格式(如JSON、HTML或XML)进行下载。我发现Yahoo! Finance的股票期权数据就是这样。可能你对这种数据不熟悉:期权是指使你有权从现在开始到未来某个时间(到期日)内以某个特定价格(执行价)买进(看涨期权)或卖出(看跌期权)某公司股票的衍生合约。人们的看涨和看跌期权交易有多种执行价和到期日,这些数据都可以在Yahoo! Finance的各种表格中找到。

首先,找到你希望获取数据的URL,利用urllib2将其打开,然后用lxml解析得到的数据流,如下所示:

- from lxml.html import parse

- from urllib2 import urlopen

- parsed = parse(urlopen('http://finance.yahoo.com/q/op?s=AAPL+Options'))

- doc = parsed.getroot()

- In [1]: links = doc.findall('.//a')

- In [2]: links[15:20]

- Out[2]:

- [<Element a at 0x6c488f0>,

- <Element a at 0x6c48950>,

- <Element a at 0x6c489b0>,

- <Element a at 0x6c48a10>,

- <Element a at 0x6c48a70>]

但这些是表示HTML元素的对象。要得到URL和链接文本,你必须使用各对象的get方法(针对URL)和text_content方法(针对显示文本):

- In [3]: lnk = links[28]

- In [4]: lnk

- Out[4]: <Element a at 0x6c48dd0>

- In [5]: lnk.get('href')

- Out[5]: 'http://biz.yahoo.com/special.html'

- In [6]: lnk.text_content()

- Out[6]: 'Special Editions'

- In [7]: urls = [lnk.get('href') for lnk in doc.findall('.//a')]

- In [8]: urls[-10:]

- Out[8]:

- ['http://info.yahoo.com/privacy/us/yahoo/finance/details.html',

- 'http://info.yahoo.com/relevantads/',

- 'http://docs.yahoo.com/info/terms/',

- 'http://docs.yahoo.com/info/copyright/copyright.html',

- 'http://help.yahoo.com/l/us/yahoo/finance/forms_index.html',

- 'http://help.yahoo.com/l/us/yahoo/finance/quotes/fitadelay.html',

- 'http://help.yahoo.com/l/us/yahoo/finance/quotes/fitadelay.html',

- 'http://www.capitaliq.com',

- 'http://www.csidata.com',

- 'http://www.morningstar.com/']

现在,从文档中找出正确表格的办法就是反复试验了。有些网站会给目标表格加上一个id属性。我确定有两个分别放置看涨数据和看跌数据的表格:

- tables = doc.findall('.//table')

- calls = tables[9]

- puts = tables[13]

- In [7]: rows = calls.findall('.//tr')

对于标题行和数据行,我们希望获取每个单元格内的文本。对于标题行,就是th单元格,而对于数据行,则是td单元格:

- def _unpack(row, kind='td'):

- elts = row.findall('.//%s' % kind)

- return [val.text_content() for val in elts]

- In [8]: _unpack(rows[0], kind='th')

- Out[8]: ['Strike', 'Symbol', 'Last', 'Chg', 'Bid', 'Ask', 'Vol', 'Open Int']

- In [9]: _unpack(rows[1], kind='td')

- Out[9]:

- ['295.00',

- 'AAPL120818C00295000',

- '310.40',

- ' 0.00',

- '289.80',

- '290.80',

- '1',

- '169']

- from pandas.io.parsers import TextParser

- def parse_options_data(table):

- rows = table.findall('.//tr')

- header = _unpack(rows[0], kind='th')

- data = [_unpack(r) for r in rows[1:]]

- return TextParser(data, names=header).get_chunk()

- In [10]: call_data = parse_options_data(calls)

- In [11]: put_data = parse_options_data(puts)

- In [12]: call_data[:10]

- Out[12]:

- Strike Symbol Last Chg Bid Ask Vol Open Int

- 0 295 AAPL120818C00295000 310.40 0.0 289.80 290.80 1 169

- 1 300 AAPL120818C00300000 277.10 1.7 284.80 285.60 2 478

- 2 305 AAPL120818C00305000 300.97 0.0 279.80 280.80 10 316

- 3 310 AAPL120818C00310000 267.05 0.0 274.80 275.65 6 239

- 4 315 AAPL120818C00315000 296.54 0.0 269.80 270.80 22 88

- 5 320 AAPL120818C00320000 291.63 0.0 264.80 265.80 96 173

- 6 325 AAPL120818C00325000 261.34 0.0 259.80 260.80 N/A 108

- 7 330 AAPL120818C00330000 230.25 0.0 254.80 255.80 N/A 21

- 8 335 AAPL120818C00335000 266.03 0.0 249.80 250.65 4 46

- 9 340 AAPL120818C00340000 272.58 0.0 244.80 245.80 4 30

4、利用lxml.objectify解析XML

XML(Extensible Markup Language)是另一种常见的支持分层、嵌套数据以及元数据的结构化数据格式。之前,我介绍了lxml库及其lxml.html接口。这里我将介绍另一个用于操作XML数据的接口,即lxml.objectify。

纽约大都会运输署(MTA)发布了一些有关其公交和列车服务的数据资料(http://www.mta.info/developers/download.html)。这里,我们将看看包含在一组XML文件中的运行情况数据。每项列车或公交服务都有各自的文件(如Metro-North Railroad的文件是Performance_MNR.xml),其中每条XML记录就是一条月度数据,如下所示:

- <INDICATOR>

- <INDICATOR_SEQ>373889</INDICATOR_SEQ>

- <PARENT_SEQ></PARENT_SEQ>

- <AGENCY_NAME>Metro-North Railroad</AGENCY_NAME>

- <INDICATOR_NAME>Escalator Availability</INDICATOR_NAME>

- <DESCRIPTION>Percent of the time that escalators are operational

- systemwide. The availability rate is based on physical observations performed

- the morning of regular business days only. This is a new indicator the agency

- began reporting in 2009.</DESCRIPTION>

- <PERIOD_YEAR>2011</PERIOD_YEAR>

- <PERIOD_MONTH>12</PERIOD_MONTH>

- <CATEGORY>Service Indicators</CATEGORY>

- <FREQUENCY>M</FREQUENCY>

- <DESIRED_CHANGE>U</DESIRED_CHANGE>

- <INDICATOR_UNIT>%</INDICATOR_UNIT>

- <DECIMAL_PLACES>1</DECIMAL_PLACES>

- <YTD_TARGET>97.00</YTD_TARGET>

- <YTD_ACTUAL></YTD_ACTUAL>

- <MONTHLY_TARGET>97.00</MONTHLY_TARGET>

- <MONTHLY_ACTUAL></MONTHLY_ACTUAL>

- </INDICATOR>

- from lxml import objectify

- path = 'Performance_MNR.xml'

- parsed = objectify.parse(open(path))

- root = parsed.getroot()

- data = []

- skip_fields = ['PARENT_SEQ', 'INDICATOR_SEQ', 'DESIRED_CHANGE', 'DECIMAL_PLACES']

- for elt in root.INDICATOR:

- el_data = {}

- for child in elt.getchildren():

- if child.tag in skip_fields:

- continue

- el_data[child.tag] = child.pyval

- data.append(el_data)

最后,将这组字典转换为一个DataFrame:

- In [13]: perf = DataFrame(data)

- In [14]: perf

- Out[14]:

- Empty DataFrame

- Columns: array([], dtype=int64)

- Index: array([], dtype=int64)

- from StringIO import StringIO

- tag = '<a href="http://www.google.com">Google</a>'

- root = objectify.parse(StringIO(tag)).getroot()

- In [15]: root

- Out[15]: <Element a at 0x88bd4b0>

- In [16]: root.get('href')

- Out[16]: 'http://www.google.com'

- In [17]: root.text

- Out[17]: 'Google'

432

432

被折叠的 条评论

为什么被折叠?

被折叠的 条评论

为什么被折叠?