1、How the Algorithm Works

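Given a training dataset and an input dataset (the data to be classified or regressed), kNN first finds, for each input instance, the k training instances closest to it. It then predicts the input instance's class as the majority class among those k neighbors. For regression, the prediction is usually the average of the k neighbors' target values.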
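The procedure just described can be sketched in a few lines of NumPy. This is a minimal brute-force sketch that assumes Euclidean distance. The function name `knn_predict` and its `task` parameter are hypothetical, and averaging the neighbors' targets for regression is the common convention rather than something this article specifies.

```python
import numpy as np
from collections import Counter

def knn_predict(X_train, y_train, x, k=3, task="classification"):
    """Predict the output for one query point x with brute-force kNN (sketch).

    Assumes Euclidean distance; majority vote for classification,
    mean of the neighbors' targets for regression.
    """
    X_train = np.asarray(X_train, dtype=float)
    x = np.asarray(x, dtype=float)
    # Euclidean distance from x to every training instance (broadcasting)
    dists = np.sqrt(((X_train - x) ** 2).sum(axis=1))
    # indices of the k closest training instances
    nearest = np.argsort(dists)[:k]
    neighbor_targets = [y_train[i] for i in nearest]
    if task == "classification":
        # majority vote; on a tie, most_common keeps the label seen first,
        # i.e. the label of the closer neighbor
        return Counter(neighbor_targets).most_common(1)[0][0]
    # regression: average of the k neighbors' target values
    return float(np.mean(neighbor_targets))

X = [[1.0, 1.1], [1.0, 1.0], [0.0, 0.0], [0.0, 0.1]]
print(knn_predict(X, ["A", "A", "B", "B"], [0.0, 0.2], k=3))
```

Usage: the three nearest points to `[0.0, 0.2]` carry the labels B, B, A, so the vote returns `"B"`. With `task="regression"` and numeric targets, the same neighbors would be averaged instead.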

It follows that the k-nearest-neighbor algorithm has three key elements:

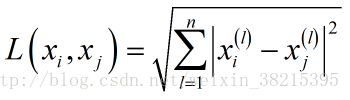

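Distance metric: this is usually the Euclidean distance. Other distances can also be used, such as the general Lp distance, which is also called the Minkowski distance. The Euclidean distance is the special case p = 2.

Choice of k: the value of k strongly affects the result of kNN. With a small k, only training instances very close to the input instance influence the prediction. The drawback is that the prediction becomes highly sensitive to those few nearest points, so a single noisy neighbor can change it. With a large k, training instances far from the input instance also influence the prediction. Because those instances are less similar to the input, they can make the prediction wrong. In practice k is usually set to a fairly small value, and the best k is typically chosen by cross-validation.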
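For reference, the Lp distance named under the distance metric above is usually written as follows. The symbols are chosen here for illustration: x_i and x_j are n-dimensional feature vectors and x_i^{(l)} is the l-th component. The formula in the original figure is not reproduced.

```latex
L_p(x_i, x_j) = \left( \sum_{l=1}^{n} \left| x_i^{(l)} - x_j^{(l)} \right|^{p} \right)^{1/p}, \qquad p \ge 1

% p = 2: Euclidean distance
L_2(x_i, x_j) = \left( \sum_{l=1}^{n} \left| x_i^{(l)} - x_j^{(l)} \right|^{2} \right)^{1/2}

% p = 1: Manhattan distance
L_1(x_i, x_j) = \sum_{l=1}^{n} \left| x_i^{(l)} - x_j^{(l)} \right|

% p -> infinity: the largest coordinate difference
L_\infty(x_i, x_j) = \max_{l} \left| x_i^{(l)} - x_j^{(l)} \right|
```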

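Classification decision rule: usually majority voting. The input instance is assigned the class that occurs most often among its k nearest training instances.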
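Choosing k by cross-validation and voting by majority can be combined in a small experiment. The sketch below is an illustration and not the author's code. It uses leave-one-out cross-validation, where each sample is classified by its k nearest *other* samples, together with Euclidean distance and majority voting. The function names `majority_vote`, `loo_error_rate` and `choose_k` are hypothetical.

```python
import numpy as np
from collections import Counter

def majority_vote(labels):
    # most common label; ties go to the label seen first (Counter.most_common)
    return Counter(labels).most_common(1)[0][0]

def loo_error_rate(X, y, k):
    """Leave-one-out error rate of a Euclidean, majority-vote kNN classifier."""
    X = np.asarray(X, dtype=float)
    n = len(X)
    # pairwise squared Euclidean distances (the sqrt does not change the ranking)
    d2 = ((X[:, None, :] - X[None, :, :]) ** 2).sum(axis=2)
    # a point must not vote for itself
    np.fill_diagonal(d2, np.inf)
    errors = 0
    for i in range(n):
        nearest = np.argsort(d2[i])[:k]
        if majority_vote([y[j] for j in nearest]) != y[i]:
            errors += 1
    return errors / n

def choose_k(X, y, candidate_ks):
    """Return (best k, {k: error}); the smaller k wins when errors tie."""
    errors = {k: loo_error_rate(X, y, k) for k in candidate_ks}
    return min(candidate_ks, key=lambda k: (errors[k], k)), errors

# two 1-D clusters, with one mislabeled point (1.5, "b") inside cluster "a"
X = [[v] for v in [0, 1, 2, 3, 1.5, 10, 11, 12, 13]]
y = ["a", "a", "a", "a", "b", "b", "b", "b", "b"]
best_k, errs = choose_k(X, y, [1, 3])
print(best_k, errs)
```

In this toy data, k = 1 lets the noisy point spread its label to its immediate neighbors, giving 3 errors out of 9. With k = 3 the point is outvoted, giving 1 error out of 9. This matches the sensitivity to single neighbors described above. k-fold cross-validation is the more common choice for larger datasets.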

2、Implementing kNN in Python (without sklearn)

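Version: Python 3.6. The dataset is listed at the end of this article, and all programs can be downloaded from my Gitee (码云) repository.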

2.1、Reading the data

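Reading data in Python once left me quite confused, but I eventually sorted it out. If you are similarly confused, see my post "python .txt文件读取及数据处理" (Reading .txt files and processing the data in Python).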
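The createDataSet function in kNN.py calls `random.random()` once per line to decide the split. As a result, the split changes on every run and the training-set size is only approximately `split` × N. If a repeatable split is wanted, one option is to shuffle with a seeded `random.Random` and then slice. The following is a minimal sketch of that approach, assuming the comma-separated `features...,label` format of the dataset at the end of this article. The function name `load_and_split` and its `seed` parameter are hypothetical.

```python
import random

def load_and_split(lines, train_ratio=0.7, seed=0):
    """Parse 'f1,f2,...,label' lines and split them reproducibly (sketch).

    Blank lines are skipped. Shuffling with a fixed seed and slicing gives
    the same split, of an exact size, on every run.
    """
    rows = []
    for line in lines:
        parts = line.strip().split(',')
        if len(parts) < 2:            # skip blank or malformed lines
            continue
        rows.append(([float(v) for v in parts[:-1]], parts[-1]))
    rng = random.Random(seed)         # local RNG; global random state is untouched
    rng.shuffle(rows)
    n_train = int(round(train_ratio * len(rows)))
    return rows[:n_train], rows[n_train:]
```

Usage: `with open("iris.txt") as f: train, test = load_and_split(f, 0.7, seed=42)`. Each element of `train` and `test` is a `(feature_list, label)` pair.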

The code is as follows. It is stored in a file named kNN.py:

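from numpy import *
import operator
import random

'''Python implementation of kNN: read the file and return the data sets as lists'''
def createDataSet(filename, split):
    trainingSet = []
    testSet = []
    with open(filename) as csvfile:
        lines = csvfile.readlines()
        for line in lines:
            lineData = line.strip().split(',')
            # skip blank lines, e.g. a trailing empty line at the end of the file
            if len(lineData) < 2:
                continue
            # put each row into the training set with probability `split`
            if random.random() < split:
                trainingSet.append(lineData)
            else:
                testSet.append(lineData)
    return trainingSet, testSet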

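'''Split each row into its feature values and its label; return both as np.ndarray'''
def dataLabel(dataSet):
    data = []
    label = []
    # every row: all columns except the last are features, the last is the label
    for row in dataSet:
        data.append([float(tk) for tk in row[:-1]])
        label.append(row[-1])
    return array(data), array(label)

2.2、kNN implementation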

This code is also stored in kNN.py.

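def classify0(inX, dataSet, label, k):
    # number of rows of the array (shape[1] would give the number of columns)
    dataSetSize = dataSet.shape[0]
    # difference between the query and every known sample;
    # tile repeats inX dataSetSize times along the rows
    diffMat = tile(inX, (dataSetSize, 1)) - dataSet
    # square the differences
    sqDiffMat = diffMat**2
    # sum each row (axis=1)
    Distances = sqDiffMat.sum(axis=1)
    # take the square root to get the Euclidean distances
    sqrtDis = Distances**0.5
    # indices that sort the distances in ascending order
    sortedIndex = sqrtDis.argsort()
    # dictionary of vote counts per label
    classCount = {}
    for i in range(k):
        # label of the i-th nearest point
        voteILabel = label[sortedIndex[i]]
        # dict.get(key, default=None) returns the value for key, or default if key is absent
        classCount[voteILabel] = classCount.get(voteILabel, 0) + 1
    # sort labels by vote count, descending (Python 2 code used classCount.iteritems())
    sortedClassCount = sorted(classCount.items(), key=operator.itemgetter(1), reverse=True)
    return sortedClassCount[0][0]

2.3、Main function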

Assign the variables, then call the functions above to classify the test set and compute the error rate.

#__author__=='qustl_000'
#-*- coding: utf-8 -*-
import kNN
from numpy import *

'''kNN implemented with numpy'''
trainingData, testData = kNN.createDataSet(r"iris.txt", 0.7)
trainCharacter, trainLabel = kNN.dataLabel(trainingData)
testCharacter, testLabel = kNN.dataLabel(testData)

'''Compute the error rate'''
errorCount = 0
for i in range(len(testCharacter)):
    classifyResult = kNN.classify0(testCharacter[i], trainCharacter, trainLabel, 3)
    if classifyResult != testLabel[i]:
        errorCount += 1
# fraction of misclassified test samples
errorRate = errorCount / len(testCharacter)
print(errorRate)

3、Implementing kNN with the sklearn library

This part was written by following the official sklearn.neighbors documentation.
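The NearestNeighbors, KDTree and BallTree examples all search for the 3 nearest training points to each query in the toy arrays `X_train` and `x_test`. To show what that search returns, the same neighbors can be computed by brute force with NumPy as a cross-check. The helper `brute_force_kneighbors` is hypothetical. For the first query point, training points 0 and 3 are both at distance 2. Because of this tie, sklearn may list those two indices in either order.

```python
import numpy as np

# the same toy data as in the sklearn examples
X_train = np.array([[-1, -1], [-2, -1], [-3, -2], [1, 1], [2, 1], [3, 2]])
x_test = np.array([[-1, 1], [-1, 0]])

def brute_force_kneighbors(X, queries, k):
    """Return (distances, indices) of the k nearest rows of X for each query row."""
    # (n_queries, n_train) matrix of Euclidean distances via broadcasting
    d = np.sqrt(((queries[:, None, :] - X[None, :, :]) ** 2).sum(axis=2))
    # stable sort: among equal distances the lower training index comes first
    idx = np.argsort(d, axis=1, kind="stable")[:, :k]
    return np.take_along_axis(d, idx, axis=1), idx

distances, indices = brute_force_kneighbors(X_train, x_test, k=3)
print(indices)
# [[0 3 1]
#  [0 1 3]]
```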
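KDTree and BallTree are space-partitioning indexes that avoid comparing each query with every training point. The following minimal 1-nearest-neighbor kd-tree makes the kd-tree idea concrete: it splits at the median and cycles through the axes. It is a teaching sketch and not how sklearn implements KDTree. sklearn, for example, switches to brute force inside leaf nodes whose size is governed by `leaf_size`. The names `KDNode`, `build_kdtree` and `nearest` are hypothetical.

```python
import numpy as np

class KDNode:
    """One tree node: a training point, its row index, and its splitting axis."""
    def __init__(self, point, index, axis, left, right):
        self.point, self.index, self.axis = point, index, axis
        self.left, self.right = left, right

def build_kdtree(points, indices=None, depth=0):
    """Recursively build a kd-tree, splitting on the axes in turn at the median."""
    points = np.asarray(points, dtype=float)
    if indices is None:
        indices = np.arange(len(points))
    if len(indices) == 0:
        return None
    axis = depth % points.shape[1]
    # sort the current subset along the splitting axis and take the median
    order = indices[np.argsort(points[indices, axis], kind="stable")]
    mid = len(order) // 2
    return KDNode(points[order[mid]], int(order[mid]), axis,
                  build_kdtree(points, order[:mid], depth + 1),
                  build_kdtree(points, order[mid + 1:], depth + 1))

def nearest(node, query, best=None):
    """Return (squared distance, row index) of the training point closest to query."""
    if node is None:
        return best
    d2 = float(((node.point - query) ** 2).sum())
    if best is None or d2 < best[0]:
        best = (d2, node.index)
    diff = query[node.axis] - node.point[node.axis]
    # descend first into the side of the splitting plane that contains the query
    near, far = (node.left, node.right) if diff < 0 else (node.right, node.left)
    best = nearest(near, query, best)
    # the far side can only hold a closer point if the plane is nearer than the best so far
    if diff ** 2 < best[0]:
        best = nearest(far, query, best)
    return best

tree = build_kdtree([[-1, -1], [-2, -1], [-3, -2], [1, 1], [2, 1], [3, 2]])
print(nearest(tree, np.array([-1.0, 0.0])))
```

The pruning test is what makes the tree faster than brute force. A whole subtree is skipped whenever the splitting plane lies farther away than the best distance found so far.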

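'''**************************** kNN with sklearn *****************************'''
'''**************** Finding the nearest neighbors *****************'''
from numpy import array
from sklearn.neighbors import NearestNeighbors

# training points and query points
X_train = array([[-1, -1], [-2, -1], [-3, -2], [1, 1], [2, 1], [3, 2]])
x_test = array([[-1, 1], [-1, 0]])

def findNeighbors():
    '''
    NearestNeighbors parameters:
    (1) n_neighbors=5: the default number k of nearest neighbors to query
    (2) algorithm='auto': the algorithm used to compute the nearest neighbors
        ('ball_tree', 'kd_tree' or 'brute'); 'auto' picks the most suitable one for the data
    (3) fit(X): fit the model on the training data X
    '''
    nbrs = NearestNeighbors(n_neighbors=3, algorithm='ball_tree').fit(X_train)
    # for each query point: distances to its k nearest points, and their indices (row numbers in X_train)
    distances, indices = nbrs.kneighbors(x_test)
    print(distances)
    print(indices)
    # kneighbors_graph returns a sparse connectivity matrix (made dense with toarray()):
    # 1 means the training point is one of the query point's k nearest neighbors, 0 means it is not
    print(nbrs.kneighbors_graph(x_test).toarray())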

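'''******************** Testing KDTree *********************'''
def TestKDtree():
    from sklearn.neighbors import KDTree
    '''
    leaf_size: number of points at which the tree switches to brute force (controls the size of the leaf nodes)
    metric: the distance metric; the default is 'minkowski' (Euclidean when p=2), here 'euclidean'
    '''
    kdtree = KDTree(X_train, leaf_size=30, metric='euclidean')
    # return_distance=False: return only the indices of the k nearest neighbors
    print(kdtree.query(x_test, k=3, return_distance=False))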

'''******************** Testing BallTree *********************'''
def TestBallTree():
    from sklearn.neighbors import BallTree
    Btree = BallTree(X_train, leaf_size=30, metric='euclidean')
    print(Btree.query(x_test, k=3, return_distance=False))

'''******************** sklearn kNN classification example *********************'''
def classifyIris():
    from sklearn.datasets import load_iris
    from sklearn import neighbors
    # load the iris dataset
    iris = load_iris()
    #print(iris)
    '''
    KNeighborsClassifier(n_neighbors=5, weights='uniform', algorithm='auto',
                         leaf_size=30, p=2, metric='minkowski', metric_params=None,
                         n_jobs=1, **kwargs)
    '''
    knn = neighbors.KNeighborsClassifier(n_neighbors=3, algorithm='kd_tree')
    # train on the full dataset
    knn.fit(iris.data, iris.target)
    # classify a new sample
    predict = knn.predict([[0.1, 0.2, 0.3, 0.4]])
    print(predict)
    # map the predicted class index to the class name
    print(iris.target_names[predict])

if __name__ == '__main__':
    findNeighbors()
    TestKDtree()
    TestBallTree()
    classifyIris()

4、Dataset

5.1,3.5,1.4,0.2,Iris-setosa

4.9,3.0,1.4,0.2,Iris-setosa

4.7,3.2,1.3,0.2,Iris-setosa

4.6,3.1,1.5,0.2,Iris-setosa

5.0,3.6,1.4,0.2,Iris-setosa

5.4,3.9,1.7,0.4,Iris-setosa

4.6,3.4,1.4,0.3,Iris-setosa

5.0,3.4,1.5,0.2,Iris-setosa

4.4,2.9,1.4,0.2,Iris-setosa

4.9,3.1,1.5,0.1,Iris-setosa

5.4,3.7,1.5,0.2,Iris-setosa

4.8,3.4,1.6,0.2,Iris-setosa

4.8,3.0,1.4,0.1,Iris-setosa

4.3,3.0,1.1,0.1,Iris-setosa

5.8,4.0,1.2,0.2,Iris-setosa

5.7,4.4,1.5,0.4,Iris-setosa

5.4,3.9,1.3,0.4,Iris-setosa

5.1,3.5,1.4,0.3,Iris-setosa

5.7,3.8,1.7,0.3,Iris-setosa

5.1,3.8,1.5,0.3,Iris-setosa

5.4,3.4,1.7,0.2,Iris-setosa

5.1,3.7,1.5,0.4,Iris-setosa

4.6,3.6,1.0,0.2,Iris-setosa

5.1,3.3,1.7,0.5,Iris-setosa

4.8,3.4,1.9,0.2,Iris-setosa

5.0,3.0,1.6,0.2,Iris-setosa

5.0,3.4,1.6,0.4,Iris-setosa

5.2,3.5,1.5,0.2,Iris-setosa

5.2,3.4,1.4,0.2,Iris-setosa

4.7,3.2,1.6,0.2,Iris-setosa

4.8,3.1,1.6,0.2,Iris-setosa

5.4,3.4,1.5,0.4,Iris-setosa

5.2,4.1,1.5,0.1,Iris-setosa

5.5,4.2,1.4,0.2,Iris-setosa

4.9,3.1,1.5,0.1,Iris-setosa

5.0,3.2,1.2,0.2,Iris-setosa

5.5,3.5,1.3,0.2,Iris-setosa

4.9,3.1,1.5,0.1,Iris-setosa

4.4,3.0,1.3,0.2,Iris-setosa

5.1,3.4,1.5,0.2,Iris-setosa

5.0,3.5,1.3,0.3,Iris-setosa

4.5,2.3,1.3,0.3,Iris-setosa

4.4,3.2,1.3,0.2,Iris-setosa

5.0,3.5,1.6,0.6,Iris-setosa

5.1,3.8,1.9,0.4,Iris-setosa

4.8,3.0,1.4,0.3,Iris-setosa

5.1,3.8,1.6,0.2,Iris-setosa

4.6,3.2,1.4,0.2,Iris-setosa

5.3,3.7,1.5,0.2,Iris-setosa

5.0,3.3,1.4,0.2,Iris-setosa

7.0,3.2,4.7,1.4,Iris-versicolor

6.4,3.2,4.5,1.5,Iris-versicolor

6.9,3.1,4.9,1.5,Iris-versicolor

5.5,2.3,4.0,1.3,Iris-versicolor

6.5,2.8,4.6,1.5,Iris-versicolor

5.7,2.8,4.5,1.3,Iris-versicolor

6.3,3.3,4.7,1.6,Iris-versicolor

4.9,2.4,3.3,1.0,Iris-versicolor

6.6,2.9,4.6,1.3,Iris-versicolor

5.2,2.7,3.9,1.4,Iris-versicolor

5.0,2.0,3.5,1.0,Iris-versicolor

5.9,3.0,4.2,1.5,Iris-versicolor

6.0,2.2,4.0,1.0,Iris-versicolor

6.1,2.9,4.7,1.4,Iris-versicolor

5.6,2.9,3.6,1.3,Iris-versicolor

6.7,3.1,4.4,1.4,Iris-versicolor

5.6,3.0,4.5,1.5,Iris-versicolor

5.8,2.7,4.1,1.0,Iris-versicolor

6.2,2.2,4.5,1.5,Iris-versicolor

5.6,2.5,3.9,1.1,Iris-versicolor

5.9,3.2,4.8,1.8,Iris-versicolor

6.1,2.8,4.0,1.3,Iris-versicolor

6.3,2.5,4.9,1.5,Iris-versicolor

6.1,2.8,4.7,1.2,Iris-versicolor

6.4,2.9,4.3,1.3,Iris-versicolor

6.6,3.0,4.4,1.4,Iris-versicolor

6.8,2.8,4.8,1.4,Iris-versicolor

6.7,3.0,5.0,1.7,Iris-versicolor

6.0,2.9,4.5,1.5,Iris-versicolor

5.7,2.6,3.5,1.0,Iris-versicolor

5.5,2.4,3.8,1.1,Iris-versicolor

5.5,2.4,3.7,1.0,Iris-versicolor

5.8,2.7,3.9,1.2,Iris-versicolor

6.0,2.7,5.1,1.6,Iris-versicolor

5.4,3.0,4.5,1.5,Iris-versicolor

6.0,3.4,4.5,1.6,Iris-versicolor

6.7,3.1,4.7,1.5,Iris-versicolor

6.3,2.3,4.4,1.3,Iris-versicolor

5.6,3.0,4.1,1.3,Iris-versicolor

5.5,2.5,4.0,1.3,Iris-versicolor

5.5,2.6,4.4,1.2,Iris-versicolor

6.1,3.0,4.6,1.4,Iris-versicolor

5.8,2.6,4.0,1.2,Iris-versicolor

5.0,2.3,3.3,1.0,Iris-versicolor

5.6,2.7,4.2,1.3,Iris-versicolor

5.7,3.0,4.2,1.2,Iris-versicolor

5.7,2.9,4.2,1.3,Iris-versicolor

6.2,2.9,4.3,1.3,Iris-versicolor

5.1,2.5,3.0,1.1,Iris-versicolor

5.7,2.8,4.1,1.3,Iris-versicolor

6.3,3.3,6.0,2.5,Iris-virginica

5.8,2.7,5.1,1.9,Iris-virginica

7.1,3.0,5.9,2.1,Iris-virginica

6.3,2.9,5.6,1.8,Iris-virginica

6.5,3.0,5.8,2.2,Iris-virginica

7.6,3.0,6.6,2.1,Iris-virginica

4.9,2.5,4.5,1.7,Iris-virginica

7.3,2.9,6.3,1.8,Iris-virginica

6.7,2.5,5.8,1.8,Iris-virginica

7.2,3.6,6.1,2.5,Iris-virginica

6.5,3.2,5.1,2.0,Iris-virginica

6.4,2.7,5.3,1.9,Iris-virginica

6.8,3.0,5.5,2.1,Iris-virginica

5.7,2.5,5.0,2.0,Iris-virginica

5.8,2.8,5.1,2.4,Iris-virginica

6.4,3.2,5.3,2.3,Iris-virginica

6.5,3.0,5.5,1.8,Iris-virginica

7.7,3.8,6.7,2.2,Iris-virginica

7.7,2.6,6.9,2.3,Iris-virginica

6.0,2.2,5.0,1.5,Iris-virginica

6.9,3.2,5.7,2.3,Iris-virginica

5.6,2.8,4.9,2.0,Iris-virginica

7.7,2.8,6.7,2.0,Iris-virginica

6.3,2.7,4.9,1.8,Iris-virginica

6.7,3.3,5.7,2.1,Iris-virginica

7.2,3.2,6.0,1.8,Iris-virginica

6.2,2.8,4.8,1.8,Iris-virginica

6.1,3.0,4.9,1.8,Iris-virginica

6.4,2.8,5.6,2.1,Iris-virginica

7.2,3.0,5.8,1.6,Iris-virginica

7.4,2.8,6.1,1.9,Iris-virginica

7.9,3.8,6.4,2.0,Iris-virginica
