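I'm on Python 3.2, and the lookup data comes from Bing search results.

The httplib2 and bs4 modules are third-party; both are easy to find online.

I went with httplib2 because it handles compressed responses, which the default standard-library HTTP client does not.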
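
To show what httplib2 is saving me here: with a client that does not decompress for you, the body has to be decoded by hand according to its `Content-Encoding` header. Below is a rough sketch of that step using only the standard library. The helper name `decode_body` is made up for this example and is not part of httplib2 or urllib.

```python
import gzip
import zlib


def decode_body(body, content_encoding):
    """Return the decompressed bytes of an HTTP response body.

    `decode_body` is a hypothetical helper written for this sketch.
    `content_encoding` is the value of the Content-Encoding header, or None.
    """
    encoding = (content_encoding or '').strip().lower()
    if encoding == 'gzip':
        return gzip.decompress(body)
    if encoding == 'deflate':
        try:
            # Standard case: deflate data wrapped in a zlib header.
            return zlib.decompress(body)
        except zlib.error:
            # Some servers send raw deflate data without the zlib header.
            return zlib.decompress(body, -zlib.MAX_WBITS)
    # No (or unknown) encoding: hand the bytes back untouched.
    return body


if __name__ == '__main__':
    packed = gzip.compress(b'<html>ok</html>')
    print(decode_body(packed, 'gzip'))
```

With httplib2 none of this is needed, because the content returned by `request()` has already been decompressed.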

import sys,os
from bs4 import BeautifulSoup
import httplib2
import urllib.parse as up

# Target IP comes from the command line.
ip=sys.argv[1]
# Work in the script's own directory so dns1.txt is written next to it.
os.chdir(sys.path[0])

url=r'http://cn.bing.com/search?count=100&q=ip:'
httphead={'User-Agent':'Mozilla/4.0 (compatible; MSIE 8.0; Windows NT 5.1; Trident/4.0; User-agent: Mozilla/4.0 (compatible; MSIE 6.0; Windows NT 5.1; SV1; http://bsalsa.com) ; .NET CLR 2.0.50727; .NET CLR 3.0.4506.2152)',
    'Cookie':'SRCHUID=V=2&GUID=79E9F92F75B54E60B4588D130264EFD4; MUID=0A81369FC80C6E532B69359EC9026E42; SRCHD=SM=1&MS=2196069&D=2160426&AF=NOFORM; SRCHUSR=AUTOREDIR=0&GEOVAR=&DOB=20120209; _SS=SID=C8C39DCC3EA342E2859C472E445A1BEC; _UR=D=0; RMS=F=O&A=Q; SCRHDN=ASD=0&DURL=#',
    'Referer':'http://cn.bing.com/'}

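def get(ip):
    # Ask Bing for pages hosted on this IP and collect the distinct hostnames.
    h=httplib2.Http()
    res,cont=h.request(url+ip,headers=httphead)
    soup=BeautifulSoup(cont,'html.parser')
    dit=dict()
    for i in soup.findAll('div',attrs={'class':'sb_tlst'}):
        # Skip result blocks that have no usable link.
        if i.a is not None and i.a.get('href'):
            dit[up.urlparse(i.a['href']).netloc]=i.a.text
    # Open the log once instead of reopening it for every line.
    with open(r'dns1.txt','at',encoding='utf8') as log:
        log.write('--------'+ip+'-----------\n')
        for i in dit:
            try:
                print(i,dit[i])
            except UnicodeEncodeError:
                # The console cannot display the title; print an escaped form.
                print(i,dit[i].encode('ascii','backslashreplace').decode('ascii'))
            print(i,dit[i],file=log)
        log.write('-------------------\n\n\n')

get(ip)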

# Disabled: scan a whole /24 instead of a single IP.
'''
ip='98.126.110.'
for i in range(1,256):
    print("Processing:",ip+str(i))
    get(ip+str(i))
'''

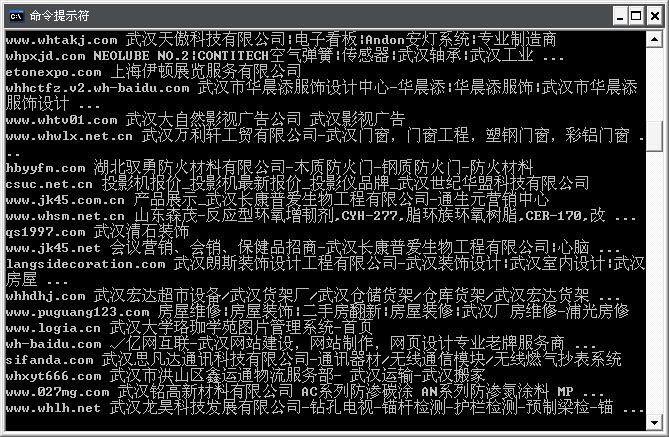
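
The part of the script that does the real work is keying the dictionary by `netloc`: several search results from the same site collapse into one entry. Here is a small standalone sketch of just that step, with no network access. The helper name `hosts_from_links`, the `(href, title)` input shape, and the lowercasing of hostnames are my own assumptions for illustration; the script above does not lowercase.

```python
import urllib.parse as up


def hosts_from_links(links):
    """Map each distinct hostname to the title of the last link that used it.

    `links` is an iterable of (href, title) pairs. Both the helper and the
    input shape are hypothetical, chosen only to keep the sketch testable.
    """
    hosts = {}
    for href, title in links:
        # netloc is the "host[:port]" part of the URL; it is empty for
        # relative links, which carry no hostname and are skipped.
        netloc = up.urlparse(href).netloc.lower()
        if netloc:
            hosts[netloc] = title
    return hosts


if __name__ == '__main__':
    sample = [
        ('http://www.example.com/a.html', 'Page A'),
        ('http://www.example.com/b.html', 'Page B'),
        ('http://shop.example.org/', 'Shop'),
    ]
    for host, title in sorted(hosts_from_links(sample).items()):
        print(host, title)
```

As in the original script, a later result for the same host overwrites the earlier title, so each host appears once.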

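Below is a second example that does everything above and also runs wwwscan automatically. It uses a thread pool, and the number of threads can be changed.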

import httplib2,os,queue,threading,argparse,sys,socket,time,iptools
from bs4 import BeautifulSoup
import urllib.parse as up

wwwscanpath=r'D:\TOOLS\tools\cgilist'

parser=argparse.ArgumentParser(description='Python automated reverse-IP (shared-host) scanning tool \n\t\t\t by Yatere')
parser.add_argument('-ip',metavar='StartIP StopIP',help='The target IP address Range',nargs='+',default=None)
parser.add_argument('-dir',help="The wwwscan's DIR(default:%s)"%wwwscanpath,default=wwwscanpath)
parser.add_argument('-no',help='Thread Number',type=int,default=3)
arg=parser.parse_args()

# -ip needs a start and a stop address; show the help text otherwise.
# (Checking for None first avoids a TypeError when -ip is omitted.)
if arg.ip is None or len(arg.ip)<2:
    parser.print_help()
    sys.exit()

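# Hostnames found across all scanned IPs, shared with the scan stage below.
dit=dict()

class wscan(threading.Thread):
    # Worker thread: runs queued commands until the queue is drained.
    def __init__(self,que):
        super(wscan,self).__init__()
        self.queue=que
        self.start()

    def run(self):
        while True:
            # get_nowait() avoids the race between empty() and get(), where
            # another worker takes the last item and this one blocks forever.
            try:
                foo=self.queue.get_nowait()
            except queue.Empty:
                break
            os.system(foo)
            self.queue.task_done()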

def getrdom(ip):
    url=r'http://cn.bing.com/search?count=100&q=ip:'+ip
    httphead={'User-Agent':'Mozilla/4.0 (compatible; MSIE 8.0; Windows NT 5.1; Trident/4.0; User-agent: Mozilla/4.0 (compatible; MSIE 6.0; Windows NT 5.1; SV1; http://bsalsa.com) ; .NET CLR 2.0.50727; .NET CLR 3.0.4506.2152)',
        'Cookie':'SRCHUID=V=2&GUID=79E9F92F75B54E60B4588D130264EFD4; MUID=0A81369FC80C6E532B69359EC9026E42; SRCHD=SM=1&MS=2196069&D=2160426&AF=NOFORM; SRCHUSR=AUTOREDIR=0&GEOVAR=&DOB=20120209; _SS=SID=C8C39DCC3EA342E2859C472E445A1BEC; _UR=D=0; RMS=F=O&A=Q; SCRHDN=ASD=0&DURL=#',
        'Referer':'http://cn.bing.com/'}
    h=httplib2.Http()
    res,cont=h.request(url,headers=httphead)
    soup=BeautifulSoup(cont,'html.parser')
    # Collect this IP's hostnames separately so its log section lists only
    # them, then merge them into the global dit for the scan stage.
    found=dict()
    for i in soup.findAll('div',attrs={'class':'sb_tlst'}):
        if i.a is not None and i.a.get('href'):
            found[up.urlparse(i.a['href']).netloc]=i.a.text
    dit.update(found)
    # Open the log once per IP; utf8 keeps non-ASCII page titles writable.
    with open(r'dns.txt','at',encoding='utf8') as log:
        log.write('--------'+ip+'-----------\n')
        for i in found:
            print(i,found[i],file=log)
        log.write('-------------------\n\n\n')

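os.chdir(arg.dir)
for ip in iptools.IpRange(arg.ip[0],arg.ip[1]):
    getrdom(ip)
input('Press Enter to continue...')

# Unbounded queue: with a size cap, put() could block before any worker starts.
que=queue.Queue()
for i in dit:
    que.put('wwwscan.exe '+i+' -m 20')
# Start arg.no workers; each one starts itself in __init__.
for i in range(arg.no):
    wscan(que)
que.join()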
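
The thread-pool part can be tried on its own without wwwscan. Below is a minimal sketch of the same queue-plus-workers pattern, with a plain Python function standing in for `os.system`. The names `run_pool` and `handler` are made up for this example, and collecting results in a list is my addition; the script above only runs commands and keeps no results.

```python
import queue
import threading


def run_pool(jobs, handler, workers=3):
    """Run handler(job) for every job on `workers` threads.

    Returns a list of (job, outcome) pairs in completion order. If the
    handler raises, the exception object is stored as the outcome so one
    bad job cannot kill a worker. `run_pool` is a hypothetical helper.
    """
    todo = queue.Queue()
    for job in jobs:
        todo.put(job)

    results = []
    lock = threading.Lock()

    def worker():
        while True:
            # get_nowait() never blocks, so a worker exits cleanly once
            # the queue is empty, even if another worker took the last job.
            try:
                job = todo.get_nowait()
            except queue.Empty:
                return
            try:
                outcome = handler(job)
            except Exception as exc:
                outcome = exc
            with lock:
                results.append((job, outcome))
            todo.task_done()

    threads = [threading.Thread(target=worker) for _ in range(workers)]
    for t in threads:
        t.start()
    todo.join()          # wait until every job has been marked done
    for t in threads:
        t.join()         # then wait for the workers themselves to exit
    return results


if __name__ == '__main__':
    for job, outcome in sorted(run_pool(range(5), lambda n: n * n)):
        print(job, outcome)
```

All jobs are queued before any worker starts, which is why an empty queue can safely be treated as "finished" here. That would not hold if jobs were still being added while the workers run.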
