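1. Prerequisites:

Familiarity with jsoup, Solr, and related technologies, and the ability to set up a Solr instance.

2. I keep the sites to crawl in a single XML file, so the first step is to parse that XML to obtain the URLs, and then crawl them on a timer.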

package com.tmzs.pc.jsoup;

import java.io.File;

import java.util.ArrayList;

import java.util.Iterator;

import java.util.List;

import java.util.Timer;

import org.dom4j.Document;

import org.dom4j.Element;

import org.dom4j.io.SAXReader;

import com.tmzs.pc.entity.InfoXml;

/**

* Purpose: timer that runs the crawler periodically

* @author admin

*

*/

public class TestTimer {

public static final List<InfoXml> infoList = new ArrayList<InfoXml>() ;

public static void main(String[] args) throws Exception {

parseXml() ;

MyTask myTask = new MyTask();

Timer timer = new Timer();

timer.schedule(myTask, 4000, 1000*60*5); // first run after 4000 ms, then every 1000*60*5 ms = 5 minutes

}

/**

* Purpose: parse the XML

* @throws Exception

*/

private static void parseXml() throws Exception {

SAXReader reader = new SAXReader();

Document document = reader.read(new File("e:/Channel.xml"));

Element root = document.getRootElement(); // get the root element

List<Element> nodes = root.elements("tree");

for (Iterator it = nodes.iterator(); it.hasNext();) {

Element tree = (Element) it.next();

List<Element> channels = tree.elements("channel");

//Channel

for (Iterator ite = channels.iterator(); ite.hasNext();) {

Element channel = (Element) ite.next();

InfoXml ix = new InfoXml() ;

ix.setTreeName(tree.attributeValue("name")) ;

ix.setTreeOpen(Integer.parseInt(tree.attributeValue("open"))) ;

ix.setChannelName(channel.element("name").getTextTrim()) ;

ix.setChannelLink(channel.element("link").getTextTrim()) ;

ix.setChannelType(channel.element("type").getTextTrim()) ;

ix.setChannelAllNewsNum(Integer.parseInt(channel.element("AllNewsNum").getTextTrim())) ;

ix.setChannelMaxNewsNum(Integer.parseInt(channel.element("MaxNewsNum").getTextTrim())) ;

ix.setChannelTTU(Integer.parseInt(channel.element("TTU").getTextTrim())) ;

ix.setChannelUnReadNewsNum(Integer.parseInt(channel.element("UnReadNewsNum").getTextTrim())) ;

infoList.add(ix) ;

}

}

for(int i=0;i<infoList.size();i++){

InfoXml ix = infoList.get(i) ;

System.out.println("getTreeName="+ix.getTreeName()+",getChannelName="+ix.getChannelName()

+",channelType="+ix.getChannelType()+",link="+ix.getChannelLink());

}

}

}
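The listing never shows Channel.xml itself. Judging only from the element and attribute names that parseXml() reads, the file presumably looks something like the sample embedded in the sketch below. The root element name `channels`, the sample values, and the class name `ChannelXmlSketch` are assumptions made for illustration. The sketch uses the JDK's built-in DOM parser instead of dom4j, purely so that it runs without extra jars.

```java
import java.io.ByteArrayInputStream;
import java.nio.charset.StandardCharsets;
import java.util.ArrayList;
import java.util.List;
import javax.xml.parsers.DocumentBuilderFactory;
import org.w3c.dom.Document;
import org.w3c.dom.Element;
import org.w3c.dom.NodeList;

class ChannelXmlSketch {

    // Hypothetical sample: only the names tree/channel/name/link/type/AllNewsNum/...
    // come from parseXml(); the root name and all values are invented.
    static final String SAMPLE =
          "<channels>"
        + "<tree name=\"news\" open=\"1\">"
        + "<channel>"
        + "<name>Tech</name><link>http://example.com/rss.xml</link><type>video</type>"
        + "<AllNewsNum>0</AllNewsNum><MaxNewsNum>100</MaxNewsNum>"
        + "<TTU>5</TTU><UnReadNewsNum>0</UnReadNewsNum>"
        + "</channel>"
        + "</tree>"
        + "</channels>";

    // Returns one "treeName|channelName|link" string per <channel> element.
    static List<String> parse(String xml) {
        try {
            Document doc = DocumentBuilderFactory.newInstance().newDocumentBuilder()
                    .parse(new ByteArrayInputStream(xml.getBytes(StandardCharsets.UTF_8)));
            List<String> out = new ArrayList<String>();
            NodeList trees = doc.getDocumentElement().getElementsByTagName("tree");
            for (int i = 0; i < trees.getLength(); i++) {
                Element tree = (Element) trees.item(i);
                NodeList channels = tree.getElementsByTagName("channel");
                for (int j = 0; j < channels.getLength(); j++) {
                    Element channel = (Element) channels.item(j);
                    out.add(tree.getAttribute("name") + "|"
                            + text(channel, "name") + "|" + text(channel, "link"));
                }
            }
            return out;
        } catch (Exception e) {
            throw new RuntimeException("could not parse channel XML", e);
        }
    }

    // Trimmed text of the first descendant element with the given tag name.
    private static String text(Element parent, String tag) {
        return parent.getElementsByTagName(tag).item(0).getTextContent().trim();
    }

    public static void main(String[] args) {
        System.out.println(parse(SAMPLE));
    }
}
```

Each `<tree>` groups several `<channel>` entries, which matches the two nested loops in parseXml() above.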

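3. The method the timer runs, i.e. the crawler. One issue to consider is whether crawled content will be duplicated. Here I use two Maps and put each crawled video's title into a Map as the key. On every run the titles are checked against the Maps, and once there have been 10 runs in which none of the crawled titles was already in the Map, the Map is cleared. That explanation is rough, so please refer to the code:

Another point: the <link> tag could not be read with jsoup (its HTML parser treats <link> as an empty element, so the text inside is dropped), so I relied on rsslibj.jar for it.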
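Before the full listing, the de-duplication idea from step 3 can be isolated from the networking code. The sketch below is a simplified variant, not the author's exact logic: it keeps two generations of titles and rotates them once a configurable number of runs has produced no hit in the older generation. The class name `TitleDeduper` and its members are made up for this illustration.

```java
import java.util.ArrayList;
import java.util.HashSet;
import java.util.List;
import java.util.Set;

class TitleDeduper {
    private Set<String> older = new HashSet<String>();  // titles from the previous generation
    private Set<String> newer = new HashSet<String>();  // titles collected since the last rotation
    private final int rotateAfter;                      // the post uses 10
    private int runsWithoutOldHit = 0;

    TitleDeduper(int rotateAfter) {
        this.rotateAfter = rotateAfter;
    }

    // Processes the titles of one crawl run and returns those not seen before.
    List<String> filterNew(List<String> titles) {
        List<String> fresh = new ArrayList<String>();
        boolean oldHit = false;
        for (String title : titles) {
            if (older.contains(title)) {
                oldHit = true;               // still overlapping with the older generation
            } else if (newer.add(title)) {   // add() returns false for a repeat
                fresh.add(title);
            }
        }
        if (!oldHit && ++runsWithoutOldHit >= rotateAfter) {
            older = newer;                   // promote the newer generation
            newer = new HashSet<String>();   // new object, so 'older' keeps its contents
            runsWithoutOldHit = 0;
        }
        return fresh;
    }
}
```

The rotation assigns a new set to `newer` instead of clearing it. Calling `clear()` after `older = newer` would empty both, because the two variables would then refer to the same object.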

package com.tmzs.pc.jsoup;

import java.io.BufferedReader;

import java.io.IOException;

import java.io.InputStream;

import java.io.InputStreamReader;

import java.io.StringReader;

import java.net.MalformedURLException;

import java.net.URL;

import java.net.URLConnection;

import java.text.ParseException;

import java.text.SimpleDateFormat;

import java.util.Date;

import java.util.HashMap;

import java.util.Iterator;

import java.util.List;

import java.util.Locale;

import java.util.Map;

import java.util.TimerTask;

import org.apache.solr.client.solrj.impl.HttpSolrServer;

import org.apache.solr.common.SolrInputDocument;

import org.jsoup.Jsoup;

import org.jsoup.nodes.Document;

import org.jsoup.nodes.Element;

import org.jsoup.select.Elements;

import com.rsslibj.elements.Channel;

import com.rsslibj.elements.Item;

import com.rsslibj.elements.RSSReader;

import com.tmzs.pc.entity.InfoXml;

import com.tmzs.pc.entity.Video;

import com.tmzs.pc.jdbc.JdbcAction;

/**

* Purpose: the crawler

* @author admin

*

*/

public class MyTask extends TimerTask{

/**

* Purpose: loop over the entries parsed from the XML

*/

private static Channel channel; // RSS channel entity

Document doc = null;

Map<String, Integer> oldMap = new HashMap<String, Integer>();

Map<String, Integer> newMap = new HashMap<String, Integer>();

int index = 1 ;

int oldZeroNum = 0 ;

boolean flag = true ;

public void run(){

List<InfoXml> urls = TestTimer.infoList ;

int zeroNum = 0 ;

for(int j=0;j<urls.size();j++){

InfoXml ix = urls.get(j) ;

String url = ix.getChannelLink();

URL ur;

List<Item> items = null ;

try {

ur = new URL(url);

InputStream inputstream = ur.openStream();

BufferedReader reader = null;

// Baidu's feed is not UTF-8 encoded, so it is read as GB2312

if("百度".equals(ix.getTreeName())){

flag = true ;

reader = new BufferedReader(new InputStreamReader(inputstream,"GB2312"));

}else{

flag = false ;

reader = new BufferedReader(new InputStreamReader(inputstream, "UTF-8")); // explicit charset instead of the platform default

}

StringBuilder data = new StringBuilder();

String line = null;

while( (line = reader.readLine()) != null ) {

data.append(line);

}

reader.close();

inputstream.close();

// parse the string

StringReader read = new StringReader(data.toString());

System.out.println("data.toString()="+data.toString());

doc = Jsoup.parse(data.toString());

// create the RSS reader object

RSSReader rssReader = new RSSReader();

// set the content to read

rssReader.setReader(read);

channel = rssReader.getChannel();

items = channel.getItems();

} catch (MalformedURLException e1) {

e1.printStackTrace();

continue; // skip this feed: items would be null and doc stale below

} catch (IOException e) {

e.printStackTrace();

continue;

} catch (electric.xml.ParseException e) {

e.printStackTrace();

continue;

}

Elements element = doc.getElementsByTag("channel");

Elements elems = doc.getElementsByTag("item");

for(int i=0;i<elems.size() && i<items.size();i++){ // the jsoup and rsslibj item counts may differ

Item item = items.get(i) ;

Element elem = elems.get(i) ;

Elements title = elem.getElementsByTag("title") ;

if(index == 1){

oldMap.put(title.html(), 1) ;

// save the data

insertData(element,elem,ix,channel,item) ;

}else if(index > 1){

if(oldMap.containsKey(title.html())){

zeroNum ++ ;

}else{

if(!newMap.containsKey(title.html())){

newMap.put(title.html(), 1) ;

insertData(element,elem,ix,channel,item) ;

}

}

}

}

}

// decide whether to clear the Map

if(zeroNum == 0){

oldZeroNum++;

if(oldZeroNum == 10){

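oldZeroNum = 0;

// promote newMap and start a fresh one; "oldMap = newMap" followed by "newMap.clear()" would empty both, since they would be the same object

oldMap = newMap;

newMap = new HashMap<String, Integer>();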

}

}

index++ ;

}

/**

* Purpose: save the data

* @param element

* @param elem

*/

private void insertData(Elements element, Element elem, InfoXml ix,Channel channel,Item item) {

String sourceLink = channel.getLink() ;

String itemLink = item.getLink() ;

Elements sourceTitle = element.get(0).getElementsByTag("title") ;

// Elements sourceLink = element.get(0).getElementsByTag("link") ;

Elements sourceDesc = element.get(0).getElementsByTag("description") ;

Elements sourceImageUrl = element.get(0).getElementsByTag("url") ;

Elements title = elem.getElementsByTag("title") ;

Elements guid = elem.getElementsByTag("guid") ;

// Elements link = elem.getElementsByTag("link") ;

Elements description = elem.getElementsByTag("description") ;

Elements duration = elem.getElementsByTag("itunes:duration") ;

Elements keyword = elem.getElementsByTag("itunes:keywords") ;

Elements author = elem.getElementsByTag("author") ;

Elements pubDate = elem.getElementsByTag("pubDate") ;

Elements enclosure = elem.getElementsByTag("enclosure") ;

Elements itemSource = elem.getElementsByTag("source") ; // item source, e.g. a Baidu news item that originates from a news site

String itemFileType = enclosure.attr("type") ;

String itemFileLink = enclosure.attr("url") ;

SimpleDateFormat sf = new SimpleDateFormat("EEE, dd MMM yyyy HH:mm:ss z",Locale.ENGLISH); // HH is the 24-hour field; with hh, 12:xx is parsed as 00:xx

SimpleDateFormat sdf = new SimpleDateFormat("yyyy-MM-dd HH:mm:ss") ;

String itemDate = "";

try {

if(!flag){

java.util.Date d = sf.parse(pubDate.html());

itemDate = sdf.format(d) ;

}else{

itemDate = pubDate.html().replace("T", " ").replace(".000Z", "") ; // literal replace; replaceAll would treat "." as a regex wildcard

}

} catch (ParseException e) {

e.printStackTrace();

}

int itemDuration = 0 ;

if(!"".equals(duration.html())){

itemDuration = parseDate(duration.html()) ;

}

Video v = new Video() ;

v.setId(new Date().getTime()+"") ;

v.setTypeName(ix.getChannelType()) ;

v.setClassName(ix.getTreeName()+"_"+ix.getChannelName()) ;

v.setSourceDesc(sourceDesc.first().html()) ;

if(sourceImageUrl.first()!=null){

v.setSourceImageLink(sourceImageUrl.first().html()) ;

}else{

v.setSourceImageLink("") ;

}

v.setSourceLink(sourceLink) ;

v.setSourceTitle(sourceTitle.first().html()) ;

v.setItemDate(sdf.format(new Date())) ; // time the item was processed for Solr

v.setItemTitle(title.html()) ;

v.setItemLink(itemLink) ;

v.setItemPubDate(itemDate) ;

v.setItemDesc(description.html()) ;

v.setItemAuthor(author.html()) ;

v.setItemSource(itemSource.html()) ; // item source, e.g. a Baidu news item that originates from a news site

v.setItemKeyword(keyword.html()) ;

v.setItemDuration(itemDuration) ;

v.setItemGuid(guid.html()) ;

v.setItemFileLink(itemFileLink) ;

v.setItemFileType(itemFileType) ;

v.setState(400) ;

writerSolr(v) ;

// JdbcAction.insert(v) ;

}

/**

* Purpose: write the document to the index

*/

public void writerSolr(Video v) {

try {

// obtain the Solr server connection

HttpSolrServer solrServer= SolrServer.getInstance().getServer();

SolrInputDocument doc1 = new SolrInputDocument();

doc1.addField("id", v.getId());

doc1.addField("typename", v.getTypeName());

doc1.addField("classname", v.getClassName());

doc1.addField("itemauthor", v.getItemAuthor());

doc1.addField("itemdate", v.getItemDate());

doc1.addField("itemdesc", v.getItemDesc());

doc1.addField("itemduration", v.getItemDuration());

doc1.addField("itemfilelink", v.getItemFileLink());

doc1.addField("itemfiletype", v.getItemFileType());

doc1.addField("itemguid", v.getItemGuid());

doc1.addField("itemkeyword", v.getItemKeyword());

doc1.addField("itemlink", v.getItemLink());

doc1.addField("itempubdate", v.getItemPubDate());

doc1.addField("itemsource", v.getItemSource());

doc1.addField("itemtitle", v.getItemTitle());

doc1.addField("sourcedesc", v.getSourceDesc());

doc1.addField("sourceimagelink", v.getSourceImageLink());

doc1.addField("sourcelink", v.getSourceLink());

doc1.addField("sourcetitle", v.getSourceTitle());

solrServer.add(doc1);

solrServer.commit();

} catch (Exception e) {

e.printStackTrace();

}

}

/**

* Purpose: convert a time of the form XX:XX (minutes:seconds) to seconds

* @param duration

* @return

*/

private static int parseDate(String duration) {

int itemDuration = 0 ;

SimpleDateFormat sdf = new SimpleDateFormat("mm:ss") ;

try {

Date d = sdf.parse(duration) ;

itemDuration = d.getMinutes()*60+d.getSeconds() ;

} catch (ParseException e) {

e.printStackTrace();

}

return itemDuration;

}

public static void main(String[] args) throws Exception {

SimpleDateFormat sf = new SimpleDateFormat("EEE, dd MMM yyyy HH:mm:ss z",Locale.ENGLISH);

SimpleDateFormat sdf = new SimpleDateFormat("yyyy-MM-dd HH:mm:ss") ;

SimpleDateFormat sd = new SimpleDateFormat("yyyy-MM-dd'T'HH:mm:ss.SSSX") ; // X (Java 7+) accepts the literal "Z"; the Z pattern letter expects an offset such as +0800

String itemDate = "";

try {

java.util.Date d = sf.parse("Mon, 19 Mar 2012 10:36:17 +0800");

System.out.println(sd.parse("2013-12-04T08:08:45.000Z"));

itemDate = sdf.format(d) ;

} catch (ParseException e) {

e.printStackTrace();

}

System.out.println(sdf.parse(itemDate));

}

}
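The date handling in insertData() and parseDate() relies on SimpleDateFormat and the deprecated Date.getMinutes(). As an alternative sketch, assuming Java 8 or later is available, the same conversions can be written with java.time. The class `FeedTimeSketch` and its method names are hypothetical. Two behaviors differ from the listing and are assumptions of this sketch: the RFC 822 date keeps the feed's own UTC offset instead of being converted to the JVM's default time zone, and durations of the form hh:mm:ss are accepted as well as mm:ss.

```java
import java.time.Instant;
import java.time.LocalDateTime;
import java.time.ZoneId;
import java.time.ZonedDateTime;
import java.time.format.DateTimeFormatter;

class FeedTimeSketch {

    private static final DateTimeFormatter OUT =
            DateTimeFormatter.ofPattern("yyyy-MM-dd HH:mm:ss");

    // RFC 822/1123 style pubDate, e.g. "Mon, 19 Mar 2012 10:36:17 +0800".
    // The wall-clock time at the feed's own offset is kept.
    static String rfc822ToLocal(String pubDate) {
        return ZonedDateTime.parse(pubDate.trim(), DateTimeFormatter.RFC_1123_DATE_TIME)
                .format(OUT);
    }

    // ISO-8601 instant, e.g. "2013-12-04T08:08:45.000Z", rendered in UTC.
    static String isoToUtc(String pubDate) {
        return LocalDateTime.ofInstant(Instant.parse(pubDate.trim()), ZoneId.of("UTC"))
                .format(OUT);
    }

    // "mm:ss" or "hh:mm:ss" to a number of seconds.
    static int durationToSeconds(String duration) {
        int total = 0;
        for (String part : duration.trim().split(":")) {
            total = total * 60 + Integer.parseInt(part);
        }
        return total;
    }

    public static void main(String[] args) {
        System.out.println(rfc822ToLocal("Mon, 19 Mar 2012 10:36:17 +0800"));
        System.out.println(isoToUtc("2013-12-04T08:08:45.000Z"));
        System.out.println(durationToSeconds("03:25"));
    }
}
```

Unlike SimpleDateFormat, the java.time formatters are immutable and thread-safe, so they can be shared as constants.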

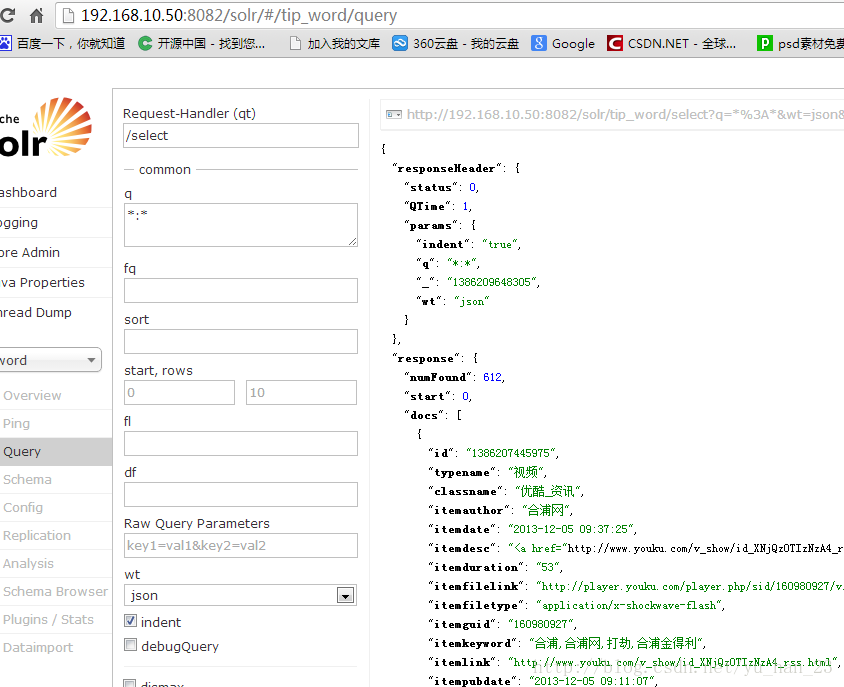

4. After the crawl finishes, the results can be seen on the Solr page
