3. Code implementation (Python)

(1) Using a machine learning library (sklearn.linear_model)

Code:

```python
import matplotlib as mpl
import matplotlib.pyplot as plt  # plotting
import numpy as np  # creating vectors
from sklearn.linear_model import LinearRegression

# Optional: SimHei lets matplotlib render Chinese labels; harmless for English ones
mpl.rcParams['font.sans-serif'] = ['SimHei']
mpl.rcParams['axes.unicode_minus'] = False

# The `normalize` argument was removed from LinearRegression in scikit-learn 1.2
reg = LinearRegression(fit_intercept=True)

# sklearn expects a 2-D feature array: one row per sample, one column per feature
x = [[32.50235], [53.4268], [61.53036], [47.47564], [59.81321], [55.14219], [52.14219], [39.29957],
     [48.10504], [52.55001], [45.41873], [54.35163], [44.16405], [58.16847], [56.72721]]
y = [31.70701, 68.7776, 62.56238, 71.54663, 87.23093, 78.21152, 79.64197, 59.17149,
     75.33124, 71.30088, 55.16568, 82.47885, 62.00892, 75.39287, 81.43619]

reg.fit(x, y)
k = reg.coef_       # slopes w1, w2, ..., wn (one per feature; here a 1-element array)
b = reg.intercept_  # intercept w0

# Evaluate the fitted line over the plotting range
x0 = np.arange(30, 60, 0.2)
y0 = k[0] * x0 + b

print("k={0},b={1}".format(k, b))

plt.scatter(x, y)
plt.plot(x0, y0, label='LinearRegression')
plt.xlabel('X')
plt.ylabel('Y')
plt.legend()
plt.show()
```

Result:

k=[1.36695374],b=0.13079331831460195
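You can cross-check the result above with the closed-form solution for a single feature. The slope is k = Σ(xᵢ − x̄)(yᵢ − ȳ) / Σ(xᵢ − x̄)², and the intercept is b = ȳ − k·x̄.

The sketch below computes both with plain NumPy on the same 15 points. The helper name `fit_line_closed_form` is hypothetical and not part of any library. The result should agree with sklearn's `coef_` and `intercept_` up to floating-point rounding.

```python
import numpy as np


def fit_line_closed_form(x, y):
    """Ordinary least squares for y = k*x + b with one feature (hypothetical helper)."""
    x = np.asarray(x, dtype=float).ravel()  # accept the 2-D sklearn-style input
    y = np.asarray(y, dtype=float)
    x_mean, y_mean = x.mean(), y.mean()
    # slope = covariance(x, y) / variance(x); the 1/n factors cancel
    k = np.sum((x - x_mean) * (y - y_mean)) / np.sum((x - x_mean) ** 2)
    # the least-squares line always passes through (x_mean, y_mean)
    b = y_mean - k * x_mean
    return k, b


x = [[32.50235], [53.4268], [61.53036], [47.47564], [59.81321], [55.14219], [52.14219], [39.29957],
     [48.10504], [52.55001], [45.41873], [54.35163], [44.16405], [58.16847], [56.72721]]
y = [31.70701, 68.7776, 62.56238, 71.54663, 87.23093, 78.21152, 79.64197, 59.17149,
     75.33124, 71.30088, 55.16568, 82.47885, 62.00892, 75.39287, 81.43619]

k, b = fit_line_closed_form(x, y)
print("k={0},b={1}".format(k, b))
```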

(2) Detailed Python implementation (Method 1)

Code:

```python
# Method 1
import matplotlib as mpl
import matplotlib.pyplot as plt
import numpy as np

# Optional: SimHei lets matplotlib render Chinese labels
mpl.rcParams['font.sans-serif'] = ['SimHei']
mpl.rcParams['axes.unicode_minus'] = False

# Generate data: y = 1.677 * x + 0.039 + noise
# (no random seed is set, so every run produces slightly different numbers)
data = []
for i in range(100):
    x = np.random.uniform(3., 12.)
    # Gaussian noise with mean=0, std=1
    eps = np.random.normal(0., 1)
    y = 1.677 * x + 0.039 + eps
    data.append([x, y])
data = np.array(data)


# Model: y = w * x + b
def compute_error_for_line_given_points(b, w, points):
    """Return the mean squared error of the line y = w*x + b over all points."""
    totalError = 0
    for i in range(0, len(points)):
        x = points[i, 0]
        y = points[i, 1]
        # accumulate the squared error
        totalError += (y - (w * x + b)) ** 2
    # average loss per point
    return totalError / float(len(points))


# Compute the gradient and take one descent step
def step_gradient(b_current, w_current, points, learningRate):
    b_gradient = 0
    w_gradient = 0
    N = float(len(points))
    for i in range(0, len(points)):
        x = points[i, 0]
        y = points[i, 1]
        # grad_b = (2/N) * sum(w*x + b - y)
        b_gradient += (2 / N) * ((w_current * x + b_current) - y)
        # grad_w = (2/N) * sum((w*x + b - y) * x)
        w_gradient += (2 / N) * x * ((w_current * x + b_current) - y)
    # move b and w against the gradient
    new_b = b_current - (learningRate * b_gradient)
    new_w = w_current - (learningRate * w_gradient)
    return [new_b, new_w]


# Iterate the update
def gradient_descent_runner(points, starting_b, starting_w, learning_rate, num_iterations):
    b = starting_b
    w = starting_w
    # apply the update num_iterations times
    for i in range(num_iterations):
        b, w = step_gradient(b, w, np.array(points), learning_rate)
    return [b, w]


def main():
    learning_rate = 0.0001
    initial_b = 0  # initial y-intercept guess
    initial_w = 0  # initial slope guess
    num_iterations = 1000
    print("Before iterating: b = {0}, w = {1}, error = {2}"
          .format(initial_b, initial_w,
                  compute_error_for_line_given_points(initial_b, initial_w, data)))
    print("Running...")
    [b, w] = gradient_descent_runner(data, initial_b, initial_w, learning_rate, num_iterations)
    print("After {0} iterations: b = {1}, w = {2}, error = {3}"
          .format(num_iterations, b, w,
                  compute_error_for_line_given_points(b, w, data)))

    # data as '+' markers with no connecting line, since the x values are unsorted
    plt.scatter(data[:, 0], data[:, 1], color='b', marker='+', label='true')
    plt.plot(data[:, 0], w * data[:, 0] + b, color='r', label='predict')
    plt.xlabel('X')
    plt.ylabel('Y')
    plt.legend()
    plt.show()


if __name__ == '__main__':
    main()
```

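Result (the data are random and no seed is set, so your numbers will differ slightly from these):

Before iterating: b = 0, w = 0, error = 186.61000821356697

Running...

After 1000 iterations: b = 0.20558501549252192, w = 1.6589067569038516, error = 0.9963685680112963

The final error is close to 1, the variance of the added noise `eps`. That is roughly the error the true line y = 1.677x + 0.039 would itself have on this data.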
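The comments in `step_gradient` state the gradients without deriving them. The loss computed by `compute_error_for_line_given_points` is the mean squared error over N points. Differentiating one term gives ∂/∂b (yᵢ − wxᵢ − b)² = −2(yᵢ − wxᵢ − b) = 2(wxᵢ + b − yᵢ), and the w derivative picks up an extra factor xᵢ.

Averaging over the points yields exactly the two quantities accumulated in the loop, where η stands for `learning_rate`:

$$
L(w, b) = \frac{1}{N}\sum_{i=1}^{N}\bigl(y_i - (w x_i + b)\bigr)^2
$$

$$
\frac{\partial L}{\partial b} = \frac{2}{N}\sum_{i=1}^{N}\bigl(w x_i + b - y_i\bigr), \qquad
\frac{\partial L}{\partial w} = \frac{2}{N}\sum_{i=1}^{N}\bigl(w x_i + b - y_i\bigr)\,x_i
$$

$$
b \leftarrow b - \eta\,\frac{\partial L}{\partial b}, \qquad
w \leftarrow w - \eta\,\frac{\partial L}{\partial w}
$$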

(3) Detailed Python implementation (Method 2)

Code:

```python
# Method 2
import matplotlib as mpl
import matplotlib.pyplot as plt
import numpy as np
import pandas as pd

# Optional: SimHei lets matplotlib render Chinese labels
mpl.rcParams["font.sans-serif"] = ["SimHei"]
mpl.rcParams["axes.unicode_minus"] = False

# Model: y = w * x + b
# Import data: data.csv needs a header row naming the columns x and y
file = pd.read_csv("data.csv")


def compute_error_for_line_given(b, w):
    """Mean squared error of the line y = w*x + b over all rows."""
    return np.mean((file['y'] - (w * file['x'] + b)) ** 2)


def step_gradient(b_current, w_current, learningRate):
    # Work on whole columns at once, so no per-row loop is needed.
    # Residual of every row: (w*x + b) - y
    error = (w_current * file['x'] + b_current) - file['y']
    # grad_b = (2/N) * sum(w*x + b - y)
    b_gradient = 2 * error.mean()
    # grad_w = (2/N) * sum((w*x + b - y) * x)
    w_gradient = 2 * (error * file['x']).mean()
    # update b and w
    new_b = b_current - (learningRate * b_gradient)
    new_w = w_current - (learningRate * w_gradient)
    return [new_b, new_w]


def gradient_descent_runner(starting_b, starting_w, learning_rate, num_iterations):
    b = starting_b
    w = starting_w
    # apply the update num_iterations times
    for i in range(num_iterations):
        b, w = step_gradient(b, w, learning_rate)
    return [b, w]


def main():
    learning_rate = 0.0001
    initial_b = 0  # initial y-intercept guess
    initial_w = 0  # initial slope guess
    num_iterations = 100
    print("Starting gradient descent at b = {0}, w = {1}, error = {2}"
          .format(initial_b, initial_w,
                  compute_error_for_line_given(initial_b, initial_w)))
    print("Running...")
    [b, w] = gradient_descent_runner(initial_b, initial_w, learning_rate, num_iterations)
    print("After {0} iterations b = {1}, w = {2}, error = {3}"
          .format(num_iterations, b, w,
                  compute_error_for_line_given(b, w)))

    plt.plot(file['x'], file['y'], 'ro', label='data')
    # fitted line; it is straight, so unsorted x values still draw correctly
    plt.plot(file['x'], w * file['x'] + b, 'b-', label='linear regression')
    plt.xlabel('X')
    plt.ylabel('Y')
    plt.legend()
    plt.show()


if __name__ == '__main__':
    main()
```

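Result. This run comes from a version of the code with three bugs:

- `step_gradient` adds whole columns inside its per-row loop.
- It uses `file['x']` in place of `file['y']` in the `w` gradient.
- The error function returns the sum of squared errors rather than their mean.

That is why the errors below are sums, and why `b` and `w` print as 15-element pandas Series (dtype: float64) instead of single numbers:

Starting gradient descent at b = 0, w = 0, error = 75104.71822821398

Running...

After 100 iterations, error = 6451.5510231710905, with b and w as follows:

| index | b | w |
| --- | --- | --- |
| 0 | 0.014845 | 0.999520 |
| 1 | 0.325621 | 0.994006 |
| 2 | 0.036883 | 0.999405 |
| 3 | 0.502265 | 0.989645 |
| 4 | 0.564917 | 0.990683 |
| 5 | 0.479366 | 0.991444 |
| 6 | 0.568968 | 0.989282 |
| 7 | 0.422619 | 0.989573 |
| 8 | 0.565073 | 0.988498 |
| 9 | 0.393907 | 0.992633 |
| 10 | 0.216854 | 0.995329 |
| 11 | 0.580750 | 0.989490 |
| 12 | 0.379350 | 0.991617 |
| 13 | 0.361574 | 0.993872 |
| 14 | 0.511651 | 0.991116 |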

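Data: `data.csv`. It needs a header row naming the columns `x` and `y`, because Method 2 reads them as `file['x']` and `file['y']`.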
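Method 3 reads the same `data.csv` with `np.genfromtxt` instead of pandas, and that is where a header row matters. By default `genfromtxt` cannot convert the header text to float, so it returns that row as `nan`, which would then leak into the error and gradient sums.

The sketch below uses a hypothetical two-row in-memory CSV (not the author's data) to show the difference. Passing `skip_header=1` drops the header line before parsing.

```python
import io

import numpy as np

# Hypothetical CSV with the same "x,y" header layout that Method 2 expects
csv_text = "x,y\n1.0,2.5\n2.0,4.1\n"

# Without skip_header, the header line cannot be parsed as float and becomes [nan, nan]
raw = np.genfromtxt(io.StringIO(csv_text), delimiter=",")
# skip_header=1 discards the header line, leaving only numeric rows
clean = np.genfromtxt(io.StringIO(csv_text), delimiter=",", skip_header=1)

print(raw)
print(clean)
```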

(4) Detailed Python implementation (Method 3)

```python
# Method 3
import numpy as np

# genfromtxt runs two loops: the first converts each line of the file into a
# sequence of strings, the second converts each string to a suitable data type.
# Assumption: data.csv starts with the "x,y" header row that Method 2 relies on,
# so skip_header=1 keeps that row from being read as [nan, nan].
points = np.genfromtxt("data.csv", delimiter=",", skip_header=1)


# Model: y = w * x + b
def compute_error_for_line_given_points(b, w, points):
    """Return the mean squared error of the line y = w*x + b over all points."""
    totalError = 0
    for i in range(0, len(points)):
        x = points[i, 0]
        y = points[i, 1]
        # accumulate the squared error
        totalError += (y - (w * x + b)) ** 2
    # average loss per point
    return totalError / float(len(points))


def step_gradient(b_current, w_current, points, learningRate):
    b_gradient = 0
    w_gradient = 0
    N = float(len(points))
    for i in range(0, len(points)):
        x = points[i, 0]
        y = points[i, 1]
        # grad_b = (2/N) * sum(w*x + b - y)
        b_gradient += (2 / N) * ((w_current * x + b_current) - y)
        # grad_w = (2/N) * sum((w*x + b - y) * x)
        w_gradient += (2 / N) * x * ((w_current * x + b_current) - y)
    # move b and w against the gradient
    new_b = b_current - (learningRate * b_gradient)
    new_w = w_current - (learningRate * w_gradient)
    return [new_b, new_w]


def gradient_descent_runner(points, starting_b, starting_w, learning_rate, num_iterations):
    b = starting_b
    w = starting_w
    # apply the update num_iterations times
    for i in range(num_iterations):
        b, w = step_gradient(b, w, np.array(points), learning_rate)
    return [b, w]


def main():
    learning_rate = 0.0001
    initial_b = 0  # initial y-intercept guess
    initial_w = 0  # initial slope guess
    num_iterations = 1000
    print("Starting gradient descent at b = {0}, w = {1}, error = {2}"
          .format(initial_b, initial_w,
                  compute_error_for_line_given_points(initial_b, initial_w, points)))
    print("Running...")
    [b, w] = gradient_descent_runner(points, initial_b, initial_w, learning_rate, num_iterations)
    print("After {0} iterations b = {1}, w = {2}, error = {3}"
          .format(num_iterations, b, w,
                  compute_error_for_line_given_points(b, w, points)))


if __name__ == '__main__':
    main()
```
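Methods 1 and 3 accumulate the gradient with a Python loop over the points. All three methods also run a fixed number of iterations with `learning_rate = 0.0001`, without checking whether the parameters have converged.

The minimal sketch below performs the same batch gradient-descent update with NumPy vectorization. It then compares the answer with the closed-form least-squares fit from `np.polyfit`, which makes the convergence check easy. Several choices here are assumptions for illustration, not the author's settings:

- the function name `gradient_descent_vectorized`;
- the fixed seed;
- the larger step size of 0.01 and the 20000 iterations.

The step size is chosen to stay stable for x in [3, 12]. A step that is too large makes the iterates diverge instead.

```python
import numpy as np


def gradient_descent_vectorized(x, y, learning_rate, num_iterations, b=0.0, w=0.0):
    """Batch gradient descent on the mean squared error of y = w*x + b (hypothetical helper)."""
    for _ in range(num_iterations):
        error = (w * x + b) - y              # residual of every point at once
        b_gradient = 2 * np.mean(error)      # dL/db
        w_gradient = 2 * np.mean(error * x)  # dL/dw
        b -= learning_rate * b_gradient
        w -= learning_rate * w_gradient
    return b, w


# Synthetic data in the style of Method 1, with a fixed seed so runs are repeatable
rng = np.random.default_rng(0)
x = rng.uniform(3.0, 12.0, size=100)
y = 1.677 * x + 0.039 + rng.normal(0.0, 1.0, size=100)

# Assumption: step size 0.01 is below the stability limit for x in [3, 12]
b_gd, w_gd = gradient_descent_vectorized(x, y, learning_rate=0.01, num_iterations=20000)

# Closed-form least squares for comparison; deg=1 returns [slope, intercept]
w_ls, b_ls = np.polyfit(x, y, 1)

print("gradient descent: b = {0:.6f}, w = {1:.6f}".format(b_gd, w_gd))
print("least squares:    b = {0:.6f}, w = {1:.6f}".format(b_ls, w_ls))
```

If the two lines disagree, the usual remedies are more iterations or a larger step size. Standardizing x also helps, because it speeds up convergence of the intercept.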

4. Case study: house price vs. size

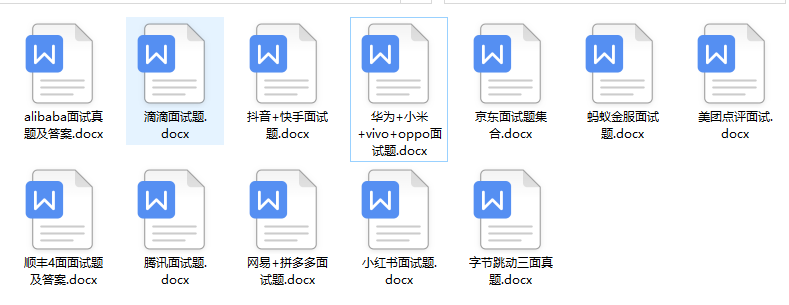

自我介绍一下,小编13年上海交大毕业,曾经在小公司待过,也去过华为、OPPO等大厂,18年进入阿里一直到现在。

深知大多数Python工程师,想要提升技能,往往是自己摸索成长或者是报班学习,但对于培训机构动则几千的学费,着实压力不小。自己不成体系的自学效果低效又漫长,而且极易碰到天花板技术停滞不前!

因此收集整理了一份《2024年Python开发全套学习资料》,初衷也很简单,就是希望能够帮助到想自学提升又不知道该从何学起的朋友,同时减轻大家的负担。

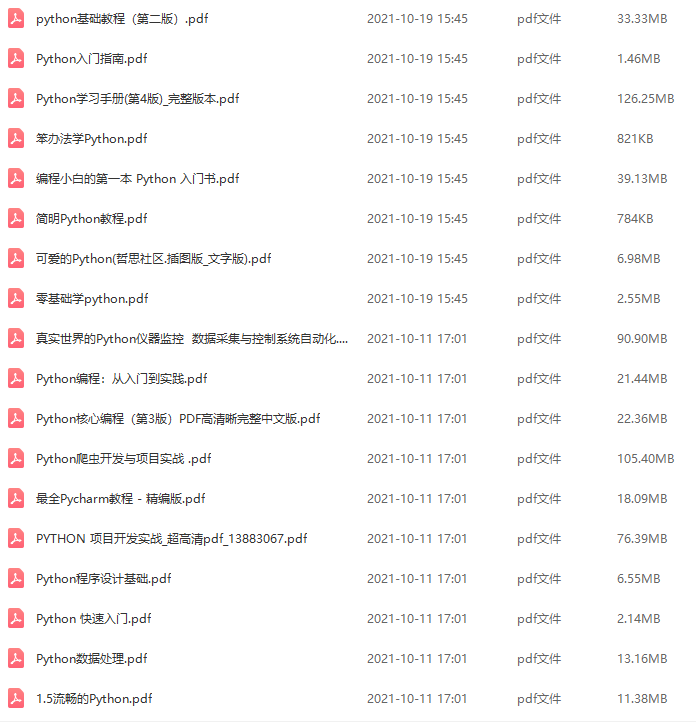

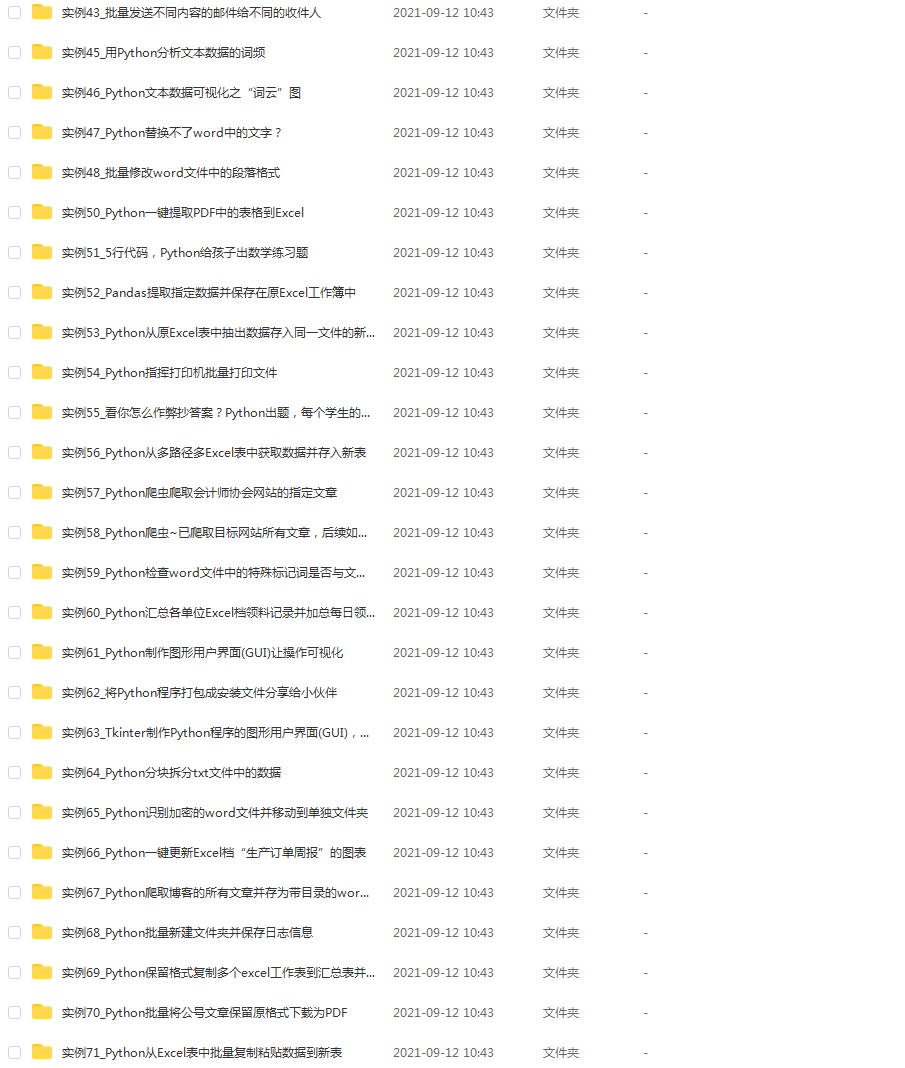

既有适合小白学习的零基础资料,也有适合3年以上经验的小伙伴深入学习提升的进阶课程,基本涵盖了95%以上Python开发知识点,真正体系化!

由于文件比较大,这里只是将部分目录大纲截图出来,每个节点里面都包含大厂面经、学习笔记、源码讲义、实战项目、讲解视频,并且后续会持续更新

如果你觉得这些内容对你有帮助,可以添加V获取:vip1024c (备注Python)

最后

不知道你们用的什么环境,我一般都是用的Python3.6环境和pycharm解释器,没有软件,或者没有资料,没人解答问题,都可以免费领取(包括今天的代码),过几天我还会做个视频教程出来,有需要也可以领取~

给大家准备的学习资料包括但不限于:

Python 环境、pycharm编辑器/永久激活/翻译插件

python 零基础视频教程

Python 界面开发实战教程

Python 爬虫实战教程

Python 数据分析实战教程

python 游戏开发实战教程

Python 电子书100本

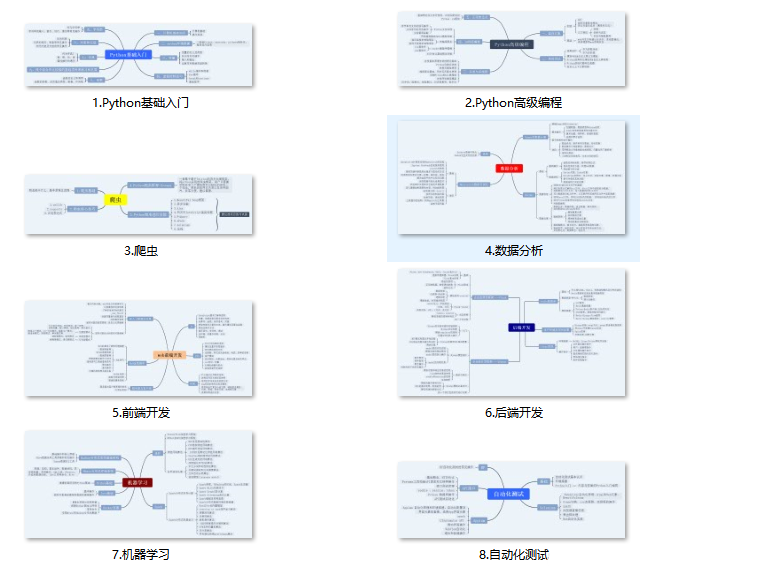

Python 学习路线规划

一个人可以走的很快,但一群人才能走的更远。不论你是正从事IT行业的老鸟或是对IT行业感兴趣的新人,都欢迎扫码加入我们的的圈子(技术交流、学习资源、职场吐槽、大厂内推、面试辅导),让我们一起学习成长!

注Python)**

[外链图片转存中…(img-RIFUyl3l-1712885432518)]

最后

不知道你们用的什么环境,我一般都是用的Python3.6环境和pycharm解释器,没有软件,或者没有资料,没人解答问题,都可以免费领取(包括今天的代码),过几天我还会做个视频教程出来,有需要也可以领取~

给大家准备的学习资料包括但不限于:

Python 环境、pycharm编辑器/永久激活/翻译插件

python 零基础视频教程

Python 界面开发实战教程

Python 爬虫实战教程

Python 数据分析实战教程

python 游戏开发实战教程

Python 电子书100本

Python 学习路线规划

一个人可以走的很快,但一群人才能走的更远。不论你是正从事IT行业的老鸟或是对IT行业感兴趣的新人,都欢迎扫码加入我们的的圈子(技术交流、学习资源、职场吐槽、大厂内推、面试辅导),让我们一起学习成长!

[外链图片转存中…(img-1RcAkcmy-1712885432518)]

685

685

被折叠的 条评论

为什么被折叠?

被折叠的 条评论

为什么被折叠?