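Cluster configuration guide

Configuring an Apache virtual host on Ubuntu:

Configure a virtual host on the Master node so that its directories can be browsed from Chrome.

Before setting up the virtual host, install Apache2: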

sudo apt-get install apache2

Then install php5:

sudo apt-get install php5

Next, go to the /etc/apache2/sites-available directory and add a "*.conf" file.

Write the following into that file:

    <VirtualHost *:80>
        ServerName author.xxx.com
        ServerAdmin author.xxx.com
        DocumentRoot "/home/author"
        <Directory "/home/author">
            Options Indexes
            AllowOverride all
            Order allow,deny
            IndexOptions Charset=UTF-8
            Allow from all
            Require all granted
        </Directory>
        <IfModule dir_module>
            DirectoryIndex index.html
        </IfModule>
        ErrorLog ${APACHE_LOG_DIR}/authors_errors.log
        CustomLog ${APACHE_LOG_DIR}/authors_access.log combined
    </VirtualHost>

With this configuration, visiting author.xxx.com in a browser shows a directory listing of the document root. To turn the listing off (for security), change

    Options Indexes
    IndexOptions Charset=UTF-8

to

    Options FollowSymLinks

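The log files named in the configuration,

authors_errors.log

authors_access.log

are both written to the directory that ${APACHE_LOG_DIR} points to, which is /var/log/apache2 by default on Ubuntu. Reading them requires root privileges.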
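When checking the access log, a tally of responses by status code is often a useful first look. The sketch below assumes the `combined` log format set by the CustomLog line in the virtual host, where the status code is the ninth whitespace-separated field. The function name `count_status` is illustrative and not part of Apache.

```shell
# Tally HTTP status codes from an Apache access log in "combined" format.
# Assumption: the status code is the 9th whitespace-separated field.
# Reads the files given as arguments, or standard input if none are given.
count_status() {
    awk '{ count[$9]++ } END { for (s in count) print s, count[s] }' "$@" | sort
}

# Usage (run with root privileges, since the log directory is protected):
#   count_status < authors_access.log
```

Each output line is a status code followed by the number of requests that returned it.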

Once the configuration file is complete, enable the site and restart Apache (xxx.conf is the file you created):

sudo a2ensite xxx.conf

sudo /etc/init.d/apache2 restart
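If several virtual hosts are needed, the configuration can be generated rather than typed by hand. The following is a minimal sketch, assuming the same directives as the virtual host file above; the function name `render_vhost` and its two parameters are hypothetical, and it only prints text without touching Apache.

```shell
# Print a virtual host definition for the given server name and document root.
# $1 = server name (e.g. author.xxx.com), $2 = document root (e.g. /home/author)
# Assumption: the directives mirror the hand-written file shown earlier.
render_vhost() {
    cat <<EOF
<VirtualHost *:80>
    ServerName $1
    DocumentRoot "$2"
    <Directory "$2">
        Options Indexes
        AllowOverride all
        IndexOptions Charset=UTF-8
        Require all granted
    </Directory>
    ErrorLog \${APACHE_LOG_DIR}/authors_errors.log
    CustomLog \${APACHE_LOG_DIR}/authors_access.log combined
</VirtualHost>
EOF
}

# Usage:
#   render_vhost author.xxx.com /home/author | sudo tee /etc/apache2/sites-available/xxx.conf
#   sudo a2ensite xxx.conf
```

The `\${APACHE_LOG_DIR}` escape keeps the variable literal in the output so that Apache, not the shell, expands it.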

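Configuring an Apache virtual host on macOS:

The steps are essentially the same; only the commands for checking and restarting Apache differ:

    sudo apachectl -v          # show the Apache version
    sudo apachectl -t          # check the virtual host configuration for syntax errors
    sudo apachectl -k restart  # restart Apache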

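Problems encountered when deploying a Hadoop cluster (version 2.7 and above)

For the cluster parameters used here, see the author's previous article, "Network configuration for a 4-server cluster in the machine room" (机房4台服务器集群网络配置).

Running the following report command on the Master produced the faulty output shown below: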

hdfs dfsadmin -report

    Configured Capacity: 0 (0 B)
    Present Capacity: 0 (0 B)
    DFS Remaining: 0 (0 B)
    DFS Used: 0 (0 B)
    DFS Used%: NaN%
    Under replicated blocks: 0
    Blocks with corrupt replicas: 0
    Missing blocks: 0
    Missing blocks (with replication factor 1):

Every value is 0, and no information comes back from slave1, slave2, or slave3, which means no DataNode has registered with the NameNode.

Solution:

Create a configuration directory:

mkdir /home/hadoop/usr/hadoop/conf

Then place the following configuration files in that directory.

core-site.xml

    <?xml version="1.0" encoding="UTF-8"?>
    <?xml-stylesheet type="text/xsl" href="configuration.xsl"?>
    <configuration>
      <property>
        <name>hadoop.tmp.dir</name>
        <value>/usr/hadoop/tmp</value>
        <description>A base for other temporary directories.</description>
      </property>
      <!--file system properties-->
      <property>
        <name>fs.default.name</name>
        <value>hdfs://192.168.223.1:9000</value>
      </property>
    </configuration>
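When several nodes each carry their own copy of a site file, it helps to confirm which value a given file actually sets. The sketch below is a simple text-based lookup, not a real XML parser: it assumes each line of the file holds at most one property, and the function name `get_property` is hypothetical.

```shell
# Print the <value> of the named property from a Hadoop site file read on stdin.
# $1 = property name, e.g. fs.default.name
# Assumption: at most one <property> per line; this is a text match, not an XML parser.
get_property() {
    awk -v key="$1" '
        /<name>/ {
            name = $0
            sub(/.*<name>[ \t]*/, "", name)
            sub(/[ \t]*<\/name>.*/, "", name)
        }
        /<value>/ {
            value = $0
            sub(/.*<value>[ \t]*/, "", value)
            sub(/[ \t]*<\/value>.*/, "", value)
            if (name == key) { print value; exit }
        }
    '
}

# Usage:
#   get_property fs.default.name < /home/hadoop/usr/hadoop/conf/core-site.xml
```

Running the same lookup on the master and on each slave is a quick way to spot a node whose file points at a different NameNode address.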

hdfs-site.xml

    <?xml version="1.0" encoding="UTF-8"?>
    <?xml-stylesheet type="text/xsl" href="configuration.xsl"?>
    <configuration>
      <property>
        <name>dfs.replication</name>
        <value>1</value>
      </property>
    </configuration>

mapred-site.xml (job and task settings for older versions)

    <?xml version="1.0"?>
    <?xml-stylesheet type="text/xsl" href="configuration.xsl"?>
    <configuration>
      <property>
        <name>mapred.job.tracker</name>
        <value>http://192.168.223.1:9001</value>
      </property>
    </configuration>

mapred-site.xml (configuration for Hadoop 2.2 and later)

    <configuration>
      <property>
        <name>mapreduce.framework.name</name>
        <value>yarn</value>
      </property>
    </configuration>

yarn-site.xml (configuration file on the Master)

    <?xml version="1.0" encoding="UTF-8"?>
    <configuration>
      <property>
        <name>yarn.resourcemanager.hostname</name>
        <value>resourcemanager.company.com</value>
      </property>
      <property>
        <description>Classpath for typical applications.</description>
        <name>yarn.application.classpath</name>
        <value>
          $HADOOP_CONF_DIR,
          $HADOOP_COMMON_HOME/*,$HADOOP_COMMON_HOME/lib/*,
          $HADOOP_HDFS_HOME/*,$HADOOP_HDFS_HOME/lib/*,
          $HADOOP_MAPRED_HOME/*,$HADOOP_MAPRED_HOME/lib/*,
          $HADOOP_YARN_HOME/*,$HADOOP_YARN_HOME/lib/*
        </value>
      </property>
      <property>
        <name>yarn.nodemanager.aux-services</name>
        <value>mapreduce_shuffle</value>
      </property>
      <property>
        <name>yarn.nodemanager.local-dirs</name>
        <value>file:///data/1/yarn/local,file:///data/2/yarn/local,file:///data/3/yarn/local</value>
      </property>
      <property>
        <name>yarn.nodemanager.log-dirs</name>
        <value>file:///data/1/yarn/logs,file:///data/2/yarn/logs,file:///data/3/yarn/logs</value>
      </property>
      <property>
        <name>yarn.log.aggregation-enable</name>
        <value>true</value>
      </property>
      <property>
        <description>Where to aggregate logs</description>
        <name>yarn.nodemanager.remote-app-log-dir</name>
        <value>hdfs://<namenode-host.company.com>:8020/var/log/hadoop-yarn/apps</value>
      </property>
      <!-- Site specific YARN configuration properties -->
    </configuration>

In the last property, replace <namenode-host.company.com>, including the angle brackets, with the host name of your NameNode; the file is not valid XML while the placeholder is left in.

To match the settings in yarn-site.xml, prepare the local directories as follows. Note that these commands also create /data/4, which the yarn-site.xml above does not list.

- Create the yarn.nodemanager.local-dirs local directories:

$ sudo mkdir -p /data/1/yarn/local /data/2/yarn/local /data/3/yarn/local /data/4/yarn/local

- Create the yarn.nodemanager.log-dirs local directories:

$ sudo mkdir -p /data/1/yarn/logs /data/2/yarn/logs /data/3/yarn/logs /data/4/yarn/logs

- Make the hadoop user the owner of the yarn.nodemanager.local-dirs directories:

$ sudo chown -R hadoop:hadoop /data/1/yarn/local /data/2/yarn/local /data/3/yarn/local /data/4/yarn/local

- Make the hadoop user the owner of the yarn.nodemanager.log-dirs directories:

$ sudo chown -R hadoop:hadoop /data/1/yarn/logs /data/2/yarn/logs /data/3/yarn/logs /data/4/yarn/logs
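To keep the directories on disk in step with the configuration, the path list can be derived from the property value instead of being typed twice. This is a minimal sketch, assuming the value is a comma-separated list of `file://` URIs as in the yarn-site.xml above; the function name `dirs_from_value` is hypothetical.

```shell
# Convert a comma-separated list of file:// URIs into one local path per line.
# $1 = value of yarn.nodemanager.local-dirs or yarn.nodemanager.log-dirs
dirs_from_value() {
    printf '%s\n' "$1" | tr ',' '\n' | sed 's|^file://||'
}

# Usage:
#   dirs_from_value "file:///data/1/yarn/local,file:///data/2/yarn/local" | xargs sudo mkdir -p
#   dirs_from_value "file:///data/1/yarn/local,file:///data/2/yarn/local" | xargs sudo chown -R hadoop:hadoop
```

The function only rewrites text; creating the directories and changing their owner still happens through `mkdir` and `chown` as in the steps above.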

yarn-site.xml on the slave nodes. It is used to communicate with the master node, so every IP address and port below is the master's:

    <?xml version="1.0"?>
    <configuration>
      <property>
        <name>yarn.nodemanager.aux-services</name>
        <value>mapreduce_shuffle</value>
      </property>
      <property>
        <name>yarn.nodemanager.aux-services.mapreduce_shuffle.class</name>
        <value>org.apache.hadoop.mapred.ShuffleHandler</value>
      </property>
      <property>
        <name>yarn.resourcemanager.address</name>
        <value>192.168.223.1:8032</value>
      </property>
      <property>
        <name>yarn.resourcemanager.scheduler.address</name>
        <value>192.168.223.1:8030</value>
      </property>
      <property>
        <name>yarn.resourcemanager.resource-tracker.address</name>
        <value>192.168.223.1:8031</value>
      </property>
      <property>
        <name>yarn.resourcemanager.hostname</name>
        <value>192.168.223.1</value>
      </property>
      <!-- Site specific YARN configuration properties -->
    </configuration>

master

192.168.223.1

slaves (the file on the master node; substitute the IP addresses of your own slave nodes, one per line)

    192.168.223.2
    192.168.223.3
    192.168.223.4

slaves (the file on each slave node)

localhost

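Start the cluster as follows (if it is already running, run sbin/stop-all.sh first):

    hadoop@master:/usr/hadoop$ hadoop namenode -format
    hadoop@master:/usr/hadoop$ sbin/start-all.sh

To check the result, run the following command:

    hadoop@master:/usr/hadoop$ hdfs dfsadmin -report

Output like the following, with non-zero capacity and all three DataNodes listed, indicates that the installation is correct: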

    Configured Capacity: 4958160830464 (4.51 TB)
    Present Capacity: 4699621490688 (4.27 TB)
    DFS Remaining: 4699621404672 (4.27 TB)
    DFS Used: 86016 (84 KB)
    DFS Used%: 0.00%
    Under replicated blocks: 0
    Blocks with corrupt replicas: 0
    Missing blocks: 0
    Missing blocks (with replication factor 1): 0

    -------------------------------------------------
    Live datanodes (3):

    Name: 192.168.223.3:50010 (slave3)
    Hostname: slave3
    Decommission Status : Normal
    Configured Capacity: 1697554399232 (1.54 TB)
    DFS Used: 28672 (28 KB)
    Non DFS Used: 88462258176 (82.39 GB)
    DFS Remaining: 1609092112384 (1.46 TB)
    DFS Used%: 0.00%
    DFS Remaining%: 94.79%
    Configured Cache Capacity: 0 (0 B)
    Cache Used: 0 (0 B)
    Cache Remaining: 0 (0 B)
    Cache Used%: 100.00%
    Cache Remaining%: 0.00%
    Xceivers: 1
    Last contact: Sat Nov 14 21:40:02 CST 2015

    Name: 192.168.223.2:50010 (slave2)
    Hostname: slave2
    Decommission Status : Normal
    Configured Capacity: 1697938153472 (1.54 TB)
    DFS Used: 28672 (28 KB)
    Non DFS Used: 88474435584 (82.40 GB)
    DFS Remaining: 1609463689216 (1.46 TB)
    DFS Used%: 0.00%
    DFS Remaining%: 94.79%
    Configured Cache Capacity: 0 (0 B)
    Cache Used: 0 (0 B)
    Cache Remaining: 0 (0 B)
    Cache Used%: 100.00%
    Cache Remaining%: 0.00%
    Xceivers: 1
    Last contact: Sat Nov 14 21:40:02 CST 2015

    Name: 192.168.223.4:50010 (slave4)
    Hostname: slave4
    Decommission Status : Normal
    Configured Capacity: 1562668277760 (1.42 TB)
    DFS Used: 28672 (28 KB)
    Non DFS Used: 81602646016 (76.00 GB)
    DFS Remaining: 1481065603072 (1.35 TB)
    DFS Used%: 0.00%
    DFS Remaining%: 94.78%
    Configured Cache Capacity: 0 (0 B)
    Cache Used: 0 (0 B)
    Cache Remaining: 0 (0 B)
    Cache Used%: 100.00%
    Cache Remaining%: 0.00%
    Xceivers: 1
    Last contact: Sat Nov 14 21:40:02 CST 2015
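The difference between the failing and the healthy report can also be checked from a script. The sketch below reads a report on standard input and prints the number in the "Live datanodes (N):" line, or 0 when that line is absent, as in the all-zero report seen before the fix. The function name `live_datanodes` is illustrative, and the parsing assumes the report layout shown above.

```shell
# Print the number of live DataNodes found in `hdfs dfsadmin -report` output.
# Reads the report on stdin; prints 0 if no "Live datanodes (N):" line exists.
live_datanodes() {
    n=$(sed -n 's/^Live datanodes (\([0-9][0-9]*\)):.*/\1/p' | head -n 1)
    echo "${n:-0}"
}

# Usage:
#   hdfs dfsadmin -report | live_datanodes
```

For the cluster described here, a result of 3 matches the three slave nodes; anything lower suggests a DataNode failed to register with the master.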

Command to create the user's home directory in HDFS:

hadoop fs -mkdir -p /user/[current login user]

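Once the user's directory exists in HDFS, you can start working with the HDFS file system. For the full set of HDFS file system commands, see the following reference: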

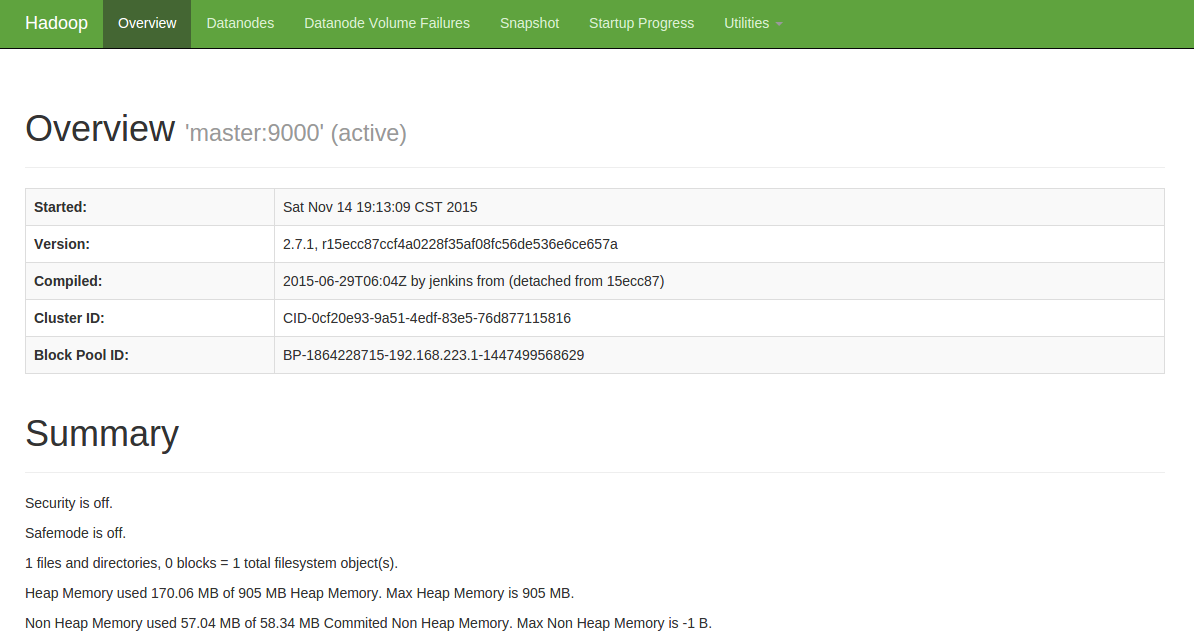

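Viewing the current state of Hadoop in a web browser

http://10.1.8.200:50070/ (this is the externally reachable IP address of the master node; substitute your own)

As shown in the figure below:

For installation details, see:

Migrating from MapReduce 1 (MRv1) to MapReduce 2 (MRv2, YARN)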
