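The previous post covered creating the spider's main file by hand, inside the spiders folder of a project generated with startproject.

In fact, a single command can now take care of that step as well. The source is this tutorial: http://python.gotrained.com/scrapy-tutorial-web-scraping-craigslist/

On the command line, run: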

scrapy genspider jobs https://newyork.craigslist.org/search/egr

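Here jobs is a name you choose yourself, and the URL is the page where the crawl starts. Afterwards a jobs.py file appears in the spiders directory. It is the spider's main file and looks like this: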

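    # -*- coding: utf-8 -*-
    import scrapy


    class JobsSpider(scrapy.Spider):
        name = 'jobs'
        # Generated from the full URL. Change this to ['newyork.craigslist.org'],
        # otherwise the crawl reports an error.
        allowed_domains = ['https://newyork.craigslist.org/search/egr']
        # The doubled scheme 'http://https://' also needs correcting to
        # 'https://newyork.craigslist.org/search/egr'.
        start_urls = ['http://https://newyork.craigslist.org/search/egr/']

        def parse(self, response):
            pass

Everything above was generated automatically by the genspider command.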
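The allowed_domains fix amounts to keeping only the host part of the start URL. A minimal sketch using the standard library, assuming a helper name (`host_for_allowed_domains`) that is made up here for illustration and is not part of Scrapy:

```python
from urllib.parse import urlparse


def host_for_allowed_domains(url: str) -> str:
    """Return only the host of a URL (hypothetical helper, not a Scrapy API)."""
    # hostname drops the scheme, path, and any port.
    return urlparse(url).hostname or ""


start_url = "https://newyork.craigslist.org/search/egr"
allowed_domains = [host_for_allowed_domains(start_url)]
print(allowed_domains)
```

The resulting list holds a bare domain, which is the form the comment in the generated file asks for.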

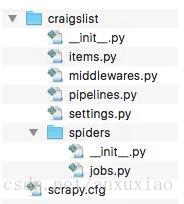

This is the overall project directory structure.

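The rest of this post focuses on parse, since it is the most important part. Starting with the simplest case, look at this one line of parsing code: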

titles = response.xpath('//a[@class="result-title hdrlnk"]/text()').extract()

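The leading // means the search starts from anywhere in the document. a is the tag to match, and the [@class="..."] predicate requires the tag to carry that class attribute. text() selects the text inside the a element, and extract() returns every value on the page that satisfies these conditions as a list, which is assigned to titles.

extract_first() returns only the first match. The corresponding source HTML is:

    <a href="/brk/egr/6085878649.html" data-id="6085878649" class="result-title hdrlnk">Chief Engineer</a>

The previous post showed that after running scrapy shell xxxxxx.com, print(response) returns <200 https://newyork.craigslist.org/search/egr>. This shows that response represents the fetched page as a whole, and print(response.body) accordingly prints the entire page content. A spider only needs selected parts of the page, which is what response.xpath() is for, as in the titles extraction above. You can then print(titles). The whole spider becomes: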

    import scrapy


    class JobsSpider(scrapy.Spider):
        name = "jobs"
        allowed_domains = ["craigslist.org"]
        start_urls = ['https://newyork.craigslist.org/search/egr']

        def parse(self, response):
            # Collect the text of every result-title link on the page
            titles = response.xpath('//a[@class="result-title hdrlnk"]/text()').extract()
            print(titles)

Run

    $ scrapy crawl jobs

and it prints the following:

    [u'Junior/ Mid-Level Architect for Immediate Hire', u'SE BUSCA LLANTERO/ LOOKING FOR TIRE CAR WORKER CON EXPERIENCIA', u'Draftsperson/Detailer', u'Controls/ Instrumentation Engineer', u'Project Manager', u'Tunnel Inspectors - Must be willing to Relocate to Los Angeles', u'Senior Designer - Large Scale', u'Construction Estimator/Project Manager', u'CAD Draftsman/Estimator', u'Project Manager']

That is rather messy. To output the results in a fixed structure, use:

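    for title in titles:
        yield {'Title': title}

Here yield plays a role similar to print in that each item shows up in the output, but yield is what Scrapy expects: it hands each item back to the framework, which can then export it. Scrapy can store data in formats such as CSV, JSON, or XML. For example, to save the titles from above: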

scrapy crawl jobs -o result.csv

A new .csv file, result.csv, appears in the current directory.
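As a rough picture of how yielded dictionaries turn into CSV rows, here is a standard-library stand-in. It is not Scrapy's exporter: `fake_parse` and `items_to_csv` are hypothetical names used only for this sketch, and the two titles are taken from the printed output above.

```python
import csv
import io


def fake_parse(titles):
    """Hypothetical stand-in for parse(): yield one dict per title."""
    for title in titles:
        yield {"Title": title}


def items_to_csv(items):
    """Write dict items as CSV text, using the first item's keys as the header."""
    items = list(items)
    buffer = io.StringIO()
    if items:
        writer = csv.DictWriter(
            buffer, fieldnames=list(items[0].keys()), lineterminator="\n"
        )
        writer.writeheader()
        writer.writerows(items)
    return buffer.getvalue()


csv_text = items_to_csv(fake_parse(["Project Manager", "Draftsperson/Detailer"]))
print(csv_text)
```

Each dictionary key becomes a column and each yielded item becomes a row, which is why yielding dictionaries with consistent keys gives tidy output.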

Building on the above, the next step is to scrape more details for each job listed under titles.

    <li class="result-row" data-pid="6112478644">
      <a href="/brk/egr/6112478644.html" class="result-image gallery empty"></a>
      <p class="result-info">
        <span class="icon icon-star" role="button">
          <span class="screen-reader-text">favorite this post</span>
        </span>
        <time class="result-date" datetime="2017-05-01 12:35" title="Mon 01 May 12:35:41 PM">May 1</time>
        <a href="/brk/egr/6112478644.html" data-id="6112478644" class="result-title hdrlnk">Project Architect</a>
        <span class="result-meta">
          <span class="result-hood"> (Brooklyn)</span>
          <span class="result-tags">
            <span class="maptag" data-pid="6112478644">map</span>
          </span>
          <span class="banish icon icon-trash" role="button">
            <span class="screen-reader-text">hide this posting</span>
          </span>
          <span class="unbanish icon icon-trash red" role="button" aria-hidden="true"></span>
          <a href="#" class="restore-link">
            <span class="restore-narrow-text">restore</span>
            <span class="restore-wide-text">restore this posting</span>
          </a>
        </span>
      </p>
    </li>

For source HTML like the above, use:

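    jobs = response.xpath('//p[@class="result-info"]')

Note that there is no extract() here. jobs acts as a container (a list of selectors) that holds every result row, and further values are extracted from each element in it:

    for job in jobs:
        title = job.xpath('a/text()').extract_first()
        yield {'Title': title}

Other fields are extracted the same way.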
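The container idea can be tried without Scrapy. The sketch below swaps in the standard library's xml.etree.ElementTree, which supports only a small XPath subset and is not what Scrapy uses internally. The HTML is a trimmed, well-formed copy of the result row shown earlier.

```python
import xml.etree.ElementTree as ET

# Trimmed copy of one result row from the listing HTML.
ROW = """
<li class="result-row">
  <a href="/brk/egr/6112478644.html" class="result-image gallery empty"></a>
  <p class="result-info">
    <a href="/brk/egr/6112478644.html" class="result-title hdrlnk">Project Architect</a>
    <span class="result-meta">
      <span class="result-hood"> (Brooklyn)</span>
    </span>
  </p>
</li>
"""

root = ET.fromstring(ROW)

# Step 1: select the containers without pulling out any text yet.
containers = root.findall(".//p[@class='result-info']")

rows = []
for container in containers:
    # Step 2: "a" is relative to the container, so the image link that
    # sits outside <p class="result-info"> is never considered.
    link = container.find("a")
    rows.append({"Title": link.text, "Href": link.get("href")})

print(rows)
```

Selecting the container first and then querying relative to it keeps each title paired with the href from the same row.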

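Extending the loop to collect the address and URL as well:

    for job in jobs:
        title = job.xpath('a/text()').extract_first()
        # [2:-1] strips the leading " (" and the trailing ")" from text such as " (Brooklyn)"
        address = job.xpath('span[@class="result-meta"]/span[@class="result-hood"]/text()').extract_first("")[2:-1]
        # This is a relative path such as /brk/egr/6112478644.html
        relative_url = job.xpath('a/@href').extract_first()
        # urljoin turns the relative path into an absolute https:// URL
        absolute_url = response.urljoin(relative_url)
        yield {'URL': absolute_url, 'Title': title, 'Address': address}

Summary: we moved step by step from extracting a single title to extracting several fields. Next, we follow links recursively to extract information from further pages.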
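Before moving on, the address slice and the URL joining used in the loop can be checked in isolation. This sketch uses urllib.parse.urljoin as a stand-in for response.urljoin, on the assumption that both resolve a relative path against the page URL in the same way for this case. `clean_hood` is a hypothetical helper name.

```python
from urllib.parse import urljoin


def clean_hood(raw: str) -> str:
    """Drop the leading ' (' and the trailing ')' as the [2:-1] slice does."""
    return raw[2:-1]


page_url = "https://newyork.craigslist.org/search/egr"
relative_url = "/brk/egr/6112478644.html"

address = clean_hood(" (Brooklyn)")
# extract_first("") falls back to "" when the span is missing,
# and slicing an empty string is still an empty string.
missing_address = clean_hood("")
absolute_url = urljoin(page_url, relative_url)

print(address, repr(missing_address), absolute_url)
```

The empty-string default is what keeps the slice from failing on rows that have no result-hood span.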

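First, locate the next-page link in the page source:

    <a href="/search/egr?s=120" class="button next" title="next page">next > </a>

Then build the full URL of the next page from its href attribute, in the same way as before:

    relative_next_url = response.xpath('//a[@class="button next"]/@href').extract_first()
    absolute_next_url = response.urljoin(relative_next_url)
    yield Request(absolute_next_url, callback=self.parse)

The next page has the same layout as the page just parsed, so the request recurses into the same parse function. The complete program is: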

    import scrapy
    from scrapy import Request


    class JobsSpider(scrapy.Spider):
        name = "jobs"
        allowed_domains = ["craigslist.org"]
        start_urls = ["https://newyork.craigslist.org/search/egr"]

        def parse(self, response):
            jobs = response.xpath('//p[@class="result-info"]')
            for job in jobs:
                relative_url = job.xpath('a/@href').extract_first()
                absolute_url = response.urljoin(relative_url)
                title = job.xpath('a/text()').extract_first()
                address = job.xpath('span[@class="result-meta"]/span[@class="result-hood"]/text()').extract_first("")[2:-1]
                # Visit the detail page, carrying the listing fields along in meta
                yield Request(absolute_url, callback=self.parse_page, meta={'URL': absolute_url, 'Title': title, 'Address': address})

            # Follow the next-page link; extract_first() returns None when there is none
            relative_next_url = response.xpath('//a[@class="button next"]/@href').extract_first()
            if relative_next_url:
                absolute_next_url = response.urljoin(relative_next_url)
                yield Request(absolute_next_url, callback=self.parse)

        def parse_page(self, response):
            # Fields collected on the listing page
            url = response.meta.get('URL')
            title = response.meta.get('Title')
            address = response.meta.get('Address')
            # Fields only available on the detail page
            description = "".join(response.xpath('//*[@id="postingbody"]/text()').extract())
            compensation = response.xpath('//p[@class="attrgroup"]/span[1]/b/text()').extract_first()
            employment_type = response.xpath('//p[@class="attrgroup"]/span[2]/b/text()').extract_first()
            yield {'URL': url, 'Title': title, 'Address': address, 'Description': description, 'Compensation': compensation, 'Employment Type': employment_type}
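One detail worth checking in the pagination step is the last page, where the next-page XPath matches nothing and extract_first() returns None. The sketch below simulates that with plain Python. The `NEXT_LINKS` table and its s=240 page are invented for illustration, and `crawl_order` is a hypothetical helper rather than anything Scrapy provides.

```python
from urllib.parse import urljoin

# Hypothetical site map: page URL -> relative href of its "next" link
# (None on the last page, as extract_first() would return).
NEXT_LINKS = {
    "https://newyork.craigslist.org/search/egr": "/search/egr?s=120",
    "https://newyork.craigslist.org/search/egr?s=120": "/search/egr?s=240",
    "https://newyork.craigslist.org/search/egr?s=240": None,
}


def crawl_order(start_url):
    """Follow next links until a page has none, returning the visit order."""
    visited = []
    url = start_url
    while url is not None:
        visited.append(url)
        relative_next = NEXT_LINKS.get(url)
        # Only build a new URL when a next link exists; string
        # concatenation with None would raise a TypeError here.
        url = urljoin(url, relative_next) if relative_next else None
    return visited


pages = crawl_order("https://newyork.craigslist.org/search/egr")
print(pages)
```

In a spider the same check takes the form of testing the extracted href before yielding the next Request.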
