《Inferring Decision Trees Using the Minimum Description Length Principle》

Information and Computation 80, 227-248 (1989)

My question: how is the figure of 18.170 bits obtained when computing the cost of coding the decision tree?

Here are the relevant parts of the article.

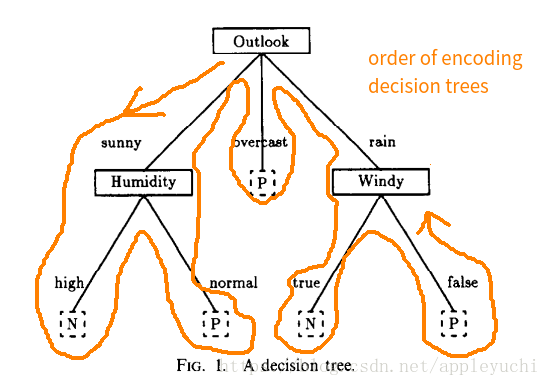

---------------the decision tree is:--------------

3rd page of the article (numbered 229 in the top-right corner)

-----------------------------formula for computing cost-------------

10th page of the article (numbered 236 in the top-left corner)

The total cost for this procedure is thus:

$$L(n,k,b)=\log_{2}(b+1)+\log_{2}\binom{n}{k}$$
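To make the formula easier to play with, here is a minimal Python sketch of it. The function name `exception_cost` and the argument checks are my own choices, not something from the article; the meaning of n, k and b is as defined there, and the sketch only assumes they are non-negative integers with k ≤ n.

```python
import math


def exception_cost(n: int, k: int, b: int) -> float:
    """Return L(n, k, b) = log2(b + 1) + log2(C(n, k)) in bits.

    The name and the argument checks are illustrative assumptions;
    only the formula itself comes from the article.
    """
    if n < 0 or b < 0 or not 0 <= k <= n:
        raise ValueError("expected n >= 0, b >= 0 and 0 <= k <= n")
    # math.comb(n, k) is the binomial coefficient C(n, k) (Python 3.8+).
    return math.log2(b + 1) + math.log2(math.comb(n, k))


if __name__ == "__main__":
    # Arbitrary illustrative arguments, not an example taken from the article.
    print(f"{exception_cost(4, 1, 2):.3f} bits")
```

The first term grows with b and the second with the number of ways to choose k items out of n, so the cost is 0 bits only when b = 0 and C(n, k) = 1.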

-----------------------the sequence relevant to the above decision tree-------------------------------

13th page of the article (numbered 239 in the top-right corner)

All of the above is about encoding the whole decision tree

so that it can be sent from the sender to the receiver.

My question: how is the 18.170 bits mentioned above obtained?

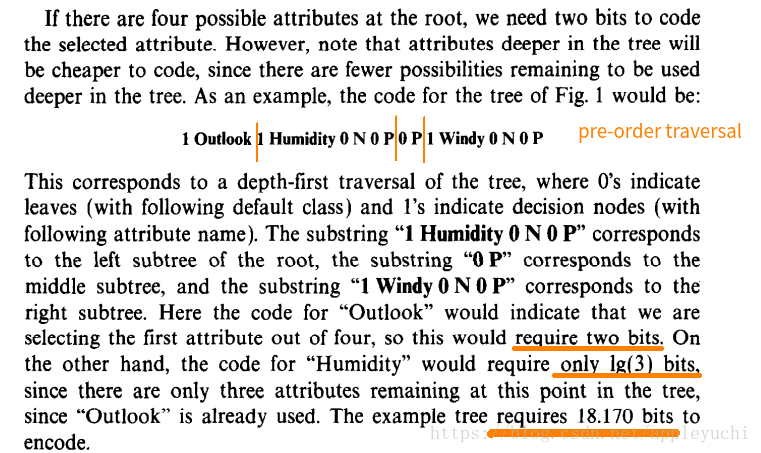

My understanding is:

Outlook: 2 bits

Humidity: lg(3) bits

Windy: lg(2) bits (not mentioned in the article, this is just my guess)

The whole sequence given in the paper is:

1 Outlook 1 Humidity 0 N 0 P 0 P 1 Windy 0 N 0 P

Then,

there are 8 binary digits in the sequence above. What follows is my guess and may be wrong:

3 decision nodes cost: 3 bits

5 leaves cost: 5 bits

2 + lg(3) + lg(2) + 3 + 5 + ? = 18.17

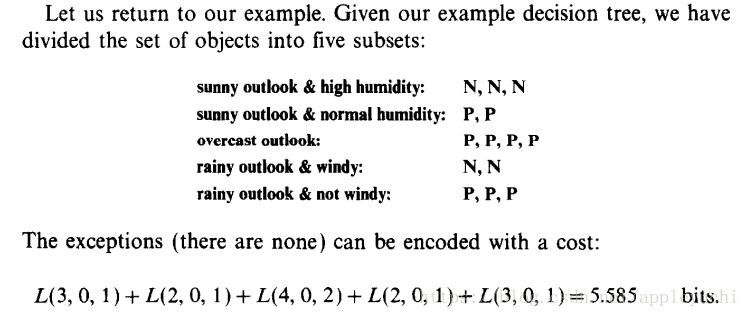

? = 5.585,

but where does 5.585 come from?

Although the picture about encoding exceptions also contains the number 5.585,

I don't think it can explain the 5.585 above.
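As a sanity check on the arithmetic, here is a small Python sketch. The first part just recomputes my own accounting and the missing 5.585. The second part is only a guess at a different accounting, not something I can confirm from the article: it assumes Windy costs lg(3) bits like Humidity (only Outlook has been used on that path), and that each of the 5 leaves needs 1 extra bit for its class label (N or P) on top of the 8 structure bits.

```python
import math

# --- My accounting from above ---
outlook = 2.0               # bits, as stated above
humidity = math.log2(3)     # lg(3) bits
windy_guess = math.log2(2)  # lg(2) bits, my own guess
my_subtotal = outlook + humidity + windy_guess + 3 + 5
gap = 18.170 - my_subtotal  # the unexplained "?"

# --- Alternative accounting (assumptions, not confirmed by the article) ---
structure_bits = 8          # the eight 0/1 digits in the sequence
windy_alt = math.log2(3)    # assumption: 3 attributes remain under Outlook
class_label_bits = 5 * 1    # assumption: 1 bit (N or P) per leaf
alt_total = structure_bits + outlook + humidity + windy_alt + class_label_bits

print(f"my subtotal = {my_subtotal:.3f} bits, gap = {gap:.3f} bits")
print(f"alternative total = {alt_total:.3f} bits")
```

Under these assumptions the gap would split into 5 bits of class labels plus lg(3) - lg(2) ≈ 0.585 bits for Windy, but I am not sure this is the accounting the authors intend.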

Could you tell me how the "18.170 bits" mentioned in the article is obtained?

Thanks very much!
