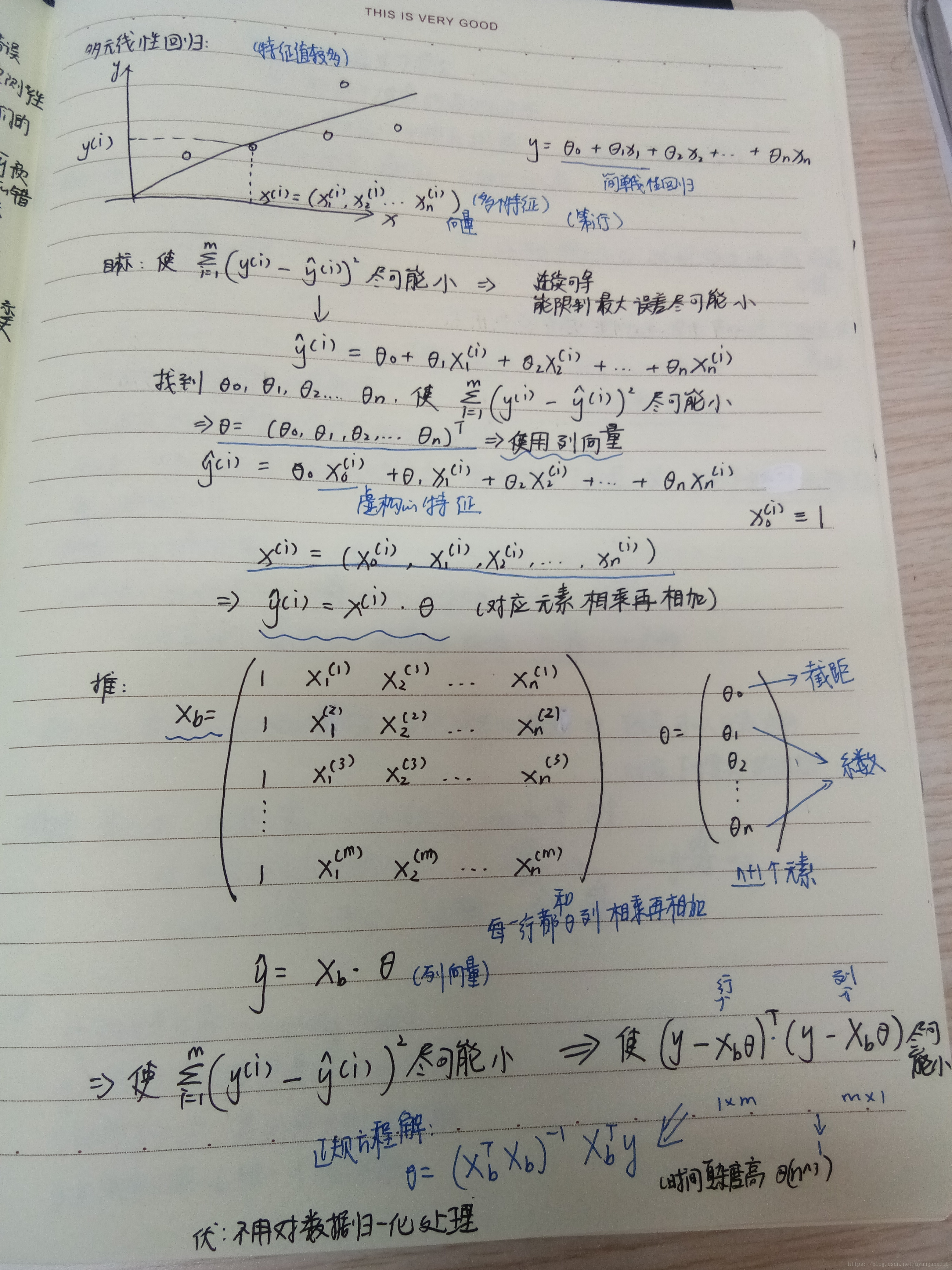

Notes

Next, implement multiple linear regression in code:

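```python
import numpy as np
from sklearn.metrics import r2_score

"""
Implementation of multiple linear regression
"""


class LinearRegression:
    def __init__(self):
        self.coef_ = None
        self.interception = None
        self.theta = None

    # Train the model
    def fit_normal(self, X_train, y_train):
        # Prepend a column of ones so that theta[0] acts as the constant term
        X_b = np.hstack([np.ones((len(X_train), 1)), X_train])
        # Normal equation: theta = (X_b^T X_b)^-1 X_b^T y
        self.theta = np.linalg.inv(X_b.T.dot(X_b)).dot(X_b.T).dot(y_train)
        # Caution: theta[0] is the intercept and theta[1:] are the feature
        # coefficients, so these two attribute names are swapped here.
        self.coef_ = self.theta[0]
        self.interception = self.theta[1:]
        return self

    # Predict
    def predict(self, X_predict):
        X_b = np.hstack([np.ones((len(X_predict), 1)), X_predict])
        return X_b.dot(self.theta)

    # Compute the accuracy (R^2 score)
    def score(self, X_test, y_test):
        y_predict = self.predict(X_test)
        return r2_score(y_test, y_predict)
```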
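Explicitly inverting `X_b.T.dot(X_b)` works for this data set, but it can be numerically fragile when features are nearly collinear. As a rough sketch that is not part of the original notes, the same least-squares solution can usually be obtained with `np.linalg.lstsq`. The helper name `fit_lstsq` and the synthetic, noise-free data below are assumptions made purely for illustration.

```python
import numpy as np


def fit_lstsq(X_train, y_train):
    """Hypothetical helper: least-squares fit that returns (intercept, coefficients)."""
    # Same design matrix as fit_normal: a leading column of ones, then the features
    X_b = np.hstack([np.ones((len(X_train), 1)), X_train])
    # lstsq solves min ||X_b @ theta - y|| without forming an explicit inverse
    theta, *_ = np.linalg.lstsq(X_b, y_train, rcond=None)
    return theta[0], theta[1:]


# Synthetic data with known parameters (no noise), so the fit should recover them
rng = np.random.default_rng(0)
X = rng.normal(size=(200, 3))
true_intercept = 4.0
true_coef = np.array([2.0, -1.0, 0.5])
y = true_intercept + X @ true_coef

# The explicit normal-equation solution, as used in fit_normal
X_b = np.hstack([np.ones((len(X), 1)), X])
theta_normal = np.linalg.inv(X_b.T @ X_b) @ X_b.T @ y

intercept, coef = fit_lstsq(X, y)
print(intercept, coef)
print(np.allclose(theta_normal, np.r_[intercept, coef]))
```

On well-conditioned data like this the two approaches should agree to floating-point precision; the difference only matters when `X_b.T.dot(X_b)` is close to singular.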

Test run (note that `datasets.load_boston` was removed in scikit-learn 1.2, so this session requires an older release):

```python
>>> import numpy as np
>>> from sklearn import datasets
>>> boston = datasets.load_boston()
>>> X = boston.data
>>> y = boston.target
>>> X = X[y < 50]
>>> y = y[y < 50]
>>> from Play.model_selection import train_test_split
>>> X_train, X_test, y_train, y_test = train_test_split(X, y, seed=666)
>>> X_train.shape
(392, 13)
>>> y_train.shape
(392,)
>>> X_test.shape
(98, 13)
>>> from Play.LinearDay2 import LinearRegression
>>> reg = LinearRegression()
>>> reg.fit_normal(X_train, y_train)
<Play.LinearDay2.LinearRegression at 0x10a6d5518>
>>> reg.predict(X_test)
array([18.08047724, 25.52374702, 12.93068154, 32.89616169, 24.17956679,
        2.67010028, 26.64700396, 32.23851244, 13.96168643, 24.04280799,
       14.93247906, 10.58513734, 30.28710828, 16.2782111 , 23.67817017,
       25.64047759, 18.67821777, 24.02076592, 28.77437534, 26.93946254,
       12.81354434, 27.22770353, 26.0804716 , 23.41900039, 20.79727917,
       31.96786535, 14.90862058, 20.954883  , 12.92314457, 29.80207127,
       35.28684545,  5.03624207, 13.10143242, 35.54317123, 15.98890703,
       21.53597166, 12.47621364, 29.12864349, 27.36022467, 24.05031901,
       14.35220626, 23.61433371, 10.90347719, 22.38154099, 18.62937294,
       16.37126778, 24.43078261, 33.06293684, 19.19809767, 27.04404675,
       18.05674457, 14.85136715, 25.08935314, 16.0884098 , 21.74619772,
       16.3194766 , 24.25591698, 11.72935395, 27.92260116, 31.05867941,
       20.17444189, 24.9964365 , 25.99734127, 12.14007801, 16.58246637,
       27.30690012, 22.26787948, 21.72492458, 31.50402544, 14.03991351,
       16.42672344, 24.77534313, 25.18133042, 18.65228238, 17.34412768,
       27.90749795, 23.71553798, 14.62906156, 11.22231617, 31.4243847 ,
       33.66552044, 17.66375929, 18.69989012, 17.79423031, 25.15668084,
       23.66633124, 24.55578753, 26.09123112, 25.49718056, 20.28898727,
       24.87506605, 33.48356492, 36.08610386, 23.07558528, 18.79472835,
       31.04138456, 35.78577626, 20.84603282])
```

Coefficient (as named in the class; `coef_` is assigned `theta[0]`, so this value is actually the intercept term):

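```python
>>> reg.coef_
34.16143549622471
```

Intercept (as named in the class; `interception` is assigned `theta[1:]`, so these are actually the 13 feature coefficients):

```python
>>> reg.interception
array([-1.18919477e-01,  3.63991462e-02, -3.56494193e-02,  5.66737830e-02,
       -1.16195486e+01,  3.42022185e+00, -2.31470282e-02, -1.19509560e+00,
        2.59339091e-01, -1.40112724e-02, -8.36521175e-01,  7.92283639e-03,
       -3.81966137e-01])
```

Test accuracy (R² score):

```python
>>> reg.score(X_test, y_test)
0.8129802602658466
```

bobo's code: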

```python
import numpy as np
from .metrics import r2_score


class LinearRegression:

    def __init__(self):
        """Initialize the Linear Regression model"""
        self.coef_ = None
        self.intercept_ = None
        self._theta = None

    # Train
    def fit_normal(self, X_train, y_train):
        """Train the Linear Regression model on the training set X_train, y_train"""
        assert X_train.shape[0] == y_train.shape[0], \
            "the size of X_train must be equal to the size of y_train"

        # X_b needs an extra leading column of ones: hstack joins an array of
        # ones with shape (len(X_train), 1) and X_train side by side
        X_b = np.hstack([np.ones((len(X_train), 1)), X_train])
        # Solve for theta with the normal equation
        self._theta = np.linalg.inv(X_b.T.dot(X_b)).dot(X_b.T).dot(y_train)

        self.intercept_ = self._theta[0]  # the intercept is element 0
        self.coef_ = self._theta[1:]  # from element 1 to the end

        return self

    # Predict
    def predict(self, X_predict):
        """Given the data set X_predict, return the vector of predicted results"""
        assert self.intercept_ is not None and self.coef_ is not None, \
            "must fit before predict!"
        assert X_predict.shape[1] == len(self.coef_), \
            "the feature number of X_predict must be equal to X_train"

        X_b = np.hstack([np.ones((len(X_predict), 1)), X_predict])
        return X_b.dot(self._theta)  # X_b * theta

    def score(self, X_test, y_test):
        """Measure the accuracy of the current model on the test set X_test, y_test"""
        y_predict = self.predict(X_test)
        return r2_score(y_test, y_predict)

    def __repr__(self):
        return "LinearRegression()"
```
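The test session imports `train_test_split` (called with a `seed` argument) from `Play.model_selection`, and the class above imports `r2_score` from a local `.metrics` module, but neither helper is shown in these notes. The following is only a guess at what minimal versions might look like: the `test_ratio` parameter, its 0.2 default, and the use of `np.random.default_rng` are all assumptions, so the same seed would not necessarily reproduce the split seen in the session. A 20% test ratio is at least consistent with the 392/98 split of 490 rows reported there.

```python
import numpy as np


def train_test_split(X, y, test_ratio=0.2, seed=None):
    """Hypothetical sketch: shuffle the rows, then split into train and test parts."""
    assert X.shape[0] == y.shape[0], "X and y must have the same number of rows"
    assert 0.0 <= test_ratio <= 1.0, "test_ratio must be in [0, 1]"

    rng = np.random.default_rng(seed)  # assumption: the original may seed differently
    shuffled = rng.permutation(len(X))
    test_size = int(len(X) * test_ratio)
    test_idx, train_idx = shuffled[:test_size], shuffled[test_size:]
    return X[train_idx], X[test_idx], y[train_idx], y[test_idx]


def r2_score(y_true, y_predict):
    """R^2 = 1 - SS_res / SS_tot (1.0 is a perfect fit, 0.0 matches the mean baseline)."""
    ss_res = np.sum((y_true - y_predict) ** 2)
    ss_tot = np.sum((y_true - np.mean(y_true)) ** 2)
    return 1 - ss_res / ss_tot


# Tiny demonstration on made-up data: row i of X is [2i, 2i + 1] and y[i] = i
X = np.arange(20, dtype=float).reshape(10, 2)
y = np.arange(10, dtype=float)
X_train, X_test, y_train, y_test = train_test_split(X, y, seed=666)
print(X_train.shape, X_test.shape)
print(r2_score(y, y))
```

Indexing `X` and `y` with the same shuffled indices keeps each feature row paired with its target, which is the property the fit depends on.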
