From Wikipedia: https://en.wikipedia.org/wiki/Precision_and_recall

| | | True condition | | | | |
|---|---|---|---|---|---|---|
| | Total population | Condition positive | Condition negative | Prevalence = Σ Condition positive / Σ Total population | Accuracy (ACC) = (Σ True positive + Σ True negative) / Σ Total population | |
| Predicted condition | Predicted condition positive | True positive | False positive (Type I error) | Positive predictive value (PPV), Precision = Σ True positive / Σ Predicted condition positive | False discovery rate (FDR), probability of false alarm = Σ False positive / Σ Predicted condition positive | |
| | Predicted condition negative | False negative (Type II error) | True negative | False omission rate (FOR) = Σ False negative / Σ Predicted condition negative | Negative predictive value (NPV) = Σ True negative / Σ Predicted condition negative | |
| | | True positive rate (TPR), Recall, Sensitivity, probability of detection = Σ True positive / Σ Condition positive | False positive rate (FPR), Fall-out = Σ False positive / Σ Condition negative | Positive likelihood ratio (LR+) = TPR / FPR | Diagnostic odds ratio (DOR) = LR+ / LR− | F1 score = 2 / (1/Recall + 1/Precision) |
| | | False negative rate (FNR), Miss rate = Σ False negative / Σ Condition positive | True negative rate (TNR), Specificity (SPC) = Σ True negative / Σ Condition negative | Negative likelihood ratio (LR−) = FNR / TNR | | |

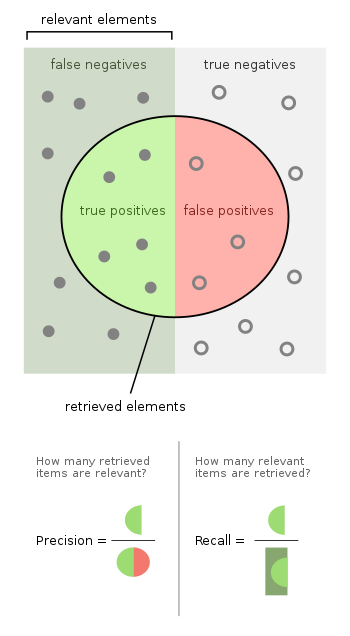
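As a rough sketch, the definitions in the table can be computed directly from the four confusion-matrix counts. The function names `ratio` and `derived_metrics` are illustrative, and returning `None` whenever a denominator is zero is an assumption of this sketch, not something the table specifies.

```python
from typing import Dict, Optional


def ratio(numerator: Optional[float], denominator: Optional[float]) -> Optional[float]:
    """Divide, returning None when an input is undefined or the denominator is zero."""
    if numerator is None or denominator is None or denominator == 0:
        return None
    return numerator / denominator


def derived_metrics(tp: int, fp: int, fn: int, tn: int) -> Dict[str, Optional[float]]:
    """Compute the table's derived rates from the four confusion-matrix counts."""
    total = tp + fp + fn + tn
    condition_pos, condition_neg = tp + fn, fp + tn   # column sums (true condition)
    predicted_pos, predicted_neg = tp + fp, fn + tn   # row sums (predicted condition)

    tpr = ratio(tp, condition_pos)   # recall, sensitivity
    fpr = ratio(fp, condition_neg)   # fall-out
    fnr = ratio(fn, condition_pos)   # miss rate
    tnr = ratio(tn, condition_neg)   # specificity
    lr_pos = ratio(tpr, fpr)
    lr_neg = ratio(fnr, tnr)

    return {
        "Prevalence": ratio(condition_pos, total),
        "ACC": ratio(tp + tn, total),
        "PPV": ratio(tp, predicted_pos),   # precision
        "FDR": ratio(fp, predicted_pos),
        "FOR": ratio(fn, predicted_neg),
        "NPV": ratio(tn, predicted_neg),
        "TPR": tpr,
        "FPR": fpr,
        "FNR": fnr,
        "TNR": tnr,
        "LR+": lr_pos,
        "LR-": lr_neg,
        "DOR": ratio(lr_pos, lr_neg),
        # 2 / (1/Recall + 1/Precision) simplifies to 2TP / (2TP + FP + FN).
        "F1": ratio(2 * tp, 2 * tp + fp + fn),
    }


# Example counts: TP = 86, FP = 2, FN = 4, TN = 8.
metrics = derived_metrics(tp=86, fp=2, fn=4, tn=8)
for name, value in metrics.items():
    print(name, "undefined" if value is None else round(value, 4))
```

Returning `None` instead of raising keeps the function usable on degenerate matrices, for example when a classifier never predicts the negative class and FOR and NPV have no defined value.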

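Precision = TP / (TP + FP): the fraction of predicted positives that are truly positive.

Recall = TP / (TP + FN): the fraction of actual positives that are correctly identified.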
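For reference, the F1 score from the table can be written in terms of the same counts. The derivation below uses P for precision and R for recall, and only substitutes the two definitions above into the harmonic mean:

```latex
F_1 = \frac{2}{\frac{1}{R} + \frac{1}{P}}
    = \frac{2}{\frac{TP + FN}{TP} + \frac{TP + FP}{TP}}
    = \frac{2\,TP}{2\,TP + FP + FN}
    = \frac{2PR}{P + R}
```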

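Suppose we have a sample of 90 positive examples and 10 negative examples.

Compare three different classifiers on this sample.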

A:

TP = 86, FP = 2

FN = 4, TN = 8

ACC = 0.94

P = 86/88 = 0.98

R = 86/90 = 0.96

F1 = 0.97

B:

TP = 90, FP = 10

FN = 0, TN = 0

ACC = 0.9

P = 90/100 = 0.90

R = 90/90 = 1.00

F1 = 0.95

C:

TP = 90, FP = 0

FN = 0, TN = 10

ACC = 1

P = 1

R = 1

F1 = 1
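The numbers for the three classifiers can be reproduced with a few lines of Python. This is a minimal sketch: the helper name `summarize` is illustrative, and it omits zero-division guards because TP is nonzero for all three classifiers here.

```python
# Confusion-matrix counts copied from classifiers A, B and C above.
classifiers = {
    "A": {"tp": 86, "fp": 2, "fn": 4, "tn": 8},
    "B": {"tp": 90, "fp": 10, "fn": 0, "tn": 0},
    "C": {"tp": 90, "fp": 0, "fn": 0, "tn": 10},
}


def summarize(tp, fp, fn, tn):
    """Return (accuracy, precision, recall, F1) for one confusion matrix."""
    accuracy = (tp + tn) / (tp + fp + fn + tn)
    precision = tp / (tp + fp)
    recall = tp / (tp + fn)
    f1 = 2 * precision * recall / (precision + recall)
    return accuracy, precision, recall, f1


results = {name: summarize(**counts) for name, counts in classifiers.items()}
for name, (acc, p, r, f1) in results.items():
    print(f"{name}: ACC={acc:.2f} P={p:.2f} R={r:.2f} F1={f1:.2f}")

# A: ACC=0.94 P=0.98 R=0.96 F1=0.97
# B: ACC=0.90 P=0.90 R=1.00 F1=0.95
# C: ACC=1.00 P=1.00 R=1.00 F1=1.00
```

Note that B labels every sample as positive (FN = 0, TN = 0) and still reaches an accuracy of 0.90, simply because 90% of the sample is positive.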
