For basic usage, see: http://www.cnblogs.com/NicholasLee/archive/2012/09/14/2684815.html

1. Put Operations

    package hbase;

    import java.io.IOException;

    import org.apache.hadoop.conf.Configuration;
    import org.apache.hadoop.hbase.HBaseConfiguration;
    import org.apache.hadoop.hbase.HColumnDescriptor;
    import org.apache.hadoop.hbase.HTableDescriptor;
    import org.apache.hadoop.hbase.client.Get;
    import org.apache.hadoop.hbase.client.HBaseAdmin;
    import org.apache.hadoop.hbase.client.HTable;
    import org.apache.hadoop.hbase.client.HTablePool;
    import org.apache.hadoop.hbase.client.Put;
    import org.apache.hadoop.hbase.client.Result;
    import org.apache.hadoop.hbase.util.Bytes;

    public class HBaseClient {
        // Static configuration shared by all methods
        static Configuration conf = null;
        static final HTablePool tablePool;
        static {
            conf = HBaseConfiguration.create();
            conf.set("hbase.zookeeper.quorum", "libin2");
            tablePool = new HTablePool(conf, 10);
        }

        /*
         * Create a table.
         *
         * @tableName table name
         *
         * @family list of column families
         */
        public static void creatTable(String tableName, String[] family) throws Exception {
            HBaseAdmin admin = new HBaseAdmin(conf);
            HTableDescriptor desc = new HTableDescriptor(tableName);
            for (int i = 0; i < family.length; i++) {
                desc.addFamily(new HColumnDescriptor(family[i]));
            }
            if (admin.tableExists(tableName)) {
                System.out.println("table Exists!");
                System.exit(0);
            } else {
                admin.createTable(desc);
                System.out.println("create table Success!");
            }
        }

        public static void putData(String tableName) throws IOException {
            HTable table = (HTable) tablePool.getTable(tableName); // get the table
            System.out.println("Auto flush:" + table.isAutoFlush());
            table.setAutoFlush(false); // buffer writes on the client from here on

            Put put1 = new Put(Bytes.toBytes("rowkey1"));
            put1.add(Bytes.toBytes("colfam1"), Bytes.toBytes("qual1"), Bytes.toBytes("val1"));
            table.put(put1);

            Put put2 = new Put(Bytes.toBytes("rowkey2"));
            put2.add(Bytes.toBytes("colfam1"), Bytes.toBytes("qual1"), Bytes.toBytes("val1"));
            table.put(put2);

            // Read before the buffer has been flushed
            Get get = new Get(Bytes.toBytes("rowkey1"));
            Result res1 = table.get(get);
            System.out.println("Result:" + res1);

            Put put3 = new Put(Bytes.toBytes("rowkey2"));
            put3.add(Bytes.toBytes("colfam1"), Bytes.toBytes("qual2"), Bytes.toBytes("val2"));
            table.put(put3);

            table.flushCommits(); // send the buffered Puts to the server

            // Read again after the flush
            Result res2 = table.get(get);
            System.out.println("Result:" + res2);
        }

        public static void main(String[] args) throws Exception {
            // String tableName = "libinHTable";
            String[] family = {"colfam1"};
            // creatTable(tableName, family);
            putData("libinHTable");
        }
    }

Output:

    Auto flush:true
    Result:keyvalues=NONE
    Result:keyvalues={rowkey1/colfam1:qual1/1354092615995/Put/vlen=4}

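Calling HTable.setAutoFlush(false) turns off automatic flushing in the HTable write client. Data can then be written to HBase in batches instead of one update per put: the client sends a write request to the HBase server only once the puts have filled the client-side write buffer, or when flushCommits() is called explicitly. Auto flush is enabled by default.

res1 is NONE because the Put is still sitting in the client's in-memory write buffer and has not yet been sent to the server.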
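The buffering behaviour can be illustrated without a cluster. The following is a minimal sketch, not the HBase API: BufferedTableSketch and its fields are hypothetical names, the "server" is just a HashMap, and the size-triggered flush of the real write buffer is left out for brevity.

```java
import java.util.ArrayList;
import java.util.HashMap;
import java.util.List;
import java.util.Map;

// Toy model of a client-side write buffer; this is NOT the HBase API.
class BufferedTableSketch {
    // Stands in for data stored on the server.
    private final Map<String, String> server = new HashMap<>();
    // Stands in for the client-side write buffer.
    private final List<String[]> writeBuffer = new ArrayList<>();
    // Mirrors the default described in the text: auto flush is on.
    private boolean autoFlush = true;

    void setAutoFlush(boolean autoFlush) {
        this.autoFlush = autoFlush;
    }

    // Buffer the write; send it immediately only when auto flush is on.
    void put(String rowKey, String value) {
        writeBuffer.add(new String[] {rowKey, value});
        if (autoFlush) {
            flushCommits();
        }
    }

    // Send every buffered write to the "server" and clear the buffer.
    void flushCommits() {
        for (String[] kv : writeBuffer) {
            server.put(kv[0], kv[1]);
        }
        writeBuffer.clear();
    }

    // Reads go straight to the "server" and never consult the buffer.
    String get(String rowKey) {
        return server.get(rowKey);
    }

    public static void main(String[] args) {
        BufferedTableSketch table = new BufferedTableSketch();
        table.setAutoFlush(false);
        table.put("rowkey1", "val1");
        System.out.println("before flush: " + table.get("rowkey1")); // null
        table.flushCommits();
        System.out.println("after flush: " + table.get("rowkey1"));  // val1
    }
}
```

Because reads bypass the buffer in this model, a get issued between put and flushCommits sees nothing, which is the same effect as the NONE result above.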

Batch Put

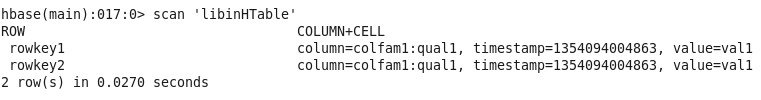

    // Requires java.util.ArrayList and java.util.List in addition to the imports above.
    public static void putListData(String tableName) throws IOException {
        HTable table = (HTable) tablePool.getTable(tableName); // get the table
        List<Put> puts = new ArrayList<Put>();

        Put put1 = new Put(Bytes.toBytes("rowkey1"));
        put1.add(Bytes.toBytes("colfam1"), Bytes.toBytes("qual1"), Bytes.toBytes("val1"));
        puts.add(put1);

        Put put2 = new Put(Bytes.toBytes("rowkey2"));
        put2.add(Bytes.toBytes("colfam1"), Bytes.toBytes("qual1"), Bytes.toBytes("val1"));
        puts.add(put2);

        table.put(puts); // submit both Puts in one call
    }

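Puts that fail on the server side are kept in the local write buffer, where they can be accessed with getWriteBuffer(). When no timestamp is set explicitly, the Puts written in one batch generally receive the same timestamp.

With a batch Put, we cannot control the order in which the server applies the individual Puts.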

Error handling for batch Put:

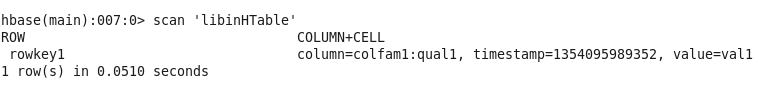

    public static void putListData(String tableName) throws IOException {
        HTable table = (HTable) tablePool.getTable(tableName); // get the table
        List<Put> puts = new ArrayList<Put>();

        Put put1 = new Put(Bytes.toBytes("rowkey1"));
        put1.add(Bytes.toBytes("colfam1"), Bytes.toBytes("qual1"), Bytes.toBytes("val1"));
        puts.add(put1);

        // put2 is deliberately left without columns to trigger the error
        Put put2 = new Put(Bytes.toBytes("rowkey2"));
        // put2.add(Bytes.toBytes("colfam1"), Bytes.toBytes("qual1"),
        // Bytes.toBytes("val1"));
        puts.add(put2);

        Put put3 = new Put(Bytes.toBytes("rowkey3"));
        put3.add(Bytes.toBytes("colfam1"), Bytes.toBytes("qual1"), Bytes.toBytes("val1"));
        puts.add(put3);

        try {
            table.put(puts);
        } catch (Exception e) {
            System.out.println("Error:" + e);
            table.flushCommits(); // send whatever was buffered before the failure
        }
    }

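table.put(puts) first checks each Put on the client and adds it to the write buffer. If one of them fails the check, the call throws before the buffer is sent, and the explicit table.flushCommits() in the catch block then submits whatever was already buffered. Here put2 is empty, so the data before put2 (put1) still reaches the table, while put3 is never written: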

Error:java.lang.IllegalArgumentException: No columns to insert
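To make the order of events concrete, here is a minimal sketch that simulates the validate-then-buffer loop. It is not the HBase API: BatchPutSketch is a hypothetical class, a null value stands in for a Put with no columns, and the behaviour is modelled on what the output above suggests rather than on the library source.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;

// Toy model of client-side validation during a batch put; NOT the HBase API.
class BatchPutSketch {
    final List<String> writeBuffer = new ArrayList<>(); // buffered row keys
    final List<String> server = new ArrayList<>();      // row keys that reached the "server"

    // Each entry is {rowKey, value}; a null value models a Put with no columns.
    void put(List<String[]> puts) {
        for (String[] p : puts) {
            if (p[1] == null) {
                // Validation fails before this entry or any later one is buffered.
                throw new IllegalArgumentException("No columns to insert");
            }
            writeBuffer.add(p[0]);
        }
        flushCommits();
    }

    // Send every buffered row key to the "server" and clear the buffer.
    void flushCommits() {
        server.addAll(writeBuffer);
        writeBuffer.clear();
    }

    public static void main(String[] args) {
        BatchPutSketch table = new BatchPutSketch();
        List<String[]> puts = Arrays.asList(
                new String[] {"rowkey1", "val1"},
                new String[] {"rowkey2", null},   // empty Put
                new String[] {"rowkey3", "val1"});
        try {
            table.put(puts);
        } catch (IllegalArgumentException e) {
            System.out.println("Error:" + e);
            table.flushCommits(); // send whatever was buffered before the failure
        }
        System.out.println(table.server); // [rowkey1]
    }
}
```

In this model only rowkey1 is written: rowkey2 is rejected and rowkey3 is never reached, so a caller that wants rowkey3 stored has to resubmit it.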

checkAndPut:

    public static void putAndCheck(String tableName) throws IOException {
        HTable table = (HTable) tablePool.getTable(tableName); // get the table

        Put put1 = new Put(Bytes.toBytes("rowkey1"));
        put1.add(Bytes.toBytes("colfam1"), Bytes.toBytes("qual1"), Bytes.toBytes("val1"));

        // A null expected value means "apply only if the cell does not exist yet"
        boolean res1 = table.checkAndPut(Bytes.toBytes("rowkey1"),
                Bytes.toBytes("colfam1"), Bytes.toBytes("qual1"), null, put1);
        System.out.println("Put applied:" + res1);

        // Same check again, now that the cell exists
        boolean res2 = table.checkAndPut(Bytes.toBytes("rowkey1"),
                Bytes.toBytes("colfam1"), Bytes.toBytes("qual1"), null, put1);
        System.out.println("Put applied:" + res2);

        // Check on rowkey2, but put1 targets rowkey1
        boolean res3 = table.checkAndPut(Bytes.toBytes("rowkey2"),
                Bytes.toBytes("colfam1"), Bytes.toBytes("qual1"), null, put1);
        System.out.println("Put applied:" + res3);
    }

Output:

    Put applied:true
    Put applied:false
    Exception in thread "main" org.apache.hadoop.hbase.DoNotRetryIOException: org.apache.hadoop.hbase.DoNotRetryIOException: Action's getRow must match the passed row

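The first checkAndPut succeeds: the expected value is null, which means the cell must not exist yet, and at that point it does not. The second returns false because the first call has already written the cell. The third throws an exception because the check and the Put must target the same row, that is, the same rowkey, whereas here the check is on rowkey2 and put1 targets rowkey1.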
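The three outcomes follow from compare-and-set semantics, which can be sketched with a plain ConcurrentHashMap. This is an illustration only, not the HBase API: CheckAndPutSketch is a hypothetical class, a row holds a single value, and the row mismatch is reported with IllegalArgumentException rather than the DoNotRetryIOException HBase throws.

```java
import java.util.concurrent.ConcurrentHashMap;
import java.util.concurrent.ConcurrentMap;

// Toy model of check-and-put semantics; NOT the HBase API.
class CheckAndPutSketch {
    private final ConcurrentMap<String, String> cells = new ConcurrentHashMap<>();

    // Writes newValue to putRow only if checkRow currently holds `expected`.
    // expected == null means "the cell must not exist yet".
    boolean checkAndPut(String checkRow, String expected, String putRow, String newValue) {
        if (!checkRow.equals(putRow)) {
            throw new IllegalArgumentException("Action's getRow must match the passed row");
        }
        if (expected == null) {
            // Atomic "insert if absent": returns null only when nothing was there.
            return cells.putIfAbsent(putRow, newValue) == null;
        }
        // Atomic "replace if current value equals expected".
        return cells.replace(putRow, expected, newValue);
    }

    public static void main(String[] args) {
        CheckAndPutSketch table = new CheckAndPutSketch();
        System.out.println(table.checkAndPut("rowkey1", null, "rowkey1", "val1"));   // true
        System.out.println(table.checkAndPut("rowkey1", null, "rowkey1", "val1"));   // false
        System.out.println(table.checkAndPut("rowkey1", "val1", "rowkey1", "val2")); // true
    }
}
```

The check and the write happen as one atomic step in the map, which is the property that makes the pattern safe when several clients update the same row.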

2. Get Operations

    // Requires org.apache.hadoop.hbase.KeyValue in addition to the imports above.
    public static void getData(String tableName, String rowKey) throws IOException {
        HTable table = (HTable) tablePool.getTable(tableName); // get the table

        Get get = new Get(Bytes.toBytes(rowKey));
        get.addColumn(Bytes.toBytes("colfam1"), Bytes.toBytes("qual1"));
        Result result = table.get(get);

        // Value of one specific column
        byte[] val = result.getValue(Bytes.toBytes("colfam1"), Bytes.toBytes("qual1"));
        System.out.println("value:" + Bytes.toString(val));

        System.out.println(Bytes.toString(result.getRow()));
        System.out.println(Bytes.toString(result.value()));
        System.out.println(result.size());

        // Walk every KeyValue in the Result
        for (KeyValue kv : result.list()) {
            System.out.println("family:" + Bytes.toString(kv.getFamily()));
            System.out.println("qualifier:" + Bytes.toString(kv.getQualifier()));
            System.out.println("value:" + Bytes.toString(kv.getValue()));
            System.out.println("Timestamp:" + kv.getTimestamp());
        }

        System.out.println(result.getColumn(Bytes.toBytes("colfam1"), Bytes.toBytes("qual1")));
    }

Output:

    value:val1
    rowkey1
    val1
    1
    family:colfam1
    qualifier:qual1
    value:val1
    Timestamp:1354167940755
    [rowkey1/colfam1:qual1/1354167940755/Put/vlen=4]

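Result.value() returns the value of the first cell in the Result; cells are sorted lexicographically, so this is the first column, not the first row.

size() returns the number of KeyValues; you can iterate over the KeyValues to get the full details of the Result.

getColumn returns the complete information for the column. In a batch Get, if any single get fails (for example, a get on a nonexistent column family), the whole call is aborted and no results are returned.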
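The relationship between value() and size() can be sketched with a sorted map. This is a toy model, not the HBase API: ResultSketch is a hypothetical class, and cells are keyed by a "family:qualifier" string, which only approximates the byte-wise family-then-qualifier ordering HBase uses.

```java
import java.util.Map;
import java.util.TreeMap;

// Toy model of how cells in a result are ordered; NOT the HBase API.
class ResultSketch {
    // Keys are "family:qualifier"; TreeMap keeps them in lexicographic order.
    private final TreeMap<String, String> cells = new TreeMap<>();

    void add(String family, String qualifier, String value) {
        cells.put(family + ":" + qualifier, value);
    }

    // Value of the first cell in sorted order, or null when the result is empty.
    String value() {
        Map.Entry<String, String> first = cells.firstEntry();
        return first == null ? null : first.getValue();
    }

    // Number of cells held by this result.
    int size() {
        return cells.size();
    }

    public static void main(String[] args) {
        ResultSketch result = new ResultSketch();
        result.add("colfam1", "qual2", "val2"); // inserted first, sorts second
        result.add("colfam1", "qual1", "val1");
        System.out.println(result.value()); // val1
        System.out.println(result.size());  // 2
    }
}
```

Insertion order does not matter here: value() always reflects the sort order of the column names.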
