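Please credit the author and source when reposting: http://blog.csdn.net/c406495762

Python version: Python 2.7

Platform: Ubuntu 14.04

I. Introduction

With the material from the previous note in hand, you can try writing a Python program to generate prototxt files. You could also create the files and write them by hand, but generating the configuration from Python is clearly more concise. As mentioned before, the cifar10 example uses the cifar10_quick_solver.prototxt configuration file to produce its model. The contents of cifar10_quick_solver.prototxt are as follows: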

# reduce the learning rate after 8 epochs (4000 iters) by a factor of 10

# The train/test net protocol buffer definition
net: "examples/cifar10/cifar10_quick_train_test.prototxt"
# test_iter specifies how many forward passes the test should carry out.
# In the case of MNIST, we have test batch size 100 and 100 test iterations,
# covering the full 10,000 testing images.
test_iter: 100
# Carry out testing every 500 training iterations.
test_interval: 500
# The base learning rate, momentum and the weight decay of the network.
base_lr: 0.001
momentum: 0.9
weight_decay: 0.004
# The learning rate policy
lr_policy: "fixed"
# Display every 100 iterations
display: 100
# The maximum number of iterations
max_iter: 4000
# snapshot intermediate results
snapshot: 4000
snapshot_format: HDF5
snapshot_prefix: "examples/cifar10/cifar10_quick"
# solver mode: CPU or GPU

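solver_mode: GPU

As the file above shows, the net parameter near the top specifies the prototxt file used for training. This network definition can also be split into two files, train.prototxt and test.prototxt. For example, the net line can be rewritten as:

train_net: "examples/cifar10/cifar10_quick_train.prototxt"

test_net: "examples/cifar10/cifar10_quick_test.prototxt"

II. A First Try of the Pycaffe API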

How to generate the solver.prototxt file is covered in a later note. For now, let's learn how to use Python to generate simple train.prototxt and test.prototxt files.

1. Data Layer:

# -*- coding: UTF-8 -*-
import caffe  # import the caffe package

caffe_root = "/home/Jack-Cui/caffe-master/my-caffe-project/"  # my-caffe-project directory
train_lmdb = caffe_root + "img_train.lmdb"  # location of the training LMDB
mean_file = caffe_root + "mean.binaryproto"  # location of the mean file

# network specification
net = caffe.NetSpec()

# layer 1: Data layer
net.data, net.label = caffe.layers.Data(source=train_lmdb, backend=caffe.params.Data.LMDB, batch_size=64, ntop=2,
                                        transform_param=dict(crop_size=40, mean_file=mean_file, mirror=True))

print(str(net.to_proto()))

Output:

layer {
  name: "data"
  type: "Data"
  top: "data"
  top: "label"
  transform_param {
    mirror: true
    crop_size: 40
    mean_file: "/home/Jack-Cui/caffe-master/my-caffe-project/mean.binaryproto"
  }
  data_param {
    source: "/home/Jack-Cui/caffe-master/my-caffe-project/img_train.lmdb"
    batch_size: 64
    backend: LMDB
  }
}
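To make the mapping from Python keyword arguments to the printed text more concrete, here is a small stand-alone sketch that renders a nested dict in a similar text layout. It is not how Caffe works internally (NetSpec builds protobuf messages and serializes them). `render_fields` and `render` are hypothetical helpers written only for illustration; they sort keys alphabetically, so the field order differs from the output above, and they quote every string, so enum values such as `backend: LMDB` are out of scope.

```python
def render_fields(fields, indent=0):
    """Render a nested dict as prototxt-style lines (illustrative helper only)."""
    pad = "  " * indent
    lines = []
    # Sort keys so the result is deterministic on every Python version.
    for key, value in sorted(fields.items()):
        if isinstance(value, dict):
            # A nested dict becomes a nested message block: key { ... }
            lines.append("%s%s {" % (pad, key))
            lines.extend(render_fields(value, indent + 1))
            lines.append("%s}" % pad)
        elif isinstance(value, bool):
            # Check bool before numbers, because bool is a subclass of int.
            lines.append("%s%s: %s" % (pad, key, "true" if value else "false"))
        elif isinstance(value, (int, float)):
            lines.append("%s%s: %s" % (pad, key, value))
        else:
            # Everything else is treated as a quoted string.
            lines.append('%s%s: "%s"' % (pad, key, value))
    return lines


def render(fields):
    """Join the rendered lines into a single text block."""
    return "\n".join(render_fields(fields))


# Same keyword arguments as in the Data layer call, with a shortened path.
transform_param = dict(crop_size=40, mean_file="mean.binaryproto", mirror=True)
text = render({"transform_param": transform_param})
print(text)
```

The point of the sketch is only that each dict passed as a keyword argument, such as transform_param, shows up as a braced block of the same name in the generated text.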

2. Convolution Layer:

Add a convolution layer:

# -*- coding: UTF-8 -*-
import caffe  # import the caffe package

caffe_root = "/home/Jack-Cui/caffe-master/my-caffe-project/"  # my-caffe-project directory
train_lmdb = caffe_root + "img_train.lmdb"  # location of the training LMDB
mean_file = caffe_root + "mean.binaryproto"  # location of the mean file

# network specification
net = caffe.NetSpec()

# layer 1: Data layer
net.data, net.label = caffe.layers.Data(source=train_lmdb, backend=caffe.params.Data.LMDB, batch_size=64, ntop=2,
                                        transform_param=dict(crop_size=40, mean_file=mean_file, mirror=True))

# layer 2: Convolution layer
net.conv1 = caffe.layers.Convolution(net.data, num_output=20, kernel_size=5, weight_filler={"type": "xavier"},
                                     bias_filler={"type": "constant"})

print(str(net.to_proto()))

Output:

layer {
  name: "data"
  type: "Data"
  top: "data"
  top: "label"
  transform_param {
    mirror: true
    crop_size: 40
    mean_file: "/home/Jack-Cui/caffe-master/my-caffe-project/mean.binaryproto"
  }
  data_param {
    source: "/home/Jack-Cui/caffe-master/my-caffe-project/img_train.lmdb"
    batch_size: 64
    backend: LMDB
  }
}
layer {
  name: "conv1"
  type: "Convolution"
  bottom: "data"
  top: "conv1"
  convolution_param {
    num_output: 20
    kernel_size: 5
    weight_filler {
      type: "xavier"
    }
    bias_filler {
      type: "constant"
    }
  }
}

3. ReLU Layer:

Add a ReLU activation layer:

# -*- coding: UTF-8 -*-
import caffe  # import the caffe package

caffe_root = "/home/Jack-Cui/caffe-master/my-caffe-project/"  # my-caffe-project directory
train_lmdb = caffe_root + "img_train.lmdb"  # location of the training LMDB
mean_file = caffe_root + "mean.binaryproto"  # location of the mean file

# network specification
net = caffe.NetSpec()

# layer 1: Data layer
net.data, net.label = caffe.layers.Data(source=train_lmdb, backend=caffe.params.Data.LMDB, batch_size=64, ntop=2,
                                        transform_param=dict(crop_size=40, mean_file=mean_file, mirror=True))

# layer 2: Convolution (vision) layer
net.conv1 = caffe.layers.Convolution(net.data, num_output=20, kernel_size=5, weight_filler={"type": "xavier"},
                                     bias_filler={"type": "constant"})

# layer 3: ReLU activation layer
net.relu1 = caffe.layers.ReLU(net.conv1, in_place=True)

print(str(net.to_proto()))

Output:

layer {
  name: "data"
  type: "Data"
  top: "data"
  top: "label"
  transform_param {
    mirror: true
    crop_size: 40
    mean_file: "/home/Jack-Cui/caffe-master/my-caffe-project/mean.binaryproto"
  }
  data_param {
    source: "/home/Jack-Cui/caffe-master/my-caffe-project/img_train.lmdb"
    batch_size: 64
    backend: LMDB
  }
}
layer {
  name: "conv1"
  type: "Convolution"
  bottom: "data"
  top: "conv1"
  convolution_param {
    num_output: 20
    kernel_size: 5
    weight_filler {
      type: "xavier"
    }
    bias_filler {
      type: "constant"
    }
  }
}
layer {
  name: "relu1"
  type: "ReLU"
  bottom: "conv1"
  top: "conv1"
}

4. In the same way, continue adding pooling, fully connected, dropout, and softmax layers, and so on.

# -*- coding: UTF-8 -*-
import caffe  # import the caffe package

caffe_root = "/home/Jack-Cui/caffe-master/my-caffe-project/"  # my-caffe-project directory
train_lmdb = caffe_root + "img_train.lmdb"  # location of the training LMDB
mean_file = caffe_root + "mean.binaryproto"  # location of the mean file

# network specification
net = caffe.NetSpec()

# layer 1: Data layer
net.data, net.label = caffe.layers.Data(source=train_lmdb, backend=caffe.params.Data.LMDB, batch_size=64, ntop=2,
                                        transform_param=dict(crop_size=40, mean_file=mean_file, mirror=True))

# layer 2: Convolution (vision) layer
net.conv1 = caffe.layers.Convolution(net.data, num_output=20, kernel_size=5, weight_filler={"type": "xavier"},
                                     bias_filler={"type": "constant"})

# layer 3: ReLU activation layer
net.relu1 = caffe.layers.ReLU(net.conv1, in_place=True)

# layer 4: Pooling layer
net.pool1 = caffe.layers.Pooling(net.relu1, pool=caffe.params.Pooling.MAX, kernel_size=3, stride=2)
net.conv2 = caffe.layers.Convolution(net.pool1, kernel_size=3, stride=1, num_output=32, pad=1, weight_filler=dict(type='xavier'))
net.relu2 = caffe.layers.ReLU(net.conv2, in_place=True)
net.pool2 = caffe.layers.Pooling(net.relu2, pool=caffe.params.Pooling.MAX, kernel_size=3, stride=2)

# fully connected layer
net.fc3 = caffe.layers.InnerProduct(net.pool2, num_output=1024, weight_filler=dict(type='xavier'))
net.relu3 = caffe.layers.ReLU(net.fc3, in_place=True)

# dropout layer
net.drop3 = caffe.layers.Dropout(net.relu3, in_place=True)
net.fc4 = caffe.layers.InnerProduct(net.drop3, num_output=10, weight_filler=dict(type='xavier'))

# softmax loss layer
net.loss = caffe.layers.SoftmaxWithLoss(net.fc4, net.label)

print(str(net.to_proto()))

Output:

layer {
  name: "data"
  type: "Data"
  top: "data"
  top: "label"
  transform_param {
    mirror: true
    crop_size: 40
    mean_file: "/home/Jack-Cui/caffe-master/my-caffe-project/mean.binaryproto"
  }
  data_param {
    source: "/home/Jack-Cui/caffe-master/my-caffe-project/img_train.lmdb"
    batch_size: 64
    backend: LMDB
  }
}
layer {
  name: "conv1"
  type: "Convolution"
  bottom: "data"
  top: "conv1"
  convolution_param {
    num_output: 20
    kernel_size: 5
    weight_filler {
      type: "xavier"
    }
    bias_filler {
      type: "constant"
    }
  }
}
layer {
  name: "relu1"
  type: "ReLU"
  bottom: "conv1"
  top: "conv1"
}
layer {
  name: "pool1"
  type: "Pooling"
  bottom: "conv1"
  top: "pool1"
  pooling_param {
    pool: MAX
    kernel_size: 3
    stride: 2
  }
}
layer {
  name: "conv2"
  type: "Convolution"
  bottom: "pool1"
  top: "conv2"
  convolution_param {
    num_output: 32
    pad: 1
    kernel_size: 3
    stride: 1
    weight_filler {
      type: "xavier"
    }
  }
}
layer {
  name: "relu2"
  type: "ReLU"
  bottom: "conv2"
  top: "conv2"
}
layer {
  name: "pool2"
  type: "Pooling"
  bottom: "conv2"
  top: "pool2"
  pooling_param {
    pool: MAX
    kernel_size: 3
    stride: 2
  }
}
layer {
  name: "fc3"
  type: "InnerProduct"
  bottom: "pool2"
  top: "fc3"
  inner_product_param {
    num_output: 1024
    weight_filler {
      type: "xavier"
    }
  }
}
layer {
  name: "relu3"
  type: "ReLU"
  bottom: "fc3"
  top: "fc3"
}
layer {
  name: "drop3"
  type: "Dropout"
  bottom: "fc3"
  top: "fc3"
}
layer {
  name: "fc4"
  type: "InnerProduct"
  bottom: "fc3"
  top: "fc4"
  inner_product_param {
    num_output: 10
    weight_filler {
      type: "xavier"
    }
  }
}
layer {
  name: "loss"
  type: "SoftmaxWithLoss"
  bottom: "fc4"
  bottom: "label"
  top: "loss"
}
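A useful check on a printed network like this one is to trace the spatial size of the feature maps by hand. The sketch below does that for the layers above, starting from crop_size = 40. It assumes Caffe's default rounding (round down for convolution, round up for pooling) and no pooling padding; the helper names `conv_out` and `pool_out` are my own and are not part of pycaffe.

```python
import math


def conv_out(size, kernel, stride=1, pad=0):
    """Output width/height of a convolution layer (rounds down)."""
    return (size + 2 * pad - kernel) // stride + 1


def pool_out(size, kernel, stride=1):
    """Output width/height of an unpadded pooling layer (rounds up)."""
    return int(math.ceil((size - kernel) / float(stride))) + 1


crop_size = 40  # from transform_param in the Data layer

conv1_size = conv_out(crop_size, kernel=5)                     # conv1
pool1_size = pool_out(conv1_size, kernel=3, stride=2)          # pool1
conv2_size = conv_out(pool1_size, kernel=3, stride=1, pad=1)   # conv2
pool2_size = pool_out(conv2_size, kernel=3, stride=2)          # pool2

# fc3 sees the 32 output channels of conv2 flattened with the spatial grid.
fc3_inputs = 32 * pool2_size * pool2_size

print("conv1=%d pool1=%d conv2=%d pool2=%d fc3_inputs=%d"
      % (conv1_size, pool1_size, conv2_size, pool2_size, fc3_inputs))
```

Under these assumptions the sizes come out as 36, 18, 18 and 9, so fc3 receives 32 x 9 x 9 = 2592 inputs per image. The ReLU and Dropout layers run in place and do not change the shape, which is why they are absent from the trace.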

III. Generating and Saving the train.prototxt and test.prototxt Files Needed for Training

1. Write the following code:

# -*- coding: UTF-8 -*-
import caffe  # import the caffe package


def create_net(lmdb, mean_file, batch_size, include_acc=False):
    # network specification
    net = caffe.NetSpec()

    # layer 1: Data layer
    net.data, net.label = caffe.layers.Data(source=lmdb, backend=caffe.params.Data.LMDB, batch_size=batch_size, ntop=2,
                                            transform_param=dict(crop_size=40, mean_file=mean_file, mirror=True))

    # layer 2: Convolution (vision) layer
    net.conv1 = caffe.layers.Convolution(net.data, num_output=20, kernel_size=5, weight_filler={"type": "xavier"},
                                         bias_filler={"type": "constant"})

    # layer 3: ReLU activation layer
    net.relu1 = caffe.layers.ReLU(net.conv1, in_place=True)

    # layer 4: Pooling layer
    net.pool1 = caffe.layers.Pooling(net.relu1, pool=caffe.params.Pooling.MAX, kernel_size=3, stride=2)
    net.conv2 = caffe.layers.Convolution(net.pool1, kernel_size=3, stride=1, num_output=32, pad=1, weight_filler=dict(type='xavier'))
    net.relu2 = caffe.layers.ReLU(net.conv2, in_place=True)
    net.pool2 = caffe.layers.Pooling(net.relu2, pool=caffe.params.Pooling.MAX, kernel_size=3, stride=2)

    # fully connected layer
    net.fc3 = caffe.layers.InnerProduct(net.pool2, num_output=1024, weight_filler=dict(type='xavier'))
    net.relu3 = caffe.layers.ReLU(net.fc3, in_place=True)

    # dropout layer
    net.drop3 = caffe.layers.Dropout(net.relu3, in_place=True)
    net.fc4 = caffe.layers.InnerProduct(net.drop3, num_output=10, weight_filler=dict(type='xavier'))

    # softmax loss layer
    net.loss = caffe.layers.SoftmaxWithLoss(net.fc4, net.label)

    # The training prototxt does not include an Accuracy layer; it is only needed for testing.
    if include_acc:
        net.acc = caffe.layers.Accuracy(net.fc4, net.label)

    return str(net.to_proto())


def write_net():
    caffe_root = "/home/Jack-Cui/caffe-master/my-caffe-project/"  # my-caffe-project directory
    train_lmdb = caffe_root + "train.lmdb"  # location of train.lmdb
    test_lmdb = caffe_root + "test.lmdb"  # location of test.lmdb
    mean_file = caffe_root + "mean.binaryproto"  # location of the mean file
    train_proto = caffe_root + "train.prototxt"  # where train.prototxt is saved
    test_proto = caffe_root + "test.prototxt"  # where test.prototxt is saved

    # write the training prototxt file
    with open(train_proto, 'w') as f:
        f.write(create_net(train_lmdb, mean_file, batch_size=64))

    # write the test prototxt file, including the Accuracy layer
    with open(test_proto, 'w') as f:
        f.write(create_net(test_lmdb, mean_file, batch_size=32, include_acc=True))


if __name__ == '__main__':
    write_net()

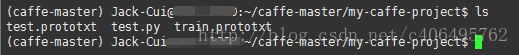

2.运行结果:
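To confirm that the two generated files differ only where expected, the layer names and types can be listed straight from the text without loading Caffe. This is a rough sketch: the regular expression relies on `name` being immediately followed by `type`, as in the outputs printed earlier, and `list_layers` and the sample string are illustrative, not part of pycaffe.

```python
import re

# name: "..." directly followed by type: "..." marks a layer header.
# Filler blocks such as weight_filler have a type but no name, so they are skipped.
LAYER_RE = re.compile(r'name:\s*"([^"]+)"\s*type:\s*"([^"]+)"')


def list_layers(prototxt_text):
    """Return (name, type) pairs in the order they appear in the text."""
    return LAYER_RE.findall(prototxt_text)


# A shortened stand-in for the tail of a generated test.prototxt.
sample = '''
layer {
  name: "fc4"
  type: "InnerProduct"
  bottom: "fc3"
  top: "fc4"
  inner_product_param {
    num_output: 10
    weight_filler {
      type: "xavier"
    }
  }
}
layer {
  name: "acc"
  type: "Accuracy"
  bottom: "fc4"
  bottom: "label"
  top: "acc"
}
'''

layers = list_layers(sample)
has_accuracy = any(layer_type == "Accuracy" for _, layer_type in layers)
print(layers)
print(has_accuracy)
```

For the real files, read train.prototxt and test.prototxt with open(...).read() and compare the two lists; only the test file should contain an Accuracy layer.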

3. Summary

You have now learned how to generate the train.prototxt and test.prototxt files used for training. A later note will explain how to generate the solver.prototxt file.
