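Overview

An earlier post, http://blog.csdn.net/zhujunxxxxx/article/details/38864817, implemented sending data over UDP with packet splitting. This post is an application of that work: it uses UDP to send voice and text messages. The system has no server and no client; the peers talk to each other directly, and the approach works well.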
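To make the packet-splitting idea concrete, here is a minimal sketch of cutting a payload into fixed-size chunks and joining them again. `PacketSplitter`, `Split`, and `Join` are hypothetical names chosen for illustration; this is not the wire format used by the framework from the earlier post. A real UDP protocol would also need a sequence number on every chunk, because datagrams can be lost or arrive out of order.

```csharp
using System;
using System.Collections.Generic;

// Hypothetical helper for illustration only; not part of ZZNetFrame.
public static class PacketSplitter
{
    // Splits data into consecutive chunks of at most chunkSize bytes.
    public static List<byte[]> Split(byte[] data, int chunkSize)
    {
        if (data == null) throw new ArgumentNullException(nameof(data));
        if (chunkSize <= 0) throw new ArgumentOutOfRangeException(nameof(chunkSize));

        var chunks = new List<byte[]>();
        for (int offset = 0; offset < data.Length; offset += chunkSize)
        {
            int size = Math.Min(chunkSize, data.Length - offset);
            var chunk = new byte[size];
            Buffer.BlockCopy(data, offset, chunk, 0, size);
            chunks.Add(chunk);
        }
        return chunks;
    }

    // Concatenates the chunks; assumes they are already in their original order.
    public static byte[] Join(IList<byte[]> chunks)
    {
        int total = 0;
        foreach (var c in chunks) total += c.Length;

        var result = new byte[total];
        int offset = 0;
        foreach (var c in chunks)
        {
            Buffer.BlockCopy(c, 0, result, offset, c.Length);
            offset += c.Length;
        }
        return result;
    }
}
```

Calling `PacketSplitter.Split(data, 1024)` on a 2500-byte array yields three chunks of 1024, 1024, and 452 bytes, and `Join` restores the original array.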

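Capturing audio

Before voice data can be sent, it has to be captured. There are several ways to do this; one is to record with DirectSound from DirectX. To keep things simple, I use the open-source library NAudio for recording. Add a reference to NAudio.dll in the project.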

    //------------------ Recording -----------------------------
    // Requires: using System.IO; using System.Linq;
    //           using NAudio.Wave; using NAudio.CoreAudioApi;
    private IWaveIn waveIn;
    private WaveFileWriter writer;

    // Lists the active capture devices in the combo box.
    private void LoadWasapiDevicesCombo()
    {
        var deviceEnum = new MMDeviceEnumerator();
        var devices = deviceEnum.EnumerateAudioEndPoints(DataFlow.Capture, DeviceState.Active).ToList();
        comboBox1.DataSource = devices;
        comboBox1.DisplayMember = "FriendlyName";
    }

    private void CreateWaveInDevice()
    {
        waveIn = new WaveIn();
        waveIn.WaveFormat = new WaveFormat(8000, 1); // 8 kHz, mono
        waveIn.DataAvailable += OnDataAvailable;
        waveIn.RecordingStopped += OnRecordingStopped;
    }

    void OnDataAvailable(object sender, WaveInEventArgs e)
    {
        if (this.InvokeRequired)
        {
            // Marshal back to the UI thread before touching controls.
            this.BeginInvoke(new EventHandler<WaveInEventArgs>(OnDataAvailable), sender, e);
        }
        else
        {
            // A queued callback can still arrive after the file has been finalized.
            if (writer == null) return;

            writer.Write(e.Buffer, 0, e.BytesRecorded);
            int secondsRecorded = (int)(writer.Length / writer.WaveFormat.AverageBytesPerSecond);
            if (secondsRecorded >= 10) // at most 10 s
            {
                StopRecord();
            }
            else
            {
                l_sound.Text = secondsRecorded + " s";
            }
        }
    }

    void OnRecordingStopped(object sender, StoppedEventArgs e)
    {
        if (InvokeRequired)
        {
            BeginInvoke(new EventHandler<StoppedEventArgs>(OnRecordingStopped), sender, e);
        }
        else
        {
            FinalizeWaveFile();
        }
    }

    void StopRecord()
    {
        AllChangeBtn(btn_luyin, true);
        AllChangeBtn(btn_stop, false);
        AllChangeBtn(btn_sendsound, true);
        AllChangeBtn(btn_play, true);
        if (waveIn != null)
            waveIn.StopRecording();
    }

    private void Cleanup()
    {
        if (waveIn != null)
        {
            waveIn.Dispose();
            waveIn = null;
        }
        FinalizeWaveFile();
    }

    // Closes the WAV file so its header is written and the file can be read.
    private void FinalizeWaveFile()
    {
        if (writer != null)
        {
            writer.Dispose();
            writer = null;
        }
    }

    // Start recording
    private void btn_luyin_Click(object sender, EventArgs e)
    {
        btn_stop.Enabled = true;
        btn_luyin.Enabled = false;
        if (waveIn == null)
        {
            CreateWaveInDevice();
        }
        if (File.Exists(soundfile))
        {
            File.Delete(soundfile);
        }
        writer = new WaveFileWriter(soundfile, waveIn.WaveFormat);
        waveIn.StartRecording();
    }
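A rough size estimate shows how much data a recording at this format produces and how many UDP packets it would take. The sketch below is plain arithmetic with no NAudio dependency. It assumes that `new WaveFormat(8000, 1)` means 16-bit PCM (to my knowledge that is what NAudio's two-argument constructor uses), and the 1024-byte payload size is a hypothetical value, not one taken from the transport framework.

```csharp
using System;

// Hypothetical helper for illustration only.
public static class RecordingSize
{
    // Bytes of raw PCM audio produced per second.
    public static int BytesPerSecond(int sampleRate, int bitsPerSample, int channels)
    {
        return sampleRate * (bitsPerSample / 8) * channels;
    }

    // Packets needed to carry totalBytes at payloadSize bytes each (ceiling division).
    public static int PacketCount(int totalBytes, int payloadSize)
    {
        if (payloadSize <= 0) throw new ArgumentOutOfRangeException(nameof(payloadSize));
        return (totalBytes + payloadSize - 1) / payloadSize;
    }
}
```

Under those assumptions, `BytesPerSecond(8000, 16, 1)` is 16000, so the 10-second cap corresponds to about 160,000 bytes of audio data plus the WAV header, and `PacketCount(160000, 1024)` is 157.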

Sending audio

Once the audio has been captured, it needs to be sent.

After recording, clicking the send button runs the following code:

    MsgTranslator tran = null;

    public Form1()
    {
        InitializeComponent();
        LoadWasapiDevicesCombo(); // show the audio devices
        Config cfg = SeiClient.GetDefaultConfig();
        cfg.Port = 7777;
        UDPThread udp = new UDPThread(cfg);
        tran = new MsgTranslator(udp, cfg);
        tran.MessageReceived += tran_MessageReceived;
        tran.Debuged += new EventHandler<DebugEventArgs>(tran_Debuged);
    }

    private void btn_sendsound_Click(object sender, EventArgs e)
    {
        if (t_ip.Text == "")
        {
            MessageBox.Show("Please enter an IP address");
            return;
        }
        if (t_port.Text == "")
        {
            MessageBox.Show("Please enter a port number");
            return;
        }

        string ip = t_ip.Text;
        int port = int.Parse(t_port.Text);
        string nick = t_nick.Text;
        string msg = "Voice message";

        // FileContent returns null when the recording cannot be read.
        byte[] audio = FileContent(soundfile);
        if (audio == null)
        {
            MessageBox.Show("Could not read the recorded audio file");
            return;
        }

        IPEndPoint remote = new IPEndPoint(IPAddress.Parse(ip), port);
        Msg m = new Msg(remote, "zz", nick, Commands.SendMsg, msg, "Come From A");
        m.IsRequireReceive = true;
        m.ExtendMessageBytes = audio; // the recorded file travels as the binary payload
        m.PackageNo = Msg.GetRandomNumber();
        m.Type = Consts.MESSAGE_BINARY;
        tran.Send(m);
    }

    // Reads the whole file into memory; returns null if it cannot be read.
    private byte[] FileContent(string fileName)
    {
        try
        {
            return File.ReadAllBytes(fileName);
        }
        catch (Exception)
        {
            return null;
        }
    }

That is all it takes to send the recorded audio file.
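The click handler parses the address with `IPAddress.Parse` and `int.Parse`, both of which throw on malformed input. One possible way to validate the two text boxes first is sketched below; `EndPointParser` is a hypothetical helper, not part of the original project, and it uses only the standard `TryParse` methods.

```csharp
using System.Net;

// Hypothetical helper for illustration only.
public static class EndPointParser
{
    // Returns true and sets remote when both the IP text and the port text are valid.
    public static bool TryParse(string ipText, string portText, out IPEndPoint remote)
    {
        remote = null;
        IPAddress address;
        int port;

        if (!IPAddress.TryParse((ipText ?? "").Trim(), out address)) return false;
        if (!int.TryParse((portText ?? "").Trim(), out port)) return false;
        if (port < IPEndPoint.MinPort || port > IPEndPoint.MaxPort) return false;

        remote = new IPEndPoint(address, port);
        return true;
    }
}
```

In the handler, this could replace the two `Parse` calls: if `EndPointParser.TryParse(t_ip.Text, t_port.Text, out remote)` returns false, show a message and return instead of letting an exception escape.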

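Receiving and playing audio

Receiving audio is no different from receiving a text message, except that the audio is sent as binary data. When a voice message arrives, we write it to a file, and once it has been fully received we play that file.

The code below saves the received data to a file. It is the handler for the event that my NetFrame raises when a message arrives; NetFrame is described in the earlier post linked at the top of this article.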

    // Raised by the transport whenever a complete message has arrived.
    void tran_MessageReceived(object sender, MessageEventArgs e)
    {
        Msg msg = e.msg;
        if (msg.Type == Consts.MESSAGE_BINARY)
        {
            string m = msg.Type + "->" + msg.UserName + " sent a binary message!";
            AddServerMessage(m);

            // WriteAllBytes overwrites any previous file and always closes the handle.
            File.WriteAllBytes(recive_soundfile, msg.ExtendMessageBytes);

            //play_sound(recive_soundfile);
            ChangeBtn(true);
        }
        else
        {
            string m = msg.Type + "->" + msg.UserName + " says: " + msg.NormalMsg;
            AddServerMessage(m);
        }
    }

Once a voice message has been received, we play it back using the same library as before:

    //-------- Playback ----------
    private IWavePlayer wavePlayer;
    private WaveStream reader;

    public void play_sound(string filename)
    {
        // Release the previous player and reader before starting a new playback.
        if (wavePlayer != null)
        {
            wavePlayer.Dispose();
            wavePlayer = null;
        }
        if (reader != null)
        {
            reader.Dispose();
            reader = null;
        }

        reader = new MediaFoundationReader(filename, new MediaFoundationReader.MediaFoundationReaderSettings() { SingleReaderObject = true });
        wavePlayer = new WaveOut();
        wavePlayer.PlaybackStopped += WavePlayerOnPlaybackStopped;
        wavePlayer.Init(reader);
        wavePlayer.Play();
    }

    private void WavePlayerOnPlaybackStopped(object sender, StoppedEventArgs stoppedEventArgs)
    {
        if (stoppedEventArgs.Exception != null)
        {
            MessageBox.Show(stoppedEventArgs.Exception.Message);
        }
        if (wavePlayer != null)
        {
            wavePlayer.Stop();
        }
        btn_luyin.Enabled = true;
    }

    // Plays back the local recording.
    private void btn_play_Click(object sender, EventArgs e)
    {
        btn_luyin.Enabled = false;
        play_sound(soundfile);
    }
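Since the received bytes come straight off the network, it may be worth checking that they look like a WAV file before saving and playing them. The sketch below tests only the RIFF and WAVE markers at the start of the data. `WavCheck` is a hypothetical helper, not part of the original project, and the check is not a full validation of the file.

```csharp
using System.Text;

// Hypothetical helper for illustration only.
public static class WavCheck
{
    // True when data starts with a RIFF header whose form type is WAVE.
    public static bool LooksLikeWav(byte[] data)
    {
        // Bytes 0-3 are "RIFF", bytes 4-7 the chunk size, bytes 8-11 "WAVE".
        if (data == null || data.Length < 12) return false;
        return Encoding.ASCII.GetString(data, 0, 4) == "RIFF"
            && Encoding.ASCII.GetString(data, 8, 4) == "WAVE";
    }
}
```

In the receive handler, a call such as `WavCheck.LooksLikeWav(msg.ExtendMessageBytes)` could decide whether the payload is written to disk at all.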

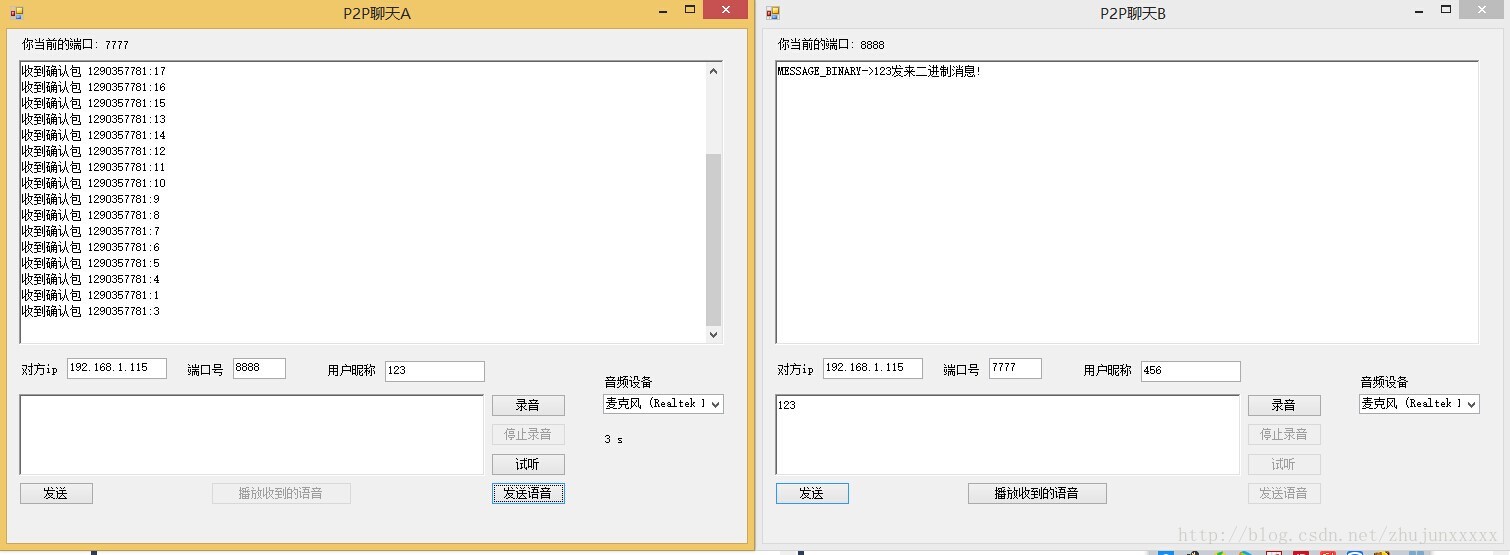

The screenshot above shows the interface sending and receiving a voice message.

Summary

The main techniques used here are UDP for transport and NAudio for recording and playback.
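For readers who have not used UDP from .NET, the direct peer-to-peer exchange can be sketched with the standard `UdpClient` class. This is only an illustration on the loopback interface and not the transport code from the project; `LoopbackDemo` and `SendAndReceive` are hypothetical names.

```csharp
using System.Net;
using System.Net.Sockets;
using System.Text;

// Hypothetical demo for illustration only.
public static class LoopbackDemo
{
    // Sends text from one local UDP socket to another and returns what arrived.
    public static string SendAndReceive(string text)
    {
        // Port 0 lets the operating system pick a free port for each peer.
        using (var peerA = new UdpClient(new IPEndPoint(IPAddress.Loopback, 0)))
        using (var peerB = new UdpClient(new IPEndPoint(IPAddress.Loopback, 0)))
        {
            peerB.Client.ReceiveTimeout = 2000; // milliseconds; avoids blocking forever
            var target = (IPEndPoint)peerB.Client.LocalEndPoint;

            byte[] payload = Encoding.UTF8.GetBytes(text);
            peerA.Send(payload, payload.Length, target);

            var from = new IPEndPoint(IPAddress.Any, 0);
            byte[] received = peerB.Receive(ref from);
            return Encoding.UTF8.GetString(received);
        }
    }
}
```

Neither socket acts as a server here: each one is bound to its own port and simply sends to the other's endpoint, which is the same arrangement the two chat programs use.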

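The UDP transport classes used here are on GitHub (the link is also in the profile section on the left side of my blog). Project: https://github.com/zhujunxxxxx/ZZNetFrame

I hope this article gives you some ideas.

Update: the program can be downloaded from http://download.csdn.net/detail/zhujunxxxxx/8061125. I hope you find the code useful.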
