http://www.askmaclean.com/archives/%E5%88%A9%E7%94%A8rowid%E5%88%86%E5%9D%97%E5%AE%9E%E7%8E%B0%E9%9D%9E%E5%88%86%E5%8C%BA%E8%A1%A8%E7%9A%84%E5%B9%B6%E8%A1%8Cupdate%E4%B8%8Edelete.html

利用rowid分块实现非分区表的并行update与delete

大表中海量历史数据的更新与删除一直是令DBA非常头痛的事情,在表已经分区的前提下我们还可以利用并行或者truncate parition等手段来为UPDATE或者DELETE提速, 但是如果对象是普通的非分区对表(non-partitioned heap table)的话,似乎就没有太好的加速方法了, nologging或parallel 对非分区表都没有效果。

之前我也有介绍过一个利用rowid将非分区表分割成指定数量个区间块的方法,见;利用该脚本可以获取到这些分割后的区间块的起始rowid和结尾rowid,之后利用between start_rowid and end_rowid的条件构造多条DML语句, 因为这些DML语句所更新的数据都是在互不相关的区间内的,所以可以在多个终端内并行地运行这些DML语句,而不会造成锁的争用或者Oracle并行执行协调(Parallel Execution coordinator ) 所带来的一些开销。

为了加深理解,我们来实践一下中提到的方法,如下:

以下脚本可以用于将表按照rowid范围分区,获得指定数目的rowid Extent区间(Group sets of rows in the table into smaller chunks), 以便于非分区表利用rowid来实现并行删除或更新:

脚本名:rowid_chunk.sql

REM rowid_ranges should be at least 21

REM utilize this script help delete large table

REM if update large table Why not online redefinition or CTAS

-- This script spits desired number of rowid ranges to be used for any parallel operations.

-- Best to use it for copying a huge table with out of row lob columns in it or CTAS/copy the data over db links.

-- This can also be used to simulate parallel insert/update/delete operations.

-- Maximum number of rowid ranges you can get here is 255.

-- Doesn't work for partitioned tables, but with minor changes it can be adopted easily.

-- Doesn't display any output if the total table blocks are less than rowid ranges times 128.

-- It can split a table into more ranges than the number of extents

set verify off

undefine rowid_ranges

undefine segment_name

undefine owner

set head off

set pages 0

set trimspool on

select 'where rowid between ''' ||sys.dbms_rowid.rowid_create(1, d.oid, c.fid1, c.bid1, 0) ||''' and ''' ||sys.dbms_rowid.rowid_create(1, d.oid, c.fid2, c.bid2, 9999) || '''' ||';'

from (select distinct b.rn,

first_value(a.fid) over(partition by b.rn order by a.fid, a.bid rows between unbounded preceding and unbounded following) fid1,

last_value(a.fid) over(partition by b.rn order by a.fid, a.bid rows between unbounded preceding and unbounded following) fid2,

first_value(decode(sign(range2 - range1),

1,

a.bid +

((b.rn - a.range1) * a.chunks1),

a.bid)) over(partition by b.rn order by a.fid, a.bid rows between unbounded preceding and unbounded following) bid1,

last_value(decode(sign(range2 - range1),

1,

a.bid +

((b.rn - a.range1 + 1) * a.chunks1) - 1,

(a.bid + a.blocks - 1))) over(partition by b.rn order by a.fid, a.bid rows between unbounded preceding and unbounded following) bid2

from (select fid,

bid,

blocks,

chunks1,

trunc((sum2 - blocks + 1 - 0.1) / chunks1) range1,

trunc((sum2 - 0.1) / chunks1) range2

from (select /*+ rule */

relative_fno fid,

block_id bid,

blocks,

sum(blocks) over() sum1,

trunc((sum(blocks) over()) / &&rowid_ranges) chunks1,

sum(blocks) over(order by relative_fno, block_id) sum2

from dba_extents

where segment_name = upper('&&segment_name')

and owner = upper('&&owner'))

where sum1 > &&rowid_ranges) a,

(select rownum - 1 rn

from dual

connect by level <= &&rowid_ranges) b

where b.rn between a.range1 and a.range2) c,

(select max(data_object_id) oid

from dba_objects

where object_name = upper('&&segment_name')

and owner = upper('&&owner')

and data_object_id is not null) d

/

实验如下:

SQL> select * from v$version;

BANNER

--------------------------------------------------------------------------------

Oracle Database 11g Enterprise Edition Release 11.2.0.4.0 - 64bit Production

PL/SQL Release 11.2.0.4.0 - Production

CORE 11.2.0.4.0 Production

TNS for Linux: Version 11.2.0.4.0 - Production

NLSRTL Version 11.2.0.4.0 - Production

SQL>

SQL> create table tt as select * from dba_objects;

Table created.

SQL> insert into tt select * from dba_objects;

86401 rows created.

SQL>

SQL> insert into tt select * from dba_objects;

86401 rows created.

SQL> commit;

Commit complete.

SQL> select count(*) from tt;

COUNT(*)

----------

259203

SQL> insert into tt select * from dba_objects;

86401 rows created.

SQL> insert into tt select * from dba_objects;

86401 rows created.

SQL> insert into tt select * from dba_objects;

86401 rows created.

SQL> insert into tt select * from dba_objects;

86401 rows created.

SQL> commit;

Commit complete.

SQL> select count(*) from tt;

COUNT(*)

----------

604807

tt 是一张非分区表, 存有60w条记录

SQL> select * from (select object_id,count(*) from tt group by object_id) where rownum <100;

OBJECT_ID COUNT(*)

---------- ----------

2 7

3 7

4 7

5 7

6 7

7 7

8 7

9 7

10 7

11 7

13 7

14 7

15 7

16 7

17 7

96 7

97 7

98 7

99 7

100 7

99 rows selected.

SQL>

SQL> select count(*) from tt where object_id <19000;

COUNT(*)

----------

130613

假设我们要删除tt表上 object_id<19000的所有记录,由于tt是非分区表,所以这里无法用Oracle的并行执行Parallel Execution来加速操作.因此我们利用上述脚本来构造多条DML语句:

SQL> @rowid_chunk.sql

Enter value for rowid_ranges: 5

Enter value for segment_name: tt

Enter value for owner: sys

where rowid between 'AAAVcAAABAAAXEYAAA' and 'AAAVcAAABAAAjoyCcP';

where rowid between 'AAAVcAAABAAAjozAAA' and 'AAAVcAAABAAAkEyCcP';

where rowid between 'AAAVcAAABAAAkEzAAA' and 'AAAVcAAABAAAkiyCcP';

where rowid between 'AAAVcAAABAAAkizAAA' and 'AAAVcAAABAAAk+yCcP';

where rowid between 'AAAVcAAABAAAk+zAAA' and 'AAAVcAAABAAAlMyCcP';

以上我们利用脚本构造出来5个由rowid标示的分区块(chunks),相当于我们人为地将一张非分区表划分成5个区域,每个区域都互不重叠,利用rowid做分界线。尝试利用上面获取到的条件condition来构造DML:UPDATE OR DELETE where rowid between 'start_rowid' and 'end_rowid' AND 其他条件

例如要删除非分区表上所有object_id<190000;的记录,如不优化则是一条语句:

DELETE FROM tt where object_id<190000;

COMMIT;

实际在很大的表上这样删除数据是不理想也不可行的,几点理由:

1. 单条SQL语句串行执行,速度缓慢

2. 运行时间过长可能引发ORA-1555等著名错误

3. 如果失败rollback回滚可能是一场灾难

若利用我这里介绍的方法, 则可以构造出多条DML语句并行删除,每一条均只删除一小部分:

DELETE FROM tt where rowid between 'AAAVcAAABAAAXEYAAA' and 'AAAVcAAABAAAjoyCcP' and object_id<19000;

COMMIT;

DELETE FROM tt where rowid between 'AAAVcAAABAAAjozAAA' and 'AAAVcAAABAAAkEyCcP' and object_id<19000;

COMMIT;

DELETE FROM tt where rowid between 'AAAVcAAABAAAkEzAAA' and 'AAAVcAAABAAAkiyCcP' and object_id<19000;

COMMIT;

DELETE FROM tt where rowid between 'AAAVcAAABAAAkizAAA' and 'AAAVcAAABAAAk+yCcP' and object_id<19000;

COMMIT;

DELETE FROM tt where rowid between 'AAAVcAAABAAAk+zAAA' and 'AAAVcAAABAAAlMyCcP' and object_id<19000;

COMMIT;

视乎你想要的并行度, 将以上构成DML语句再分割几块,打开多个终端同时执行。

这样做的几个优点:

1. 用户手动控制的并行执行,省去了Oracle Parallel并行控制的开销,使用得当的话比加parallel hint或者表上加并行度效率更高。

2. 将数据分割成小块(chunks)来处理,避免了ORA-1555错误

3. 用户可以根据主机负载和IO 动态地加减并行度

删除操作如下:

SQL> select count(*) from tt where object_id<19000;

130613

SQL> DELETE FROM tt where rowid between 'AAAVcAAABAAAXEYAAA' and 'AAAVcAAABAAAjoyCcP' and object_id<19000;

COMMIT;

55977 rows deleted.

SQL>

Commit complete.

SQL> select count(*) from tt where object_id<19000;

74636

SQL> DELETE FROM tt where rowid between 'AAAVcAAABAAAjozAAA' and 'AAAVcAAABAAAkEyCcP' and object_id<19000;

COMMIT;

37318 rows deleted.

SQL>

Commit complete.

SQL> select count(*) from tt where object_id<19000;

37318

SQL> DELETE FROM tt where rowid between 'AAAVcAAABAAAkEzAAA' and 'AAAVcAAABAAAkiyCcP' and object_id<19000;

COMMIT;

18659 rows deleted.

SQL> select count(*) from tt where object_id<19000;

COMMIT;select count(*) from tt where object_id<19000

*

ERROR at line 1:

ORA-00911: invalid character

SQL> select count(*) from tt where object_id<19000;

18659

SQL> DELETE FROM tt where rowid between 'AAAVcAAABAAAkizAAA' and 'AAAVcAAABAAAk+yCcP' and object_id<19000;

COMMIT;

18659 rows deleted.

SQL>

Commit complete.

SQL> select count(*) from tt where object_id<19000;

0

SQL> DELETE FROM tt where rowid between 'AAAVcAAABAAAk+zAAA' and 'AAAVcAAABAAAlMyCcP' and object_id<19000;

0 rows deleted.

SQL> COMMIT;

Commit complete.

SQL>

SQL> select count(*) from tt where object_id<19000;

0

但是以上方法仍存在几点不足:

1. 《Script:partition table into rowid extent chunks》脚本目前不支持分区表

2. 因为《Script:partition table into rowid extent chunks》的脚本是根据表段的大小均匀地分割成指定数目的区域,试想当一些要更新或者删除的历史数据集中分布在segment的某些位置时(例如所要删除的数据均存放在一张表的前200个Extents中),因为脚本是根据大小均匀分割区域的,所以某些区域是根本没有我们所要处理的数据的,由这些区域构造出来的DML语句都是无意义的。

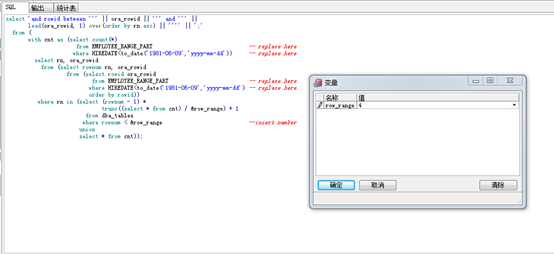

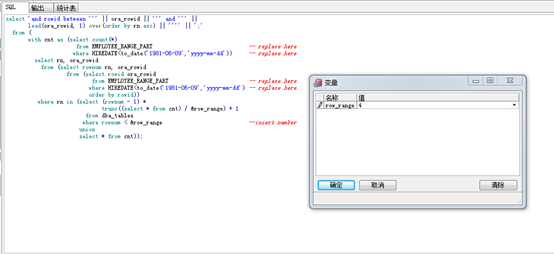

基于以上这些考虑,我重写了获取rowid分块的SQL脚本,如下:

REM put it in GUI TOOLS! otherwise caused ORA-00933

REM control commit yourself, avoid ORA-1555

select 'and rowid between ''' || ora_rowid || ''' and ''' ||

lead(ora_rowid, 1) over(order by rn asc) || '''' || ';'

from (

with cnt as (select count(*) from order_history) -- 注意替换这里!!

select rn, ora_rowid

from (select rownum rn, ora_rowid

from (select rowid ora_rowid

from order_history -- 注意替换这里!!

order by rowid))

where rn in (select (rownum - 1) *

trunc((select * from cnt) / &row_range) + 1

from dba_tables

where rownum < &row_range --输入分区的数目

union

select * from cnt))

以上脚本同样可以实现rowid分区的目的,但是因为其rowid是直接取自SELECT语句查询,所以不存在不支持分区表等复杂对象的情况。 也因为rowid是来源于SELECT,所以我们可以指定针对那些存在符合条件数据的范围分区。

实验分区表切片:

SQL> CREATE TABLE "EMPLOYEE_RANGE_PART"

( "EMPNO" NUMBER(4,0),

"ENAME" VARCHAR2(10),

"JOB" VARCHAR2(9),

"MGR" NUMBER(4,0),

"HIREDATE" DATE,

"SAL" NUMBER(7,2),

"COMM" NUMBER(7,2),

"DEPTNO" NUMBER(2,0)

)

PARTITION BY RANGE (HIREDATE)

(

PARTITION EMPLOYEE_PART01 VALUES LESS THAN (TO_DATE('1981-01-01','yyyy-mm-dd'))

TABLESPACE USERS,

PARTITION EMPLOYEE_PART02 VALUES LESS THAN (TO_DATE('1982-01-01','yyyy-mm-dd'))

TABLESPACE USERS,

PARTITION EMPLOYEE_PART03 VALUES LESS THAN (MAXVALUE)

TABLESPACE USERS

);

Table created.

SQL> insert into EMPLOYEE_RANGE_PART select * from scott.emp;

14 rows created.

SQL> insert into EMPLOYEE_RANGE_PART select * from EMPLOYEE_RANGE_PART;

14 rows created.

重复插入省略。。。。。。。。。。。。。。。。。。。

SQL> select count(*) from EMPLOYEE_RANGE_PART;

COUNT(*)

----------

3670016

SQL> select * from (select HIREDATE,count(*) from EMPLOYEE_RANGE_PART group by hiredate) where rownum<100;

HIREDATE COUNT(*)

------------------- ----------

1980-12-17 00:00:00 262144

1981-11-17 00:00:00 262144

1981-12-03 00:00:00 524288

1981-02-20 00:00:00 262144

1981-09-08 00:00:00 262144

1981-02-22 00:00:00 262144

1981-06-09 00:00:00 262144

1981-04-02 00:00:00 262144

1981-05-01 00:00:00 262144

1981-09-28 00:00:00 262144

1982-01-23 00:00:00 262144

1987-04-19 00:00:00 262144

1987-05-23 00:00:00 262144

13 rows selected.

SQL>

SQL> select count(*) from EMPLOYEE_RANGE_PART where HIREDATE<to_date('1981-06-09','yyyy-mm-dd');</to_date('1981-06-09','yyyy-mm-dd');<>

COUNT(*)

----------

1310720

例如我们希望仅针对存有满足HIREDATE<1981-06-09 条件数据的范围rowid分块,那么将replace here的地方替换成你的条件:

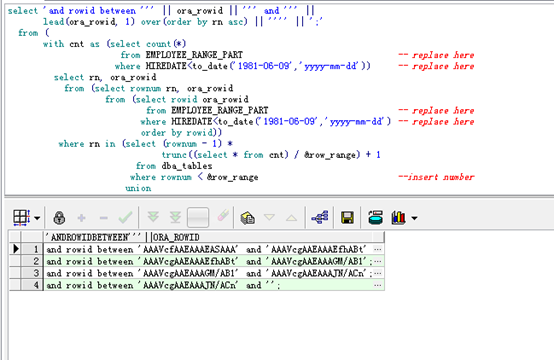

select 'and rowid between ''' || ora_rowid || ''' and ''' ||

lead(ora_rowid, 1) over(order by rn asc) || '''' || ';'

from (

with cnt as (select count(*)

from EMPLOYEE_RANGE_PART -- replace here

where HIREDATE<to_date('1981-06-09','yyyy-mm-dd')) -- replace here</to_date('1981-06-09','yyyy-mm-dd')) <>

select rn, ora_rowid

from (select rownum rn, ora_rowid

from (select rowid ora_rowid

from EMPLOYEE_RANGE_PART -- replace here

where HIREDATE<to_date('1981-06-09','yyyy-mm-dd') -- replace here

order by rowid))

where rn in (select (rownum - 1) *

trunc((select * from cnt) / &row_range) + 1

from dba_tables

where rownum < &row_range --insert number

union

select * from cnt));

--先查询:

SQL> select count(*) from EMPLOYEE_RANGE_PART where HIREDATE<to_date('1981-06-09','yyyy-mm-dd');</to_date('1981-06-09','yyyy-mm-dd');<>

COUNT(*)

----------

1310720

---开始删除操作

SQL> DELETE EMPLOYEE_RANGE_PART WHERE HIREDATE<to_date('1981-06-09','yyyy-mm-dd') and="" rowid="" between="" 'aaavcfaaeaaaeasaaa'="" 'aaavcgaaeaaaefhabt';

327681 rows deleted.

SQL> select count(*) from EMPLOYEE_RANGE_PART where HIREDATE<to_date('1981-06-09','yyyy-mm-dd');</to_date('1981-06-09','yyyy-mm-dd');<>

COUNT(*)

----------

983039

SQL> DELETE EMPLOYEE_RANGE_PART WHERE HIREDATE<to_date('1981-06-09','yyyy-mm-dd') and="" rowid="" between="" 'aaavcgaaeaaaefhabt'="" 'aaavcgaaeaaagm="" ab1';

327680 rows deleted.

SQL> select count(*) from EMPLOYEE_RANGE_PART where HIREDATE<to_date('1981-06-09','yyyy-mm-dd');</to_date('1981-06-09','yyyy-mm-dd');<>

COUNT(*)

----------

655359

SQL> DELETE EMPLOYEE_RANGE_PART WHERE HIREDATE<to_date('1981-06-09','yyyy-mm-dd') and="" rowid="" between="" 'aaavcgaaeaaagm="" ab1'="" 'aaavcgaaeaaajn="" acn';

655359 rows deleted.

SQL> select count(*) from EMPLOYEE_RANGE_PART where HIREDATE<to_date('1981-06-09','yyyy-mm-dd');</to_date('1981-06-09','yyyy-mm-dd');<>

COUNT(*)

----------

0

SQL>

几点注意事项:

1. 请将该脚本放到Pl/SQL Developer或Toad之类的工具中运行,在sqlplus中运行可能出现ORA-00933

2. 不要忘记替换红色标记的replace here的条件

3. 自行控制commit 避免出现ORA-1555错误

该脚本目前存在一个不足,在获取rowid分块时要求大表上有适当的索引,否则可能会因为全表扫描并排序而十分缓慢,若有恰当的索引则会使用INDEX FAST FULL SCAN。 这里的恰当索引是指至少有一个非空列的普通b*tree索引, 最好的情况是有主键索引或者bitmap位图索引。

Oracle在版本11.2中引入了DBMS_PARALLEL_EXECUTE 的新特性来帮助更新超大表,文档<11.2 New Feature : Using DBMS_PARALLEL_EXECUTE to Update Large Tables in Parallel [ID 1066555.1]>对该特性做了介绍。

该DBMS_PARALLEL_EXECUTE新特性的一大亮点就是 可以对表上的行数据进行分组为更小的块(chunks), 且并行更新这些小的块:

dbmspexe.sql – DBMS Parallel EXEcute package

This package contains APIs to chunk a table into smaller units and execute those chunks in parallel.

The DBMS_PARALLEL_EXECUTE package enables you to incrementally update the data in a large table in parallel, in two high-level steps:

Group sets of rows in the table into smaller chunks.

Apply the desired UPDATE statement to the chunks in parallel, committing each time you have finished processing a chunk.

This technique is recommended whenever you are updating a lot of data. Its advantages are:

You lock only one set of rows at a time, for a relatively short time, instead of locking the entire table.

You do not lose work that has been done if something fails before the entire operation finishes.

You reduce rollback space consumption.

You improve performance.

DBMS_PARALLEL_EXECUTE可以以3种方式将数据分块:

Different Ways to Spilt Workload

CREATE_CHUNKS_BY_NUMBER_COL : Chunks the table associated with the given task by the specified column.

CREATE_CHUNKS_BY_ROWID : Chunks the table associated with the given task by ROWID

CREATE_CHUNKS_BY_SQL : Chunks the table associated with the given task by means of a user-provided SELECT statement

其中就包含了CREATE_CHUNKS_BY_ROWID这种按照ROWID分块的方式。

利用rowid分块实现非分区表的并行update与delete

大表中海量历史数据的更新与删除一直是令DBA非常头痛的事情,在表已经分区的前提下我们还可以利用并行或者truncate parition等手段来为UPDATE或者DELETE提速, 但是如果对象是普通的非分区对表(non-partitioned heap table)的话,似乎就没有太好的加速方法了, nologging或parallel 对非分区表都没有效果。

之前我也有介绍过一个利用rowid将非分区表分割成指定数量个区间块的方法,见;利用该脚本可以获取到这些分割后的区间块的起始rowid和结尾rowid,之后利用between start_rowid and end_rowid的条件构造多条DML语句, 因为这些DML语句所更新的数据都是在互不相关的区间内的,所以可以在多个终端内并行地运行这些DML语句,而不会造成锁的争用或者Oracle并行执行协调(Parallel Execution coordinator ) 所带来的一些开销。

为了加深理解,我们来实践一下中提到的方法,如下:

以下脚本可以用于将表按照rowid范围分区,获得指定数目的rowid Extent区间(Group sets of rows in the table into smaller chunks), 以便于非分区表利用rowid来实现并行删除或更新:

脚本名:rowid_chunk.sql

REM rowid_ranges should be at least 21

REM utilize this script help delete large table

REM if update large table Why not online redefinition or CTAS

-- This script spits desired number of rowid ranges to be used for any parallel operations.

-- Best to use it for copying a huge table with out of row lob columns in it or CTAS/copy the data over db links.

-- This can also be used to simulate parallel insert/update/delete operations.

-- Maximum number of rowid ranges you can get here is 255.

-- Doesn't work for partitioned tables, but with minor changes it can be adopted easily.

-- Doesn't display any output if the total table blocks are less than rowid ranges times 128.

-- It can split a table into more ranges than the number of extents

set verify off

undefine rowid_ranges

undefine segment_name

undefine owner

set head off

set pages 0

set trimspool on

select 'where rowid between ''' ||sys.dbms_rowid.rowid_create(1, d.oid, c.fid1, c.bid1, 0) ||''' and ''' ||sys.dbms_rowid.rowid_create(1, d.oid, c.fid2, c.bid2, 9999) || '''' ||';'

from (select distinct b.rn,

first_value(a.fid) over(partition by b.rn order by a.fid, a.bid rows between unbounded preceding and unbounded following) fid1,

last_value(a.fid) over(partition by b.rn order by a.fid, a.bid rows between unbounded preceding and unbounded following) fid2,

first_value(decode(sign(range2 - range1),

1,

a.bid +

((b.rn - a.range1) * a.chunks1),

a.bid)) over(partition by b.rn order by a.fid, a.bid rows between unbounded preceding and unbounded following) bid1,

last_value(decode(sign(range2 - range1),

1,

a.bid +

((b.rn - a.range1 + 1) * a.chunks1) - 1,

(a.bid + a.blocks - 1))) over(partition by b.rn order by a.fid, a.bid rows between unbounded preceding and unbounded following) bid2

from (select fid,

bid,

blocks,

chunks1,

trunc((sum2 - blocks + 1 - 0.1) / chunks1) range1,

trunc((sum2 - 0.1) / chunks1) range2

from (select /*+ rule */

relative_fno fid,

block_id bid,

blocks,

sum(blocks) over() sum1,

trunc((sum(blocks) over()) / &&rowid_ranges) chunks1,

sum(blocks) over(order by relative_fno, block_id) sum2

from dba_extents

where segment_name = upper('&&segment_name')

and owner = upper('&&owner'))

where sum1 > &&rowid_ranges) a,

(select rownum - 1 rn

from dual

connect by level <= &&rowid_ranges) b

where b.rn between a.range1 and a.range2) c,

(select max(data_object_id) oid

from dba_objects

where object_name = upper('&&segment_name')

and owner = upper('&&owner')

and data_object_id is not null) d

/

实验如下:

SQL> select * from v$version;

BANNER

--------------------------------------------------------------------------------

Oracle Database 11g Enterprise Edition Release 11.2.0.4.0 - 64bit Production

PL/SQL Release 11.2.0.4.0 - Production

CORE 11.2.0.4.0 Production

TNS for Linux: Version 11.2.0.4.0 - Production

NLSRTL Version 11.2.0.4.0 - Production

SQL>

SQL> create table tt as select * from dba_objects;

Table created.

SQL> insert into tt select * from dba_objects;

86401 rows created.

SQL>

SQL> insert into tt select * from dba_objects;

86401 rows created.

SQL> commit;

Commit complete.

SQL> select count(*) from tt;

COUNT(*)

----------

259203

SQL> insert into tt select * from dba_objects;

86401 rows created.

SQL> insert into tt select * from dba_objects;

86401 rows created.

SQL> insert into tt select * from dba_objects;

86401 rows created.

SQL> insert into tt select * from dba_objects;

86401 rows created.

SQL> commit;

Commit complete.

SQL> select count(*) from tt;

COUNT(*)

----------

604807

tt 是一张非分区表, 存有60w条记录

SQL> select * from (select object_id,count(*) from tt group by object_id) where rownum <100;

OBJECT_ID COUNT(*)

---------- ----------

2 7

3 7

4 7

5 7

6 7

7 7

8 7

9 7

10 7

11 7

13 7

14 7

15 7

16 7

17 7

96 7

97 7

98 7

99 7

100 7

99 rows selected.

SQL>

SQL> select count(*) from tt where object_id <19000;

COUNT(*)

----------

130613

假设我们要删除tt表上 object_id<19000的所有记录,由于tt是非分区表,所以这里无法用Oracle的并行执行Parallel Execution来加速操作.因此我们利用上述脚本来构造多条DML语句:

SQL> @rowid_chunk.sql

Enter value for rowid_ranges: 5

Enter value for segment_name: tt

Enter value for owner: sys

where rowid between 'AAAVcAAABAAAXEYAAA' and 'AAAVcAAABAAAjoyCcP';

where rowid between 'AAAVcAAABAAAjozAAA' and 'AAAVcAAABAAAkEyCcP';

where rowid between 'AAAVcAAABAAAkEzAAA' and 'AAAVcAAABAAAkiyCcP';

where rowid between 'AAAVcAAABAAAkizAAA' and 'AAAVcAAABAAAk+yCcP';

where rowid between 'AAAVcAAABAAAk+zAAA' and 'AAAVcAAABAAAlMyCcP';

以上我们利用脚本构造出来5个由rowid标示的分区块(chunks),相当于我们人为地将一张非分区表划分成5个区域,每个区域都互不重叠,利用rowid做分界线。尝试利用上面获取到的条件condition来构造DML:UPDATE OR DELETE where rowid between 'start_rowid' and 'end_rowid' AND 其他条件

例如要删除非分区表上所有object_id<190000;的记录,如不优化则是一条语句:

DELETE FROM tt where object_id<190000;

COMMIT;

实际在很大的表上这样删除数据是不理想也不可行的,几点理由:

1. 单条SQL语句串行执行,速度缓慢

2. 运行时间过长可能引发ORA-1555等著名错误

3. 如果失败rollback回滚可能是一场灾难

若利用我这里介绍的方法, 则可以构造出多条DML语句并行删除,每一条均只删除一小部分:

DELETE FROM tt where rowid between 'AAAVcAAABAAAXEYAAA' and 'AAAVcAAABAAAjoyCcP' and object_id<19000;

COMMIT;

DELETE FROM tt where rowid between 'AAAVcAAABAAAjozAAA' and 'AAAVcAAABAAAkEyCcP' and object_id<19000;

COMMIT;

DELETE FROM tt where rowid between 'AAAVcAAABAAAkEzAAA' and 'AAAVcAAABAAAkiyCcP' and object_id<19000;

COMMIT;

DELETE FROM tt where rowid between 'AAAVcAAABAAAkizAAA' and 'AAAVcAAABAAAk+yCcP' and object_id<19000;

COMMIT;

DELETE FROM tt where rowid between 'AAAVcAAABAAAk+zAAA' and 'AAAVcAAABAAAlMyCcP' and object_id<19000;

COMMIT;

视乎你想要的并行度, 将以上构成DML语句再分割几块,打开多个终端同时执行。

这样做的几个优点:

1. 用户手动控制的并行执行,省去了Oracle Parallel并行控制的开销,使用得当的话比加parallel hint或者表上加并行度效率更高。

2. 将数据分割成小块(chunks)来处理,避免了ORA-1555错误

3. 用户可以根据主机负载和IO 动态地加减并行度

删除操作如下:

SQL> select count(*) from tt where object_id<19000;

130613

SQL> DELETE FROM tt where rowid between 'AAAVcAAABAAAXEYAAA' and 'AAAVcAAABAAAjoyCcP' and object_id<19000;

COMMIT;

55977 rows deleted.

SQL>

Commit complete.

SQL> select count(*) from tt where object_id<19000;

74636

SQL> DELETE FROM tt where rowid between 'AAAVcAAABAAAjozAAA' and 'AAAVcAAABAAAkEyCcP' and object_id<19000;

COMMIT;

37318 rows deleted.

SQL>

Commit complete.

SQL> select count(*) from tt where object_id<19000;

37318

SQL> DELETE FROM tt where rowid between 'AAAVcAAABAAAkEzAAA' and 'AAAVcAAABAAAkiyCcP' and object_id<19000;

COMMIT;

18659 rows deleted.

SQL> select count(*) from tt where object_id<19000;

COMMIT;select count(*) from tt where object_id<19000

*

ERROR at line 1:

ORA-00911: invalid character

SQL> select count(*) from tt where object_id<19000;

18659

SQL> DELETE FROM tt where rowid between 'AAAVcAAABAAAkizAAA' and 'AAAVcAAABAAAk+yCcP' and object_id<19000;

COMMIT;

18659 rows deleted.

SQL>

Commit complete.

SQL> select count(*) from tt where object_id<19000;

0

SQL> DELETE FROM tt where rowid between 'AAAVcAAABAAAk+zAAA' and 'AAAVcAAABAAAlMyCcP' and object_id<19000;

0 rows deleted.

SQL> COMMIT;

Commit complete.

SQL>

SQL> select count(*) from tt where object_id<19000;

0

但是以上方法仍存在几点不足:

1. 《Script:partition table into rowid extent chunks》脚本目前不支持分区表

2. 因为《Script:partition table into rowid extent chunks》的脚本是根据表段的大小均匀地分割成指定数目的区域,试想当一些要更新或者删除的历史数据集中分布在segment的某些位置时(例如所要删除的数据均存放在一张表的前200个Extents中),因为脚本是根据大小均匀分割区域的,所以某些区域是根本没有我们所要处理的数据的,由这些区域构造出来的DML语句都是无意义的。

基于以上这些考虑,我重写了获取rowid分块的SQL脚本,如下:

REM put it in GUI TOOLS! otherwise caused ORA-00933

REM control commit yourself, avoid ORA-1555

select 'and rowid between ''' || ora_rowid || ''' and ''' ||

lead(ora_rowid, 1) over(order by rn asc) || '''' || ';'

from (

with cnt as (select count(*) from order_history) -- 注意替换这里!!

select rn, ora_rowid

from (select rownum rn, ora_rowid

from (select rowid ora_rowid

from order_history -- 注意替换这里!!

order by rowid))

where rn in (select (rownum - 1) *

trunc((select * from cnt) / &row_range) + 1

from dba_tables

where rownum < &row_range --输入分区的数目

union

select * from cnt))

以上脚本同样可以实现rowid分区的目的,但是因为其rowid是直接取自SELECT语句查询,所以不存在不支持分区表等复杂对象的情况。 也因为rowid是来源于SELECT,所以我们可以指定针对那些存在符合条件数据的范围分区。

实验分区表切片:

SQL> CREATE TABLE "EMPLOYEE_RANGE_PART"

( "EMPNO" NUMBER(4,0),

"ENAME" VARCHAR2(10),

"JOB" VARCHAR2(9),

"MGR" NUMBER(4,0),

"HIREDATE" DATE,

"SAL" NUMBER(7,2),

"COMM" NUMBER(7,2),

"DEPTNO" NUMBER(2,0)

)

PARTITION BY RANGE (HIREDATE)

(

PARTITION EMPLOYEE_PART01 VALUES LESS THAN (TO_DATE('1981-01-01','yyyy-mm-dd'))

TABLESPACE USERS,

PARTITION EMPLOYEE_PART02 VALUES LESS THAN (TO_DATE('1982-01-01','yyyy-mm-dd'))

TABLESPACE USERS,

PARTITION EMPLOYEE_PART03 VALUES LESS THAN (MAXVALUE)

TABLESPACE USERS

);

Table created.

SQL> insert into EMPLOYEE_RANGE_PART select * from scott.emp;

14 rows created.

SQL> insert into EMPLOYEE_RANGE_PART select * from EMPLOYEE_RANGE_PART;

14 rows created.

重复插入省略。。。。。。。。。。。。。。。。。。。

SQL> select count(*) from EMPLOYEE_RANGE_PART;

COUNT(*)

----------

3670016

SQL> select * from (select HIREDATE,count(*) from EMPLOYEE_RANGE_PART group by hiredate) where rownum<100;

HIREDATE COUNT(*)

------------------- ----------

1980-12-17 00:00:00 262144

1981-11-17 00:00:00 262144

1981-12-03 00:00:00 524288

1981-02-20 00:00:00 262144

1981-09-08 00:00:00 262144

1981-02-22 00:00:00 262144

1981-06-09 00:00:00 262144

1981-04-02 00:00:00 262144

1981-05-01 00:00:00 262144

1981-09-28 00:00:00 262144

1982-01-23 00:00:00 262144

1987-04-19 00:00:00 262144

1987-05-23 00:00:00 262144

13 rows selected.

SQL>

SQL> select count(*) from EMPLOYEE_RANGE_PART where HIREDATE<to_date('1981-06-09','yyyy-mm-dd');</to_date('1981-06-09','yyyy-mm-dd');<>

COUNT(*)

----------

1310720

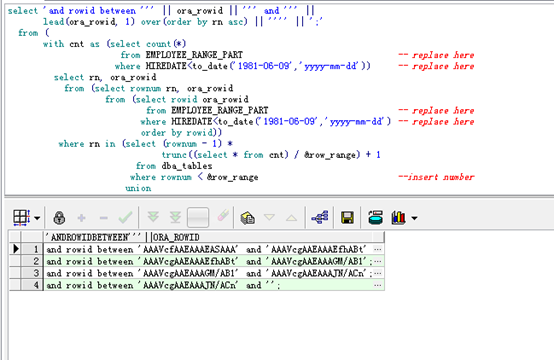

例如我们希望仅针对存有满足HIREDATE<1981-06-09 条件数据的范围rowid分块,那么将replace here的地方替换成你的条件:

select 'and rowid between ''' || ora_rowid || ''' and ''' ||

lead(ora_rowid, 1) over(order by rn asc) || '''' || ';'

from (

with cnt as (select count(*)

from EMPLOYEE_RANGE_PART -- replace here

where HIREDATE<to_date('1981-06-09','yyyy-mm-dd')) -- replace here</to_date('1981-06-09','yyyy-mm-dd')) <>

select rn, ora_rowid

from (select rownum rn, ora_rowid

from (select rowid ora_rowid

from EMPLOYEE_RANGE_PART -- replace here

where HIREDATE<to_date('1981-06-09','yyyy-mm-dd') -- replace here

order by rowid))

where rn in (select (rownum - 1) *

trunc((select * from cnt) / &row_range) + 1

from dba_tables

where rownum < &row_range --insert number

union

select * from cnt));

--先查询:

SQL> select count(*) from EMPLOYEE_RANGE_PART where HIREDATE<to_date('1981-06-09','yyyy-mm-dd');</to_date('1981-06-09','yyyy-mm-dd');<>

COUNT(*)

----------

1310720

---开始删除操作

SQL> DELETE EMPLOYEE_RANGE_PART WHERE HIREDATE<to_date('1981-06-09','yyyy-mm-dd') and="" rowid="" between="" 'aaavcfaaeaaaeasaaa'="" 'aaavcgaaeaaaefhabt';

327681 rows deleted.

SQL> select count(*) from EMPLOYEE_RANGE_PART where HIREDATE<to_date('1981-06-09','yyyy-mm-dd');</to_date('1981-06-09','yyyy-mm-dd');<>

COUNT(*)

----------

983039

SQL> DELETE EMPLOYEE_RANGE_PART WHERE HIREDATE<to_date('1981-06-09','yyyy-mm-dd') and="" rowid="" between="" 'aaavcgaaeaaaefhabt'="" 'aaavcgaaeaaagm="" ab1';

327680 rows deleted.

SQL> select count(*) from EMPLOYEE_RANGE_PART where HIREDATE<to_date('1981-06-09','yyyy-mm-dd');</to_date('1981-06-09','yyyy-mm-dd');<>

COUNT(*)

----------

655359

SQL> DELETE EMPLOYEE_RANGE_PART WHERE HIREDATE<to_date('1981-06-09','yyyy-mm-dd') and="" rowid="" between="" 'aaavcgaaeaaagm="" ab1'="" 'aaavcgaaeaaajn="" acn';

655359 rows deleted.

SQL> select count(*) from EMPLOYEE_RANGE_PART where HIREDATE<to_date('1981-06-09','yyyy-mm-dd');</to_date('1981-06-09','yyyy-mm-dd');<>

COUNT(*)

----------

0

SQL>

几点注意事项:

1. 请将该脚本放到Pl/SQL Developer或Toad之类的工具中运行,在sqlplus中运行可能出现ORA-00933

2. 不要忘记替换红色标记的replace here的条件

3. 自行控制commit 避免出现ORA-1555错误

该脚本目前存在一个不足,在获取rowid分块时要求大表上有适当的索引,否则可能会因为全表扫描并排序而十分缓慢,若有恰当的索引则会使用INDEX FAST FULL SCAN。 这里的恰当索引是指至少有一个非空列的普通b*tree索引, 最好的情况是有主键索引或者bitmap位图索引。

Oracle在版本11.2中引入了DBMS_PARALLEL_EXECUTE 的新特性来帮助更新超大表,文档<11.2 New Feature : Using DBMS_PARALLEL_EXECUTE to Update Large Tables in Parallel [ID 1066555.1]>对该特性做了介绍。

该DBMS_PARALLEL_EXECUTE新特性的一大亮点就是 可以对表上的行数据进行分组为更小的块(chunks), 且并行更新这些小的块:

dbmspexe.sql – DBMS Parallel EXEcute package

This package contains APIs to chunk a table into smaller units and execute those chunks in parallel.

The DBMS_PARALLEL_EXECUTE package enables you to incrementally update the data in a large table in parallel, in two high-level steps:

Group sets of rows in the table into smaller chunks.

Apply the desired UPDATE statement to the chunks in parallel, committing each time you have finished processing a chunk.

This technique is recommended whenever you are updating a lot of data. Its advantages are:

You lock only one set of rows at a time, for a relatively short time, instead of locking the entire table.

You do not lose work that has been done if something fails before the entire operation finishes.

You reduce rollback space consumption.

You improve performance.

DBMS_PARALLEL_EXECUTE可以以3种方式将数据分块:

Different Ways to Spilt Workload

CREATE_CHUNKS_BY_NUMBER_COL : Chunks the table associated with the given task by the specified column.

CREATE_CHUNKS_BY_ROWID : Chunks the table associated with the given task by ROWID

CREATE_CHUNKS_BY_SQL : Chunks the table associated with the given task by means of a user-provided SELECT statement

其中就包含了CREATE_CHUNKS_BY_ROWID这种按照ROWID分块的方式。

来自 “ ITPUB博客 ” ,链接:http://blog.itpub.net/31397003/viewspace-2143692/,如需转载,请注明出处,否则将追究法律责任。

转载于:http://blog.itpub.net/31397003/viewspace-2143692/

1022

1022

被折叠的 条评论

为什么被折叠?

被折叠的 条评论

为什么被折叠?