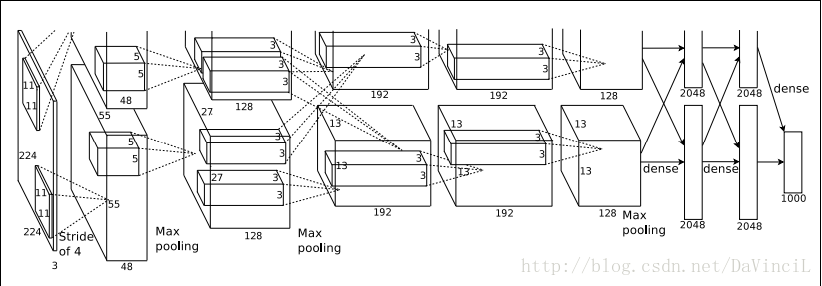

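I. Model

For how to measure the model's forward and backward pass times, see: TensorFlow Deep Learning, Part 10: Implementing the Classic Convolutional Neural Network AlexNet in TensorFlow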

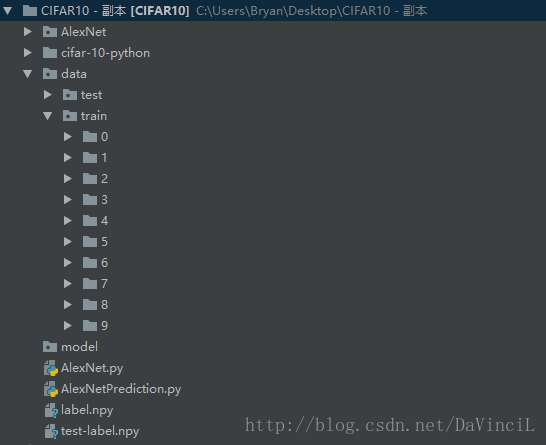
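The spatial sizes that the training code further down relies on (in particular the `6*6*256` used when flattening) can be checked with a few lines of arithmetic. This is a minimal sketch, assuming TensorFlow's usual output-size rules: `ceil(n / stride)` for `SAME` padding and `floor((n - k) / stride) + 1` for `VALID` padding. The helper names `same_out`, `valid_out` and `alexnet_feature_size` are made up for this illustration.

```python
import math


def same_out(n, stride):
    """Output size along one axis for SAME padding."""
    return math.ceil(n / stride)


def valid_out(n, kernel, stride):
    """Output size along one axis for VALID padding."""
    return (n - kernel) // stride + 1


def alexnet_feature_size(n=224):
    """Trace one spatial dimension through the layers used in this post."""
    n = same_out(n, 4)       # conv1: 11x11 kernel, stride 4, SAME
    n = valid_out(n, 3, 2)   # pool1: 3x3 window, stride 2, VALID
    n = same_out(n, 1)       # conv2: stride 1, SAME
    n = valid_out(n, 3, 2)   # pool2: 3x3 window, stride 2, VALID
    n = same_out(n, 1)       # conv3, conv4 and conv5 keep the size
    n = valid_out(n, 3, 2)   # pool5: 3x3 window, stride 2, VALID
    return n


side = alexnet_feature_size(224)
print(side, side * side * 256)  # 6 9216
```

The same arithmetic gives 0 for a 32×32 input, which is one reason the CIFAR-10 images are resized to 224×224 before they are fed to the network.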

II. Project structure

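The machine I train on does not have enough RAM or GPU memory to load 10,000 images at once. So beforehand I extracted every image by class and saved it as a JPG file. While saving the images I also built a Python dict whose keys are the relative paths of the images and whose values are their classes, and saved this dict as an npy file. Each JPG is 32×32 pixels, whereas AlexNet expects 224×224 input, so the images are resized with cv2.resize when they are read.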
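The extraction script itself is not shown in this post. As a rough sketch of the key step, assuming the Python version of CIFAR-10, where each image is one flat row of 3,072 values (1,024 red, then 1,024 green, then 1,024 blue, each plane in row-major order), a row can be rearranged into pixels like this. `row_to_pixels` is a hypothetical helper written without dependencies for illustration.

```python
def row_to_pixels(row, size=32):
    """Turn one flat CIFAR-10 row (R plane, G plane, B plane) into rows of (r, g, b) pixels."""
    plane = size * size
    if len(row) != 3 * plane:
        raise ValueError("expected %d values, got %d" % (3 * plane, len(row)))
    return [
        [(row[y * size + x],
          row[plane + y * size + x],
          row[2 * plane + y * size + x])
         for x in range(size)]
        for y in range(size)
    ]


# Synthetic row: every red value is 10, every green value 20, every blue value 30.
row = [10] * 1024 + [20] * 1024 + [30] * 1024
pixels = row_to_pixels(row)
print(len(pixels), len(pixels[0]), pixels[0][0])  # 32 32 (10, 20, 30)
```

With NumPy the same rearrangement is `row.reshape(3, 32, 32).transpose(1, 2, 0)`. Since `cv2.imwrite` expects BGR channel order, the channel axis would be reversed before saving.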

The same steps are applied to both the training set and the test set. The resulting project directory is organized as follows.

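Each file and folder serves the following purpose:

| File | Purpose |
|---|---|
| AlexNet folder | Stores the related logs |
| cifar-10-python folder | Stores the original CIFAR-10 dataset files |
| data\test | Test set data |
| data\train | Training set data, split by label into ten classes stored in folders 0 to 9; the test folder is organized the same way |
| model folder | Directory where the model is saved |
| AlexNet.py | Builds the AlexNet network and trains it |
| AlexNetPrediction.py | Runs prediction with the trained model |
| label.npy | Maps training-set file names to labels; a dict |
| test-label.npy | Maps test-set file names to labels; a dict |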

III. Training code

```python
# Written against the TensorFlow 1.x API (tf.placeholder, tf.Session).
import cv2
import numpy as np
import tensorflow as tf


# Convert a list of integer labels into one-hot form.
def getOneHotLabel(label, depth):
    m = np.zeros([len(label), depth])
    for i in range(len(label)):
        m[i][label[i]] = 1
    return m


# Build the network.
def alexnet(image, keepprob=0.5):
    # Convolutional layer 1. Kernel size, bias and the other parameters
    # can be read from the code; the same holds for the layers below.
    with tf.name_scope("conv1") as scope:
        kernel = tf.Variable(tf.truncated_normal([11, 11, 3, 64], dtype=tf.float32, stddev=1e-1, name="weights"))
        conv = tf.nn.conv2d(image, kernel, [1, 4, 4, 1], padding="SAME")
        biases = tf.Variable(tf.constant(0.0, dtype=tf.float32, shape=[64]), trainable=True, name="biases")
        bias = tf.nn.bias_add(conv, biases)
        conv1 = tf.nn.relu(bias, name=scope)

    # LRN layer
    lrn1 = tf.nn.lrn(conv1, 4, bias=1.0, alpha=0.001 / 9, beta=0.75, name="lrn1")

    # Max pooling layer
    pool1 = tf.nn.max_pool(lrn1, ksize=[1, 3, 3, 1], strides=[1, 2, 2, 1], padding="VALID", name="pool1")

    # Convolutional layer 2
    with tf.name_scope("conv2") as scope:
        kernel = tf.Variable(tf.truncated_normal([5, 5, 64, 192], dtype=tf.float32, stddev=1e-1, name="weights"))
        conv = tf.nn.conv2d(pool1, kernel, [1, 1, 1, 1], padding="SAME")
        biases = tf.Variable(tf.constant(0.0, dtype=tf.float32, shape=[192]), trainable=True, name="biases")
        bias = tf.nn.bias_add(conv, biases)
        conv2 = tf.nn.relu(bias, name=scope)

    # LRN layer
    lrn2 = tf.nn.lrn(conv2, 4, bias=1.0, alpha=0.001 / 9, beta=0.75, name="lrn2")

    # Max pooling layer
    pool2 = tf.nn.max_pool(lrn2, ksize=[1, 3, 3, 1], strides=[1, 2, 2, 1], padding="VALID", name="pool2")

    # Convolutional layer 3
    with tf.name_scope("conv3") as scope:
        kernel = tf.Variable(tf.truncated_normal([3, 3, 192, 384], dtype=tf.float32, stddev=1e-1, name="weights"))
        conv = tf.nn.conv2d(pool2, kernel, [1, 1, 1, 1], padding="SAME")
        biases = tf.Variable(tf.constant(0.0, dtype=tf.float32, shape=[384]), trainable=True, name="biases")
        bias = tf.nn.bias_add(conv, biases)
        conv3 = tf.nn.relu(bias, name=scope)

    # Convolutional layer 4
    with tf.name_scope("conv4") as scope:
        kernel = tf.Variable(tf.truncated_normal([3, 3, 384, 256], dtype=tf.float32, stddev=1e-1, name="weights"))
        conv = tf.nn.conv2d(conv3, kernel, [1, 1, 1, 1], padding="SAME")
        biases = tf.Variable(tf.constant(0.0, dtype=tf.float32, shape=[256]), trainable=True, name="biases")
        bias = tf.nn.bias_add(conv, biases)
        conv4 = tf.nn.relu(bias, name=scope)

    # Convolutional layer 5
    with tf.name_scope("conv5") as scope:
        kernel = tf.Variable(tf.truncated_normal([3, 3, 256, 256], dtype=tf.float32, stddev=1e-1, name="weights"))
        conv = tf.nn.conv2d(conv4, kernel, [1, 1, 1, 1], padding="SAME")
        biases = tf.Variable(tf.constant(0.0, dtype=tf.float32, shape=[256]), trainable=True, name="biases")
        bias = tf.nn.bias_add(conv, biases)
        conv5 = tf.nn.relu(bias, name=scope)

    # Max pooling layer
    pool5 = tf.nn.max_pool(conv5, ksize=[1, 3, 3, 1], strides=[1, 2, 2, 1], padding="VALID", name="pool5")

    # Fully connected layers (sigmoid activations, no bias terms).
    flatten = tf.reshape(pool5, [-1, 6 * 6 * 256])
    weight1 = tf.Variable(tf.truncated_normal([6 * 6 * 256, 4096], mean=0, stddev=0.01))
    fc1 = tf.nn.sigmoid(tf.matmul(flatten, weight1))
    dropout1 = tf.nn.dropout(fc1, keepprob)

    weight2 = tf.Variable(tf.truncated_normal([4096, 4096], mean=0, stddev=0.01))
    fc2 = tf.nn.sigmoid(tf.matmul(dropout1, weight2))
    dropout2 = tf.nn.dropout(fc2, keepprob)

    # The output also passes through a sigmoid before it is used as logits.
    weight3 = tf.Variable(tf.truncated_normal([4096, 10], mean=0, stddev=0.01))
    fc3 = tf.nn.sigmoid(tf.matmul(dropout2, weight3))

    return fc3


def alexnet_main():
    # Load the training-set file names and labels.
    # allow_pickle=True is required by recent NumPy versions to load a saved dict.
    files = np.load("label.npy", allow_pickle=True, encoding='bytes').item()

    # Extract the file names.
    keys = list(files)
    print(len(keys))

    myinput = tf.placeholder(dtype=tf.float32, shape=[None, 224, 224, 3], name='input')
    mylabel = tf.placeholder(dtype=tf.float32, shape=[None, 10], name='label')

    # Build the network with keepprob set to 0.6.
    myoutput = alexnet(myinput, 0.6)

    # Define the training loss.
    loss = tf.reduce_mean(tf.nn.softmax_cross_entropy_with_logits(logits=myoutput, labels=mylabel))

    # Define the optimizer. The learning rate is 0.09; other values can be used.
    optimizer = tf.train.GradientDescentOptimizer(learning_rate=0.09).minimize(loss)

    # Define the accuracy.
    valaccuracy = tf.reduce_mean(
        tf.cast(
            tf.equal(
                tf.argmax(myoutput, 1),
                tf.argmax(mylabel, 1)),
            tf.float32))

    # The TensorFlow saver, used to save the model.
    saver = tf.train.Saver()
    init = tf.global_variables_initializer()

    with tf.Session() as sess:
        sess.run(init)

        # 40 epochs
        for loop in range(40):
            # Generate the training-set indices and shuffle them.
            # The shuffle is repeated every epoch, so the images that end up
            # in the validation part change from epoch to epoch.
            indices = np.arange(50000)
            np.random.shuffle(indices)

            # The batch size is 200.
            # Of the 50,000 training images, the first 40,000 are used for
            # training and the last 10,000 for validation.
            for i in range(0, 40000, 200):
                photo = []
                label = []
                for j in range(0, 200):
                    # Resize to 224x224 and scale the pixel values
                    # (the divisor here is 225; 255 would map them to [0, 1]).
                    photo.append(cv2.resize(cv2.imread(keys[indices[i + j]]), (224, 224)) / 225)
                    label.append(files[keys[indices[i + j]]])
                m = getOneHotLabel(label, depth=10)
                a, b = sess.run([optimizer, loss], feed_dict={myinput: photo, mylabel: m})
                print("\r%f" % b, end='')

            acc = 0
            # Validate on 200 validation images at a time; each run returns
            # the accuracy on those 200 images.
            for i in range(40000, 40000 + 10000, 200):
                photo = []
                label = []
                for j in range(i, i + 200):
                    photo.append(cv2.resize(cv2.imread(keys[indices[j]]), (224, 224)) / 225)
                    label.append(files[keys[indices[j]]])
                m = getOneHotLabel(label, depth=10)
                acc += sess.run(valaccuracy, feed_dict={myinput: photo, mylabel: m})

            # There are 50 validation batches, so the sum is divided by 50.
            print("Epoch ", loop, ': validation rate: ', acc / 50)

        # Save the model.
        saver.save(sess, "model/model.ckpt")


if __name__ == '__main__':
    alexnet_main()
```

Partial output of a training run:

50000

1.781297Epoch 0 : validation rate: 0.562699974775

1.775934Epoch 1 : validation rate: 0.547099971175

1.768913Epoch 2 : validation rate: 0.52679997623

1.719084Epoch 3 : validation rate: 0.548099977374

1.721695Epoch 4 : validation rate: 0.562299972177

1.745009Epoch 5 : validation rate: 0.56409997642

1.746290Epoch 6 : validation rate: 0.612299977541

1.726248Epoch 7 : validation rate: 0.574799978137

1.735083Epoch 8 : validation rate: 0.617399973869

1.722523Epoch 9 : validation rate: 0.61839998126

1.712282Epoch 10 : validation rate: 0.643999977112

1.697912Epoch 11 : validation rate: 0.63789998889

1.708088Epoch 12 : validation rate: 0.641699975729

1.716783Epoch 13 : validation rate: 0.64499997735

1.718689Epoch 14 : validation rate: 0.664099971056

1.712452Epoch 15 : validation rate: 0.659299976826

1.699410Epoch 16 : validation rate: 0.666799970865

1.682442Epoch 17 : validation rate: 0.660699977875

1.650028Epoch 18 : validation rate: 0.673199976683

1.662869Epoch 19 : validation rate: 0.692699990273

1.652857Epoch 20 : validation rate: 0.687699975967

1.672175Epoch 21 : validation rate: 0.710799975395

1.662848Epoch 22 : validation rate: 0.707699980736

1.653844Epoch 23 : validation rate: 0.708999979496

1.636483Epoch 24 : validation rate: 0.736199990511

1.658812Epoch 25 : validation rate: 0.688499983549

1.658808Epoch 26 : validation rate: 0.748899987936

1.642705Epoch 27 : validation rate: 0.751199992895

1.609915Epoch 28 : validation rate: 0.742099983692

1.610037Epoch 29 : validation rate: 0.757699984312

1.647516Epoch 30 : validation rate: 0.771899987459

1.615854Epoch 31 : validation rate: 0.762699997425

1.598617Epoch 32 : validation rate: 0.785299996138

1.579349Epoch 33 : validation rate: 0.791699982882

1.615915Epoch 34 : validation rate: 0.780799984932

1.586894Epoch 35 : validation rate: 0.790699990988

1.573043Epoch 36 : validation rate: 0.799299983978

1.580690Epoch 37 : validation rate: 0.812399986982

1.598764Epoch 38 : validation rate: 0.824699985981

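1.566866Epoch 39 : validation rate: 0.821999987364

In practice I trained several times, each time adjusting the learning rate and continuing from the previous model. In the end the loss dropped to about 1.3~1.4 and the validation accuracy reached 0.96~0.97.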
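The loss values can be put in context with a little arithmetic. In the training code the last layer applies a sigmoid, so every value passed to `tf.nn.softmax_cross_entropy_with_logits` lies strictly between 0 and 1. The sketch below evaluates softmax cross-entropy at the most favorable point of that range for 10 classes. `softmax_cross_entropy` is a hypothetical plain-Python helper written for this illustration, not the TensorFlow op.

```python
import math


def softmax_cross_entropy(logits, target):
    """Cross-entropy of softmax(logits) against a one-hot target index."""
    peak = max(logits)  # subtract the maximum for numerical stability
    log_sum = peak + math.log(sum(math.exp(z - peak) for z in logits))
    return log_sum - logits[target]


# Best case when logits are confined to [0, 1]: true class at 1, the rest at 0.
best_case = [1.0] + [0.0] * 9
floor = softmax_cross_entropy(best_case, target=0)
print(round(floor, 3))  # 1.461

# Equal logits give log(10), the loss of a network that has learned nothing.
uniform = softmax_cross_entropy([0.5] * 10, target=0)
print(round(uniform, 3))  # 2.303
```

With the network as listed, the per-example loss therefore stays above roughly 1.461, and the logged values of about 1.57 to 1.78 sit between this floor and log(10). A mean loss below that floor would point to a variant of the network that returns the raw `tf.matmul` output instead of its sigmoid.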

IV. Prediction code

The prediction code:

```python
# Written against the TensorFlow 1.x API (tf.placeholder, tf.Session).
import cv2
import numpy as np
import tensorflow as tf


# Convert a list of integer labels into one-hot form.
def getOneHotLabel(label, depth):
    m = np.zeros([len(label), depth])
    for i in range(len(label)):
        m[i][label[i]] = 1
    return m


# Build the network. It must match the training script exactly so that
# the saved variables can be restored.
def alexnet(image, keepprob=0.5):
    # Convolutional layer 1. Kernel size, bias and the other parameters
    # can be read from the code; the same holds for the layers below.
    with tf.name_scope("conv1") as scope:
        kernel = tf.Variable(tf.truncated_normal([11, 11, 3, 64], dtype=tf.float32, stddev=1e-1, name="weights"))
        conv = tf.nn.conv2d(image, kernel, [1, 4, 4, 1], padding="SAME")
        biases = tf.Variable(tf.constant(0.0, dtype=tf.float32, shape=[64]), trainable=True, name="biases")
        bias = tf.nn.bias_add(conv, biases)
        conv1 = tf.nn.relu(bias, name=scope)

    # LRN layer
    lrn1 = tf.nn.lrn(conv1, 4, bias=1.0, alpha=0.001 / 9, beta=0.75, name="lrn1")

    # Max pooling layer
    pool1 = tf.nn.max_pool(lrn1, ksize=[1, 3, 3, 1], strides=[1, 2, 2, 1], padding="VALID", name="pool1")

    # Convolutional layer 2
    with tf.name_scope("conv2") as scope:
        kernel = tf.Variable(tf.truncated_normal([5, 5, 64, 192], dtype=tf.float32, stddev=1e-1, name="weights"))
        conv = tf.nn.conv2d(pool1, kernel, [1, 1, 1, 1], padding="SAME")
        biases = tf.Variable(tf.constant(0.0, dtype=tf.float32, shape=[192]), trainable=True, name="biases")
        bias = tf.nn.bias_add(conv, biases)
        conv2 = tf.nn.relu(bias, name=scope)

    # LRN layer
    lrn2 = tf.nn.lrn(conv2, 4, bias=1.0, alpha=0.001 / 9, beta=0.75, name="lrn2")

    # Max pooling layer
    pool2 = tf.nn.max_pool(lrn2, ksize=[1, 3, 3, 1], strides=[1, 2, 2, 1], padding="VALID", name="pool2")

    # Convolutional layer 3
    with tf.name_scope("conv3") as scope:
        kernel = tf.Variable(tf.truncated_normal([3, 3, 192, 384], dtype=tf.float32, stddev=1e-1, name="weights"))
        conv = tf.nn.conv2d(pool2, kernel, [1, 1, 1, 1], padding="SAME")
        biases = tf.Variable(tf.constant(0.0, dtype=tf.float32, shape=[384]), trainable=True, name="biases")
        bias = tf.nn.bias_add(conv, biases)
        conv3 = tf.nn.relu(bias, name=scope)

    # Convolutional layer 4
    with tf.name_scope("conv4") as scope:
        kernel = tf.Variable(tf.truncated_normal([3, 3, 384, 256], dtype=tf.float32, stddev=1e-1, name="weights"))
        conv = tf.nn.conv2d(conv3, kernel, [1, 1, 1, 1], padding="SAME")
        biases = tf.Variable(tf.constant(0.0, dtype=tf.float32, shape=[256]), trainable=True, name="biases")
        bias = tf.nn.bias_add(conv, biases)
        conv4 = tf.nn.relu(bias, name=scope)

    # Convolutional layer 5
    with tf.name_scope("conv5") as scope:
        kernel = tf.Variable(tf.truncated_normal([3, 3, 256, 256], dtype=tf.float32, stddev=1e-1, name="weights"))
        conv = tf.nn.conv2d(conv4, kernel, [1, 1, 1, 1], padding="SAME")
        biases = tf.Variable(tf.constant(0.0, dtype=tf.float32, shape=[256]), trainable=True, name="biases")
        bias = tf.nn.bias_add(conv, biases)
        conv5 = tf.nn.relu(bias, name=scope)

    # Max pooling layer
    pool5 = tf.nn.max_pool(conv5, ksize=[1, 3, 3, 1], strides=[1, 2, 2, 1], padding="VALID", name="pool5")

    # Fully connected layers (sigmoid activations, no bias terms).
    flatten = tf.reshape(pool5, [-1, 6 * 6 * 256])
    weight1 = tf.Variable(tf.truncated_normal([6 * 6 * 256, 4096], mean=0, stddev=0.01))
    fc1 = tf.nn.sigmoid(tf.matmul(flatten, weight1))
    dropout1 = tf.nn.dropout(fc1, keepprob)

    weight2 = tf.Variable(tf.truncated_normal([4096, 4096], mean=0, stddev=0.01))
    fc2 = tf.nn.sigmoid(tf.matmul(dropout1, weight2))
    dropout2 = tf.nn.dropout(fc2, keepprob)

    weight3 = tf.Variable(tf.truncated_normal([4096, 10], mean=0, stddev=0.01))
    fc3 = tf.nn.sigmoid(tf.matmul(dropout2, weight3))

    return fc3


def alexnet_main():
    # Load the test-set file names and labels.
    # allow_pickle=True is required by recent NumPy versions to load a saved dict.
    files = np.load("test-label.npy", allow_pickle=True, encoding='bytes').item()
    keys = list(files)
    print(len(keys))

    myinput = tf.placeholder(dtype=tf.float32, shape=[None, 224, 224, 3], name='input')
    mylabel = tf.placeholder(dtype=tf.float32, shape=[None, 10], name='label')

    # keepprob is 0.6 here as well, so dropout is still applied while predicting.
    myoutput = alexnet(myinput, 0.6)

    prediction = tf.argmax(myoutput, 1)
    truth = tf.argmax(mylabel, 1)
    valaccuracy = tf.reduce_mean(
        tf.cast(
            tf.equal(
                prediction,
                truth),
            tf.float32))

    saver = tf.train.Saver()

    with tf.Session() as sess:
        # Load the trained model; adjust the path to your own setup.
        saver.restore(sess, r"model/model.ckpt")

        cnt = 0
        # Predict the 10,000 test images one at a time.
        for i in range(10000):
            photo = []
            label = []
            # Same preprocessing as in training, including the divisor 225.
            photo.append(cv2.resize(cv2.imread(keys[i]), (224, 224)) / 225)
            label.append(files[keys[i]])
            m = getOneHotLabel(label, depth=10)
            a, b = sess.run([prediction, truth], feed_dict={myinput: photo, mylabel: m})
            print(a, ' ', b)
            if a[0] == b[0]:
                cnt += 1

        print("Epoch ", 1, ': prediction rate: ', cnt / 10000)


if __name__ == '__main__':
    alexnet_main()
```

Prediction results (only part of the output is shown):

10000

[3] [3]

[8] [8]

[6] [6]

[4] [4]

[5] [9]

[2] [3]

[9] [9]

[5] [5]

[1] [7]

[3] [4]

[4] [4]

[4] [3]

[9] [9]

[5] [5]

[8] [8]

[3] [8]

[0] [0]

[8] [8]

[7] [7]

[7] [4]

[7] [7]

[5] [5]

[6] [5]

...

[7] [7]

[3] [3]

[0] [0]

[7] [4]

[6] [2]

[0] [0]

[7] [7]

[2] [5]

[8] [8]

[5] [3]

[5] [5]

[1] [1]

[7] [7]

Epoch 1 : prediction rate: 0.7685

V. Analysis of results

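On the test set, the AlexNet network I trained reaches 0.7685, i.e. 76.85% accuracy. This is considerably better than LeNet, because the network uses more convolution operations and can extract more latent features. In this experiment, then, AlexNet performs better than LeNet on the CIFAR-10 dataset.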
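One detail may affect this figure: the prediction script builds the network with `alexnet(myinput, 0.6)`, so dropout stays active while predicting. The sketch below is a plain-Python illustration of inverted dropout, the scheme `tf.nn.dropout` uses, in which kept units are scaled by `1 / keep_prob`. The `dropout` function here is a hypothetical stand-in, not the TensorFlow op. With `keep_prob = 1.0` the layer becomes the identity, which is the usual setting for inference.

```python
import random


def dropout(values, keep_prob, rng):
    """Inverted dropout: keep each value with probability keep_prob, scaled by 1 / keep_prob."""
    if not 0.0 < keep_prob <= 1.0:
        raise ValueError("keep_prob must be in (0, 1]")
    return [v / keep_prob if rng.random() < keep_prob else 0.0 for v in values]


rng = random.Random(0)
activations = [0.2, 0.5, 0.9, 0.1]

# keep_prob = 1.0 leaves the activations untouched, so predictions are deterministic.
print(dropout(activations, 1.0, rng) == activations)  # True

# keep_prob = 0.6 zeroes some units at random; repeated calls generally differ.
print(dropout(activations, 0.6, rng))
```

In the script this would amount to calling `alexnet(myinput, 1.0)` when predicting. How much the test accuracy would change is not measured here.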

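Still, an accuracy of 0.7685 is not very satisfying. Possible next steps are to keep training while tuning the network's parameters or adjusting its structure, to start from a pretrained model, or to try other, stronger network architectures.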
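Before tuning further, it may be worth looking at how validation is done. The training loop reshuffles all 50,000 indices at the start of every epoch and then takes the last 10,000 as the validation set, so images used for validation in one epoch are trained on in other epochs. That could explain part of the gap between the validation accuracy and the test accuracy. A minimal sketch of an alternative, assuming the split is drawn once and only the training part is reshuffled each epoch (`make_split` and `epoch_batches` are hypothetical names):

```python
import random


def make_split(n_total, n_val, seed=0):
    """Draw one fixed train/validation split of range(n_total)."""
    indices = list(range(n_total))
    random.Random(seed).shuffle(indices)
    return indices[:-n_val], indices[-n_val:]


def epoch_batches(train_indices, batch_size, rng):
    """Yield shuffled training batches; validation indices are never touched."""
    order = list(train_indices)
    rng.shuffle(order)
    for start in range(0, len(order), batch_size):
        yield order[start:start + batch_size]


train_idx, val_idx = make_split(50000, 10000)
rng = random.Random(1)
for epoch in range(2):
    seen = set()
    for batch in epoch_batches(train_idx, 200, rng):
        seen.update(batch)
    # No validation image is ever used for training.
    assert seen.isdisjoint(val_idx)

print(len(train_idx), len(val_idx))  # 40000 10000
```

Each yielded batch is a list of indices into `keys`, so it can replace the `indices[i + j]` lookups in the training loop.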

The next task is to try the GoogLeNet model on the CIFAR-10 dataset.

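Update, June 13, 2018

Many readers have asked in the comments how the two npy files are generated. All I did was save every image to disk, collect the path and label information, and save it. The code for collecting and saving that information is below; it is very simple.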

```python
import os

import numpy as np

# Map every training image path to its label (the name of its folder, 0 to 9).
train_label = {}
for i in range(10):
    search_path = './data/train/{}'.format(i)
    file_list = os.listdir(search_path)
    for file in file_list:
        train_label[os.path.join(search_path, file)] = i

np.save('label.npy', train_label)

# Do the same for the test set.
test_label = {}
for i in range(10):
    search_path = './data/test/{}'.format(i)
    file_list = os.listdir(search_path)
    for file in file_list:
        test_label[os.path.join(search_path, file)] = i

np.save('test-label.npy', test_label)
```

If your directory structure matches the one above, you can put this script in the project root and run it as is. Otherwise adjust it to your needs; anything that produces the same result will do.
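To read these files back, recent NumPy versions need `allow_pickle=True`, because `np.save` stores a dict as a pickled object array. A minimal round-trip sketch, using a temporary directory and made-up file paths:

```python
import os
import tempfile

import numpy as np

# Made-up entries standing in for the real path-to-label mapping.
labels = {"./data/train/0/a.jpg": 0, "./data/train/3/b.jpg": 3}

with tempfile.TemporaryDirectory() as tmp:
    path = os.path.join(tmp, "label.npy")
    np.save(path, labels)  # stored as a 0-d object array
    loaded = np.load(path, allow_pickle=True).item()  # .item() unwraps the dict

print(loaded == labels)  # True
print(sorted(loaded.values()))  # [0, 3]
```

The keys are the image paths, so `list(loaded)` gives the list of files to read and `loaded[path]` gives the label for each one.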

If you need the files, you can download them here: CIFAR10 label
