Volley Illustrated

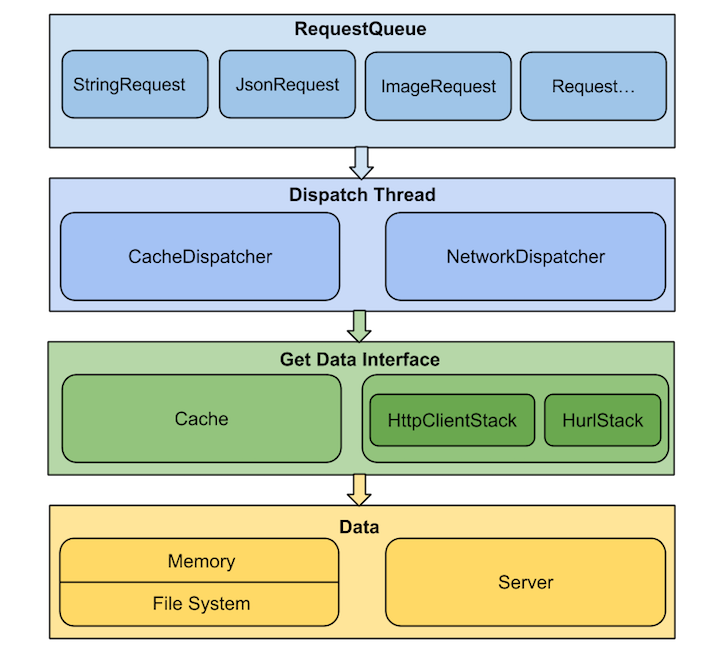

1. Overall design diagram

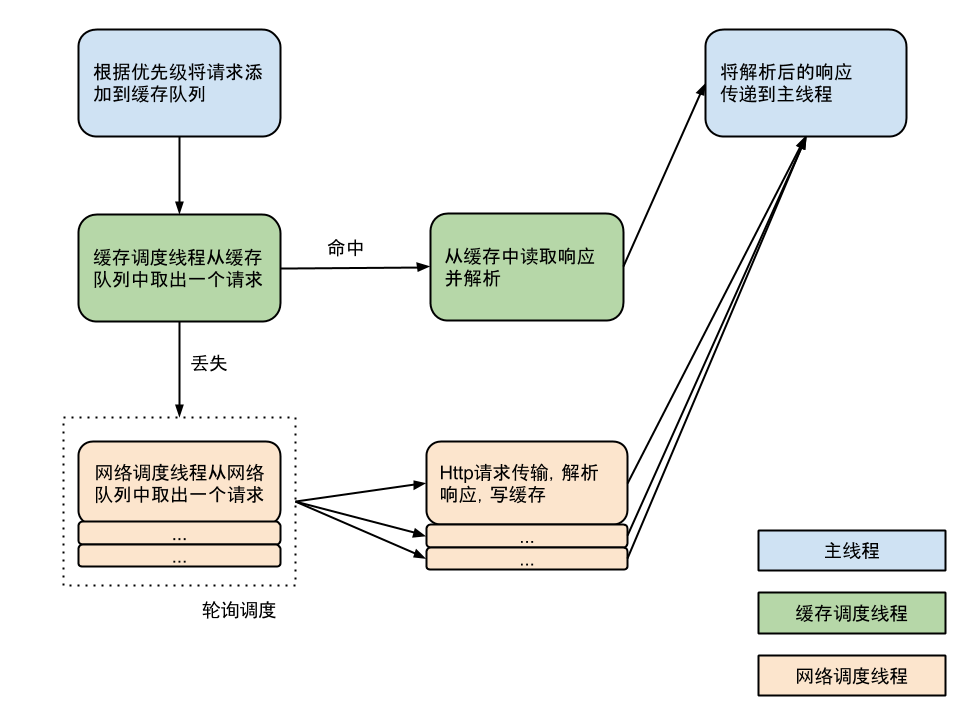

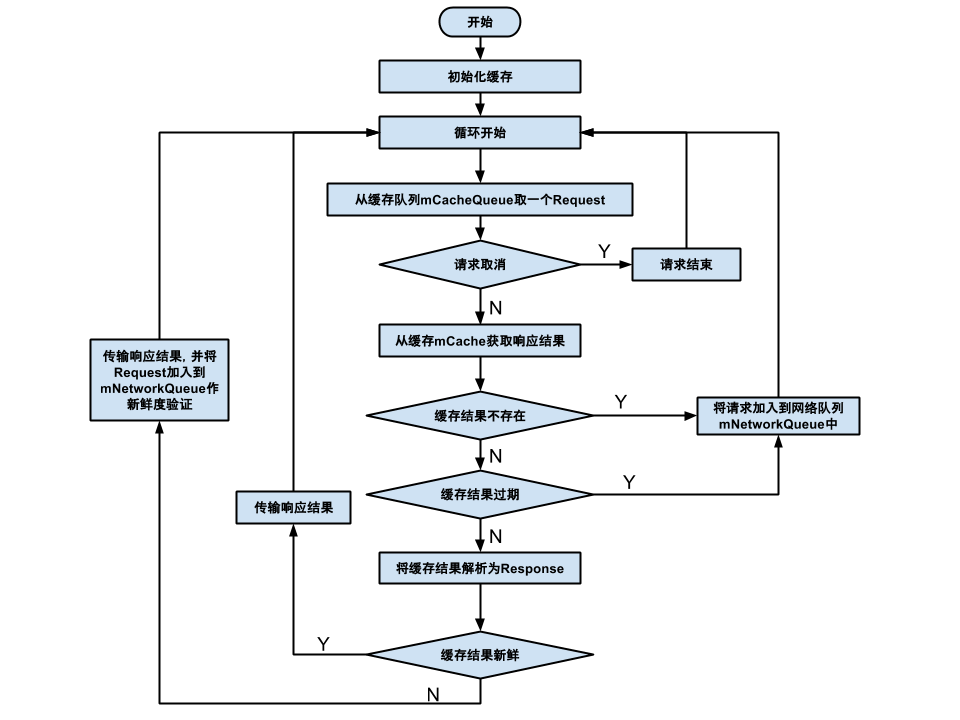

2. Request flow diagram

Related reading: the official overview

Basic usage

RequestQueue queue = Volley.newRequestQueue(this);

StringRequest stringRequest = new StringRequest("http://www.baidu.com", new Response.Listener<String>() {

@Override

public void onResponse(String response) {

tv.setText(response);

Logger.d(response);

}

}, new Response.ErrorListener() {

@Override

public void onErrorResponse(VolleyError error) {

}

});

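queue.add(stringRequest);

That is the simplest possible network request with Volley: create a request queue, create a request (which carries its callbacks), and add the request to the queue. Once the request is added, the framework takes care of scheduling. The API is very simple, but an API this simple is bound to hide a lot of detail.

Detailed flow

First, a request queue is created. Here is the implementation: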

public static RequestQueue newRequestQueue(Context context, HttpStack stack) {

// Locate the disk cache directory; by default it is /data/data/<package name>/cache/volley

File cacheDir = new File(context.getCacheDir(), DEFAULT_CACHE_DIR);

String userAgent = "volley/0";

try {

String packageName = context.getPackageName();

PackageInfo info = context.getPackageManager().getPackageInfo(packageName, 0);

// Build the User-Agent string

userAgent = packageName + "/" + info.versionCode;

} catch (NameNotFoundException e) {

}

// On API level 9 and above, use HttpURLConnection

if (stack == null) {

if (Build.VERSION.SDK_INT >= 9) {

stack = new HurlStack();

} else {

// Prior to Gingerbread, HttpUrlConnection was unreliable.

// See: http://android-developers.blogspot.com/2011/09/androids-http-clients.html

stack = new HttpClientStack(AndroidHttpClient.newInstance(userAgent));

}

}

// Network represents a network operation: it wraps an HTTP client and performs the request

Network network = new BasicNetwork(stack);

// Build the request queue from the disk cache and the network interface

RequestQueue queue = new RequestQueue(new DiskBasedCache(cacheDir), network);

// Start dispatching; this fires up Volley's engine

queue.start();

return queue;

}

Now the focus shifts to queue.start(): how is the engine put together and started?

public void start() {

stop(); // Make sure any currently running dispatchers are stopped.

// Create the cache dispatcher and start it.

// The cache dispatcher is a Thread; it takes the cache queue, the network queue, the cache, and the response delivery

mCacheDispatcher = new CacheDispatcher(mCacheQueue, mNetworkQueue, mCache, mDelivery);

mCacheDispatcher.start();

// Create network dispatchers (and corresponding threads) up to the pool size.

// Network dispatchers are Threads too, 4 by default; each one handles a single network request at a time

for (int i = 0; i < mDispatchers.length; i++) {

NetworkDispatcher networkDispatcher = new NetworkDispatcher(mNetworkQueue, mNetwork,

mCache, mDelivery);

mDispatchers[i] = networkDispatcher;

networkDispatcher.start();

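}

}

That is Volley's five-cylinder engine: one cache dispatcher and four network dispatchers (the number of network dispatchers is configurable). This is where Volley gets its power. Two new players also appear here, the cache queue and the network queue. They are Volley's plumbing, keeping the system running in an orderly and efficient way, and both show up in queue.add.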
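The engine described above can be modelled in plain Java. The following is a minimal sketch, not Volley code: `MiniEngine`, `Dispatcher`, and `runJobs` are hypothetical names, a single `LinkedBlockingQueue` of `Runnable` stands in for the network queue, and the cache dispatcher is left out. It only illustrates the pattern of a fixed pool of threads that each block on a shared queue and exit when interrupted after being told to quit.

```java
import java.util.concurrent.BlockingQueue;
import java.util.concurrent.CountDownLatch;
import java.util.concurrent.LinkedBlockingQueue;
import java.util.concurrent.TimeUnit;

/** Toy model of one queue served by a fixed pool of dispatcher threads (not Volley code). */
class MiniEngine {
    /** One worker thread; blocks on the shared queue until work arrives. */
    static final class Dispatcher extends Thread {
        private final BlockingQueue<Runnable> queue;
        private volatile boolean quit = false;

        Dispatcher(BlockingQueue<Runnable> queue, String name) {
            super(name);
            this.queue = queue;
        }

        void quit() {
            quit = true;
            interrupt(); // wake the thread if it is blocked in take()
        }

        @Override
        public void run() {
            while (true) {
                try {
                    queue.take().run();
                } catch (InterruptedException e) {
                    if (quit) {
                        return; // interrupted because it is time to stop
                    }
                }
            }
        }
    }

    private final BlockingQueue<Runnable> queue = new LinkedBlockingQueue<>();
    private final Dispatcher[] dispatchers;

    MiniEngine(int poolSize) {
        dispatchers = new Dispatcher[poolSize];
    }

    /** Stops any running dispatchers first, then starts a fresh set. */
    void start() {
        stop();
        for (int i = 0; i < dispatchers.length; i++) {
            dispatchers[i] = new Dispatcher(queue, "dispatcher-" + i);
            dispatchers[i].start();
        }
    }

    void stop() {
        for (int i = 0; i < dispatchers.length; i++) {
            if (dispatchers[i] != null) {
                dispatchers[i].quit();
                dispatchers[i] = null;
            }
        }
    }

    void add(Runnable work) {
        queue.add(work);
    }

    /** Runs the given number of no-op jobs; reports whether all finished within 5 seconds. */
    static boolean runJobs(int poolSize, int jobs) {
        MiniEngine engine = new MiniEngine(poolSize);
        engine.start();
        CountDownLatch done = new CountDownLatch(jobs);
        for (int i = 0; i < jobs; i++) {
            engine.add(done::countDown);
        }
        try {
            return done.await(5, TimeUnit.SECONDS);
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
            return false;
        } finally {
            engine.stop();
        }
    }
}
```

Calling `MiniEngine.runJobs(4, 20)` pushes twenty jobs through four dispatcher threads. Giving each dispatcher its own quit flag means a restart cannot accidentally revive a thread that was asked to stop.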

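queue.add involves a good deal of this plumbing. To make it easier to follow, here are the pieces listed first:

A staging area for requests that already have a duplicate request in flight. The key is a string (the cache key generated from the request), and the value is a queue of the duplicates waiting behind it. The in-flight request itself is not in that queue.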

/**

* Staging area for requests that already have a duplicate request in flight.

*

*

* containsKey(cacheKey) indicates that there is a request in flight for the given cache key.

* get(cacheKey) returns waiting requests for the given cache key. The in flight request is not contained in that list. Is null if no requests are staged.

*

*/

private final Map<String, Queue<Request<?>>> mWaitingRequests =

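new HashMap<String, Queue<Request<?>>>();

All requests currently being handled by this queue. Because it is a Set, the same Request object is never stored twice. Requests staged behind an in-flight duplicate are still included, since add() puts every request into this set.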

/**

* The set of all requests currently being processed by this RequestQueue. A Request

* will be in this set if it is waiting in any queue or currently being processed by

* any dispatcher.

*/

private final Set<Request<?>> mCurrentRequests = new HashSet<Request<?>>();

These two need little introduction: the cache queue and the network queue, both of which are priority queues.

/** The cache triage queue. */

private final PriorityBlockingQueue<Request<?>> mCacheQueue =

new PriorityBlockingQueue<Request<?>>();

/** The queue of requests that are actually going out to the network. */

private final PriorityBlockingQueue<Request<?>> mNetworkQueue =

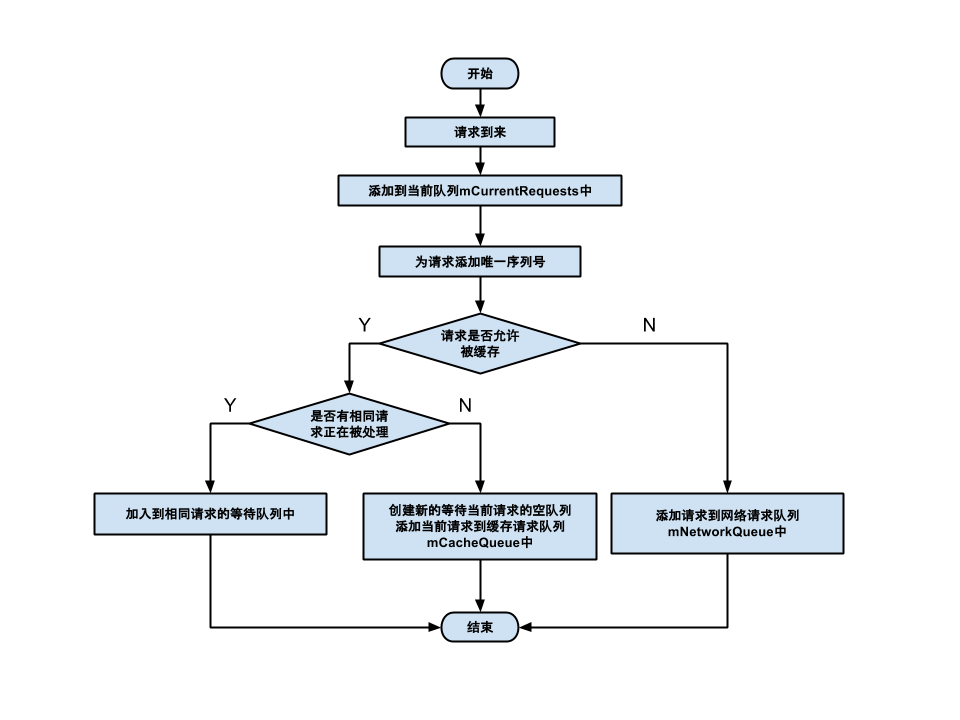

new PriorityBlockingQueue<Request<?>>();add方法也包含复杂的逻辑

public <T> Request<T> add(Request<T> request) {

// Tag the request as belonging to this queue and add it to the set of current requests.

request.setRequestQueue(this);

synchronized (mCurrentRequests) {

// Whether the request ends up served from cache or network, add it to the set of current requests

mCurrentRequests.add(request);

}

// Process requests in the order they are added.

// Assign a unique sequence number

request.setSequence(getSequenceNumber());

request.addMarker("add-to-queue");

// If the request is uncacheable, skip the cache queue and go straight to the network.

// A request that cannot be cached is added straight to the network queue

if (!request.shouldCache()) {

mNetworkQueue.add(request);

return request;

}

// Insert request into stage if there's already a request with the same cache key in flight.

synchronized (mWaitingRequests) {

// Get the cache key

String cacheKey = request.getCacheKey();

// Check whether a request with this cache key is already in flight

if (mWaitingRequests.containsKey(cacheKey)) {

// There is already a request in flight. Queue up.

Queue<Request<?>> stagedRequests = mWaitingRequests.get(cacheKey);

if (stagedRequests == null) {

// Create an empty queue; LinkedList implements Queue, so it can serve as one

stagedRequests = new LinkedList<Request<?>>();

}

// Add this request to the staged queue

stagedRequests.add(request);

// Put the staged queue back into the waiting-requests map

mWaitingRequests.put(cacheKey, stagedRequests);

if (VolleyLog.DEBUG) {

VolleyLog.v("Request for cacheKey=%s is in flight, putting on hold.", cacheKey);

}

} else {

// Insert 'null' queue for this cacheKey, indicating there is now a request in

// flight.

// No entry for this key yet: insert a null placeholder, so every cacheable in-flight request has a key in the map

mWaitingRequests.put(cacheKey, null);

// Add to the cache queue

mCacheQueue.add(request);

}

return request;

}

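}

So in fact, apart from requests that cannot be cached, every request first heads for the cache queue (or is staged behind an in-flight duplicate), whether or not it has actually been cached. It is treated as if a cache entry exists; if there is none, or the entry has expired, the request is handed off to the network queue. A diagram borrowed from codekk shows this flow.

At this point the requests are on their way. To see how they are actually processed, go back to the two kinds of engine.

First, CacheDispatcher: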
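The staging logic in add can be isolated into a small model. This is a sketch under assumptions, not Volley code: `StagingArea` and its methods are hypothetical names, and requests are represented by plain strings. The `finish` method reflects my understanding of what happens when the in-flight request completes (the parked duplicates are released into the cache queue); that step is not shown in the code above, so treat it as an assumption.

```java
import java.util.ArrayDeque;
import java.util.HashMap;
import java.util.LinkedList;
import java.util.Map;
import java.util.Queue;

/** Toy model of the staging area for duplicate cache keys (not Volley code). */
class StagingArea {
    // key present      -> a request for this key is in flight
    // value (nullable) -> duplicates parked behind that request
    private final Map<String, Queue<String>> waiting = new HashMap<>();
    private final Queue<String> cacheQueue = new ArrayDeque<>();

    /** Returns true if the request was queued, false if it was parked as a duplicate. */
    synchronized boolean add(String cacheKey, String requestName) {
        if (waiting.containsKey(cacheKey)) {
            Queue<String> staged = waiting.get(cacheKey);
            if (staged == null) {
                staged = new LinkedList<>();
            }
            staged.add(requestName);
            waiting.put(cacheKey, staged);
            return false;
        }
        waiting.put(cacheKey, null); // placeholder: first request for this key
        cacheQueue.add(requestName);
        return true;
    }

    /** Assumed counterpart: release parked duplicates once the in-flight request is done. */
    synchronized int finish(String cacheKey) {
        Queue<String> staged = waiting.remove(cacheKey);
        if (staged == null) {
            return 0;
        }
        cacheQueue.addAll(staged);
        return staged.size();
    }

    synchronized int queuedCount() {
        return cacheQueue.size();
    }
}
```

With this model, the first `add("k", "a")` is queued, later calls with the same key are parked, and `finish("k")` moves the parked ones into the queue. By then the first response has probably been written to the cache, so the released duplicates can likely be answered without touching the network.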

@Override

public void run() {

...

// Loop forever

while (true) {

try {

// Take a request from the cache queue, blocking if the queue is empty

final Request<?> request = mCacheQueue.take();

request.addMarker("cache-queue-take");

// If the request has been canceled, don't bother dispatching it.

if (request.isCanceled()) {

request.finish("cache-discard-canceled");

continue;

}

// Try to look up the cache entry

Cache.Entry entry = mCache.get(request.getCacheKey());

// No cache entry: the request goes to the network queue

if (entry == null) {

request.addMarker("cache-miss");

// Cache miss; send off to the network dispatcher.

mNetworkQueue.put(request);

continue;

}

// Check whether the entry has expired; if so, the request goes to the network queue

if (entry.isExpired()) {

request.addMarker("cache-hit-expired");

request.setCacheEntry(entry);

mNetworkQueue.put(request);

continue;

}

// Both checks passed, so this is a cache hit

request.addMarker("cache-hit");

// Wrap the cache entry as a network response (the entry holds the response headers, body, and so on)

Response<?> response = request.parseNetworkResponse(

new NetworkResponse(entry.data, entry.responseHeaders));

request.addMarker("cache-hit-parsed");

// Does the cache entry need refreshing?

if (!entry.refreshNeeded()) {

// Entry is completely fresh (presumably still a long way from expiring): deliver it directly

mDelivery.postResponse(request, response);

} else {

// Entry is about to expire: it can still be delivered, but the request also goes to the network queue to refresh the cache

request.addMarker("cache-hit-refresh-needed");

request.setCacheEntry(entry);

// Mark the response as intermediate.

response.intermediate = true;

// Post the intermediate response back to the user and have

// the delivery then forward the request along to the network.

mDelivery.postResponse(request, response, new Runnable() {

@Override

public void run() {

try {

// Add to the network queue

mNetworkQueue.put(request);

} catch (InterruptedException e) {

// Not much we can do about this.

}

}

});

}

} catch (InterruptedException e) {

// We may have been interrupted because it was time to quit.

if (mQuit) {

return;

}

continue;

}

}

}

Another diagram borrowed from codekk:

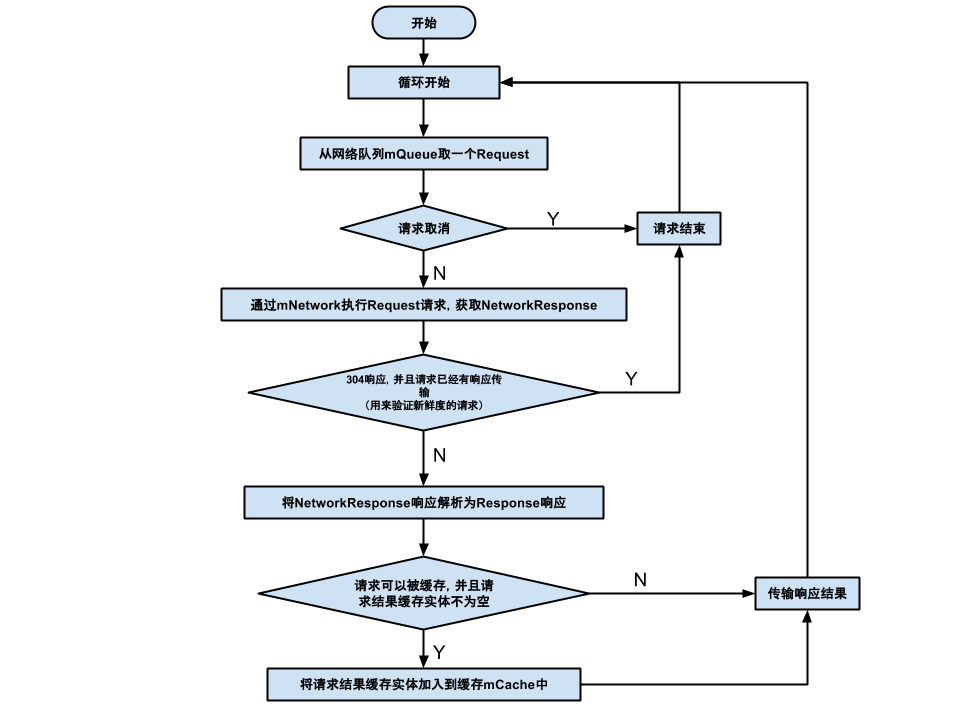
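The branching inside CacheDispatcher boils down to a four-way decision. The sketch below is a plain-Java model, not Volley code: `CacheTriage`, `Decision`, and this `Entry` class are hypothetical stand-ins. I am assuming an entry carries a hard expiry and an earlier soft expiry (named `ttl` and `softTtl` here), and that `isExpired` and `refreshNeeded` compare them against the current time; the code above shows those two methods being called but not how they are implemented.

```java
/** Toy model of the cache-triage decision (not Volley code). */
class CacheTriage {
    enum Decision {
        MISS_GO_TO_NETWORK,
        EXPIRED_GO_TO_NETWORK,
        HIT_DELIVER,
        HIT_DELIVER_THEN_REFRESH
    }

    /** Minimal stand-in for a cache entry: a hard expiry and an earlier soft expiry. */
    static final class Entry {
        final long ttl;     // assumed: hard expiry, in milliseconds
        final long softTtl; // assumed: soft expiry, in milliseconds

        Entry(long ttl, long softTtl) {
            this.ttl = ttl;
            this.softTtl = softTtl;
        }

        boolean isExpired(long now) {
            return ttl < now;
        }

        boolean refreshNeeded(long now) {
            return softTtl < now;
        }
    }

    /** Mirrors the order of the checks in the dispatcher loop: miss, expired, fresh, stale. */
    static Decision decide(Entry entry, long now) {
        if (entry == null) {
            return Decision.MISS_GO_TO_NETWORK;
        }
        if (entry.isExpired(now)) {
            return Decision.EXPIRED_GO_TO_NETWORK;
        }
        if (!entry.refreshNeeded(now)) {
            return Decision.HIT_DELIVER;
        }
        return Decision.HIT_DELIVER_THEN_REFRESH;
    }
}
```

The last case is the interesting one: the caller gets the cached response immediately as an intermediate result, and the request still travels to the network so the cache can be refreshed.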

Next is the network dispatcher, NetworkDispatcher:

@Override

public void run() {

Process.setThreadPriority(Process.THREAD_PRIORITY_BACKGROUND);

// Loop forever

while (true) {

long startTimeMs = SystemClock.elapsedRealtime();

Request<?> request;

try {

// Take a request from the queue.

request = mQueue.take();

} catch (InterruptedException e) {

// We may have been interrupted because it was time to quit.

if (mQuit) {

return;

}

continue;

}

try {

request.addMarker("network-queue-take");

// If the request was cancelled already, do not perform the

// network request.

if (request.isCanceled()) {

request.finish("network-discard-cancelled");

continue;

}

addTrafficStatsTag(request);

// Perform the network request

NetworkResponse networkResponse = mNetwork.performRequest(request);

request.addMarker("network-http-complete");

// If the server returned 304 AND we delivered a response already,

// we're done -- don't deliver a second identical response.

// 304 Not Modified: the cached copy is still valid

if (networkResponse.notModified && request.hasHadResponseDelivered()) {

request.finish("not-modified");

continue;

}

// Parse the network response; this runs on the dispatcher thread

Response<?> response = request.parseNetworkResponse(networkResponse);

request.addMarker("network-parse-complete");

// Write to cache if applicable.

// TODO: Only update cache metadata instead of entire record for 304s.

// Write to the cache if the request is cacheable

if (request.shouldCache() && response.cacheEntry != null) {

mCache.put(request.getCacheKey(), response.cacheEntry);

request.addMarker("network-cache-written");

}

// Post the response back.

request.markDelivered();

mDelivery.postResponse(request, response);

} catch (VolleyError volleyError) {

volleyError.setNetworkTimeMs(SystemClock.elapsedRealtime() - startTimeMs);

parseAndDeliverNetworkError(request, volleyError);

} catch (Exception e) {

VolleyLog.e(e, "Unhandled exception %s", e.toString());

VolleyError volleyError = new VolleyError(e);

volleyError.setNetworkTimeMs(SystemClock.elapsedRealtime() - startTimeMs);

mDelivery.postError(request, volleyError);

}

}

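}

Again, a diagram borrowed from codekk.

That completes the walk through Volley's execution flow.

Why Volley is not suited to transferring large amounts of data

While studying OkHttp I came across this remark:

You can even get hold of the returned InputStream. Seeing this, you should at least realize one thing: large file downloads are supported here.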
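To recap the network side, the steps NetworkDispatcher takes after the HTTP call returns can be condensed into one small function. This is a sketch, not Volley code: `NetworkStep`, `Outcome`, and `handle` are hypothetical names, and the four booleans stand in for `networkResponse.notModified`, `request.hasHadResponseDelivered()`, `request.shouldCache()`, and `response.cacheEntry != null` from the listing above.

```java
/** Toy model of what happens after the HTTP call returns (not Volley code). */
class NetworkStep {
    enum Outcome { DROPPED_NOT_MODIFIED, DELIVERED, CACHED_AND_DELIVERED }

    static Outcome handle(boolean notModified, boolean alreadyDelivered,
                          boolean shouldCache, boolean hasCacheEntry) {
        // A 304 after an intermediate cached response was delivered: nothing new to report.
        if (notModified && alreadyDelivered) {
            return Outcome.DROPPED_NOT_MODIFIED;
        }
        // Otherwise the response is parsed, optionally written to the cache, then delivered.
        if (shouldCache && hasCacheEntry) {
            return Outcome.CACHED_AND_DELIVERED;
        }
        return Outcome.DELIVERED;
    }
}
```

The first branch pairs with the refresh path in CacheDispatcher: if the listener already received the cached copy and the server says nothing changed, a second identical callback is skipped.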

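That made me think of how Volley works: after a network request completes, the entire result is wrapped in a NetworkResponse and returned for parsing. When downloading a file, all of the data is buffered in memory (NetworkResponse holds the body in a byte array), which can exhaust the heap. If the input stream were returned directly, the data could be written out as it is read, instead of having to sit entirely in memory before it can be retrieved.

Why Volley is said to suit small, frequent network operations

Related reading: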
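The difference between buffering everything and streaming can be shown with the standard library alone. The following is a minimal sketch, not OkHttp or Volley code: `StreamCopy`, `copy`, and `copyInMemory` are hypothetical names. It copies from an `InputStream` to an `OutputStream` in fixed-size chunks, so memory use is bounded by the buffer size rather than by the size of the payload.

```java
import java.io.ByteArrayInputStream;
import java.io.ByteArrayOutputStream;
import java.io.IOException;
import java.io.InputStream;
import java.io.OutputStream;
import java.io.UncheckedIOException;

/** Chunked copy: only one buffer's worth of data is held in memory at a time. */
class StreamCopy {
    static long copy(InputStream in, OutputStream out, int bufferSize) throws IOException {
        byte[] buffer = new byte[bufferSize];
        long total = 0;
        int read;
        while ((read = in.read(buffer)) != -1) {
            out.write(buffer, 0, read); // write each chunk out as soon as it is read
            total += read;
        }
        return total;
    }

    /** Demo helper: runs copy() over in-memory streams and returns the number of bytes copied. */
    static long copyInMemory(byte[] payload, int bufferSize) {
        try (InputStream in = new ByteArrayInputStream(payload);
             ByteArrayOutputStream out = new ByteArrayOutputStream()) {
            return copy(in, out, bufferSize);
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
    }
}
```

In a real download the input would be the response body stream and the output a `FileOutputStream`; the in-memory helper exists only so the sketch can be run without a network or a file system.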

https://developer.android.com/training/volley/index.html

http://blog.csdn.net/guolin_blog/article/details/17482095

http://blog.csdn.net/lmj623565791/article/details/47721631
