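From the article "5. ProxyFactory uses an Invoker to create the consumer-side call proxy class", we know that the proxy class generated for the DemoService reference is proxy0, shown below.

Therefore, when we call demoService.sayHello("world"), the call goes to the proxy method proxy0.sayHello():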

public class proxy0 implements ClassGenerator.DC, EchoService, DemoService {

// methods holds all interface methods implemented by proxy0 (deduplicated)

public static Method[] methods; // methods = {sayHello, $echo}

private InvocationHandler handler;

public String sayHello(String arg0) {

Object[] args = new Object[1];

args[0] = arg0;

Object localObject = this.handler.invoke(this, methods[0], args);

return (String)localObject;

}

}
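The same delegation pattern can be reproduced with the JDK's own java.lang.reflect.Proxy. This is only a sketch under stated assumptions: Dubbo generates proxy0 as bytecode rather than through the JDK proxy shown here, and the Greeter interface and the handler body are hypothetical stand-ins for DemoService and Dubbo's InvocationHandler.

```java
import java.lang.reflect.InvocationHandler;
import java.lang.reflect.Proxy;

class ProxyDelegationSketch {
    // Hypothetical stand-in for DemoService.
    interface Greeter {
        String sayHello(String name);
    }

    // Builds a proxy whose method calls are all routed to one handler,
    // in the same way proxy0.sayHello() forwards to handler.invoke(...).
    static Greeter newGreeter() {
        InvocationHandler handler = (proxy, method, args) ->
                "handled " + method.getName() + "(" + args[0] + ")";
        return (Greeter) Proxy.newProxyInstance(
                Greeter.class.getClassLoader(),
                new Class<?>[] {Greeter.class},
                handler);
    }

    public static void main(String[] args) {
        System.out.println(newGreeter().sayHello("world"));
    }
}
```

The caller only sees the Greeter interface; everything that happens after the call is decided by the handler, which is where Dubbo plugs in its Invoker chain.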

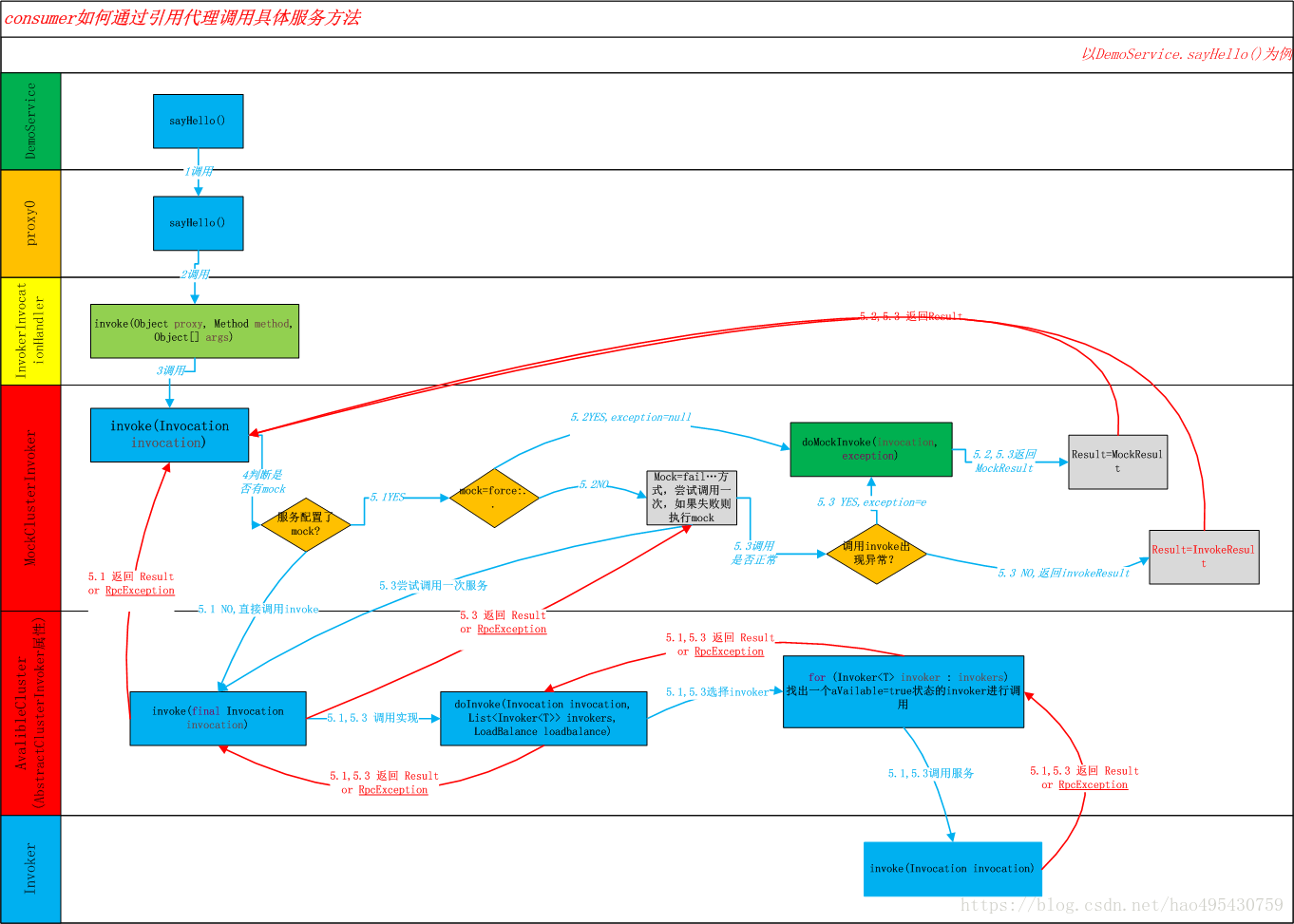

The overall interaction flow is as follows.

The call chain is:

proxy0.sayHello() -> InvocationHandler.invoke(Object proxy, Method method, Object[] args) -> MockClusterInvoker.invoke(Invocation invocation) -> AbstractClusterInvoker.invoke(Invocation invocation) (inside AvailableCluster) -> AbstractClusterInvoker.doInvoke(Invocation invocation, List<Invoker<T>> invokers, LoadBalance loadbalance) (inside AvailableCluster) -> Invoker.invoke()

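Let's first look at the MockClusterInvoker.invoke() method.

Main flow:

1. No mock configured: follow the normal call path.

2. mock=force:*** : skip the remote call and return the mock result directly.

3. mock=fail:*** : try invoke first; if a non-business RpcException is thrown, fall back to the mock result.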

public Result invoke(Invocation invocation) throws RpcException {

Result result = null;

String value = directory.getUrl().getMethodParameter(invocation.getMethodName(), Constants.MOCK_KEY, Boolean.FALSE.toString()).trim();

if (value.length() == 0 || value.equalsIgnoreCase("false")) {

//no mock

result = this.invoker.invoke(invocation); // no mock configured: follow the normal call path

} else if (value.startsWith("force")) {

if (logger.isWarnEnabled()) {

logger.info("force-mock: " + invocation.getMethodName() + " force-mock enabled , url : " + directory.getUrl());

}

//force:direct mock

result = doMockInvoke(invocation, null); // mock=force:*** : return the mock result directly

} else {

//fail-mock

try {

result = this.invoker.invoke(invocation); // mock=fail:*** : try invoke first; on exception fall back to the mock result

} catch (RpcException e) {

if (e.isBiz()) {

throw e;

} else {

if (logger.isWarnEnabled()) {

logger.info("fail-mock: " + invocation.getMethodName() + " fail-mock enabled , url : " + directory.getUrl(), e);

}

result = doMockInvoke(invocation, e);

}

}

}

return result;

}
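The three branches above depend only on the string value of the mock parameter. The sketch below isolates that decision so it can be tried without a running Dubbo setup; the class name, the Mode enum, and the decide method are hypothetical and not part of Dubbo.

```java
class MockDecisionSketch {
    enum Mode { NO_MOCK, FORCE_MOCK, FAIL_MOCK }

    // Mirrors the branch conditions of MockClusterInvoker.invoke():
    // empty or "false" means no mock, a "force" prefix means mock directly,
    // and any other value is treated as fail-mock.
    static Mode decide(String mockValue) {
        String value = mockValue == null ? "" : mockValue.trim();
        if (value.length() == 0 || value.equalsIgnoreCase("false")) {
            return Mode.NO_MOCK;
        } else if (value.startsWith("force")) {
            return Mode.FORCE_MOCK;
        }
        return Mode.FAIL_MOCK;
    }

    public static void main(String[] args) {
        System.out.println(decide("false"));
        System.out.println(decide("force:return null"));
        System.out.println(decide("fail:return null"));
        System.out.println(decide("true"));
    }
}
```

Note that any value other than empty, "false", or a "force" prefix lands in the fail-mock branch, so a plain "true" behaves like fail-mock in this logic.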

Next the Invoker inside AvailableCluster runs (that Invoker is an anonymous AbstractClusterInvoker subclass):

public class AvailableCluster implements Cluster {

public static final String NAME = "available";

public <T> Invoker<T> join(Directory<T> directory) throws RpcException {

return new AbstractClusterInvoker<T>(directory) {

public Result doInvoke(Invocation invocation, List<Invoker<T>> invokers, LoadBalance loadbalance) throws RpcException {

for (Invoker<T> invoker : invokers) {

if (invoker.isAvailable()) {

return invoker.invoke(invocation); // doInvoke executes the first available Invoker in the list

}

}

throw new RpcException("No provider available in " + invokers);

}

};

}

}
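The selection strategy in doInvoke is simply "first available wins"; the loadbalance argument is not consulted. A minimal sketch of that strategy, using a hypothetical Node interface in place of Dubbo's Invoker:

```java
import java.util.Arrays;
import java.util.List;

class FirstAvailableSketch {
    // Hypothetical minimal stand-in for Dubbo's Invoker.
    interface Node {
        boolean isAvailable();
        String invoke(String request);
    }

    static Node node(String name, boolean available) {
        return new Node() {
            public boolean isAvailable() { return available; }
            public String invoke(String request) { return name + " handled " + request; }
        };
    }

    // Walks the list in order and delegates to the first node that reports itself available.
    static String invokeFirstAvailable(List<Node> nodes, String request) {
        for (Node n : nodes) {
            if (n.isAvailable()) {
                return n.invoke(request);
            }
        }
        throw new IllegalStateException("No provider available");
    }

    public static void main(String[] args) {
        List<Node> nodes = Arrays.asList(node("a", false), node("b", true), node("c", true));
        System.out.println(invokeFirstAvailable(nodes, "sayHello"));
    }
}
```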

The Invoker at this point is the instance built by RegistryProtocol when it creates the refer invoker.

Therefore, after an available Invoker has been selected, the class call chain is:

MockClusterInvoker.invoke(Invocation invocation) -> FailoverClusterInvoker.invoke() -> RegistryDirectory.InvokerDelegete.invoke() -> ListenerInvokerWrapper.invoke() -> ProtocolFilterWrapper.invoke() [ConsumerContextFilter -> MonitorFilter -> FutureFilter] -> DubboInvoker.invoke() -> DubboInvoker.doInvoke()

Notes:

1. The RegistryDirectory.InvokerDelegete instance is created in RegistryDirectory.refreshInvokers -> toInvokers().

2. ListenerInvokerWrapper is the instance created by ProtocolListenerWrapper.refer when the reference is created:

public <T> Invoker<T> refer(Class<T> type, URL url) throws RpcException {

if (Constants.REGISTRY_PROTOCOL.equals(url.getProtocol())) {

return protocol.refer(type, url);

}

return new ListenerInvokerWrapper<T>(protocol.refer(type, url),

Collections.unmodifiableList(

ExtensionLoader.getExtensionLoader(InvokerListener.class)

.getActivateExtension(url, Constants.INVOKER_LISTENER_KEY)));

}

3. ProtocolFilterWrapper.invoke() runs the consumer-side Filter chain [chain order: ConsumerContextFilter -> MonitorFilter -> FutureFilter].

The Filter chain is built by the following code in ProtocolFilterWrapper:

public <T> Invoker<T> refer(Class<T> type, URL url) throws RpcException {

if (Constants.REGISTRY_PROTOCOL.equals(url.getProtocol())) {

return protocol.refer(type, url);

}

return buildInvokerChain(protocol.refer(type, url), Constants.REFERENCE_FILTER_KEY, Constants.CONSUMER);

}
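The body of buildInvokerChain is not shown in this article. A common way to build such a chain is to wrap the target Invoker from the last filter back to the first, so that the first filter ends up outermost. The sketch below illustrates that idea only; the Invoker and Filter interfaces here are simplified, hypothetical versions, not Dubbo's real signatures.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;

class FilterChainSketch {
    // Simplified, hypothetical versions of Invoker and Filter.
    interface Invoker { String invoke(String invocation); }
    interface Filter { String invoke(Invoker next, String invocation); }

    // Wraps the target starting from the last filter, so filters.get(0) runs first.
    static Invoker buildChain(Invoker target, List<Filter> filters) {
        Invoker last = target;
        for (int i = filters.size() - 1; i >= 0; i--) {
            final Filter filter = filters.get(i);
            final Invoker next = last;
            last = invocation -> filter.invoke(next, invocation);
        }
        return last;
    }

    // A filter that records its name and then passes the call on.
    static Filter named(String name, List<String> trace) {
        return (next, invocation) -> {
            trace.add(name);
            return next.invoke(invocation);
        };
    }

    // Runs one call through the chain and returns the order in which each stage was visited.
    static List<String> run() {
        List<String> trace = new ArrayList<>();
        Invoker target = invocation -> {
            trace.add("DubboInvoker");
            return "ok";
        };
        Invoker chain = buildChain(target, Arrays.asList(
                named("ConsumerContextFilter", trace),
                named("MonitorFilter", trace),
                named("FutureFilter", trace)));
        chain.invoke("sayHello");
        return trace;
    }

    public static void main(String[] args) {
        System.out.println(run());
    }
}
```

Each filter decides whether and when to call next.invoke, which is what lets a filter add behavior both before and after the remote call.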

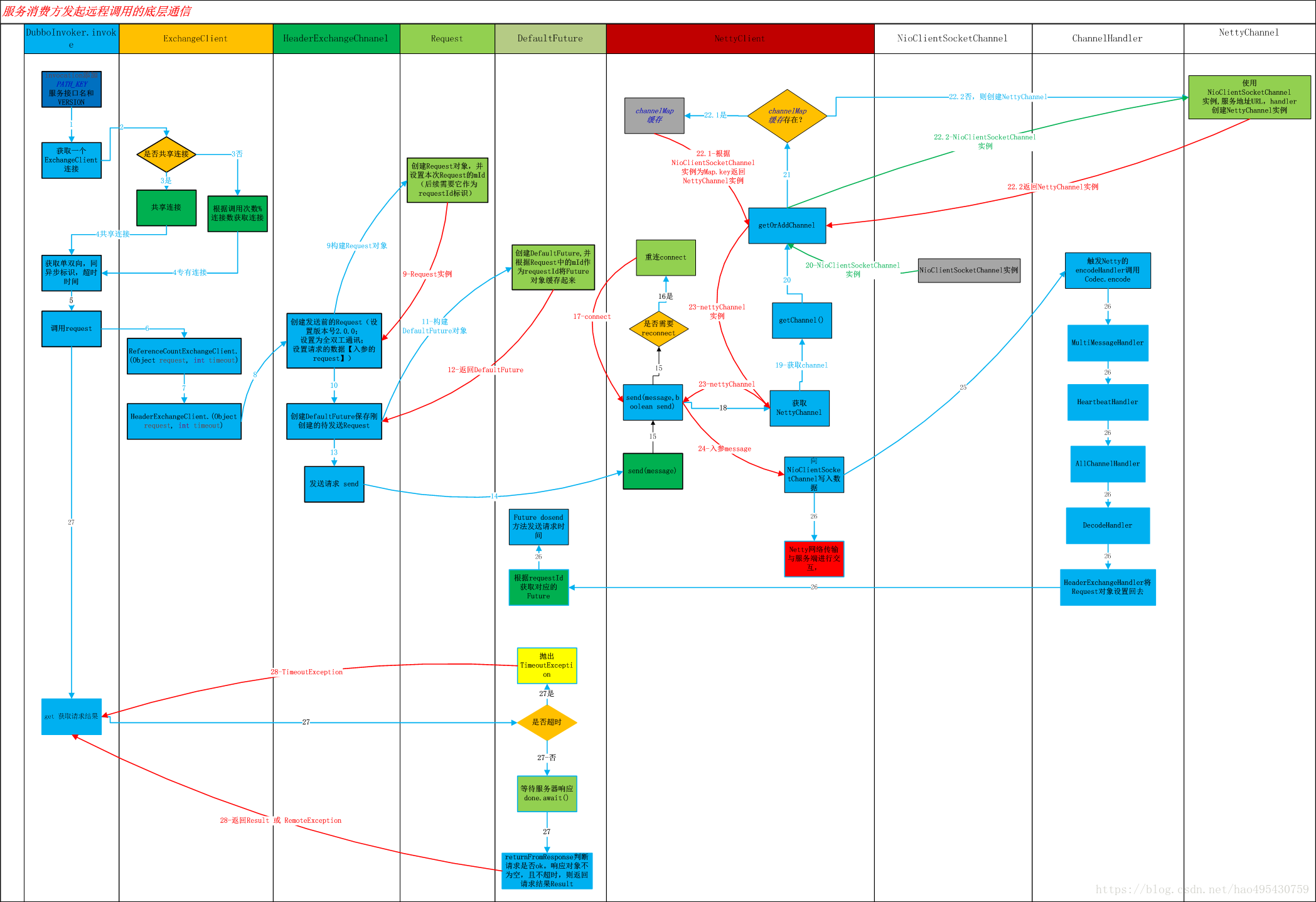

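DubboInvoker.invoke starts the remote call; the underlying communication is as follows.

Flow of Netty network transport and interaction with the server:

Let's first look at the source of DubboInvoker.doInvoke():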

protected Result doInvoke(final Invocation invocation) throws Throwable {

RpcInvocation inv = (RpcInvocation) invocation;

final String methodName = RpcUtils.getMethodName(invocation);

inv.setAttachment(Constants.PATH_KEY, getUrl().getPath());

inv.setAttachment(Constants.VERSION_KEY, version);

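/** 1. First obtain a connection. If there is a single shared connection, use it directly;

* if the service was started with multiple connections, pick one by taking the counter AtomicPositiveInteger index = new AtomicPositiveInteger() modulo the number of connections. */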

ExchangeClient currentClient;

if (clients.length == 1) {

currentClient = clients[0];

} else {

currentClient = clients[index.getAndIncrement() % clients.length];

}

try {

/** 2. Then determine whether the call is asynchronous or one-way (no return value, fire and forget) */

boolean isAsync = RpcUtils.isAsync(getUrl(), invocation);

boolean isOneway = RpcUtils.isOneway(getUrl(), invocation); // first check whether the service sets return=false, in which case isOneway=true; otherwise check the method-level return setting (default true)

int timeout = getUrl().getMethodParameter(methodName, Constants.TIMEOUT_KEY, Constants.DEFAULT_TIMEOUT);

if (isOneway) { // one-way call: no need to wait for a result

boolean isSent = getUrl().getMethodParameter(methodName, Constants.SENT_KEY, false);

currentClient.send(inv, isSent);

RpcContext.getContext().setFuture(null);

return new RpcResult();

} else if (isAsync) { // asynchronous call: store the Future in the ThreadLocal RpcContext

ResponseFuture future = currentClient.request(inv, timeout);

RpcContext.getContext().setFuture(new FutureAdapter<Object>(future));

return new RpcResult();

} else { // synchronous call: block until the result arrives

RpcContext.getContext().setFuture(null);

return (Result) currentClient.request(inv, timeout).get();

}

} catch (TimeoutException e) {

throw new RpcException(RpcException.TIMEOUT_EXCEPTION, "Invoke remote method timeout. method: " + invocation.getMethodName() + ", provider: " + getUrl() + ", cause: " + e.getMessage(), e);

} catch (RemotingException e) {

throw new RpcException(RpcException.NETWORK_EXCEPTION, "Failed to invoke remote method: " + invocation.getMethodName() + ", provider: " + getUrl() + ", cause: " + e.getMessage(), e);

}

}
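The connection selection in step 1 is a plain round-robin over the clients array. The sketch below shows the same idea with the JDK's AtomicInteger; it is an illustration, not Dubbo's code. The class name and the use of String in place of ExchangeClient are assumptions, and Math.floorMod is used here so that the index stays non-negative after the counter overflows (the source relies on AtomicPositiveInteger instead).

```java
import java.util.concurrent.atomic.AtomicInteger;

class ClientSelectionSketch {
    private final String[] clients; // stand-ins for ExchangeClient instances
    private final AtomicInteger index = new AtomicInteger();

    ClientSelectionSketch(String... clients) {
        this.clients = clients;
    }

    // Returns the single shared client, or rotates through the clients in order.
    String next() {
        if (clients.length == 1) {
            return clients[0];
        }
        // floorMod keeps the array index non-negative even if the counter wraps around.
        return clients[Math.floorMod(index.getAndIncrement(), clients.length)];
    }

    public static void main(String[] args) {
        ClientSelectionSketch selector = new ClientSelectionSketch("c0", "c1", "c2");
        for (int i = 0; i < 4; i++) {
            System.out.println(selector.next());
        }
    }
}
```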

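Next, currentClient.request sends the message. The call passes through ReferenceCountExchangeClient (a reference-counting wrapper) into HeaderExchangeClient.request(), and is then handled and finally sent in HeaderExchangeChannel.request (build the Request, set the version, the one-way/two-way mode, and so on). The source is: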

HeaderExchangeChannel.java

public ResponseFuture request(Object request, int timeout) throws RemotingException {

if (closed) {

throw new RemotingException(this.getLocalAddress(), null, "Failed to send request " + request + ", cause: The channel " + this + " is closed!");

}

// Create the Request; its constructor assigns mId, which identifies this call.

// Set the version, the data, and the one-way/two-way mode

Request req = new Request();

req.setVersion("2.0.0");

req.setTwoWay(true);

req.setData(request);

DefaultFuture future = new DefaultFuture(channel, req, timeout); // create a DefaultFuture and cache it under the Request's mId (used as the requestId)

try {

channel.send(req); // send; control passes to NettyClient.send

} catch (RemotingException e) {

future.cancel();

throw e;

}

return future;

}
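The key idea in request() is that the future is cached under the request id before the message is sent, so that a response arriving later on an I/O thread can be matched to its caller. The sketch below shows that correlation pattern with the JDK's CompletableFuture in place of Dubbo's DefaultFuture; all class and method names are hypothetical.

```java
import java.util.Map;
import java.util.concurrent.CompletableFuture;
import java.util.concurrent.ConcurrentHashMap;
import java.util.concurrent.atomic.AtomicLong;

class PendingRequestsSketch {
    private final AtomicLong nextId = new AtomicLong();
    private final Map<Long, CompletableFuture<Object>> pending = new ConcurrentHashMap<>();

    // Allocates a request id and caches a future under it before anything is sent.
    long newRequest() {
        long id = nextId.getAndIncrement();
        pending.put(id, new CompletableFuture<>());
        return id;
    }

    CompletableFuture<Object> futureOf(long id) {
        return pending.get(id);
    }

    // Called when a response arrives: looks up the future by id and completes it.
    // Returns false if no request with that id is still pending.
    boolean received(long id, Object result) {
        CompletableFuture<Object> future = pending.remove(id);
        return future != null && future.complete(result);
    }

    // Called when sending fails, mirroring future.cancel() in the catch block above.
    void cancel(long id) {
        CompletableFuture<Object> future = pending.remove(id);
        if (future != null) {
            future.cancel(false);
        }
    }

    public static void main(String[] args) {
        PendingRequestsSketch requests = new PendingRequestsSketch();
        long id = requests.newRequest();
        CompletableFuture<Object> future = requests.futureOf(id);
        requests.received(id, "Hello world");
        System.out.println(future.getNow(null));
    }
}
```

A synchronous call then amounts to blocking on the future with a timeout, while an asynchronous call hands the future to the caller and returns immediately.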

The send method in NettyClient.java:

public void send(Object message, boolean sent) throws RemotingException {

if (send_reconnect && !isConnected()) {

connect();

}

Channel channel = getChannel(); // getChannel looks up the cached NettyChannel for the NioSocketClientChannel; if none exists, a new NettyChannel is created and returned

if (channel == null || !channel.isConnected()) {

throw new RemotingException(this, "message can not send, because channel is closed . url:" + getUrl());

}

channel.send(message, sent);

}

protected com.alibaba.dubbo.remoting.Channel getChannel() {

Channel c = channel;

if (c == null || !c.isConnected())

return null;

return NettyChannel.getOrAddChannel(c, getUrl(), this);

}

static NettyChannel getOrAddChannel(org.jboss.netty.channel.Channel ch, URL url, ChannelHandler handler) {

if (ch == null) {

return null;

}

NettyChannel ret = channelMap.get(ch);

if (ret == null) {

NettyChannel nc = new NettyChannel(ch, url, handler);

if (ch.isConnected()) {

ret = channelMap.putIfAbsent(ch, nc);

}

if (ret == null) {

ret = nc;

}

}

return ret;

}
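getOrAddChannel uses the get-then-putIfAbsent idiom, so that concurrent callers end up sharing a single wrapper per underlying channel. A minimal sketch of that idiom follows; the String key and plain Object value are hypothetical stand-ins for the Netty channel and the NettyChannel wrapper, and the isConnected check from the original is left out.

```java
import java.util.concurrent.ConcurrentHashMap;
import java.util.concurrent.ConcurrentMap;

class ChannelCacheSketch {
    private static final ConcurrentMap<String, Object> CACHE = new ConcurrentHashMap<>();

    // Returns the cached wrapper for the key, creating and publishing one if absent.
    static Object getOrAdd(String key) {
        Object ret = CACHE.get(key);
        if (ret == null) {
            Object created = new Object();
            // putIfAbsent returns the existing value if another thread won the race,
            // or null if our wrapper was stored.
            ret = CACHE.putIfAbsent(key, created);
            if (ret == null) {
                ret = created;
            }
        }
        return ret;
    }

    public static void main(String[] args) {
        System.out.println(getOrAdd("ch-1") == getOrAdd("ch-1"));
        System.out.println(getOrAdd("ch-1") == getOrAdd("ch-2"));
    }
}
```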

Here NettyClient first checks whether the current connection is alive and reconnects if it is not; it then obtains the NettyChannel and uses that channel to send the data:

NettyChannel.java

public void send(Object message, boolean sent) throws RemotingException {

super.send(message, sent);

boolean success = true;

int timeout = 0;

try {

ChannelFuture future = channel.write(message); // write to the NioSocketClientChannel; when sent is true, wait on the returned future for up to timeout ms

if (sent) {

timeout = getUrl().getPositiveParameter(Constants.TIMEOUT_KEY, Constants.DEFAULT_TIMEOUT);

success = future.await(timeout);

}

Throwable cause = future.getCause();

if (cause != null) {

throw cause;

}

} catch (Throwable e) {

throw new RemotingException(this, "Failed to send message " + message + " to " + getRemoteAddress() + ", cause: " + e.getMessage(), e);

}

if (!success) {

throw new RemotingException(this, "Failed to send message " + message + " to " + getRemoteAddress()

+ "in timeout(" + timeout + "ms) limit");

}

}

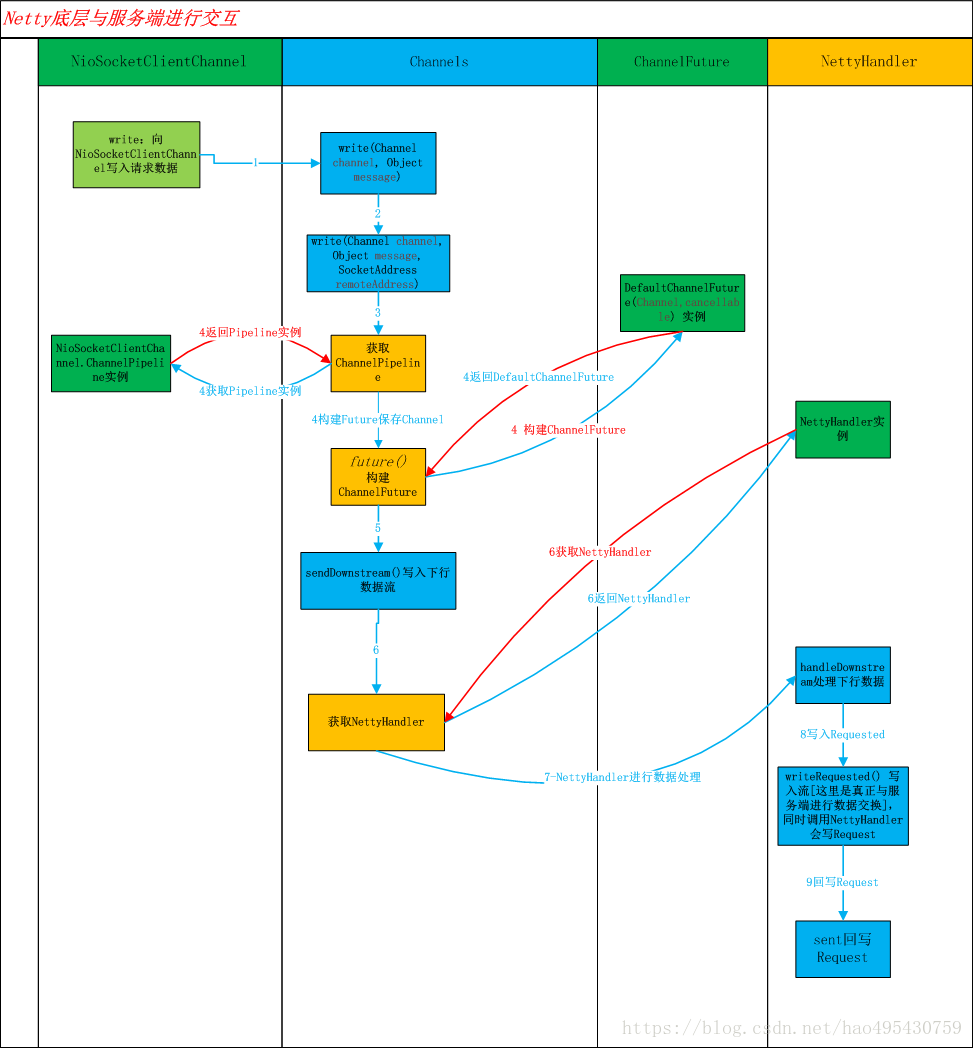

After the data is written to NioSocketClientChannel, control passes into Netty's underlying communication layer. The call sequence is:

NioSocketClientChannel.write() -> org.jboss.netty.channel.Channels.write(Channel channel, Object message) -> Channels.write(Channel channel, Object message, SocketAddress remoteAddress) (creates the downstream event) -> DefaultChannelPipeline.sendDownstream(), shown below:

Channels.java

public static ChannelFuture write(Channel channel, Object message, SocketAddress remoteAddress) {

ChannelFuture future = future(channel); // build the future

// send the event downstream through the Netty pipeline

channel.getPipeline().sendDownstream(

new DownstreamMessageEvent(channel, future, message, remoteAddress));

return future;

}

org.jboss.netty.channel.DefaultChannelPipeline.java

void sendDownstream(DefaultChannelHandlerContext ctx, ChannelEvent e) {

if (e instanceof UpstreamMessageEvent) {

throw new IllegalArgumentException("cannot send an upstream event to downstream");

}

try {

// Start walking the Handler chain here; the handler obtained is the NettyHandler created when the NettyClient instance was created

((ChannelDownstreamHandler) ctx.getHandler()).handleDownstream(ctx, e);

} catch (Throwable t) {

e.getFuture().setFailure(t);

notifyHandlerException(e, t);

}

}

NettyHandler.handleDownstream() dispatches on the event type; here it calls NettyHandler.writeRequested to send the data:

public void writeRequested(ChannelHandlerContext ctx, MessageEvent e) throws Exception {

// the actual data exchange with the server starts here

super.writeRequested(ctx, e);

NettyChannel channel = NettyChannel.getOrAddChannel(ctx.getChannel(), url, handler);

try {

// then invoke the Handler chain to pass the previously built Request object back up (the sent callback)

handler.sent(channel, e.getMessage());

} finally {

NettyChannel.removeChannelIfDisconnected(ctx.getChannel());

}

}

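When the Netty downstream event is set up, the NettyHandler is obtained. This first triggers Netty to encode the outgoing data; the class call relationship is shown in the figure below:

Which codec is used can be seen from the settings applied when NettyClient is created: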

NettyClient.java

public NettyClient(final URL url, final ChannelHandler handler) throws RemotingException {

super(url, wrapChannelHandler(url, handler));

}

Parent class AbstractEndpoint.java

public AbstractEndpoint(URL url, ChannelHandler handler) {

super(url, handler);

this.codec = getChannelCodec(url);

this.timeout = url.getPositiveParameter(Constants.TIMEOUT_KEY, Constants.DEFAULT_TIMEOUT);

this.connectTimeout = url.getPositiveParameter(Constants.CONNECT_TIMEOUT_KEY, Constants.DEFAULT_CONNECT_TIMEOUT);

}

protected static Codec2 getChannelCodec(URL url) {

String codecName = url.getParameter(Constants.CODEC_KEY, "telnet");

if (ExtensionLoader.getExtensionLoader(Codec2.class).hasExtension(codecName)) {

return ExtensionLoader.getExtensionLoader(Codec2.class).getExtension(codecName);

} else {

return new CodecAdapter(ExtensionLoader.getExtensionLoader(Codec.class)

.getExtension(codecName));

}

}

When the NettyClient is created, DubboProtocol.initClient specifies the codec while initializing the connection: url = url.addParameter(Constants.CODEC_KEY, DubboCodec.NAME);

From this we know that codec = com.alibaba.dubbo.rpc.protocol.dubbo.DubboCountCodec (see article 4 for details).

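Also, because NettyHandler wraps the Handler chain passed in from the upper layers when it is created (see the figure below), once the outgoing message has been encoded the Handler chain is invoked layer by layer, finally reaching the ExchangeHandlerAdapter in DubboProtocol.

After sending completes, HeaderExchangeHandler passes the sent Request back up.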
