上文已经说明了用户的协同过滤,这篇也来谈谈基于物品的协同过滤。

2.基于物品的协同过滤

类似的,也很容易做出一个简单的基于物品的过滤方法。

1. 单机基本算法实践

public static void ItemBased() {

try {

//DataModel model = new FileDataModel(new File("data/dataset.csv"));

DataModel model = new FileDataModel(new File("D:/tmp/recommandtestdata.csv"));

ItemSimilarity similarity = new PearsonCorrelationSimilarity(model);

ItemBasedRecommender recommender = new GenericItemBasedRecommender(model, similarity);

List<RecommendedItem> recommendations = recommender.recommend(2, 3);

for (RecommendedItem recommendation : recommendations) {

System.out.println(recommendation);

}

} catch (Exception e) {

e.printStackTrace();

}

}

一样可以采用上篇的数据,单机可以达到几百M,运行结果类似这样:

INFO - Creating FileDataModel for file D:\tmp\recommandtestdata.csv

INFO - Reading file info...

INFO - Processed 1000000 lines

。。。。。。(此处省略)

INFO - Processed 5000000 lines

INFO - Read lines: 5990001

INFO - Reading file info...

INFO - Processed 1000000 lines

INFO - Processed 2000000 lines

。。。。。。(此处省略)

INFO - Processed 999999 users

DEBUG - Recommending items for user ID '2'

DEBUG - Recommendations are: [RecommendedItem[item:7, value:4.0], RecommendedItem[item:5, value:4.0], RecommendedItem[item:3, value:4.0]]

RecommendedItem[item:7, value:4.0]

RecommendedItem[item:5, value:4.0]

RecommendedItem[item:3, value:4.0]

2. 分布式基于物品协同过滤实践

如果是到Hadoop上会怎么样呢?我们可以搞些百万级别的数据来试试。

用上面的方法生成数据,上传到HDFS。我上传到目录/user/hadoop/recommend。注意配置MAHOUT_CONF_DIR和HADOOP_CONF_DIR

export MAHOUT_HOME=/home/hadoop/mahout-0.11.0

export MAHOUT_CONF_DIR=$MAHOUT_HOME/conf

export HADOOP_CONF_DIR=$HADOOP_HOME/etc/hadoop

PATH=$PATH:$HOME/bin:$HADOOP_HOME/bin:$HADOOP_HOME/sbin:$HIVE_HOME/bin:$HBASE_HOME/bin:$SQOOP_HOME/bin:$SPARK_HOME/bin:$MAHOUT_HOME/bin

export PATH

下面就直接运行了。

$ mahout recommenditembased -s SIMILARITY_LOGLIKELIHOOD -i /user/hadoop/recommend/recommandtestdata.csv -o /user/hadoop/recommend/result --numRecommendations 25

通过-i -o指定输入输出

一般会遇到下面的错误:

1 , Error: Java heap space

可以修改hadoop-2.7.1/etc/hadoop/mapred-site.xml

<property>

<name>mapreduce.map.java.opts</name>

<value>-Xmx1024M</value>

</property>

<property>

<name>mapreduce.reduce.java.opts</name>

<value>-Xmx1024M</value>

</property>

我做的时候scp到其他的所有节点

然后执行 hadoop dfsadmin -refreshNodes 通知节点配置更改,这样就不用重启集群。

2,再次运行会报这样的错误:

Exception in thread "main" org.apache.hadoop.mapred.FileAlreadyExistsException: Output directory temp/preparePreferenceMatrix/itemIDIndex already exists

很简单,执行 hadoop fs -rm -r temp 删除这些目录,这个temp是当前用户的目录

打印的结果类似这样:

MAHOUT_LOCAL is not set; adding HADOOP_CONF_DIR to classpath.

Running on hadoop, using /home/hadoop/hadoop-2.7.1/bin/hadoop and HADOOP_CONF_DIR=/home/hadoop/hadoop-2.7.1/etc/hadoop

MAHOUT-JOB: /home/hadoop/mahout-0.11.0/mahout-examples-0.11.0-job.jar

。。。。。。

File Input Format Counters

Bytes Read=42435633

File Output Format Counters

Bytes Written=293110942

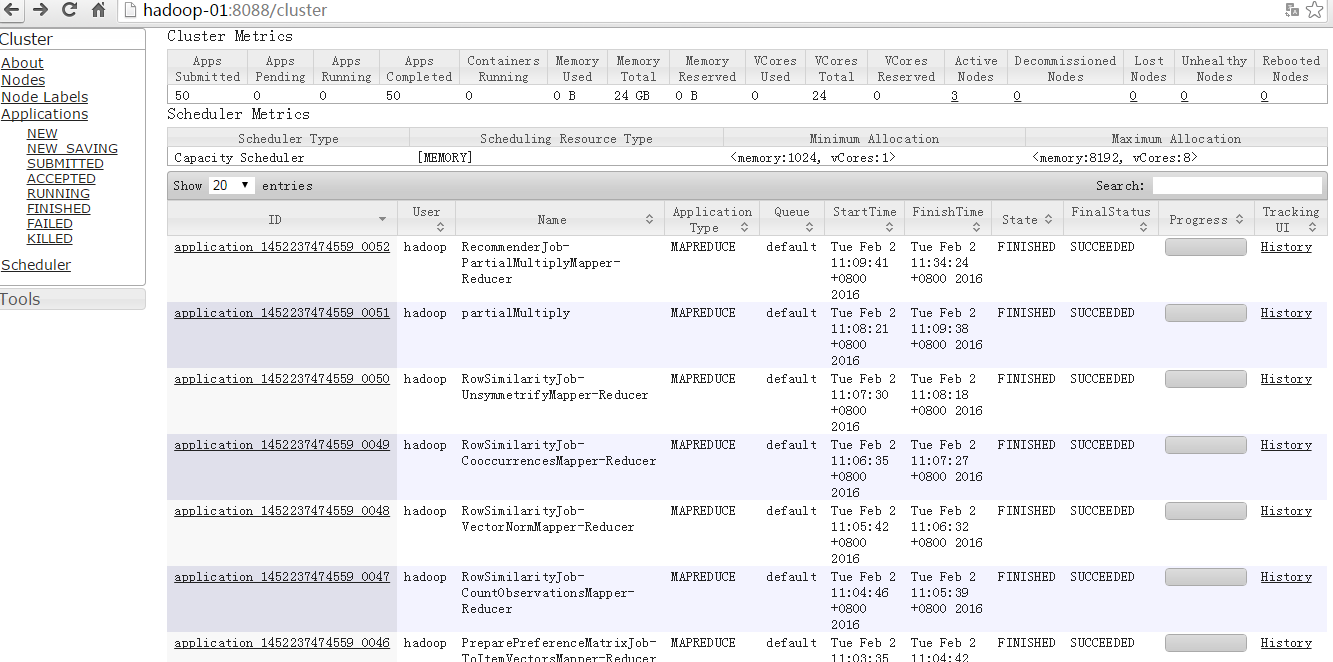

16/02/02 11:34:26 INFO MahoutDriver: Program took 2043686 ms (Minutes: 34.06143333333333)

最后运行的时间为34分钟,呵呵,我是3个虚拟机节点上运行的,user-item数据为600万,100万用户ID,1000物品ID,文件大小约为75M。

运行之后生成的结果文件类似这样:

[hadoop@Hadoop-01 ~]$ hadoop fs -tail /user/hadoop/recommend/result/part-r-00000

[944:3.5948825,665:3.503863,865:3.503816,489:3.5037806,645:3.503364,650:3.5012658,852:3.5012386,726:3.5012195,492:3.5012083,587:3.501197,800:3.5011563,153:3.5011272,526:3.5003066,863:3.4079022,884:3.4075432,465:3.3355443,100:3.3355238,89:3.2901146,655:3.1999612,530:3.1868088,938:3.1864436,26:3.1838968,631:3.170042,179:3.1388438,227:3.0067925]

999998 [935:2.0,242:2.0,686:2.0,606:2.0,931:2.0,620:2.0,135:2.0,253:2.0,285:2.0,612:2.0,397:2.0,260:2.0,243:2.0,179:2.0,328:2.0,569:1.7594754,67:1.7054411,852:1.6788439,563:1.6779507,570:1.6773306,792:1.6763401,594:1.6762494,87:1.6685698,800:1.6669749,158:1.6668898]

999999 [519:4.0,422:4.0,297:4.0,586:4.0,193:4.0,15:4.0,650:4.0,179:4.0,431:4.0,576:4.0,312:3.1151164,236:2.9857903,811:2.9856594,489:2.9852173,367:2.9850829,820:2.7635565,944:2.7610388,648:2.7313619,423:2.4961493,425:2.495597,716:2.4862907,50:2.4861178,901:2.4855912,362:2.4855912,983:2.4853737]

文件的格式是这样的:

UserID [ItemID1:score1,ItemID2:score2......] 这个userID是按顺序的,score也是按照从高到低排序的。

成功运行完了,还没有完事,我们可以去看看mapreduce执行的过程。

还是有不少的mapreduce过程,有时间在具体分析下里面的过程。

999

999

被折叠的 条评论

为什么被折叠?

被折叠的 条评论

为什么被折叠?