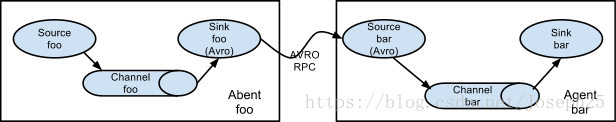

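Requirement: Agent foo periodically collects files from a folder and sends the collected data to Agent bar; Agent bar filters the data and then uploads it to HDFS.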

Step 1: A script links the log files into the monitored folder through symbolic links

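```bash
#!/bin/bash

# Hour stamp of the previous hour, in the form YYYY-MM-DD-HH
mydate=$(date -d '-1 hour' +%Y-%m-%d-%H)

# Directory watched by the Flume spooldir source
monitorDir="/opt/dap/monitor/"
# Directory where the hourly log files are written
filePath="/opt/dap/response/"
fileName="response-${mydate}.log"

echo "File path: ${filePath}"
echo "File name: ${fileName}"

if [ -f "${filePath}${fileName}" ]; then
    echo "--- log file exists ---"
    # Skip files that are already linked or that Flume has already processed (renamed to *.OK)
    if [ ! -f "${monitorDir}${fileName}" ] && [ ! -f "${monitorDir}${fileName}.OK" ]; then
        echo "--- symlink does not exist, creating it ---"
        ln -s "${filePath}${fileName}" "${monitorDir}${fileName}"
    fi
fi

exit
```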

Step 2: Write the configuration file for Flume Agent foo

```properties
a1.sources = r1
a1.sinks = k1
a1.channels = c1

# Source: watch the spooling directory for new files (spooldir)
a1.sources.r1.type = spooldir
a1.sources.r1.spoolDir = /opt/dap/monitor
# Files that have been fully read are renamed with this suffix and never deleted
a1.sources.r1.fileSuffix = .OK
a1.sources.r1.deletePolicy = never
a1.sources.r1.fileHeader = true
# Store the file's base name in the event header "fileName"
a1.sources.r1.basenameHeader = true
a1.sources.r1.basenameHeaderKey = fileName

# Sink: forward events to Agent bar over Avro
a1.sinks.k1.type = avro
a1.sinks.k1.hostname = 49.4.86.238
a1.sinks.k1.port = 44444

# Channel option 1 (disabled): file channel, buffers events on disk, which is safer
#a1.channels.c1.type = file
#a1.channels.c1.checkpointDir = /home/uplooking/data/flume/checkpoint
#a1.channels.c1.dataDirs = /home/uplooking/data/flume/data

# Channel option 2 (in use): memory channel, buffers events in memory
a1.channels.c1.type = memory
# Maximum number of events held in the channel
a1.channels.c1.capacity = 1000
# Maximum number of events per transaction
a1.channels.c1.transactionCapacity = 100

# Connect source r1 and sink k1 through channel c1
a1.sources.r1.channels = c1
a1.sinks.k1.channel = c1
```

Step 3: Write the interceptor (filter) code, then package it as a jar

```java
package com.dap;

import java.util.List;
import java.util.regex.Matcher;
import java.util.regex.Pattern;

import org.apache.flume.Context;
import org.apache.flume.Event;
import org.apache.flume.interceptor.Interceptor;

import com.google.common.base.Charsets;
import com.google.common.collect.Lists;

import static org.apache.flume.interceptor.RegexFilteringInterceptor.Constants.DEFAULT_REGEX;
import static org.apache.flume.interceptor.RegexFilteringInterceptor.Constants.REGEX;

public class MyInterceptor implements Interceptor {

    // Custom regular expression: keep log lines that contain the marker "请求数据 ===>"
    // ("request data ===>"). Compiled once instead of once per event.
    // Alternative that matches segments starting with "Parsing events" and ending with "END":
    // "(Parsing events)(.*)(END)"
    private static final Pattern REQUEST_PATTERN = Pattern.compile("(.*)(请求数据 ===>)(.*)");

    // Regex read from the agent configuration; currently not used by the filter
    @SuppressWarnings("unused")
    private final Pattern regex;

    private MyInterceptor(Pattern regex) {
        this.regex = regex;
    }

    @Override
    public void initialize() {
        // NO-OP...
    }

    @Override
    public void close() {
        // NO-OP...
    }

    @Override
    public Event intercept(Event event) {
        String body = new String(event.getBody(), Charsets.UTF_8);
        Matcher m = REQUEST_PATTERN.matcher(body);
        // Several patterns can be tested here and combined with && or ||;
        // the results of multiple matches can be concatenated into the new body.
        if (m.find()) {
            // Encode with the same charset that was used for decoding
            event.setBody(m.group(0).getBytes(Charsets.UTF_8));
            return event;
        }
        // Returning null drops the event
        return null;
    }

    @Override
    public List<Event> intercept(List<Event> events) {
        List<Event> intercepted = Lists.newArrayListWithCapacity(events.size());
        for (Event event : events) {
            Event interceptedEvent = intercept(event);
            if (interceptedEvent != null) {
                intercepted.add(interceptedEvent);
            }
        }
        return intercepted;
    }

    // Flume creates the interceptor through this Builder
    public static class Builder implements Interceptor.Builder {

        private Pattern regex;

        @Override
        public Interceptor build() {
            return new MyInterceptor(regex);
        }

        @Override
        public void configure(Context context) {
            String regexString = context.getString(REGEX, DEFAULT_REGEX);
            regex = Pattern.compile(regexString);
        }
    }
}
```
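To check the filter rule without starting Flume, the matching logic can be tried on plain strings. The following is a minimal sketch that only reuses the regular expression from the interceptor; the class name `RequestLineFilterDemo`, its helper methods, and the sample log lines are hypothetical and are not part of the original project.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;
import java.util.regex.Matcher;
import java.util.regex.Pattern;

public class RequestLineFilterDemo {

    // Same pattern as the interceptor: any line that contains the marker "请求数据 ===>"
    private static final Pattern REQUEST_PATTERN = Pattern.compile("(.*)(请求数据 ===>)(.*)");

    /** Returns the matched text, or null when the line would be dropped. */
    static String filterLine(String line) {
        Matcher m = REQUEST_PATTERN.matcher(line);
        return m.find() ? m.group(0) : null;
    }

    /** Batch variant: keeps only the lines for which filterLine returns non-null. */
    static List<String> filterLines(List<String> lines) {
        List<String> kept = new ArrayList<>();
        for (String line : lines) {
            String result = filterLine(line);
            if (result != null) {
                kept.add(result);
            }
        }
        return kept;
    }

    public static void main(String[] args) {
        // Hypothetical sample lines, made up for illustration
        List<String> sample = Arrays.asList(
                "10:00:00 INFO 请求数据 ===> {\"id\": 1}",
                "10:00:01 INFO heartbeat ok");
        for (String line : filterLines(sample)) {
            System.out.println(line);
        }
    }
}
```

Because `.` does not match line breaks, `group(0)` is the whole line that contains the marker, so a single-line event body passes through unchanged when it matches and is dropped otherwise.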

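Step 4: Write the Agent bar configuration file, put the jar built from the code above into /usr/flume/lib, then restart Flume

```properties
## Note: running a Flume agent mainly comes down to configuring source, channel and sink
## Below, a1 is the agent name; the source is r1, the channel is c1, the sink is k1
#########################################################
a1.sources = r1
a1.sinks = k1
a1.channels = c1

# Source: listen for Avro events sent by Agent foo
a1.sources.r1.type = avro
a1.sources.r1.bind = 192.168.92.215
a1.sources.r1.port = 44444
# Attach the custom interceptor through its Builder class
a1.sources.r1.interceptors = i1
a1.sources.r1.interceptors.i1.type = com.dap.MyInterceptor$Builder

# Sink: write events to HDFS
a1.sinks.k1.type = hdfs
a1.sinks.k1.hdfs.path = hdfs://192.168.92.215:8020/home/flume6/%Y%m%d
# Use the "fileName" header set by Agent foo as the file prefix
a1.sinks.k1.hdfs.filePrefix = %{fileName}
#a1.sinks.k1.hdfs.fileSuffix = .com

# Round the timestamp down (here to a multiple of 3 minutes) before resolving time escapes.
# The path above only uses %Y%m%d, so the rounding does not change the directory name.
a1.sinks.k1.hdfs.round = true
a1.sinks.k1.hdfs.roundValue = 3
a1.sinks.k1.hdfs.roundUnit = minute
# Use the local time of this agent instead of a timestamp header on the event
a1.sinks.k1.hdfs.useLocalTimeStamp = true

# Roll the temporary file into a final file after this many events; 0 disables rolling by event count
a1.sinks.k1.hdfs.rollCount = 0
# Roll the file once it reaches 134217728 bytes (128 MB)
a1.sinks.k1.hdfs.rollSize = 134217728

# With these two settings the data is stored in HDFS as plain text.
# With the default fileType (SequenceFile) the files are binary SequenceFiles instead.
# The serializer key has no "hdfs." prefix.
a1.sinks.k1.serializer = TEXT
a1.sinks.k1.hdfs.fileType = DataStream

# Channel: buffer events in memory
a1.channels.c1.type = memory
a1.channels.c1.capacity = 1000
a1.channels.c1.transactionCapacity = 100

# Connect source r1 and sink k1 through channel c1
a1.sources.r1.channels = c1
a1.sinks.k1.channel = c1
```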
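The effect of `hdfs.round`, `hdfs.roundValue = 3` and `hdfs.roundUnit = minute` on the `%Y%m%d` path can be pictured with a little arithmetic. The sketch below only illustrates the idea of rounding a timestamp down to a multiple of 3 minutes; it is not Flume's own implementation, and the class name `RoundDownDemo`, its methods, and the sample event time are assumptions made for this example.

```java
import java.time.LocalDateTime;
import java.time.format.DateTimeFormatter;
import java.time.temporal.ChronoUnit;

public class RoundDownDemo {

    /** Rounds a timestamp down to the nearest multiple of stepMinutes within its hour. */
    static LocalDateTime roundDownMinutes(LocalDateTime t, int stepMinutes) {
        int rounded = (t.getMinute() / stepMinutes) * stepMinutes;
        // truncatedTo(HOURS) zeroes minutes, seconds and nanoseconds
        return t.truncatedTo(ChronoUnit.HOURS).withMinute(rounded);
    }

    /** Formats the date the way a yyyyMMdd directory name (the %Y%m%d escape) looks. */
    static String dayDirectory(LocalDateTime t) {
        return t.format(DateTimeFormatter.ofPattern("yyyyMMdd"));
    }

    public static void main(String[] args) {
        // Hypothetical event time: 10:08:42 rounds down to 10:06:00
        LocalDateTime eventTime = LocalDateTime.of(2019, 1, 1, 10, 8, 42);
        LocalDateTime rounded = roundDownMinutes(eventTime, 3);
        System.out.println(rounded);
        System.out.println("/home/flume6/" + dayDirectory(rounded));
    }
}
```

Since the directory name contains only the date, the rounded and unrounded timestamps resolve to the same directory; rounding would only become visible if the path also contained hour or minute escapes.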

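Note: When starting up, start the receiving side (Agent bar) with its configuration file first, then start the collecting side (Agent foo). This task was completed together with a teammate (陈*).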
