1 Preparation

- namenode1
- namenode2
- journalnode1
- journalnode2
- journalnode3

2 Configuration

2.1 core-site.xml

    <configuration>
      <property>
        <name>fs.defaultFS</name>
        <value>hdfs://mycluster</value>
      </property>
      <property>
        <name>hadoop.tmp.dir</name>
        <value>/home/tmp/hadoop2.0</value>
      </property>
    </configuration>
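In an HA setup the authority part of `fs.defaultFS` is a logical nameservice ID rather than a host name, so it has to match `dfs.nameservices` in hdfs-site.xml. The following is a minimal sketch of pulling that ID out of the URI for a quick sanity check; the function name `nameservice_from_uri` is made up for illustration.

```shell
# Extract the authority part of an hdfs:// URI.
# With HA enabled this is the logical nameservice ID, not a hostname.
nameservice_from_uri() {
    uri=$1
    case "$uri" in
        hdfs://*) ;;
        *) echo "not an hdfs:// URI: $uri" >&2; return 1 ;;
    esac
    rest=${uri#hdfs://}            # drop the scheme
    printf '%s\n' "${rest%%/*}"    # drop any path after the authority
}
```

Called as `nameservice_from_uri hdfs://mycluster`, it prints `mycluster`, which is the value to compare against `dfs.nameservices`.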

2.2 hdfs-site.xml

    <configuration>
      <property>
        <name>dfs.replication</name>
        <value>1</value>
      </property>
      <property>
        <name>dfs.namenode.name.dir</name>
        <value>/home/dfs/name</value>
      </property>
      <property>
        <name>dfs.datanode.data.dir</name>
        <value>/home/dfs/data</value>
      </property>
      <property>
        <name>dfs.permissions</name>
        <value>false</value>
      </property>
      <property>
        <name>dfs.nameservices</name>
        <value>mycluster</value>
      </property>
      <property>
        <name>dfs.ha.namenodes.mycluster</name>
        <value>nn1,nn2</value>
      </property>
      <property>
        <name>dfs.namenode.rpc-address.mycluster.nn1</name>
        <value>namenode1:8020</value>
      </property>
      <property>
        <name>dfs.namenode.rpc-address.mycluster.nn2</name>
        <value>namenode2:8020</value>
      </property>
      <property>
        <name>dfs.namenode.http-address.mycluster.nn1</name>
        <value>namenode1:50070</value>
      </property>
      <property>
        <name>dfs.namenode.http-address.mycluster.nn2</name>
        <value>namenode2:50070</value>
      </property>
      <property>
        <name>dfs.namenode.shared.edits.dir</name>
        <value>qjournal://journalnode1:8485;journalnode2:8485;journalnode3:8485/mycluster</value>
      </property>
      <property>
        <name>dfs.journalnode.edits.dir</name>
        <value>/home/dfs/journal</value>
      </property>
      <property>
        <name>dfs.client.failover.proxy.provider.mycluster</name>
        <value>org.apache.hadoop.hdfs.server.namenode.ha.ConfiguredFailoverProxyProvider</value>
      </property>
      <property>
        <name>dfs.ha.fencing.methods</name>
        <value>sshfence</value>
      </property>
      <property>
        <name>dfs.ha.fencing.ssh.private-key-files</name>
        <value>/root/.ssh/id_rsa</value>
      </property>
      <property>
        <name>dfs.ha.fencing.ssh.connect-timeout</name>
        <value>6000</value>
      </property>
      <property>
        <name>dfs.ha.automatic-failover.enabled</name>
        <value>false</value>
      </property>
    </configuration>
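The `qjournal://` value lists the JournalNodes separated by `;`, followed by the journal ID. Edits have to reach a majority of those nodes, so N JournalNodes tolerate (N - 1) / 2 failures. As a rough illustration, the sketch below counts the hosts in such a URI and prints the majority size; `journal_quorum` is a hypothetical helper, not a Hadoop command.

```shell
# Count the JournalNodes in a qjournal:// URI and print how many must be
# reachable (a majority) and how many may fail.
journal_quorum() {
    uri=$1
    hosts=${uri#qjournal://}    # strip the scheme
    hosts=${hosts%%/*}          # strip the trailing /<journal id>
    # Hosts are separated by ';' so turn them into one host per line.
    total=$(printf '%s\n' "$hosts" | tr ';' '\n' | grep -c .)
    majority=$(( total / 2 + 1 ))
    tolerated=$(( total - majority ))
    echo "journalnodes=$total majority=$majority tolerated_failures=$tolerated"
}
```

For the three JournalNodes configured above, two must be up and one may fail. Quote the URI when calling the function, since `;` is a command separator in the shell.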

- dfs.ha.automatic-failover.enabled
- dfs.ha.namenodes.mycluster
- dfs.namenode.shared.edits.dir
- dfs.journalnode.edits.dir
- dfs.ha.fencing.methods
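Several of the HA property names embed the nameservice ID and the NameNode IDs, which makes them easy to mistype. The sketch below prints the names one would expect for a given nameservice and ID list, so they can be compared with hdfs-site.xml; `ha_property_names` is an illustrative helper and covers only the per-nameservice keys used in this post.

```shell
# Print the per-nameservice property names that hdfs-site.xml is expected
# to define, given a nameservice ID and a comma-separated list of NameNode IDs.
ha_property_names() {
    ns=$1
    ids=$2
    echo "dfs.ha.namenodes.$ns"
    for id in $(printf '%s' "$ids" | tr ',' ' '); do
        echo "dfs.namenode.rpc-address.$ns.$id"
        echo "dfs.namenode.http-address.$ns.$id"
    done
    echo "dfs.client.failover.proxy.provider.$ns"
}
```

Running `ha_property_names mycluster nn1,nn2` lists the six names that appear in the configuration above.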

3 Startup

3.1 Start the JournalNode on each journalnode machine first

    $HADOOP_HOME/sbin/hadoop-daemon.sh start journalnode
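The JournalNodes have to be running before the NameNode is formatted or started, because the NameNode writes its edits to them. If logging in to each machine by hand is inconvenient, the start command can be looped over ssh. This is only a sketch: it assumes passwordless ssh to every host and that `HADOOP_HOME` is set in the remote non-interactive shell; `start_journalnodes` and the `DRY_RUN` switch are made up for illustration.

```shell
# Start a JournalNode on each host given as an argument.
# Assumptions: passwordless ssh, and HADOOP_HOME defined on the remote side.
# Set DRY_RUN=1 to print the commands instead of running them.
start_journalnodes() {
    for host in "$@"; do
        # Single quotes: $HADOOP_HOME is expanded on the remote host, not locally.
        cmd='$HADOOP_HOME/sbin/hadoop-daemon.sh start journalnode'
        if [ "${DRY_RUN:-0}" = "1" ]; then
            echo "ssh $host $cmd"
        else
            ssh "$host" "$cmd" || { echo "failed on $host" >&2; return 1; }
        fi
    done
}
```

A dry run such as `DRY_RUN=1 start_journalnodes journalnode1 journalnode2 journalnode3` shows the three ssh commands without touching the machines.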

3.2 Start the NameNode on the namenode machines

3.2.1

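First-time start (a new cluster): format the NameNode.

    $HADOOP_HOME/bin/hadoop namenode -format

Non-first-time start (HA added to a cluster that is already running): initialize the shared edits instead.

    $HADOOP_HOME/bin/hdfs namenode -initializeSharedEdits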

3.2.2

    $HADOOP_HOME/sbin/hadoop-daemon.sh start namenode

3.2.3

    $HADOOP_HOME/sbin/hadoop-daemon.sh start namenode -bootstrapStandby

3.2.4

    $HADOOP_HOME/sbin/hadoop-daemon.sh start namenode

3.2.5

    $HADOOP_HOME/bin/hdfs haadmin -transitionToActive nn1
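With automatic failover disabled, both NameNodes come up in standby state, which is why nn1 is switched to active by hand. `hdfs haadmin -getServiceState <id>` reports the current role of a NameNode. A small sketch that prints the state of several IDs follows; `print_ha_states` and the `HDFS_BIN` override are hypothetical names introduced here.

```shell
# Print "<id> <state>" for each NameNode ID, using `hdfs haadmin -getServiceState`.
# HDFS_BIN is a hypothetical override; it defaults to $HADOOP_HOME/bin/hdfs.
print_ha_states() {
    hdfs_bin=${HDFS_BIN:-$HADOOP_HOME/bin/hdfs}
    for id in "$@"; do
        # Fall back to "unknown" when the NameNode cannot be queried.
        state=$("$hdfs_bin" haadmin -getServiceState "$id" 2>/dev/null) || state=unknown
        echo "$id $state"
    done
}
```

After the transition, `print_ha_states nn1 nn2` should report nn1 as active and nn2 as standby.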

3.3 Start the DataNode on each datanode machine

    $HADOOP_HOME/sbin/hadoop-daemon.sh start datanode

3.4 Verification
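One possible check, assuming a JDK with `jps` on the PATH, is to confirm that the expected daemon (`JournalNode`, `NameNode` or `DataNode`) shows up in the process list of each machine. The NameNode web pages at namenode1:50070 and namenode2:50070, as configured in hdfs-site.xml, are another place to look. The helper name `check_daemons` below is made up for this sketch.

```shell
# Read `jps` output on stdin and report which of the expected daemons are listed.
# The exit status is 0 only when every expected daemon is present.
check_daemons() {
    listing=$(cat)
    missing=0
    for name in "$@"; do
        # jps prints "<pid> <main class>"; match the second column exactly.
        if printf '%s\n' "$listing" | awk -v n="$name" '$2 == n { found = 1 } END { exit !found }'; then
            echo "ok: $name"
        else
            echo "missing: $name"
            missing=1
        fi
    done
    return "$missing"
}
```

On a NameNode machine this would be run as `jps | check_daemons NameNode`, and on a JournalNode machine as `jps | check_daemons JournalNode`.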

4 Testing

    $HADOOP_HOME/bin/hdfs haadmin -transitionToActive nn2

    $HADOOP_HOME/bin/hdfs haadmin -failover nn1 nn2
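Of the two commands, `-transitionToActive` only changes the state of the named NameNode and does no fencing, while `-failover` performs a coordinated switch from the first NameNode to the second. To confirm that a manual failover really changed the roles, the states can be queried afterwards. The following is a sketch under the assumption that `hdfs haadmin -getServiceState` prints `active` or `standby`; `failover_and_verify` and the `HDFS_BIN` override are hypothetical names.

```shell
# Run a manual failover and confirm the roles afterwards.
# HDFS_BIN is a hypothetical override; it defaults to $HADOOP_HOME/bin/hdfs.
failover_and_verify() {
    from=$1
    to=$2
    hdfs_bin=${HDFS_BIN:-$HADOOP_HOME/bin/hdfs}
    "$hdfs_bin" haadmin -failover "$from" "$to" || return 1
    # The target must now be active and the source standby.
    [ "$("$hdfs_bin" haadmin -getServiceState "$to")" = "active" ] || return 1
    [ "$("$hdfs_bin" haadmin -getServiceState "$from")" = "standby" ] || return 1
    echo "$to is active, $from is standby"
}
```

`failover_and_verify nn1 nn2` returns a non-zero status if the failover command fails or if either NameNode ends up in an unexpected state.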

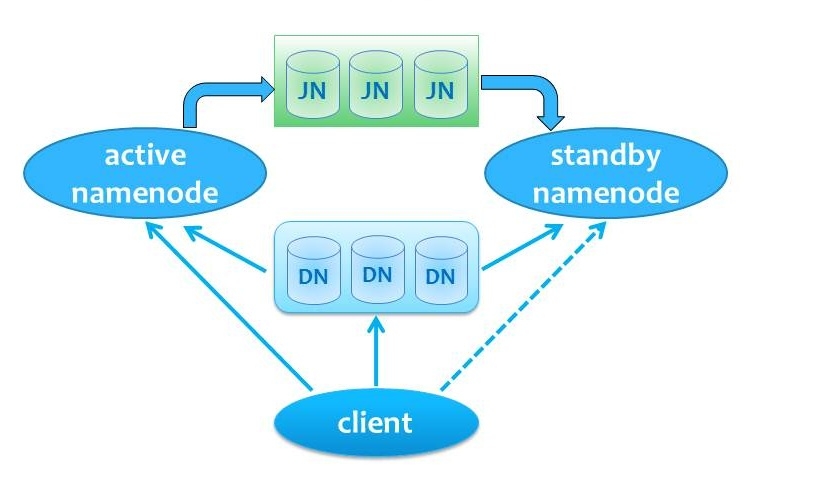

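5 Architecture diagram of QJM-based HA

6 Practical tips

- Any configuration or operation done on namenode1 must also be done on namenode2, so that the two machines stay consistent.
- Note the difference between a first-time start (the cluster is HA from its very first start) and a non-first-time start (HA is added after the cluster has been running for a while).
- Automatic failover tends to run into ssh login and permission problems, and some tests published online report that automatic switching occasionally fails. For production, manual failover is therefore still recommended: it is more reliable, and problems can be investigated right away.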
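For the first tip, a quick way to catch configuration drift is to compare the two site files from both NameNodes. The sketch below assumes both configuration directories are available locally, for example after copying namenode2's `$HADOOP_HOME/etc/hadoop` over with scp; `configs_match` is an illustrative helper.

```shell
# Compare core-site.xml and hdfs-site.xml between two configuration directories.
# Returns 0 only when both files are byte-identical in the two directories.
configs_match() {
    dir_a=$1
    dir_b=$2
    status=0
    for f in core-site.xml hdfs-site.xml; do
        if cmp -s "$dir_a/$f" "$dir_b/$f"; then
            echo "same: $f"
        else
            echo "differs: $f"
            status=1
        fi
    done
    return "$status"
}
```

A byte-for-byte comparison is deliberately strict: even a whitespace difference is reported, which is usually what one wants for files that should simply be copies of each other.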
