Building the Kafka 2.6 source code in IntelliJ IDEA

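Our project is about to integrate Kafka and Flink, so I took the opportunity to study the Kafka source code and built it locally. I ran into quite a few pitfalls along the way.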

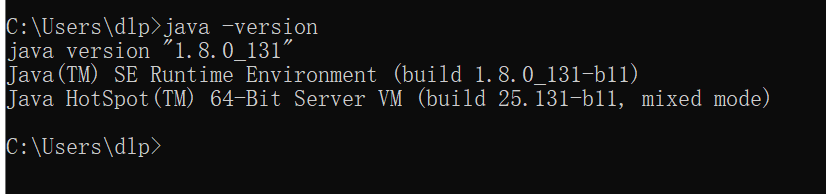

1. Install the JDK

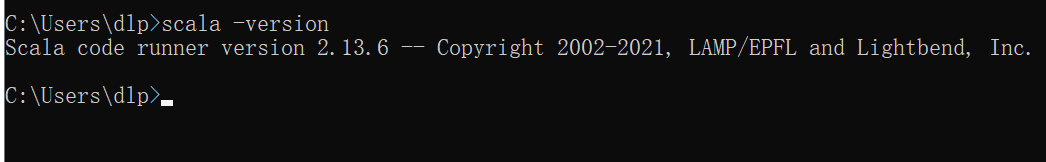

2. Install Scala

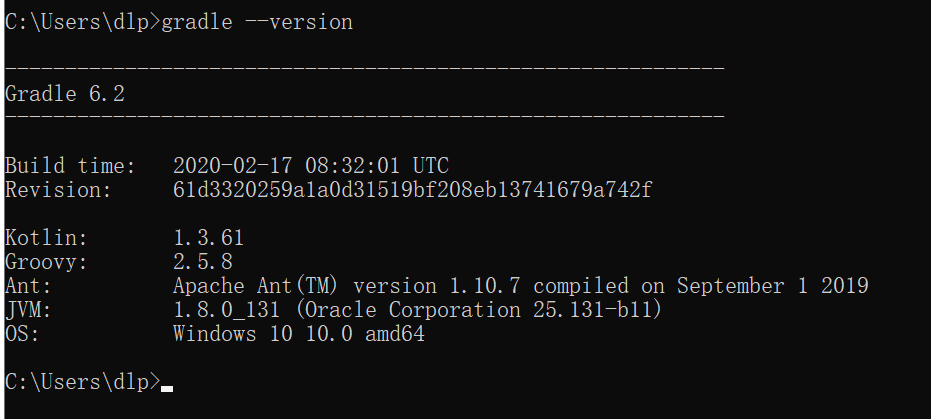

3. Install Gradle

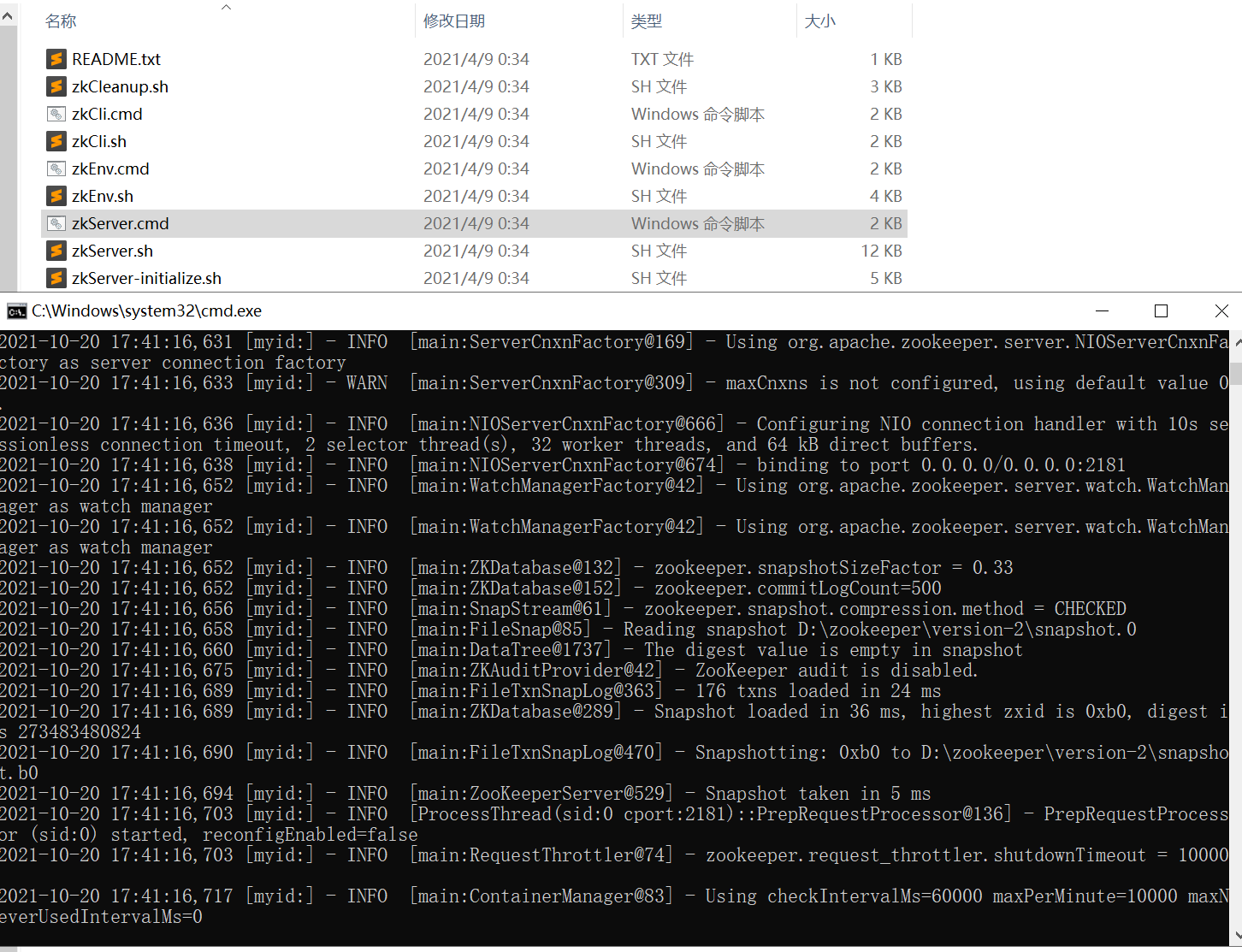

4. Start ZooKeeper locally

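Download ZooKeeper from the official website, extract it, and adjust the configuration: copy zoo_sample.cfg to zoo.cfg and give it the following contents: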

    # The number of milliseconds of each tick
    tickTime=2000
    # The number of ticks that the initial
    # synchronization phase can take
    initLimit=10
    # The number of ticks that can pass between
    # sending a request and getting an acknowledgement
    syncLimit=5
    # the directory where the snapshot is stored.
    # do not use /tmp for storage, /tmp here is just
    # example sakes.
    dataDir=D:/zookeeper
    # the port at which the clients will connect
    clientPort=2181
    # the maximum number of client connections.
    # increase this if you need to handle more clients
    #maxClientCnxns=60

This is where my problem kept coming from. I have a server with ZooKeeper installed on it, and the configuration of my local Kafka source tree always pointed at that server. As a result, Kafka stopped right after it was started. At first I assumed it was a Kafka version problem and set the whole thing aside for a long time. Yesterday I tried a local ZooKeeper instead, and it worked.
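Since the broker exits quickly when it cannot reach ZooKeeper, it can help to confirm that something is actually listening on the configured client port before launching Kafka. Below is a minimal sketch of such a check, not part of my original setup: the class name ZkPortCheck and its two helpers are hypothetical, and the check only reads clientPort from zoo.cfg-style text and attempts a plain TCP connection. It does not prove that the listener really is ZooKeeper.

```java
import java.io.IOException;
import java.io.StringReader;
import java.io.UncheckedIOException;
import java.net.InetSocketAddress;
import java.net.Socket;
import java.util.Properties;

// Hypothetical helper: checks that the port named in zoo.cfg accepts TCP connections.
public class ZkPortCheck {

    // zoo.cfg consists of key=value lines with '#' comments,
    // so java.util.Properties can read it.
    public static int clientPort(String zooCfgText) {
        Properties cfg = new Properties();
        try {
            cfg.load(new StringReader(zooCfgText));
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
        // Fall back to 2181, the port used in the configuration above.
        return Integer.parseInt(cfg.getProperty("clientPort", "2181").trim());
    }

    // Returns true if a TCP connection to host:port succeeds within timeoutMs.
    public static boolean isListening(String host, int port, int timeoutMs) {
        try (Socket socket = new Socket()) {
            socket.connect(new InetSocketAddress(host, port), timeoutMs);
            return true;
        } catch (IOException e) {
            return false;
        }
    }

    public static void main(String[] args) {
        int port = clientPort("tickTime=2000\nclientPort=2181\n");
        System.out.println("ZooKeeper port " + port + " reachable: "
                + isListening("localhost", port, 2000));
    }
}
```

If this reports the port as unreachable, it is probably worth fixing ZooKeeper first rather than suspecting the Kafka version, which is the detour I took.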

Then start ZooKeeper by running its cmd script.

5. Generate an IDEA project from the Kafka source

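Download the Kafka source code from the official website (I used version 2.6.0), run gradle idea in the source directory, and install the Scala plugin in IDEA. Then import the source into IDEA and make the following changes: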

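- In server.properties under config, change the Kafka log directory and the ZooKeeper connection settings.

- Move log4j.properties to kafka-2.6.0-src\core\src\main\resources\log4j.properties.

- Modify build.gradle as shown below, otherwise no logs are printed. Older versions do not need this change; I tested this with 0.10.0.1.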

    project(':core') {
      println "Building project 'core' with Scala version ${versions.scala}"

      apply plugin: 'scala'
      apply plugin: "org.scoverage"
      archivesBaseName = "kafka_${versions.baseScala}"

      dependencies {
        compile project(':clients')
        compile libs.jacksonDatabind
        compile libs.jacksonModuleScala
        compile libs.jacksonDataformatCsv
        compile libs.jacksonJDK8Datatypes
        compile libs.joptSimple
        compile libs.metrics
        compile libs.scalaCollectionCompat
        compile libs.scalaJava8Compat
        compile libs.scalaLibrary
        // only needed transitively, but set it explicitly to ensure it has the same version as scala-library
        compile libs.scalaReflect
        compile libs.scalaLogging
        compile libs.slf4jApi
        compile libs.slf4jlog4j
        compile libs.log4j
        compile(libs.zookeeper) {
          // exclude module: 'slf4j-log4j12'
          // exclude module: 'log4j'
        }
        // ZooKeeperMain depends on commons-cli but declares the dependency as `provided`
        compile libs.commonsCli

        compileOnly libs.log4j

        testCompile project(':clients').sourceSets.test.output
        testCompile libs.bcpkix
        testCompile libs.mockitoCore
        testCompile libs.easymock
        testCompile(libs.apacheda) {
          exclude group: 'xml-apis', module: 'xml-apis'
          // `mina-core` is a transitive dependency for `apacheds` and `apacheda`.
          // It is safer to use from `apacheds` since that is the implementation.
          exclude module: 'mina-core'
        }
        testCompile libs.apachedsCoreApi
        testCompile libs.apachedsInterceptorKerberos
        testCompile libs.apachedsProtocolShared
        testCompile libs.apachedsProtocolKerberos
        testCompile libs.apachedsProtocolLdap
        testCompile libs.apachedsLdifPartition
        testCompile libs.apachedsMavibotPartition
        testCompile libs.apachedsJdbmPartition
        testCompile libs.junit
        testCompile libs.scalatest
        testCompile libs.slf4jlog4j
        testCompile libs.jfreechart
      }
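The log4j.properties file has to sit under the core module's resources because log4j 1.x, by default, looks for its configuration file on the classpath. A quick way to see whether the file is visible is a small diagnostic like the one below. This is only a sketch: the class name ClasspathCheck is hypothetical, and the check is meaningful only when it runs with the same classpath as the core module.

```java
import java.net.URL;

// Hypothetical diagnostic: reports whether a resource is visible on the classpath.
public class ClasspathCheck {

    // Returns the location of a classpath resource, or null if it cannot be found.
    public static URL locate(String resourceName) {
        ClassLoader loader = Thread.currentThread().getContextClassLoader();
        if (loader == null) {
            loader = ClassLoader.getSystemClassLoader();
        }
        return loader.getResource(resourceName);
    }

    public static void main(String[] args) {
        URL url = locate("log4j.properties");
        System.out.println(url == null
                ? "log4j.properties is NOT on the classpath; expect little or no log output"
                : "log4j.properties found at " + url);
    }
}
```

If the file is reported as missing, check that it was copied into core\src\main\resources and that the project has been rebuilt.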

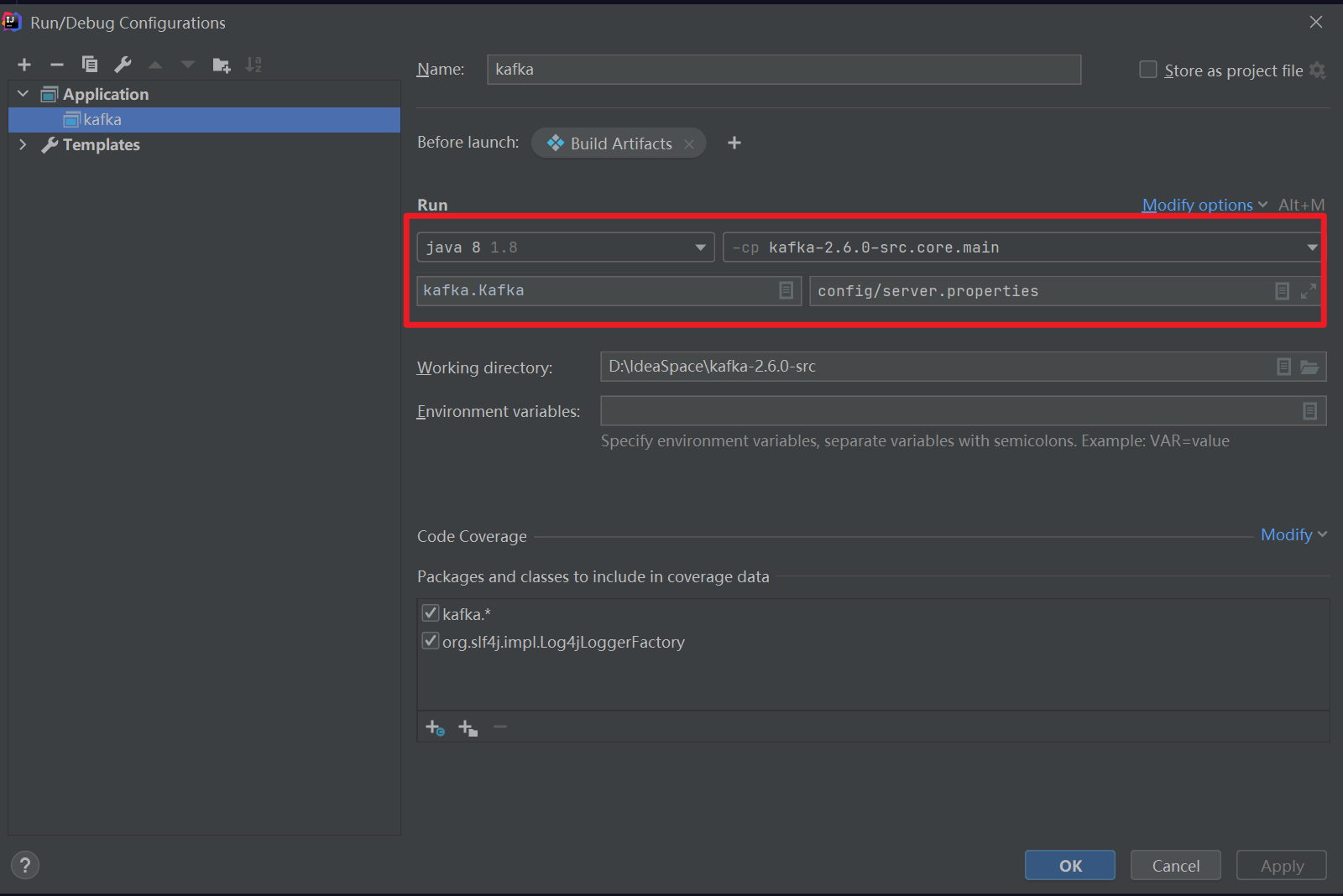

- Modify the startup parameters in the run configuration.

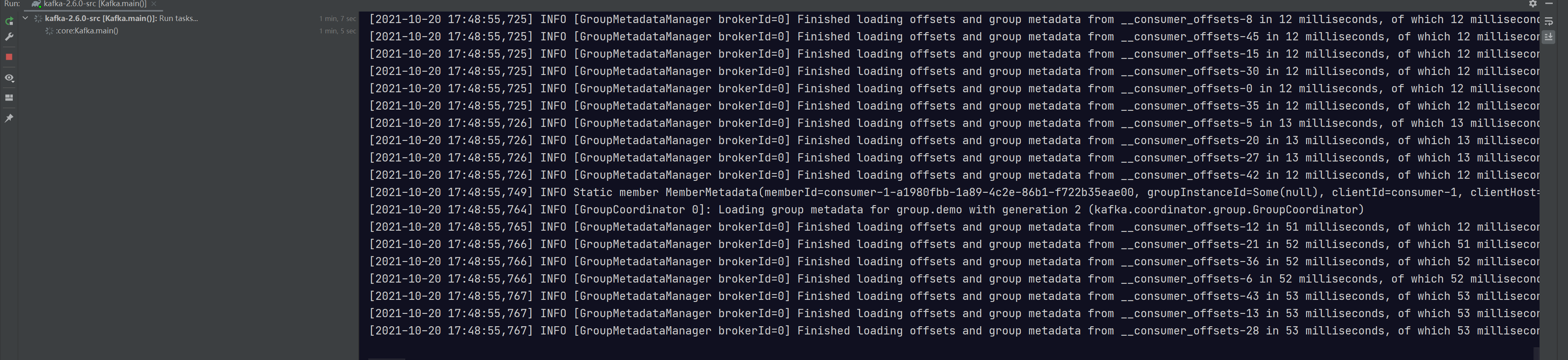

- Start Kafka.
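Because my broker kept stopping due to a ZooKeeper address that pointed at a remote server, a small sanity check on server.properties before starting can save time. The sketch below is an illustration under assumptions: the class name ServerPropsCheck is hypothetical, the path D:/kafka-logs is only a sample value, and the check looks at just the two keys edited in this section (log.dirs and zookeeper.connect). It does not handle IPv6 addresses.

```java
import java.io.IOException;
import java.io.StringReader;
import java.io.UncheckedIOException;
import java.util.ArrayList;
import java.util.List;
import java.util.Properties;

// Hypothetical helper: flags suspicious values in server.properties text.
public class ServerPropsCheck {

    // Returns human-readable warnings for log.dirs and zookeeper.connect.
    public static List<String> warnings(String serverPropertiesText) {
        Properties props = new Properties();
        try {
            props.load(new StringReader(serverPropertiesText));
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
        List<String> out = new ArrayList<>();

        String logDirs = props.getProperty("log.dirs", "").trim();
        if (logDirs.isEmpty()) {
            out.add("log.dirs is not set");
        }

        String zk = props.getProperty("zookeeper.connect", "").trim();
        if (zk.isEmpty()) {
            out.add("zookeeper.connect is not set");
        } else {
            // zookeeper.connect is a comma-separated list of host:port entries,
            // optionally followed by a /chroot path.
            String hosts = zk.contains("/") ? zk.substring(0, zk.indexOf('/')) : zk;
            for (String entry : hosts.split(",")) {
                String host = entry.trim().split(":")[0];
                if (!host.equals("localhost") && !host.equals("127.0.0.1")) {
                    out.add("zookeeper.connect points at non-local host: " + host);
                }
            }
        }
        return out;
    }

    public static void main(String[] args) {
        // Sample values only; replace with the contents of your own file.
        String sample = "log.dirs=D:/kafka-logs\nzookeeper.connect=localhost:2181\n";
        System.out.println(warnings(sample));
    }
}
```

One detail worth knowing on Windows: in a properties file a single backslash is an escape character, so paths are safer written with forward slashes, as in the dataDir setting of zoo.cfg earlier.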

6. Testing

Producer

public class ProducerFastStart {

//kafka集群地址

private static final String brokerList = "localhost:9092";

//主体名称

private static final String topic = "dalianpai";

public static void main(String[] args) {

Properties properties = new Properties();

//设置序列化器

properties.put(ProducerConfig.KEY_SERIALIZER_CLASS_CONFIG, StringSerializer.class.getName());

//设置重试次数

properties.put(ProducerConfig.RETRIES_CONFIG,10);

properties.put(ProducerConfig.VALUE_SERIALIZER_CLASS_CONFIG,StringSerializer.class.getName());

properties.put(ProducerConfig.BOOTSTRAP_SERVERS_CONFIG,brokerList);

// 2 构建拦截链

List<String> interceptors = new ArrayList<>();

interceptors.add(CounterInterceptor.class.getName());

interceptors.add(TimeInterceptor.class.getName());

properties.put(ProducerConfig.INTERCEPTOR_CLASSES_CONFIG, interceptors);

KafkaProducer<String, String> producer = new KafkaProducer<>(properties);

// 3 发送消息

for (int i = 0; i < 11; i++) {

ProducerRecord<String, String> record = new ProducerRecord<>(topic, "Kafka-demo-001", "hello, Kafka!"+i);

producer.send(record);

}

producer.close();

}

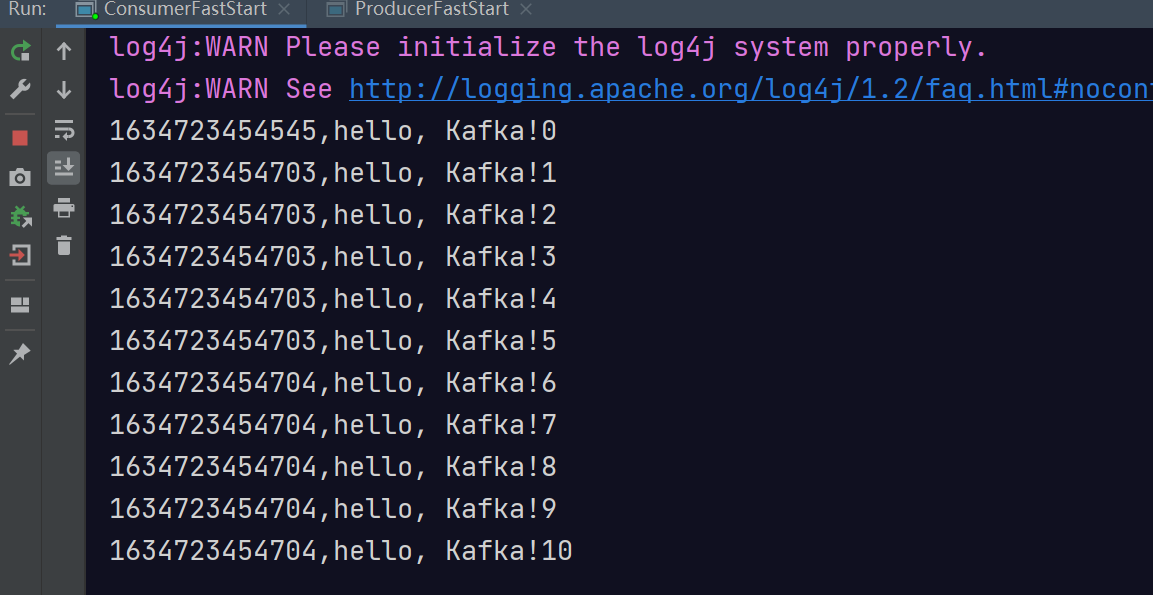

}消费者

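    import java.time.Duration;
    import java.util.Collections;
    import java.util.Properties;

    import org.apache.kafka.clients.consumer.ConsumerConfig;
    import org.apache.kafka.clients.consumer.ConsumerRecord;
    import org.apache.kafka.clients.consumer.ConsumerRecords;
    import org.apache.kafka.clients.consumer.KafkaConsumer;

    public class ConsumerFastStart {

        // Kafka cluster address
        private static final String brokerList = "localhost:9092";

        // Topic name (created earlier)
        private static final String topic = "dalianpai";

        // Consumer group
        private static final String groupId = "group.demo";

        public static void main(String[] args) {
            Properties properties = new Properties();
            // Set the key and value deserializers
            properties.put("key.deserializer", "org.apache.kafka.common.serialization.StringDeserializer");
            properties.put("value.deserializer", "org.apache.kafka.common.serialization.StringDeserializer");
            // Use the consumer's own config constant rather than ProducerConfig
            properties.put(ConsumerConfig.BOOTSTRAP_SERVERS_CONFIG, brokerList);
            properties.put("group.id", groupId);

            KafkaConsumer<String, String> consumer = new KafkaConsumer<>(properties);
            consumer.subscribe(Collections.singletonList(topic));

            // Poll forever and print the value of every record received
            while (true) {
                ConsumerRecords<String, String> records =
                        consumer.poll(Duration.ofMillis(1000));
                for (ConsumerRecord<String, String> record : records) {
                    System.out.println(record.value());
                }
            }
        }
    }

Test result: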
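If the consumer prints nothing, one possible cause is start order. When a consumer group has no committed offset, Kafka's default auto.offset.reset is latest, so a consumer started after the producer has already finished will not see the earlier messages. Below is a hedged sketch of the same consumer settings as plain string keys, with auto.offset.reset=earliest added. That extra setting is an assumption for this demo and is not in my original code, and the class name ConsumerProps is hypothetical.

```java
import java.util.Properties;

// Hypothetical helper: builds consumer settings using plain string keys.
public class ConsumerProps {

    public static Properties build(String brokerList, String groupId) {
        Properties props = new Properties();
        props.put("bootstrap.servers", brokerList);
        props.put("group.id", groupId);
        props.put("key.deserializer",
                "org.apache.kafka.common.serialization.StringDeserializer");
        props.put("value.deserializer",
                "org.apache.kafka.common.serialization.StringDeserializer");
        // Assumption for the demo: when the group has no committed offset,
        // start from the earliest available record instead of the default "latest".
        props.put("auto.offset.reset", "earliest");
        return props;
    }

    public static void main(String[] args) {
        Properties props = build("localhost:9092", "group.demo");
        props.forEach((key, value) -> System.out.println(key + "=" + value));
    }
}
```

The resulting Properties object can be passed to new KafkaConsumer<>(props) in place of the one built inside ConsumerFastStart. Note that the setting only matters for a group without committed offsets, so a group that has already consumed and committed will continue from where it left off.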

