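Official introduction: Logstash is an open source data collection engine with real-time pipelining capabilities. Put simply, Logstash is a pipeline that moves data in real time from its input end to its output end. You can also place filters in the middle of the pipeline to suit your needs; Logstash provides many powerful filters that cover a wide range of scenarios.

Logstash is an open-source tool in the Elasticsearch (ES) ecosystem. It can collect data from multiple sources at the same time and transform it, and it is commonly used as the log collector in log management systems.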
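The input, filter, output idea can be illustrated with a small Python sketch. This is only a conceptual model, not how Logstash is implemented; the names `run_pipeline` and `add_level` are made up for the illustration.

```python
from typing import Callable, Iterable, List

Event = dict  # one record flowing through the pipeline


def run_pipeline(
    source: Iterable[Event],
    filters: List[Callable[[Event], Event]],
    sink: Callable[[Event], None],
) -> None:
    """Push every event from the input, through each filter, to the output."""
    for event in source:
        for apply_filter in filters:
            event = apply_filter(event)
        sink(event)


def add_level(event: Event) -> Event:
    """Hypothetical filter: tag each event with a log level."""
    level = "ERROR" if "error" in event["message"].lower() else "INFO"
    return {**event, "level": level}


collected: List[Event] = []
run_pipeline(
    source=[{"message": "service started"}, {"message": "Error: disk full"}],
    filters=[add_level],
    sink=collected.append,
)
print(collected)
```

Here the list plays the role of the input, `add_level` is the filter in the middle, and `collected.append` stands in for an output such as ES.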

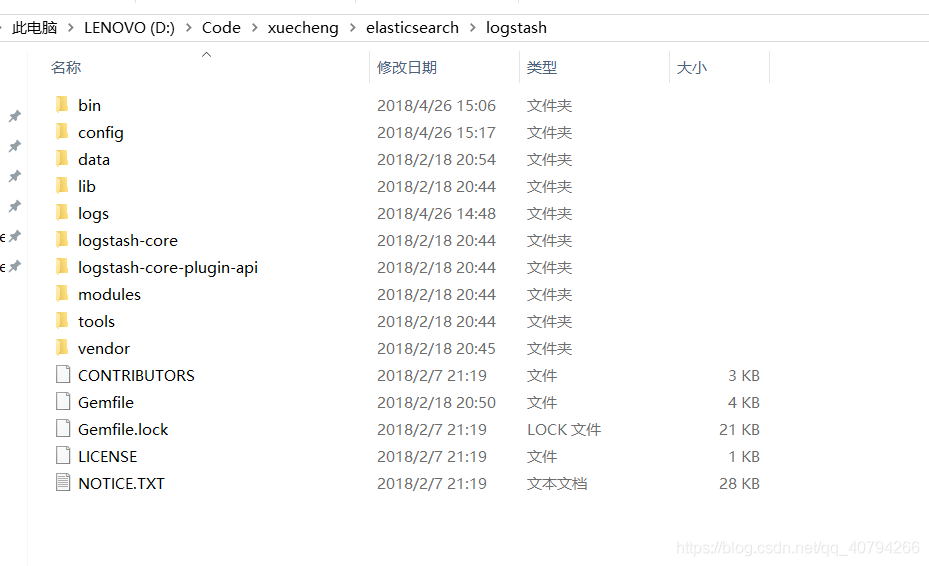

Download Logstash

Logstash download page

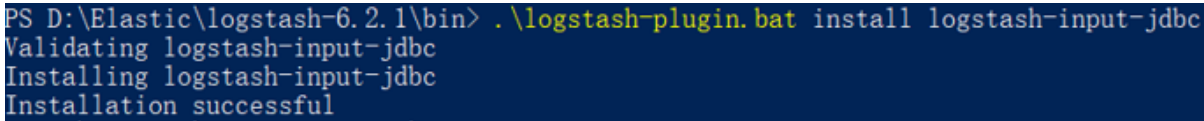

Install logstash-input-jdbc

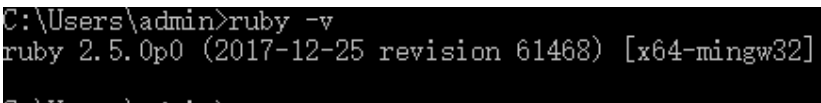

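logstash-input-jdbc is written in Ruby, so download and install Ruby first.

Download page: https://rubyinstaller.org/downloads/ (version 2.5 is sufficient). After installation, check that Ruby was installed successfully.

Some Logstash releases from 5.x onward bundle logstash-input-jdbc. If your 6.x installation does not include the plugin, install it manually: `logstash-plugin list` shows which plugins are present, and `logstash-plugin install logstash-input-jdbc` adds it.

ES mapping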

    {
      "properties": {
        "description": { "analyzer": "ik_max_word", "search_analyzer": "ik_smart", "type": "text" },
        "grade": { "type": "keyword" },
        "id": { "type": "keyword" },
        "mt": { "type": "keyword" },
        "name": { "analyzer": "ik_max_word", "search_analyzer": "ik_smart", "type": "text" },
        "users": { "index": false, "type": "text" },
        "charge": { "type": "keyword" },
        "valid": { "type": "keyword" },
        "pic": { "index": false, "type": "keyword" },
        "qq": { "index": false, "type": "keyword" },
        "price": { "type": "float" },
        "price_old": { "type": "float" },
        "st": { "type": "keyword" },
        "status": { "type": "keyword" },
        "studymodel": { "type": "keyword" },
        "teachmode": { "type": "keyword" },
        "teachplan": { "analyzer": "ik_max_word", "search_analyzer": "ik_smart", "type": "text" },
        "expires": { "type": "date", "format": "yyyy-MM-dd HH:mm:ss" },
        "pub_time": { "type": "date", "format": "yyyy-MM-dd HH:mm:ss" },
        "start_time": { "type": "date", "format": "yyyy-MM-dd HH:mm:ss" },
        "end_time": { "type": "date", "format": "yyyy-MM-dd HH:mm:ss" }
      }
    }
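The date fields in this mapping only accept values written as `yyyy-MM-dd HH:mm:ss`. The following Python sketch shows one way to produce and check such strings before indexing. It assumes `%Y-%m-%d %H:%M:%S` is a close enough Python counterpart of that pattern; `to_es_date` and `is_valid_es_date` are hypothetical helper names, and the check is only an approximation of what ES itself enforces.

```python
from datetime import datetime

# Approximate Python counterpart of the ES pattern yyyy-MM-dd HH:mm:ss
ES_DATE_PATTERN = "%Y-%m-%d %H:%M:%S"


def to_es_date(value: datetime) -> str:
    """Format a datetime the way the date fields in the mapping expect."""
    return value.strftime(ES_DATE_PATTERN)


def is_valid_es_date(text: str) -> bool:
    """Return True if the text parses with the pattern above."""
    try:
        datetime.strptime(text, ES_DATE_PATTERN)
        return True
    except ValueError:
        return False


print(to_es_date(datetime(2018, 4, 25, 19, 11, 35)))
print(is_valid_es_date("2018-04-25 19:11:35"))
# A typographic hyphen (U+2010), easily picked up when copying from a web
# page, is not the ASCII hyphen and does not match the pattern.
print(is_valid_es_date("2018\u201004\u201025 19:11:35"))
```

The last call is a reminder to type the separators in the `format` value as plain ASCII hyphens.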

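Template file

Logstash's job is to read data from MySQL and build the index in ES, so a mapping template file has to be created in advance for Logstash to use.

Create xc_course_template.json in Logstash's config directory with the following content:

Its properties are the same as in the ES mapping above.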

    {
      "mappings": {
        "doc": {
          "properties": {
            "charge": { "type": "keyword" },
            "description": { "analyzer": "ik_max_word", "search_analyzer": "ik_smart", "type": "text" },
            "end_time": { "format": "yyyy-MM-dd HH:mm:ss", "type": "date" },
            "expires": { "format": "yyyy-MM-dd HH:mm:ss", "type": "date" },
            "grade": { "type": "keyword" },
            "id": { "type": "keyword" },
            "mt": { "type": "keyword" },
            "name": { "analyzer": "ik_max_word", "search_analyzer": "ik_smart", "type": "text" },
            "pic": { "index": false, "type": "keyword" },
            "price": { "type": "float" },
            "price_old": { "type": "float" },
            "pub_time": { "format": "yyyy-MM-dd HH:mm:ss", "type": "date" },
            "qq": { "index": false, "type": "keyword" },
            "st": { "type": "keyword" },
            "start_time": { "format": "yyyy-MM-dd HH:mm:ss", "type": "date" },
            "status": { "type": "keyword" },
            "studymodel": { "type": "keyword" },
            "teachmode": { "type": "keyword" },
            "teachplan": { "analyzer": "ik_max_word", "search_analyzer": "ik_smart", "type": "text" },
            "users": { "index": false, "type": "text" },
            "valid": { "type": "keyword" }
          }
        }
      },
      "template": "xc_course"
    }
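Because the template repeats the properties of the ES mapping, one way to keep the two in step is to generate the template from a single properties dictionary. The sketch below is an assumption about workflow rather than part of the original setup; `build_template` is a hypothetical helper and `sample_properties` holds only three of the fields for brevity.

```python
import json


def build_template(properties: dict,
                   index_pattern: str = "xc_course",
                   doc_type: str = "doc") -> dict:
    """Wrap a properties dict in the same structure as xc_course_template.json."""
    return {
        "mappings": {doc_type: {"properties": properties}},
        "template": index_pattern,
    }


# Shortened sample; the real file lists every field of the mapping.
sample_properties = {
    "name": {"analyzer": "ik_max_word", "search_analyzer": "ik_smart", "type": "text"},
    "price": {"type": "float"},
    "pub_time": {"format": "yyyy-MM-dd HH:mm:ss", "type": "date"},
}

template = build_template(sample_properties)
print(json.dumps(template, indent=2))
```

Writing the result of `json.dumps` to xc_course_template.json would give a file with the same shape as the one shown here.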

Write the mysql.conf file

Create mysql.conf in Logstash's config directory. Logstash uses the connection settings in this file to read data from MySQL and write it to the ES index.

    input {
      stdin {
      }
      jdbc {
        jdbc_connection_string => "jdbc:mysql://localhost:3306/xc_course?useUnicode=true&characterEncoding=utf-8&useSSL=true&serverTimezone=UTC"
        # the user we wish to execute our statement as
        jdbc_user => "root"
        # the password is a string, so it is quoted
        jdbc_password => "1039191520"
        # the path to our downloaded jdbc driver
        jdbc_driver_library => "D:/Code/xuecheng/repositry/repository3/mysql/mysql-connector-java/5.1.41/mysql-connector-java-5.1.41.jar"
        # the name of the driver class for mysql
        jdbc_driver_class => "com.mysql.jdbc.Driver"
        jdbc_paging_enabled => "true"
        jdbc_page_size => "50000"
        # SQL file to execute (alternative to the inline statement)
        #statement_filepath => "/conf/course.sql"
        statement => "select * from course_pub where timestamp > date_add(:sql_last_value,INTERVAL 8 HOUR)"
        # schedule in cron syntax: run once every minute
        schedule => "* * * * *"
        record_last_run => true
        last_run_metadata_path => "D:/Code/xuecheng/elasticsearch/logstash/logstash-6.2.1/config/logstash_metadata"
      }
    }

    output {
      elasticsearch {
        # ES host and port
        hosts => "localhost:9200"
        #hosts => ["localhost:9200","localhost:9202","localhost:9203"]
        # name of the ES index
        index => "xc_course"
        document_id => "%{id}"
        document_type => "doc"
        template => "D:/Code/xuecheng/elasticsearch/logstash/logstash-6.2.1/config/xc_course_template.json"
        template_name => "xc_course"
        template_overwrite => "true"
      }
      stdout {
        # print each event to the console
        codec => json_lines
      }
    }

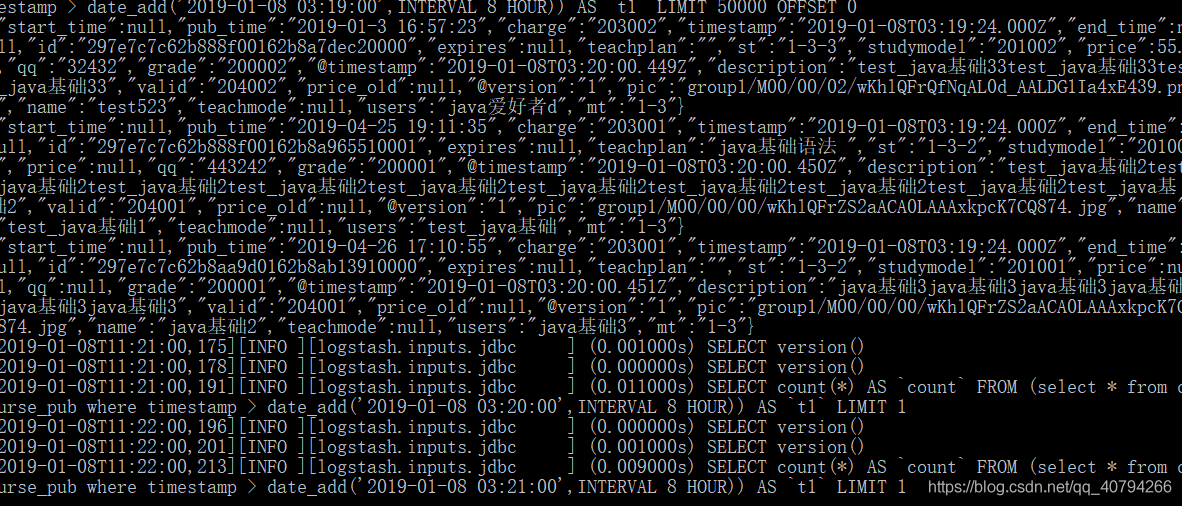

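1. ES uses the UTC time zone

ES works in UTC, which is 8 hours behind Beijing time (UTC+8). The last run time that Logstash substitutes for :sql_last_value is therefore 8 hours behind the local times stored in MySQL, so the query adds 8 hours to it before comparing:

    where timestamp > date_add(:sql_last_value,INTERVAL 8 HOUR)

2. After each run, Logstash records the execution time in the file set by last_run_metadata_path (here D:/Code/xuecheng/elasticsearch/logstash/logstash-6.2.1/config/logstash_metadata) and uses that time as the baseline for the next incremental sync into the index.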

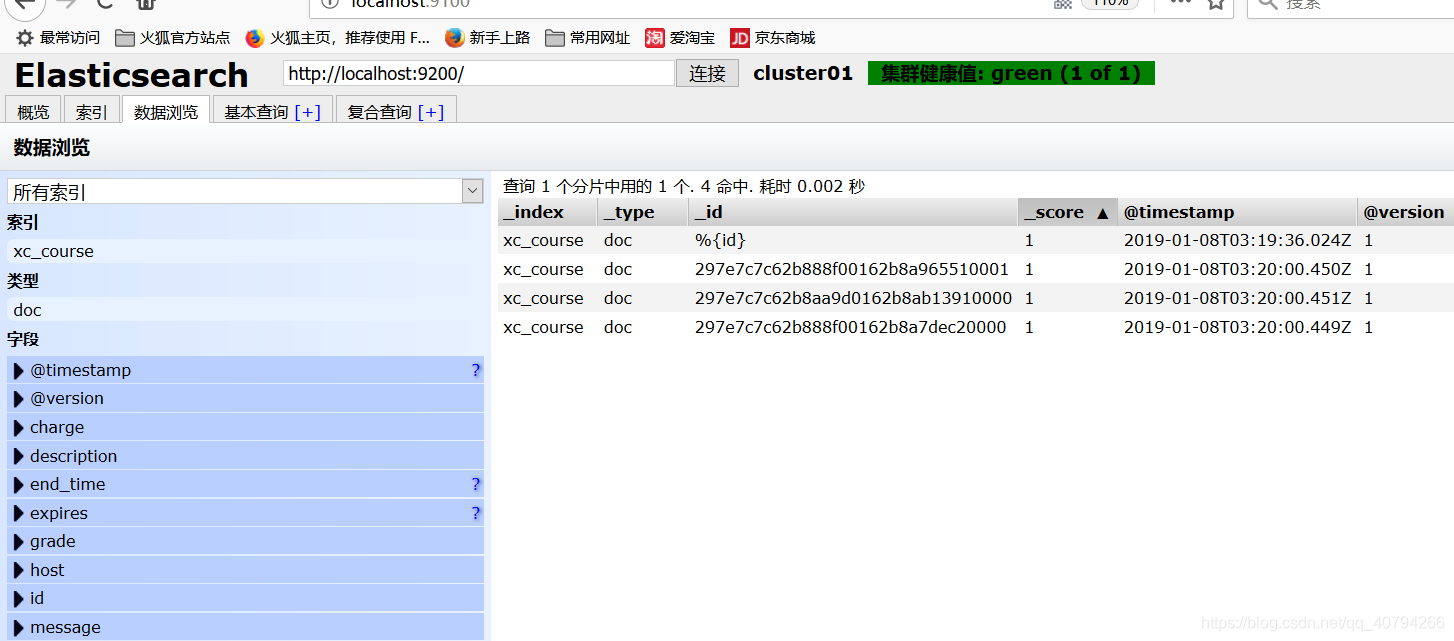
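The two points above can be sketched in Python: the last run time is kept in UTC, shifted by 8 hours, and compared with the local timestamps of the rows, and each run replaces the stored time. This is a simplified model under those assumptions, not Logstash's actual code; `select_changed` and `sync_once` are hypothetical names and the rows are invented sample data.

```python
from datetime import datetime, timedelta
from typing import Dict, List

BEIJING_OFFSET = timedelta(hours=8)  # Beijing time is UTC+8


def select_changed(rows: List[dict], last_run_utc: datetime) -> List[dict]:
    """Mimic: where timestamp > date_add(:sql_last_value, INTERVAL 8 HOUR)."""
    threshold = last_run_utc + BEIJING_OFFSET
    return [row for row in rows if row["timestamp"] > threshold]


def sync_once(rows: List[dict], index: Dict[str, dict],
              last_run_utc: datetime, now_utc: datetime) -> datetime:
    """Copy changed rows into the index keyed by id; return the new last run time."""
    for row in select_changed(rows, last_run_utc):
        index[row["id"]] = row  # same id overwrites, like document_id => "%{id}"
    return now_utc


# Row timestamps are Beijing local times, as stored in MySQL.
rows = [
    {"id": "1", "name": "Java", "timestamp": datetime(2019, 1, 1, 9, 0, 0)},
    {"id": "2", "name": "Spring", "timestamp": datetime(2019, 1, 1, 13, 0, 0)},
]
index: Dict[str, dict] = {}

last_run_utc = datetime(2019, 1, 1, 4, 0, 0)  # 12:00 Beijing time
last_run_utc = sync_once(rows, index, last_run_utc, datetime(2019, 1, 1, 6, 0, 0))
first_run_ids = sorted(index)
print(first_run_ids)

# Editing row 1 without touching its timestamp would not be picked up;
# setting the timestamp to the current local time makes it visible again.
rows[0]["name"] = "Java Basics"
rows[0]["timestamp"] = datetime(2019, 1, 1, 15, 0, 0)
last_run_utc = sync_once(rows, index, last_run_utc, datetime(2019, 1, 1, 8, 0, 0))
print(sorted(index))
```

In the first run only row 2 is newer than the shifted threshold (12:00 local time); row 1 is synced in the second run, once its timestamp has been updated.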

Test

Start logstash.bat:

    .\logstash.bat -f ..\config\mysql.conf

Modify data in the database

Also set timestamp to the current time; otherwise Logstash will not find the row and update it.

View the result in es head
