Directory planning

System initialization on both machines (omitted here); for reference, see:

https://blog.csdn.net/sudahai102448567/article/details/119611507

Hardware configuration and system information

CPU:

dmidecode |grep -i cpu|grep -i version|awk -F ':' '{print $2}'

Memory:

dmidecode|grep -A5 "Memory Device"|grep Size|grep -v No |grep -v Range

or

grep MemTotal /proc/meminfo | awk '{print $2}'

Check swap:

free -h

or

grep SwapTotal /proc/meminfo | awk '{print $2}'
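The raw kB values printed by the commands above are easier to compare in GiB. A minimal sketch, assuming the standard /proc/meminfo layout; the helper name meminfo_gib is hypothetical and not part of the original procedure:

```shell
# Print one /proc/meminfo field (reported in kB) in GiB, one decimal place.
# Usage: meminfo_gib FIELD [FILE]   (FILE defaults to /proc/meminfo)
meminfo_gib() {
    local field="$1" file="${2:-/proc/meminfo}"
    awk -v f="${field}:" '$1 == f { printf "%.1f\n", $2 / 1048576 }' "$file"
}

# Only report when the file exists (i.e. on Linux).
if [ -r /proc/meminfo ]; then
    echo "RAM : $(meminfo_gib MemTotal) GiB"
    echo "Swap: $(meminfo_gib SwapTotal) GiB"
fi
```

Run it on both nodes and compare the two lines; the nodes should normally report the same sizes.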

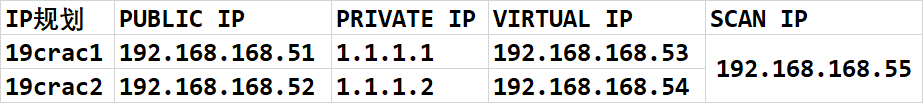

Edit the hosts file (/etc/hosts)

#public ip

192.168.168.51 19crac1

192.168.168.52 19crac2

#private ip

7.7.7.1 19crac1-priv

7.7.7.2 19crac2-priv

#vip

192.168.168.53 19crac1-vip

192.168.168.54 19crac2-vip

#scanip

192.168.168.55 ora19c-scan
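Since every later step depends on these names, it can help to confirm that none is missing from the file on each node. The following is a sketch only; missing_hosts is a hypothetical helper that scans a hosts-format file and prints any required name it cannot find:

```shell
# Print each required name that does not appear in a hosts-format file.
# Usage: missing_hosts FILE NAME...
missing_hosts() {
    local file="$1" name
    shift
    for name in "$@"; do
        # Skip comment lines; look for the name in any column after the IP.
        awk -v n="$name" '
            /^[[:space:]]*#/ { next }
            { for (i = 2; i <= NF; i++) if ($i == n) found = 1 }
            END { exit found ? 0 : 1 }
        ' "$file" || echo "$name"
    done
}

# Example call with the names used in this article:
# missing_hosts /etc/hosts 19crac1 19crac2 19crac1-priv 19crac2-priv \
#     19crac1-vip 19crac2-vip ora19c-scan
```

An empty result means all names are present.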

(Optional) Disable the virtual NIC. Note: this is optional on virtual machines and requires an OS reboot.

systemctl stop libvirtd

systemctl disable libvirtd

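Adjust the network settings

When running an Oracle cluster, Zero Configuration Networking (zeroconf) can also cause communication problems between nodes, so it should be disabled. Without zeroconf, a network administrator must set up network services, such as Dynamic Host Configuration Protocol (DHCP) and Domain Name System (DNS), or configure each computer's network settings manually. Because the network here is configured in the usual way, zeroconf can safely be turned off.

Run on both nodes: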

echo "NOZEROCONF=yes" >>/etc/sysconfig/network && cat /etc/sysconfig/network

Resize /dev/shm to 4G

cp /etc/fstab /etc/fstab_`date +"%Y%m%d_%H%M%S"`

echo "tmpfs /dev/shm tmpfs rw,exec,size=4G 0 0">>/etc/fstab

mount -o remount /dev/shm
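Running the echo line above twice leaves two /dev/shm entries in /etc/fstab. A hedged sketch of an idempotent variant follows; ensure_shm_entry is a hypothetical helper, and it takes the file path as a parameter so it can be tried on a copy first:

```shell
# Append a tmpfs entry for /dev/shm only if the file has no active one yet.
# Usage: ensure_shm_entry FSTAB_FILE SIZE
ensure_shm_entry() {
    local fstab="$1" size="$2"
    # An "active" entry is a non-comment line whose mount point is /dev/shm.
    if awk '!/^[[:space:]]*#/ && $2 == "/dev/shm" { found = 1 }
            END { exit found ? 0 : 1 }' "$fstab"; then
        echo "existing /dev/shm entry left unchanged"
    else
        echo "tmpfs /dev/shm tmpfs rw,exec,size=${size} 0 0" >> "$fstab"
        echo "added /dev/shm entry (size=${size})"
    fi
}

# Example (as root, after backing up the file): ensure_shm_entry /etc/fstab 4G
```

After remounting, df -h /dev/shm shows the size actually in effect.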

Disable THP and NUMA

Check:

cat /sys/kernel/mm/transparent_hugepage/enabled

cat /sys/kernel/mm/transparent_hugepage/defrag

Modify:

sed -i 's/quiet/quiet transparent_hugepage=never numa=off/' /etc/default/grub

grep quiet /etc/default/grub

grub2-mkconfig -o /boot/grub2/grub.cfg

After rebooting, check that the settings took effect:

cat /sys/kernel/mm/transparent_hugepage/enabled

cat /proc/cmdline

To apply temporarily without rebooting:

echo never > /sys/kernel/mm/transparent_hugepage/enabled

cat /sys/kernel/mm/transparent_hugepage/enabled
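The THP files list every possible value and mark the active one with square brackets, for example "always madvise [never]". A small sketch that extracts just the active value, which is handy in a check script; thp_active is a hypothetical helper name:

```shell
# Extract the bracketed (active) value from a THP status line.
# Usage: thp_active "always madvise [never]"
thp_active() {
    printf '%s\n' "$1" | sed -n 's/.*\[\([a-z]*\)\].*/\1/p'
}

# Report the current state for both files when they exist.
for f in /sys/kernel/mm/transparent_hugepage/enabled \
         /sys/kernel/mm/transparent_hugepage/defrag; do
    if [ -r "$f" ]; then
        echo "$f: $(thp_active "$(cat "$f")")"
    fi
done
```

Once the kernel parameter is in effect, the enabled file should report never.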

Configure the yum repository and install packages

# Check (per the official documentation requirements)

rpm -q --qf '%{NAME}-%{VERSION}-%{RELEASE} (%{ARCH})\n' \
bc \
binutils \
compat-libcap1 \
compat-libstdc++-33 \
elfutils-libelf \
elfutils-libelf-devel \
fontconfig-devel \
glibc \
glibc-devel \
gcc \
gcc-c++ \
ksh \
libstdc++ \
libstdc++-devel \
libaio \
libaio-devel \
libXrender \
libXrender-devel \
libxcb \
libX11 \
libXau \
libXi \
libXtst \
libgcc \
make \
sysstat \
unzip \
readline \
smartmontools

# Install the packages and tools

yum install -y bc* ntp* binutils* compat-libcap1* compat-libstdc++* dtrace-modules* dtrace-modules-headers* dtrace-modules-provider-headers* dtrace-utils* elfutils-libelf* elfutils-libelf-devel* fontconfig-devel* glibc* glibc-devel* ksh* libaio* libaio-devel* libdtrace-ctf-devel* libXrender* libXrender-devel* libX11* libXau* libXi* libXtst* libgcc* librdmacm-devel* libstdc++* libstdc++-devel* libxcb* make* net-tools* nfs-utils* python* python-configshell* python-rtslib* python-six* targetcli* smartmontools* sysstat* gcc* nscd* unixODBC* unzip readline

Check again

After the installation, re-run the rpm -q query from the check step above. Any line of the form "package NAME is not installed" means that package is still missing.
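With a long package list it is easy to overlook a missing entry in the rpm -q output. A minimal sketch that filters the output down to the missing names, relying on rpm's "package NAME is not installed" message; missing_packages is a hypothetical helper:

```shell
# Read rpm -q output on stdin and print only the package names
# that rpm reported as not installed.
missing_packages() {
    sed -n 's/^package \(.*\) is not installed$/\1/p'
}

# Example (package list shortened for illustration):
# rpm -q bc binutils ksh libaio-devel | missing_packages
```

An empty result means every queried package is installed.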

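Configure kernel parameters

Editor's note: before Linux 7, kernel parameters were set in /etc/sysctl.conf. From Linux 7.x onward the location changed to /etc/sysctl.d/97-oracle-database-sysctl.conf, which is the file the official 19c documentation uses. Editing /etc/sysctl.conf still works and behaves the same way. To apply the settings: /sbin/sysctl --system

The main kernel parameters can be calculated by hand as follows: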

MEM=$(expr $(grep MemTotal /proc/meminfo|awk '{print $2}') \* 1024)

SHMALL=$(expr $MEM / $(getconf PAGE_SIZE))

SHMMAX=$(expr $MEM \* 3 / 5) # set to 3/5 of RAM here

echo $MEM

echo $SHMALL

echo $SHMMAX
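The same arithmetic can be wrapped in a function so it can be checked for a given memory size before touching the system. This is a sketch under the assumptions used above (shmall in pages, shmmax as 3/5 of RAM in bytes); shm_params is a hypothetical name:

```shell
# Compute kernel.shmall (pages) and kernel.shmmax (bytes, 3/5 of RAM).
# Usage: shm_params MEM_KB [PAGE_SIZE_BYTES]
shm_params() {
    local mem_kb="$1" page_size="${2:-4096}"
    local mem_bytes=$((mem_kb * 1024))
    echo "kernel.shmall = $((mem_bytes / page_size))"
    echo "kernel.shmmax = $((mem_bytes * 3 / 5))"
}

# Example for the local machine:
# shm_params "$(awk '/^MemTotal:/ {print $2}' /proc/meminfo)" "$(getconf PAGE_SIZE)"
```

For a 16G machine (16777216 kB, 4096-byte pages) this gives shmall = 4194304 and shmmax = 10307921510.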

min_free_kbytes = sqrt(lowmem_kbytes * 16) = 4 * sqrt(lowmem_kbytes) (note: lowmem_kbytes can be taken as the system memory size in KB)

vm.nr_hugepages = (memory in MB / 3 + ASM memory 4096 MB) / Hugepagesize in MB

# That is, one third of the OS memory plus 4G for the ASM instance.

# On x86, Hugepagesize = 2048 kB (2M); on LinuxONE, Hugepagesize = 1024 kB (1M).

# Results are rounded up to a whole page.

# x86, 64G memory: (64*1024/3 + 4096) / 2 = 12971

# x86, 32G memory: (32*1024/3 + 4096) / 2 = 7510

# x86, 16G memory: (16*1024/3 + 4096) / 2 = 4779

# LinuxONE, 64G memory: (64*1024/3 + 4096) / 1 = 25942

# LinuxONE, 32G memory: (32*1024/3 + 4096) / 1 = 15019

256*1024/3+4096
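The two formulas above are easy to get wrong by hand, so here is a sketch that evaluates them. It assumes the rule of thumb stated above (one third of RAM plus 4096 MB for ASM) and rounds up to a whole page; calc_hugepages and min_free_kbytes are hypothetical helper names:

```shell
# vm.nr_hugepages = ceil((mem_mb / 3 + asm_mb) / hp_mb), integer arithmetic only.
# Usage: calc_hugepages MEM_MB [ASM_MB] [HUGEPAGE_MB]
calc_hugepages() {
    local mem_mb="$1" asm_mb="${2:-4096}" hp_mb="${3:-2}"
    # Multiply through by 3 to avoid fractions: (mem + 3*asm) / (3*hp).
    local num=$((mem_mb + 3 * asm_mb))
    local den=$((3 * hp_mb))
    echo $(( (num + den - 1) / den ))
}

# min_free_kbytes = 4 * sqrt(lowmem_kbytes), truncated to an integer.
# Usage: min_free_kbytes MEM_KB
min_free_kbytes() {
    awk -v m="$1" 'BEGIN { printf "%d\n", 4 * sqrt(m) }'
}

calc_hugepages 65536          # x86, 64G RAM, 2M pages -> 12971
calc_hugepages 65536 4096 1   # 1M pages, 64G RAM      -> 25942
min_free_kbytes 16777216      # 16G RAM                -> 16384
```

Treat the results as a starting point; the hugepages_settings.sh script later in this article computes the value from the shared memory segments actually in use.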

cp /etc/sysctl.conf /etc/sysctl.conf.bak

memTotal=$(grep MemTotal /proc/meminfo | awk '{print $2}')

totalMemory=$((memTotal / 2048))

shmall=$((memTotal / 4))

if [ $shmall -lt 2097152 ]; then

shmall=2097152

fi

shmmax=$((memTotal * 1024 - 1))

if [ "$shmmax" -lt 4294967295 ]; then

shmmax=4294967295

fi

cat <<EOF>>/etc/sysctl.conf


fs.file-max = 6815744

kernel.shmall = $shmall

kernel.shmmax = $shmmax

kernel.shmmni = 4096

kernel.sem = 250 32000 100 128

net.ipv4.ip_local_port_range = 9000 65500

net.core.rmem_default = 16777216

net.core.rmem_max = 16777216

net.core.wmem_max = 16777216

net.core.wmem_default = 16777216

fs.aio-max-nr = 6194304

vm.dirty_ratio=20

vm.dirty_background_ratio=3

vm.dirty_writeback_centisecs=100

vm.dirty_expire_centisecs=500

vm.swappiness=10

vm.min_free_kbytes=524288

net.core.netdev_max_backlog = 30000

net.core.netdev_budget = 600

#vm.nr_hugepages =

net.ipv4.conf.all.rp_filter = 2

net.ipv4.conf.default.rp_filter = 2

net.ipv4.ipfrag_time = 60

net.ipv4.ipfrag_low_thresh=6291456

net.ipv4.ipfrag_high_thresh = 8388608

EOF

sysctl -p
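When the same key appears more than once in a sysctl file, the last occurrence wins, which can hide an unintended value. A sketch for spotting such duplicates; dup_sysctl_keys is a hypothetical helper and only reads the file:

```shell
# Print every key that is assigned more than once in a sysctl-style file.
# Usage: dup_sysctl_keys FILE
dup_sysctl_keys() {
    awk -F= '
        /^[[:space:]]*(#|;|$)/ { next }    # skip comments and blank lines
        { key = $1; gsub(/[[:space:]]/, "", key); count[key]++ }
        END { for (k in count) if (count[k] > 1) print k }
    ' "$1" | sort
}

# Example: dup_sysctl_keys /etc/sysctl.conf
```

To see the value the kernel actually uses for a reported key, run sysctl followed by the key name.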

Disable the avahi services

systemctl stop avahi-daemon

systemctl disable avahi-daemon

systemctl stop avahi-dnsconfd

systemctl disable avahi-dnsconfd

Disable other services

-- Disable at boot

systemctl disable accounts-daemon.service

systemctl disable atd.service

systemctl disable avahi-daemon.service

systemctl disable avahi-daemon.socket

systemctl disable bluetooth.service

systemctl disable brltty.service

--systemctl disable chronyd.service

systemctl disable colord.service

systemctl disable cups.service

systemctl disable debug-shell.service

systemctl disable firewalld.service

systemctl disable gdm.service

systemctl disable ksmtuned.service

systemctl disable ktune.service

systemctl disable libstoragemgmt.service

systemctl disable mcelog.service

systemctl disable ModemManager.service

--systemctl disable ntpd.service

systemctl disable postfix.service


systemctl disable rhsmcertd.service

systemctl disable rngd.service

systemctl disable rpcbind.service

systemctl disable rtkit-daemon.service

systemctl disable tuned.service

systemctl disable upower.service

systemctl disable wpa_supplicant.service

-- Stop the services

systemctl stop accounts-daemon.service

systemctl stop atd.service

systemctl stop avahi-daemon.service

systemctl stop avahi-daemon.socket

systemctl stop bluetooth.service

systemctl stop brltty.service

--systemctl stop chronyd.service

systemctl stop colord.service

systemctl stop cups.service

systemctl stop debug-shell.service

systemctl stop firewalld.service

systemctl stop gdm.service

systemctl stop ksmtuned.service

systemctl stop ktune.service

systemctl stop libstoragemgmt.service

systemctl stop mcelog.service

systemctl stop ModemManager.service

--systemctl stop ntpd.service

systemctl stop postfix.service


systemctl stop rhsmcertd.service

systemctl stop rngd.service

systemctl stop rpcbind.service

systemctl stop rtkit-daemon.service

systemctl stop tuned.service

systemctl stop upower.service

systemctl stop wpa_supplicant.service
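The two long lists above can be driven from a single list of unit names. A minimal sketch follows; disable_services is a hypothetical helper, and the SYSTEMCTL variable is an assumption added here so the loop can be dry-run with echo before running it for real:

```shell
# Stop each unit and disable it at boot.
# Set SYSTEMCTL=echo to print the commands instead of executing them.
# Usage: disable_services UNIT...
disable_services() {
    local ctl="${SYSTEMCTL:-systemctl}" svc
    for svc in "$@"; do
        "$ctl" stop "$svc"
        "$ctl" disable "$svc"
    done
}

# Dry run with a few of the units from the list above:
SYSTEMCTL=echo
disable_services atd.service bluetooth.service cups.service
```

Units that are not installed on a given machine simply produce an error message from systemctl and can be ignored. Unset SYSTEMCTL and run as root to apply the changes.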

Configure the SSH service

-- Set LoginGraceTime to 0 so that the login timeout is unlimited

cp /etc/ssh/sshd_config /etc/ssh/sshd_config_`date +"%Y%m%d_%H%M%S"` && sed -i '/#LoginGraceTime 2m/ s/#LoginGraceTime 2m/LoginGraceTime 0/' /etc/ssh/sshd_config && grep LoginGraceTime /etc/ssh/sshd_config

-- Speed up SSH logins by disabling DNS lookups

cp /etc/ssh/sshd_config /etc/ssh/sshd_config_`date +"%Y%m%d_%H%M%S"` && sed -i '/#UseDNS yes/ s/#UseDNS yes/UseDNS no/' /etc/ssh/sshd_config && grep UseDNS /etc/ssh/sshd_config
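The sed commands above only match the stock commented defaults ("#LoginGraceTime 2m", "#UseDNS yes"). The sketch below is a more tolerant variant that rewrites the option whether or not it is commented out and appends it if absent. set_sshd_option is a hypothetical helper, it relies on GNU sed (-i, -E), and it takes the file path so it can be tried on a copy:

```shell
# Set "KEY VALUE" in an sshd_config-style file.
# Rewrites an existing (possibly commented-out) line, otherwise appends.
# Usage: set_sshd_option FILE KEY VALUE
set_sshd_option() {
    local file="$1" key="$2" value="$3"
    if grep -Eq "^[[:space:]]*#?[[:space:]]*${key}[[:space:]]" "$file"; then
        sed -i -E "s|^[[:space:]]*#?[[:space:]]*${key}[[:space:]].*|${key} ${value}|" "$file"
    else
        echo "${key} ${value}" >> "$file"
    fi
}

# Example (as root, after backing up the file):
# set_sshd_option /etc/ssh/sshd_config LoginGraceTime 0
# set_sshd_option /etc/ssh/sshd_config UseDNS no
```

After editing, sshd -t validates the configuration, and the new values take effect once sshd is restarted (systemctl restart sshd).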

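HugePages configuration (optional)

HugePages conflicts with AMM (Automatic Memory Management).

If you have a large amount of RAM and a large SGA, HugePages is essential for good Oracle Database performance on Linux.

grep Hugepagesize /proc/meminfo

Hugepagesize: 2048 kB

chmod 755 hugepages_settings.sh

The script must be run while the database is up.

Script:

cat hugepages_settings.sh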

#!/bin/bash

#

# hugepages_settings.sh

#

# Linux bash script to compute values for the

# recommended HugePages/HugeTLB configuration

# on Oracle Linux

#

# Note: This script does calculation for all shared memory

# segments available when the script is run, no matter it

# is an Oracle RDBMS shared memory segment or not.

#

# This script is provided by Doc ID 401749.1 from My Oracle Support

# http://support.oracle.com

# Welcome text

echo "

This script is provided by Doc ID 401749.1 from My Oracle Support

(http://support.oracle.com) where it is intended to compute values for

the recommended HugePages/HugeTLB configuration for the current shared

memory segments on Oracle Linux. Before proceeding with the execution please note following:

* For ASM instance, it needs to configure ASMM instead of AMM.

* The 'pga_aggregate_target' is outside the SGA and

you should accommodate this while calculating the overall size.

* In case you changes the DB SGA size,

as the new SGA will not fit in the previous HugePages configuration,

it had better disable the whole HugePages,

start the DB with new SGA size and run the script again.

And make sure that:

* Oracle Database instance(s) are up and running

* Oracle Database 11g Automatic Memory Management (AMM) is not setup

(See Doc ID 749851.1)

* The shared memory segments can be listed by command:

# ipcs -m

Press Enter to proceed..."

read

# Check for the kernel version

KERN=`uname -r | awk -F. '{ printf("%d.%d\n",$1,$2); }'`

# Find out the HugePage size

HPG_SZ=`grep Hugepagesize /proc/meminfo | awk '{print $2}'`

if [ -z "$HPG_SZ" ];then

echo "The hugepages may not be supported in the system where the script is being executed."

exit 1

fi

# Initialize the counter

NUM_PG=0

# Cumulative number of pages required to handle the running shared memory segments

for SEG_BYTES in `ipcs -m | cut -c44-300 | awk '{print $1}' | grep "[0-9][0-9]*"`

do

MIN_PG=`echo "$SEG_BYTES/($HPG_SZ*1024)" | bc -q`

if [ $MIN_PG -gt 0 ]; then

NUM_PG=`echo "$NUM_PG+$MIN_PG+1" | bc -q`

fi

done

RES_BYTES=`echo "$NUM_PG * $HPG_SZ * 1024" | bc -q`

# An SGA less than 100MB does not make sense

# Bail out if that is the case

if [ $RES_BYTES -lt 100000000 ]; then

echo "***********"

echo "** ERROR **"

echo "***********"

echo "Sorry! There are not enough total of shared memory segments allocated for

HugePages configuration. HugePages can only be used for shared memory segments

that you can list by command:

# ipcs -m

of a size that can match an Oracle Database SGA. Please make sure that:

* Oracle Database instance is up and running

* Oracle Database 11g Automatic Memory Management (AMM) is not configured"

exit 1

fi

# Finish with results

case $KERN in

'2.4') HUGETLB_POOL=`echo "$NUM_PG*$HPG_SZ/1024" | bc -q`;

echo "Recommended setting: vm.hugetlb_pool = $HUGETLB_POOL" ;;

'2.6') echo "Recommended setting: vm.nr_hugepages = $NUM_PG" ;;

'3.8') echo "Recommended setting: vm.nr_hugepages = $NUM_PG" ;;

'3.10') echo "Recommended setting: vm.nr_hugepages = $NUM_PG" ;;

'4.1') echo "Recommended setting: vm.nr_hugepages = $NUM_PG" ;;

'4.14') echo "Recommended setting: vm.nr_hugepages = $NUM_PG" ;;

'4.18') echo "Recommended setting: vm.nr_hugepages = $NUM_PG" ;;

'5.4') echo "Recommended setting: vm.nr_hugepages = $NUM_PG" ;;

*) echo "Kernel version $KERN is not supported by this script (yet). Exiting." ;;

esac

# End

Calculating the number of pages required:

On Linux one huge page is 2M. The total HugePages memory should be slightly larger than sga_max_size. For example, with sga_max_size=3g: hugepages > (3*1024)/2 = 1536

Add the following to /etc/sysctl.conf:

[root@node01 ~]# vi /etc/sysctl.conf

vm.nr_hugepages = 1550

Add the following to /etc/security/limits.conf (slightly larger than sga_max_size; the official recommendation is 90% of total physical memory, in KB):

vi /etc/security/limits.conf

oracle soft memlock 3400000

oracle hard memlock 3400000

In general form:

# vim /etc/sysctl.conf

vm.nr_hugepages = xxxx

# sysctl -p

vim /etc/security/limits.conf

oracle soft memlock xxxxxxxxxxx

oracle hard memlock xxxxxxxxxxx
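The two placeholder values can be derived from the SGA size and the physical memory. A sketch of that arithmetic, assuming 2048 kB huge pages and the 90% memlock guideline mentioned above; sga_hugepages and memlock_kb are hypothetical helper names:

```shell
# Minimum number of huge pages needed to hold an SGA of SGA_MB megabytes.
# Usage: sga_hugepages SGA_MB [HUGEPAGE_KB]
sga_hugepages() {
    local sga_mb="$1" hp_kb="${2:-2048}"
    echo $(( (sga_mb * 1024 + hp_kb - 1) / hp_kb ))
}

# memlock limit in KB: 90% of physical memory.
# Usage: memlock_kb MEM_KB
memlock_kb() {
    echo $(( $1 * 9 / 10 ))
}

sga_hugepages 3072     # 3g SGA -> 1536 pages; configure slightly more
memlock_kb 16777216    # 16G RAM -> 15099494
```

The page count is a lower bound, which is why the example above sets vm.nr_hugepages to 1550 rather than 1536.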

Update the PAM login configuration

cat >> /etc/pam.d/login <<EOF

session required pam_limits.so

EOF

Configure user limits

cat >> /etc/security/limits.conf <<EOF

grid soft nproc 2047

grid hard nproc 16384

grid soft nofile 1024

grid hard nofile 65536

grid soft stack 10240

grid hard stack 32768

oracle soft nproc 2047

oracle hard nproc 16384

oracle soft nofile 1024

oracle hard nofile 65536

oracle soft stack 10240

oracle hard stack 32768

oracle soft memlock 3145728

oracle hard memlock 3145728

EOF

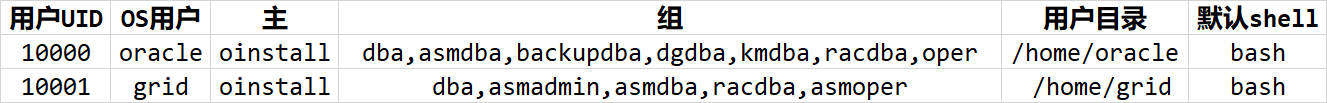

Create groups and users

groupadd -g 54321 oinstall

groupadd -g 54322 dba

groupadd -g 54323 oper

groupadd -g 54324 backupdba

groupadd -g 54325 dgdba

groupadd -g 54326 kmdba

groupadd -g 54327 asmdba

groupadd -g 54328 asmoper

groupadd -g 54329 asmadmin

groupadd -g 54330 racdba

useradd -g oinstall -G dba,oper,backupdba,dgdba,kmdba,asmdba,racdba -u 10000 oracle

useradd -g oinstall -G dba,asmdba,asmoper,asmadmin,racdba -u 10001 grid

echo "oracle" | passwd --stdin oracle

echo "grid" | passwd --stdin grid

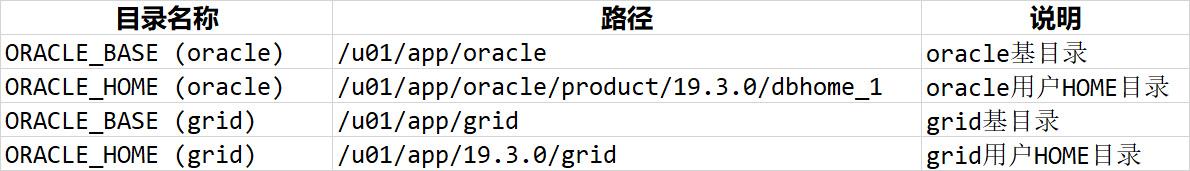

Create directories

mkdir -p /u01/app/19.3.0/grid

mkdir -p /u01/app/grid

mkdir -p /u01/app/oracle/product/19.3.0/dbhome_1

chown -R grid:oinstall /u01

chown -R oracle:oinstall /u01/app/oracle

chmod -R 775 /u01/

Configure user environment variables

grid:

export TMP=/tmp

export TMPDIR=$TMP

export ORACLE_BASE=/u01/app/grid

export ORACLE_HOME=/u01/app/19.3.0/grid

export TNS_ADMIN=$ORACLE_HOME/network/admin

export NLS_LANG=AMERICAN_AMERICA.AL32UTF8

export LD_LIBRARY_PATH=$ORACLE_HOME/lib:/lib:/usr/lib

export ORACLE_SID=+ASM1

export PATH=/usr/sbin:$PATH

export PATH=$ORACLE_HOME/bin:$ORACLE_HOME/OPatch:$PATH

alias sas='sqlplus / as sysasm'

export PS1="[\`whoami\`@\`hostname\`:"'$PWD]\$ '

oracle:

umask 022

export TMP=/tmp

export TMPDIR=$TMP

export NLS_LANG=AMERICAN_AMERICA.AL32UTF8

export ORACLE_BASE=/u01/app/oracle

export ORACLE_HOME=$ORACLE_BASE/product/19.3.0/dbhome_1

export ORACLE_HOSTNAME=19crac1

export TNS_ADMIN=$ORACLE_HOME/network/admin

export LD_LIBRARY_PATH=$ORACLE_HOME/lib:/lib:/usr/lib

export ORACLE_SID=db19c1

export PATH=/usr/sbin:$PATH

export PATH=$ORACLE_HOME/bin:$ORACLE_HOME/OPatch:$PATH

alias sas='sqlplus / as sysdba'

export PS1="[\`whoami\`@\`hostname\`:"'$PWD]\$ '
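The profiles above hard-code the node 1 values (+ASM1, db19c1, 19crac1). On the second node the instance names would normally end in 2. The sketch below derives the suffix from the trailing digits of the host name; it assumes host names end in the node number, as 19crac1 and 19crac2 do, and rac_sids is a hypothetical helper:

```shell
# Print the ASM and database instance names for a node.
# Usage: rac_sids HOSTNAME DB_NAME
rac_sids() {
    local host="$1" db="$2"
    # Keep only the trailing digits of the host name (19crac2 -> 2).
    local n="${host##*[!0-9]}"
    echo "+ASM${n} ${db}${n}"
}

rac_sids 19crac1 db19c    # -> +ASM1 db19c1
rac_sids 19crac2 db19c    # -> +ASM2 db19c2
```

In a profile this could be used with "$(hostname -s)" as the first argument, or the values can simply be edited by hand on each node.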

Configure shared storage; for reference, see:

https://blog.csdn.net/sudahai102448567/article/details/108123811

https://blog.csdn.net/sudahai102448567/article/details/107631462

Upload the software package and unzip it (run as the grid user)

unzip LINUX.X64_193000_grid_home.zip -d $ORACLE_HOME

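Install the cvuqdisk package

The cvuqdisk RPM is in the cv/rpm directory of the Oracle Grid Infrastructure installation media.

Set the CVUQDISK_GRP environment variable to the group that will own cvuqdisk (oinstall in this article), then install the RPM as root (use the file name actually present in cv/rpm):

export CVUQDISK_GRP=oinstall

rpm -ivh cvuqdisk-1.0.7-1.rpm

Use CVU to verify that the Oracle Clusterware requirements are met

Remember to run CVU as the grid user on the node from which the Oracle installation will be performed (19crac1 here). In addition, SSH connectivity with user equivalence must be configured for the grid user.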

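Configure SSH user equivalence for the grid and oracle users (optional)

grid user:

$ORACLE_HOME/oui/prov/resources/scripts/sshUserSetup.sh -user grid -hosts "19crac1 19crac2" -advanced -noPromptPassphrase

oracle user ($GRID_HOME is the Grid home, /u01/app/19.3.0/grid in this article):

$GRID_HOME/oui/prov/resources/scripts/sshUserSetup.sh -user oracle -hosts "19crac1 19crac2" -advanced -noPromptPassphrase

Manual configuration method

Configure SSH for the grid and oracle users separately; run on both nodes: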

On node 1:

# su - oracle

$ mkdir -p ~/.ssh

$ chmod 700 ~/.ssh

$ ssh-keygen -t rsa    (press Enter at each of the three prompts)

$ ssh-keygen -t dsa    (press Enter at each of the three prompts)

----------------------------------------------------------------

On node 2:

# su - oracle

$ mkdir -p ~/.ssh

$ chmod 700 ~/.ssh

$ ssh-keygen -t rsa    (press Enter at each of the three prompts)

$ ssh-keygen -t dsa    (press Enter at each of the three prompts)

Run the above on both nodes. The steps below only need to be run on one node:

$ cat ~/.ssh/id_rsa.pub >> ~/.ssh/authorized_keys

$ cat ~/.ssh/id_dsa.pub >> ~/.ssh/authorized_keys

$ ssh 19crac2 cat ~/.ssh/id_rsa.pub >> ~/.ssh/authorized_keys    (enter the password for 19crac2)

$ ssh 19crac2 cat ~/.ssh/id_dsa.pub >> ~/.ssh/authorized_keys    (enter the password for 19crac2)

$ scp ~/.ssh/authorized_keys 19crac2:~/.ssh/authorized_keys    (enter the password for 19crac2)

Repeat the same steps with su - grid for the grid user.

Test connectivity between the two nodes (run as both the grid and the oracle user):

$ ssh 19crac1 date

$ ssh 19crac2 date

$ ssh 19crac1-priv date

$ ssh 19crac2-priv date

Verify SSH connectivity as the grid and oracle users respectively:

<grid>$ for h in 19crac1 19crac1-priv 19crac2 19crac2-priv;do

ssh -l grid -o StrictHostKeyChecking=no $h date;

done

<oracle>$ for h in 19crac1 19crac1-priv 19crac2 19crac2-priv;do

ssh -l oracle -o StrictHostKeyChecking=no $h date;

done
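The loops above still stop and ask for a password if user equivalence is not yet in place. The sketch below reports OK or FAIL per host without prompting, using the standard ssh options BatchMode and ConnectTimeout. check_ssh is a hypothetical helper, and the SSH_CMD variable is an assumption added here so the loop can be exercised without a network:

```shell
# Report passwordless SSH reachability for a user on each host.
# Usage: check_ssh USER HOST...
check_ssh() {
    local ssh_cmd="${SSH_CMD:-ssh}" user="$1" h
    shift
    for h in "$@"; do
        # BatchMode=yes makes ssh fail instead of prompting for a password.
        if "$ssh_cmd" -o BatchMode=yes -o ConnectTimeout=5 \
               -l "$user" "$h" date >/dev/null 2>&1; then
            echo "OK   $h"
        else
            echo "FAIL $h"
        fi
    done
}

# Example:
# check_ssh grid 19crac1 19crac1-priv 19crac2 19crac2-priv
# check_ssh oracle 19crac1 19crac1-priv 19crac2 19crac2-priv
```

Any FAIL line means the installer's own SSH checks are likely to fail for that host as well.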

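Pre-installation checks

RHEL 7 does not support AFD. When installing GRID you must deselect "Configure Oracle ASM Filter Driver", otherwise the installation reports an error.

In 19c the GIMR is optional and does not have to be configured; leaving it unconfigured is recommended (this example enabled GIMR). If you do configure GIMR, it needs at least 35G, and 40G is the recommended minimum. Ideally keep the OCR disk group and the ASM storage for the GIMR instance separate, which makes the OCR disk group easier to manage.

After the GRID installation completes, the cluster verification step reports a failure because the SCAN name is not resolved through DNS. This is expected and can be ignored.

Run the following commands in the grid software directory:

Use CVU to verify the hardware and operating system setup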

./runcluvfy.sh stage -pre crsinst -n 19crac1,19crac2 -fixup -verbose >> runcluvfy01.log

./runcluvfy.sh stage -pre crsinst -n 19crac1,19crac2 -verbose >> runcluvfy02.log

./runcluvfy.sh stage -post hwos -n 19crac1,19crac2 -verbose >> runcluvfy03.log
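Because the output is redirected to log files, failed checks are not visible on screen. A small sketch for pulling them out afterwards, assuming that failed checks are marked with the word FAILED in the verbose output; cluvfy_failures is a hypothetical helper:

```shell
# Print the lines (with line numbers) that mention FAILED in a log file,
# or a short note when there are none.
# Usage: cluvfy_failures LOGFILE
cluvfy_failures() {
    grep -n 'FAILED' "$1" || echo "no FAILED lines found in $1"
}

# Example:
# for f in runcluvfy01.log runcluvfy02.log runcluvfy03.log; do
#     cluvfy_failures "$f"
# done
```

Each reported line still needs to be read in context, since some failures (such as SCAN resolution without DNS) are expected in this setup.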

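Graphical installation: ./gridSetup.sh

Install a standalone cluster

Enter the cluster name and the SCAN name. The SCAN name must match /etc/hosts. Neither the cluster name nor the SCAN name may start with a digit, and the cluster name must not exceed 15 characters.

Add the second node's information and set up SSH trust between the nodes

Make sure each NIC is matched to the correct IP subnet. In 19c the heartbeat (private) network must be set to ASM & Private, which is used to host the ASM instances.

Select ASM

Do not install GIMR

Change the path and deselect the (ASM Filter) driver

Do not enable IPMI

Do not register with EM

Verify the OS groups

Node 1, second script (root.sh):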

[root@19crac1 ~]# /u01/app/19.3.0/grid/root.sh

Performing root user operation.

The following environment variables are set as:

ORACLE_OWNER= grid

ORACLE_HOME= /u01/app/19.3.0/grid

Enter the full pathname of the local bin directory: [/usr/local/bin]:

Copying dbhome to /usr/local/bin ...

Copying oraenv to /usr/local/bin ...

Copying coraenv to /usr/local/bin ...

Creating /etc/oratab file...

Entries will be added to the /etc/oratab file as needed by

Database Configuration Assistant when a database is created

Finished running generic part of root script.

Now product-specific root actions will be performed.

Relinking oracle with rac_on option

Using configuration parameter file: /u01/app/19.3.0/grid/crs/install/crsconfig_params

The log of current session can be found at:

/u01/app/grid/crsdata/19crac1/crsconfig/rootcrs_19crac1_2021-09-23_01-42-43PM.log

2021/09/23 13:42:50 CLSRSC-594: Executing installation step 1 of 19: 'SetupTFA'.

2021/09/23 13:42:50 CLSRSC-594: Executing installation step 2 of 19: 'ValidateEnv'.

2021/09/23 13:42:50 CLSRSC-363: User ignored prerequisites during installation

2021/09/23 13:42:50 CLSRSC-594: Executing installation step 3 of 19: 'CheckFirstNode'.

2021/09/23 13:42:52 CLSRSC-594: Executing installation step 4 of 19: 'GenSiteGUIDs'.

2021/09/23 13:42:53 CLSRSC-594: Executing installation step 5 of 19: 'SetupOSD'.

2021/09/23 13:42:53 CLSRSC-594: Executing installation step 6 of 19: 'CheckCRSConfig'.

2021/09/23 13:42:53 CLSRSC-594: Executing installation step 7 of 19: 'SetupLocalGPNP'.

2021/09/23 13:43:07 CLSRSC-594: Executing installation step 8 of 19: 'CreateRootCert'.

2021/09/23 13:43:10 CLSRSC-594: Executing installation step 9 of 19: 'ConfigOLR'.

2021/09/23 13:43:15 CLSRSC-4002: Successfully installed Oracle Trace File Analyzer (TFA) Collector.

2021/09/23 13:43:19 CLSRSC-594: Executing installation step 10 of 19: 'ConfigCHMOS'.

2021/09/23 13:43:19 CLSRSC-594: Executing installation step 11 of 19: 'CreateOHASD'.

2021/09/23 13:43:23 CLSRSC-594: Executing installation step 12 of 19: 'ConfigOHASD'.

2021/09/23 13:43:23 CLSRSC-330: Adding Clusterware entries to file 'oracle-ohasd.service'

2021/09/23 13:44:05 CLSRSC-594: Executing installation step 13 of 19: 'InstallAFD'.

2021/09/23 13:44:09 CLSRSC-594: Executing installation step 14 of 19: 'InstallACFS'.

2021/09/23 13:44:51 CLSRSC-594: Executing installation step 15 of 19: 'InstallKA'.

2021/09/23 13:44:56 CLSRSC-594: Executing installation step 16 of 19: 'InitConfig'.

ASM has been created and started successfully.

[DBT-30001] Disk groups created successfully. Check /u01/app/grid/cfgtoollogs/asmca/asmca-210923PM014527.log for details.

2021/09/23 13:46:16 CLSRSC-482: Running command: '/u01/app/19.3.0/grid/bin/ocrconfig -upgrade grid oinstall'

CRS-4256: Updating the profile

Successful addition of voting disk 2e72fbea32e84fdabf3e04014b5a5745.

Successfully replaced voting disk group with +OCR.

CRS-4256: Updating the profile

CRS-4266: Voting file(s) successfully replaced

## STATE File Universal Id File Name Disk group

-- ----- ----------------- --------- ---------

1. ONLINE 2e72fbea32e84fdabf3e04014b5a5745 (/dev/asm-diskc) [OCR]

Located 1 voting disk(s).

2021/09/23 13:47:30 CLSRSC-594: Executing installation step 17 of 19: 'StartCluster'.

2021/09/23 13:48:56 CLSRSC-343: Successfully started Oracle Clusterware stack

2021/09/23 13:48:56 CLSRSC-594: Executing installation step 18 of 19: 'ConfigNode'.

2021/09/23 13:50:12 CLSRSC-594: Executing installation step 19 of 19: 'PostConfig'.

2021/09/23 13:50:46 CLSRSC-325: Configure Oracle Grid Infrastructure for a Cluster ... succeeded

Node 2, second script (root.sh):

[root@19crac2 ~]# /u01/app/19.3.0/grid/root.sh

Performing root user operation.

The following environment variables are set as:

ORACLE_OWNER= grid

ORACLE_HOME= /u01/app/19.3.0/grid

Enter the full pathname of the local bin directory: [/usr/local/bin]:

Copying dbhome to /usr/local/bin ...

Copying oraenv to /usr/local/bin ...

Copying coraenv to /usr/local/bin ...

Creating /etc/oratab file...

Entries will be added to the /etc/oratab file as needed by

Database Configuration Assistant when a database is created

Finished running generic part of root script.

Now product-specific root actions will be performed.

Relinking oracle with rac_on option

Using configuration parameter file: /u01/app/19.3.0/grid/crs/install/crsconfig_params

The log of current session can be found at:

/u01/app/grid/crsdata/19crac2/crsconfig/rootcrs_19crac2_2021-09-23_01-53-18PM.log

2021/09/23 13:53:22 CLSRSC-594: Executing installation step 1 of 19: 'SetupTFA'.

2021/09/23 13:53:22 CLSRSC-594: Executing installation step 2 of 19: 'ValidateEnv'.

2021/09/23 13:53:23 CLSRSC-363: User ignored prerequisites during installation

2021/09/23 13:53:23 CLSRSC-594: Executing installation step 3 of 19: 'CheckFirstNode'.

2021/09/23 13:53:24 CLSRSC-594: Executing installation step 4 of 19: 'GenSiteGUIDs'.

2021/09/23 13:53:24 CLSRSC-594: Executing installation step 5 of 19: 'SetupOSD'.

2021/09/23 13:53:24 CLSRSC-594: Executing installation step 6 of 19: 'CheckCRSConfig'.

2021/09/23 13:53:25 CLSRSC-594: Executing installation step 7 of 19: 'SetupLocalGPNP'.

2021/09/23 13:53:26 CLSRSC-594: Executing installation step 8 of 19: 'CreateRootCert'.

2021/09/23 13:53:26 CLSRSC-594: Executing installation step 9 of 19: 'ConfigOLR'.

2021/09/23 13:53:31 CLSRSC-594: Executing installation step 10 of 19: 'ConfigCHMOS'.

2021/09/23 13:53:31 CLSRSC-594: Executing installation step 11 of 19: 'CreateOHASD'.

2021/09/23 13:53:33 CLSRSC-594: Executing installation step 12 of 19: 'ConfigOHASD'.

2021/09/23 13:53:33 CLSRSC-330: Adding Clusterware entries to file 'oracle-ohasd.service'

2021/09/23 13:53:47 CLSRSC-4002: Successfully installed Oracle Trace File Analyzer (TFA) Collector.

2021/09/23 13:54:10 CLSRSC-594: Executing installation step 13 of 19: 'InstallAFD'.

2021/09/23 13:54:11 CLSRSC-594: Executing installation step 14 of 19: 'InstallACFS'.

2021/09/23 13:54:57 CLSRSC-594: Executing installation step 15 of 19: 'InstallKA'.

2021/09/23 13:54:58 CLSRSC-594: Executing installation step 16 of 19: 'InitConfig'.

2021/09/23 13:55:08 CLSRSC-594: Executing installation step 17 of 19: 'StartCluster'.

2021/09/23 13:56:13 CLSRSC-343: Successfully started Oracle Clusterware stack

2021/09/23 13:56:13 CLSRSC-594: Executing installation step 18 of 19: 'ConfigNode'.

2021/09/23 13:56:34 CLSRSC-594: Executing installation step 19 of 19: 'PostConfig'.

2021/09/23 13:56:39 CLSRSC-325: Configure Oracle Grid Infrastructure for a Cluster ... succeeded

Check:

[grid@19crac1:/home/grid]$ crsctl stat res -t

--------------------------------------------------------------------------------

Name Target State Server State details

--------------------------------------------------------------------------------

Local Resources

--------------------------------------------------------------------------------

ora.LISTENER.lsnr

ONLINE ONLINE 19crac1 STABLE

ONLINE ONLINE 19crac2 STABLE

ora.chad

ONLINE ONLINE 19crac1 STABLE

ONLINE ONLINE 19crac2 STABLE

ora.net1.network

ONLINE ONLINE 19crac1 STABLE

ONLINE ONLINE 19crac2 STABLE

ora.ons

ONLINE ONLINE 19crac1 STABLE

ONLINE ONLINE 19crac2 STABLE

ora.proxy_advm

OFFLINE OFFLINE 19crac1 STABLE

OFFLINE OFFLINE 19crac2 STABLE

--------------------------------------------------------------------------------

Cluster Resources

--------------------------------------------------------------------------------

ora.19crac1.vip

1 ONLINE ONLINE 19crac1 STABLE

ora.19crac2.vip

1 ONLINE ONLINE 19crac2 STABLE

ora.ASMNET1LSNR_ASM.lsnr(ora.asmgroup)

1 ONLINE ONLINE 19crac1 STABLE

2 ONLINE ONLINE 19crac2 STABLE

3 OFFLINE OFFLINE STABLE

ora.LISTENER_SCAN1.lsnr

1 ONLINE ONLINE 19crac1 STABLE

ora.OCR.dg(ora.asmgroup)

1 ONLINE ONLINE 19crac1 STABLE

2 ONLINE ONLINE 19crac2 STABLE

3 OFFLINE OFFLINE STABLE

ora.asm(ora.asmgroup)

1 ONLINE ONLINE 19crac1 Started,STABLE

2 ONLINE ONLINE 19crac2 Started,STABLE

3 OFFLINE OFFLINE STABLE

ora.asmnet1.asmnetwork(ora.asmgroup)

1 ONLINE ONLINE 19crac1 STABLE

2 ONLINE ONLINE 19crac2 STABLE

3 OFFLINE OFFLINE STABLE

ora.cvu

1 ONLINE ONLINE 19crac1 STABLE

ora.qosmserver

1 ONLINE ONLINE 19crac1 STABLE

ora.scan1.vip

1 ONLINE ONLINE 19crac1 STABLE

--------------------------------------------------------------------------------
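In a listing this long, a resource whose target is ONLINE but whose state is not is easy to miss. The following sketch filters the output down to those rows. It assumes the layout shown above (a resource name line starting with ora. followed by its status rows); crs_not_online is a hypothetical helper:

```shell
# Read "crsctl stat res -t" output on stdin and print the rows whose
# target is ONLINE but whose state is OFFLINE, INTERMEDIATE or UNKNOWN,
# prefixed with the resource name they belong to.
crs_not_online() {
    awk '
        /^ora\./ { res = $1; next }
        /ONLINE[[:space:]]+(OFFLINE|INTERMEDIATE|UNKNOWN)/ { print res ": " $0 }
    '
}

# Example: crsctl stat res -t | crs_not_online
```

Rows with both target and state OFFLINE, such as ora.proxy_advm above, are not reported because they are offline by design.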

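Create disk groups

Install the Oracle software

As the oracle user:

unzip LINUX.X64_193000_db_home.zip -d $ORACLE_HOME

./runInstaller

These can all be ignored

Run the scripts

Create the database with dbca

Advanced configuration

Select General Purpose

Choose the database name and whether to include a PDB

Choose the database storage location and management method

Choose whether to enable the FRA and archiving

Do not use Database Vault

EM configuration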

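Common commands

Cluster resource status

crsctl stat res -t

Cluster service status

crsctl check cluster -all

Database status

srvctl status database -d db19c

Listener status

lsnrctl status

SCAN status

srvctl status scan

srvctl status scan_listener

lsnrctl status LISTENER_SCAN1

nodeapps status

srvctl status nodeapps

VIP status

srvctl status vip -node 19crac1

srvctl status vip -node 19crac2

Database configuration

srvctl config database -d db19c

OCR

ocrcheck

Voting disk

crsctl query css votedisk

GI version

crsctl query crs releaseversion

crsctl query crs activeversion

ASM

asmcmd lsdg

asmcmd lsof

Starting and stopping RAC

-- Stop/start a single instance (-i takes the instance name)

$ srvctl stop instance -d db19c -i db19c1

$ srvctl start instance -d db19c -i db19c1

-- Stop/start all instances

$ srvctl stop database -d db19c

$ srvctl start database -d db19c

-- Stop/start CRS

$ crsctl stop crs

$ crsctl start crs

-- Stop/start the cluster services

crsctl stop cluster -all

crsctl start cluster -all

crsctl start/stop crs manages a single node.

crsctl start/stop cluster [-all for all nodes] can manage multiple nodes.

crsctl start/stop crs manages the CRS stack including the OHASD process.

crsctl start/stop cluster does not include the OHASD process; OHASD must already be running before it can be used.

srvctl stop/start database stops or starts all instances and their enabled services.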
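For a full shutdown, the commands above are typically combined: stop the database first, then stop CRS on each node. The sketch below only prints that sequence as a checklist and executes nothing. rac_stop_plan is a hypothetical helper, and the ordering is a common convention rather than something prescribed in this article:

```shell
# Print a stop sequence for a database and a list of nodes.
# Nothing is executed; the output is a checklist.
# Usage: rac_stop_plan DB_NAME NODE...
rac_stop_plan() {
    local db="$1" node
    shift
    echo "as oracle: srvctl stop database -d ${db}"
    for node in "$@"; do
        echo "as root on ${node}: crsctl stop crs"
    done
}

rac_stop_plan db19c 19crac1 19crac2
```

Startup runs in the opposite order: crsctl start crs on each node, after which the database resource can be started with srvctl start database if it does not come up automatically.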
