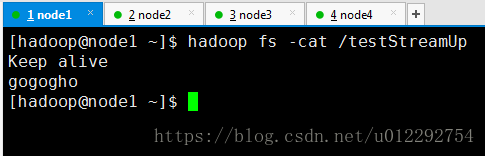

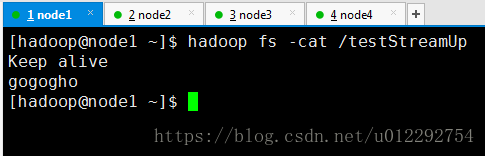

1 Uploading a file with streams

    package com.tzb.hdfs;

    import org.apache.commons.io.IOUtils;
    import org.apache.hadoop.conf.Configuration;
    import org.apache.hadoop.fs.FSDataInputStream;
    import org.apache.hadoop.fs.FSDataOutputStream;
    import org.apache.hadoop.fs.FileSystem;
    import org.apache.hadoop.fs.Path;
    import org.junit.Before;
    import org.junit.Test;

    import java.io.FileInputStream;
    import java.io.FileOutputStream;
    import java.io.IOException;
    import java.net.URI;
    import java.net.URISyntaxException;

    public class HdfsStreamAccess {

        FileSystem fs = null;
        Configuration conf = null;

        @Before
        public void init() throws IOException, URISyntaxException, InterruptedException {
            conf = new Configuration();
            // Connect to the NameNode as user "hadoop". The earlier call to
            // FileSystem.get(conf) was dropped: its result was overwritten immediately.
            fs = FileSystem.get(new URI("hdfs://node1:9000"), conf, "hadoop");
        }

        @Test
        public void testUpload() throws IOException {
            // try-with-resources closes both streams; closing the HDFS output
            // stream is what completes the write of the file.
            try (FileInputStream inputStream = new FileInputStream("h:/testStream");
                 FSDataOutputStream outputStream = fs.create(new Path("/testStreamUp"), true)) {
                // Copy the local file into the HDFS file (overwrite = true above)
                IOUtils.copy(inputStream, outputStream);
            }
        }

    }
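The upload above leaves the actual byte shuffling to `IOUtils.copy`. As a rough illustration of what such a copy does, here is a minimal sketch of a buffered read/write loop over plain `java.io` streams. The class name `StreamCopySketch`, the `copy` helper and the 4096-byte buffer size are assumptions made for this example, not part of Commons IO or Hadoop, and in-memory streams stand in for the local file and the HDFS stream so the code runs without a cluster.

```java
import java.io.ByteArrayInputStream;
import java.io.ByteArrayOutputStream;
import java.io.IOException;
import java.io.InputStream;
import java.io.OutputStream;
import java.nio.charset.StandardCharsets;

public class StreamCopySketch {

    // Hypothetical helper: copies everything from in to out and
    // returns the number of bytes copied. It does not close either stream.
    public static long copy(InputStream in, OutputStream out) throws IOException {
        byte[] buffer = new byte[4096]; // buffer size chosen arbitrarily for the sketch
        long total = 0;
        int n;
        // read() returns -1 once the end of the input is reached
        while ((n = in.read(buffer)) != -1) {
            out.write(buffer, 0, n);
            total += n;
        }
        return total;
    }

    public static void main(String[] args) throws IOException {
        byte[] data = "hello hdfs streams".getBytes(StandardCharsets.UTF_8);
        ByteArrayOutputStream sink = new ByteArrayOutputStream();
        // try-with-resources closes the input even if the copy fails
        try (InputStream in = new ByteArrayInputStream(data)) {
            long copied = copy(in, sink);
            System.out.println(copied + " bytes copied");
        }
    }
}
```

Because the helper only depends on `InputStream` and `OutputStream`, the same loop would apply to a `FileInputStream` and the stream returned by `fs.create(...)`; the caller stays responsible for closing both.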

2 Downloading a file with streams

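    @Test
    public void testDownload() throws IOException {
        // Open the HDFS file for reading and copy it to a local file;
        // both streams are closed when the block exits.
        try (FSDataInputStream inputStream = fs.open(new Path("/testStreamUp"));
             FileOutputStream outputStream = new FileOutputStream("g:/testStreamDown")) {
            IOUtils.copy(inputStream, outputStream);
        }
    }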

2.1 Downloading part of a file

    @Test
    public void testRandomAccess() throws IOException {
        try (FSDataInputStream inputStream = fs.open(new Path("/testStreamUp"));
             FileOutputStream outputStream = new FileOutputStream("g:/testStreamDown.part2")) {
            // Skip the first 12 bytes; the copy starts at byte offset 12
            // and runs to the end of the file.
            inputStream.seek(12);
            IOUtils.copy(inputStream, outputStream);
        }
    }

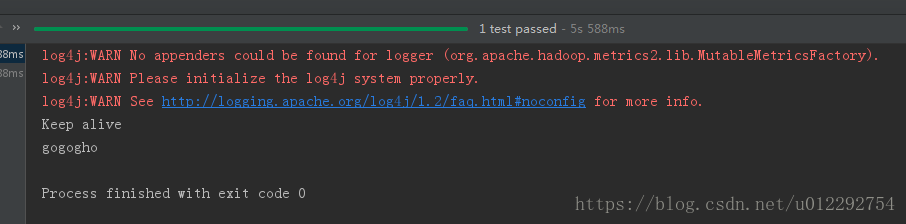

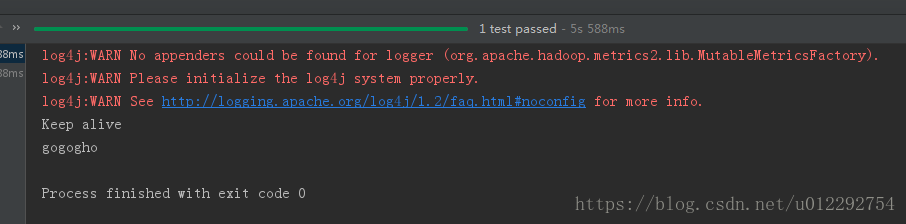
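The test in 2.1 copies everything from offset 12 to the end of the file. If only a bounded slice is wanted, the copy also has to stop after a given number of bytes. The following is a minimal sketch of that idea, not the author's method: `RangeCopySketch` and `copyRange` are hypothetical names, and plain `InputStream.skip` on an in-memory stream stands in for `FSDataInputStream.seek` so the example runs without a cluster.

```java
import java.io.ByteArrayInputStream;
import java.io.ByteArrayOutputStream;
import java.io.IOException;
import java.io.InputStream;
import java.io.OutputStream;
import java.nio.charset.StandardCharsets;

public class RangeCopySketch {

    // Hypothetical helper: copies at most `length` bytes starting at byte
    // `offset` of `in` into `out` and returns the number of bytes copied.
    public static long copyRange(InputStream in, OutputStream out,
                                 long offset, long length) throws IOException {
        // skip() may skip fewer bytes than requested, so loop until done
        long toSkip = offset;
        while (toSkip > 0) {
            long skipped = in.skip(toSkip);
            if (skipped <= 0) {
                // Nothing skipped: read one byte to tell "slow" from "end of stream"
                if (in.read() == -1) {
                    return 0; // offset lies beyond the end of the input
                }
                skipped = 1;
            }
            toSkip -= skipped;
        }

        byte[] buffer = new byte[4096];
        long remaining = length;
        long total = 0;
        while (remaining > 0) {
            // Never ask for more than the bytes still wanted
            int n = in.read(buffer, 0, (int) Math.min(buffer.length, remaining));
            if (n == -1) {
                break; // input ended before `length` bytes were available
            }
            out.write(buffer, 0, n);
            remaining -= n;
            total += n;
        }
        return total;
    }

    public static void main(String[] args) throws IOException {
        byte[] data = "0123456789abcdefghij".getBytes(StandardCharsets.UTF_8);
        ByteArrayOutputStream sink = new ByteArrayOutputStream();
        long copied = copyRange(new ByteArrayInputStream(data), sink, 12, 4);
        System.out.println(copied + " bytes: " + sink.toString("UTF-8"));
    }
}
```

With an HDFS stream, the skip loop would presumably be replaced by a single `inputStream.seek(offset)` as in `testRandomAccess`, leaving only the bounded read loop.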

2.2 Displaying the contents of an HDFS file

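    @Test
    public void testCat() throws IOException {
        // Only the HDFS stream is closed; System.out must stay open.
        try (FSDataInputStream in = fs.open(new Path("/testStreamUp"))) {
            // Write the file contents to standard output
            IOUtils.copy(in, System.out);
            System.out.flush();
        }
    }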
