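Introduction

- MNIST is the "hello world" of ML and DL: a model is trained on images of handwritten digits and then evaluated on held-out test images to verify that it works.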

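Preparing the dataset

- Downloading the dataset from within the code can take a while, or fail with an IO error. In practice it is easier to download the data ahead of time and use the offline copy.
- Download location: see the related links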
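One way to avoid an unexpected download is to check that the offline copy is complete before training. The sketch below assumes the data directory holds the four standard MNIST archives under their original file names; `missing_mnist_files` is a hypothetical helper, not part of TensorFlow.

```python
import os

# The four archives that make up the standard MNIST distribution.
MNIST_FILES = [
    "train-images-idx3-ubyte.gz",
    "train-labels-idx1-ubyte.gz",
    "t10k-images-idx3-ubyte.gz",
    "t10k-labels-idx1-ubyte.gz",
]


def missing_mnist_files(data_dir):
    """Return the names of MNIST archives not found in data_dir."""
    data_dir = os.path.expanduser(data_dir)
    return [name for name in MNIST_FILES
            if not os.path.isfile(os.path.join(data_dir, name))]


# "MNIST_data" is an assumed directory name; adjust it to the local copy.
missing = missing_mnist_files("MNIST_data")
if missing:
    print("missing files:", ", ".join(missing))
else:
    print("offline dataset is complete")
```

If the list is empty, the loader should find everything it needs locally and skip the download.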

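Basic ML method

Reference links

- https://www.tensorflow.org/versions/r0.10/tutorials/mnist/beginners/

- Download mnist_softmax.py from https://github.com/tensorflow/tensorflow/blob/master/tensorflow/examples/tutorials/mnist/mnist_softmax.py, activate the TensorFlow environment locally, and run the script directly.
- Note: the script needs the directory that holds the dataset downloaded earlier. Either edit the path in the code or pass it as an argument. For example, python mnist_softmax.py --data_dir=~/MNIST_data points to the MNIST_data folder under the home directory.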
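Whether `~` in `--data_dir=~/MNIST_data` gets expanded depends on the shell, and Python does not expand it on its own. A minimal sketch of how a script could normalize the path itself, assuming the same `--data_dir` flag and the `MNIST_data` default used later in this post; `parse_data_dir` is a hypothetical helper, not part of mnist_softmax.py.

```python
import argparse
import os


def parse_data_dir(argv):
    """Parse --data_dir and return it as an absolute, user-expanded path."""
    parser = argparse.ArgumentParser()
    parser.add_argument("--data_dir", type=str, default="MNIST_data",
                        help="Directory for storing input data")
    flags, _unparsed = parser.parse_known_args(argv)
    # expanduser turns a leading "~" into the home directory;
    # abspath resolves relative paths against the current directory.
    return os.path.abspath(os.path.expanduser(flags.data_dir))


print(parse_data_dir(["--data_dir=~/MNIST_data"]))
```

Passing an absolute path (or `$HOME/MNIST_data`) on the command line sidesteps the question entirely.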

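DL method

- The dataset is the same as before. Download cnn_mnist.py and run it.
- The code may try to download the dataset, so first point it at the copy that is already downloaded. In fully_connected_feed.py, under tensorflow-master/tensorflow/examples/tutorials/mnist, the dataset path is set by changing input_data_dir. If the required files are found there, nothing is downloaded and training and testing start directly.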

An MNIST script rewritten from the ML version

- Reference link: http://www.tensorfly.cn/tfdoc/tutorials/mnist_pros.html

Code

    # Requires TensorFlow 1.x: tensorflow.examples.tutorials and tf.placeholder
    # are not part of the default TensorFlow 2.x API.
    from __future__ import absolute_import
    from __future__ import division
    from __future__ import print_function

    import argparse
    import sys

    from tensorflow.examples.tutorials.mnist import input_data
    import tensorflow as tf

    FLAGS = None


    def main(_):
        # Import data
        mnist = input_data.read_data_sets(FLAGS.data_dir, one_hot=True)

        # Softmax model kept from the ML version (W, b and y are not used by the CNN)
        x = tf.placeholder(tf.float32, [None, 784])
        W = tf.Variable(tf.zeros([784, 10]))
        b = tf.Variable(tf.zeros([10]))
        y = tf.matmul(x, W) + b

        # Placeholder for the one-hot labels
        y_ = tf.placeholder(tf.float32, [None, 10])

        # First convolutional layer: 5x5 kernels, 1 input channel, 32 feature maps
        W_conv1 = weight_variable([5, 5, 1, 32])
        b_conv1 = bias_variable([32])
        x_image = tf.reshape(x, [-1, 28, 28, 1])
        h_conv1 = tf.nn.relu(conv2d(x_image, W_conv1) + b_conv1)
        h_pool1 = max_pool_2x2(h_conv1)

        # Second convolutional layer: 5x5 kernels, 32 -> 64 feature maps
        W_conv2 = weight_variable([5, 5, 32, 64])
        b_conv2 = bias_variable([64])
        h_conv2 = tf.nn.relu(conv2d(h_pool1, W_conv2) + b_conv2)
        h_pool2 = max_pool_2x2(h_conv2)

        # Fully connected layer on the flattened 7x7x64 feature maps
        W_fc1 = weight_variable([7 * 7 * 64, 1024])
        b_fc1 = bias_variable([1024])
        h_pool2_flat = tf.reshape(h_pool2, [-1, 7 * 7 * 64])
        h_fc1 = tf.nn.relu(tf.matmul(h_pool2_flat, W_fc1) + b_fc1)

        # Dropout: keep_prob is fed at run time (0.5 for training, 1.0 for evaluation)
        keep_prob = tf.placeholder("float")
        h_fc1_drop = tf.nn.dropout(h_fc1, keep_prob)

        # Output layer
        W_fc2 = weight_variable([1024, 10])
        b_fc2 = bias_variable([10])
        y_conv = tf.nn.softmax(tf.matmul(h_fc1_drop, W_fc2) + b_fc2)

        # Loss, optimizer and accuracy
        cross_entropy = -tf.reduce_sum(y_ * tf.log(y_conv))
        train_step = tf.train.AdamOptimizer(1e-4).minimize(cross_entropy)
        correct_prediction = tf.equal(tf.argmax(y_conv, 1), tf.argmax(y_, 1))
        accuracy = tf.reduce_mean(tf.cast(correct_prediction, "float"))

        sess = tf.InteractiveSession()
        tf.global_variables_initializer().run()
        # sess.run(tf.initialize_all_variables())  # older equivalent

        for i in range(20000):
            batch = mnist.train.next_batch(50)
            if i % 100 == 0:
                train_accuracy = accuracy.eval(feed_dict={
                    x: batch[0], y_: batch[1], keep_prob: 1.0})
                print("step %d, training accuracy %g" % (i, train_accuracy))
            train_step.run(feed_dict={x: batch[0], y_: batch[1], keep_prob: 0.5})

        print("test accuracy %g" % accuracy.eval(feed_dict={
            x: mnist.test.images, y_: mnist.test.labels, keep_prob: 1.0}))


    # Helper functions for the DL model
    def weight_variable(shape):
        initial = tf.truncated_normal(shape, stddev=0.1)
        return tf.Variable(initial)


    def bias_variable(shape):
        initial = tf.constant(0.1, shape=shape)
        return tf.Variable(initial)


    def conv2d(x, W):
        return tf.nn.conv2d(x, W, strides=[1, 1, 1, 1], padding='SAME')


    def max_pool_2x2(x):
        return tf.nn.max_pool(x, ksize=[1, 2, 2, 1],
                              strides=[1, 2, 2, 1], padding='SAME')


    if __name__ == '__main__':
        parser = argparse.ArgumentParser()
        parser.add_argument('--data_dir', type=str, default='MNIST_data',
                            help='Directory for storing input data')
        FLAGS, unparsed = parser.parse_known_args()
        tf.app.run(main=main, argv=[sys.argv[0]] + unparsed)
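The `7 * 7 * 64` in `W_fc1` follows from the layer shapes. With `padding='SAME'`, a stride-1 convolution keeps the 28x28 spatial size, and each 2x2 max-pool with stride 2 halves it (rounding up). A small sketch of that arithmetic, where `same_out` is a hypothetical helper rather than a TensorFlow function:

```python
import math


def same_out(size, stride):
    """Output length along one spatial axis under 'SAME' padding."""
    return math.ceil(size / stride)


size = 28                      # MNIST images are 28x28
size = same_out(size, 1)       # conv1, stride 1 -> 28
size = same_out(size, 2)       # pool1, stride 2 -> 14
size = same_out(size, 1)       # conv2, stride 1 -> 14
size = same_out(size, 2)       # pool2, stride 2 -> 7
flat = size * size * 64        # 64 feature maps after conv2
print(size, flat)              # 7 3136
```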

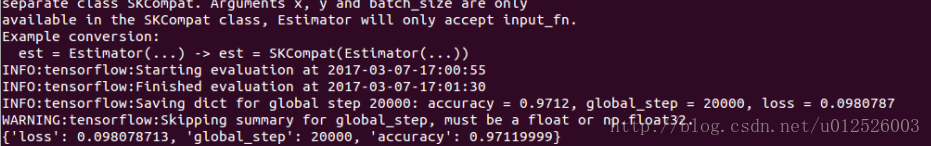

Result figure

Training MNIST with a multilayer perceptron

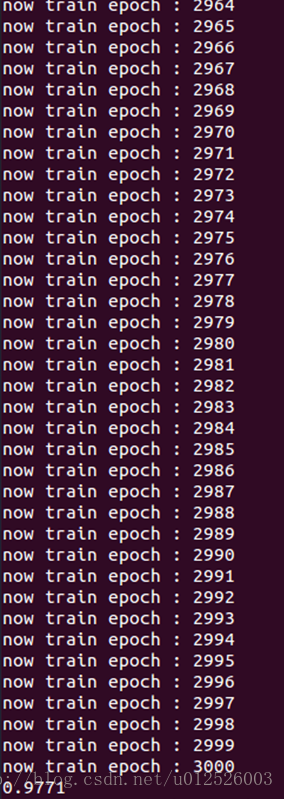

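- The earlier approach, which applies softmax directly to MNIST, reaches about 91% accuracy. Adding one hidden layer between the input and output layers turns the model into a multilayer perceptron (MLP), and accuracy rises above 97%. That still falls short of the CNN, but training takes very little time.

Code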

    # Requires TensorFlow 1.x
    from tensorflow.examples.tutorials.mnist import input_data
    import tensorflow as tf

    mnist = input_data.read_data_sets('MNIST_data', one_hot=True)
    sess = tf.InteractiveSession()

    # Layer sizes: 784 input pixels, 300 hidden units, 10 classes
    in_units = 784
    h1_units = 300

    W1 = tf.Variable(tf.truncated_normal([in_units, h1_units], stddev=0.1))
    b1 = tf.Variable(tf.zeros([h1_units]))
    W2 = tf.Variable(tf.zeros([h1_units, 10]))
    b2 = tf.Variable(tf.zeros([10]))

    x = tf.placeholder(tf.float32, [None, in_units])
    keep_prob = tf.placeholder(tf.float32)

    # Hidden layer with ReLU and dropout, followed by a softmax output layer
    hidden1 = tf.nn.relu(tf.matmul(x, W1) + b1)
    hidden1_drop = tf.nn.dropout(hidden1, keep_prob)
    y = tf.nn.softmax(tf.matmul(hidden1_drop, W2) + b2)

    # Cross-entropy loss averaged over the batch
    y_ = tf.placeholder(tf.float32, [None, 10])
    cross_entropy = tf.reduce_mean(
        -tf.reduce_sum(y_ * tf.log(y), reduction_indices=[1]))
    train_step = tf.train.AdagradOptimizer(0.3).minimize(cross_entropy)

    tf.global_variables_initializer().run()

    # Each iteration trains on one mini-batch of 100 images
    for i in range(3000):
        print('now train step : %04d' % (i + 1))
        batch_xs, batch_ys = mnist.train.next_batch(100)
        train_step.run({x: batch_xs, y_: batch_ys, keep_prob: 0.75})

    # Evaluate on the test set with dropout disabled
    correct_prediction = tf.equal(tf.argmax(y, 1), tf.argmax(y_, 1))
    accuracy = tf.reduce_mean(tf.cast(correct_prediction, tf.float32))
    print(accuracy.eval({x: mnist.test.images, y_: mnist.test.labels,
                         keep_prob: 1.0}))
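The script feeds `keep_prob` as 0.75 while training and 1.0 for evaluation. `tf.nn.dropout` keeps each unit with probability `keep_prob` and scales the kept units by `1 / keep_prob`, so the expected activation is unchanged and no extra rescaling is needed at test time. A plain-Python sketch of that behaviour; the `dropout` function here is illustrative and is not the TensorFlow implementation.

```python
import random


def dropout(values, keep_prob, rng):
    """Inverted dropout: zero each value with prob 1 - keep_prob, rescale the rest."""
    return [v / keep_prob if rng.random() < keep_prob else 0.0 for v in values]


rng = random.Random(0)
hidden = [1.0] * 100000                  # stand-in for hidden-layer activations

train_out = dropout(hidden, 0.75, rng)   # training: about 25% of units zeroed
test_out = dropout(hidden, 1.0, rng)     # evaluation: nothing is dropped

print(sum(train_out) / len(train_out))   # close to 1.0 on average
print(test_out == hidden)
```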

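Result figure

Summary

- The softmax-based ML method reaches roughly 91% accuracy and trains quickly. The CNN-based DL method reaches over 99% accuracy but takes considerably longer to train.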
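Part of the gap in training time comes from model size. As a rough illustration, the trainable parameters of the three models can be counted from the layer shapes used in the code in this post. Parameter count is only a proxy for cost, since a convolution does more work per parameter than a dense layer; `dense` and `conv` are hypothetical helpers.

```python
def dense(n_in, n_out):
    """Weights plus biases of a fully connected layer."""
    return n_in * n_out + n_out


def conv(k, c_in, c_out):
    """Weights plus biases of a k x k convolution layer."""
    return k * k * c_in * c_out + c_out


softmax_params = dense(784, 10)
mlp_params = dense(784, 300) + dense(300, 10)
cnn_params = (conv(5, 1, 32) + conv(5, 32, 64)
              + dense(7 * 7 * 64, 1024) + dense(1024, 10))

print(softmax_params, mlp_params, cnn_params)  # 7850 238510 3274634
```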
