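Learning PyTorch

This article is a set of study notes on the PyTorch framework.

1. Basic data types and basic operations

1.1 Tensors

Import PyTorch in Python with:

import torch

In torch, data is usually represented as a tensor (Tensor), similar to an array in numpy. Create a 5x3 uninitialized tensor (its contents are whatever happened to be in memory, not random samples):

x = torch.Tensor(5, 3)

Create a 5x3 tensor sampled from the uniform distribution on [0, 1):

x = torch.rand(5, 3)

Create a 5x3 tensor sampled from the standard normal distribution (mean 0, variance 1):

x = torch.randn(5, 3)

The size of a tensor is returned as a torch.Size object, which is a subclass of tuple:

print(x.size())

1.2 Basic operations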

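Operations can be written either with operators or with functions. For example, to compute x + y:

y = torch.rand(5, 3)
z = x + y
# or
z = torch.Tensor(5, 3)
torch.add(x, y, out=z)

Operations that modify a tensor in place have a trailing underscore in the function name, for example in-place addition:

y.add_(x)

Tensors also support more than 100 operations similar to those of numpy arrays; see the official PyTorch documentation for details.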
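The three forms of addition described above can be compared side by side. The following is a minimal sketch; the variable names (z_op, z_fn, y_before) and the fixed seed are illustrative assumptions, not part of the original notes.

```python
import torch

torch.manual_seed(0)  # fixed seed so repeated runs give the same values

x = torch.rand(5, 3)  # uniform samples in [0, 1)
y = torch.rand(5, 3)

z_op = x + y                # operator form
z_fn = torch.empty(5, 3)    # preallocated output buffer
torch.add(x, y, out=z_fn)   # function form, result written into z_fn

y_before = y.clone()        # keep a copy to compare against
y.add_(x)                   # in-place form: y itself now holds y_before + x

print(x.size())
print(torch.equal(z_op, z_fn))
print(torch.equal(y, y_before + x))
```

The operator and function forms allocate or fill a separate result, while the underscore form overwrites y, which is why a copy is taken before calling it.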

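1.3 Converting to and from numpy

Tensor -> numpy

a = torch.ones(5)
b = a.numpy()

numpy -> Tensor

import numpy as np
a = np.ones(5)
b = torch.from_numpy(a)

In both directions, a CPU tensor and the corresponding array share the same memory, so modifying one also changes the other.

1.4 Variable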

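Variable is introduced by the autograd package, which can compute gradients for all Tensor operations. It is loosely comparable to a placeholder in TensorFlow, except that a Variable always holds concrete data. Once a Variable is defined and a result has been computed from it, calling backward() computes the gradients automatically. Since PyTorch 0.4, Variable has been merged into Tensor, so torch.ones(2, 2, requires_grad=True) behaves the same way; the Variable wrapper used in the examples here still works.

A Variable has three main attributes: data is the underlying value, grad is the gradient, and grad_fn is the function that produced the Variable and is used to compute gradients. Example: define a [2, 2] Variable with initial value 1 that tracks gradients:

import torch
from torch.autograd import Variable
x = Variable(torch.ones(2, 2), requires_grad=True)

Define a computation and find the gradient at x = 1. Let

z = 3(x+2)^2

then

z = 3 * torch.pow(x + 2, 2)
out = z.mean()
out.backward()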

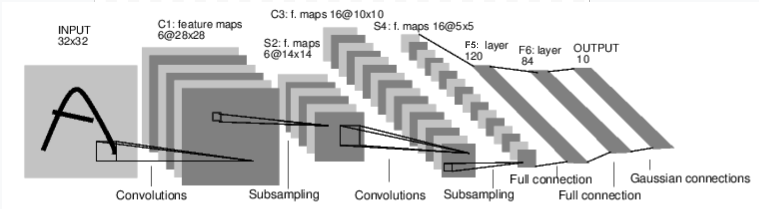
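The gradient can also be checked by hand. Since out is the mean of the four entries of z = 3(x+2)^2, each partial derivative is (1/4) * 6 * (x_i + 2) = 1.5 * (x_i + 2), which equals 4.5 at x_i = 1. The following is a minimal sketch of that check, assuming PyTorch 0.4 or later so that a plain tensor with requires_grad=True can stand in for the Variable; the name expected is illustrative.

```python
import torch

x = torch.ones(2, 2, requires_grad=True)  # plain tensor that tracks gradients
z = 3 * (x + 2) ** 2
out = z.mean()
out.backward()                            # fills x.grad with d(out)/dx

expected = torch.full((2, 2), 4.5)        # hand-derived value: 1.5 * (1 + 2)
print(x.grad)
print(torch.allclose(x.grad, expected))
```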

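print(x.grad)

1.5 A simple CNN

We use LeNet for handwritten digit recognition as an example of how a neural network is structured in PyTorch. Network layers are provided by nn, and the related functions by nn.functional:

import torch.nn as nn
import torch.nn.functional as F

In PyTorch every model is written as a class that inherits from nn.Module.

**First, define** LeNet. A complete model definition looks like this: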

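class Net(nn.Module):
    def __init__(self):
        super(Net, self).__init__()
        # 1 input image channel, 6 output channels, 5x5 square convolution kernel
        self.conv1 = nn.Conv2d(1, 6, 5)
        self.conv2 = nn.Conv2d(6, 16, 5)
        # an affine operation: y = Wx + b
        self.fc1 = nn.Linear(16 * 5 * 5, 120)
        self.fc2 = nn.Linear(120, 84)
        self.fc3 = nn.Linear(84, 10)

    def forward(self, x):
        # Max pooling over a (2, 2) window
        x = F.max_pool2d(F.relu(self.conv1(x)), (2, 2))
        # If the window is square you can specify just a single number
        x = F.max_pool2d(F.relu(self.conv2(x)), 2)
        x = x.view(-1, self.num_flat_features(x))
        x = F.relu(self.fc1(x))
        x = F.relu(self.fc2(x))
        x = self.fc3(x)
        return x

    def num_flat_features(self, x):
        # features per sample: product of all dimensions except the batch dimension
        size = x.size()[1:]
        num_features = 1
        for s in size:
            num_features *= s
        return num_features

net = Net()

The definition consists of a constructor and the forward computation. The constructor declares the layers, whose parameters are randomly initialized; forward describes how data flows through those layers; the backward computation is derived automatically by autograd. Note that in PyTorch versions before 0.4 the input to the forward pass must be a Variable; from 0.4 on, a plain Tensor is accepted as well.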
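The input size 16 * 5 * 5 of the first fully connected layer follows from how each layer shrinks a 32x32 input image. The arithmetic can be sketched as below; conv_out is a hypothetical helper written for this illustration, using the standard output-size formula for convolution and pooling layers without dilation.

```python
def conv_out(size, kernel, stride=1, padding=0):
    """Spatial size after a convolution or pooling layer (no dilation)."""
    return (size + 2 * padding - kernel) // stride + 1

size = 32                                  # 32x32 input image
size = conv_out(size, kernel=5)            # conv1, 5x5 kernel: 32 -> 28
size = conv_out(size, kernel=2, stride=2)  # 2x2 max pooling:   28 -> 14
size = conv_out(size, kernel=5)            # conv2, 5x5 kernel: 14 -> 10
size = conv_out(size, kernel=2, stride=2)  # 2x2 max pooling:   10 -> 5

flat_features = 16 * size * size           # 16 output channels from conv2
print(size, flat_features)                 # 5 400
```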

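Next, run one forward pass through the network defined above:

input = Variable(torch.randn(1, 1, 32, 32))
out = net(input)
print(out)

Then zero the gradients of all parameters and backpropagate once with a random gradient:

net.zero_grad()
out.backward(torch.randn(1, 10))

The parameters of a neural network are learned from training data, which requires a loss function. The nn module in PyTorch defines a variety of loss functions. A loss function takes the network output and the target value as its arguments. Handwritten digits have 10 classes, so here we simply use the values 1 to 10 as a dummy target and define the loss as follows:

output = net(input)
target = Variable(torch.arange(1, 11, dtype=torch.float32))  # a dummy target, for example
target = target.view(1, -1)  # make it the same shape as output
criterion = nn.MSELoss()
loss = criterion(output, target)

Backpropagating from the loss gives the gradients of all parameters. In practice, after computing the loss you first zero the gradients of all parameters, then backpropagate, and then update the parameters. Gradient descent is only one way to fit the parameters of an optimization problem; other methods include Adam and RMSProp.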
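To make the update rule of plain gradient descent concrete, the sketch below compares one step of optim.SGD (without momentum) against the hand-written rule p - lr * grad. The tiny nn.Linear model, the random data, and all variable names are assumptions made for this illustration; they stand in for the LeNet network in these notes.

```python
import torch
import torch.nn as nn
import torch.optim as optim

torch.manual_seed(0)
lr = 0.01
model = nn.Linear(3, 1)        # tiny stand-in model (hypothetical)
inputs = torch.randn(4, 3)     # made-up batch of 4 samples
target = torch.randn(4, 1)

optimizer = optim.SGD(model.parameters(), lr=lr)
optimizer.zero_grad()          # clear any old gradients
loss = nn.MSELoss()(model(inputs), target)
loss.backward()                # compute gradients of the loss

# What plain gradient descent should produce for each parameter
expected = [p.detach() - lr * p.grad for p in model.parameters()]

optimizer.step()               # let the optimizer apply the update
matches = all(torch.allclose(p.detach(), e)
              for p, e in zip(model.parameters(), expected))
print(matches)
```

Other optimizers such as optim.Adam or optim.RMSprop are constructed the same way from model.parameters(), but apply a different update rule, so this comparison would not hold for them.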

import torch.optim as optim

# create your optimizer
optimizer = optim.SGD(net.parameters(), lr=0.01)

# in your training loop:
optimizer.zero_grad()  # zero the gradient buffers
output = net(input)
loss = criterion(output, target)
loss.backward()
optimizer.step()  # does the update

1.6 A simple classification network

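A complete classification workflow consists of: loading the data → showing sample data → defining the network → defining the loss and optimizer → training the network → testing the network. CIFAR10 is used as the example.

Loading the data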

import torch
import torchvision
import torchvision.transforms as transforms

# convert images to tensors in [0, 1], then normalize each channel to [-1, 1]
transform = transforms.Compose(
    [transforms.ToTensor(),
     transforms.Normalize((0.5, 0.5, 0.5), (0.5, 0.5, 0.5))])

trainset = torchvision.datasets.CIFAR10(root='./data', train=True,
                                        download=True, transform=transform)
trainloader = torch.utils.data.DataLoader(trainset, batch_size=4,
                                          shuffle=True, num_workers=2)

testset = torchvision.datasets.CIFAR10(root='./data', train=False,
                                       download=True, transform=transform)
testloader = torch.utils.data.DataLoader(testset, batch_size=4,
                                         shuffle=False, num_workers=2)

classes = ('plane', 'car', 'bird', 'cat',
           'deer', 'dog', 'frog', 'horse', 'ship', 'truck')

Showing sample data
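Before displaying anything, it helps to see what Normalize with mean 0.5 and standard deviation 0.5 does to pixel values, because the display code has to undo it with img / 2 + 0.5. The following is a minimal sketch on a random tensor standing in for an image; it reproduces the per-channel arithmetic by hand rather than calling torchvision, and the variable names are illustrative.

```python
import torch

img = torch.rand(3, 32, 32)        # stand-in for a ToTensor() image, values in [0, 1)
mean, std = 0.5, 0.5

normalized = (img - mean) / std    # same arithmetic as Normalize with 0.5 / 0.5
restored = normalized / 2 + 0.5    # the "unnormalize" step used for display

print(normalized.min().item() >= -1.0, normalized.max().item() <= 1.0)
print(torch.allclose(restored, img, atol=1e-6))
```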

import matplotlib.pyplot as plt
import numpy as np

# function to show an image
def imshow(img):
    img = img / 2 + 0.5  # unnormalize
    npimg = img.numpy()
    plt.imshow(np.transpose(npimg, (1, 2, 0)))  # channels-first -> channels-last
    plt.show()  # needed when running as a script rather than in a notebook

# get some random training images
dataiter = iter(trainloader)
images, labels = next(dataiter)

# show images
imshow(torchvision.utils.make_grid(images))
# print labels
print(' '.join('%5s' % classes[labels[j]] for j in range(4)))

Defining the network

The network is defined as a class that contains the architecture and the forward computation.

from torch.autograd import Variable
import torch.nn as nn
import torch.nn.functional as F

class Net(nn.Module):
    def __init__(self):
        super(Net, self).__init__()
        self.conv1 = nn.Conv2d(3, 6, 5)
        self.pool = nn.MaxPool2d(2, 2)
        self.conv2 = nn.Conv2d(6, 16, 5)
        self.fc1 = nn.Linear(16 * 5 * 5, 120)
        self.fc2 = nn.Linear(120, 84)
        self.fc3 = nn.Linear(84, 10)

    def forward(self, x):
        x = self.pool(F.relu(self.conv1(x)))
        x = self.pool(F.relu(self.conv2(x)))
        x = x.view(-1, 16 * 5 * 5)
        x = F.relu(self.fc1(x))
        x = F.relu(self.fc2(x))
        x = self.fc3(x)
        return x

net = Net()
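To see why the flattening step uses 16 * 5 * 5, one can push a dummy batch through the convolution and pooling layers and record the shape after each stage. The following is a minimal sketch, assuming a batch of 4 random 32x32 RGB images in place of real CIFAR10 data; the standalone layers mirror the ones in the class, and the shapes list is illustrative.

```python
import torch
import torch.nn as nn
import torch.nn.functional as F

conv1 = nn.Conv2d(3, 6, 5)
pool = nn.MaxPool2d(2, 2)
conv2 = nn.Conv2d(6, 16, 5)

x = torch.randn(4, 3, 32, 32)     # dummy batch: 4 RGB images of 32x32
shapes = [tuple(x.shape)]

x = pool(F.relu(conv1(x)))
shapes.append(tuple(x.shape))     # (4, 6, 14, 14)

x = pool(F.relu(conv2(x)))
shapes.append(tuple(x.shape))     # (4, 16, 5, 5)

x = x.view(-1, 16 * 5 * 5)        # flatten everything except the batch dimension
shapes.append(tuple(x.shape))     # (4, 400)

for s in shapes:
    print(s)
```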

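Inspecting the model

Sometimes you want to inspect a model and print the parameters (weights, bias) of particular layers. This can be done as follows:

params = net.state_dict()
for k, v in params.items():
    print(k)  # print the name of each entry in the state dict
print(params['conv1.weight'])  # print the weight of conv1
print(params['conv1.bias'])  # print the bias of conv1

Defining the loss function and optimizer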

import torch.optim as optim

criterion = nn.CrossEntropyLoss()
optimizer = optim.SGD(net.parameters(), lr=0.001, momentum=0.9)

Training the network

for epoch in range(2):  # loop over the dataset multiple times
    running_loss = 0.0
    for i, data in enumerate(trainloader, 0):
        # get the inputs
        inputs, labels = data

        # wrap them in Variable
        inputs, labels = Variable(inputs), Variable(labels)

        # zero the parameter gradients
        optimizer.zero_grad()

        # forward + backward + optimize
        outputs = net(inputs)
        loss = criterion(outputs, labels)
        loss.backward()
        optimizer.step()

        # print statistics
        running_loss += loss.item()  # loss.data[0] fails on 0-dim tensors in PyTorch >= 0.4
        if i % 2000 == 1999:  # print every 2000 mini-batches
            print('[%d, %5d] loss: %.3f' %
                  (epoch + 1, i + 1, running_loss / 2000))
            running_loss = 0.0

print('Finished Training')

Testing the network

dataiter = iter(testloader)
images, labels = next(dataiter)

# print images
imshow(torchvision.utils.make_grid(images))
print('GroundTruth: ', ' '.join('%5s' % classes[labels[j]] for j in range(4)))

outputs = net(Variable(images))

# prediction: index of the highest score for each image
_, predicted = torch.max(outputs.data, 1)
print('Predicted: ', ' '.join('%5s' % classes[predicted[j]]
                              for j in range(4)))
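The prediction step relies on torch.max with a dimension argument, which returns both the maximum values and their indices; the indices along dimension 1 are the predicted classes. The sketch below shows this on made-up scores and turns the predictions into an accuracy figure. The scores, labels, and the 3-class setup are hypothetical values chosen for illustration, not CIFAR10 results.

```python
import torch

# Hypothetical scores for 4 images over 3 classes (made-up numbers)
outputs = torch.tensor([[0.1, 2.0, -1.0],
                        [1.5, 0.2, 0.3],
                        [0.0, 0.1, 3.0],
                        [0.9, 0.8, 0.7]])
labels = torch.tensor([1, 0, 2, 1])

values, predicted = torch.max(outputs, 1)     # max value and its index per row
correct = (predicted == labels).sum().item()  # number of matching predictions
accuracy = correct / labels.size(0)

print(predicted.tolist())  # [1, 0, 2, 0]
print(accuracy)            # 0.75
```

The same counting, accumulated over every batch from the test loader, would give the accuracy on the whole test set.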
