引言:在学习Match查询之前,一定要先了解倒排序索引和Analysis分词【ElasticSearch系列05:倒排序索引与分词Analysis】,这样才能快乐的学习ik分词和Match query查询。

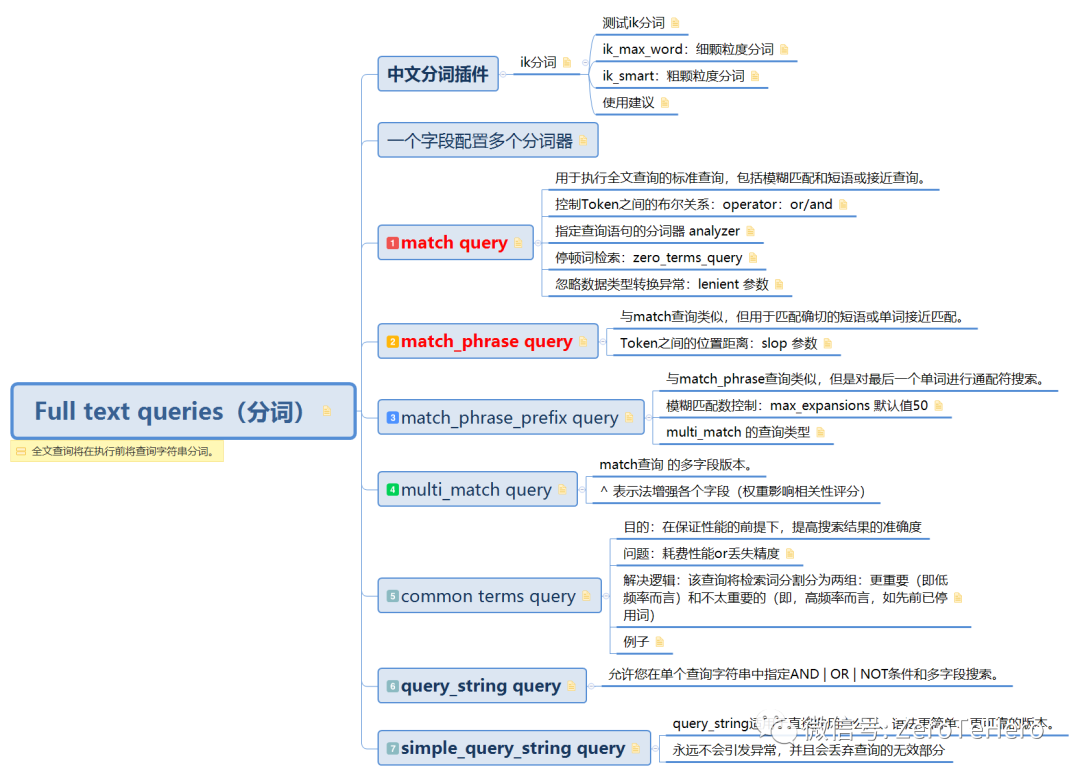

本文结构【开局一张图】

Full text queries 将在执行前将查询字符串分词。因为ES本身提供的分词器不太适合中文分词,所以在学习全文查询前,我们先简单了解下中文分词插件ik分词。

一、ik 分词

官网简介:The IK Analysis plugin integrates Lucene IK analyzer (http://code.google.com/p/ik-analyzer/) into elasticsearch, support customized dictionary.

Analyzer: ik_smart , ik_max_word , Tokenizer: ik_smart , ik_max_word

1.1 ik_max_word:细颗粒度分词

ik_max_word: 会将文本做最细粒度的拆分,比如会将“关注我系统学习ES”拆分为“关注,我,系统学,系统,学习,es”,会穷尽各种可能的组合,适合 Term Query;

# 测试分词效果

GET /_analyze

{

"text": ["关注我系统学习ES"],

"analyzer": "ik_max_word"

}# 效果

{

"tokens": [

{

"token": "关注",

"start_offset": 0,

"end_offset": 2,

"type": "CN_WORD",

"position": 0

},

{

"token": "我",

"start_offset": 2,

"end_offset": 3,

"type": "CN_CHAR",

"position": 1

},

{

"token": "系统学",

"start_offset": 3,

"end_offset": 6,

"type": "CN_WORD",

"position": 2

},

{

"token": "系统",

"start_offset": 3,

"end_offset": 5,

"type": "CN_WORD",

"position": 3

},

{

"token": "学习",

"start_offset": 5,

"end_offset": 7,

"type": "CN_WORD",

"position": 4

},

{

"token": "es",

"start_offset": 7,

"end_offset": 9,

"type": "ENGLISH",

"position": 5

}

]

}

2283

2283

被折叠的 条评论

为什么被折叠?

被折叠的 条评论

为什么被折叠?