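Logstash is an open-source tool for collecting and analyzing logs in a distributed environment. Kibana is also a free, open-source tool that helps aggregate, analyze, and search important log data through a friendly web interface; it serves as the log-analysis web UI for Logstash and Elasticsearch.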

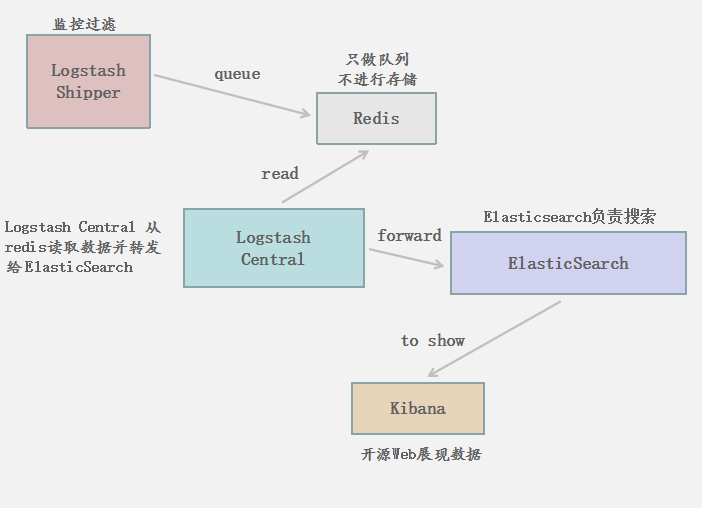

Here is what each component does:

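Redis: acts as a buffer here. The Logstash shipper forwards logs to Redis, which only queues them and does not store them, and Logstash central reads the data from Redis and forwards it to Elasticsearch. Redis is added to speed up the delivery of logs from the Logstash shipper to Logstash central, and to avoid data loss caused by events such as a sudden power failure.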
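Because Redis is used purely as a list-based queue here, the length of that list shows whether Logstash central is keeping up. A minimal sketch, assuming the Redis address, port, and list key used in the configuration files later in this post (10.1.0.100, 6379, `logstash`); `redis_backlog` and the `REDIS_CLI` override are hypothetical names introduced only for illustration:

```shell
#!/usr/bin/env bash
# Hypothetical helper (not part of Redis or Logstash): print how many events
# are waiting in the Redis list that the shipper pushes to and central pops from.
# REDIS_CLI may point at another redis-cli binary; defaults to the one on PATH.
REDIS_CLI="${REDIS_CLI:-redis-cli}"

redis_backlog() {
  local host="${1:-10.1.0.100}" port="${2:-6379}" key="${3:-logstash}"
  # LLEN returns the list length (0 if the key does not exist);
  # --raw prints the bare number instead of "(integer) N"
  "$REDIS_CLI" --raw -h "$host" -p "$port" LLEN "$key"
}
```

Running `redis_backlog` prints the current backlog. A number that keeps growing suggests events arrive faster than Logstash central consumes them, while a value that stays near 0 means the queue is being drained.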

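Elasticsearch: an open-source search engine framework that provides a distributed, multi-tenant full-text search engine with a RESTful web interface. It can also run as a multi-node cluster for better performance. Here it stores and indexes the events that Logstash central reads from Redis, and Kibana queries it to display them.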

Kibana: a polished web interface that presents the mined data visually as charts and similar views.

Here is an architecture diagram I drew, showing the workflow and the role of each component:

Packages used:

JDK, Elasticsearch, Logstash, Redis, Kibana

http://yunpan.cn/cuhTEuTiCBnpb (extraction code: fcc4)

Environment:

OS: CentOS 6.5

Kernel: 2.6.32-431.el6.x86_64

Memory: 4 GB

Logstash central: 10.1.0.100

Logstash shipper: 10.1.0.101

Software:

elasticsearch-1.7.4.tar.gz

jdk-7u79-linux-x64.gz

kibana-4.1.4-linux-x64.tar.gz

logstash-2.1.1.tar.gz

redis-3.0.6.tar.gz

Java environment and variable setup

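Unpack the JDK and rename its directory:

```shell
tar zxf jdk-7u79-linux-x64.gz -C /usr/local/
mv /usr/local/jdk1.7.0_79/ /usr/local/java
```

Set the environment variables by appending the following to /etc/profile: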

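```shell
JAVA_HOME=/usr/local/java
JAVA_BIN=$JAVA_HOME/bin
PATH=$PATH:$JAVA_BIN
CLASSPATH=$JAVA_HOME/lib/dt.jar:$JAVA_HOME/lib/tools.jar
export JAVA_HOME JAVA_BIN PATH CLASSPATH
```

Reload the profile so the variables take effect in the current shell:

```shell
source /etc/profile
```

Redis deployment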

Install the build dependencies:

```shell
yum -y install make gcc-c++
```

Unpack and compile:

```shell
tar zxf redis-3.0.6.tar.gz -C /usr/local/
cd /usr/local/redis-3.0.6
make MALLOC=libc
```

Create the log and data directories:

```shell
mkdir /usr/local/redis-3.0.6/logs
mkdir /usr/local/redis-3.0.6/data
```

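Configure Redis to run in the background, and set the listen address and port, AOF persistence, and the log level and location:

```shell
vim /usr/local/redis-3.0.6/redis.conf
```

```
daemonize yes
port 6379
bind 10.1.0.100
appendonly yes
loglevel notice
logfile "/usr/local/redis-3.0.6/logs/redislog"
dir /usr/local/redis-3.0.6/data
```

Fix the Redis startup warnings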

```shell
echo "vm.overcommit_memory = 1" >> /etc/sysctl.conf
echo 511 > /proc/sys/net/core/somaxconn
echo never > /sys/kernel/mm/transparent_hugepage/enabled
sysctl -p
```

Start Redis and test that it works

```shell
/usr/local/redis-3.0.6/src/redis-server /usr/local/redis-3.0.6/redis.conf
/usr/local/redis-3.0.6/src/redis-cli -h 10.1.0.100
```

```
10.1.0.100:6379> set x 1
OK
10.1.0.100:6379> get x
"1"
10.1.0.100:6379>
```

Logstash central configuration (server side)

Unpack:

```shell
tar zxf logstash-2.1.1.tar.gz -C /usr/local/
```

Create the log directory and the configuration directory:

```shell
mkdir -p /usr/local/logstash-2.1.1/logs
mkdir -p /usr/local/logstash-2.1.1/conf
```

Configure central.conf

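```shell
vim /usr/local/logstash-2.1.1/conf/central.conf
```

```
input {
    redis {
        host => "10.1.0.100"
        port => "6379"
        type => "channelui-ci1"
        data_type => "list"
        key => "logstash"
    }
    redis {
        host => "10.1.0.100"
        port => "6379"
        type => "cartui-ci1"
        data_type => "list"
        key => "logstash"
    }
    redis {
        host => "10.1.0.100"
        port => "6379"
        type => "bbsui-ci1"
        data_type => "list"
        key => "logstash"
    }
}

output {
    elasticsearch {
        hosts => "10.1.0.100:9200"
    }
}
```

Note that all three `redis` inputs read the same `logstash` list. Logstash does not overwrite a `type` that an event already carries, so each event keeps the `type` set by the shipper's `file` input; the `type` values here do not re-label events.

Start Logstash central and check its log for problems: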

```shell
/usr/local/logstash-2.1.1/bin/logstash agent --verbose --config /usr/local/logstash-2.1.1/conf/central.conf --log /usr/local/logstash-2.1.1/logs/logstash.log &
```

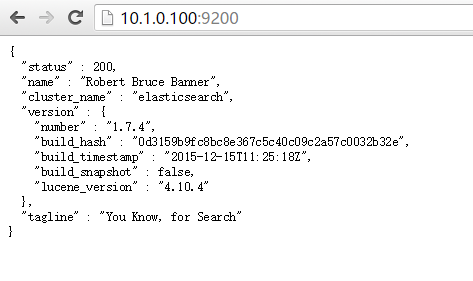
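Logstash 2.x can parse a configuration file and report syntax errors without starting the pipeline, using the `--configtest` flag. A minimal sketch that wraps this check before launching the agent, assuming the install path used above; `check_logstash_conf` and the `LOGSTASH_HOME` variable are hypothetical names:

```shell
#!/usr/bin/env bash
# Hypothetical helper: validate a Logstash config file without starting the pipeline.
# LOGSTASH_HOME defaults to the install path used in this post.
LOGSTASH_HOME="${LOGSTASH_HOME:-/usr/local/logstash-2.1.1}"

check_logstash_conf() {
  local conf="$1"
  if [ ! -f "$conf" ]; then
    echo "config file not found: $conf" >&2
    return 2
  fi
  # --configtest (Logstash 2.x) parses the file and exits non-zero on errors
  "$LOGSTASH_HOME/bin/logstash" -f "$conf" --configtest
}
```

For example, `check_logstash_conf /usr/local/logstash-2.1.1/conf/central.conf` catches a missing brace before the agent is started in the background, where the error would otherwise only show up in the log file.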

Elasticsearch configuration

```shell
tar zxf elasticsearch-1.7.4.tar.gz -C /usr/local/
```

Edit elasticsearch.yml and set the listen address and port:

```shell
vim /usr/local/elasticsearch-1.7.4/config/elasticsearch.yml
```

```yaml
network.bind_host: 10.1.0.100
http.port: 9200
```

Elasticsearch memory settings

```shell
vim /usr/local/elasticsearch-1.7.4/bin/elasticsearch.in.sh
```

```shell
if [ "x$ES_MIN_MEM" = "x" ]; then
    ES_MIN_MEM=4g
fi
if [ "x$ES_MAX_MEM" = "x" ]; then
    ES_MAX_MEM=4g
fi
if [ "x$ES_HEAP_SIZE" != "x" ]; then
    ES_MIN_MEM=$ES_HEAP_SIZE
    ES_MAX_MEM=$ES_HEAP_SIZE
fi
```
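The test machine has 4 GB of RAM and also runs Redis, Logstash, and Kibana, so a 4g heap leaves little for the OS and for the filesystem cache that Lucene relies on; Elastic's guidance is to give the heap no more than about half of physical RAM. Because the script above lets `ES_HEAP_SIZE` override both `ES_MIN_MEM` and `ES_MAX_MEM`, one option is to export that variable instead of editing the file. A minimal sketch, where `es_heap_suggestion` is a hypothetical helper that reads `MemTotal` from `/proc/meminfo`:

```shell
#!/usr/bin/env bash
# Hypothetical helper: suggest an ES_HEAP_SIZE equal to half of physical RAM,
# read from a meminfo-style file (default: /proc/meminfo).
es_heap_suggestion() {
  local meminfo="${1:-/proc/meminfo}" total_kb
  # MemTotal is reported in kB, e.g. "MemTotal:        3924724 kB"
  total_kb=$(awk '/^MemTotal:/ {print $2}' "$meminfo")
  if [ -z "$total_kb" ]; then
    echo "MemTotal not found in $meminfo" >&2
    return 1
  fi
  # half of RAM, converted from kB to MB: kB / 1024 / 2 = kB / 2048 (rounded down)
  echo "$(( total_kb / 2048 ))m"
}
```

Usage: `export ES_HEAP_SIZE=$(es_heap_suggestion)` in the shell that starts Elasticsearch. On a machine reporting exactly 4194304 kB this yields `2048m`.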

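Start Elasticsearch

Starting it right away fails with:

```
java.net.UnknownHostException: slave-master: slave-master: Name or service not known
```

So remember to add a mapping from the IP address to the hostname in /etc/hosts. The name must be the machine's actual hostname, as printed by `hostname`:

```
10.1.0.100 logstash-server
```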
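The name in the error message (`slave-master` above) is the machine's own hostname, which is why the /etc/hosts entry has to use that exact name. A minimal sketch of an idempotent way to add the entry; `ensure_hosts_entry` is a hypothetical helper that appends `IP NAME` only if no non-comment line already lists NAME:

```shell
#!/usr/bin/env bash
# Hypothetical helper: append "IP NAME" to a hosts file unless some
# non-comment line already lists NAME as one of its hostnames.
ensure_hosts_entry() {
  local hosts_file="$1" ip="$2" name="$3"
  # fields 2..NF of a hosts line are hostnames; compare them exactly
  if awk -v n="$name" '
       $1 !~ /^#/ { for (i = 2; i <= NF; i++) if ($i == n) found = 1 }
       END { exit (found ? 0 : 1) }' "$hosts_file"; then
    return 0   # already mapped, nothing to do
  fi
  printf '%s %s\n' "$ip" "$name" >> "$hosts_file"
}
```

Usage, as root: `ensure_hosts_entry /etc/hosts 10.1.0.100 "$(hostname)"`. Running it twice leaves a single entry.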

Then start Elasticsearch:

```shell
/usr/local/elasticsearch-1.7.4/bin/elasticsearch &
```

Note: if Kibana keeps printing messages like the following, delete the `.kibana` index (this also discards saved Kibana objects such as index patterns and dashboards):

```
{"name":"Kibana","hostname":"logstash-server","pid":2629,"level":30,"msg":"Elasticsearch is still initializing the kibana index... Trying again in 2.5 second.","time":"2015-11-26T18:10:38.597Z","v":0}
{"name":"Kibana","hostname":"logstash-server","pid":2629,"level":30,"msg":"Elasticsearch is still initializing the kibana index... Trying again in 2.5 second.","time":"2015-11-26T18:10:41.109Z","v":0}
{"name":"Kibana","hostname":"logstash-server","pid":2629,"level":30,"msg":"Elasticsearch is still initializing the kibana index... Trying again in 2.5 second.","time":"2015-11-26T18:10:43.626Z","v":0}
```

Delete it with:

```shell
curl -XDELETE 10.1.0.100:9200/.kibana
```

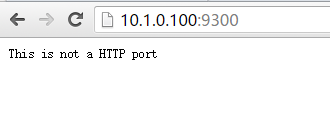

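Visit http://10.1.0.100:9200 to check that Elasticsearch responds. Port 9300 is Elasticsearch's binary transport port for node-to-node traffic, so it does not return a normal web page.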
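The HTTP port can also be checked from the shell, which is handy right after starting Elasticsearch in the background since it takes a few seconds to come up. A minimal sketch; `wait_for_es` is a hypothetical helper that polls the URL with curl until it returns HTTP 200 or the attempts run out (the defaults of 30 tries, 2 seconds apart, are arbitrary):

```shell
#!/usr/bin/env bash
# Hypothetical helper: poll an Elasticsearch HTTP endpoint until it answers
# with status 200, or give up after the given number of attempts.
wait_for_es() {
  local url="${1:-http://10.1.0.100:9200}" tries="${2:-30}" interval="${3:-2}"
  local i=0 code
  while [ "$i" -lt "$tries" ]; do
    # -s: no progress output, -o /dev/null: drop the body,
    # -w '%{http_code}': print only the status code ("000" if nothing answered);
    # "|| true" keeps a refused connection from aborting a "set -e" script
    code=$(curl -s -o /dev/null -w '%{http_code}' "$url") || true
    if [ "$code" = "200" ]; then
      return 0
    fi
    i=$((i + 1))
    sleep "$interval"
  done
  return 1
}
```

For example, `wait_for_es && curl http://10.1.0.100:9200/` waits for the node and then prints its JSON info (node name, cluster name, version).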

Deploy Kibana

Unpack:

```shell
tar zxf kibana-4.1.4-linux-x64.tar.gz -C /usr/local/
```

Edit the configuration:

```shell
vim /usr/local/kibana-4.1.4-linux-x64/config/kibana.yml
```

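```yaml
host: "10.1.0.100"
port: 5601
elasticsearch_url: "http://10.1.0.100:9200"
```

Kibana 4.1 names this setting `elasticsearch_url`; the dotted `elasticsearch.url` form belongs to Kibana 4.2 and later.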

Start Kibana:

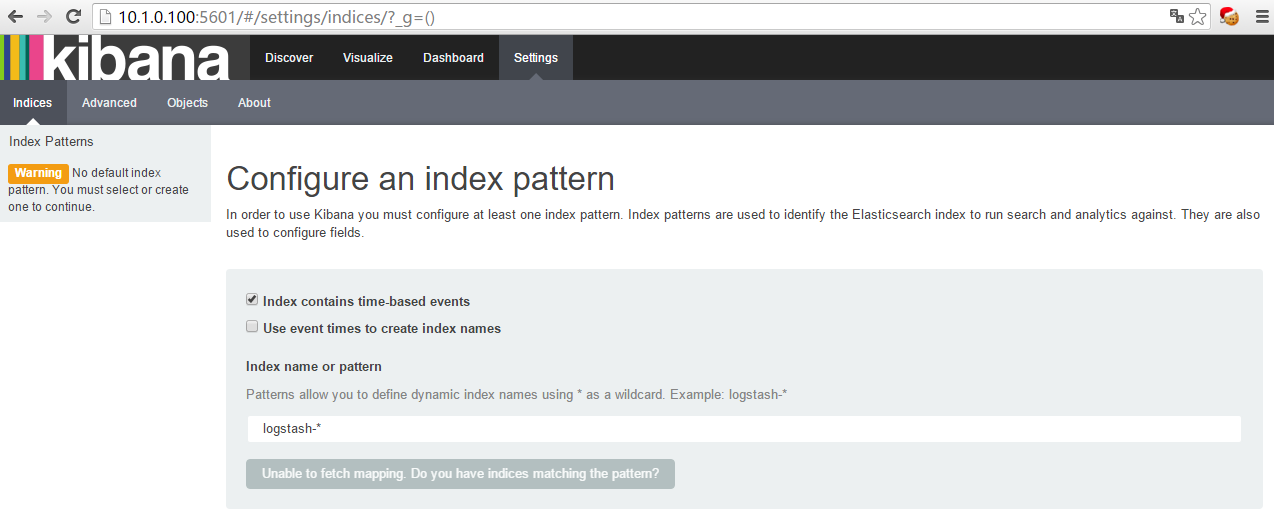

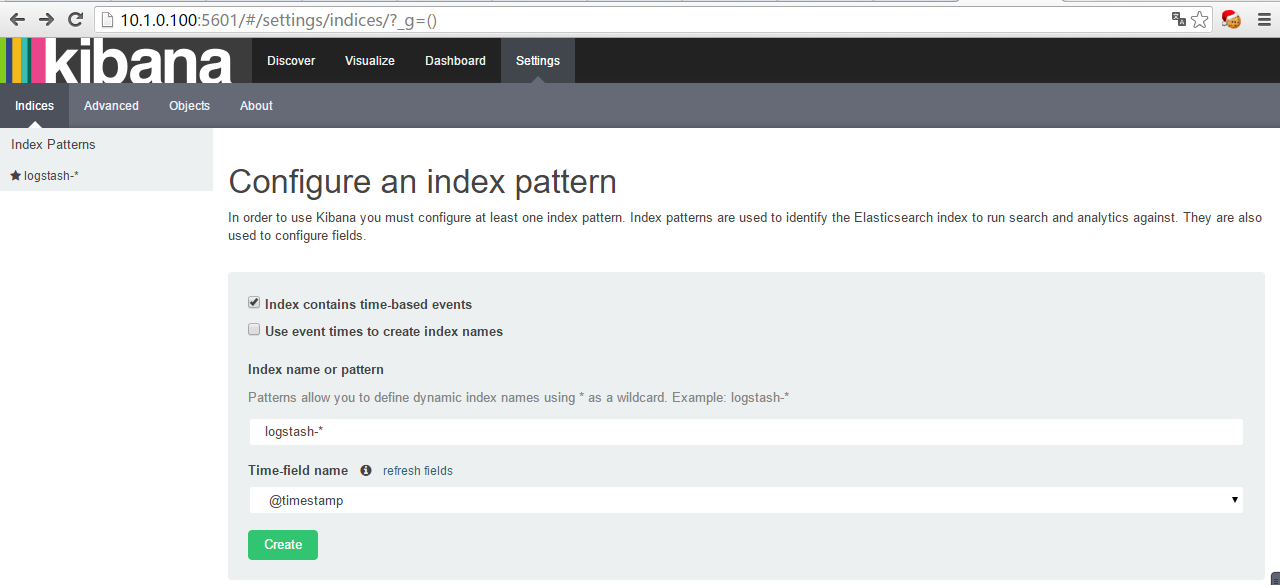

```shell
/usr/local/kibana-4.1.4-linux-x64/bin/kibana &
```

Open http://10.1.0.100:5601 in a browser.

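Kibana reports that it is loading and caching; the page becomes available shortly.

Once you are in, it asks you to create a default index pattern, but there is no Create button to click.

This happens because, after Kibana is installed, the button stays grey for as long as no new entries are written to the log files. At that point you just need to visit your website once so that it writes some log entries, and the button becomes available.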

(When I ran into this, I was using virtual machines and was stuck on it for hours. What a pain!)
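By default the Logstash elasticsearch output writes to daily indices named `logstash-YYYY.MM.dd`, and Kibana 4's default index pattern is `logstash-*`; until Elasticsearch contains at least one matching index, there is nothing for the pattern to match. A minimal sketch to check that from the shell, assuming the Elasticsearch address used in this post; `has_logstash_index` is a hypothetical helper built on the `_cat/indices` API:

```shell
#!/usr/bin/env bash
# Hypothetical helper: succeed if Elasticsearch holds at least one index whose
# name starts with "logstash-" (what Kibana's default pattern logstash-* needs).
has_logstash_index() {
  local es="${1:-http://10.1.0.100:9200}"
  # _cat/indices?h=index prints one index name per line;
  # -f makes curl print nothing on HTTP errors, so grep then fails too
  curl -sf "$es/_cat/indices?h=index" | grep -Eq '^[[:space:]]*logstash-'
}
```

If `has_logstash_index || echo "no logstash-* index yet"` prints the message, no events have reached Elasticsearch yet, and the problem lies upstream (shipper, Redis, or Logstash central) rather than in Kibana.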

We will come back to this once the client side is set up!

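Configure the Logstash client

Java environment and variable setup

Unpack the JDK and rename its directory:

```shell
tar zxf jdk-7u79-linux-x64.gz -C /usr/local/
mv /usr/local/jdk1.7.0_79/ /usr/local/java
```

Set the environment variables by appending the following to /etc/profile:

```shell
JAVA_HOME=/usr/local/java
JAVA_BIN=$JAVA_HOME/bin
PATH=$PATH:$JAVA_BIN
CLASSPATH=$JAVA_HOME/lib/dt.jar:$JAVA_HOME/lib/tools.jar
export JAVA_HOME JAVA_BIN PATH CLASSPATH
```

Reload the profile:

```shell
source /etc/profile
```

Logstash shipper configuration (client side)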

Unpack, then create the log and configuration directories:

```shell
tar zxf logstash-2.1.1.tar.gz -C /usr/local/
mkdir -p /usr/local/logstash-2.1.1/logs
mkdir -p /usr/local/logstash-2.1.1/conf
```

Edit the shipper.conf file:

```shell
vim /usr/local/logstash-2.1.1/conf/shipper.conf
```

```
input {
    file {
        type => "channelui-ci1"
        path => "/data/docker_logs/channelui-ci1/catalina.2015-12-28.log"
    }
    file {
        type => "cartui-ci1"
        path => "/data/docker_logs/cartui-ci1/catalina.2015-12-28.log"
    }
    file {
        type => "bbsui-ci1"
        path => "/data/docker_logs/bbsui-ci1/catalina.2015-12-28.log"
    }
}

output {
    redis {
        host => "10.1.0.100"
        data_type => "list"
        key => "logstash"
    }
}
```
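The paths above hard-code one day's file (`catalina.2015-12-28.log`), so once Tomcat rolls over to the next daily log the shipper no longer sees new lines. The file input's `path` also accepts glob patterns. A minimal sketch that writes an equivalent shipper.conf using `catalina.*.log`; `write_shipper_conf` is a hypothetical helper, and where the output file goes is up to you:

```shell
#!/usr/bin/env bash
# Hypothetical helper: write a shipper.conf variant whose file inputs use a
# glob instead of a fixed date. Args: output path, optional Redis host.
write_shipper_conf() {
  local out="$1" redis_host="${2:-10.1.0.100}" app
  {
    echo 'input {'
    for app in channelui-ci1 cartui-ci1 bbsui-ci1; do
      # one file input per application; the glob matches every daily catalina log
      printf '  file {\n    type => "%s"\n    path => "/data/docker_logs/%s/catalina.*.log"\n  }\n' "$app" "$app"
    done
    echo '}'
    printf 'output {\n  redis {\n    host => "%s"\n    data_type => "list"\n    key => "logstash"\n  }\n}\n' "$redis_host"
  } > "$out"
}
```

The generated file can be checked with `bin/logstash -f <file> --configtest` before it replaces the original shipper.conf.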

Start the Logstash shipper and check its log for problems:

```shell
/usr/local/logstash-2.1.1/bin/logstash agent --verbose --config /usr/local/logstash-2.1.1/conf/shipper.conf --log /usr/local/logstash-2.1.1/logs/logstash.log &
```

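OK, at this point the basic environment is more or less in place, but http://10.1.0.100:5601 still shows the same page as before. Changing one of the log files monitored on logstash-client makes the index pattern creatable. The reason is that the Logstash file input treats files as live streams and by default starts reading at the end (`start_position => "end"`), so lines already in the monitored files are not shipped; only newly appended lines are.

On logstash-client:

```shell
cat localhost.2015-12-21.log >> catalina.2015-12-28.log
```

Then open http://10.1.0.100:5601 again, and everything works!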

From then on, newly generated log entries also show up in the charts.
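Appending a whole old log works, but since the shipper only needs one new line to produce an event, a single timestamped line is enough for a quick end-to-end test. A minimal sketch; `emit_test_event` is a hypothetical helper and the default message text is arbitrary:

```shell
#!/usr/bin/env bash
# Hypothetical helper: append one timestamped line to a watched log file so
# the Logstash file input has a new line to ship.
emit_test_event() {
  local log="$1" msg="${2:-elk pipeline test}"
  printf '%s %s\n' "$(date '+%Y-%m-%d %H:%M:%S')" "$msg" >> "$log"
}
```

For example, after `emit_test_event /data/docker_logs/channelui-ci1/catalina.2015-12-28.log`, the line should show up in Kibana shortly afterwards as an event of type `channelui-ci1`.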
