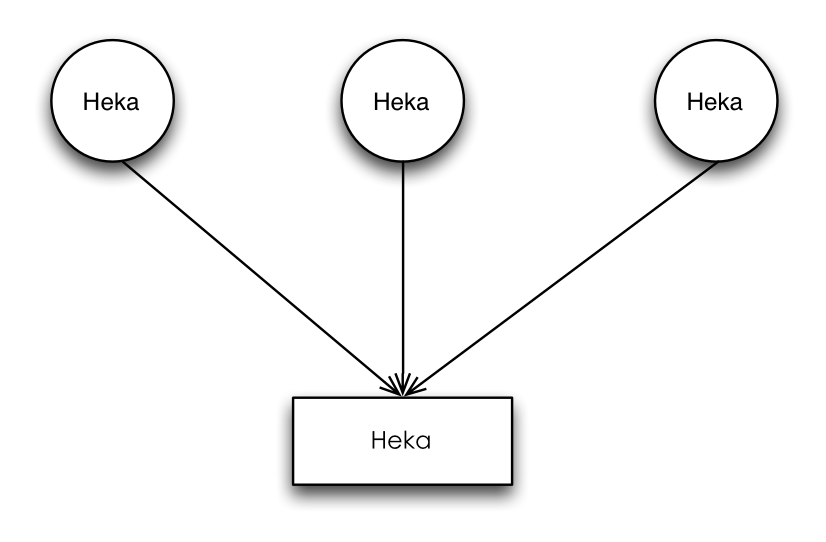

配置架构:

a. Heka’s Agent/Aggregator架构

b:以一台agent为例进行说明,agent1配置文件如下:

[NginxLogInput]

type = "LogstreamerInput"

log_directory = "/usr/local/openresty/nginx/logs/"

file_match = 'access\.log'

decoder = "NginxLogDecoder"

hostname = "ID:XM_1_1"

[NginxLogDecoder]

type = "SandboxDecoder"

filename = "lua_decoders/nginx_access.lua"

[NginxLogDecoder.config]

log_format = '$remote_addr - $remote_user [$time_local] "$request" $status $body_bytes_sent "$http_referer" "$http_user_agent"'

type = "nginx-access"

[ProtobufEncoder]

[NgxLogOutput]

type = "HttpOutput"

message_matcher = "TRUE"

address = "http://127.0.0.1:10000"

method = "POST"

encoder = "ProtobufEncoder"

# [NgxLogOutput.headers]

# content-type = "application/octet-stream"

# [NgxLogOutput]

# type = "LogOutput"

# message_matcher = "TRUE"

# encoder = "ProtobufEncoder"aggregator配置如下:

[LogInput]

type = "HttpListenInput"

#parser_type = "message.proto"

address = "0.0.0.0:10000"

decoder = "ProtobufDecoder"

[ProtobufDecoder]

[ESJsonEncoder]

index = "%{Type}-%{2006.01.02}"

es_index_from_timestamp = true

type_name = "%{Type}"

[PayloadEncoder]

[LogOutput]

type = "LogOutput"

message_matcher = "TRUE"

encoder = "ESJsonEncoder" 2. 问题描述:通过以上配置以后本应该可以将nginx log文件中数据发送到aggregator,并显示出来,但实际上并未显示;

3. 解决方法:

a. 修改protobuf.go中的Decode接口:

if err = proto.Unmarshal([]byte(*pack.Message.Payload), pack.Message); err == nil {

// fmt.Println("ProtobufDecoder:", string(pack.MsgBytes))

//fmt.Println("ProtobufDecoder", pack.Message.Fields)

packs = []*PipelinePack{pack}

} else {

atomic.AddInt64(&p.processMessageFailures, 1)

}通过以上代码可以看出我们是将Unmarshal接口中的第一个参数pack.MsgBytes修改为pack.Message.Payload这样既可将agent端发送的数据在aggregator正确解析,但因pack.Message.Payload是string类型,不太建议这种修改方式;

b 修改http_listen_input.go:

pack.Message.SetSeverity(int32(6))

if hli.conf.UnescapeBody {

unEscapedBody, _ := url.QueryUnescape(string(body))

pack.Message.SetPayload(unEscapedBody)

} else {

pack.Message.SetPayload(string(body))

}

pack.MsgBytes = body //添加这个赋值这样通过修改后,在protobuf Decode时直接将pack.MsgBytes 进行Unmarshal即可;

6897

6897

被折叠的 条评论

为什么被折叠?

被折叠的 条评论

为什么被折叠?