public class kafkaProducer extends Thread{

private String topic;

public kafkaProducer(String topic){

super();

this.topic = topic;

}

@Override

public void run() {

Producer producer = createProducer();

int i=0;

while(true){

i++;

String string = "hello"+i;

producer.send(new KeyedMessage<Integer, String>(topic,string));

if(i==100){

break;

}

try {

TimeUnit.SECONDS.sleep(1);

} catch (InterruptedException e) {

e.printStackTrace();

}

}

}

private Producer createProducer() {

Properties properties = new Properties();

properties.put("serializer.class", StringEncoder.class.getName());

properties.put("metadata.broker.list", "ip1:9092,ip2:9092,ip3:9092");

return new Producer<Integer, String>(new ProducerConfig(properties));

}

public static void main(String[] args) {

new kafkaProducer("user11").start();

}

} 发现其只向topic:user11中的某一个partiton中写数据。后来查了一下相关的参数:partitioner.class

partitioner.class

# 分区的策略

# 默认为kafka.producer.DefaultPartitioner,取模

partitioner.class = kafka.producer.DefaultPartitioner在上面的程序中,我在producer中没有定义分区策略,也就是说程序采用默认的kafka.producer.DefaultPartitioner,来看看源码中是怎么定义的:

class DefaultPartitioner(props: VerifiableProperties = null) extends Partitioner {

private val random = new java.util.Random

def partition(key: Any, numPartitions: Int): Int = {

Utils.abs(key.hashCode) % numPartitions

}

}其核心思想就是对每个消息的key的hash值对partition数取模得到。再来看看我的程序中有这么一段:

producer.send(new KeyedMessage<Integer, String>(topic,string))来看看keyMessage:

case class KeyedMessage[K, V](val topic: String, val key: K, val partKey: Any, val message: V) {

if(topic == null)

throw new IllegalArgumentException("Topic cannot be null.")

def this(topic: String, message: V) = this(topic, null.asInstanceOf[K], null, message)

def this(topic: String, key: K, message: V) = this(topic, key, key, message)

def partitionKey = {

if(partKey != null)

partKey

else if(hasKey)

key

else

null

}

def hasKey = key != null

}由于上面生产者代码中没有传入key,所以程序调用:

def this(topic: String, message: V) = this(topic, null.asInstanceOf[K], null, message)这里的key值可以为空,在这种情况下, kafka会将这个消息发送到哪个分区上呢?依据Kafka官方的文档, 默认的分区类会随机挑选一个分区。这里的随机是指在参数"topic.metadata.refresh.ms"刷新后随机选择一个, 这个时间段内总是使用唯一的分区。 默认情况下每十分钟才可能重新选择一个新的分区。

private def getPartition(topic: String, key: Any, topicPartitionList: Seq[PartitionAndLeader]): Int = {

val numPartitions = topicPartitionList.size

if(numPartitions <= 0)

throw new UnknownTopicOrPartitionException("Topic " + topic + " doesn't exist")

val partition =

if(key == null) {

// If the key is null, we don't really need a partitioner

// So we look up in the send partition cache for the topic to decide the target partition

val id = sendPartitionPerTopicCache.get(topic)

id match {

case Some(partitionId) =>

// directly return the partitionId without checking availability of the leader,

// since we want to postpone the failure until the send operation anyways

partitionId

case None =>

val availablePartitions = topicPartitionList.filter(_.leaderBrokerIdOpt.isDefined)

if (availablePartitions.isEmpty)

throw new LeaderNotAvailableException("No leader for any partition in topic " + topic)

val index = Utils.abs(Random.nextInt) % availablePartitions.size

val partitionId = availablePartitions(index).partitionId

sendPartitionPerTopicCache.put(topic, partitionId)

partitionId

}

} else

partitioner.partition(key, numPartitions)

if(partition < 0 || partition >= numPartitions)

throw new UnknownTopicOrPartitionException("Invalid partition id: " + partition + " for topic " + topic +

"; Valid values are in the inclusive range of [0, " + (numPartitions-1) + "]")

trace("Assigning message of topic %s and key %s to a selected partition %d".format(topic, if (key == null) "[none]" else key.toString, partition))

partition

}如果key为null, 它会从sendPartitionPerTopicCache查选缓存的分区, 如果没有,随机选择一个分区,否则就用缓存的分区。

LinkedIn工程师Guozhang Wang在邮件列表中解释了这一问题,

最初kafka是按照大部分用户理解的那样每次都随机选择一个分区, 后来改成了定期选择一个分区, 这是为了减少服务器段socket的数量。不过这的确很误导用户,据称0.8.2版本后又改回了每次随机选取。但是我查看0.8.2的代码还没看到改动。

所以,如果有可能,还是为KeyedMessage设置一个key值吧。比如:

producer.send(new KeyedMessage<String, String>(topic,String.valueOf(i),string)); 自定义partitioner.class

如下所示为自定义的分区函数,分区函数实现了Partitioner接口

public class PersonalPartition implements Partitioner{

public PersonalPartition(VerifiableProperties properties){

}

public int partition(Object arg0, int arg1) {

if(arg0==null){

return 0;

}

else{

return 1;

}

}

}然后修改配置即可:

properties.put("partitioner.class", "com.xx.kafka.PersonalPartition"); 向指定的partition写入数据

当然,也可以向topic中指定的partition中写数据,如下代码为向”user11”中partition 1中写入数据:

public class kafkaProducer extends Thread{

private String topic;

public kafkaProducer(String topic){

super();

this.topic = topic;

}

@Override

public void run() {

KafkaProducer producer = createProducer();

int i=0;

while(true){

i++;

String string = "hello"+i;

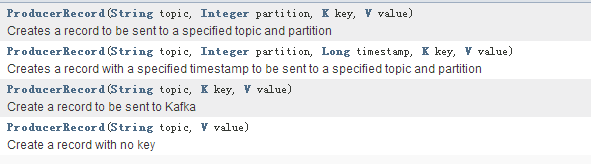

producer.send(new ProducerRecord(topic,1,null,string.getBytes()));

if(i==10000){

break;

}

try {

TimeUnit.SECONDS.sleep(1);

} catch (InterruptedException e) {

e.printStackTrace();

}

}

}

private KafkaProducer createProducer() {

Properties properties = new Properties();

properties.put("serializer.class", StringEncoder.class.getName());

properties.put("metadata.broker.list", "ip1:9092,ip2:9092,ip3:9092");

return new KafkaProducer(properties);

}

}

832

832

被折叠的 条评论

为什么被折叠?

被折叠的 条评论

为什么被折叠?