Without further ado, let's get straight to the practical stuff!

Solution

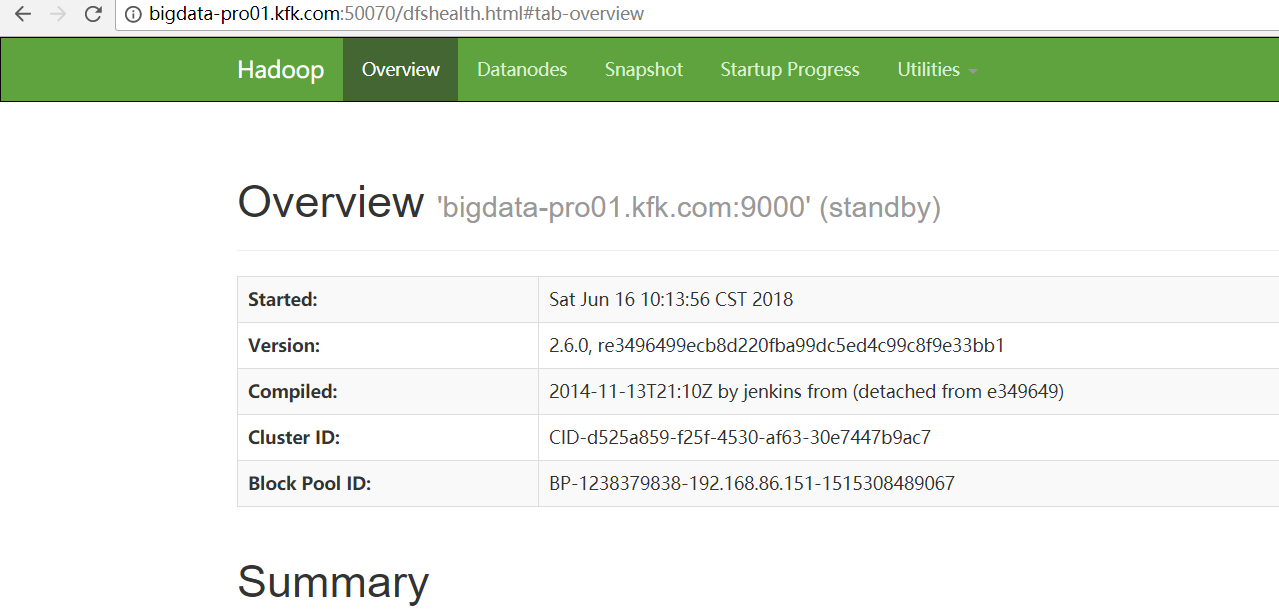

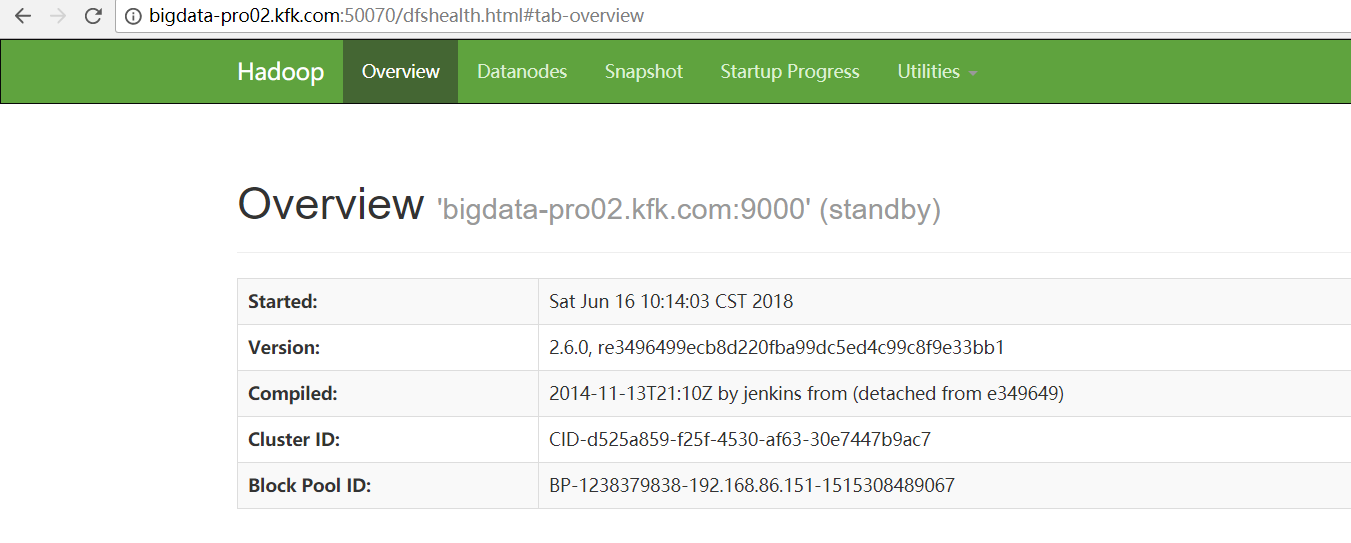

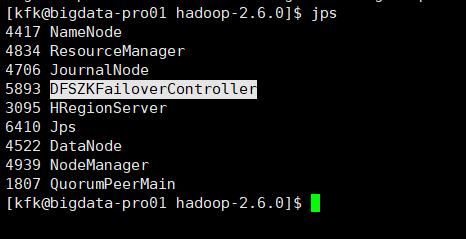

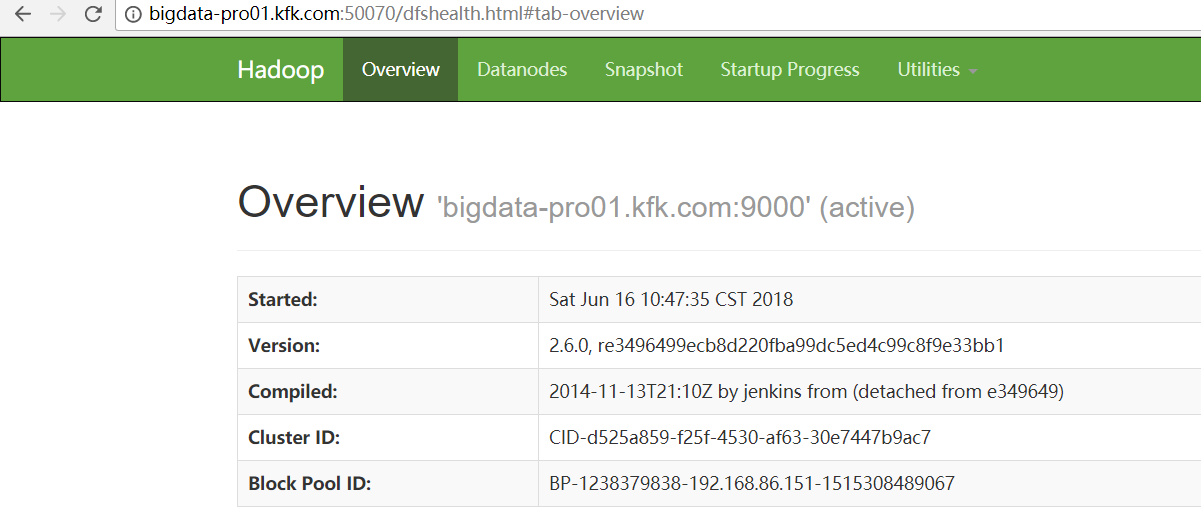

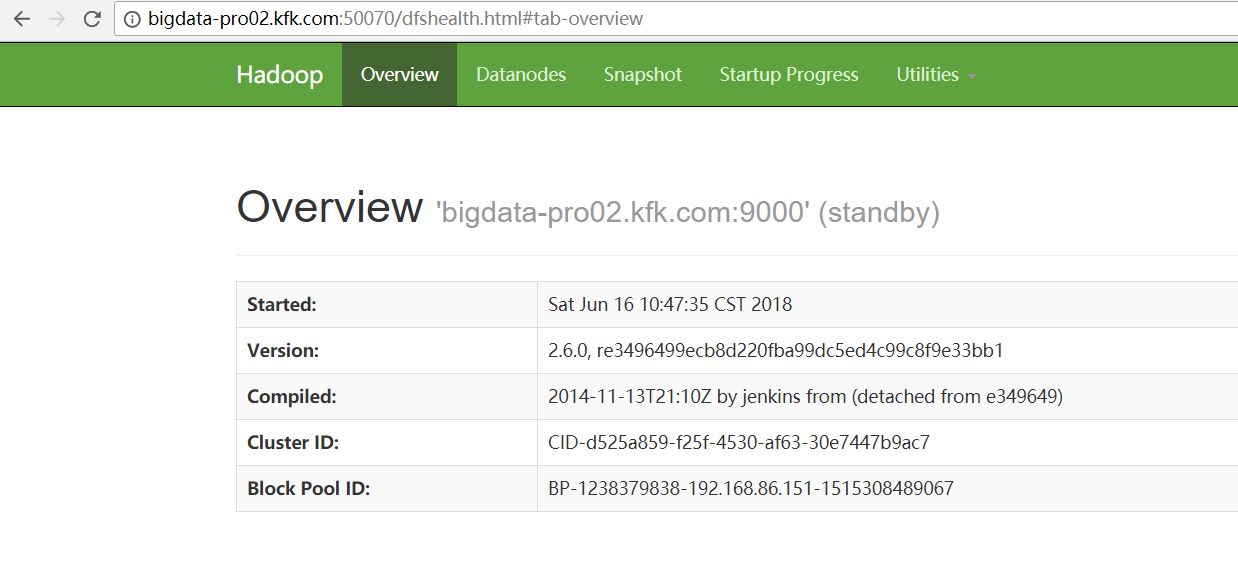

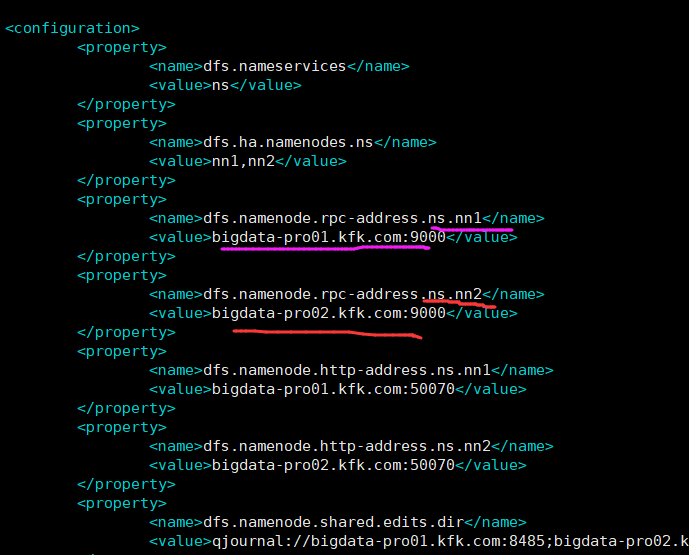

This came up on my Hadoop HA cluster, shown below:

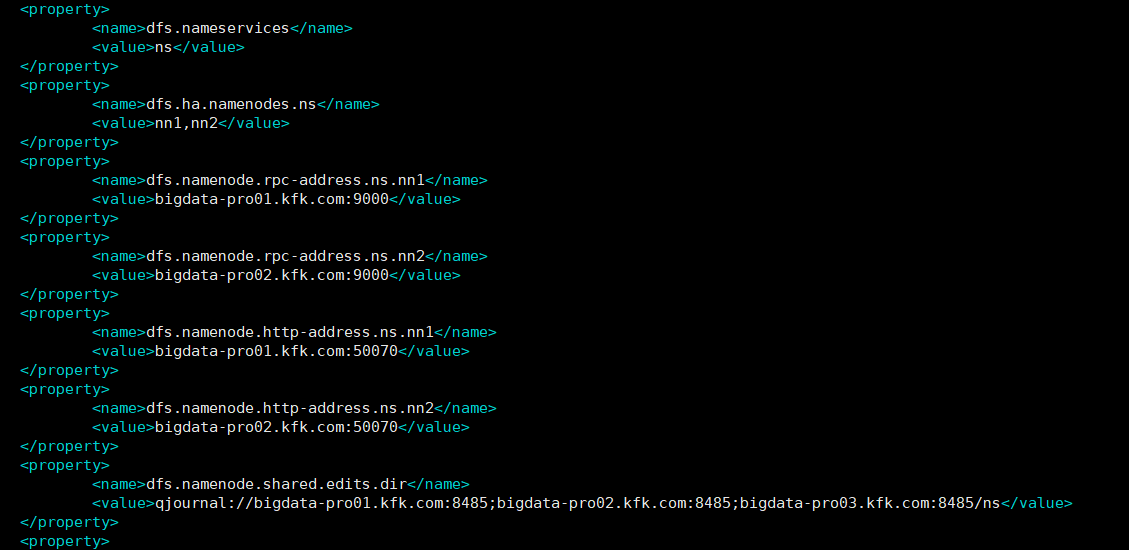

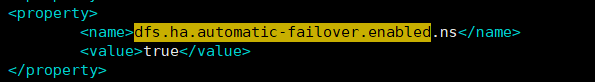

1. First, add the following property to hdfs-site.xml. Its default value is false:

```xml
<property>
  <name>dfs.ha.automatic-failover.enabled.ns</name>
  <value>true</value>
</property>
```

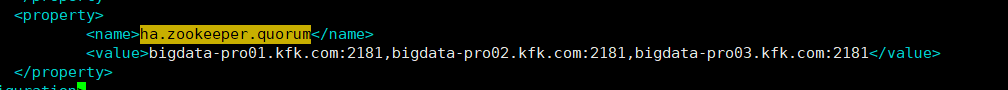

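2. Add the following property (ha.zookeeper.quorum) to core-site.xml. Its value is the list of ZooKeeper server addresses (host:port pairs) that the ZKFC uses.

In an HA or HDFS Federation deployment, these properties can be set per nameservice by suffixing them with the NameServiceID. An example is dfs.ha.automatic-failover.enabled.mycluster. This is why the property in step 1 ends in .ns: ns is this cluster's NameServiceID. Besides these two, a few other properties affect automatic failover, such as ha.zookeeper.session-timeout.ms. Most installations do not need to change them.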
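The sketch below makes the suffix rule concrete. It prints both properties for a given NameServiceID and ZooKeeper quorum. Some details are assumptions:

- render_ha_props is a hypothetical helper, not part of Hadoop.
- The nameservice ns and the three quorum hosts are taken from the formatZK log further down in this post.
- ha.zookeeper.quorum is the standard Hadoop key for the ZooKeeper address list.

Paste the first property into hdfs-site.xml and the second into core-site.xml.

```bash
#!/usr/bin/env bash
# Sketch: print the automatic-failover properties for one nameservice.
# render_ha_props is a hypothetical helper, not part of Hadoop.
#   $1 = NameServiceID (e.g. "ns")
#   $2 = ZooKeeper quorum as a comma-separated host:port list
render_ha_props() {
  local nsid="$1" quorum="$2"
  cat <<EOF
<!-- hdfs-site.xml -->
<property>
  <name>dfs.ha.automatic-failover.enabled.${nsid}</name>
  <value>true</value>
</property>
<!-- core-site.xml -->
<property>
  <name>ha.zookeeper.quorum</name>
  <value>${quorum}</value>
</property>
EOF
}

# Values from this post's cluster (see the formatZK log).
render_ha_props ns "bigdata-pro01.kfk.com:2181,bigdata-pro02.kfk.com:2181,bigdata-pro03.kfk.com:2181"
```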

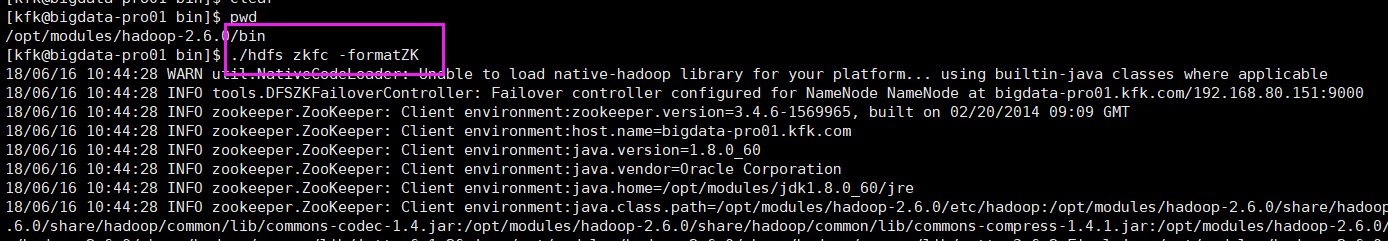

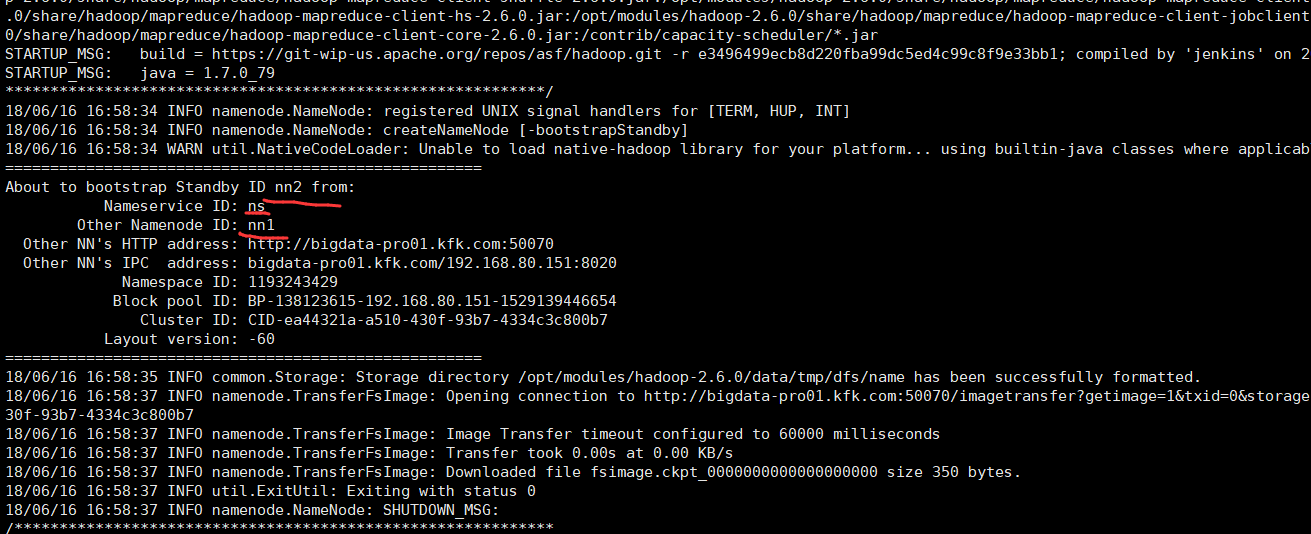

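After adding the properties above, the next step is to initialize the required state in ZooKeeper. Run the command hdfs zkfc -formatZK on either NameNode; it creates a znode in ZooKeeper.

Change to the bin directory of the Hadoop installation, where the hdfs script lives, and run the command there. That should fix the problem.

Note: before doing this, make sure the ZooKeeper process is already running on every machine.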
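Before formatting, you can check that each ZooKeeper server in the quorum accepts TCP connections. This is a minimal sketch that assumes bash (for its /dev/tcp pseudo-device) and the coreutils timeout command. The helper names zk_endpoints and check_zk_quorum are hypothetical. An open port only shows that the server is listening, not that the quorum is healthy.

```bash
#!/usr/bin/env bash
# Split a quorum string like "h1:2181,h2:2181" into one "host port" pair per line.
# zk_endpoints is a hypothetical helper name.
zk_endpoints() {
  local quorum="$1" entry
  local IFS=','
  for entry in $quorum; do
    echo "${entry%:*} ${entry##*:}"
  done
}

# Try a TCP connection to every quorum member (3 s timeout each).
# Returns non-zero if any server is unreachable. Hypothetical helper name.
check_zk_quorum() {
  local host port failed=0
  while read -r host port; do
    if timeout 3 bash -c "exec 3<>/dev/tcp/${host}/${port}" 2>/dev/null; then
      echo "OK   ${host}:${port}"
    else
      echo "DOWN ${host}:${port}"
      failed=1
    fi
  done < <(zk_endpoints "$1")
  return "$failed"
}
```

Usage with this post's hosts: check_zk_quorum "bigdata-pro01.kfk.com:2181,bigdata-pro02.kfk.com:2181,bigdata-pro03.kfk.com:2181". Running ZooKeeper's own bin/zkServer.sh status on each host is the more thorough check.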

```text
[kfk@bigdata-pro01 bin]$ pwd
/opt/modules/hadoop-2.6.0/bin
[kfk@bigdata-pro01 bin]$ ./hdfs zkfc -formatZK
```

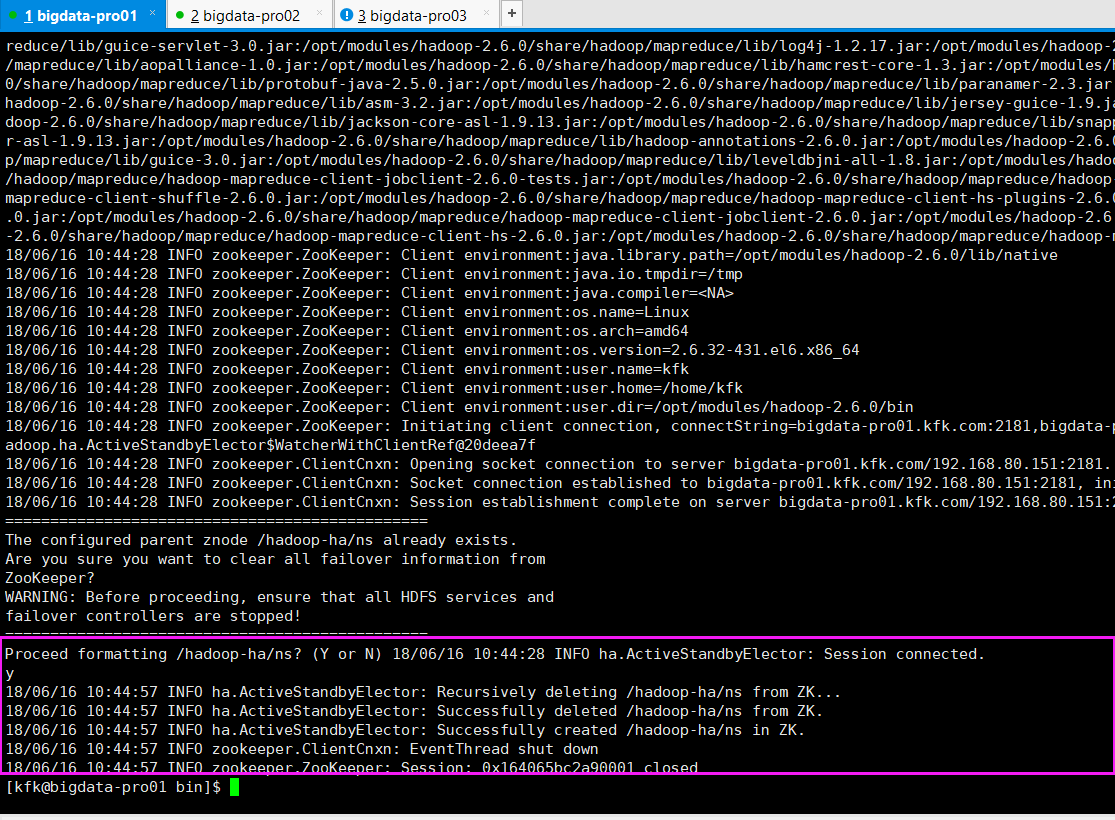

```text
18/06/16 10:44:28 INFO zookeeper.ZooKeeper: Initiating client connection, connectString=bigdata-pro01.kfk.com:2181,bigdata-pro02.kfk.com:2181,bigdata-pro03.kfk.com:2181 sessionTimeout=5000 watcher=org.apache.hadoop.ha.ActiveStandbyElector$WatcherWithClientRef@20deea7f
18/06/16 10:44:28 INFO zookeeper.ClientCnxn: Opening socket connection to server bigdata-pro01.kfk.com/192.168.80.151:2181. Will not attempt to authenticate using SASL (unknown error)
18/06/16 10:44:28 INFO zookeeper.ClientCnxn: Socket connection established to bigdata-pro01.kfk.com/192.168.80.151:2181, initiating session
18/06/16 10:44:28 INFO zookeeper.ClientCnxn: Session establishment complete on server bigdata-pro01.kfk.com/192.168.80.151:2181, sessionid = 0x164065bc2a90001, negotiated timeout = 5000
===============================================
The configured parent znode /hadoop-ha/ns already exists.
Are you sure you want to clear all failover information from ZooKeeper?
WARNING: Before proceeding, ensure that all HDFS services and failover controllers are stopped!
===============================================
Proceed formatting /hadoop-ha/ns? (Y or N) 18/06/16 10:44:28 INFO ha.ActiveStandbyElector: Session connected.
y
18/06/16 10:44:57 INFO ha.ActiveStandbyElector: Recursively deleting /hadoop-ha/ns from ZK...
18/06/16 10:44:57 INFO ha.ActiveStandbyElector: Successfully deleted /hadoop-ha/ns from ZK.
18/06/16 10:44:57 INFO ha.ActiveStandbyElector: Successfully created /hadoop-ha/ns in ZK.
18/06/16 10:44:57 INFO zookeeper.ClientCnxn: EventThread shut down
18/06/16 10:44:57 INFO zookeeper.ZooKeeper: Session: 0x164065bc2a90001 closed
[kfk@bigdata-pro01 bin]$
```
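The prompt in the log warns that all HDFS services and failover controllers must be stopped before formatting. The sketch below is a minimal local pre-check built on jps, which prints "<pid> <MainClass>" for each JVM. It assumes the usual Hadoop 2.x process names: NameNode, DataNode, JournalNode, and DFSZKFailoverController for the ZKFC. The helper names are hypothetical, and the check only covers the host it runs on.

```bash
#!/usr/bin/env bash
# Read `jps` output on stdin; print any HDFS daemons / ZKFCs found and
# exit 0 if at least one is running, 1 otherwise. Hypothetical helper name.
hdfs_daemons_running() {
  awk '$2 ~ /^(NameNode|DataNode|JournalNode|DFSZKFailoverController)$/ { print $2; found = 1 }
       END { exit (found ? 0 : 1) }'
}

# Refuse to proceed while HDFS daemons are still up on this host.
# Run it on every NameNode host before "hdfs zkfc -formatZK".
preflight_format_zk() {
  if jps | hdfs_daemons_running; then
    echo "Stop the daemons listed above on this host before formatting ZooKeeper." >&2
    return 1
  fi
  echo "No HDFS daemons running on this host."
}
```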

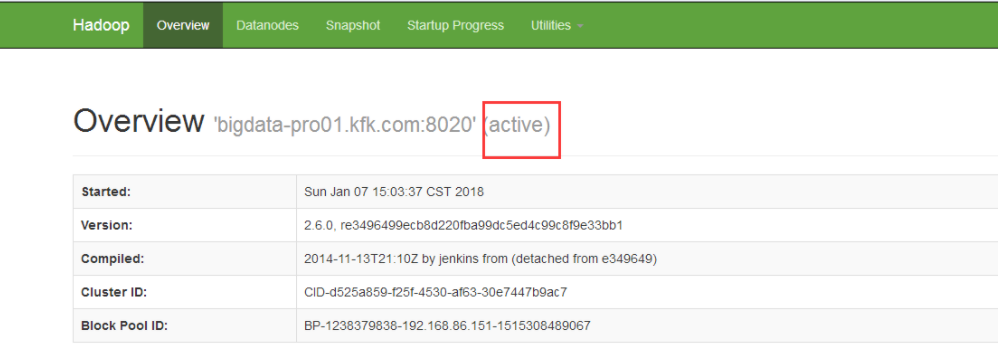

Start and test

1. First, stop the Hadoop and ZooKeeper processes.

2. Start the ZooKeeper processes.

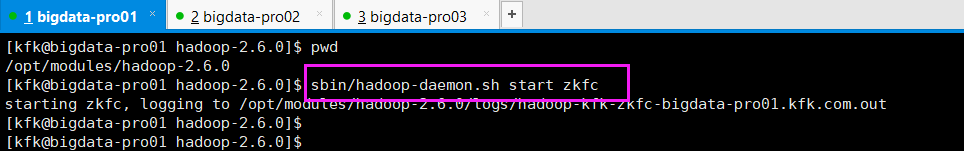

3. Start the zkfc process:

```text
[kfk@bigdata-pro01 hadoop-2.6.0]$ pwd
/opt/modules/hadoop-2.6.0
[kfk@bigdata-pro01 hadoop-2.6.0]$ sbin/hadoop-daemon.sh start zkfc
starting zkfc, logging to /opt/modules/hadoop-2.6.0/logs/hadoop-kfk-zkfc-bigdata-pro01.kfk.com.out
```

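4. Go to the sbin directory of the Hadoop installation and run start-dfs.sh to start the NameNodes. Run it on a node that is configured as a NameNode host.

Alternatively, simply run sbin/start-all.sh. In Hadoop 2.x this script is deprecated, and it also starts YARN.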
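Steps 1 to 4 can be scripted from one admin host. This is a sketch with hypothetical names (run_on, restart_ha). It makes the following assumptions, which you should adjust to your cluster:

- ZooKeeper runs on all three bigdata-pro0* hosts and is installed under a placeholder ZK_HOME path.
- The NameNodes and their ZKFCs run on bigdata-pro01 and bigdata-pro02.
- Passwordless ssh is set up between the hosts.

Set DRY_RUN=1 to print the commands instead of executing them.

```bash
#!/usr/bin/env bash
# Sketch of the restart order in steps 1-4, driven over ssh.
# ASSUMPTIONS (hypothetical): host roles below, ZK_HOME path, passwordless ssh.
HADOOP_HOME="${HADOOP_HOME:-/opt/modules/hadoop-2.6.0}"
ZK_HOME="${ZK_HOME:-/opt/modules/zookeeper}"   # hypothetical install path
ZK_HOSTS=(bigdata-pro01.kfk.com bigdata-pro02.kfk.com bigdata-pro03.kfk.com)
NN_HOSTS=(bigdata-pro01.kfk.com bigdata-pro02.kfk.com)

# Run a command string on a remote host, or just print it when DRY_RUN=1.
run_on() {
  local host="$1"; shift
  if [[ "${DRY_RUN:-0}" == 1 ]]; then
    echo "[$host] $*"
  else
    ssh "$host" "$*"
  fi
}

restart_ha() {
  local h
  # 1. Stop HDFS first, then ZooKeeper.
  run_on "${NN_HOSTS[0]}" "$HADOOP_HOME/sbin/stop-dfs.sh"
  for h in "${ZK_HOSTS[@]}"; do run_on "$h" "$ZK_HOME/bin/zkServer.sh stop"; done
  # 2. Start ZooKeeper on every quorum member.
  for h in "${ZK_HOSTS[@]}"; do run_on "$h" "$ZK_HOME/bin/zkServer.sh start"; done
  # 3. Start a ZKFC on each NameNode host.
  for h in "${NN_HOSTS[@]}"; do run_on "$h" "$HADOOP_HOME/sbin/hadoop-daemon.sh start zkfc"; done
  # 4. Start HDFS from a NameNode host.
  run_on "${NN_HOSTS[0]}" "$HADOOP_HOME/sbin/start-dfs.sh"
}
```

In Hadoop 2.x, start-dfs.sh may also try to start the ZKFCs when it sees automatic failover enabled. In that case, step 4 just reports that zkfc is already running.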

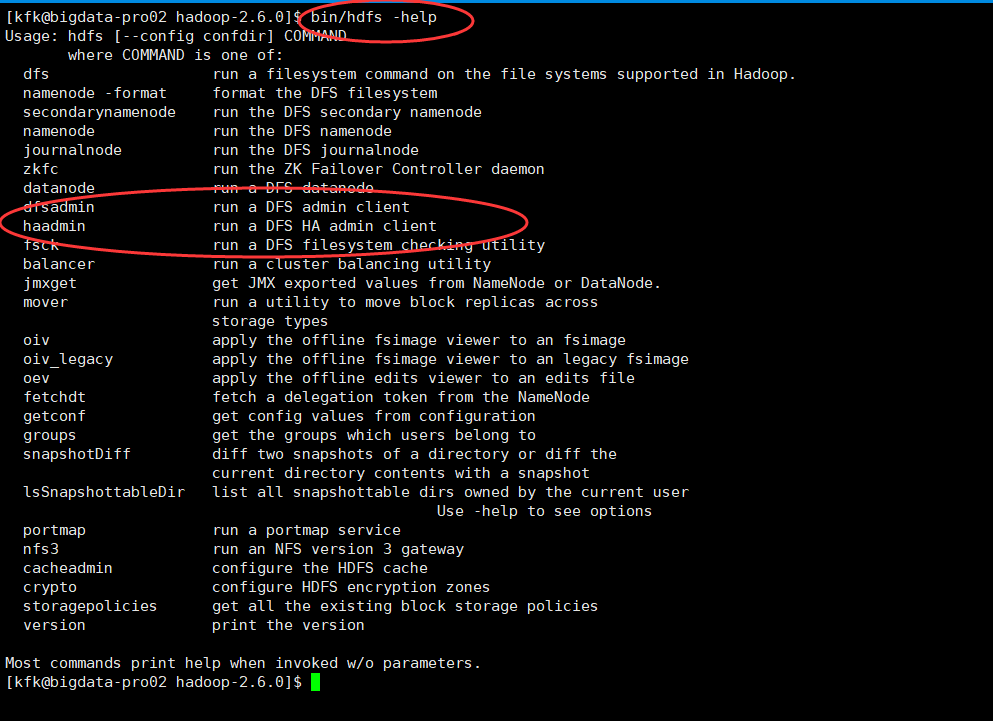

```text
[kfk@bigdata-pro02 hadoop-2.6.0]$ bin/hdfs -help
Usage: hdfs [--config confdir] COMMAND
       where COMMAND is one of:
  dfs                  run a filesystem command on the file systems supported in Hadoop.
  namenode -format     format the DFS filesystem
  secondarynamenode    run the DFS secondary namenode
  namenode             run the DFS namenode
  journalnode          run the DFS journalnode
  zkfc                 run the ZK Failover Controller daemon
  datanode             run a DFS datanode
  dfsadmin             run a DFS admin client
  haadmin              run a DFS HA admin client
  fsck                 run a DFS filesystem checking utility
  balancer             run a cluster balancing utility
  jmxget               get JMX exported values from NameNode or DataNode.
  mover                run a utility to move block replicas across storage types
  oiv                  apply the offline fsimage viewer to an fsimage
  oiv_legacy           apply the offline fsimage viewer to an legacy fsimage
  oev                  apply the offline edits viewer to an edits file
  fetchdt              fetch a delegation token from the NameNode
  getconf              get config values from configuration
  groups               get the groups which users belong to
  snapshotDiff         diff two snapshots of a directory or diff the
                       current directory contents with a snapshot
  lsSnapshottableDir   list all snapshottable dirs owned by the current user
                       Use -help to see options
  portmap              run a portmap service
  nfs3                 run an NFS version 3 gateway
  cacheadmin           configure the HDFS cache
  crypto               configure HDFS encryption zones
  storagepolicies      get all the existing block storage policies
  version              print the version

Most commands print help when invoked w/o parameters.
```

```text
[kfk@bigdata-pro02 hadoop-2.6.0]$
[kfk@bigdata-pro02 hadoop-2.6.0]$ bin/hdfs haadmin -help
Usage: DFSHAAdmin [-ns <nameserviceId>]
    [-transitionToActive <serviceId> [--forceactive]]
    [-transitionToStandby <serviceId>]
    [-failover [--forcefence] [--forceactive] <serviceId> <serviceId>]
    [-getServiceState <serviceId>]
    [-checkHealth <serviceId>]
    [-help <command>]

Generic options supported are
-conf <configuration file>     specify an application configuration file
-D <property=value>            use value for given property
-fs <local|namenode:port>      specify a namenode
-jt <local|resourcemanager:port>    specify a ResourceManager
-files <comma separated list of files>    specify comma separated files to be copied to the map reduce cluster
-libjars <comma separated list of jars>    specify comma separated jar files to include in the classpath.
-archives <comma separated list of archives>    specify comma separated archives to be unarchived on the compute machines.

The general command line syntax is
bin/hadoop command [genericOptions] [commandOptions]

[kfk@bigdata-pro02 hadoop-2.6.0]$
```

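Note: the built-in haadmin commands above (-transitionToActive, -transitionToStandby, -failover) already cover what to do when both NameNodes are in standby state and when both are active, so I won't go into detail here.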
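To tell which of those cases you are in, query both NameNodes with -getServiceState from the help above. This is a minimal sketch with some assumptions:

- The NameNode IDs are nn1 and nn2. nn1 appears in this post; nn2 is an assumption.
- The script runs from the Hadoop installation directory. Set HDFS if the hdfs script lives elsewhere.
- classify_ha and check_ha are hypothetical helper names.

```bash
#!/usr/bin/env bash
# Report both NameNodes' HA state and flag the abnormal combinations.
# ASSUMPTIONS: NameNode IDs nn1/nn2; hdfs script at $HDFS.
HDFS="${HDFS:-bin/hdfs}"

# $1, $2: states reported for nn1 and nn2.
classify_ha() {
  case "$1/$2" in
    active/standby|standby/active) echo "ok" ;;
    standby/standby)               echo "both-standby" ;;
    active/active)                 echo "both-active" ;;
    *)                             echo "unknown" ;;
  esac
}

check_ha() {
  local s1 s2
  s1="$("$HDFS" haadmin -getServiceState nn1 2>/dev/null)"
  s2="$("$HDFS" haadmin -getServiceState nn2 2>/dev/null)"
  echo "nn1=${s1:-unreachable} nn2=${s2:-unreachable}"
  classify_ha "$s1" "$s2"
}
```

When both NameNodes are stuck in standby, check_ha prints nn1=standby nn2=standby followed by both-standby.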

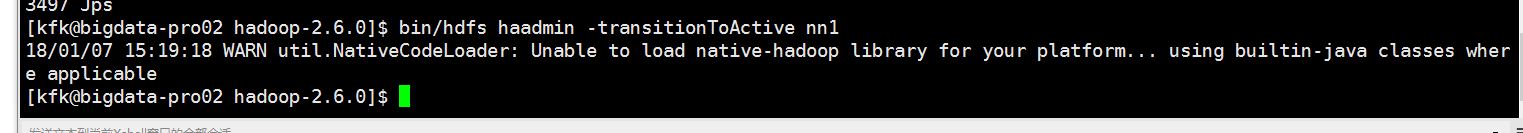

If that still doesn't solve it, manually make nn1 active:

```bash
bin/hdfs haadmin -transitionToActive nn1
```
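One caveat, to the best of my knowledge of Hadoop 2.x: with automatic failover enabled, haadmin refuses manual -transitionToActive and asks for the --forcemanual flag. That flag bypasses the ZKFC's coordination and can cause split brain, so use it only when you are sure the other NameNode is not active.

The sketch below promotes nn1 only when neither NameNode is active, then confirms the result. Assumptions:

- The NameNode IDs are nn1 and nn2; nn2 is an assumption.
- promote_nn1_if_needed is a hypothetical helper name.
- EXTRA_FLAGS is a hypothetical variable that you set to --forcemanual yourself if needed.

```bash
#!/usr/bin/env bash
# Promote nn1 only when neither NameNode is active, then verify.
# ASSUMPTIONS: NameNode IDs nn1/nn2; hdfs script at $HDFS.
# EXTRA_FLAGS may be set to "--forcemanual" if haadmin refuses the manual
# transition because automatic failover is enabled (risk of split brain).
HDFS="${HDFS:-bin/hdfs}"

promote_nn1_if_needed() {
  local s1 s2
  s1="$("$HDFS" haadmin -getServiceState nn1 2>/dev/null)"
  s2="$("$HDFS" haadmin -getServiceState nn2 2>/dev/null)"
  if [[ "$s1" == active || "$s2" == active ]]; then
    echo "already active: nn1=$s1 nn2=$s2"
    return 0
  fi
  # EXTRA_FLAGS is deliberately unquoted so an empty value adds no argument.
  "$HDFS" haadmin -transitionToActive ${EXTRA_FLAGS:-} nn1 || return 1
  # Succeed only if nn1 now reports itself as active.
  [[ "$("$HDFS" haadmin -getServiceState nn1 2>/dev/null)" == active ]]
}
```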

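You are also welcome to follow my personal blogs:

http://www.cnblogs.com/zlslch/, http://www.cnblogs.com/lchzls/ and http://www.cnblogs.com/sunnyDream/

For details, see: http://www.cnblogs.com/zlslch/p/7473861.html

Life is short, and I choose to share. This public account follows the open-source spirit of lifelong learning and open exchange. It collects the most practical knowledge from the Internet and from my own study and work: everything comes from the Internet and goes back to it.

Current research areas: big data, machine learning, deep learning, artificial intelligence, data mining, and data analysis. Languages covered: Java, Scala, Python, Shell, Linux, etc. I also cover tips, problems, and useful software for everyday phones, computers, and the Internet. Keep following and stay in the group, and you will learn something every day.

QQ group for discussion and Q&A on this platform: 大数据和人工智能躺过的坑(总群) ("Pitfalls in big data and AI", main group), 161156071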
