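When Kafka holds sensitive data, or when you do not want its data polluted by sources you cannot control, you need to think about Kafka security. Kafka's security mechanisms fall into two parts, authentication and authorization, and this article tests both.

Authentication

Kafka currently supports three authentication mechanisms: SSL, SASL/Kerberos, and SASL/PLAIN. For ease of maintenance and minimal performance overhead, we use SASL/PLAIN.

Kafka broker configuration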

/etc/kafka/server.properties

broker.id=50

delete.topic.enable=true

listeners=SASL_PLAINTEXT://10.205.51.50:9092

advertised.listeners=SASL_PLAINTEXT://10.205.51.50:9092

security.inter.broker.protocol=SASL_PLAINTEXT

sasl.enabled.mechanisms=PLAIN

sasl.mechanism.inter.broker.protocol=PLAIN

num.network.threads=3

num.io.threads=8

socket.send.buffer.bytes=102400

socket.receive.buffer.bytes=102400

socket.request.max.bytes=104857600

log.dirs=/var/lib/kafka

num.partitions=1

num.recovery.threads.per.data.dir=1

log.retention.hours=168

log.segment.bytes=1073741824

log.retention.check.interval.ms=300000

zookeeper.connect=10.205.51.50:2181

zookeeper.connection.timeout.ms=6000

confluent.support.metrics.enable=true

confluent.support.customer.id=anonymous

Note: security.inter.broker.protocol can also be set to SASL_SSL.

/etc/kafka/kafka_server_jaas.conf

KafkaServer {

org.apache.kafka.common.security.plain.PlainLoginModule required

username="admin"

password="admin"

user_admin="admin"

user_producer="producer"

user_consumer="consumer"

user_dalu="dalu";

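};

A brief note on the JAAS configuration: it defines four users, namely admin, producer, consumer, and dalu. The username and password entries are the credentials the broker itself uses for inter-broker communication; in the example above, that is admin. The user_{xxx}="yyy" entries define the accounts the broker accepts, each one declaring a user xxx with password yyy. The inter-broker user must also appear in that list, which is why user_admin is present. See the Confluent documentation for details.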
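To make the roles of the entries concrete, here is a minimal Python sketch that reads the KafkaServer section shown above and separates the broker's own login from the list of accepted accounts. The function name parse_plain_jaas and the regex-based parsing are illustrative assumptions, not how Kafka itself reads the file.

```python
import re

# The KafkaServer section from /etc/kafka/kafka_server_jaas.conf above.
JAAS_TEXT = '''
KafkaServer {
    org.apache.kafka.common.security.plain.PlainLoginModule required
    username="admin"
    password="admin"
    user_admin="admin"
    user_producer="producer"
    user_consumer="consumer"
    user_dalu="dalu";
};
'''

def parse_plain_jaas(text):
    """Hypothetical helper: return (broker_login, accepted_users)."""
    # Collect every key="value" option of the login module.
    options = dict(re.findall(r'(\w+)\s*=\s*"([^"]*)"', text))
    # username/password: what this broker presents to other brokers.
    broker_login = (options.get("username"), options.get("password"))
    # user_<name>=<password>: accounts the broker will accept.
    accepted = {
        key[len("user_"):]: value
        for key, value in options.items()
        if key.startswith("user_")
    }
    return broker_login, accepted

broker_login, accepted = parse_plain_jaas(JAAS_TEXT)
print("accepted users:", sorted(accepted))
# The broker's own login only works if it is also an accepted account.
print("inter-broker login accepted:",
      accepted.get(broker_login[0]) == broker_login[1])
```

Running the same check against a modified file is a quick way to spot a broker login that was never added as a user_ entry.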

/usr/bin/kafka-run-class

# Added: point the JVM at the broker's JAAS configuration

KAFKA_SASL_OPTS='-Djava.security.auth.login.config=/etc/kafka/kafka_server_jaas.conf'

# Launch mode ($KAFKA_SASL_OPTS is added to both java command lines below)

if [ "x$DAEMON_MODE" = "xtrue" ]; then

nohup $JAVA $KAFKA_HEAP_OPTS $KAFKA_JVM_PERFORMANCE_OPTS $KAFKA_GC_LOG_OPTS $KAFKA_SASL_OPTS $KAFKA_JMX_OPTS $KAFKA_LOG4J_OPTS -cp $CLASSPATH $KAFKA_OPTS "$@" > "$CONSOLE_OUTPUT_FILE" 2>&1 < /dev/null &

else

exec $JAVA $KAFKA_HEAP_OPTS $KAFKA_JVM_PERFORMANCE_OPTS $KAFKA_GC_LOG_OPTS $KAFKA_SASL_OPTS $KAFKA_JMX_OPTS $KAFKA_LOG4J_OPTS -cp $CLASSPATH $KAFKA_OPTS "$@"

fi

Create the test2 topic on the broker

kafka-topics --create --zookeeper 10.205.51.50 --partitions 1 --replication-factor 1 --topic test2

Kafka client configuration (connecting as user dalu)

/etc/kafka/kafka_client_jaas.conf

KafkaClient {

org.apache.kafka.common.security.plain.PlainLoginModule required

username="dalu"

password="dalu";

};

/usr/bin/kafka-run-class

# Added: point the JVM at the client's JAAS configuration

KAFKA_SASL_OPTS='-Djava.security.auth.login.config=/etc/kafka/kafka_client_jaas.conf'

# Launch mode ($KAFKA_SASL_OPTS is added to both java command lines below)

if [ "x$DAEMON_MODE" = "xtrue" ]; then

nohup $JAVA $KAFKA_HEAP_OPTS $KAFKA_JVM_PERFORMANCE_OPTS $KAFKA_GC_LOG_OPTS $KAFKA_SASL_OPTS $KAFKA_JMX_OPTS $KAFKA_LOG4J_OPTS -cp $CLASSPATH $KAFKA_OPTS "$@" > "$CONSOLE_OUTPUT_FILE" 2>&1 < /dev/null &

else

exec $JAVA $KAFKA_HEAP_OPTS $KAFKA_JVM_PERFORMANCE_OPTS $KAFKA_GC_LOG_OPTS $KAFKA_SASL_OPTS $KAFKA_JMX_OPTS $KAFKA_LOG4J_OPTS -cp $CLASSPATH $KAFKA_OPTS "$@"

fi

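Append the following to /etc/kafka/producer.properties and /etc/kafka/consumer.properties:

security.protocol=SASL_PLAINTEXT

sasl.mechanism=PLAIN

Connecting with the Kafka client

Start the producer the original way, without any authentication settings: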

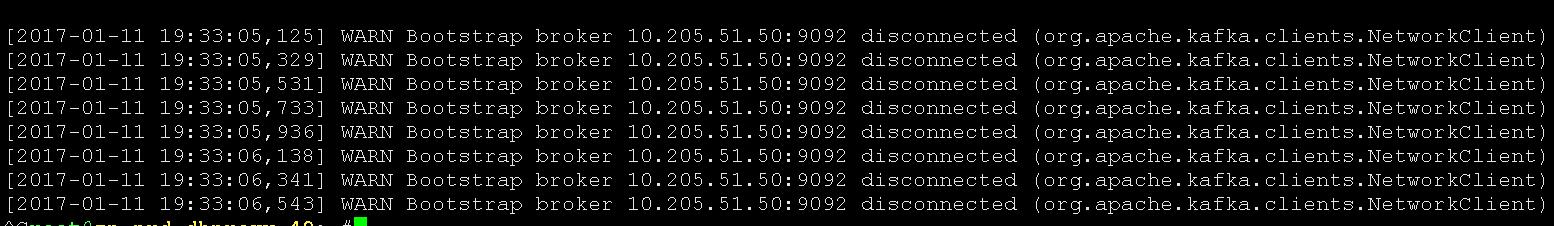

kafka-console-producer --broker-list 10.205.51.50:9092 --topic test2

Once the command starts, the client reports a large number of failures to connect to the broker. Now start it with authentication:

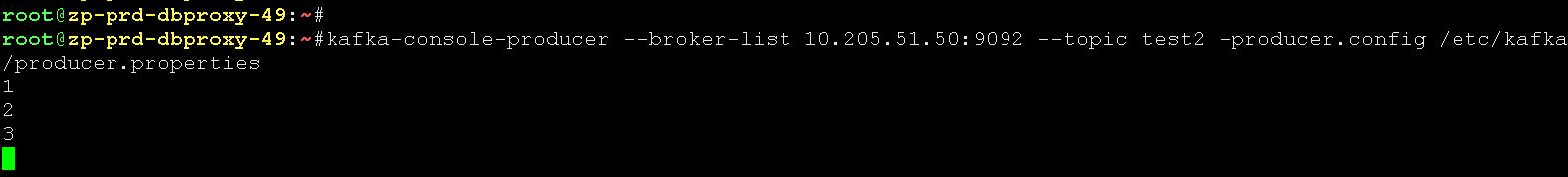

kafka-console-producer --broker-list 10.205.51.50:9092 --topic test2 --producer.config /etc/kafka/producer.properties

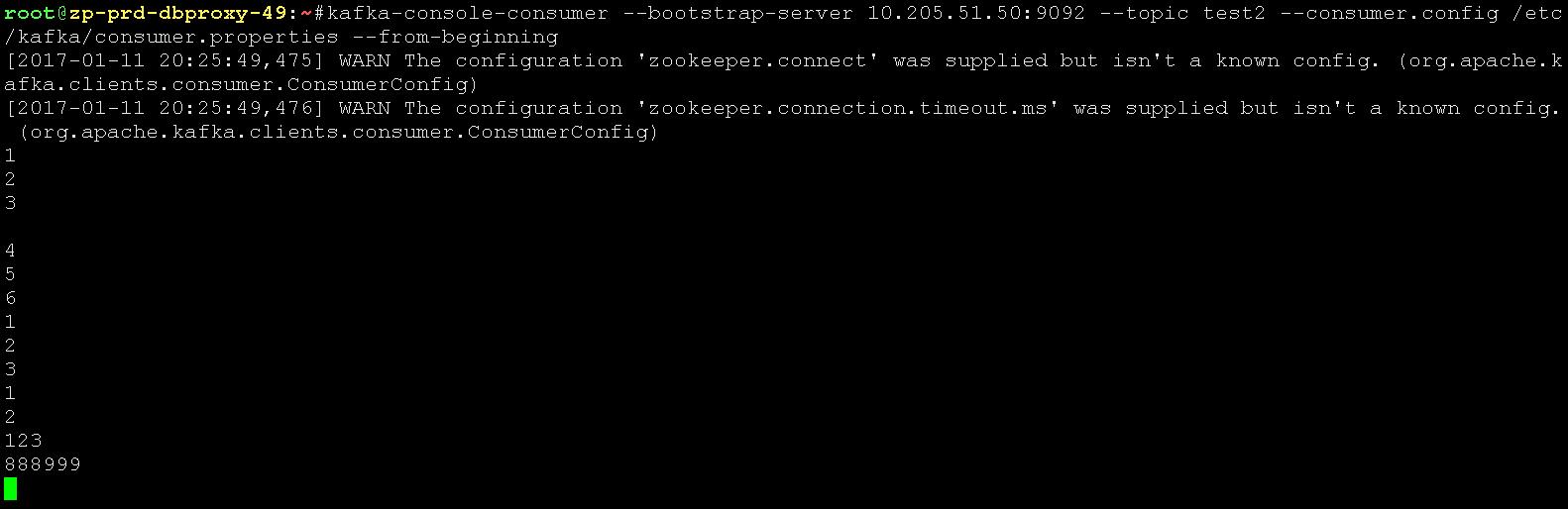

Start the consumer:

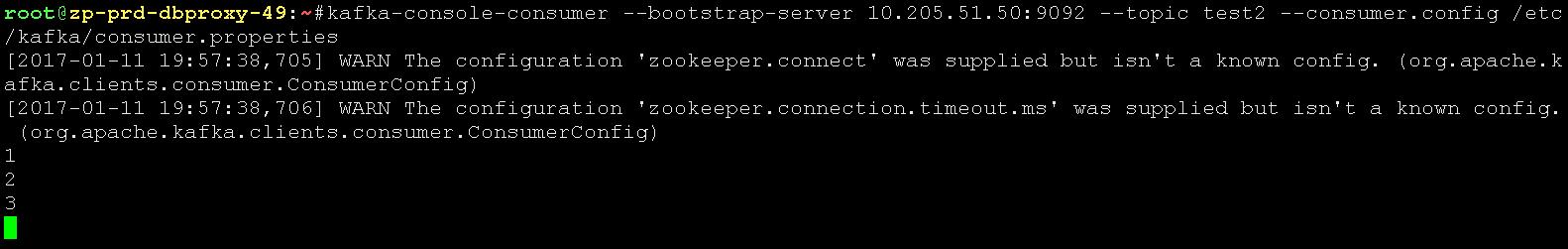

kafka-console-consumer --bootstrap-server 10.205.51.50:9092 --topic test2 --consumer.config /etc/kafka/consumer.properties
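The failed first attempt comes down to a protocol mismatch: a client with no security.protocol setting falls back to PLAINTEXT, while the broker's listener expects SASL_PLAINTEXT. The Python sketch below illustrates that comparison as a pre-flight check. The helper names are hypothetical, and it assumes the listener name is also the protocol name, which holds for the listeners line used in this article but not for brokers that remap listener names.

```python
def parse_properties(text):
    """Parse simple key=value lines, skipping blanks and # comments."""
    props = {}
    for line in text.splitlines():
        line = line.strip()
        if not line or line.startswith("#"):
            continue
        key, _, value = line.partition("=")
        props[key.strip()] = value.strip()
    return props

def effective_protocol(client_props):
    # Kafka clients use PLAINTEXT when security.protocol is not set.
    return client_props.get("security.protocol", "PLAINTEXT")

def listener_protocol(listener):
    # "SASL_PLAINTEXT://10.205.51.50:9092" -> "SASL_PLAINTEXT"
    # (assumes the listener name doubles as the protocol name)
    return listener.split("://", 1)[0]

BROKER_LISTENER = "SASL_PLAINTEXT://10.205.51.50:9092"

# Client started without --producer.config: no security settings at all.
bare_client = parse_properties("")
# Client started with the two lines appended to producer.properties.
configured_client = parse_properties(
    "security.protocol=SASL_PLAINTEXT\nsasl.mechanism=PLAIN\n"
)

for name, props in [("bare", bare_client), ("configured", configured_client)]:
    ok = effective_protocol(props) == listener_protocol(BROKER_LISTENER)
    print(name, "client matches listener:", ok)
```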

Authorization

Kafka's built-in ACL mechanism allows fine-grained control over access to topics.

Kafka broker configuration

/etc/kafka/server.properties

broker.id=50

delete.topic.enable=true

listeners=SASL_PLAINTEXT://10.205.51.50:9092

advertised.listeners=SASL_PLAINTEXT://10.205.51.50:9092

security.inter.broker.protocol=SASL_PLAINTEXT

sasl.enabled.mechanisms=PLAIN

sasl.mechanism.inter.broker.protocol=PLAIN

authorizer.class.name=kafka.security.auth.SimpleAclAuthorizer

super.users=User:admin

num.network.threads=3

num.io.threads=8

socket.send.buffer.bytes=102400

socket.receive.buffer.bytes=102400

socket.request.max.bytes=104857600

log.dirs=/var/lib/kafka

num.partitions=1

num.recovery.threads.per.data.dir=1

log.retention.hours=168

log.segment.bytes=1073741824

log.retention.check.interval.ms=300000

zookeeper.connect=10.205.51.50:2181

zookeeper.connection.timeout.ms=6000

confluent.support.metrics.enable=true

confluent.support.customer.id=anonymous

Compared with the authentication-only configuration, this adds two lines: authorizer.class.name=kafka.security.auth.SimpleAclAuthorizer and super.users=User:admin.

Grant user producer write permission on the topic test

kafka-acls --authorizer-properties zookeeper.connect=10.205.51.50:2181 --add --allow-principal User:producer --operation Write --topic test

Grant user consumer read permission on the topic test

kafka-acls --authorizer-properties zookeeper.connect=10.205.51.50:2181 --add --allow-principal User:consumer --operation Read --topic test

On the topic test, deny read operations by user dalu from IP 10.205.51.48, and allow read for all other users

kafka-acls --authorizer-properties zookeeper.connect=10.205.51.50:2181 --add --allow-principal 'User:*' --allow-host '*' --deny-principal User:dalu --deny-host 10.205.51.48 --operation Read --topic test

Grant dalu read and write access for requests coming from IP 10.205.51.49 or 10.205.51.48

kafka-acls --authorizer-properties zookeeper.connect=10.205.51.50:2181 --add --allow-principal User:dalu --allow-host 10.205.51.49 --allow-host 10.205.51.48 --operation Read --operation Write --topic test

Note: all four approaches above control topic access well. In production, however, maintaining policies this strict is a real burden for operators. Where security requirements are less stringent, we can use role-based permissions instead.
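How the allow and deny rules above combine can be sketched with a small Python model. It is a deliberately simplified approximation of the authorizer's behaviour, not its actual code: super users bypass ACLs, a matching deny rule wins over any allow rule, and a request with no matching allow rule is rejected (the default when allow.everyone.if.no.acl.found is left unset). The Acl tuple and the function names are made up for illustration, and details such as implied Describe permission are left out.

```python
from collections import namedtuple

# permission is "Allow" or "Deny"; "User:*" and "*" act as wildcards.
Acl = namedtuple("Acl", "principal host operation permission")

SUPER_USERS = {"User:admin"}  # from super.users=User:admin

def matches(acl, principal, host, operation):
    return (acl.principal in (principal, "User:*")
            and acl.host in (host, "*")
            and acl.operation in (operation, "All"))

def authorize(acls, principal, host, operation):
    """Simplified decision for one request against one topic's ACLs."""
    if principal in SUPER_USERS:
        return True                      # super users skip ACL checks
    hits = [a for a in acls if matches(a, principal, host, operation)]
    if any(a.permission == "Deny" for a in hits):
        return False                     # deny takes precedence
    return any(a.permission == "Allow" for a in hits)

# The two ACLs created by the third command above, on topic test.
test_topic_acls = [
    Acl("User:*", "*", "Read", "Allow"),
    Acl("User:dalu", "10.205.51.48", "Read", "Deny"),
]

print(authorize(test_topic_acls, "User:dalu", "10.205.51.48", "Read"))
print(authorize(test_topic_acls, "User:dalu", "10.205.51.49", "Read"))
print(authorize(test_topic_acls, "User:consumer", "10.205.51.48", "Write"))
```

The first call is rejected by the deny rule, the second is allowed by the wildcard rule, and the third is rejected because no rule grants Write.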

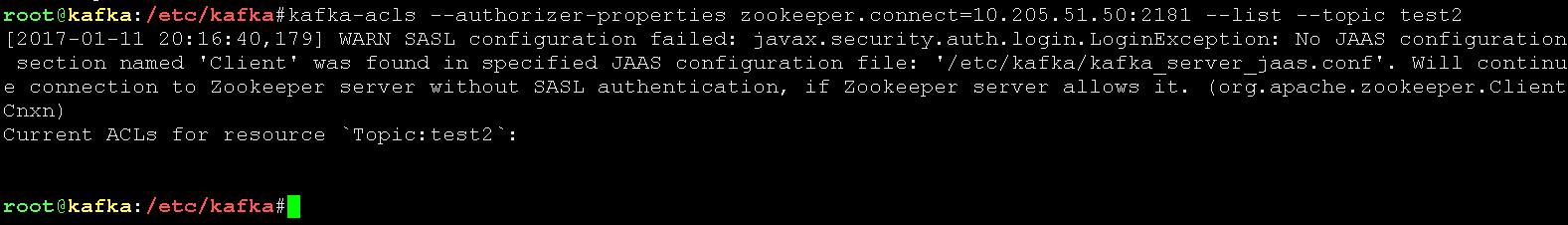

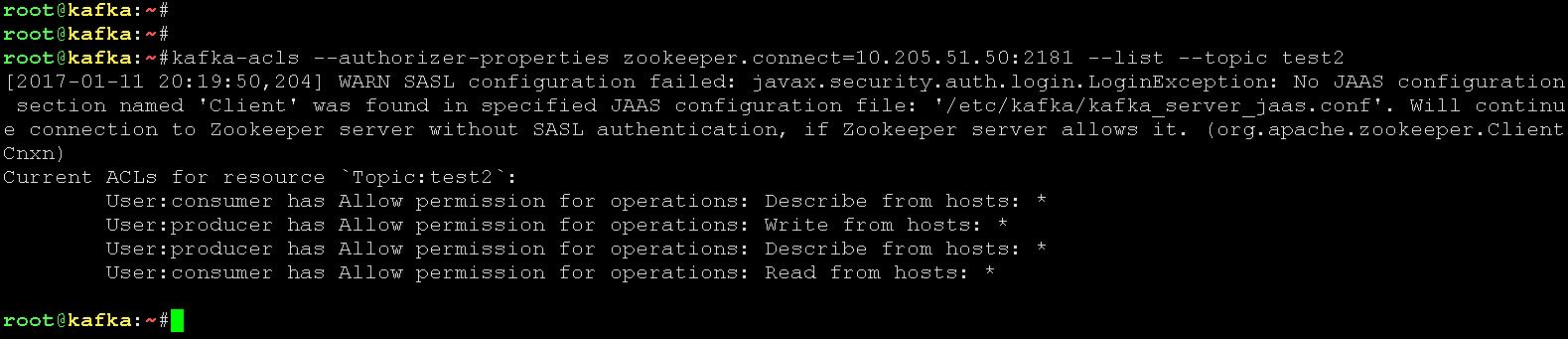

List the current ACLs on the test2 topic

kafka-acls --authorizer-properties zookeeper.connect=10.205.51.50:2181 --list --topic test2

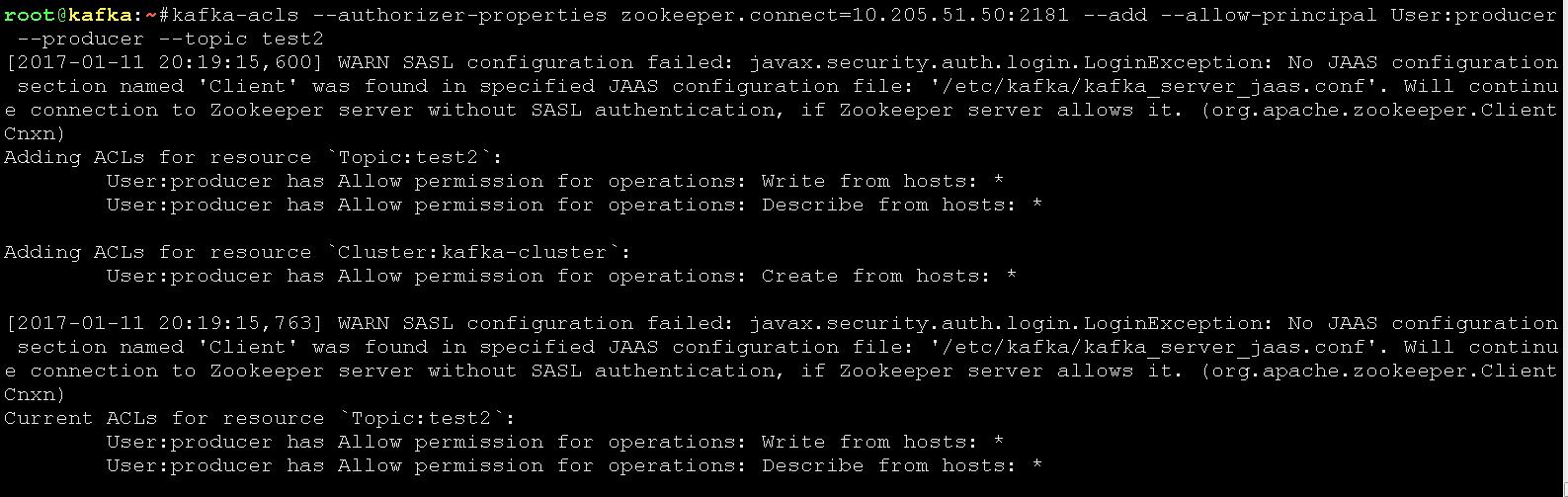

Grant permissions to the producer user

kafka-acls --authorizer-properties zookeeper.connect=10.205.51.50:2181 --add --allow-principal User:producer --producer --topic test2

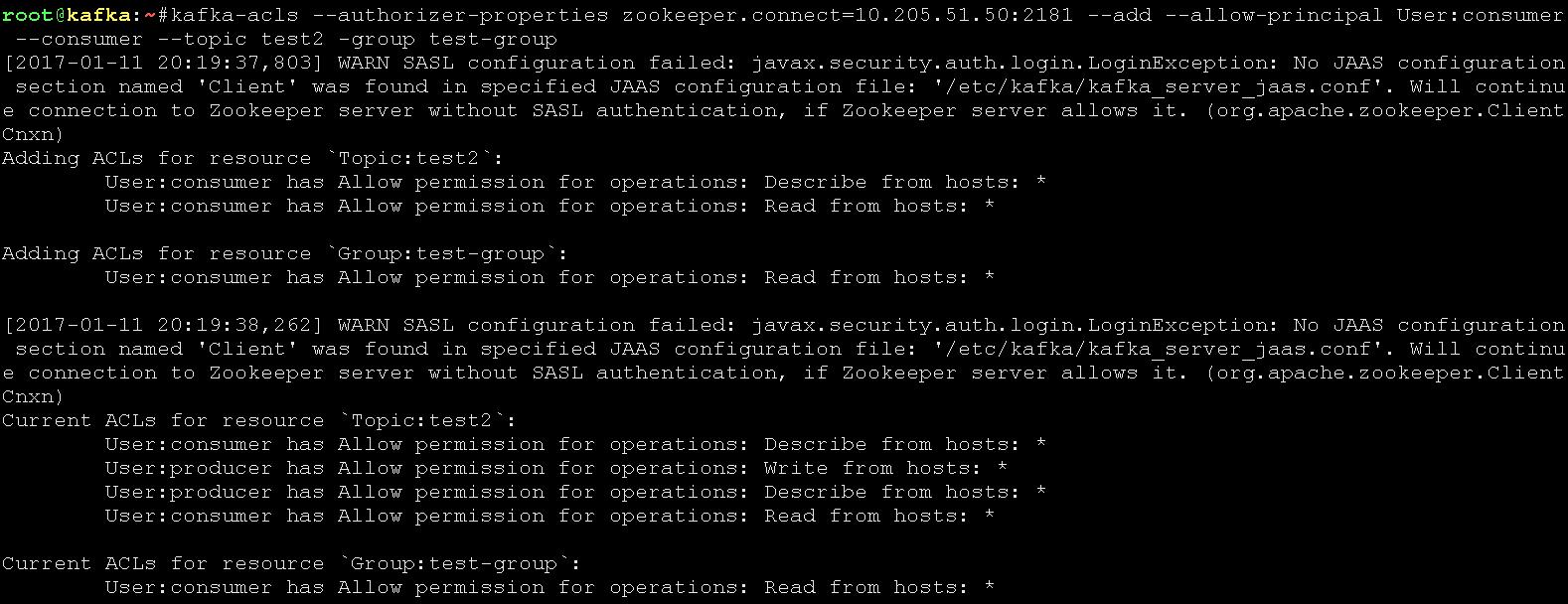

Grant permissions to the consumer user

kafka-acls --authorizer-properties zookeeper.connect=10.205.51.50:2181 --add --allow-principal User:consumer --consumer --topic test2 --group test-group

List the current ACLs on the test2 topic again

kafka-acls --authorizer-properties zookeeper.connect=10.205.51.50:2181 --list --topic test2

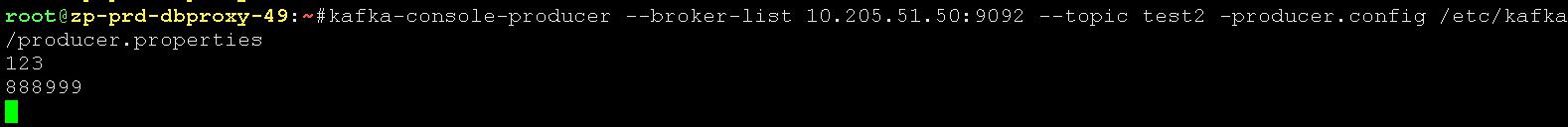
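When many users need the same role-based grants, the two kafka-acls invocations above can be generated rather than typed by hand. The Python sketch below only builds the argument list and prints it; it does not run the tool. The function role_grant_command is a hypothetical helper, it uses only the flags that appear in this article, and the rule that the consumer role needs a group reflects my understanding of the tool rather than anything shown in the screenshots.

```python
import shlex

# Same authorizer connection string as in the commands above.
AUTHORIZER_PROPS = "zookeeper.connect=10.205.51.50:2181"

def role_grant_command(principal, role, topic, group=None):
    """Build the kafka-acls argument list for a role-based grant."""
    if role not in ("producer", "consumer"):
        raise ValueError("role must be 'producer' or 'consumer'")
    if role == "consumer" and not group:
        # Assumption: the consumer role is granted per consumer group.
        raise ValueError("the consumer role needs a consumer group")
    args = [
        "kafka-acls",
        "--authorizer-properties", AUTHORIZER_PROPS,
        "--add",
        "--allow-principal", principal,
        "--" + role,
        "--topic", topic,
    ]
    if role == "consumer":
        args += ["--group", group]
    return args

for args in (
    role_grant_command("User:producer", "producer", "test2"),
    role_grant_command("User:consumer", "consumer", "test2", "test-group"),
):
    # shlex.quote keeps the printed line safe to paste into a shell.
    print(" ".join(shlex.quote(a) for a in args))
```

Passing the returned list to subprocess.run would execute the grant, which is left out here so the sketch has no side effects.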

Send messages from the producer user's client

kafka-console-producer --broker-list 10.205.51.50:9092 --topic test2 --producer.config /etc/kafka/producer.properties

Make sure group.id in the consumer user's client configuration, /etc/kafka/consumer.properties, is the group that was granted earlier:

#consumer group id

group.id=test-group

Receive messages with the consumer user's client

kafka-console-consumer --bootstrap-server 10.205.51.50:9092 --topic test2 --consumer.config /etc/kafka/consumer.properties --from-beginning

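Note: one drawback is that when you produce to or consume from the test2 topic as user dalu, the operation does not work, yet the client reports no error at all, which is not very friendly.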
