一、环境

开发环境:

系统:Win10

开发工具:scala-eclipse-IDE

项目管理工具:Maven 3.6.0

JDK 1.8

Scala 2.11.11

Spark 2.4.3

MySQL 5.1.47

MySQL c3p0 0.9.1.2 连接池

作业运行环境:

系统:Linux CentOS7(两台机:主从节点)

master : 192.168.190.200

slave1 : 192.168.190.201

JDK 1.8

Scala 2.11.11

Spark 2.4.3

MySQL 5.1.47(只运行在master节点)二、案例

1. 在之前博文Spark Streaming之流式词频统计(Socket数据源)基础上,利用从Socket数据源获取单词,进行单词统计,并将统计结果输出至MySQL数据库中;(注:相关Netcat 的安装使用,也可参看之前的这篇文章)

2. 利用 c3p0 建立数据库连接池,每次连接MySQL都从连接池中获取,减少建立连接的开销;

3. 对 c3p0 连接池进行封装,形成惰性单例模式,减少连接池创建的开销。

4. 对离散数据流(DStream)的每个弹性分布式数据集(RDD)的每个分区(partition)中的数据记录进行一次批量SQL插入的执行,因为每个分区的数据都在同一Worker节点,不会跨机器,所以按分区执行批量SQL插入;

5. MySQL接收表格式如下:(*实际生产中数据量会很大,一张表不好管理,会按日期分表,如:wordfreq_yyyy_MM_dd)

# 创建数据库spark

create database spark;

# 向hadoop用户授权从slave1节点登陆访问MySQL的spark数据库所有表,密码123456

# 因为master节点是执行Spark的Driver任务,

# 而slave1节点是执行Spark的Executor计算任务的,

# 所以需要从计算结点slave1访问master节点的MySQL。

grant all on spark.* to hadoop@'slave1' identified by '123456';

# 使用数据库spark

use spark;

# 词频统计表

drop table if exists wordfreq;

create table if not exists wordfreq (

id bigint not null auto_increment primary key,

word varchar(20) not null comment '单词',

cnt int not null comment '单词计数',

time timestamp not null default '0000-00-00 00:00:00' comment '统计时间'

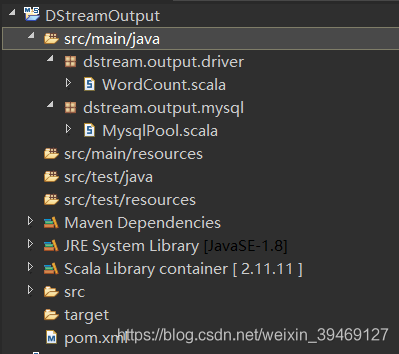

);三、代码实现(Maven项目:DStreamOutput)

1. pom.xml:

<project xmlns="http://maven.apache.org/POM/4.0.0" xmlns:xsi="http://www.w3.org/2001/XMLSchema-instance" xsi:schemaLocation="http://maven.apache.org/POM/4.0.0 http://maven.apache.org/xsd/maven-4.0.0.xsd">

<modelVersion>4.0.0</modelVersion>

<groupId>com</groupId>

<artifactId>DStreamOutput</artifactId>

<version>0.1</version>

<dependencies>

<dependency><!-- Spark核心依赖包 -->

<groupId>org.apache.spark</groupId>

<artifactId>spark-core_2.11</artifactId>

<version>2.4.3</version>

<scope>provided</scope><!-- 运行时提供,打包不添加,Spark集群已自带 -->

</dependency>

<dependency><!-- Spark Streaming依赖包 -->

<groupId>org.apache.spark</groupId>

<artifactId>spark-streaming_2.11</artifactId>

<version>2.4.3</version>

<scope>provided</scope><!-- 运行时提供,打包不添加,Spark集群已自带 -->

</dependency>

<dependency><!-- Log 日志依赖包 -->

<groupId>log4j</groupId>

<artifactId>log4j</artifactId>

<version>1.2.17</version>

</dependency>

<dependency><!-- 日志依赖接口 -->

<groupId>org.slf4j</groupId>

<artifactId>slf4j-log4j12</artifactId>

<version>1.7.12</version>

</dependency>

<dependency><!-- MySQL 依赖包 -->

<groupId>mysql</groupId>

<artifactId>mysql-connector-java</artifactId>

<version>5.1.47</version>

</dependency>

<dependency><!-- MySQL 连接池依赖包 -->

<groupId>c3p0</groupId>

<artifactId>c3p0</artifactId>

<version>0.9.1.2</version>

</dependency>

</dependencies>

<build>

<plugins>

<!-- 混合scala/java编译 -->

<plugin><!-- scala编译插件 -->

<groupId>org.scala-tools</groupId>

<artifactId>maven-scala-plugin</artifactId>

<executions>

<execution>

<id>compile</id>

<goals>

<goal>compile</goal>

</goals>

<phase>compile</phase>

</execution>

<execution>

<id>test-compile</id>

<goals>

<goal>testCompile</goal>

</goals>

<phase>test-compile</phase>

</execution>

<execution>

<phase>process-resources</phase>

<goals>

<goal>compile</goal>

</goals>

</execution>

</executions>

</plugin>

<plugin>

<artifactId>maven-compiler-plugin</artifactId>

<configuration>

<source>1.8</source><!-- 设置Java源 -->

<target>1.8</target>

</configuration>

</plugin>

<!-- for fatjar -->

<plugin><!-- 将所有依赖包打入同一个jar包中 -->

<groupId>org.apache.maven.plugins</groupId>

<artifactId>maven-assembly-plugin</artifactId>

<version>2.4</version>

<configuration>

<descriptorRefs>

<!-- jar包的后缀名 -->

<descriptorRef>jar-with-dependencies</descriptorRef>

</descriptorRefs>

</configuration>

<executions>

<execution>

<id>assemble-all</id>

<phase>package</phase>

<goals>

<goal>single</goal>

</goals>

</execution>

</executions>

</plugin>

<plugin><!-- Maven打包插件 -->

<groupId>org.apache.maven.plugins</groupId>

<artifactId>maven-jar-plugin</artifactId>

<configuration>

<archive>

<manifest>

<!-- 添加类路径 -->

<addClasspath>true</addClasspath>

<!-- 设置程序的入口类 -->

<mainClass>dstream.output.driver.WordCount</mainClass>

</manifest>

</archive>

</configuration>

</plugin>

</plugins>

</build>

<repositories>

<repository>

<id>alimaven</id>

<name>aliyun maven</name>

<url>http://maven.aliyun.com/nexus/content/groups/public/</url>

<releases>

<enabled>true</enabled>

</releases>

<snapshots>

<enabled>false</enabled>

</snapshots>

</repository>

</repositories>

</project>2. c3p0连接池惰性单例:

package dstream.output.mysql

import com.mchange.v2.c3p0.ComboPooledDataSource

import java.sql.Connection

import org.apache.log4j.Logger

class MysqlPool extends Serializable{

private val cpds: ComboPooledDataSource = new ComboPooledDataSource(true) //自动注册

try {

//设置Mysql信息

cpds.setJdbcUrl("jdbc:mysql://master:3306/spark?useUnicode=true&characterEncoding=UTF-8")

cpds.setDriverClass("com.mysql.jdbc.Driver") //对应mysql-connector-java-5.1.47版本的驱动

cpds.setUser("hadoop")

cpds.setPassword("123456")

cpds.setMaxPoolSize(10) //连接池最大连接数

cpds.setMinPoolSize(2) //连接池最小连接数

cpds.setAcquireIncrement(2) //连接数每次递增数量

cpds.setMaxStatements(180) //连接池最大空闲时间

} catch {

case e: Exception => e.printStackTrace()

}

//获取连接

def getConnection: Connection = {

try {

return cpds.getConnection()

} catch {

case e: Exception => e.printStackTrace()

null

}

}

}

//惰性单例,真正计算时才初始化对象

object MysqlManager {

@volatile private var mysqlPool: MysqlPool = _

def getMysqlPool: MysqlPool = {

if (mysqlPool == null) {

synchronized {

if (mysqlPool == null) {

mysqlPool = new MysqlPool

}

}

}

mysqlPool

}

}3. 单词统计入口:

package dstream.output.driver

import org.apache.spark.SparkConf

import org.apache.spark.streaming.StreamingContext

import org.apache.spark.streaming.Seconds

import org.apache.spark.rdd.RDD

import org.apache.spark.streaming.Time

import scala.collection.Iterator

import dstream.output.mysql.MysqlManager

object WordCount extends App{

//Spark配置项

val conf = new SparkConf()

.setAppName("SocketWordFreq")

.setMaster("spark://master:7077")

//创建流式上下文,2s的批处理间隔

val ssc = new StreamingContext(conf, Seconds(2))

//创建一个DStream,连接指定的hostname:port,比如master:9999

val lines = ssc.socketTextStream("master", 9999) //DS1

//将接收到的每条信息分割成单个词汇

val words = lines.flatMap(_.split(" ")) //DS2

//统计每个batch的词频

val pairs = words.map(word => (word, 1)) //DS3

// 汇总词汇

val wordCounts = pairs.reduceByKey(_ + _) //DS4

//在reduce聚合之后,输出结果至MySQL(输出操作)

wordCounts.foreachRDD((rdd: RDD[(String, Int)], time: Time) => {

//RDD为空时,无需再向下执行,否则在分区中还需要获取数据库连接(无用操作)

if (!rdd.isEmpty()) {

//一个分区执行一批SQL

rdd.foreachPartition((partition: Iterator[(String, Int)]) => {

//partition和record共同位于本地计算节点Worker,故无需序列化发送conn和statement

//获取Mysql连接

val conn = MysqlManager.getMysqlPool.getConnection

if (conn == null) {

//做好判空,否则如果某个新加的节点还没设置访问数据库权限,会卡在数据库连接处,不断尝试连接

println("conn is null.") //在Worker节点的Executor中打印

} else {

println("conn is not null.")

//创建语句

val statement = conn.createStatement()

try {

conn.setAutoCommit(false) //设置手动提交

partition.foreach((record: (String, Int)) => {

//创建sql,加入批处理

//注:字符串列的值需用单引号括起来,如:word列

val sql = "insert into spark.wordfreq (word, cnt, time) value ('" + record._1 + "', " + record._2 + ", sysdate());"

statement.addBatch(sql) //加入语句batch

})

statement.executeBatch() //批量执行sql语句

conn.commit() //事务提交

} catch {

case e: Exception => e.printStackTrace()

} finally {

statement.close() //关闭语句

conn.close() //关闭连接

}

}

})

}

})

//打印从DStream中生成的RDD的前10个元素到控制台中

wordCounts.print() //print() 是输出操作,默认前10条数据

ssc.start() //开始计算

ssc.awaitTermination() //等待计算结束

}四、打包运行

1.在项目的根目录下运行命令行窗口(在目录下 "shift+右键",选择命令行窗口 Power Shell)

执行如下命令:(编译代码)

> mvn clean install

编译成功后,会在当前目录的 ".\target\" 下产生两个jar包;

其中的 DStreamOutput-0.1-jar-with-dependencies.jar 用来提交给Spaek集群

用终端A(如:Windows的PowerShell)通过ssh登陆master节点,执行

2.master节点建立Socket服务器(9999端口)

$ nc -lk 9999

>

用终端B(如:Windows的PowerShell,可连接多台)通过ssh登陆master节点,执行

3.将Jar包提交至主节点上,执行Spark作业:

提交Spark作业:(需先配置Spark_HOME环境变量)

$ spark-submit \

--class dstream.output.driver.WordCount \

/opt/DStreamOutput-0.1-jar-with-dependencies.jar

注1:其中每行的末尾 "\" 代表不换行,命令需在一行上输入,此处只为方便观看

注2:提交的Jar包放在 /opt/ 目录下

Spark流式作业运行过程的输出(以2s间隔,不停打印时间信息)

-----------------------------

Time: 1560853450000 ms

-----------------------------

-----------------------------

Time: 1560853452000 ms

-----------------------------

-----------------------------

Time: 1560853454000 ms

-----------------------------

4.在终端A执行,输入数据

$ nc -lk 9999

> hello word hello words hello world

>

5.终端B中可以看到统计结果输出

-----------------------------

Time: 1560853460000 ms

-----------------------------

(hello,3)

(word,1)

(words,1)

(world,1)

-----------------------------

Time: 1560853462000 ms

-----------------------------

6.同时,进入MySQL中查看接收到的数据

select * from spark.wordfreq;

+----+-------+-----+---------------------+

| id | word | cnt | time |

+----+-------+-----+---------------------+

| 1 | hello | 3 | 2019-06-18 18:24:20 |

| 2 | word | 1 | 2019-06-18 18:24:20 |

| 3 | words | 1 | 2019-06-18 18:24:20 |

| 4 | world | 1 | 2019-06-18 18:24:20 |

+----+-------+-----+---------------------+

此刻,即表示成功将流数据输出至MySQL中。

六、计算节点输出信息和错误日志

1. 计算节点(192.168.190.201: slave1)的 输出日志 和 错误日志 在下图红色区域点击链接;

七、参考文章

1.《Spark Streaming 实时流式大数据处理实战》

2018

2018

被折叠的 条评论

为什么被折叠?

被折叠的 条评论

为什么被折叠?