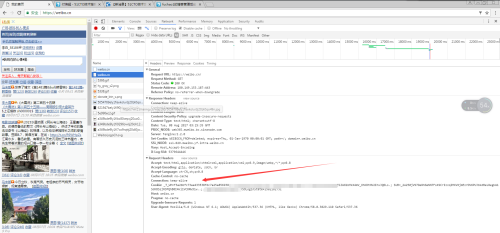

Crawling Sina Weibo requires a logged-in session. Here I do not simulate the login; instead, I crawl using a cookie.

Getting the cookie:
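A cookie copied from a browser usually arrives as one long `name=value; name2=value2` string. The sketch below shows one way to split such a string into a per-name dict. The helper name `parse_cookie_string` and the sample values are assumptions made for illustration, not part of the original script.

```python
def parse_cookie_string(raw):
    """Split a raw 'name=value; name2=value2' cookie string into a dict."""
    cookies = {}
    for part in raw.split(';'):
        # partition keeps any '=' that appears inside the value itself
        name, sep, value = part.strip().partition('=')
        if sep and name:  # skip empty or malformed fragments
            cookies[name] = value
    return cookies


if __name__ == '__main__':
    # Placeholder values, not real credentials.
    raw = '_T_WM=abc123; SUB=_2A250xyz..; SSOLoginState=1502098607'
    print(parse_cookie_string(raw))
```

A dict in this shape can be passed to `requests.get` through its `cookies=` argument, which expects one entry per cookie name.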

代码:#-*-coding:utf8-*-

from bs4 import BeautifulSoup

import requests

import time

import os

import sys

import random

reload(sys)

sys.setdefaultencoding('utf-8')

user_id = 用户id

cookie = {"Cookie": "_T_WM=f3a2assae4335dfdf38fdc7a25a88; SCF=ApMI3mluv9yH6yKz4i7-HMlHojzPtQULc5G0xlrri-NeO3Xn1FRWI5W1HElZWG1bMkX4mV_OhKDtNV2IhxJQGLs.; SUB=_2A250jET_DeRhGeNN7FsX9CrIzzqIHXVXj2y3rDV6PUJbkdBeLUrnkW1AtfoOlrd_kyd1Izu7Q1uKaFvRDQ..; SUHB=0k1ySJSrJVBDGD; SSOLoginState=1502098607"}

for page in range(100):

url = 'https://weibo.cn/573550093?page=%d' % page

response = requests.get(url, cookies = cookie)

html = response.text

soup = BeautifulSoup(html, 'lxml')

username = soup.title.string

cttlist = []

for ctt in soup.find_all('span',class_="ctt"):

cttlist.append(ctt.get_text())

ctlist = []

for ct in soup.find_all('span',class_="ct"):

ctlist.append(ct.get_text())

if page == 0:

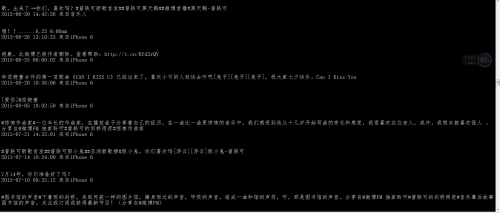

print "微博用户资料:" + cttlist[0]

print "微博用户个性签名:" + cttlist[1]

print "用户的微博动态:\n"

imgurllist = []

for img in soup.find_all('a'):

if img.find('img') is not None :

if 'http://tva3.' not in img.find('img')['src'] and 'https://h5' not in img.find('img')['src']:

imgurllist.append(img.find('img')['src'])

#imgname = soup.title.string + '_' + str(page) + str(time.time()) +str(random.randrange(0, 1000, 3)) +'.jpg'

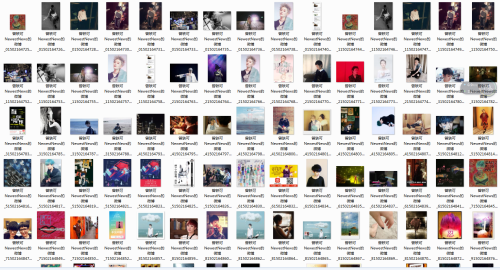

if not os.path.exists(str(soup.title.string)):

os.mkdir(str(soup.title.string))

#imgname ='./'+ str(soup.title.string) + '/'+ soup.title.string + '_' + str(time.time()) +'.jpg'

for imgurl in imgurllist:

imgname = './'+ str(soup.title.string) + '/'+soup.title.string + '_' + str(page) + str(time.time()) +str(random.randrange(0, 1000, 3)) +'.jpg'

response = requests.get('%s' % imgurl)

dirw = str(soup.title.string)

open(imgname, 'wb').write(response.content)

time.sleep(1.5)

try:

for i in range(len(ctlist)):

print cttlist[2+i]

print ctlist[i]

print "\n"

except:

for i in range(len(ctlist)):

print cttlist[i]

print ctlist[i]

print "\n"

if "下页" not in soup.select('div[id="pagelist"]')[0].get_text():

break

time.sleep(random.randint(1,3))
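The script above uses the page title directly as both the directory name and the file-name prefix, and builds uniqueness from a timestamp plus a random number. Titles can contain characters that are not valid in paths, so a minimal sketch of a more defensive naming scheme follows. The helper names `safe_name` and `image_path`, the fallback name `weibo_user`, and the switch to `uuid4` are illustrative assumptions, not part of the original script.

```python
import os
import re
import uuid


def safe_name(title, fallback='weibo_user'):
    """Replace characters that are invalid in file names on common systems."""
    cleaned = re.sub(r'[\\/:*?"<>|\s]+', '_', title or '').strip('_.')
    return cleaned or fallback


def image_path(title, page, base_dir='.'):
    """Build a unique .jpg path inside a per-user directory."""
    name = safe_name(title)
    # uuid4 gives a fresh random suffix on every call
    filename = '%s_%d_%s.jpg' % (name, page, uuid.uuid4().hex)
    return os.path.join(base_dir, name, filename)


if __name__ == '__main__':
    path = image_path('some user/title', 3, base_dir='downloads')
    os.makedirs(os.path.dirname(path), exist_ok=True)  # create the folder if needed
    print(path)
```

In the download loop, `image_path(soup.title.string, page)` could stand in for the hand-built `imgname` string, with `os.makedirs(..., exist_ok=True)` replacing the separate existence check.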

Results:
