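In this post we summarize common PyTorch error messages. First, here is a Shimo document that collects error messages together with their likely causes and fixes. Everyone is welcome to help build it, so that it can help anyone learning PyTorch~ (P.S. Please follow the existing format.)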

Shimo document: shimo.im

Let's go through a few of them first:

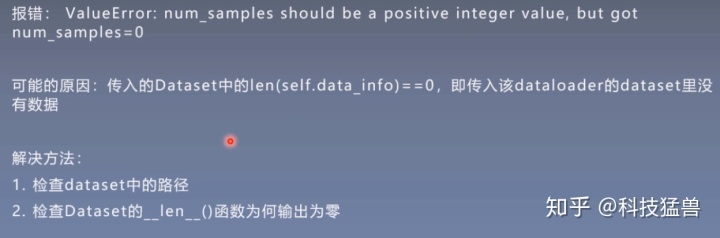

Error 1:

Error 2:

`TypeError: pic should be PIL Image or ndarray. Got <class 'torch.Tensor'>`

Error 3:

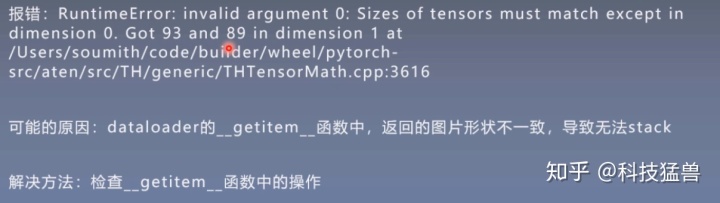
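This error comes up when the default collate step of `DataLoader` tries to stack samples of different shapes into one batch. Here is a minimal sketch of one possible fix, a custom `collate_fn` that zero-pads each image to the largest size in the batch. `VariableSizeDataset` and `pad_collate` are hypothetical names made up for this illustration; resizing or cropping inside the dataset is an equally valid choice.

```python
import torch
from torch.utils.data import Dataset, DataLoader


class VariableSizeDataset(Dataset):
    """Hypothetical dataset whose images alternate between 63x63 and 64x64."""

    def __len__(self):
        return 8

    def __getitem__(self, index):
        side = 63 + index % 2
        return torch.zeros(3, side, side), index % 10


def pad_collate(batch):
    """Zero-pad every image to the largest height/width in the batch, then stack."""
    images, labels = zip(*batch)
    max_h = max(img.shape[1] for img in images)
    max_w = max(img.shape[2] for img in images)
    padded = torch.zeros(len(images), 3, max_h, max_w)
    for i, img in enumerate(images):
        padded[i, :, :img.shape[1], :img.shape[2]] = img
    return padded, torch.tensor(labels)


# The default collate function cannot stack tensors of different sizes.
try:
    next(iter(DataLoader(VariableSizeDataset(), batch_size=4)))
    default_collate_failed = False
except RuntimeError:
    default_collate_failed = True

# With the padding collate function, the same data batches fine.
loader = DataLoader(VariableSizeDataset(), batch_size=4, collate_fn=pad_collate)
data, label = next(iter(loader))
```

If the model needs a fixed input size anyway, it is usually simpler to resize in the dataset's transform so that every sample already has the same shape.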

```python
# RuntimeError: invalid argument 0: Sizes of tensors must match except in dimension 0
import torch
from torch.utils.data import Dataset, DataLoader

flag = 0
# flag = 1
if flag:
    class FooDataset(Dataset):
        def __init__(self, num_data, data_dir=None, transform=None):
            self.foo = data_dir
            self.transform = transform
            self.num_data = num_data

        def __getitem__(self, item):
            # randint's upper bound is exclusive, so (63, 64) would always give 63.
            # (63, 65) lets the sample size vary, which is what can trigger the error.
            size = torch.randint(63, 65, size=(1, )).item()
            fake_data = torch.zeros((3, size, size))
            fake_label = torch.randint(0, 10, size=(1, ))
            return fake_data, fake_label

        def __len__(self):
            return self.num_data

    foo_dataset = FooDataset(num_data=10)
    foo_dataloader = DataLoader(dataset=foo_dataset, batch_size=4)
    data, label = next(iter(foo_dataloader))
```

Error 4:
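Both messages in this section are shape mismatches between the input and a layer's weight. As a rough sketch, assume a LeNet-style network. The layers below are an assumption, picked only because they reproduce the numbers in the messages (a `[6, 3, 5, 5]` conv weight and a `400 -> 120` linear layer). Running a dummy input through the convolutional part shows how many features the first linear layer will actually receive.

```python
import torch
import torch.nn as nn

# Hypothetical LeNet-style feature extractor (an assumption, not taken from the post).
features = nn.Sequential(
    nn.Conv2d(3, 6, kernel_size=5),   # weight shape [6, 3, 5, 5]: expects 3 input channels
    nn.ReLU(),
    nn.MaxPool2d(2),
    nn.Conv2d(6, 16, kernel_size=5),
    nn.ReLU(),
    nn.MaxPool2d(2),
)
fc1 = nn.Linear(16 * 5 * 5, 120)      # expects 400 input features


def flat_features(height, width):
    """Number of features per sample after the conv part, for a 3-channel input."""
    with torch.no_grad():
        out = features(torch.zeros(1, 3, height, width))
    return out.flatten(1).shape[1]


flat_32 = flat_features(32, 32)   # 32 -> 28 -> 14 -> 10 -> 5, so 16 * 5 * 5
flat_36 = flat_features(36, 36)   # 36 -> 32 -> 16 -> 12 -> 6, so 16 * 6 * 6

# A 1-channel input does not match the 3-channel conv weight.
try:
    features(torch.zeros(16, 1, 32, 32))
    channel_error = None
except RuntimeError as err:
    channel_error = str(err)
```

Under these assumptions, a 32x32 input gives the 400 features that `fc1` expects, while a 36x36 input gives 576, which matches the `[16 x 576]` versus `[400 x 120]` mismatch. The fix is either to feed the input size the network was designed for, or to change `in_features` of the linear layer.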

```python
# Given groups=1, weight of size 6 3 5 5, expected input[16, 1, 32, 32] to have 3 channels, but got 1 channels instead
# RuntimeError: size mismatch, m1: [16 x 576], m2: [400 x 120] at ../aten/src/TH/generic/THTensorMath.cpp:752
import torch
from torch.utils.data import Dataset

flag = 0
# flag = 1
if flag:
    class FooDataset(Dataset):
        def __init__(self, num_data, shape, data_dir=None, transform=None):
            self.foo = data_dir
            self.transform = transform
            self.num_data = num_data
            self.shape = shape

        def __getitem__(self, item):
            fake_data = torch.zeros(self.shape)
            fake_label = torch.randint(0, 10, size=(1, ))
            if self.transform is not None:
                fake_data = self.transform(fake_data)
            return fake_data, fake_label

        def __len__(self):
            return self.num_data
```

Error 5:

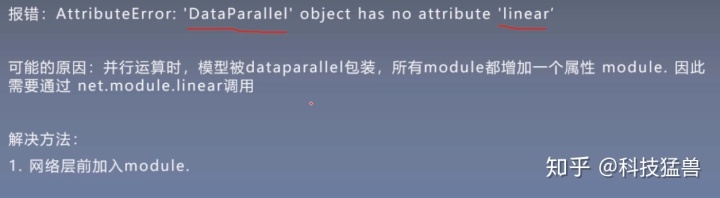

`AttributeError: 'DataParallel' object has no attribute 'linear'`

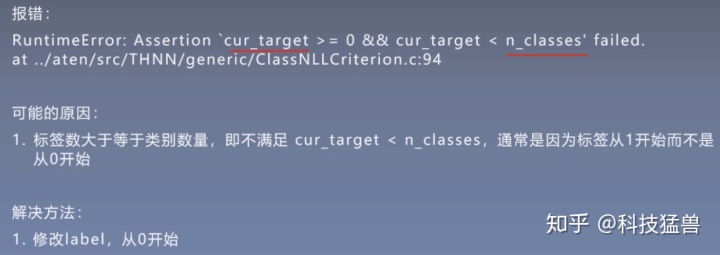
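Here is a small sketch of how this message can arise and how to get at the layer anyway. `nn.DataParallel` wraps the original model and keeps it in its `module` attribute, so the model's own attributes have to be reached through `.module`. `FooNet` is a hypothetical model written only for this illustration.

```python
import torch.nn as nn


class FooNet(nn.Module):
    """Hypothetical model with a submodule named `linear`."""

    def __init__(self):
        super().__init__()
        self.linear = nn.Linear(3, 3)

    def forward(self, x):
        return self.linear(x)


net = nn.DataParallel(FooNet())

# Accessing the layer directly on the wrapper fails.
try:
    net.linear
    direct_access_error = None
except AttributeError as err:
    direct_access_error = str(err)

# The wrapped model lives in `net.module`.
inner_linear = net.module.linear
```

The same wrapping is why the keys of `net.state_dict()` carry a `module.` prefix, which matters when loading the weights into an unwrapped model.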

Error 6:

Error 7:

Error 8:

`` RuntimeError: Assertion `cur_target >= 0 && cur_target < n_classes' failed. ``
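The assertion says that a class label must lie in the range `[0, n_classes)`. A common way to break it is to have labels that start at 1 instead of 0. Below is a minimal sketch of checking the label range before computing the loss. The label values and the helper `labels_in_range` are made up for illustration.

```python
import torch
import torch.nn as nn

n_classes = 3
logits = torch.randn(4, n_classes)

# Hypothetical labels that start at 1, so the value 3 is out of range for 3 classes.
labels_one_based = torch.tensor([1, 2, 3, 1])


def labels_in_range(labels, n_classes):
    """True if every label satisfies 0 <= label < n_classes."""
    return bool(((labels >= 0) & (labels < n_classes)).all())


one_based_ok = labels_in_range(labels_one_based, n_classes)

# Shift the labels so that they start at 0, then compute the loss.
labels = labels_one_based - 1
zero_based_ok = labels_in_range(labels, n_classes)
loss = nn.CrossEntropyLoss()(logits, labels)
```

A check like this is cheap to run once over the dataset. It is also worth confirming that the last layer's output size really equals the number of classes.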

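Error 9:

```python
# RuntimeError: expected device cuda:0 and dtype Long but got device cpu and dtype Long
import torch

# Assumed definition: the original snippet uses `device` without defining it.
device = torch.device("cuda" if torch.cuda.is_available() else "cpu")

flag = 0
# flag = 1
if flag:
    x = torch.tensor([1])
    w = torch.tensor([2]).to(device)
    # y = w * x   # fails on a GPU machine: w is on the GPU, x is still on the CPU

    x = x.to(device)
    y = w * x
```

`x` has to be on the GPU as well for this to run.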

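`RuntimeError: Expected object of backend CPU but got backend CUDA for argument #2 'weight'`

If it is not clear what the code above is getting at: we first move resnet18 to the GPU, but we do not move the data along with it.

```python
inputs.to(device)
labels.to(device)
```

These two lines do not move the data to the GPU. The data are Tensors, and `Tensor.to` is not an in-place operation: it returns a new tensor and leaves the original where it was.

So use the following two lines instead:

```python
inputs = inputs.to(device)
labels = labels.to(device)
```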
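The difference between a tensor's `.to()` and a module's `.to()` can be checked without a GPU. The sketch below uses a dtype conversion as a stand-in for a device move, only so that it runs on any machine. The same rule applies to devices: a tensor's `.to()` returns a new tensor, while a module's `.to()` converts the module in place.

```python
import torch
import torch.nn as nn

x = torch.ones(2)                    # float32 by default
x.to(torch.float64)                  # result is discarded, x itself is unchanged
dtype_without_assignment = x.dtype

x = x.to(torch.float64)              # keep the returned tensor
dtype_with_assignment = x.dtype

net = nn.Linear(2, 1)
net.to(torch.float64)                # modules are converted in place
weight_dtype = net.weight.dtype
```

This is why `net.to(device)` works without an assignment, while `inputs.to(device)` silently does nothing useful.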

Error 10:

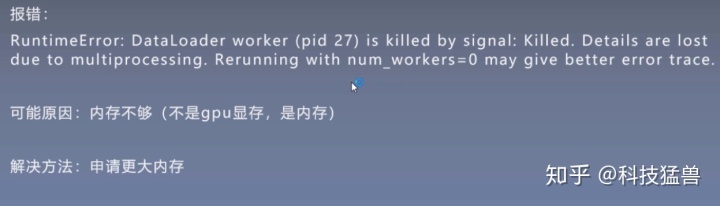

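Note that this error message does not tell you at a glance what actually went wrong. It does suggest a workaround: set `num_workers=0`, that is, load the data in the main process without any worker processes (`DataLoader` workers are processes, not threads). With `num_workers=0`, though, training can be very slow. The real cause of this problem is that host memory (RAM) is insufficient, not GPU memory.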
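For reference, here is a minimal sketch of where `num_workers` is set. The dataset is a hypothetical stand-in built from zeros. A middle ground between 0 and a large value is to lower the number of workers until the loader fits in memory.

```python
import torch
from torch.utils.data import DataLoader, TensorDataset

# Hypothetical stand-in dataset: 8 samples with 3 features each.
dataset = TensorDataset(torch.zeros(8, 3), torch.zeros(8, dtype=torch.long))

# num_workers=0 loads every batch in the main process: slowest, but needs the least memory.
loader = DataLoader(dataset, batch_size=4, num_workers=0)
batch_shapes = [tuple(inputs.shape) for inputs, _ in loader]
```

Each worker process keeps its own batches in flight, so memory use tends to grow with `num_workers` and with `batch_size`.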

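Error 11:

This error is caused by insufficient GPU memory. Maybe your labmates have taken up the whole card. The fix is to wait for their jobs to finish, or to quietly kill their processes, hehe~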
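Before blaming anyone, it may help to check how much GPU memory is actually free. Here is a small sketch using `torch.cuda.mem_get_info`, which is available in reasonably recent PyTorch releases. The helper name `free_gpu_memory_gib` is made up for this example, and it returns `None` on a machine without CUDA.

```python
import torch


def free_gpu_memory_gib():
    """Free memory on the current GPU in GiB, or None if CUDA is not available."""
    if not torch.cuda.is_available():
        return None
    free_bytes, _total_bytes = torch.cuda.mem_get_info()
    return free_bytes / 1024 ** 3


free_gib = free_gpu_memory_gib()
```

The number covers the whole device, including memory held by other people's processes. `nvidia-smi` on the command line shows which processes those are.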
