1. Extend the RichSinkFunction class

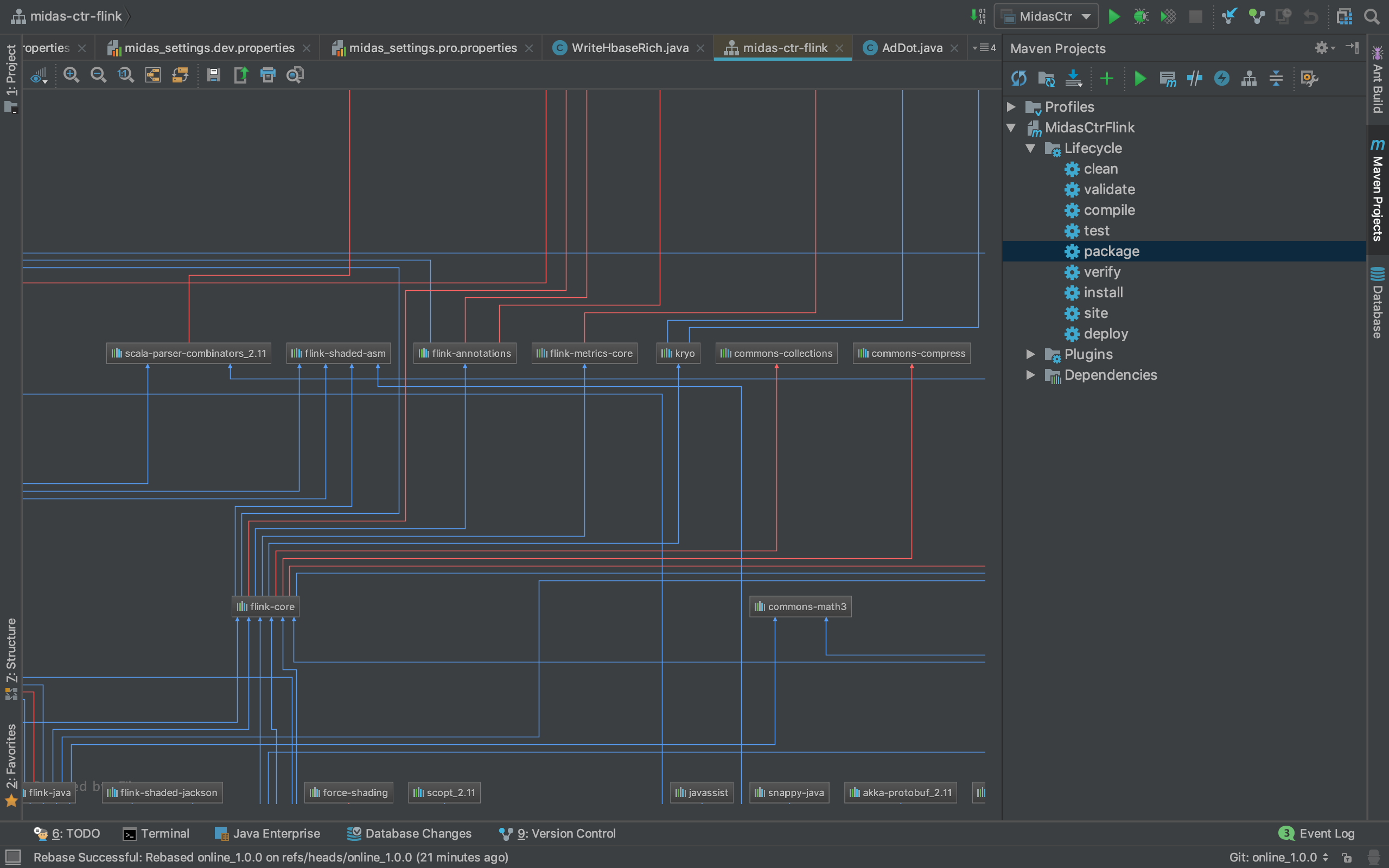

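Maven configuration:

    <dependency>
        <groupId>org.apache.flink</groupId>
        <artifactId>flink-hbase_2.12</artifactId>
        <version>1.7.2</version>
    </dependency>
    <dependency>
        <groupId>org.apache.hadoop</groupId>
        <artifactId>hadoop-client</artifactId>
        <version>2.7.3</version>
        <exclusions>
            <exclusion>
                <groupId>xml-apis</groupId>
                <artifactId>xml-apis</artifactId>
            </exclusion>
        </exclusions>
    </dependency>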

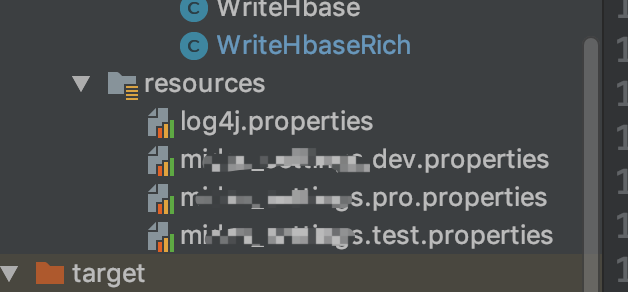

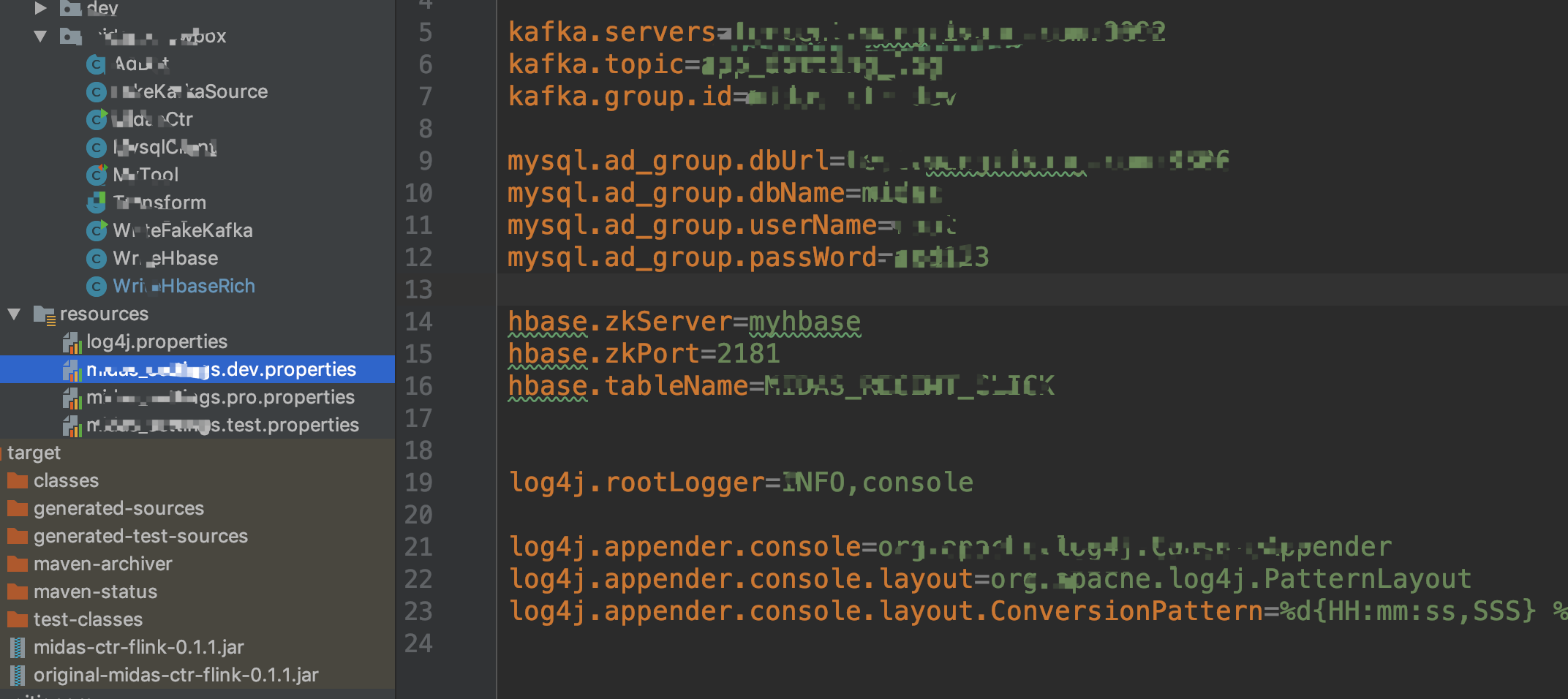

Configuration file:

Code that loads the configuration into Flink:

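    public static void main(String[] args) throws Exception {
        /* Env and Config */
        // configEnv is a static String field of the job class, declared outside this snippet.
        if (args.length > 0) {
            configEnv = args[0];
        }
        StreamExecutionEnvironment env = StreamExecutionEnvironment.getExecutionEnvironment();
        // Pick the properties file that matches the environment, e.g. xxx.<env>.properties.
        String confName = String.format("xxx.%s.properties", configEnv);
        InputStream in = MidasCtr.class.getClassLoader().getResourceAsStream(confName);
        ParameterTool parameterTool = ParameterTool.fromPropertiesFile(in);
        // Register the parameters globally so that rich functions can read them in open().
        env.getConfig().setGlobalJobParameters(parameterTool);
    }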
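The configuration file itself is not shown in this post, so the following is only a minimal sketch, using the JDK alone, of how an environment-specific properties file can be named and validated. The three `hbase.*` keys are the ones the sink reads; the class `EnvConfigSketch`, its helper methods, and all sample values are hypothetical.

```java
import java.io.IOException;
import java.io.StringReader;
import java.io.UncheckedIOException;
import java.util.Properties;

// Hypothetical helper, not part of Flink: mirrors the "xxx.<env>.properties" naming
// and the fail-fast lookup of ParameterTool.getRequired using only the JDK.
class EnvConfigSketch {

    // Builds the resource name for an environment such as "dev" or "prod".
    static String confName(String configEnv) {
        return String.format("xxx.%s.properties", configEnv);
    }

    // Parses properties text; a real job would read the classpath resource instead.
    static Properties parse(String text) {
        Properties props = new Properties();
        try {
            props.load(new StringReader(text));
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
        return props;
    }

    // Returns the value for the key, or throws if the key is missing.
    static String getRequired(Properties props, String key) {
        String value = props.getProperty(key);
        if (value == null) {
            throw new IllegalArgumentException("No data for required key '" + key + "'");
        }
        return value;
    }

    public static void main(String[] args) {
        // Sample values are placeholders, not the author's real settings.
        String sample = String.join("\n",
                "hbase.zkServer=zk1.example.com,zk2.example.com",
                "hbase.zkPort=2181",
                "hbase.tableName=ad_click");
        Properties props = parse(sample);
        System.out.println(confName("dev"));
        System.out.println(getRequired(props, "hbase.zkServer"));
        System.out.println(getRequired(props, "hbase.zkPort"));
        System.out.println(getRequired(props, "hbase.tableName"));
    }
}
```

Failing fast on a missing key at startup is usually easier to diagnose than a null ZooKeeper address surfacing later inside the HBase client.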

Code:

    package midas.knowbox;

    import org.apache.flink.api.java.utils.ParameterTool;
    import org.apache.flink.configuration.Configuration;
    import org.apache.flink.streaming.api.functions.sink.RichSinkFunction;
    import org.apache.hadoop.hbase.HBaseConfiguration;
    import org.apache.hadoop.hbase.HColumnDescriptor;
    import org.apache.hadoop.hbase.HTableDescriptor;
    import org.apache.hadoop.hbase.TableName;
    import org.apache.hadoop.hbase.client.*;
    import org.apache.hadoop.hbase.util.Bytes;

    public class WriteHbaseRich extends RichSinkFunction<AdDot> {
        private Connection conn = null;
        private Table table = null;
        private static String zkServer;
        private static String zkPort;
        private static TableName tableName;
        private static final String click = "click";
        private BufferedMutatorParams params;
        private BufferedMutator mutator;

        @Override
        public void open(Configuration parameters) throws Exception {
            // Read the job-wide parameters registered in main().
            ParameterTool para = (ParameterTool)
                    getRuntimeContext().getExecutionConfig().getGlobalJobParameters();
            zkServer = para.getRequired("hbase.zkServer");
            zkPort = para.getRequired("hbase.zkPort");
            String tName = para.getRequired("hbase.tableName");
            tableName = TableName.valueOf(tName);

            org.apache.hadoop.conf.Configuration config = HBaseConfiguration.create();
            config.set("hbase.zookeeper.quorum", zkServer);
            config.set("hbase.zookeeper.property.clientPort", zkPort);
            conn = ConnectionFactory.createConnection(config);

            // Create the table with the "click" column family if it does not exist yet.
            try (Admin admin = conn.getAdmin()) {
                if (!admin.tableExists(tableName)) {
                    HTableDescriptor tableDes = new HTableDescriptor(tableName);
                    tableDes.addFamily(new HColumnDescriptor(click).setMaxVersions(3));
                    System.out.println("create table");
                    admin.createTable(tableDes);
                }
            }

            // Connect to the table
            table = conn.getTable(tableName);
            // Set up the write buffer
            params = new BufferedMutatorParams(tableName);
            params.writeBufferSize(1024);
            mutator = conn.getBufferedMutator(params);
        }

        @Override
        public void invoke(AdDot record, Context context) throws Exception {
            // The row key is the user ID; the whole record is stored as JSON in click:recent_click.
            Put put = new Put(Bytes.toBytes(String.valueOf(record.userID)));
            System.out.println("hbase write");
            System.out.println(record.recent10Data);
            put.addColumn(Bytes.toBytes(click), Bytes.toBytes("recent_click"),
                    Bytes.toBytes(String.valueOf(record.toJson())));
            mutator.mutate(put);
            System.out.println("hbase write");
        }

        @Override
        public void close() throws Exception {
            // Flush buffered puts before releasing the resources.
            if (mutator != null) {
                mutator.flush();
                mutator.close();
            }
            if (table != null) {
                table.close();
            }
            if (conn != null) {
                conn.close();
            }
        }
    }

Usage:

    dataStream.addSink(new WriteHbaseRich());
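The sink sets `params.writeBufferSize(1024)` and calls `mutator.flush()` in `close()`. To illustrate why that final flush matters, here is a minimal sketch of size-triggered buffering. `SizeBoundedBuffer` is a hypothetical stand-in written for this example, not the HBase `BufferedMutator`, and it counts raw payload bytes, whereas HBase estimates the size of each mutation differently.

```java
import java.util.ArrayList;
import java.util.List;

// Hypothetical stand-in for a client-side write buffer. It only illustrates
// size-triggered flushing and is NOT the HBase BufferedMutator implementation.
class SizeBoundedBuffer {
    private final long maxBytes;
    private final List<byte[]> pending = new ArrayList<>();
    private long pendingBytes = 0;
    private int flushCount = 0;

    SizeBoundedBuffer(long maxBytes) {
        this.maxBytes = maxBytes;
    }

    // Queues one payload and flushes once the queued bytes exceed the limit.
    void mutate(byte[] payload) {
        pending.add(payload);
        pendingBytes += payload.length;
        if (pendingBytes > maxBytes) {
            flush();
        }
    }

    // "Sends" everything queued so far; here it just clears the queue and counts the flush.
    void flush() {
        if (pending.isEmpty()) {
            return;
        }
        pending.clear();
        pendingBytes = 0;
        flushCount++;
    }

    int pendingCount() {
        return pending.size();
    }

    int flushCount() {
        return flushCount;
    }

    public static void main(String[] args) {
        SizeBoundedBuffer buffer = new SizeBoundedBuffer(1024);
        for (int i = 0; i < 5; i++) {
            buffer.mutate(new byte[300]);
        }
        // Records that arrived after the last automatic flush are still only in memory.
        System.out.println("pending before close: " + buffer.pendingCount());
        buffer.flush();
        System.out.println("pending after flush: " + buffer.pendingCount());
    }
}
```

Anything still queued when the job stops would be lost without the explicit flush. A larger buffer generally means fewer round trips, but also more unflushed data at risk if a task fails.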

2. Implement the OutputFormat interface (I have not figured out how to use Flink's configuration file with this approach)

    package midas.knowbox;

    import org.apache.flink.api.common.io.OutputFormat;
    import org.apache.flink.configuration.Configuration;
    import org.apache.hadoop.hbase.HBaseConfiguration;
    import org.apache.hadoop.hbase.HColumnDescriptor;
    import org.apache.hadoop.hbase.HTableDescriptor;
    import org.apache.hadoop.hbase.TableName;
    import org.apache.hadoop.hbase.client.*;
    import org.apache.hadoop.hbase.util.Bytes;

    import java.io.IOException;

    public class WriteHbase implements OutputFormat<AdDot> {
        private Connection conn = null;
        private Table table = null;
        // Connection settings are hard-coded here; fill in the ZooKeeper quorum before use.
        private static String zkServer = "";
        private static String port = "2181";
        private static TableName tableName = TableName.valueOf("test");
        private static final String userCf = "user";
        private static final String adCf = "ad";

        @Override
        public void configure(Configuration parameters) {
        }

        @Override
        public void open(int taskNumber, int numTasks) throws IOException {
            org.apache.hadoop.conf.Configuration config = HBaseConfiguration.create();
            config.set("hbase.zookeeper.quorum", zkServer);
            config.set("hbase.zookeeper.property.clientPort", port);
            conn = ConnectionFactory.createConnection(config);

            try (Admin admin = conn.getAdmin()) {
                if (!admin.tableExists(tableName)) {
                    // Table descriptor
                    HTableDescriptor tableDes = new HTableDescriptor(tableName);
                    // Add the column families
                    tableDes.addFamily(new HColumnDescriptor(userCf));
                    tableDes.addFamily(new HColumnDescriptor(adCf));
                    // Create the table
                    admin.createTable(tableDes);
                }
            }
            table = conn.getTable(tableName);
        }

        @Override
        public void writeRecord(AdDot record) throws IOException {
            // Row key: userID_adID_actionTime
            Put put = new Put(Bytes.toBytes(record.userID + "_" + record.adID + "_" + record.actionTime));
            // Arguments: column family, column qualifier, value
            put.addColumn(Bytes.toBytes(userCf), Bytes.toBytes("uerid"), Bytes.toBytes(record.userID));
            put.addColumn(Bytes.toBytes(adCf), Bytes.toBytes("ad_id"), Bytes.toBytes(record.adID));
            table.put(put);
        }

        @Override
        public void close() throws IOException {
            if (table != null) {
                table.close();
            }
            if (conn != null) {
                conn.close();
            }
        }
    }
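One possible way around the configuration problem mentioned in this section's heading, offered as an assumption rather than something the original code does: Flink serializes an `OutputFormat` instance and ships it to the tasks, so settings can be passed through a constructor and kept in instance fields instead of static ones. The sketch below uses only JDK serialization to show that such fields survive the round trip. `HBaseSettings` and its fields are hypothetical names.

```java
import java.io.ByteArrayInputStream;
import java.io.ByteArrayOutputStream;
import java.io.IOException;
import java.io.ObjectInputStream;
import java.io.ObjectOutputStream;
import java.io.Serializable;

// Hypothetical value object holding the HBase connection settings.
// Instance fields are serialized with the object; static fields would not be.
class HBaseSettings implements Serializable {
    private static final long serialVersionUID = 1L;

    final String zkServer;
    final String zkPort;
    final String tableName;

    HBaseSettings(String zkServer, String zkPort, String tableName) {
        this.zkServer = zkServer;
        this.zkPort = zkPort;
        this.tableName = tableName;
    }

    // Serializes and deserializes the settings, imitating shipment to a remote task.
    static HBaseSettings roundTrip(HBaseSettings in) {
        try {
            ByteArrayOutputStream bytes = new ByteArrayOutputStream();
            try (ObjectOutputStream out = new ObjectOutputStream(bytes)) {
                out.writeObject(in);
            }
            try (ObjectInputStream is =
                         new ObjectInputStream(new ByteArrayInputStream(bytes.toByteArray()))) {
                return (HBaseSettings) is.readObject();
            }
        } catch (IOException | ClassNotFoundException e) {
            throw new IllegalStateException("Serialization round trip failed", e);
        }
    }

    public static void main(String[] args) {
        // Placeholder values; a real job would read them from its properties file.
        HBaseSettings original = new HBaseSettings("zk1.example.com", "2181", "test");
        HBaseSettings copy = roundTrip(original);
        System.out.println(copy.zkServer + ":" + copy.zkPort + " -> " + copy.tableName);
    }
}
```

With this pattern, `main` would read the values from the `ParameterTool`, build the settings object, and hand it to a constructor such as `new WriteHbase(settings)` (a hypothetical constructor that the class above does not define). The format can then be attached with `dataStream.writeUsingOutputFormat(...)`.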

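3. Problems encountered

Writing to HBase failed with a package reference error. Excluding xml-apis from the hadoop-client dependency fixed it:

    <dependency>
        <groupId>org.apache.hadoop</groupId>
        <artifactId>hadoop-client</artifactId>
        <version>2.7.3</version>
        <exclusions>
            <exclusion>
                <groupId>xml-apis</groupId>
                <artifactId>xml-apis</artifactId>
            </exclusion>
        </exclusions>
    </dependency>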
