1. Background

Version: Flink 1.10 (the problem does not occur on Flink 1.9)

(1) A custom TableSource is registered as the table testsource, with the following schema:

    |-- name: STRING
    |-- age: INT
    |-- score: INT
    |-- timedate: DATE
    |-- timehour: TIME(0)
    |-- numbertest: DOUBLE
    |-- dt: TIMESTAMP(3)

(2) The sink is Elasticsearch, registered as the table establesink.

The mapping of the sink's index in ES:

curl -XGET "http://127.0.0.1:9200/test/students/_mapping?pretty"

    {
      "test" : {
        "mappings" : {
          "students" : {
            "properties" : {
              "data" : { "type" : "date" },
              "sage" : { "type" : "long" },
              "sex" : {
                "type" : "text",
                "fields" : {
                  "keyword" : { "type" : "keyword", "ignore_above" : 256 }
                }
              },
              "sname" : {
                "type" : "text",
                "fields" : {
                  "keyword" : { "type" : "keyword", "ignore_above" : 256 }
                }
              }
            }
          }
        }
      }
    }

(3) The SQL statement being executed:

    insert into establesink
    select name as sname,
           cast(score as decimal(38,18)) as sage,
           dt as data
    from testsource

The Flink Elasticsearch connector is configured with the following JSON schema:

    {
      type: 'object',
      properties: {
        sname: { type: 'string' },
        sage: { type: 'integer' },
        // this corresponds to the TIMESTAMP type in Flink SQL
        data: { type: 'string', format: 'date-time' }
      }
    }

2. Exception

The data field triggers a type-cast error:

    Caused by: java.lang.ClassCastException: java.time.LocalDateTime cannot be cast to java.sql.Timestamp
        at org.apache.flink.formats.json.JsonRowSerializationSchema.convertTimestamp(JsonRowSerializationSchema.java:292)
        at org.apache.flink.formats.json.JsonRowSerializationSchema.lambda$wrapIntoNullableConverter$1fa09b5b$1(JsonRowSerializationSchema.java:189)
        at org.apache.flink.formats.json.JsonRowSerializationSchema.lambda$assembleRowConverter$dd344700$1(JsonRowSerializationSchema.java:346)
        at org.apache.flink.formats.json.JsonRowSerializationSchema.lambda$wrapIntoNullableConverter$1fa09b5b$1(JsonRowSerializationSchema.java:189)
        at org.apache.flink.formats.json.JsonRowSerializationSchema.serialize(JsonRowSerializationSchema.java:138)
        ... 34 common frames omitted

3. Troubleshooting

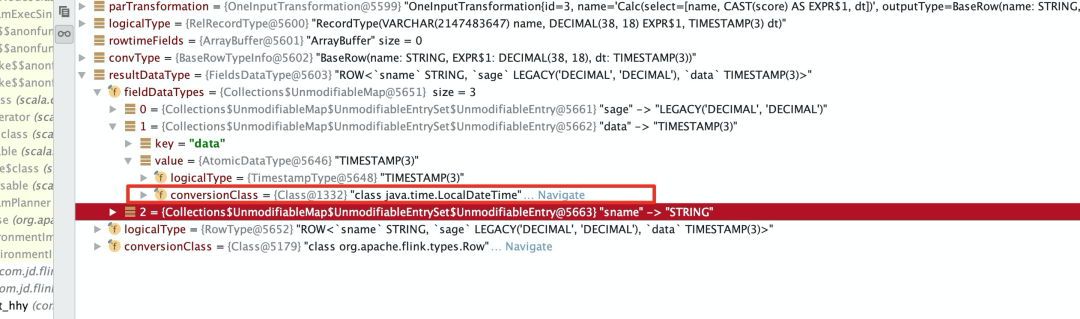

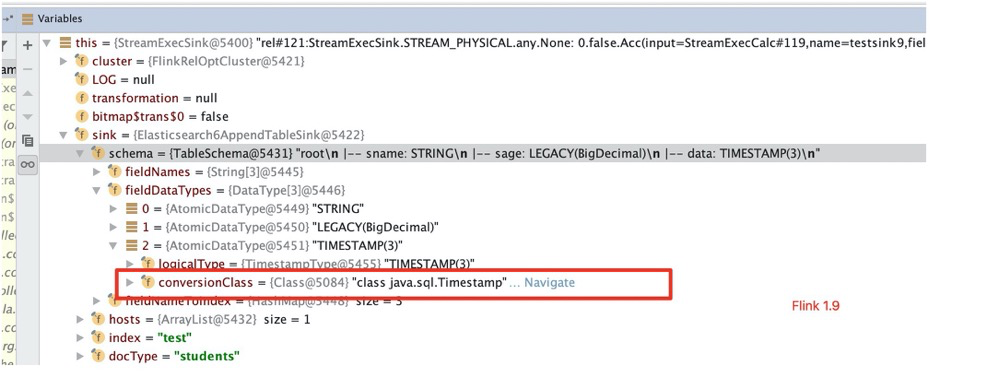

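The screenshots above were taken while debugging the StreamExecSink class. The first one is from Flink 1.10; the second is from Flink 1.9, for a simple comparison.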

In the TableSchema held by the TableSink, the data field has the type java.time.LocalDateTime, but for the JSON format we configured, the JSON serializer expects the data field to be a java.sql.Timestamp.
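The mismatch can be reproduced without Flink. The following is a minimal sketch, not Flink code: the class CastRepro and the method tryCast are hypothetical names that only mimic the unconditional cast to java.sql.Timestamp performed by the serializer.

```java
import java.sql.Timestamp;
import java.time.LocalDateTime;

// Hypothetical stand-alone reproduction; none of these names exist in Flink.
final class CastRepro {

    // Mimics an unconditional (Timestamp) cast on a field value of unknown class.
    static String tryCast(Object value) {
        try {
            Timestamp ts = (Timestamp) value;
            return "ok: " + ts.toLocalDateTime();
        } catch (ClassCastException e) {
            return "ClassCastException";
        }
    }

    public static void main(String[] args) {
        LocalDateTime ldt = LocalDateTime.of(2020, 3, 1, 12, 0, 0);
        // A java.sql.Timestamp value passes the cast.
        System.out.println(tryCast(Timestamp.valueOf(ldt)));
        // A java.time.LocalDateTime value fails it, as in the stack trace.
        System.out.println(tryCast(ldt));
    }
}
```

The two classes are unrelated in the Java type hierarchy, so the cast can only succeed when the row really carries a java.sql.Timestamp.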

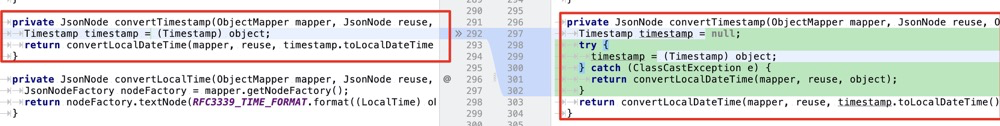

The code in ElasticsearchUpsertTableSinkBase that processes each record:

    private void processUpsert(Row row, RequestIndexer indexer) {
        // the exception is thrown here
        final byte[] document = serializationSchema.serialize(row);
        if (keyFieldIndices.length == 0) {
            .....
    }

When the JSON serialization class serializes a Row, it casts the object to Timestamp. If the object is a LocalDateTime, the cast is bound to fail:

    private JsonNode convertTimestamp(ObjectMapper mapper, JsonNode reuse, Object object) {
        Timestamp timestamp = (Timestamp) object;
        return convertLocalDateTime(mapper, reuse, timestamp.toLocalDateTime());
    }

4. A simple workaround

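I only made a small change in the flink-json module, which is a fairly limited workaround. The underlying problem involves the flink-table-common module: the TypeInformation used when the TableSink creates its TableSchema is inconsistent with the TypeInformation used by the JSON serializer.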
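The post does not show the actual patch. As a rough sketch of what such a local change could look like, assuming the fix is simply to accept both classes before converting, the helper below normalizes either value to a LocalDateTime. TimestampCompat and toLocalDateTime are hypothetical names and are not part of Flink.

```java
import java.sql.Timestamp;
import java.time.LocalDateTime;

// Hypothetical helper illustrating the idea; not part of flink-json.
final class TimestampCompat {

    // Accepts both runtime classes that may appear for a TIMESTAMP field.
    static LocalDateTime toLocalDateTime(Object value) {
        if (value instanceof LocalDateTime) {
            // Class seen in the Flink 1.10 debugging session above.
            return (LocalDateTime) value;
        }
        if (value instanceof Timestamp) {
            // Class the JSON serializer originally expected.
            return ((Timestamp) value).toLocalDateTime();
        }
        String actual = (value == null) ? "null" : value.getClass().getName();
        throw new IllegalArgumentException("Unsupported timestamp class: " + actual);
    }

    public static void main(String[] args) {
        LocalDateTime ldt = LocalDateTime.of(2020, 3, 1, 12, 0, 0);
        System.out.println(toLocalDateTime(ldt));
        System.out.println(toLocalDateTime(Timestamp.valueOf(ldt)));
    }
}
```

Under this assumption, convertTimestamp would pass the result of such a check to convertLocalDateTime instead of casting directly. This only hides the symptom inside flink-json; the TypeInformation inconsistency itself remains.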
