注意:单击此处

https://urlify.cn/jMzu6r

下载完整的示例代码,或通过Binder在浏览器中运行此示例

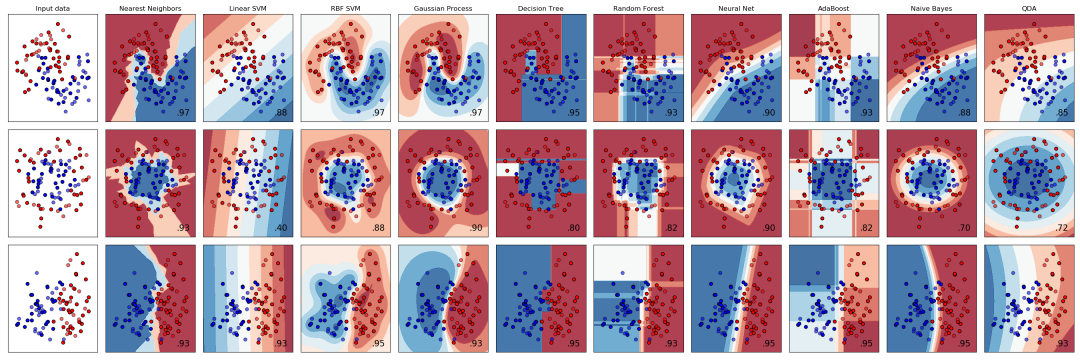

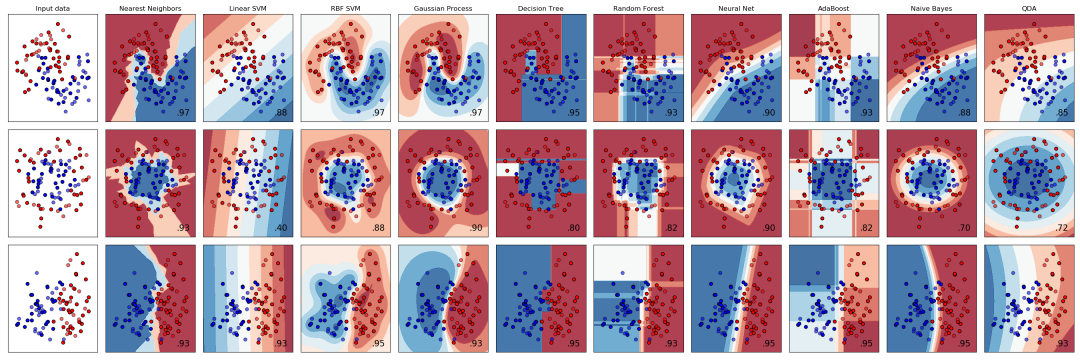

scikit-learn中的几个分类器在合成数据集上的比较。该示例的目的是为来说明不同分类器的决策边界的性质。应该谨慎对待这些示例,因为这些示例给人的直觉不一定会在实际的数据集中出现一样结果。

特别是在高维空间中,可以更轻松地线性分离数据,简单的分类器(如朴素贝叶斯和线性SVM)可能比其他分类器具有更好的普遍性。

这些图以纯色(solid colors)显示训练点,测试点是半透明的。右下方显示测试集上的分类准确度。

sphx_glr_plot_classifier_comparison_001

print(__doc__)# 源代码: Gaël Varoquaux# Andreas Müller# 由Jaques Grobler修改过文档# 许可证: BSD 3 clauseimport numpy as npimport matplotlib.pyplot as pltfrom matplotlib.colors import ListedColormapfrom sklearn.model_selection import train_test_splitfrom sklearn.preprocessing import StandardScalerfrom sklearn.datasets import make_moons, make_circles, make_classificationfrom sklearn.neural_network import MLPClassifierfrom sklearn.neighbors import KNeighborsClassifierfrom sklearn.svm import SVCfrom sklearn.gaussian_process import GaussianProcessClassifierfrom sklearn.gaussian_process.kernels import RBFfrom sklearn.tree import DecisionTreeClassifierfrom sklearn.ensemble import RandomForestClassifier, AdaBoostClassifierfrom sklearn.naive_bayes import GaussianNBfrom sklearn.discriminant_analysis import QuadraticDiscriminantAnalysish = .02 # mesh的步长names = ["Nearest Neighbors", "Linear SVM", "RBF SVM", "Gaussian Process", "Decision Tree", "Random Forest", "Neural Net", "AdaBoost", "Naive Bayes", "QDA"]classifiers = [ KNeighborsClassifier(3), SVC(kernel="linear", C=0.025), SVC(gamma=2, C=1), GaussianProcessClassifier(1.0 * RBF(1.0)), DecisionTreeClassifier(max_depth=5), RandomForestClassifier(max_depth=5, n_estimators=10, max_features=1), MLPClassifier(alpha=1, max_iter=1000), AdaBoostClassifier(), GaussianNB(), QuadraticDiscriminantAnalysis()]X, y = make_classification(n_features=2, n_redundant=0, n_informative=2, random_state=1, n_clusters_per_class=1)rng = np.random.RandomState(2)X += 2 * rng.uniform(size=X.shape)linearly_separable = (X, y)datasets = [make_moons(noise=0.3, random_state=0), make_circles(noise=0.2, factor=0.5, random_state=1), linearly_separable ]figure = plt.figure(figsize=(27, 9))i = 1# 遍历数据集for ds_cnt, ds in enumerate(datasets): # 处理数据集, 把它划分成训练集和测试集 X, y = ds X = StandardScaler().fit_transform(X) X_train, X_test, y_train, y_test = \ train_test_split(X, y, test_size=.4, random_state=42) x_min, x_max = X[:, 0].min() - .5, X[:, 0].max() + .5 y_min, y_max = X[:, 1].min() - .5, X[:, 1].max() + .5 xx, yy = np.meshgrid(np.arange(x_min, x_max, h), np.arange(y_min, y_max, h)) # 先绘制数据集 cm = plt.cm.RdBu cm_bright = ListedColormap(['#FF0000', '#0000FF']) ax = plt.subplot(len(datasets), len(classifiers) + 1, i) if ds_cnt == 0: ax.set_title("Input data") # 绘制训练集的点 ax.scatter(X_train[:, 0], X_train[:, 1], c=y_train, cmap=cm_bright, edgecolors='k') # 绘制测试集的点 ax.scatter(X_test[:, 0], X_test[:, 1], c=y_test, cmap=cm_bright, alpha=0.6, edgecolors='k') ax.set_xlim(xx.min(), xx.max()) ax.set_ylim(yy.min(), yy.max()) ax.set_xticks(()) ax.set_yticks(()) i += 1 # 遍历分类器 for name, clf in zip(names, classifiers): ax = plt.subplot(len(datasets), len(classifiers) + 1, i) clf.fit(X_train, y_train) score = clf.score(X_test, y_test) # 绘制决策边界. 为此,我们把每种颜色分配给 # mesh [x_min, x_max]x[y_min, y_max]中的点 if hasattr(clf, "decision_function"): Z = clf.decision_function(np.c_[xx.ravel(), yy.ravel()]) else: Z = clf.predict_proba(np.c_[xx.ravel(), yy.ravel()])[:, 1] # 把结果放入颜色图(color plot) Z = Z.reshape(xx.shape) ax.contourf(xx, yy, Z, cmap=cm, alpha=.8) # 绘制训练集的点 ax.scatter(X_train[:, 0], X_train[:, 1], c=y_train, cmap=cm_bright, edgecolors='k') # 绘制测试集的点 ax.scatter(X_test[:, 0], X_test[:, 1], c=y_test, cmap=cm_bright, edgecolors='k', alpha=0.6) ax.set_xlim(xx.min(), xx.max()) ax.set_ylim(yy.min(), yy.max()) ax.set_xticks(()) ax.set_yticks(()) if ds_cnt == 0: ax.set_title(name) ax.text(xx.max() - .3, yy.min() + .3, ('%.2f' % score).lstrip('0'), size=15, horizontalalignment='right') i += 1plt.tight_layout()plt.show()

下载Python源代码: plot_classifier_comparison.py

下载Jupyter notebook源代码: plot_classifier_comparison.ipynb

由Sphinx-Gallery生成的画廊

文壹由“伴编辑器”提供技术支持

☆☆☆为方便大家查阅,小编已将scikit-learn学习路线专栏 文章统一整理到公众号底部菜单栏,同步更新中,关注公众号,点击左下方“系列文章”,如图:

欢迎大家和我一起沿着scikit-learn文档这条路线,一起巩固机器学习算法基础。(添加微信:mthler,备注:sklearn学习,一起进【sklearn机器学习进步群】开启打怪升级的学习之旅。)

9240

9240

被折叠的 条评论

为什么被折叠?

被折叠的 条评论

为什么被折叠?