8-6 Reading CIFAR-10 manually

Program:

import numpy as np

filename = 'D:/temp/cifar10_data/cifar-10-batches-bin/test_batch.bin'

bytestream = open(filename, "rb")

buf = bytestream.read(10000 * (1 + 32 * 32 * 3))

bytestream.close()

data = np.frombuffer(buf, dtype=np.uint8)

data = data.reshape(10000, 1 + 32 * 32 * 3)

labels_images = np.hsplit(data, [1])

labels = labels_images[0].reshape(10000)

images = labels_images[1].reshape(10000, 32, 32, 3)

img = np.reshape(images[0], (3, 32, 32))  # take the first image; its bytes are stored channel-first (3 x 32 x 32)

img = img.transpose(1, 2, 0)

import pylab

print(labels[0])

pylab.imshow(img)

pylab.show()
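The record layout that the program above relies on can be checked without the dataset file. The following is a minimal sketch, assuming the layout of the CIFAR-10 binary version (1 label byte, then the 1024-byte red, green and blue planes in row-major order). `make_record` and `pixel_at` are hypothetical helper names introduced only for illustration.

```python
RECORD_BYTES = 1 + 32 * 32 * 3  # 1 label byte + 3072 pixel bytes


def make_record(label):
    """Build one synthetic record: red ramps with position, green is 0, blue is 255."""
    red = bytes(i % 256 for i in range(1024))
    green = bytes(1024)
    blue = bytes([255]) * 1024
    return bytes([label]) + red + green + blue


def pixel_at(record, row, col):
    """Return (label, (r, g, b)) for the pixel at (row, col) of one record."""
    label = record[0]
    pixels = record[1:]
    offset = row * 32 + col  # position inside one 32 x 32 colour plane
    # Planes are stored channel-first, which is why the program transposes with (1, 2, 0).
    return label, tuple(pixels[plane * 1024 + offset] for plane in range(3))


record = make_record(label=3)
print(len(record) == RECORD_BYTES)
print(pixel_at(record, 5, 7))
```

Reading one pixel this way touches three bytes that are 1024 positions apart, which is the same regrouping that `reshape(3, 32, 32)` followed by `transpose(1, 2, 0)` performs for the whole image.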

Result:

8-7 queue

Program:

import tensorflow as tf

# Create a queue with capacity 100

queue = tf.FIFOQueue(100, "float")

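c = tf.Variable(0.0)  # counter

# Op that adds 1 to the counter

op = tf.assign_add(c, tf.constant(1.0))

# Op that enqueues the current counter value

enqueue_op = queue.enqueue(c)

# Create a QueueRunner that uses these two ops to fill the queue. create_threads() starts one thread per op in enqueue_ops, so the increment and the enqueue run concurrently: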

qr = tf.train.QueueRunner(queue, enqueue_ops=[op, enqueue_op])

with tf.Session() as sess:

    sess.run(tf.global_variables_initializer())

    coord = tf.train.Coordinator()

    # Start the enqueue threads; the Coordinator is passed to each thread

    enqueue_threads = qr.create_threads(sess, coord=coord, start=True)

    # Main thread

    for i in range(0, 10):

        print("-------------------------")

        print(sess.run(queue.dequeue()))

    coord.request_stop()  # ask the other threads to stop; this call returns immediately

    # join blocks until the given threads have finished

    # coord.join(enqueue_threads)
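The producer/consumer pattern in the program above can be sketched with the standard library alone. This is only an analogy under stated assumptions: `queue.Queue` stands in for `tf.FIFOQueue`, a `threading.Event` stands in for the Coordinator's stop request, and `run_counter_queue` is a hypothetical name. Unlike the TensorFlow program, the increment and the enqueue happen in the same thread here, so the dequeued values are consecutive.

```python
import queue
import threading


def run_counter_queue(n_items=10, capacity=100):
    q = queue.Queue(maxsize=capacity)
    stop = threading.Event()  # plays the role of the Coordinator's stop flag

    def producer():
        counter = 0.0
        while not stop.is_set():
            counter += 1.0
            # Retry with a timeout so a full queue cannot block shutdown forever.
            while not stop.is_set():
                try:
                    q.put(counter, timeout=0.05)
                    break
                except queue.Full:
                    continue

    worker = threading.Thread(target=producer)
    worker.start()
    items = [q.get() for _ in range(n_items)]  # main thread dequeues
    stop.set()     # comparable to coord.request_stop()
    worker.join()  # comparable to coord.join(enqueue_threads)
    return items


values = run_counter_queue()
print(values)
```

Setting the stop flag only requests shutdown; it is the `join` call that waits for the worker to finish.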

Result:

8-10 MNIST convolution

Program:

import tensorflow as tf

# Import the MNIST dataset

from tensorflow.examples.tutorials.mnist import input_data

mnist = input_data.read_data_sets("F:/shendu/MNIST_data/", one_hot=True)

def weight_variable(shape):

    initial = tf.truncated_normal(shape, stddev=0.1)

    return tf.Variable(initial)

def bias_variable(shape):

    initial = tf.constant(0.1, shape=shape)

    return tf.Variable(initial)

def conv2d(x, W):

    return tf.nn.conv2d(x, W, strides=[1, 1, 1, 1], padding='SAME')

def max_pool_2x2(x):

    return tf.nn.max_pool(x, ksize=[1, 2, 2, 1],

                          strides=[1, 2, 2, 1], padding='SAME')

def avg_pool_7x7(x):

    return tf.nn.avg_pool(x, ksize=[1, 7, 7, 1],

                          strides=[1, 7, 7, 1], padding='SAME')

# tf Graph Input

x = tf.placeholder(tf.float32, [None, 784])  # MNIST data dimension: 28*28=784

y = tf.placeholder(tf.float32, [None, 10])  # digits 0-9 => 10 classes

W_conv1 = weight_variable([5, 5, 1, 32])

b_conv1 = bias_variable([32])

x_image = tf.reshape(x, [-1, 28, 28, 1])

h_conv1 = tf.nn.relu(conv2d(x_image, W_conv1) + b_conv1)

h_pool1 = max_pool_2x2(h_conv1)

W_conv2 = weight_variable([5, 5, 32, 64])

b_conv2 = bias_variable([64])

h_conv2 = tf.nn.relu(conv2d(h_pool1, W_conv2) + b_conv2)

h_pool2 = max_pool_2x2(h_conv2)

#########################################################new

W_conv3 = weight_variable([5, 5, 64, 10])

b_conv3 = bias_variable([10])

h_conv3 = tf.nn.relu(conv2d(h_pool2, W_conv3) + b_conv3)

nt_hpool3 = avg_pool_7x7(h_conv3)  # 10

nt_hpool3_flat = tf.reshape(nt_hpool3, [-1, 10])

y_conv = tf.nn.softmax(nt_hpool3_flat)

cross_entropy = -tf.reduce_sum(y * tf.log(y_conv))

train_step = tf.train.AdamOptimizer(1e-4).minimize(cross_entropy)

correct_prediction = tf.equal(tf.argmax(y_conv, 1), tf.argmax(y, 1))

accuracy = tf.reduce_mean(tf.cast(correct_prediction, "float"))

# Start the session

with tf.Session() as sess:

    sess.run(tf.global_variables_initializer())

    for i in range(200):  # 20000

        batch = mnist.train.next_batch(50)  # 50

        if i % 20 == 0:

            train_accuracy = accuracy.eval(feed_dict={

                x: batch[0], y: batch[1]})

            print("step %d, training accuracy %g" % (i, train_accuracy))

        train_step.run(feed_dict={x: batch[0], y: batch[1]})

    print("test accuracy %g" % accuracy.eval(feed_dict={

        x: mnist.test.images, y: mnist.test.labels}))

Result:

Extracting F:/shendu/MNIST_data/train-images-idx3-ubyte.gz

Extracting F:/shendu/MNIST_data/train-labels-idx1-ubyte.gz

Extracting F:/shendu/MNIST_data/t10k-images-idx3-ubyte.gz

Extracting F:/shendu/MNIST_data/t10k-labels-idx1-ubyte.gz

step 0, training accuracy 0.1

step 20, training accuracy 0.06

step 40, training accuracy 0.06

step 60, training accuracy 0.1

step 80, training accuracy 0.16

step 100, training accuracy 0.2

step 120, training accuracy 0.12

step 140, training accuracy 0.18

step 160, training accuracy 0.26

step 180, training accuracy 0.26

test accuracy 0.3052
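The tensor shapes in the 8-10 network can be traced by hand, which shows why the final reshape to `[-1, 10]` works. The sketch below assumes only the rule that a `padding='SAME'` op produces `ceil(size / stride)` outputs along each spatial axis; `same_out` and `trace_shapes` are hypothetical helper names.

```python
import math


def same_out(size, stride):
    """Spatial output size of a padding='SAME' op: ceil(size / stride)."""
    return math.ceil(size / stride)


def trace_shapes(size=28):
    """Follow (height/width, channels) through the layers of the 8-10 program."""
    layers = [  # (name, stride, output channels)
        ("conv1", 1, 32),
        ("pool1", 2, 32),
        ("conv2", 1, 64),
        ("pool2", 2, 64),
        ("conv3", 1, 10),
        ("avg_pool_7x7", 7, 10),
    ]
    shapes = []
    for name, stride, channels in layers:
        size = same_out(size, stride)
        shapes.append((name, size, channels))
    return shapes


shapes = trace_shapes()
for name, size, channels in shapes:
    print(f"{name}: {size}x{size}x{channels}")
```

The last layer averages each 7x7 map down to a single value, so every image ends up as 10 numbers, one per class.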

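8-12 Deconvolution

Using simulated data, apply convolution and then deconvolution (transposed convolution), and compare how the results change under SAME and VALID padding.

Program: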

import numpy as np

import tensorflow as tf

img = tf.Variable(tf.constant(1.0, shape=[1, 4, 4, 1]))  # simulated data

filter = tf.Variable(tf.constant([1.0, 0, -1, -2], shape=[2, 2, 1, 1]))

conv = tf.nn.conv2d(img, filter, strides=[1, 2, 2, 1], padding='VALID')  # convolve once with VALID and once with SAME padding

cons = tf.nn.conv2d(img, filter, strides=[1, 2, 2, 1], padding='SAME')

print(conv.shape)

print(cons.shape)

contv = tf.nn.conv2d_transpose(conv, filter, [1, 4, 4, 1], strides=[1, 2, 2, 1], padding='VALID')  # deconvolution

conts = tf.nn.conv2d_transpose(cons, filter, [1, 4, 4, 1], strides=[1, 2, 2, 1], padding='SAME')

with tf.Session() as sess:

    sess.run(tf.global_variables_initializer())

    print("conv:\n", sess.run([conv, filter]))

    print("cons:\n", sess.run([cons]))

    print("contv:\n", sess.run([contv]))

    print("conts:\n", sess.run([conts]))

Result:

(1, 2, 2, 1)

(1, 2, 2, 1)

conv:

[array([[[[-2.],

[-2.]],

[[-2.],

[-2.]]]], dtype=float32), array([[[[ 1.]],

[[ 0.]]],

[[[-1.]],

[[-2.]]]], dtype=float32)]

cons:

[array([[[[-2.],

[-2.]],

[[-2.],

[-2.]]]], dtype=float32)]

contv:

[array([[[[-2.],

[ 0.],

[-2.],

[ 0.]],

[[ 2.],

[ 4.],

[ 2.],

[ 4.]],

[[-2.],

[ 0.],

[-2.],

[ 0.]],

[[ 2.],

[ 4.],

[ 2.],

[ 4.]]]], dtype=float32)]

conts:

[array([[[[-2.],

[ 0.],

[-2.],

[ 0.]],

[[ 2.],

[ 4.],

[ 2.],

[ 4.]],

[[-2.],

[ 0.],

[-2.],

[ 0.]],

[[ 2.],

[ 4.],

[ 2.],

[ 4.]]]], dtype=float32)]
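The numbers printed above can be reproduced by hand. The following is a minimal single-channel sketch in plain Python, assuming a square input and VALID padding; `conv2d_valid` and `conv2d_transpose_valid` are hypothetical names, not TensorFlow functions. With a 4x4 input, a 2x2 kernel and stride 2 the windows tile the input exactly and no padding is needed, which is why the SAME and VALID results coincide in this run.

```python
def conv2d_valid(img, kernel, stride):
    """Strided 2-D cross-correlation without padding."""
    k = len(kernel)
    out_size = (len(img) - k) // stride + 1
    out = [[0.0] * out_size for _ in range(out_size)]
    for i in range(out_size):
        for j in range(out_size):
            out[i][j] = sum(img[i * stride + di][j * stride + dj] * kernel[di][dj]
                            for di in range(k) for dj in range(k))
    return out


def conv2d_transpose_valid(small, kernel, stride, out_size):
    """Transposed convolution: each input value stamps a scaled copy of the kernel."""
    k = len(kernel)
    out = [[0.0] * out_size for _ in range(out_size)]
    for i in range(len(small)):
        for j in range(len(small)):
            for di in range(k):
                for dj in range(k):
                    out[i * stride + di][j * stride + dj] += small[i][j] * kernel[di][dj]
    return out


img = [[1.0] * 4 for _ in range(4)]   # same simulated data as the program
kernel = [[1.0, 0.0], [-1.0, -2.0]]   # same filter values
conv = conv2d_valid(img, kernel, 2)
deconv = conv2d_transpose_valid(conv, kernel, 2, 4)
print(conv)
for row in deconv:
    print(row)
```

Each convolution output is 1 + 0 - 1 - 2 = -2, and the transposed convolution writes -2 times the kernel into each 2x2 block. The result has the input's shape but not its values, so the operation restores size rather than inverting the convolution.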

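8-13 Unpooling

Define an array as a simulated image, apply max pooling to it, then unpool the result, and compare the original data with the unpooled data.

Program: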

import tensorflow as tf

import numpy as np

def max_pool_with_argmax(net, stride):  # max pooling that also returns the argmax positions

    _, mask = tf.nn.max_pool_with_argmax(net, ksize=[1, stride, stride, 1], strides=[1, stride, stride, 1],

                                         padding='SAME')

    mask = tf.stop_gradient(mask)

    net = tf.nn.max_pool(net, ksize=[1, stride, stride, 1], strides=[1, stride, stride, 1], padding='SAME')

    return net, mask

def unpool(net, mask, stride):  # inverse of max pooling

    ksize = [1, stride, stride, 1]

    input_shape = net.get_shape().as_list()

    # compute the new shape

    output_shape = (input_shape[0], input_shape[1] * ksize[1], input_shape[2] * ksize[2], input_shape[3])

    # compute indices for batch, height, width and feature maps

    one_like_mask = tf.ones_like(mask)

    batch_range = tf.reshape(tf.range(output_shape[0], dtype=tf.int64), shape=[input_shape[0], 1, 1, 1])

    b = one_like_mask * batch_range

    y = mask // (output_shape[2] * output_shape[3])

    x = mask % (output_shape[2] * output_shape[3]) // output_shape[3]

    feature_range = tf.range(output_shape[3], dtype=tf.int64)

    f = one_like_mask * feature_range

    # transpose indices and reshape update values to one dimension

    updates_size = tf.size(net)

    indices = tf.transpose(tf.reshape(tf.stack([b, y, x, f]), [4, updates_size]))

    values = tf.reshape(net, [updates_size])

    ret = tf.scatter_nd(indices, values, output_shape)

    return ret

img = tf.constant([

[[0.0, 4.0], [0.0, 4.0], [0.0, 4.0], [0.0, 4.0]],

[[1.0, 5.0], [1.0, 5.0], [1.0, 5.0], [1.0, 5.0]],

[[2.0, 6.0], [2.0, 6.0], [2.0, 6.0], [2.0, 6.0]],

[[3.0, 7.0], [3.0, 7.0], [3.0, 7.0], [3.0, 7.0]]

])  # define the array

img = tf.reshape(img, [1, 4, 4, 2])

pooling2 = tf.nn.max_pool(img, [1, 2, 2, 1], [1, 2, 2, 1], padding='SAME')  # pooling

encode, mask = max_pool_with_argmax(img, 2)

img2 = unpool(encode, mask, 2)  # unpooling

print(img.shape)

print(encode.shape)

print(mask.shape)

print(img2.shape)

with tf.Session() as sess:

    print("image:")

    print(sess.run(img))

    result = sess.run(pooling2)

    print("pooling2:\n", result)

    result, mask2 = sess.run([encode, mask])

    print("encode:\n", result, mask2)

    print("-------------------------------")

    result = sess.run(img2)

    print("reslut:\n", result)

Result:

(1, 4, 4, 2)

(1, 2, 2, 2)

(1, 4, 4, 2)

image:

[[[[0. 4.]

[0. 4.]

[0. 4.]

[0. 4.]]

[[1. 5.]

[1. 5.]

[1. 5.]

[1. 5.]]

[[2. 6.]

[2. 6.]

[2. 6.]

[2. 6.]]

[[3. 7.]

[3. 7.]

[3. 7.]

[3. 7.]]]]

pooling2:

[[[[1. 5.]

[1. 5.]]

[[3. 7.]

[3. 7.]]]]

encode:

[[[[1. 5.]

[1. 5.]]

[[3. 7.]

[3. 7.]]]] [[[[ 8 9]

[12 13]]

[[24 25]

[28 29]]]]

-------------------------------

reslut:

[[[[0. 0.]

[0. 0.]

[0. 0.]

[0. 0.]]

[[1. 5.]

[0. 0.]

[1. 5.]

[0. 0.]]

[[0. 0.]

[0. 0.]

[0. 0.]

[0. 0.]]

[[3. 7.]

[0. 0.]

[3. 7.]

[0. 0.]]]]
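The unpooled output above can be checked on a single channel with plain Python. This is a minimal sketch, assuming a square single-channel input and that ties inside a window go to the first maximum in row-major order, which is consistent with the mask values printed above. The function names mirror the program but are ordinary Python stand-ins, and `decode_flat_index` is a hypothetical helper that repeats the index arithmetic used in `unpool`.

```python
def max_pool_with_argmax(img, stride):
    """Pool a square 2-D list; also return the (row, col) of each window's first maximum."""
    out = len(img) // stride
    pooled = [[0.0] * out for _ in range(out)]
    argmax = [[None] * out for _ in range(out)]
    for i in range(out):
        for j in range(out):
            window = [(r, c)
                      for r in range(i * stride, (i + 1) * stride)
                      for c in range(j * stride, (j + 1) * stride)]
            # max() keeps the first of several equal maxima (row-major order).
            r, c = max(window, key=lambda rc: img[rc[0]][rc[1]])
            pooled[i][j] = img[r][c]
            argmax[i][j] = (r, c)
    return pooled, argmax


def unpool(pooled, argmax, size):
    """Scatter each pooled value back to its recorded position; everything else is 0."""
    out = [[0.0] * size for _ in range(size)]
    for prow, arow in zip(pooled, argmax):
        for value, (r, c) in zip(prow, arow):
            out[r][c] = value
    return out


def decode_flat_index(index, width, channels):
    """Split a flattened argmax index into (row, col, channel)."""
    return index // (width * channels), index % (width * channels) // channels, index % channels


channel0 = [[float(row)] * 4 for row in range(4)]  # channel 0 of the simulated image
pooled, argmax = max_pool_with_argmax(channel0, 2)
restored = unpool(pooled, argmax, 4)
print(pooled)
for row in restored:
    print(row)
print(decode_flat_index(13, width=4, channels=2))
```

Only the positions that won the max survive; every other value in the original image is lost and comes back as 0, which matches the pattern in the printed result.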

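8-15 gradients1

There are 2 ops and 4 parameters; this demonstrates using gradients to compute the gradients of two expressions with respect to four parameters at once.

Program: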

import tensorflow as tf

tf.reset_default_graph()

w1 = tf.get_variable('w1', shape=[2])

w2 = tf.get_variable('w2', shape=[2])

w3 = tf.get_variable('w3', shape=[2])

w4 = tf.get_variable('w4', shape=[2])

y1 = w1 + w2+ w3

y2 = w3 + w4

a = w1+w2

a_stoped = tf.stop_gradient(a)

y3= a_stoped+w3

gradients = tf.gradients([y1, y2], [w1, w2, w3, w4], grad_ys=[tf.convert_to_tensor([1.,2.]),

                                                          tf.convert_to_tensor([3.,4.])])  # grad_ys: the initial gradients fed in for y1 and y2

with tf.Session() as sess:

    sess.run(tf.global_variables_initializer())

    print(sess.run(gradients))

Result:

[array([1., 2.], dtype=float32), array([1., 2.], dtype=float32), array([4., 6.], dtype=float32), array([3., 4.], dtype=float32)]
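The printed gradients follow from simple bookkeeping: each y is a plain sum, so every variable that appears in it receives that y's `grad_ys` vector, and a variable that appears in several y's receives the sum. The sketch below assumes exactly this sum-only structure; `sum_gradients` is a hypothetical helper, not a TensorFlow call.

```python
def sum_gradients(outputs, grad_ys, variables):
    """outputs[k] lists the variable names added together to form the k-th y."""
    grads = {name: [0.0] * len(grad_ys[0]) for name in variables}
    for terms, gy in zip(outputs, grad_ys):
        for name in terms:
            # d(y)/d(w) is 1 for every term of a sum, so the incoming gradient passes through.
            grads[name] = [g + d for g, d in zip(grads[name], gy)]
    return [grads[name] for name in variables]


result = sum_gradients(
    outputs=[["w1", "w2", "w3"], ["w3", "w4"]],  # y1 = w1 + w2 + w3, y2 = w3 + w4
    grad_ys=[[1.0, 2.0], [3.0, 4.0]],
    variables=["w1", "w2", "w3", "w4"],
)
print(result)
```

`w3` is the only variable used by both expressions, so its gradient is [1, 2] + [3, 4] = [4, 6].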

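8-16 gradients2

Demonstrates how to stop gradients, and shows what gradient is returned for variables that are reached only through a stop_gradient op.

Program: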

import tensorflow as tf

tf.reset_default_graph()

w1 = tf.get_variable('w1', shape=[2])

w2 = tf.get_variable('w2', shape=[2])

w3 = tf.get_variable('w3', shape=[2])

w4 = tf.get_variable('w4', shape=[2])

y1 = w1 + w2 + w3

y2 = w3 + w4

a = w1 + w2

a_stoped = tf.stop_gradient(a)

y3 = a_stoped + w3

gradients = tf.gradients([y1, y2], [w1, w2, w3, w4], grad_ys=[tf.convert_to_tensor([1., 2.]),

tf.convert_to_tensor([3., 4.])])

gradients2 = tf.gradients(y3, [w1, w2, w3], grad_ys=tf.convert_to_tensor([1., 2.]))

print(gradients2)

gradients3 = tf.gradients(y3, [w3], grad_ys=tf.convert_to_tensor([1., 2.]))

with tf.Session() as sess:

    sess.run(tf.global_variables_initializer())

    print(sess.run(gradients))

    # print(sess.run(gradients2))  # raises an error: gradients2 contains None entries, which cannot be evaluated

    print(sess.run(gradients3))

Result:

[None, None, <tf.Tensor 'gradients_1/grad_ys_0:0' shape=(2,) dtype=float32>]

[array([1., 2.], dtype=float32), array([1., 2.], dtype=float32), array([4., 6.], dtype=float32), array([3., 4.], dtype=float32)]

[array([1., 2.], dtype=float32)]

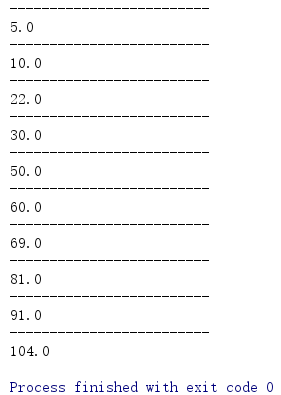
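The `[None, None, ...]` entry in the result can be read as a reachability question: a variable gets a gradient only if some path from it to the output does not pass through `stop_gradient`. The following is a minimal sketch for sum-only expressions; `gradients_of_sum` is a hypothetical helper and the `blocked` set is an assumption used to mark variables that are reached only through the stopped tensor.

```python
def gradients_of_sum(terms, blocked, grad_y, variables):
    """Gradient of a sum with respect to each variable, or None when the path is cut."""
    result = []
    for name in variables:
        if name in terms and name not in blocked:
            result.append(list(grad_y))  # the incoming gradient passes through unchanged
        else:
            result.append(None)          # no differentiable path to the output
    return result


# y3 = stop_gradient(w1 + w2) + w3
g = gradients_of_sum(
    terms={"w1", "w2", "w3"},
    blocked={"w1", "w2"},
    grad_y=[1.0, 2.0],
    variables=["w1", "w2", "w3"],
)
print(g)
```

A `None` entry is a Python value rather than a tensor, which is why passing the whole list to `sess.run` fails while requesting only the gradient for `w3` works.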

8-22 Multi-channel MNIST with batch normalization (BN)

Program:

import tensorflow as tf

import numpy as np

from tensorflow.contrib.layers.python.layers import batch_norm

# Import the MNIST dataset

from tensorflow.examples.tutorials.mnist import input_data

mnist = input_data.read_data_sets("F:/shendu/MNIST_data/", one_hot=True)

def weight_variable(shape):

    initial = tf.truncated_normal(shape, stddev=0.1)

    return tf.Variable(initial)

def bias_variable(shape):

    initial = tf.constant(0.1, shape=shape)

    return tf.Variable(initial)

def conv2d(x, W):

    return tf.nn.conv2d(x, W, strides=[1, 1, 1, 1], padding='SAME')

def max_pool_2x2(x):

    return tf.nn.max_pool(x, ksize=[1, 2, 2, 1],

                          strides=[1, 2, 2, 1], padding='SAME')

def avg_pool_7x7(x):

    return tf.nn.avg_pool(x, ksize=[1, 7, 7, 1],

                          strides=[1, 7, 7, 1], padding='SAME')

def batch_norm_layer(value, train=None, name='batch_norm'):  # BN helper

    # Note: the callers below pass the `train` placeholder, which is never None, so the is_training=True branch is always taken.

    if train is not None:

        return batch_norm(value, decay=0.9, updates_collections=None, is_training=True)

    else:

        return batch_norm(value, decay=0.9, updates_collections=None, is_training=False)

# tf Graph Input

x = tf.placeholder(tf.float32, [None, 784])  # MNIST data dimension: 28*28=784

y = tf.placeholder(tf.float32, [None, 10])  # digits 0-9 => 10 classes

train = tf.placeholder(tf.float32)

W_conv1 = weight_variable([5, 5, 1, 32])

b_conv1 = bias_variable([32])

x_image = tf.reshape(x, [-1, 28, 28, 1])

h_conv1 = tf.nn.relu(batch_norm_layer((conv2d(x_image, W_conv1) + b_conv1), train))

h_pool1 = max_pool_2x2(h_conv1)

###################################################### multiple convolution kernels

W_conv2_5x5 = weight_variable([5, 5, 32, 32])

b_conv2_5x5 = bias_variable([32])

W_conv2_7x7 = weight_variable([7, 7, 32, 32])

b_conv2_7x7 = bias_variable([32])

h_conv2_5x5 = tf.nn.relu(batch_norm_layer((conv2d(h_pool1, W_conv2_5x5) + b_conv2_5x5), train))

h_conv2_7x7 = tf.nn.relu(batch_norm_layer((conv2d(h_pool1, W_conv2_7x7) + b_conv2_7x7), train))

h_conv2 = tf.concat([h_conv2_5x5, h_conv2_7x7], 3)

h_pool2 = max_pool_2x2(h_conv2)

######################################################### new pooling

W_conv3 = weight_variable([5, 5, 64, 10])

b_conv3 = bias_variable([10])

h_conv3 = tf.nn.relu(conv2d(h_pool2, W_conv3) + b_conv3)

nt_hpool3 = avg_pool_7x7(h_conv3) # 10

nt_hpool3_flat = tf.reshape(nt_hpool3, [-1, 10])

y_conv = tf.nn.softmax(nt_hpool3_flat)

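keep_prob = tf.placeholder("float")  # fed in the loop below, but no dropout layer in this graph uses it

cross_entropy = -tf.reduce_sum(y * tf.log(y_conv))

# global_step is passed as the constant 20000, so the rate stays fixed at 0.04 * 0.9 ** 20 for the whole run

decaylearning_rate = tf.train.exponential_decay(0.04, 20000, 1000, 0.9)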

train_step = tf.train.AdamOptimizer(decaylearning_rate).minimize(cross_entropy)

correct_prediction = tf.equal(tf.argmax(y_conv, 1), tf.argmax(y, 1))

accuracy = tf.reduce_mean(tf.cast(correct_prediction, "float"))

# Start the session

with tf.Session() as sess:

    sess.run(tf.global_variables_initializer())

    for i in range(20000):  # 20000

        batch = mnist.train.next_batch(50)

        if i % 100 == 0:

            train_accuracy = accuracy.eval(feed_dict={

                x: batch[0], y: batch[1], keep_prob: 1.0})

            print("step %d, training accuracy %g" % (i, train_accuracy))

        train_step.run(feed_dict={x: batch[0], y: batch[1], keep_prob: 0.5})

    print("test accuracy %g" % accuracy.eval(feed_dict={

        x: mnist.test.images, y: mnist.test.labels, keep_prob: 1.0}))
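The learning rate that the program above actually trains with can be worked out from the decay formula. The sketch below assumes the non-staircase form, base_rate * decay_rate ** (global_step / decay_steps); the function here is a plain-Python stand-in with the same argument order as the call in the program, not the TensorFlow op itself.

```python
def exponential_decay(base_rate, global_step, decay_steps, decay_rate):
    """Continuous (non-staircase) exponential decay of a learning rate."""
    return base_rate * decay_rate ** (global_step / decay_steps)


# The call in the program: the step argument is the constant 20000.
fixed_rate = exponential_decay(0.04, 20000, 1000, 0.9)
print(fixed_rate)

# What a schedule looks like when the step argument actually advances.
schedule = [exponential_decay(0.04, step, 1000, 0.9) for step in (0, 1000, 2000)]
print(schedule)
```

Because the step argument never changes, the optimizer sees one fixed rate of roughly 0.0049. A decaying schedule would need a step counter that increases during training, for example a variable passed as `global_step` to both the decay function and `minimize`.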

Result:

D:\Anaconda3\python.exe F:/PycharmProjects/test20190624/test.py

Extracting F:/shendu/MNIST_data/train-images-idx3-ubyte.gz

Extracting F:/shendu/MNIST_data/train-labels-idx1-ubyte.gz

Extracting F:/shendu/MNIST_data/t10k-images-idx3-ubyte.gz

Extracting F:/shendu/MNIST_data/t10k-labels-idx1-ubyte.gz

step 0, training accuracy 0.14

step 100, training accuracy 0.68

step 200, training accuracy 0.8

step 300, training accuracy 0.7

step 400, training accuracy 0.86

step 500, training accuracy 0.7

step 600, training accuracy 0.8

step 700, training accuracy 0.74

step 800, training accuracy 0.9

step 900, training accuracy 0.86

step 1000, training accuracy 0.78

step 1100, training accuracy 0.84

step 1200, training accuracy 0.84

step 1300, training accuracy 0.96

step 1400, training accuracy 0.84

step 1500, training accuracy 0.92

step 1600, training accuracy 0.9

step 1700, training accuracy 0.88

step 1800, training accuracy 0.88

step 1900, training accuracy 0.88

step 2000, training accuracy 0.86

step 2100, training accuracy 0.9

step 2200, training accuracy 0.82

step 2300, training accuracy 0.82

step 2400, training accuracy 0.86

step 2500, training accuracy 0.9

step 2600, training accuracy 0.88

step 2700, training accuracy 0.86

step 2800, training accuracy 0.96

step 2900, training accuracy 0.86

step 3000, training accuracy 0.86

step 3100, training accuracy 0.92

step 3200, training accuracy 0.96

step 3300, training accuracy 0.9

step 3400, training accuracy 0.96

step 3500, training accuracy 0.86

step 3600, training accuracy 0.86

step 3700, training accuracy 0.82

step 3800, training accuracy 0.86

step 3900, training accuracy 0.86

step 4000, training accuracy 0.88

step 4100, training accuracy 0.88

step 4200, training accuracy 0.94

step 4300, training accuracy 0.84

step 4400, training accuracy 0.86

step 4500, training accuracy 0.92

step 4600, training accuracy 0.9

step 4700, training accuracy 0.84

step 4800, training accuracy 0.86

step 4900, training accuracy 0.88

step 5000, training accuracy 0.88

step 5100, training accuracy 0.9

step 5200, training accuracy 0.88

step 5300, training accuracy 0.9

step 5400, training accuracy 0.9

step 5500, training accuracy 0.9

step 5600, training accuracy 0.88

step 5700, training accuracy 0.98

step 5800, training accuracy 0.98

step 5900, training accuracy 0.98

step 6000, training accuracy 1

step 6100, training accuracy 1

step 6200, training accuracy 0.98

step 6300, training accuracy 0.98

step 6400, training accuracy 0.98

step 6500, training accuracy 1

step 6600, training accuracy 1

step 6700, training accuracy 1

step 6800, training accuracy 1

step 6900, training accuracy 1

step 7000, training accuracy 1

step 7100, training accuracy 1

step 7200, training accuracy 1

step 7300, training accuracy 1

step 7400, training accuracy 1

step 7500, training accuracy 1

step 7600, training accuracy 1

step 7700, training accuracy 1

step 7800, training accuracy 0.98

step 7900, training accuracy 0.98

step 8000, training accuracy 1

step 8100, training accuracy 1

step 8200, training accuracy 1

step 8300, training accuracy 1

step 8400, training accuracy 1

step 8500, training accuracy 1

step 8600, training accuracy 1

step 8700, training accuracy 1

step 8800, training accuracy 1

step 8900, training accuracy 1

step 9000, training accuracy 1

step 9100, training accuracy 1

step 9200, training accuracy 1

step 9300, training accuracy 1

step 9400, training accuracy 1

step 9500, training accuracy 1

step 9600, training accuracy 1

step 9700, training accuracy 1

step 9800, training accuracy 1

step 9900, training accuracy 1

step 10000, training accuracy 1

step 10100, training accuracy 1

step 10200, training accuracy 1

step 10300, training accuracy 1

step 10400, training accuracy 1

step 10500, training accuracy 1

step 10600, training accuracy 0.96

step 10700, training accuracy 0.98

step 10800, training accuracy 1

step 10900, training accuracy 1

step 11000, training accuracy 1

step 11100, training accuracy 1

step 11200, training accuracy 1

step 11300, training accuracy 1

step 11400, training accuracy 1

step 11500, training accuracy 1

step 11600, training accuracy 1

step 11700, training accuracy 1

step 11800, training accuracy 1

step 11900, training accuracy 1

step 12000, training accuracy 0.98

step 12100, training accuracy 1

step 12200, training accuracy 1

step 12300, training accuracy 1

step 12400, training accuracy 1

step 12500, training accuracy 1

step 12600, training accuracy 0.96

step 12700, training accuracy 1

step 12800, training accuracy 1

step 12900, training accuracy 1

step 13000, training accuracy 0.98

step 13100, training accuracy 1

step 13200, training accuracy 1

step 13300, training accuracy 1

step 13400, training accuracy 1

step 13500, training accuracy 1

step 13600, training accuracy 0.98

step 13700, training accuracy 1

step 13800, training accuracy 0.98

step 13900, training accuracy 1

step 14000, training accuracy 1

step 14100, training accuracy 1

step 14200, training accuracy 1

step 14300, training accuracy 0.98

step 14400, training accuracy 1

step 14500, training accuracy 1

step 14600, training accuracy 1

step 14700, training accuracy 1

step 14800, training accuracy 1

step 14900, training accuracy 1

step 15000, training accuracy 0.98

step 15100, training accuracy 1

step 15200, training accuracy 1

step 15300, training accuracy 1

step 15400, training accuracy 1

step 15500, training accuracy 0.98

step 15600, training accuracy 1

step 15700, training accuracy 0.98

step 15800, training accuracy 1

step 15900, training accuracy 1

step 16000, training accuracy 1

step 16100, training accuracy 1

step 16200, training accuracy 1

step 16300, training accuracy 0.98

step 16400, training accuracy 1

step 16500, training accuracy 1

step 16600, training accuracy 1

step 16700, training accuracy 1

step 16800, training accuracy 1

step 16900, training accuracy 0.98

step 17000, training accuracy 1

step 17100, training accuracy 1

step 17200, training accuracy 1

step 17300, training accuracy 1

step 17400, training accuracy 1

step 17500, training accuracy 1

step 17600, training accuracy 1

step 17700, training accuracy 1

step 17800, training accuracy 1

step 17900, training accuracy 1

step 18000, training accuracy 1

step 18100, training accuracy 1

step 18200, training accuracy 0.98

step 18300, training accuracy 1

step 18400, training accuracy 1

step 18500, training accuracy 1

step 18600, training accuracy 1

step 18700, training accuracy 1

step 18800, training accuracy 1

step 18900, training accuracy 1

step 19000, training accuracy 1

step 19100, training accuracy 1

step 19200, training accuracy 1

step 19300, training accuracy 1

step 19400, training accuracy 1

step 19500, training accuracy 1

step 19600, training accuracy 1

step 19700, training accuracy 1

step 19800, training accuracy 1

step 19900, training accuracy 1

test accuracy 0.9924

Process finished with exit code 0
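The difference between the two `is_training` modes of the BN helper in 8-22 can be illustrated on a single feature in plain Python. This is a rough sketch under stated assumptions: no learned scale or offset is applied, `eps=1e-3` is an illustrative constant, and `batch_norm_train` and `batch_norm_infer` are hypothetical names; only `decay=0.9` is taken from the program.

```python
import math


def batch_norm_train(batch, moving_mean, moving_var, decay=0.9, eps=1e-3):
    """Normalize with the batch's own statistics and update the moving averages."""
    mean = sum(batch) / len(batch)
    var = sum((v - mean) ** 2 for v in batch) / len(batch)
    normed = [(v - mean) / math.sqrt(var + eps) for v in batch]
    new_mean = decay * moving_mean + (1 - decay) * mean
    new_var = decay * moving_var + (1 - decay) * var
    return normed, new_mean, new_var


def batch_norm_infer(batch, moving_mean, moving_var, eps=1e-3):
    """Normalize with the stored moving averages; nothing is updated."""
    return [(v - moving_mean) / math.sqrt(moving_var + eps) for v in batch]


batch = [1.0, 2.0, 3.0, 4.0]
normed, moving_mean, moving_var = batch_norm_train(batch, moving_mean=0.0, moving_var=1.0)
print(normed)
print(moving_mean, moving_var)
print(batch_norm_infer(batch, moving_mean, moving_var))
```

In training mode the output depends on the other examples in the batch, while in inference mode it depends only on the accumulated averages, so the two modes generally give different outputs for the same input.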

8-23 Multi-channel MNIST

Program:

import tensorflow as tf

import numpy as np

# Import the MNIST dataset

from tensorflow.examples.tutorials.mnist import input_data

mnist = input_data.read_data_sets("F:/shendu/MNIST_data/", one_hot=True)

def weight_variable(shape):

    initial = tf.truncated_normal(shape, stddev=0.1)

    return tf.Variable(initial)

def bias_variable(shape):

    initial = tf.constant(0.1, shape=shape)

    return tf.Variable(initial)

def conv2d(x, W):

    return tf.nn.conv2d(x, W, strides=[1, 1, 1, 1], padding='SAME')

def max_pool_2x2(x):

    return tf.nn.max_pool(x, ksize=[1, 2, 2, 1],

                          strides=[1, 2, 2, 1], padding='SAME')

def avg_pool_7x7(x):

    return tf.nn.avg_pool(x, ksize=[1, 7, 7, 1],

                          strides=[1, 7, 7, 1], padding='SAME')

# tf Graph Input

x = tf.placeholder(tf.float32, [None, 784])  # MNIST data dimension: 28*28=784

y = tf.placeholder(tf.float32, [None, 10])  # digits 0-9 => 10 classes

W_conv1 = weight_variable([5, 5, 1, 32])

b_conv1 = bias_variable([32])

x_image = tf.reshape(x, [-1, 28, 28, 1])

h_conv1 = tf.nn.relu(conv2d(x_image, W_conv1) + b_conv1)

h_pool1 = max_pool_2x2(h_conv1)

###################################################### multiple convolution kernels

W_conv2_5x5 = weight_variable([5, 5, 32, 32])

b_conv2_5x5 = bias_variable([32])

W_conv2_7x7 = weight_variable([7, 7, 32, 32])

b_conv2_7x7 = bias_variable([32])

h_conv2_5x5 = tf.nn.relu(conv2d(h_pool1, W_conv2_5x5) + b_conv2_5x5)

h_conv2_7x7 = tf.nn.relu(conv2d(h_pool1, W_conv2_7x7) + b_conv2_7x7)

h_conv2 = tf.concat([h_conv2_5x5, h_conv2_7x7], 3)

h_pool2 = max_pool_2x2(h_conv2)

######################################################### new pooling

W_conv3 = weight_variable([5, 5, 64, 10])

b_conv3 = bias_variable([10])

h_conv3 = tf.nn.relu(conv2d(h_pool2, W_conv3) + b_conv3)

nt_hpool3 = avg_pool_7x7(h_conv3) # 10

nt_hpool3_flat = tf.reshape(nt_hpool3, [-1, 10])

y_conv = tf.nn.softmax(nt_hpool3_flat)

keep_prob = tf.placeholder("float")

cross_entropy = -tf.reduce_sum(y * tf.log(y_conv))

# Try different optimizers and compare the results

# train_step = tf.train.GradientDescentOptimizer(1e-3).minimize(cross_entropy)

# train_step = tf.train.AdagradOptimizer(1e-5).minimize(cross_entropy)

train_step = tf.train.AdamOptimizer(1e-4).minimize(cross_entropy)

correct_prediction = tf.equal(tf.argmax(y_conv, 1), tf.argmax(y, 1))

accuracy = tf.reduce_mean(tf.cast(correct_prediction, "float"))

# Start the session

with tf.Session() as sess:

    sess.run(tf.global_variables_initializer())

    for i in range(1000):  # 20000

        batch = mnist.train.next_batch(50)

        if i % 100 == 0:

            train_accuracy = accuracy.eval(feed_dict={

                x: batch[0], y: batch[1], keep_prob: 1.0})

            print("step %d, training accuracy %g" % (i, train_accuracy))

        train_step.run(feed_dict={x: batch[0], y: batch[1], keep_prob: 0.5})

    print("test accuracy %g" % accuracy.eval(feed_dict={

        x: mnist.test.images, y: mnist.test.labels, keep_prob: 1.0}))

Result:

Extracting F:/shendu/MNIST_data/train-images-idx3-ubyte.gz

Extracting F:/shendu/MNIST_data/train-labels-idx1-ubyte.gz

Extracting F:/shendu/MNIST_data/t10k-images-idx3-ubyte.gz

Extracting F:/shendu/MNIST_data/t10k-labels-idx1-ubyte.gz

step 0, training accuracy 0.12

step 100, training accuracy 0.26

step 200, training accuracy 0.32

step 300, training accuracy 0.38

step 400, training accuracy 0.34

step 500, training accuracy 0.5

step 600, training accuracy 0.62

step 700, training accuracy 0.54

step 800, training accuracy 0.88

step 900, training accuracy 0.84

test accuracy 0.8438

Process finished with exit code 0
